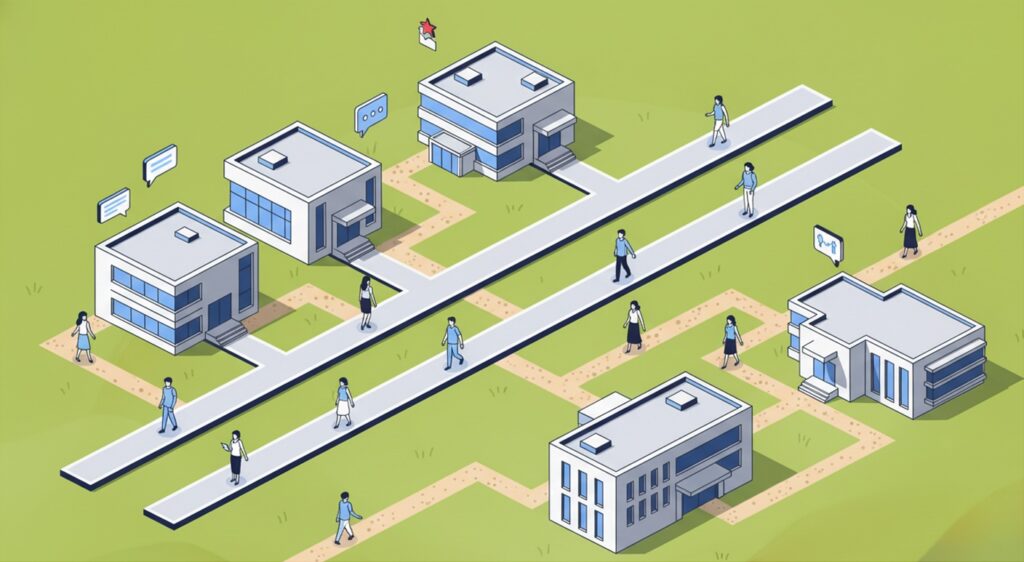

Walk through any city park and you’ll find narrow dirt trails cutting across the grass. They appear between sidewalks, across lawns, and through corners planners never intended people to cross.

Urban designers call these desire paths.

They form when people choose their own routes instead of the official walkways. Over time the grass disappears, and the informal path becomes visible evidence of how people actually move through a space.

For decades, planners treated these paths as mistakes. Today many see them differently. Desire paths reveal something valuable. They show where the original design didn’t match human behavior.

Something similar is happening inside modern organizations.

Employees are already using artificial intelligence to draft emails, analyze data, summarize documents, and generate ideas. A marketing manager may use a language model to prepare campaign copy. A finance analyst may summarize reports with an AI assistant. A product manager may test ideas through generative tools.

Often this experimentation happens quietly, outside official systems or policies.

This phenomenon has a name: Shadow AI.

The term echoes the older concept of shadow IT, when employees installed software without approval from corporate IT departments. Today the pattern is repeating itself with artificial intelligence. Workers bring generative tools into their daily workflows long before organizations establish governance structures or approved platforms.

This raises obvious concerns. Sensitive corporate information can enter external systems without clear visibility into how that data is processed or stored. Regulatory frameworks such as GDPR or the EU AI Act may be violated unintentionally. Security teams lose oversight of how information moves through the organization.

Yet focusing only on risk misses something important.

Shadow AI often reveals where existing systems are no longer keeping pace with how people need to work. Like desire paths in a park, Shadow AI exposes where employees are searching for faster and more intelligent ways to complete everyday tasks.

If this behavior were rare, it might be manageable. The numbers suggest otherwise.

Surveys indicate that nearly four out of five people using AI at work bring their own tools rather than relying on systems provided by their employer. Many interact with these tools through personal accounts instead of enterprise platforms designed to protect sensitive data.

The consequences are beginning to surface. Studies suggest that more than half of employees admit to entering confidential information into AI systems. Organizations experiencing widespread Shadow AI usage report higher breach costs and greater exposure to regulatory risk.

In other words, artificial intelligence is already spreading through workplaces at scale. Governance, training, and security frameworks are arriving later.

This gap creates real risks. It also reveals something about how technological change actually unfolds inside organizations.

Shadow AI as an organizational signal

There is another way to interpret Shadow AI.

When employees adopt new tools outside official channels, they are not only bypassing governance structures. They are also revealing where existing workflows are failing them.

In many organizations, generative AI appears first at the margins of daily work. Employees experiment with drafting emails faster, summarizing documents, analyzing spreadsheets, preparing presentations, or exploring ideas. These experiments happen quietly because the official systems available to them do not yet support these capabilities.

What security teams see as unauthorized usage can therefore function as a kind of organizational diagnostic. Shadow AI shows where people are trying to move faster than the systems around them allow.

Urban thinkers have long observed a similar pattern in cities. Jane Jacobs argued that cities should be designed around how people actually move through them, not around how planners imagine they should. The informal paths across parks and campuses provide a map of real behavior.

Organizations facing the rise of Shadow AI may need to adopt the same mindset.

Instead of viewing Shadow AI only as a governance failure, leaders can treat it as an early signal of where artificial intelligence could deliver the greatest value. The informal experiments appearing across teams often point to workflows where automation, augmentation, or improved access to information could significantly increase productivity.

When organizations approach these patterns with curiosity rather than fear, the scattered experiments begin to reveal something valuable. They highlight repetitive tasks employees are already trying to accelerate and expose processes where better tools could unlock meaningful efficiency gains.

What first appears chaotic often points to opportunities for consolidation. Instead of dozens of fragmented experiments across departments, organizations can identify common needs and build governed, scalable solutions around them.

Handled well, this shift does more than reduce risk. It empowers employees with secure tools that support the way they already work, turning artificial intelligence from something that requires constant supervision into a multiplier of creativity and innovation. Ignoring Shadow AI means missing these signals. It allows costly and uncoordinated experiments to continue in the shadows while organizations overlook insights that could guide smarter adoption.

Learning from the AI footpaths

Organizations that want to govern artificial intelligence effectively must first understand how it is already being used.

Shadow AI should not be investigated only as a compliance problem. It should be examined as a signal of where employees are trying to move faster than the systems around them allow. The first step is visibility. Leaders need to understand which tools employees are already using and why. Employee surveys, technical audits, and open discussions across departments often reveal where experimentation is happening first. Marketing, sales, finance, HR, and product teams frequently emerge as early adopters.

Once these patterns become visible, the challenge shifts from suppression to structure. Organizations must define which tools are appropriate, establish governance policies aligned with data sensitivity and regulation, and design processes that reflect how work actually happens inside the organization.

Culture matters just as much as policy. Employees should feel safe discussing how they are experimenting with artificial intelligence rather than hiding it. When people fear punishment or extra workload for adopting new tools, experimentation does not disappear. It simply moves further into the shadows.

Effective governance therefore requires more than rules. It requires an environment where responsible experimentation is encouraged and guided. Training, access to approved tools, and clear guardrails allow organizations to transform scattered experiments into coordinated progress.

Understanding what already exists in the shadows is often the first step toward building a resilient and intelligent AI strategy.

A final thought

In practice, Shadow AI is rarely the result of malice. More often it reflects misalignment and a lack of communication inside the organization. When employees feel unsafe sharing their experiments, when curiosity is met primarily with correction, the predictable result is silence.

People don’t stop experimenting. They simply stop sharing.

If organizations want to govern AI effectively, they must begin by creating environments where thoughtful exploration is possible. Training, practical examples, and clear guardrails make responsible experimentation visible instead of hidden.

But culture matters most. When curiosity replaces suspicion, experimentation moves out of the shadows and into the open.

The first step toward governing Shadow AI is simple: understand where people are already walking.

About Aleksandra Osipova

Aleksandra Osipova is the founder of Apricity Lab, where she works with leaders and organizations navigating the transition toward AI-enabled systems.

She writes about artificial intelligence, systems thinking, and the future of work. More of her work and insights can be found on her LinkedIn.