For the previous couple of years, the AI world has adopted a easy rule: if you would like a Giant Language Mannequin (LLM) to resolve a more durable downside, make its Chain-of-Thought (CoT) longer. However new analysis from the College of Virginia and Google proves that ‘thinking long’ isn’t the identical as ‘thinking hard’.

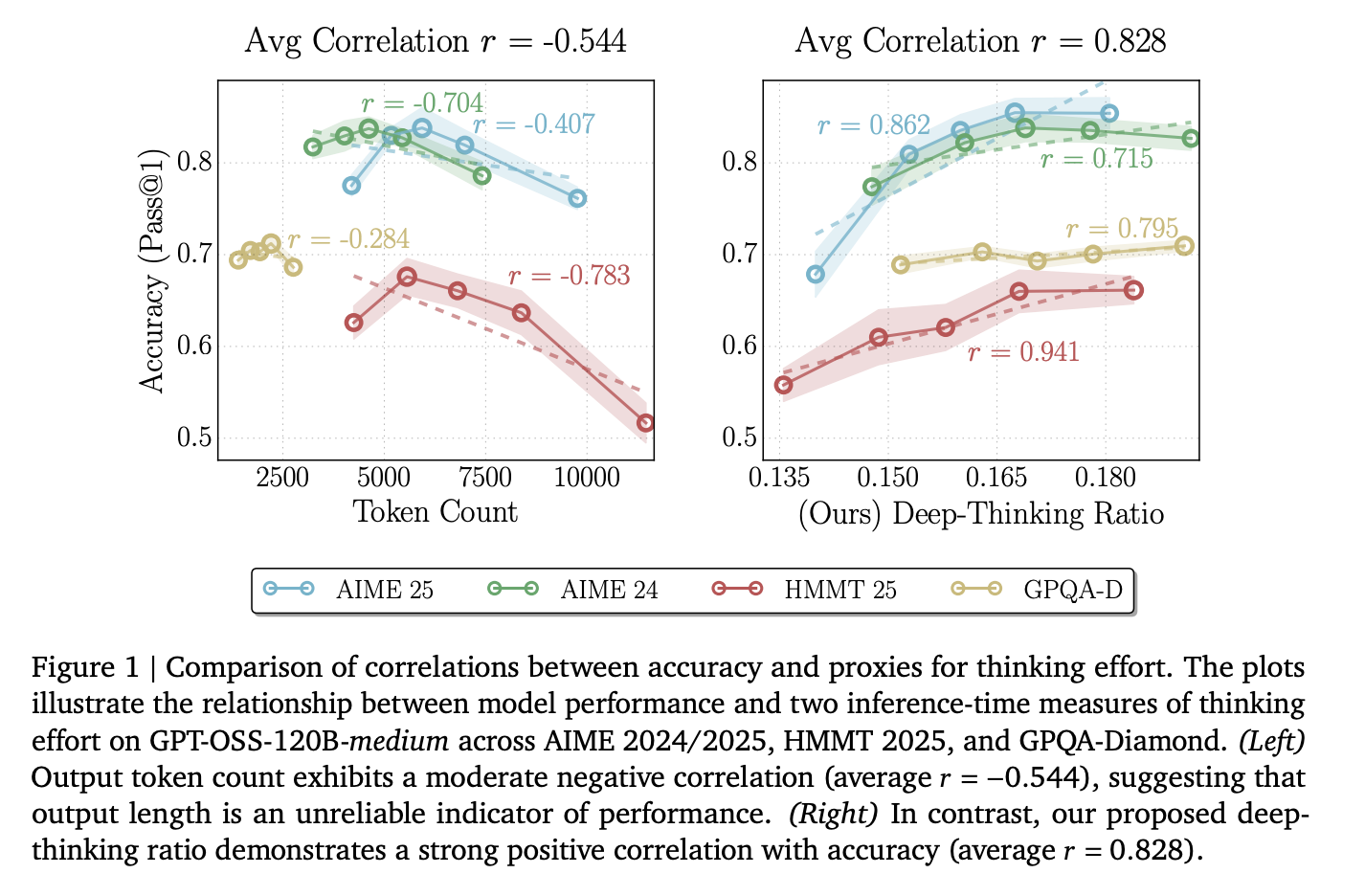

The analysis staff reveals that merely including extra tokens to a response can really make an AI much less correct. As an alternative of counting phrases, the Google researchers introduce a brand new measurement: the Deep-Considering Ratio (DTR).

The Failure of ‘Token Maxing‘

Engineers typically use token rely as a proxy for the trouble an AI places right into a job. Nevertheless, the researchers discovered that uncooked token rely has a median correlation of r= -0.59 with accuracy.

This unfavourable quantity signifies that because the mannequin generates extra textual content, it’s extra prone to be flawed. This occurs due to ‘overthinking,’ the place the mannequin will get caught in loops, repeats redundant steps, or amplifies its personal errors. Counting on size alone wastes costly compute on uninformative tokens.

What are Deep-Considering Tokens?

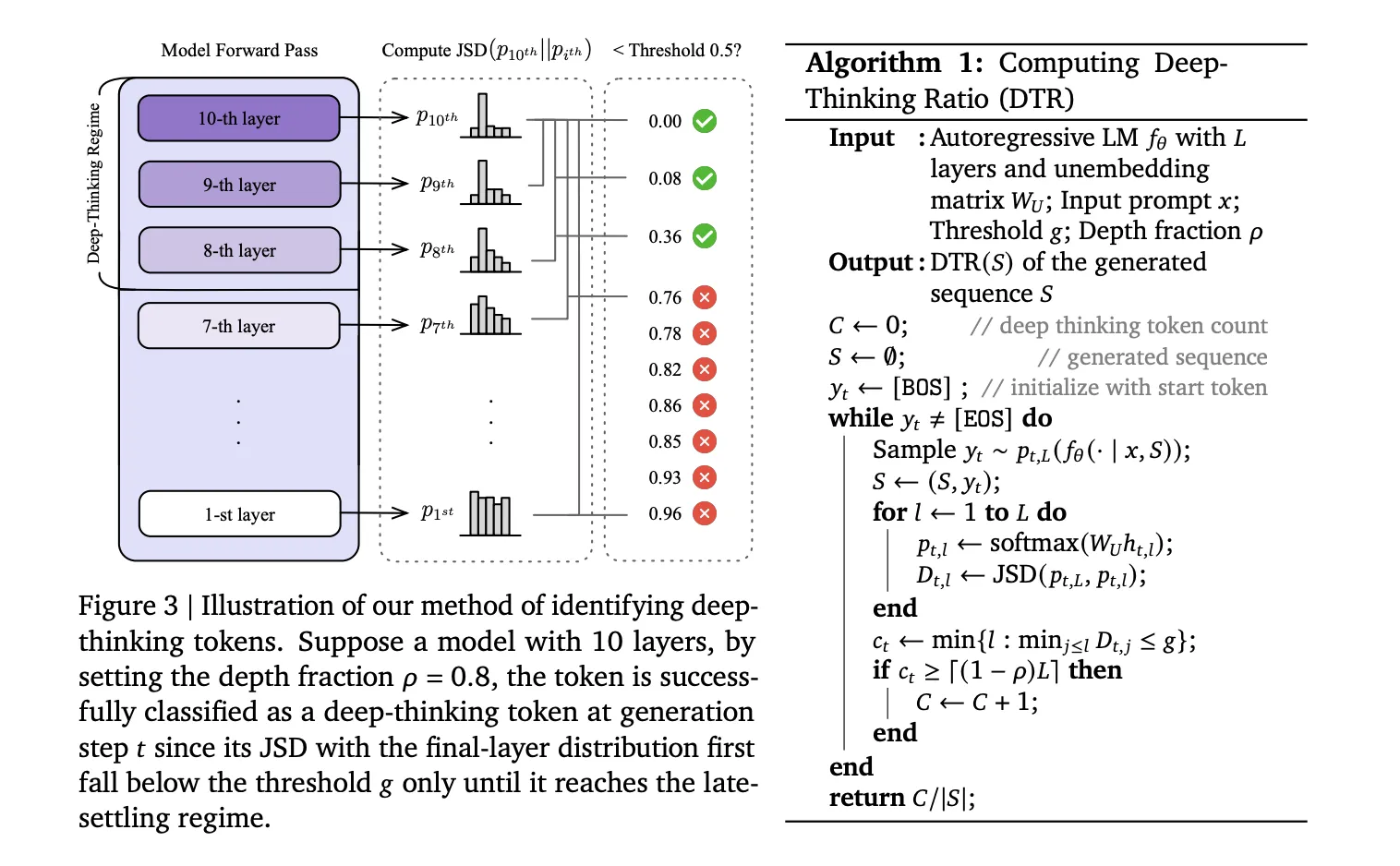

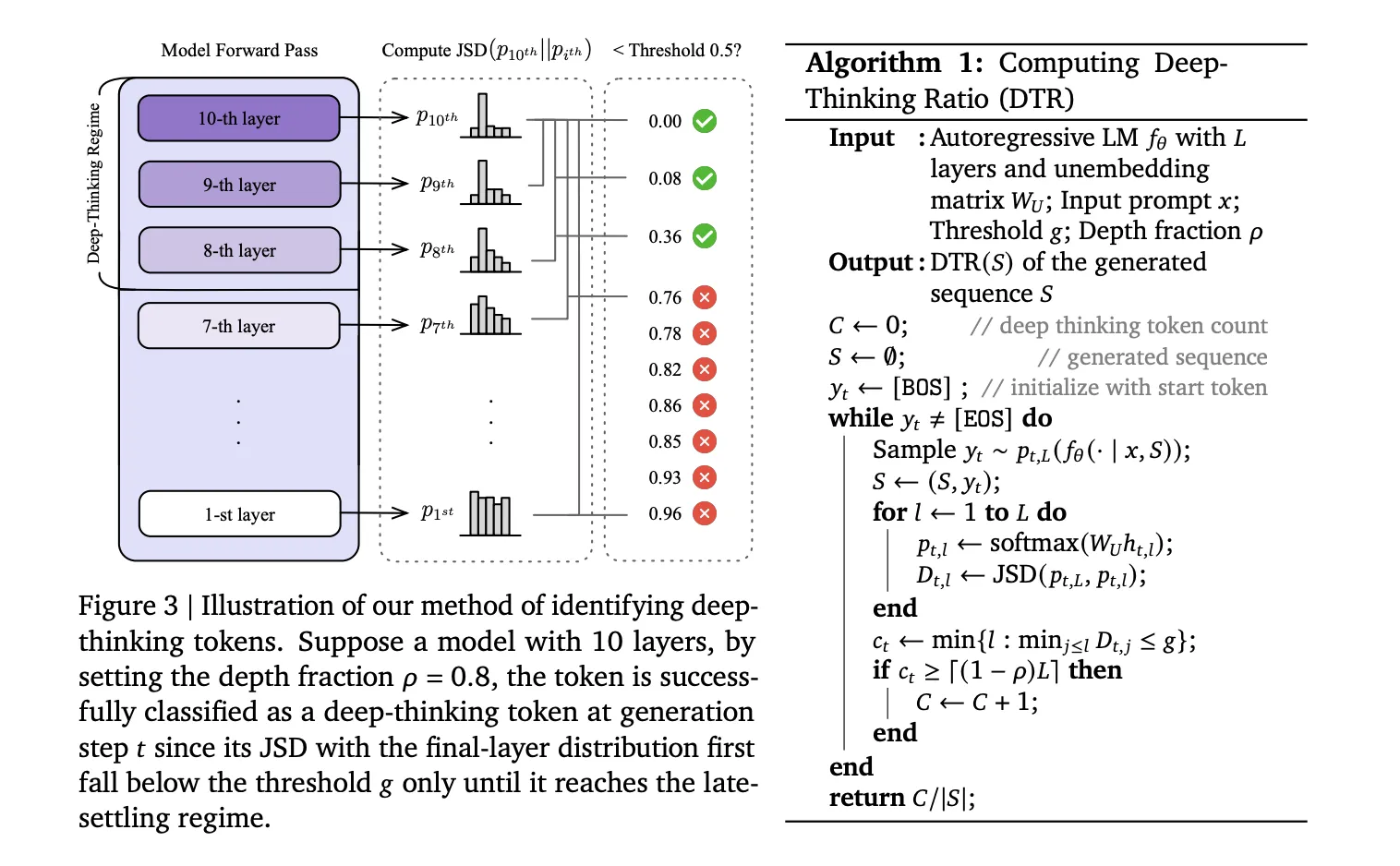

The analysis staff argued that actual ‘thinking’ occurs contained in the layers of the mannequin, not simply within the ultimate output. When a mannequin predicts a token, it processes information by means of a collection of transformer layers (L).

- Shallow Tokens: For simple phrases, the mannequin’s prediction stabilizes early. The ‘guess’ doesn’t change a lot from layer 5 to layer 36.

- Deep-Considering Tokens: For tough logic or math symbols, the prediction shifts considerably within the deeper layers.

Measure Depth

To establish these tokens, the analysis staff makes use of a method to peek on the mannequin’s inner ‘drafts’ at each layer. They mission the intermediate hidden states (htl) into the vocabulary house utilizing the mannequin’s unembedding matrix (WU). This produces a chance distribution (pt,l) for each layer.

They then calculate the Jensen-Shannon Divergence (JSD) between the intermediate layer distribution and the ultimate layer distribution (pt,L):

Dt,l := JSD(pt,L || pt,l)

A token is a deep-thinking token if its prediction solely settles within the ‘late regime’—outlined by a depth fraction (⍴). Of their assessments, they set ⍴= 0.85, which means the token solely stabilized within the ultimate 15% of the layers.

The Deep-Considering Ratio (DTR) is the share of those ‘hard’ tokens in a full sequence. Throughout fashions like DeepSeek-R1-70B, Qwen3-30B-Considering, and GPT-OSS-120B, DTR confirmed a robust common optimistic correlation of r = 0.683 with accuracy.

Suppose@n: Higher Accuracy at 50% the Value

The analysis staff used this progressive strategy to create Suppose@n, a brand new method to scale AI efficiency throughout inference.

Most devs use Self-Consistency (Cons@n), the place they pattern 48 totally different solutions and use majority voting to choose one of the best one. That is very costly as a result of you must generate each single token for each reply.

Suppose@n adjustments the sport through the use of ‘early halting’:

- The mannequin begins producing a number of candidate solutions.

- After simply 50 prefix tokens, the system calculates the DTR for every candidate.

- It instantly stops producing the ‘unpromising’ candidates with low DTR.

- It solely finishes the candidates with excessive deep-thinking scores.

The Outcomes on AIME 2025

| Methodology | Accuracy | Avg. Value (okay tokens) |

| Cons@n (Majority Vote) | 92.7% | 307.6 |

| Suppose@n (DTR-based Choice) | 94.7% | 155.4 |

On the AIME 25 math benchmark, Suppose@n achieved larger accuracy than normal voting whereas lowering the inference value by 49%.

Key Takeaways

- Token rely is a poor predictor of accuracy: Uncooked output size has a median unfavourable correlation (r = -0.59) with efficiency, which means longer reasoning traces typically sign ‘overthinking’ fairly than larger high quality.

- Deep-thinking tokens outline true effort: In contrast to easy tokens that stabilize in early layers, deep-thinking tokens are these whose inner predictions bear important revision in deeper mannequin layers earlier than converging.

- The Deep-Considering Ratio (DTR) is a superior metric: DTR measures the proportion of deep-thinking tokens in a sequence and reveals a strong optimistic correlation with accuracy (common r = 0.683), persistently outperforming length-based or confidence-based baselines.

- Suppose@n permits environment friendly test-time scaling: By prioritizing and ending solely the samples with excessive deep-thinking ratios, the Suppose@n technique matches or exceeds the efficiency of normal majority voting (Cons@n).

- Large value discount through early halting: As a result of DTR may be estimated from a brief prefix of simply 50 tokens, unpromising generations may be rejected early, lowering complete inference prices by roughly 50%.

Take a look at the Paper. Additionally, be happy to comply with us on Twitter and don’t overlook to affix our 100k+ ML SubReddit and Subscribe to our Publication. Wait! are you on telegram? now you’ll be able to be part of us on telegram as effectively.