After we shipped the first version of our internal knowledge base, I received a Slack message from a colleague on the compliance team. She had asked the system about our contractor onboarding process. The answer was confident, well-structured, and wrong in exactly the way that matters most in compliance work: it described the general process but left out the exception clause that applied to contractors on regulated projects.

The exception was in the document. It had been ingested. The embedding model had encoded it. The LLM, given the right context, would have handled it without hesitation. But the retrieval system never surfaced it, because the chunk containing that exception had been split right at the paragraph boundary where the general rule ended and the qualification began.

I remember opening the chunk logs and staring at two consecutive records. The first ended mid-argument: ‘Contractors follow the standard onboarding process as described in Section 4…’ The second began in a way that made no sense without its predecessor: ‘…unless engaged on a project classified under Annex B, in which case…’. Each chunk, in isolation, was a fragment. Together, they contained a complete, critical piece of information. Separately, neither was retrievable in any meaningful way.

The pipeline looked fine on our test queries. The pipeline was not fine.

That moment, with the compliance Slack message and the chunk log open side by side, is where I stopped treating chunking as a configuration detail and started treating it as the most consequential design decision in the stack. Everything that follows is what I learned after that, in the order I learned it.

In This Article

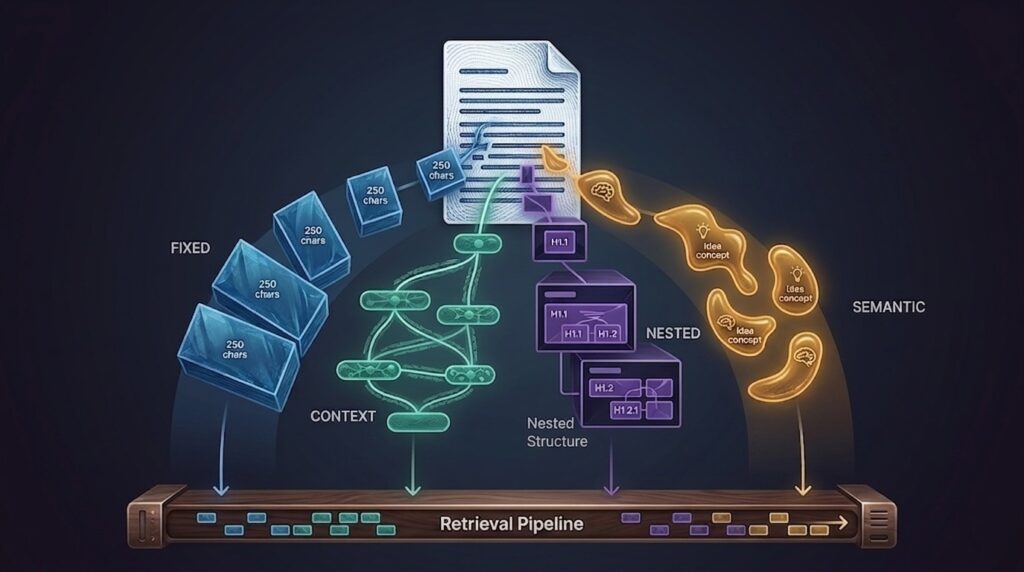

- What Chunking Is and Why Most Engineers Underestimate It

- The First Crack: Fixed-Size Chunking

- Getting Smarter: Sentence Windows

- When Your Documents Have Structure: Hierarchical Chunking

- The Alluring Option: Semantic Chunking

- The Problem Nobody Talks About: PDFs, Tables, and Slides

- A Decision Framework, Not a Ranking

- What RAGAS Tells You About Your Chunks

- Where This Leaves Us

What Chunking Is and Why Most Engineers Underestimate It

In the previous article, I described chunking as ‘the step that most teams get wrong.’ I stand by that, and here I will try to explain it in detail.

If you haven’t read it yet, check it out here: A Practical Guide to RAG for Enterprise Knowledge Bases

A RAG pipeline doesn’t retrieve documents. It retrieves chunks. Every answer your system ever produces is generated from one or more of these units: not from the full source document, not from a summary, but from the specific fragment your retrieval system found relevant enough to pass to the model. The shape of that fragment determines everything downstream.

Here is what that means concretely. A chunk that is too large contains several ideas: the embedding that represents it is an average of all of them, and no single idea scores sharply enough to win a retrieval contest. A chunk that is too small is precise but stranded: a sentence without its surrounding paragraph is often uninterpretable, and the model cannot generate a coherent answer from a fragment. A chunk that cuts across a logical boundary gives you the contractor exception: complete information split into two incomplete pieces, each of which looks irrelevant in isolation.
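The dilution effect can be illustrated with a toy model. This is only a sketch under a strong simplifying assumption: each topic is a hypothetical orthogonal unit vector, and a multi-topic chunk embeds to the plain mean of its topics. Real embedding models are not this tidy, but the direction of the effect is the same.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm

def mean_vector(vectors):
    """Element-wise mean, standing in for the embedding of a multi-topic chunk."""
    return [sum(col) / len(vectors) for col in zip(*vectors)]

# Hypothetical unit vectors standing in for three unrelated topics
onboarding = [1.0, 0.0, 0.0]
expenses = [0.0, 1.0, 0.0]
leave_policy = [0.0, 0.0, 1.0]

query = onboarding            # a query purely about onboarding
focused_chunk = onboarding    # a chunk that covers one idea
broad_chunk = mean_vector([onboarding, expenses, leave_policy])  # three ideas

focused_score = cosine(query, focused_chunk)
broad_score = cosine(query, broad_chunk)
print(round(focused_score, 3), round(broad_score, 3))  # prints: 1.0 0.577
```

The broad chunk contains the answer, yet it scores well below the focused one, which is how an oversized chunk loses a top-k contest to less relevant but more focused neighbours.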

Chunking sits upstream of every model in your stack. Your embedding model can’t fix a bad chunk. Your re-ranker can’t resurface a chunk that was never retrieved. Your LLM can’t answer from context it was never given.

The reason this gets underestimated is that it fails silently. A retrieval failure doesn’t throw an exception. It produces an answer that is almost right: plausible, fluent, and subtly wrong in the way that matters. In a demo, with hand-picked queries, almost right is fine. In production, with the full distribution of real user questions, it is a slow erosion of trust. And a system that erodes trust quietly is harder to fix than one that breaks loudly.

I learned this the hard way. What follows is the progression of strategies I worked through: not a taxonomy of options, but a sequence of problems and the thinking that led from one to the next.

The First Crack: Fixed-Size Chunking

We started where most teams start: fixed-size chunking. Split every document into 512-token windows with a 50-token overlap. It took a day to set up. The early demos looked great. Nobody questioned it.

    from llama_index.core.node_parser import TokenTextSplitter

    # Fixed-size windows: 512 tokens per chunk, 50 tokens shared with the next chunk
    parser = TokenTextSplitter(
        chunk_size=512,
        chunk_overlap=50
    )

    # `documents` is the list of Document objects returned by your loader
    nodes = parser.get_nodes_from_documents(documents)

The logic is intuitive. Embedding models have token limits. Documents are long. Split them into fixed windows and you get a predictable, uniform index. The overlap gives boundary-crossing information a second chance. Simple, fast, and completely indifferent to what the text is actually saying.

That last part, “completely indifferent to what the text is saying”, is the problem. Fixed-size chunking knows nothing about where a sentence ends, or that a three-paragraph policy exception should stay together, or that splitting a numbered list at step 4 produces two useless fragments. It is a mechanical operation applied to a semantic object, and the mismatch will eventually show up in your context recall.

For our corpus (Confluence pages, HR policies, engineering runbooks), it did. When I ran our first RAGAS evaluation, we were sitting at 0.72 on context recall. That meant roughly one in four relevant pieces of information that existed in the corpus never reached the model. For an internal knowledge base, that is not a rounding error. That is the compliance Slack message, waiting to happen.

When fixed-size works: Short, uniform documents where every section is self-contained, such as product FAQs, news summaries, and support ticket descriptions. If your corpus looks like a list of independent entries, fixed-size chunking will serve you well, and the simplicity is a real advantage.

The overlap does help. But there is a limit to how much a 50-token overlap can compensate for a 300-token paragraph that happens to cross a chunk boundary. It is a patch, not a solution. I kept it in our pipeline for the subset of documents where it genuinely fit. For everything else, I started looking elsewhere.
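The limit of overlap is easy to check with plain arithmetic. The sketch below does not use LlamaIndex at all: it works on token offsets only, and the document length and paragraph positions are made-up values chosen for illustration.

```python
def fixed_windows(n_tokens, chunk_size=512, overlap=50):
    """Return (start, end) token offsets of fixed-size windows with overlap."""
    step = chunk_size - overlap
    windows = []
    start = 0
    while start < n_tokens:
        windows.append((start, min(start + chunk_size, n_tokens)))
        if start + chunk_size >= n_tokens:
            break
        start += step
    return windows

def containing_windows(span, windows):
    """Windows that hold the whole span, i.e. chunks where it survives intact."""
    span_start, span_end = span
    return [w for w in windows if w[0] <= span_start and span_end <= w[1]]

windows = fixed_windows(1000)            # hypothetical 1000-token document
short_clause = (470, 500)                # 30 tokens, inside the overlap zone
long_paragraph = (400, 700)              # 300 tokens, straddles a boundary

print(windows)                                      # [(0, 512), (462, 974), (924, 1000)]
print(containing_windows(short_clause, windows))    # [(0, 512), (462, 974)]
print(containing_windows(long_paragraph, windows))  # []
```

Anything shorter than the overlap is rescued. Anything longer that crosses a boundary is not fully present in any single chunk, no matter which one the retriever returns.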

Getting Smarter: Sentence Windows

The contractor exception problem, once I understood it clearly, pointed directly to what was needed: a way to retrieve at the precision of a single sentence but generate with the context of a full paragraph. Not one or the other: both, at different stages of the pipeline.

LlamaIndex’s SentenceWindowNodeParser is built exactly for this. At indexing time, it creates one node per sentence. Each node carries the sentence itself as its retrievable text, but stores the surrounding window of sentences, three either side by default, in its metadata. At query time, the retriever finds the most relevant sentence. At generation time, a post-processor expands it back to its window before the context reaches the LLM.

    from llama_index.core.node_parser import SentenceWindowNodeParser
    from llama_index.core.postprocessor import MetadataReplacementPostProcessor

    # Index time: one node per sentence, window stored in metadata
    parser = SentenceWindowNodeParser.from_defaults(
        window_size=3,
        window_metadata_key="window",
        original_text_metadata_key="original_text"
    )

    # Query time: expand the sentence back to its surrounding window
    postprocessor = MetadataReplacementPostProcessor(
        target_metadata_key="window"
    )

The compliance exception that had been invisible to the fixed-size pipeline became retrievable immediately. The sentence ‘unless engaged on a project classified under Annex B’ scored highly on a query about contractor onboarding exceptions because it contained exactly that information, without dilution. The window expansion then gave the LLM the three sentences before and after it, which provided the context to generate a complete, accurate answer.

On our evaluation set, context recall went from 0.72 to 0.88 after switching to sentence windows for our narrative document types. That single metric improvement was worth several days of debugging combined.
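To make the mechanism concrete, here is a library-free sketch of the idea: one record per sentence, with the neighbouring sentences kept alongside it. It is not LlamaIndex’s implementation. The regex sentence splitter and the dictionary keys are simplifications assumed for illustration.

```python
import re

def sentence_window_nodes(text, window_size=3):
    """One node per sentence; each node also keeps its neighbours as a window."""
    # Naive splitter: break after ., ! or ? followed by whitespace
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    nodes = []
    for i, sentence in enumerate(sentences):
        lo = max(0, i - window_size)
        hi = min(len(sentences), i + window_size + 1)
        nodes.append({
            "text": sentence,                       # what gets embedded and matched
            "window": " ".join(sentences[lo:hi]),   # what the LLM eventually reads
        })
    return nodes

nodes = sentence_window_nodes("A. B. C. D. E.", window_size=1)
print(nodes[2])  # {'text': 'C.', 'window': 'B. C. D.'}
```

Retrieval scores the short `text` field, so a single sharp sentence can win. Generation reads the `window` field, so the model still sees what came before and after it.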

Where sentence windows fail: Tables and code blocks. A sentence parser has no concept of a table row. It will split a six-column table across dozens of single-sentence nodes, each of which is meaningless in isolation. If your corpus contains structured data, and most enterprise corpora do, sentence windows will solve one problem while creating another. More on this shortly.

I also discovered that window_size needs tuning for your specific domain. A window of three works well for narrative policy text where context is local. For technical runbooks where a step in a procedure references a setup section five paragraphs earlier, three sentences is not enough context, and you will see it in your answer relevancy scores. I ended up running evaluations at window sizes of 2, 3, and 5, comparing RAGAS metrics across all three before settling on 3 as the best balance for our corpus. Don’t assume the default is right for your use case. Measure it.

When Your Documents Have Structure: Hierarchical Chunking

Our engineering corpus (architecture decision records, system design documents, API specifications) looked nothing like the HR policy files. Where the HR documents were flowing prose, the engineering documents were structured: sections with headings, subsections with numbered steps, tables of parameters, code examples. The sentence window approach that worked beautifully on policy text was producing mediocre results on these.

The reason became clear when I looked at the retrieved chunks. A query about our API rate limiting policy would surface a sentence from the rate limiting section, expanded to its window, but the window was three sentences in the middle of a twelve-step configuration process. The model received context that was technically about rate limiting but was missing the actual numbers, because those appeared in a table two paragraphs away from the explanatory sentence that had been retrieved.

The fix was obvious once I framed it correctly: retrieve at paragraph granularity, but generate with section-level context. The paragraph is specific enough to win a retrieval contest. The section is complete enough for the LLM to reason from. I needed something that did both, not one or the other.

    from llama_index.core import StorageContext, VectorStoreIndex
    from llama_index.core.node_parser import HierarchicalNodeParser, get_leaf_nodes
    from llama_index.core.retrievers import AutoMergingRetriever
    from llama_index.core.storage.docstore import SimpleDocumentStore

    # Three-level hierarchy: page (2048t) -> section (512t) -> paragraph (128t)
    parser = HierarchicalNodeParser.from_defaults(
        chunk_sizes=[2048, 512, 128]
    )
    nodes = parser.get_nodes_from_documents(documents)
    leaf_nodes = get_leaf_nodes(nodes)  # Only leaf nodes go into the vector index

    # Full hierarchy stored in the docstore so AutoMergingRetriever can walk it
    docstore = SimpleDocumentStore()
    docstore.add_documents(nodes)
    storage_context = StorageContext.from_defaults(docstore=docstore)

    # Index the leaves only; a top-k of 6 is an illustrative value, not a tuned one
    index = VectorStoreIndex(leaf_nodes, storage_context=storage_context)
    vector_retriever = index.as_retriever(similarity_top_k=6)

    # At query time: if enough sibling leaves match, return the parent instead
    retriever = AutoMergingRetriever(
        vector_retriever, storage_context, verbose=True
    )

AutoMergingRetriever is what makes this practical. If it retrieves enough sibling leaf nodes from the same parent, it promotes them to the parent node automatically. You don’t hardcode ‘retrieve paragraphs, return sections’; the retrieval pattern drives the granularity decision at runtime. Specific queries get paragraphs. Broad queries that touch multiple parts of a section get the section.

For our engineering documents, context precision improved noticeably. We were no longer passing the model a sentence about rate limiting stripped of the table that contained the actual limits. We were passing it the section, which meant the model had the numbers it needed.
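The promotion rule itself is simple enough to sketch without the library. The function below is an illustration of the idea, not AutoMergingRetriever’s code: the node identifiers, the lookup dictionaries, and the `ratio_thresh` parameter are all hypothetical names.

```python
from collections import defaultdict

def auto_merge(retrieved_leaf_ids, parent_of, children_of, ratio_thresh=0.5):
    """Replace retrieved leaves with their parent when enough siblings matched."""
    by_parent = defaultdict(list)
    for leaf in retrieved_leaf_ids:
        by_parent[parent_of[leaf]].append(leaf)

    result = []
    for parent, leaves in by_parent.items():
        matched_share = len(leaves) / len(children_of[parent])
        if matched_share > ratio_thresh:
            result.append(parent)      # broad hit: hand the LLM the whole section
        else:
            result.extend(leaves)      # narrow hit: keep the precise paragraphs
    return result

# Hypothetical hierarchy: two sections with four paragraphs each
children_of = {"S1": ["p1", "p2", "p3", "p4"], "S2": ["q1", "q2", "q3", "q4"]}
parent_of = {leaf: parent for parent, leaves in children_of.items() for leaf in leaves}

merged = auto_merge(["p1", "p2", "p3", "q1"], parent_of, children_of)
print(merged)  # ['S1', 'q1']
```

Three of the four paragraphs in S1 matched, so the section is returned whole. Only one paragraph of S2 matched, so it stays a paragraph.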

Before choosing hierarchical chunking: Check your document structure. Run a quick audit of heading levels across your corpus. If the median document has fewer than two meaningful heading levels, the hierarchy has nothing to work with and you will get behaviour closer to fixed-size. Hierarchical chunking earns its complexity only when the structure it exploits is genuinely there.
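One way to run that audit, assuming the documents are available as Markdown text with `#`-style headings. That format is an assumption made for this sketch: for Confluence or HTML exports you would count heading tags instead.

```python
import re
from statistics import median

# Markdown ATX headings: one to six '#' characters followed by text
HEADING = re.compile(r"^(#{1,6})\s+\S", re.MULTILINE)

def heading_levels(markdown_text):
    """Distinct heading depths used in one document, e.g. {1, 2, 3}."""
    return {len(match.group(1)) for match in HEADING.finditer(markdown_text)}

def median_heading_depth(docs):
    """Median number of distinct heading levels per document."""
    return median(len(heading_levels(doc)) for doc in docs)

# Hypothetical three-document corpus
docs = [
    "# Rate limiting\n## Defaults\nSome text",
    "A plain page with no headings at all",
    "# API\ntext\n### Errors\n## Auth",
]
print(median_heading_depth(docs))  # 2
```

If the result is below two, the corpus probably lacks the structure a hierarchy needs.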

The Alluring Option: Semantic Chunking

After hierarchical chunking, I came across semantic chunking, and for a day or two I was convinced I had been doing everything wrong. The idea is clean: instead of imposing boundaries based on token count or document structure, you let the embedding model detect where the topic actually shifts. When the semantic distance between adjacent sentences crosses a threshold, that is your cut point. Every chunk, in theory, covers exactly one idea.

    from llama_index.core.node_parser import SemanticSplitterNodeParser

    # `embed_model` is whichever embedding model the rest of your pipeline uses
    parser = SemanticSplitterNodeParser(
        buffer_size=1,
        breakpoint_percentile_threshold=95,  # top 5% of distances = boundaries
        embed_model=embed_model
    )

In theory, this is the right abstraction. In practice, it introduces two problems that matter in production.

The first is indexing latency. Semantic chunking requires embedding every sentence before it can determine a single boundary. For a corpus of 50,000 documents, this is not a one-afternoon job. We ran a test on a subset of 5,000 documents and the indexing time was roughly four times longer than hierarchical chunking on the same corpus. For a system that needs to re-index incrementally as documents change, that cost is real.

The second is threshold sensitivity. The breakpoint_percentile_threshold controls how aggressively the parser cuts. At 95, it cuts rarely and produces large chunks. At 80, it cuts frequently and produces fragments. The right value depends on your domain, your embedding model, and the density of your documents, and you cannot know it without running evaluations. I spent two days tuning it on our corpus and settled on 92, which produced reasonable results but nothing that clearly justified the indexing cost over the hierarchical approach for our structured engineering documents.
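The effect of the threshold is easier to see on a handful of numbers. The sketch below takes a made-up list of distances between adjacent sentences and cuts wherever a distance exceeds the chosen percentile. It mirrors the idea behind the parameter, not the parser’s internals, and the percentile interpolation is an assumption.

```python
def percentile(values, pct):
    """Percentile with linear interpolation between the two nearest ranks."""
    ordered = sorted(values)
    rank = (len(ordered) - 1) * pct / 100
    lo = int(rank)
    hi = min(lo + 1, len(ordered) - 1)
    return ordered[lo] + (ordered[hi] - ordered[lo]) * (rank - lo)

def breakpoints(distances, threshold_pct):
    """Indices i where a chunk boundary falls between sentence i and i + 1."""
    cutoff = percentile(distances, threshold_pct)
    return [i for i, d in enumerate(distances) if d > cutoff]

# Hypothetical distances between eleven consecutive sentences
distances = [0.10, 0.20, 0.90, 0.15, 0.30, 0.80, 0.12, 0.25, 0.18, 0.22]

print(breakpoints(distances, 95))  # [2]
print(breakpoints(distances, 80))  # [2, 5]
```

Lowering the percentile from 95 to 80 turns one boundary into two on the same text, which is why the setting has to be tuned against evaluation scores, not picked by eye.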

Where semantic chunking genuinely outperformed the alternatives was on our mixed-format documents: pages exported from Notion that combined narrative explanations, inline tables, short code snippets, and bullet lists in no particular hierarchy. For these, neither sentence windows nor hierarchical parsing had a strong structural signal to work with, and semantic splitting at least produced topically coherent chunks.

My honest take: Semantic chunking is worth trying when your corpus is genuinely unstructured and single-strategy approaches are consistently underperforming. It is not a default upgrade from hierarchical or sentence-window approaches. It trades simplicity and speed for theoretical coherence, and that trade is only worth making with evidence from your own evaluation data.

The Problem Nobody Talks About: PDFs, Tables, and Slides

Everything I have described so far assumes that your documents are clean, well-formed text. In practice, enterprise knowledge bases are full of things that are not clean, well-formed text. They are scanned PDFs with two-column layouts. They are spreadsheet exports where the most important information is in a table. They are PowerPoint decks where the key insight is a diagram with a caption, and the caption only makes sense alongside the diagram.

None of the strategies above handle these cases. And in many enterprise corpora, these cases are not edge cases: they are the majority of the content.

Scanned PDFs and Layout-Aware Parsing

The standard LlamaIndex PDF loader uses PyPDF under the hood. It extracts text in the order the file stores it, which works acceptably for simple single-column documents but fails badly on anything with a complex layout. A two-column academic paper gets its text interleaved across columns. A scanned form gets garbled text or nothing at all. A report with sidebar callouts gets those callouts inserted mid-sentence into the main body.

For serious PDF processing, I switched to PyMuPDF (imported as fitz) for layout-aware extraction, and pdfplumber for documents where I needed granular control over table detection. The difference on complex documents was significant enough that I treated it as a separate preprocessing pipeline rather than just a different loader.

    import fitz  # PyMuPDF

    def extract_with_layout(pdf_path):
        doc = fitz.open(pdf_path)
        pages = []
        for page in doc:
            # get_text('blocks') returns text grouped by visual block:
            # (x0, y0, x1, y1, text, block_no, block_type)
            blocks = page.get_text('blocks')
            # Sort into 20 coarse vertical bands, then left to right within a band
            blocks.sort(key=lambda b: (round(b[1] / page.rect.height * 20), b[0]))
            page_text = ' '.join(b[4] for b in blocks if b[6] == 0)  # type 0 = text
            pages.append({'text': page_text, 'page': page.number})
        return pages

The key insight with PyMuPDF is that get_text('blocks') gives you text grouped by visual layout block rather than in raw character order. Sorting these blocks by vertical position and then horizontal position gives a stable, predictable reading order in a way that simple character-order extraction cannot. Be aware that this sort is row-major: on pages where two columns run the full height of the page, blocks need to be grouped by column before sorting vertically, otherwise the columns interleave.
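For pages where two columns run the full height, one possible variant is to group blocks by column first and only then sort vertically. The sketch below works on plain tuples in the same shape PyMuPDF returns for blocks, so it runs without a PDF. The midline split is an assumption that fits a simple two-column page only: full-width titles or three-column layouts need something smarter.

```python
def order_two_column(blocks, page_width):
    """Order text blocks column by column: all of the left column, then the right.

    Each block is (x0, y0, x1, y1, text, block_no, block_type), with type 0 = text.
    """
    midline = page_width / 2
    text_blocks = [b for b in blocks if b[6] == 0]
    # Column index first (0 = left, 1 = right), then top-to-bottom within it
    return sorted(text_blocks, key=lambda b: (0 if b[0] < midline else 1, b[1]))

# Hypothetical blocks on a 600pt-wide page, deliberately out of order
blocks = [
    (310, 50, 560, 100, "right-top", 0, 0),
    (40, 50, 290, 100, "left-top", 1, 0),
    (40, 120, 290, 170, "left-bottom", 2, 0),
    (310, 120, 560, 170, "right-bottom", 3, 0),
    (40, 200, 100, 260, "<image>", 4, 1),   # image block, dropped
]

ordered_text = [b[4] for b in order_two_column(blocks, page_width=600)]
print(ordered_text)  # ['left-top', 'left-bottom', 'right-top', 'right-bottom']
```

A row-major sort on the same blocks would give left-top, right-top, left-bottom, right-bottom, which is exactly the interleaving that breaks sentences across columns.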

For documents with heavy scanning artefacts or handwritten elements, Tesseract OCR via pytesseract is the fallback. It is slower and noisier, but for scanned HR forms or signed contracts, it is often the only option. I routed documents to OCR only when PyMuPDF returned fewer than 50 words per page, a heuristic that identified scanned documents reliably without adding OCR latency to the clean PDF path.
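A minimal sketch of that routing check, taking the per-page text that the extraction step already produced. Averaging across pages and treating an empty extraction as scanned are assumptions made here, and `needs_ocr` is a hypothetical helper name.

```python
def words_per_page(page_texts):
    """Whitespace-delimited word count for each page's extracted text."""
    return [len(text.split()) for text in page_texts]

def needs_ocr(page_texts, min_words=50):
    """True when the text layer is too thin to trust, i.e. probably a scan."""
    counts = words_per_page(page_texts)
    if not counts:
        return True  # nothing extracted at all: treat as scanned
    return sum(counts) / len(counts) < min_words

# Hypothetical inputs: a scan with stray stamp text, and a normal text PDF
scanned = ["", "APPROVED 12"]
clean = [" ".join(["word"] * 200)] * 3

print(needs_ocr(scanned), needs_ocr(clean))  # True False
```

Only documents that return True would be sent through pytesseract, so clean PDFs never pay the OCR cost.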

LlamaParse as a managed alternative: LlamaCloud’s LlamaParse handles complex PDF layouts, tables, and even diagram extraction without requiring you to build and maintain a preprocessing pipeline. For teams that cannot afford to invest engineering time in PDF parsing infrastructure, it is worth evaluating. The trade-off is sending documents to an external service. Check your data residency requirements before using it in a regulated environment.

Tables: The Retrieval Black Hole

In my experience, tables are the single most common cause of silent retrieval failures in enterprise RAG systems, and they are almost never addressed in standard tutorials. The reason is straightforward: a table is a two-dimensional structure in a one-dimensional representation. When you flatten a table to text, the row-column relationships disappear, and what you get is a sequence of values that is essentially uninterpretable without the context of the headers.

Take a table with columns for Product, Region, Q3 Revenue, and YoY Growth. Flatten it naively and the retrieved chunk looks like this: ‘Product A EMEA 4.2M 12% Product B APAC 3.1M -3%’. The embedding model has no idea what these numbers mean in relation to each other. The LLM, even if it receives that chunk, cannot reliably reconstruct the row-column relationships. You get a confident-sounding answer that is arithmetically wrong.

The approach that worked for us was to treat tables as a separate extraction type and reconstruct them as natural-language descriptions before indexing. For each table row, generate a sentence that encodes the row in readable form, preserving the relationship between values and headers.

    import pdfplumber

    def tables_to_sentences(pdf_path):
        sentences = []
        with pdfplumber.open(pdf_path) as pdf:
            for page in pdf.pages:
                for table in page.extract_tables():
                    # Need a header row plus at least one data row
                    if not table or len(table) < 2:
                        continue
                    headers = table[0]
                    for row in table[1:]:
                        # Reconstruct the row as a readable sentence
                        pairs = [f'{h}: {v}' for h, v in zip(headers, row) if h and v]
                        if pairs:
                            sentences.append(', '.join(pairs) + '.')
        return sentences

This is a deliberate trade-off. You lose the visual structure of the table, but you gain something more valuable: chunks that are semantically complete. A query asking for ‘Product A revenue in EMEA for Q3’ now matches a chunk that reads ‘Product: Product A, Region: EMEA, Q3 Revenue: 4.2M, YoY Growth: 12%’, which is both retrievable and interpretable.
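The difference between the two representations is easiest to see side by side on the example table from earlier. This sketch runs on an in-memory list of rows instead of a PDF, so the table literal and both helper names are illustrative.

```python
# The example table, in the list-of-rows shape that table extractors return
table = [
    ["Product", "Region", "Q3 Revenue", "YoY Growth"],
    ["Product A", "EMEA", "4.2M", "12%"],
    ["Product B", "APAC", "3.1M", "-3%"],
]

def flatten_naively(table):
    """What a plain text extractor produces: values with no headers attached."""
    return " ".join(cell for row in table[1:] for cell in row if cell)

def rows_to_sentences(table):
    """One self-describing sentence per data row, pairing each value with its header."""
    headers, *rows = table
    sentences = []
    for row in rows:
        pairs = [f"{h}: {v}" for h, v in zip(headers, row) if h and v]
        if pairs:
            sentences.append(", ".join(pairs) + ".")
    return sentences

print(flatten_naively(table))
# Product A EMEA 4.2M 12% Product B APAC 3.1M -3%
print(rows_to_sentences(table)[0])
# Product: Product A, Region: EMEA, Q3 Revenue: 4.2M, YoY Growth: 12%.
```

Each sentence carries its own headers, so it still makes sense after the chunker separates it from the rest of the table.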

For tables that are too complex for row-by-row reconstruction (pivot tables, multi-level headers, merged cells), I found it more reliable to pass the raw table to a capable LLM at indexing time and ask it to generate a prose summary. This adds cost and latency to the indexing pipeline, but it is worth it for tables that carry genuinely important information.

Slide Decks and Image-Heavy Documents

PowerPoint decks and PDF presentations are a particular challenge because the most meaningful information is often not in the text at all. A slide with a title of ‘Q3 Architecture Decision’ and a diagram showing service dependencies carries most of its meaning in the diagram, not in the six bullet points below it.

For text extraction from slide decks, python-pptx handles the mechanical extraction of slide titles, text boxes, and speaker notes. Speaker notes are often more information-dense than the slide body and should always be indexed alongside it.

    from pptx import Presentation

    def extract_slide_content(pptx_path):
        prs = Presentation(pptx_path)
        slides = []
        for i, slide in enumerate(prs.slides):
            title = ''
            body_text = []
            notes = ''
            for shape in slide.shapes:
                if not shape.has_text_frame:
                    continue
                # Use placeholder_format to reliably detect the title (idx 0);
                # shape_type alone is unreliable across slide layouts
                if shape.is_placeholder and shape.placeholder_format.idx == 0:
                    title = shape.text_frame.text
                else:
                    body_text.append(shape.text_frame.text)
            # Speaker notes live on a separate notes slide, when one exists
            if slide.has_notes_slide and slide.notes_slide.notes_text_frame is not None:
                notes = slide.notes_slide.notes_text_frame.text
            slides.append({
                'slide': i + 1,
                'title': title,
                'body': ' '.join(body_text),
                'notes': notes
            })
        return slides

For diagrams, screenshots, and image-heavy slides where the visual content carries meaning, text extraction alone is insufficient. There are two practical options: use a multimodal model to generate a description of the image at indexing time, or use LlamaParse, which handles mixed content extraction including images.

I used GPT-4V at indexing time for our most important slide decks: the quarterly architecture reviews and the system design documents that engineers referenced frequently. The cost was manageable because it applied only to slides flagged as diagram-heavy, and the improvement in retrieval quality on technical queries was noticeable. For a fully local stack, LLaVA via Ollama is a viable alternative, though the description quality for complex diagrams is meaningfully lower than GPT-4V at the time of writing.

The practical heuristic: If a slide has fewer than 30 words of text but contains an image, flag it for multimodal processing. If it has 30 words or more, text extraction is probably sufficient. This simple rule correctly routes roughly 85% of slides in a typical enterprise deck corpus without manual classification.
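The rule translates into a few lines. In this sketch the slide text and the image flag are assumed to come from an earlier extraction step, and `route_slide` and its return labels are hypothetical names.

```python
def route_slide(text, has_image, min_words=30):
    """Pick a processing path for one slide: 'multimodal' or 'text'."""
    word_count = len(text.split())
    if has_image and word_count < min_words:
        return "multimodal"   # meaning is probably in the picture
    return "text"             # enough words for plain extraction to carry it

# Hypothetical slides
diagram_slide = ("Q3 Architecture Decision", True)
wordy_slide = (" ".join(["bullet"] * 45), True)
title_only = ("Agenda", False)

for text, has_image in (diagram_slide, wordy_slide, title_only):
    print(route_slide(text, has_image))
# multimodal
# text
# text
```

Keeping the threshold as a parameter makes it easy to test other cut-offs against a hand-labelled sample of slides.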

A Decision Framework, Not a Ranking

By the time I had worked through all of this, I had stopped thinking about chunking strategies as a ranked list where one was ‘best.’ The right question is not ‘which strategy is most sophisticated?’ It is ‘which strategy matches this document type?’ And in most enterprise corpora, the answer is different for different document types.

The routing logic we ended up with was simple enough to implement in a day, and it made a measurable difference across the board.

    from llama_index.core.node_parser import (
        SentenceWindowNodeParser, HierarchicalNodeParser, TokenTextSplitter
    )

    def get_parser(doc):
        doc_type = doc.metadata.get('doc_type', 'unknown')
        source = doc.metadata.get('source_format', '')

        # Structured docs with clear heading hierarchy
        if doc_type in ['spec', 'runbook', 'adr', 'contract']:
            return HierarchicalNodeParser.from_defaults(
                chunk_sizes=[2048, 512, 128]
            )
        # Narrative text: policies, HR docs, onboarding guides
        elif doc_type in ['policy', 'hr', 'guide', 'faq']:
            return SentenceWindowNodeParser.from_defaults(window_size=3)
        # Slides and PDFs go through their own preprocessing first
        elif source in ['pptx', 'pdf_complex']:
            return TokenTextSplitter(chunk_size=256, chunk_overlap=30)
        # Safe default for unknown or short-form content
        else:
            return TokenTextSplitter(chunk_size=512, chunk_overlap=50)

The doc_type metadata comes from your document loaders. LlamaIndex’s Confluence connector exposes page template type. SharePoint exposes content type. For PDFs and slides loaded from a directory, you can infer type from filename patterns or folder structure. Tag documents at load time; retrofitting type metadata across an existing index is painful.
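For files loaded from a directory, the tagging step might look like the sketch below. The folder names, the extension mapping, and the choice to treat every PDF as `pdf_complex` are all assumptions for illustration. A real corpus needs its own conventions.

```python
from pathlib import PurePosixPath

# Hypothetical conventions: folder name -> doc_type, file extension -> source_format
FOLDER_TYPES = {"adr": "adr", "runbooks": "runbook", "policies": "policy", "hr": "hr"}
SUFFIX_FORMATS = {".pptx": "pptx", ".pdf": "pdf_complex"}

def infer_metadata(path):
    """Derive doc_type and source_format from a file path at load time."""
    p = PurePosixPath(path)
    doc_type = "unknown"
    for folder in p.parts[:-1]:            # every path component except the filename
        if folder.lower() in FOLDER_TYPES:
            doc_type = FOLDER_TYPES[folder.lower()]
            break
    return {
        "doc_type": doc_type,
        "source_format": SUFFIX_FORMATS.get(p.suffix.lower(), ""),
    }

print(infer_metadata("kb/runbooks/restart-queue.md"))
# {'doc_type': 'runbook', 'source_format': ''}
print(infer_metadata("kb/decks/q3-review.pptx"))
# {'doc_type': 'unknown', 'source_format': 'pptx'}
```

The returned dictionary uses the same two keys that a routing function would read from each document’s metadata, so it can be merged into the metadata when the document is created.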

Here is the full decision map:

What RAGAS Tells You About Your Chunks

Everything I have described above (the contractor exception, the context recall improvement, the table retrieval failures) I could only quantify because I had RAGAS running from early in the project. Without it, I would have been debugging by intuition, fixing the queries I happened to notice and missing the ones I didn’t.

One thing worth saying clearly before we go further: RAGAS is a measurement tool, not a repair tool. It will tell you precisely which class of failure you are looking at. It will not tell you how to fix it. That part is still yours.

In the context of chunking specifically, the four core metrics each diagnose a different failure mode:

The pattern that first told me fixed-size chunking was failing was a context recall score of 0.72 alongside a faithfulness score of 0.86. The model was grounding faithfully on what it received. The problem was that what it received was incomplete. The retriever was missing roughly one in four relevant pieces of information. That pattern, specifically, points upstream: the issue is in chunking or retrieval, not in generation.

After switching to sentence windows for our narrative documents, context recall moved to 0.88 and faithfulness came in at 0.91. Context precision also improved from 0.71 to 0.83 because sentence-level nodes are more topically specific than 512-token windows, and the retriever was surfacing fewer irrelevant chunks alongside the right ones.

Know which metric is failing before you change anything. Low context recall is a retrieval problem. Low faithfulness is a generation problem. Low context precision is a chunking problem. Treating them interchangeably is how you spend a week making changes that do nothing useful.
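That triage can be written down as a small lookup, which is handy in an evaluation script. The sketch assumes the scores arrive as a plain dictionary keyed by metric name, and the 0.8 floor is an arbitrary illustrative threshold, not a RAGAS recommendation.

```python
# Which layer to inspect first when a metric is low (mapping taken from the text)
LAYER_FOR_METRIC = {
    "context_recall": "retrieval",
    "faithfulness": "generation",
    "context_precision": "chunking",
}

def diagnose(scores, floor=0.8):
    """Return the layers whose metric falls below the floor, in a fixed order."""
    return [
        layer
        for metric, layer in LAYER_FOR_METRIC.items()
        if scores.get(metric, 1.0) < floor   # missing metrics are not flagged
    ]

# Scores quoted in this article, before and after the switch to sentence windows
fixed_size = {"context_recall": 0.72, "faithfulness": 0.86, "context_precision": 0.71}
sentence_window = {"context_recall": 0.88, "faithfulness": 0.91, "context_precision": 0.83}

print(diagnose(fixed_size))       # ['retrieval', 'chunking']
print(diagnose(sentence_window))  # []
```

The first result matches the pattern described above: generation is fine, and the failures sit upstream of it.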

The point is simple: run RAGAS before and after every significant chunking change. The numbers will save you days of guesswork.

Where This Leaves Us

My compliance colleague never knew that her Slack message was the start of weeks of chunking work. From her side, the system just started giving better answers after a few weeks. From mine, that message was the most useful feedback I got during the entire project, not because it told me what to build, but because it told me where I had been wrong.

That gap between the user experience and the engineering reality is why chunking is so easy to underestimate. When it fails, users don’t file tickets about ‘poor context recall at K=5.’ They quietly stop trusting the system. And by the time you notice the drop in usage, the problem has been there for weeks.

The habit this forced on me was simple: evaluate before you optimise. Run RAGAS on a realistic query set before you decide your chunking is good enough. Manually read a random sample of chunks, including the ones that produced wrong answers, not just the ones you tested on. Look at the chunk log when a user reports a problem. The evidence is there. Most teams just don’t look.

Chunking is not glamorous engineering. It doesn’t make for impressive conference talks. But it is the layer that determines whether everything above it (the embedding model, the retriever, the re-ranker, the LLM) actually has a chance of working. Get it right, measure it rigorously, and the rest of the pipeline has a foundation worth building on.

The LLM is not the bottleneck. In most production RAG systems, the bottleneck is the decision about where one chunk ends and the next begins.

There is more coming in this series, and if production RAG has taught me anything, it is that every layer you think you understand has at least one failure mode you haven’t found yet. The next article will explore another one. Stay tuned.