The real question isn’t when the next improved model will arrive, but rather who will create the proper framework to support it. Think of this framework as the infrastructure surrounding the model — the agent loop, tool definitions, context management, memory systems, prompts, and workflows that transform a basic language model into a practical product. The model serves as the engine, while the framework is everything that makes it functional. Cursor and Claude Desktop are examples of such frameworks.

A persistent discussion in the AI coding tool community revolves around whether adopting a specific framework leads to vendor dependency. Memory represents the most critical aspect of this issue. When your agent’s memory is contained within a closed framework or accessible only through a proprietary API, you don’t truly control it, and the cost of switching increases rapidly. However, this doesn’t have to be the case.

The concept behind this blog post is straightforward: maintain the memory layer independently from the framework, and allow any framework to connect to it.

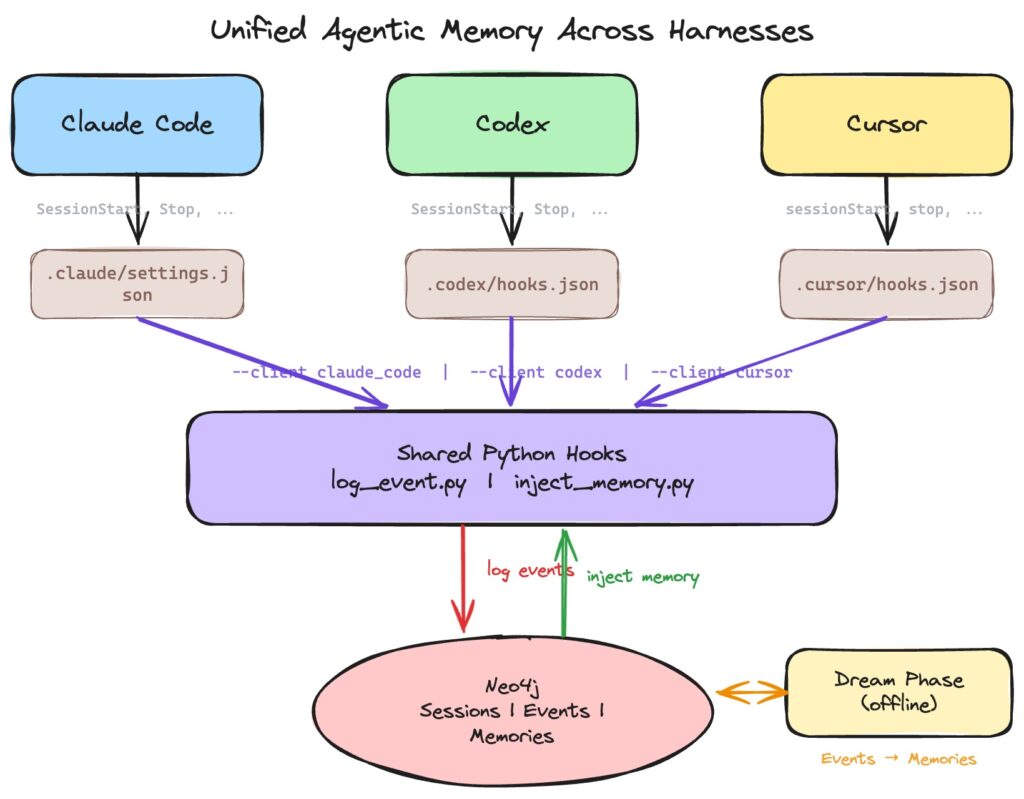

In this article, I’ll demonstrate how to create a single, shared memory layer that functions across three different coding agents — Claude Code, OpenAI’s Codex, and Cursor — using hooks as the integration method and Neo4j as the storage solution.

The hook integration code is available on GitHub.

MCP tools have limitations for memory management

MCP (Model Context Protocol) servers are the standard approach for connecting agents to external systems. They work well. You can set up a Neo4j database as an MCP tool and allow the agent to query it when needed.

However, MCP tools are agent-initiated. The model must choose to use the tool and understand when and why to do so. This means:

- The agent must “remember to remember” — it needs to actively decide to store information worth recalling later.

- Consistency isn’t guaranteed — one session might record everything, while the next might record nothing.

- You depend on the model’s judgment about what’s worth remembering, in real time, while it’s focused on other tasks.

What’s really needed is passive, deterministic logging — a system that captures every session event regardless of what the model is doing, without using any of its context or attention.

This is precisely what hooks provide.

Introducing hooks

Hooks are shell commands that execute automatically during lifecycle events: when a session begins, when a user submits a prompt, before and after each tool use, and when the session concludes. The agent doesn’t choose to trigger them — they run automatically.

The crucial insight is that hooks are remarkably consistent across providers. Claude Code, Codex, Cursor, and others all support essentially the same lifecycle events:

- SessionStart for when the agent session begins

- UserPromptSubmit (or beforeSubmitPrompt in Cursor) for when the user sends a message

- PreToolUse / PostToolUse for before and after each tool call

- Stop for when the session ends
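Cursor names the prompt event differently, so a single script needs a small normalization step before it can label events consistently. The sketch below is hypothetical: `normalize_event` and the alias table are illustrative, and only the `beforeSubmitPrompt` mapping comes from the list above.

```python
from typing import Dict, Optional

# Canonical lifecycle events recorded by the memory layer.
CANONICAL_EVENTS = {"SessionStart", "UserPromptSubmit", "PreToolUse", "PostToolUse", "Stop"}

# Per-client aliases (hypothetical table). Only Cursor's beforeSubmitPrompt is
# taken from the article; extend it with whatever names your clients emit.
EVENT_ALIASES: Dict[str, Dict[str, str]] = {
    "cursor": {"beforeSubmitPrompt": "UserPromptSubmit"},
}


def normalize_event(client: str, raw_name: str) -> Optional[str]:
    """Map a client-specific event name to its canonical name.

    Returns None for events this layer ignores (e.g. notifications).
    """
    name = EVENT_ALIASES.get(client, {}).get(raw_name, raw_name)
    return name if name in CANONICAL_EVENTS else None
```

For example, `normalize_event("cursor", "beforeSubmitPrompt")` returns `"UserPromptSubmit"`, while an event outside the five above returns `None` and can simply be skipped.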

The hook receives a JSON payload on stdin containing the session ID, the event name, and, depending on the event, the tool details or the user prompt. The hook can also write JSON to stdout to inject additional context back into the conversation. Same interface, three different frameworks/clients.
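A minimal sketch of the stdin side of that interface, written in Python since the hooks here are Python scripts. The payload key names (`session_id`, `hook_event_name`, `tool_name`, `prompt`) follow Claude Code's hook input as I understand it and are an assumption for the other clients; `HookEvent` and `parse_payload` are hypothetical names.

```python
import json
import sys
from dataclasses import dataclass
from typing import Optional


@dataclass
class HookEvent:
    """The fields the memory layer reads from a hook payload."""
    session_id: str
    event: str
    tool_name: Optional[str] = None  # present on PreToolUse / PostToolUse
    prompt: Optional[str] = None     # present on UserPromptSubmit


def parse_payload(stdin_text: str) -> HookEvent:
    """Parse the JSON document the client writes to the hook's stdin.

    Key names are assumed from Claude Code's payloads; other clients may differ.
    """
    data = json.loads(stdin_text)
    return HookEvent(
        session_id=str(data.get("session_id", "")),
        event=str(data.get("hook_event_name", "")),
        tool_name=data.get("tool_name"),
        prompt=data.get("prompt"),
    )


def main() -> None:
    event = parse_payload(sys.stdin.read())
    # Diagnostics go to stderr; stdout is reserved for context injection,
    # and printing nothing leaves the conversation untouched.
    print(f"[memory-hook] {event.event} in session {event.session_id}", file=sys.stderr)


if __name__ == "__main__":
    main()
```

You can exercise it without any agent by piping a payload in, e.g. `echo '{"session_id": "abc", "hook_event_name": "Stop"}' | python3 hook.py`, where `hook.py` is whatever you saved the file as.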

There are additional hooks available, such as notification events, subagent stop, or pre-compact hooks, but we won’t be using those here.

Shared memory layer

Now we need a place to store the memory. Quick disclosure: I work at Neo4j, so we’ll be using it in this example.

The data model is simple. Each agent session is represented as a node connected to a linked list of event nodes, one for each hook invocation. Events are categorized by the lifecycle event that triggered them: SessionStart, UserPromptSubmit, PreToolUse, PostToolUse, Stop. A session thus becomes an ordered timeline of everything that occurred during that run.
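One way to append an event to that linked list is sketched below. It assumes the official Neo4j Python driver (5.x), whose `Driver.execute_query` accepts query parameters as keyword arguments; the labels, relationship types (`FIRST_EVENT`, `NEXT`, `LAST_EVENT`), and property names are hypothetical, and the lifecycle event is stored as a `type` property rather than a label. Keeping a `LAST_EVENT` pointer on the session makes each append constant-time instead of walking the list.

```python
import json

# Hypothetical Cypher: append one event to a session's linked list,
# (:Session)-[:FIRST_EVENT]->(:Event)-[:NEXT]->(:Event)-..., and move the
# session's LAST_EVENT pointer to the new event.
APPEND_EVENT = """
MERGE (s:Session {id: $session_id})
  ON CREATE SET s.client = $client, s.started_at = datetime()
WITH s
OPTIONAL MATCH (s)-[lastRel:LAST_EVENT]->(prev:Event)
CREATE (e:Event {type: $event_type, payload: $payload, created_at: datetime()})
CREATE (s)-[:LAST_EVENT]->(e)
FOREACH (ignored IN CASE WHEN prev IS NULL THEN [1] ELSE [] END |
  CREATE (s)-[:FIRST_EVENT]->(e))
FOREACH (ignored IN CASE WHEN prev IS NULL THEN [] ELSE [1] END |
  CREATE (prev)-[:NEXT]->(e))
DELETE lastRel
"""


def log_event(driver, client: str, session_id: str, event_type: str, payload: dict) -> None:
    """Append one hook invocation to the session timeline.

    `driver` is expected to be a neo4j.Driver (5.x); only execute_query is used.
    """
    driver.execute_query(
        APPEND_EVENT,
        session_id=session_id,
        client=client,
        event_type=event_type,
        # Node properties cannot hold nested maps, so the raw payload is stored as JSON text.
        payload=json.dumps(payload),
    )
```

With a real driver this would be called as `log_event(GraphDatabase.driver(uri, auth=(user, password)), "cursor", session_id, "PreToolUse", payload)`. On the first event of a session `lastRel` is null, and deleting null is a no-op.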

All five event types are recorded in the store, providing a complete audit trail of every session across every framework. Two of them also serve as injection points. SessionStart triggers before the agent reads its system prompt, so any output from the hook gets added to the beginning of the system prompt. This is how persistent, agent-level memory enters the context. UserPromptSubmit triggers just before the user message is sent, and any output gets appended to the user prompt. This handles turn-level context, such as retrieving memories relevant to what the user just typed.
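The two injection points could be handled as in the sketch below, which only reads memories the dream phase has already written, so the hook stays fast. The output shape (`hookSpecificOutput.additionalContext`) follows Claude Code's convention as I understand it; other clients may expect something different. `build_injection` and its naive keyword matching are hypothetical stand-ins for real retrieval.

```python
import json
from typing import Dict, Optional


def build_injection(event: str, prompt: Optional[str], memories: Dict[str, str]) -> Optional[str]:
    """Return the JSON a hook would print on stdout, or None to inject nothing.

    `memories` maps semantic paths (e.g. "profile/role.md") to note text that the
    offline dream phase has already computed, so no model call happens here.
    `event` is assumed to be a canonical name (Cursor's name already normalized).
    """
    if event == "SessionStart":
        # Agent-level memory: every profile note goes into the session's starting context.
        notes = [text for path, text in sorted(memories.items()) if path.startswith("profile/")]
    elif event == "UserPromptSubmit" and prompt:
        # Turn-level memory: naive keyword overlap, a placeholder for real retrieval.
        words = {w.strip(".,;:!?").lower() for w in prompt.split() if len(w) > 3}
        notes = [text for _, text in sorted(memories.items()) if words & set(text.lower().split())][:3]
    else:
        return None  # PreToolUse, PostToolUse and Stop are only logged
    if not notes:
        return None
    return json.dumps({
        "hookSpecificOutput": {"hookEventName": event, "additionalContext": "\n\n".join(notes)}
    })
```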

So what happens when we start a new session with hooks enabled in one of these frameworks, for example Cursor?

We can examine the results in the Neo4j Browser.

One important limitation: hooks operate outside the framework’s model session. You can’t use the same LLM that the agent is communicating with. If you need LLM-powered functionality within a hook, you must make your own model call, which adds latency to every event the agent triggers. That’s why the hooks here only perform two tasks: logging events and injecting pre-computed memories. They remain fast and deterministic.
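Because a hook blocks the agent while it runs, it can also help to make the store write time-bounded and fail-open, so a slow or unavailable Neo4j instance costs at most a fixed delay and never breaks the session. The sketch below is one way to do that with a daemon thread; `run_fail_open` and the two-second default budget are hypothetical choices, not part of the project.

```python
import sys
import threading
from typing import Callable


def run_fail_open(task: Callable[[], object], timeout_s: float = 2.0) -> bool:
    """Run `task` (e.g. the Neo4j write) under a time budget and never raise.

    Returns True only if the task finished without error before the deadline.
    The worker is a daemon thread, so a hung write does not keep the hook
    process alive after the hook exits.
    """
    finished = threading.Event()
    outcome = {"ok": False}

    def target() -> None:
        try:
            task()
            outcome["ok"] = True
        except Exception as exc:  # a broken memory store must not break the agent
            print(f"[memory-hook] write failed: {exc!r}", file=sys.stderr)
        finally:
            finished.set()

    threading.Thread(target=target, daemon=True).start()
    finished.wait(timeout_s)
    return outcome["ok"]
```

A hook would call something like `run_fail_open(lambda: write_event(payload))`, with `write_event` standing in for your own store call, and then exit with status 0 regardless of the result.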

Dream phase

The actual memory processing occurs in a separate dream phase: extracting facts from sessions, summarizing what

After a session ends, its events still have to be turned into memories. Here that is simply a scheduled task that runs every few hours, processes the events collected since the previous run, and saves the results back to the memory store. You could trigger a memory update asynchronously each time a session ends, but that seems excessive; a periodic batch job is more straightforward and works well for this demo.
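The batch job mostly needs to know what is new since its last pass. A minimal sketch, assuming events have already been fetched from the store as dicts with `session_id` and `created_at` keys and that a checkpoint timestamp is kept per session; `collect_batches` is a hypothetical name, and the scheduling itself (cron, a CI job, and so on) is left out.

```python
from collections import defaultdict
from datetime import datetime
from typing import Dict, Iterable, List, Tuple


def collect_batches(
    events: Iterable[dict], checkpoints: Dict[str, datetime]
) -> Tuple[Dict[str, List[dict]], Dict[str, datetime]]:
    """Select events newer than each session's checkpoint, grouped by session.

    Returns the per-session batches plus updated checkpoints. Persist the new
    checkpoints only after the dream step succeeds, so a failed run is retried.
    """
    batches: Dict[str, List[dict]] = defaultdict(list)
    new_checkpoints = dict(checkpoints)
    for ev in sorted(events, key=lambda e: e["created_at"]):
        sid = ev["session_id"]
        last = checkpoints.get(sid)
        if last is not None and ev["created_at"] <= last:
            continue  # already distilled by an earlier run
        batches[sid].append(ev)
        new_checkpoints[sid] = ev["created_at"]
    return dict(batches), new_checkpoints
```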

The dream job collects all events since the session's last checkpoint, passes them to Claude along with the current memory store, and asks it to produce a concise set of lasting notes. The notes follow a markdown wiki format, the same structure Karpathy and others have adopted for personal LLM memory and the same format Anthropic's skills use. Each memory is stored as a file at a semantic path such as profile/role.md, tools/bash/common-flags.md, or project/neo4j-skills.md, with YAML frontmatter at the top and descriptive content below. Claude is instructed to merge rather than append, so each path is a living document rather than a log: if new events conflict with an existing note, that note is updated. The result is a collection of compact, self-contained markdown files that a future session can load fresh, identical in structure to a skill but generated by the dream phase rather than written by hand.
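The note format and the merge rule can be sketched in a few lines. Everything here is hypothetical: the frontmatter fields, the prompt wording, and the function names are illustrative, and the actual Claude call and the parsing of its reply are omitted. The key design choice is that the model sees the current notes and returns full rewritten notes, so storing them is a plain overwrite per path.

```python
from typing import Dict, List


def render_note(title: str, tags: List[str], body: str) -> str:
    """Render one memory as markdown with YAML frontmatter (illustrative fields)."""
    return f"---\ntitle: {title}\ntags: [{', '.join(tags)}]\n---\n\n{body.strip()}\n"


def build_dream_prompt(events: List[str], notes: Dict[str, str]) -> str:
    """Ask the model to fold new events into the existing notes (hypothetical wording)."""
    current = "\n\n".join(f"### {path}\n{text}" for path, text in sorted(notes.items()))
    new = "\n".join(f"- {e}" for e in events)
    return (
        "You maintain a markdown memory wiki. Each note lives at a semantic path such as "
        "profile/role.md and starts with YAML frontmatter.\n"
        "Merge the new events into the existing notes: rewrite a note when the events "
        "refine or contradict it, add a new path only for a new topic, and return the "
        "full text of every note you changed.\n\n"
        f"## Current notes\n{current or '(none)'}\n\n## New events\n{new}\n"
    )


def upsert_notes(notes: Dict[str, str], changed: Dict[str, str]) -> Dict[str, str]:
    """Apply rewritten notes: one living document per path, replaced rather than appended."""
    for path in changed:
        if path.startswith("/") or not path.endswith(".md"):
            raise ValueError(f"not a relative markdown path: {path!r}")
    return {**notes, **changed}
```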

Running this on our example produces the following memories.

Now, if I launched a different harness—Claude Code Desktop with hooks enabled—I would see the following response.

Accessing the memory

The final component is enabling the agent to access the memory layer. As noted, there are two methods for injecting information into the agent: hooks and MCP tools.

Hooks operate deterministically and execute at the beginning of each session to populate the system prompt. This is where profile details and guidance on efficient memory usage should be placed. You can also add extra context when a user prompt submission event occurs, but it’s limited to appending only—you can’t modify other parts of the prompt.

MCP tools, conversely, provide the LLM with direct, on-demand access to the memory layer. Rather than passively receiving context at startup, the agent can search for pertinent memories, save new information, and modify or delete existing entries. In essence, it provides basic CRUD operations over the abstracted markdown files stored in Neo4j.
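The project itself exposes no MCP tools, but the CRUD surface such tools would wrap is small. The sketch below uses an in-memory dict as a stand-in for the markdown notes stored in Neo4j; `MemoryStore` and its method names are hypothetical, and each method would still need to be registered as a tool with an MCP server.

```python
from typing import Dict, List, Optional


class MemoryStore:
    """In-memory stand-in for the markdown notes kept in Neo4j (hypothetical API)."""

    def __init__(self) -> None:
        self._notes: Dict[str, str] = {}

    def search(self, query: str, limit: int = 5) -> List[str]:
        """Return paths whose path or text contains every query term (naive match)."""
        terms = query.lower().split()
        hits = [
            path for path, text in sorted(self._notes.items())
            if all(t in f"{path} {text}".lower() for t in terms)
        ]
        return hits[:limit]

    def read(self, path: str) -> Optional[str]:
        """Return the note at `path`, or None if it does not exist."""
        return self._notes.get(path)

    def write(self, path: str, text: str) -> None:
        """Create or replace a note (the create and update of CRUD)."""
        self._notes[path] = text

    def delete(self, path: str) -> bool:
        """Remove a note; report whether it existed."""
        return self._notes.pop(path, None) is not None
```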

Ultimately, I believe you’ll nearly always need both. In this project, we only implemented hooks—no MCP tools—but you can always integrate the official Neo4j MCP to allow the agent to explore the graph.

Getting it to work

Interestingly, to set up the hooks I simply opened each harness and asked its agent to install them, though there are surely more robust ways to do it.
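If you prefer to write the configuration yourself, the sketch below generates a `hooks` block for Claude Code's `settings.json` (for example `.claude/settings.json` in a project) that registers one command for all five events. The structure reflects my understanding of Claude Code's hook settings and should be checked against its documentation; the wrapper path is hypothetical, the `--client` argument mirrors the per-harness wrappers described in the summary, and Cursor and Codex use their own configuration files, which are not shown.

```python
import json
import shlex

EVENTS = ["SessionStart", "UserPromptSubmit", "PreToolUse", "PostToolUse", "Stop"]
TOOL_EVENTS = {"PreToolUse", "PostToolUse"}


def claude_code_hooks(script: str, client: str = "claude") -> dict:
    """Build a Claude Code `hooks` settings block running one wrapper on every event.

    `script` is a hypothetical path to your per-harness wrapper.
    """
    command = f"{shlex.quote(script)} --client {shlex.quote(client)}"
    hooks = {}
    for event in EVENTS:
        entry = {"hooks": [{"type": "command", "command": command}]}
        if event in TOOL_EVENTS:
            entry["matcher"] = "*"  # match every tool
        hooks[event] = [entry]
    return {"hooks": hooks}


if __name__ == "__main__":
    # Print the block so it can be merged into settings.json by hand.
    print(json.dumps(claude_code_hooks("/path/to/memory-hook.sh"), indent=2))
```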

Summary

If you don’t control your memory, you don’t control your agent. Every harness today creates its own isolated ecosystem of context, preferences, and session history. Switch between them and you start over. That doesn’t have to be the reality.

Hooks disrupt this pattern. They enable you to build integrations that connect to any harness externally, and the interface is remarkably uniform. Claude Code, Codex, and Cursor all trigger the same lifecycle events: session start, prompt submission, tool use, session end. The hook receives JSON via stdin, optionally outputs JSON on stdout to inject context, and that’s the complete interface. Because hooks execute deterministically on every event, they don’t consume model attention or depend on the agent to determine what’s worth preserving. The same two Python scripts support all three clients; minimal shell wrappers that accept a --client flag are the only per-harness adaptation needed.

The architecture consists of three layers:

- Hooks (online): passively record every event into Neo4j as a linked list per session. No model calls and no waiting on an LLM; each event is a single append to the store.

- Dream phase (offline): a batch job processes accumulated events, asks Claude to distill them into lasting markdown memories, and saves them back. Memories are organized by topic and merged rather than appended, so they remain current instead of growing indefinitely.

- Injection (online): at the next session start in any harness, profile memories are loaded into context. With each user prompt, relevant memories are automatically searched and appended.

The result is a memory layer that exists beneath all three harnesses, functions without any of them being aware of the others, and is entirely yours. You can transition from Cursor to Claude Code to Codex mid-project and continue exactly where you stopped. Your agent’s knowledge of who you are, what you’re working on, and how you prefer to work travels with you—not with the tool.

Code is available here.

P.S.: All images were created by the author.