We automated the analysis and made the code available on GitHub.

This project began when I attempted to replicate the paper “Learning Word Vectors for Sentiment Analysis” by Maas et al. (2011).

At the time, I was finishing my final year of engineering school. My objective was to reproduce the study, critically examine the authors’ methodology, and, where possible, benchmark their approach against alternative word representation techniques, including those based on large language models.

What stood out to me was the method’s simplicity and elegance. It reminded me of logistic regression in credit scoring: straightforward, interpretable, and remarkably effective when applied properly.

I found the paper so insightful that I wanted to share the key takeaways from my experience.

I highly encourage you to read the original paper. It offers valuable insights into word representation, particularly how to measure the closeness between two words—both in terms of meaning and sentiment polarity—based on the specific contexts in which they appear.

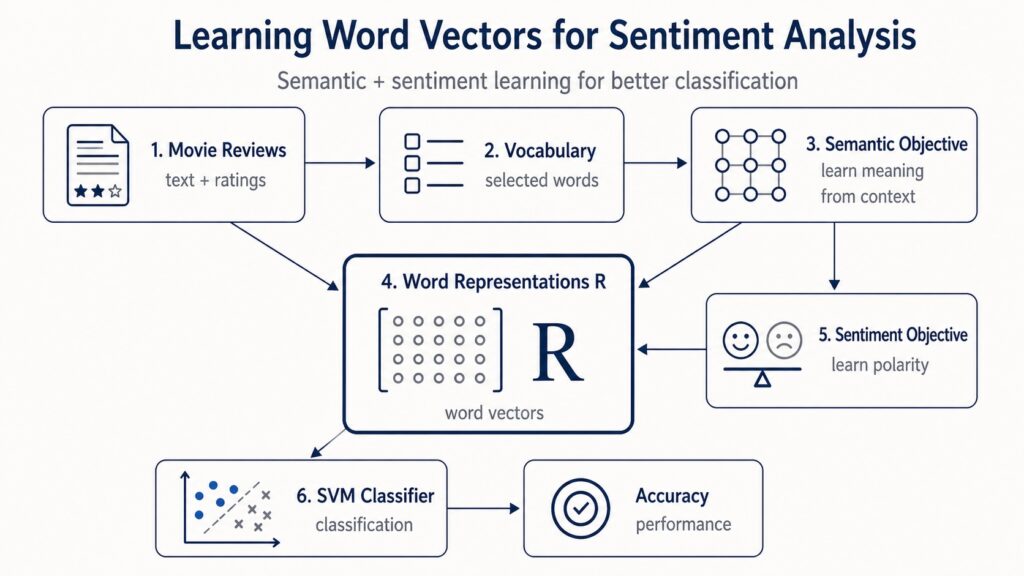

On the surface, the model appears straightforward: build a vocabulary, learn word vectors, integrate sentiment signals, and test performance on IMDb reviews.

However, as I began coding it up, I discovered that several implementation details significantly impact results: how the vocabulary is constructed, how document vectors are formed, how the semantic learning objective is optimized, and how sentiment information is embedded into the word vectors.

In this guide, we’ll walk through the core concepts of the paper using Python.

We’ll start by explaining the intuition behind the model. Next, we’ll describe the dataset structure, build the vocabulary, implement the semantic component, incorporate the sentiment objective, and finally assess the learned representations using a linear SVM classifier.

The SVM will let us quantify classification accuracy and compare our outcomes with those reported in the original study.

What problem does the paper address?

Traditional Bag-of-Words models work well for classification tasks, but they fail to capture meaningful relationships between words. For instance, “wonderful” and “amazing” should be close in vector space because they convey similar meanings and sentiments. Conversely, “wonderful” and “terrible” might appear in similar movie review contexts but express opposite sentiments.

The paper aims to learn word embeddings that reflect both semantic similarity and sentiment orientation.

Data structure

The dataset includes:

- 25,000 labeled training reviews

- 50,000 unlabeled training reviews

- 25,000 labeled test reviews

The labeled reviews are categorized by sentiment:

- Negative reviews have ratings from 1 to 4

- Positive reviews have ratings from 7 to 10

These ratings are linearly scaled to the range [0, 1], enabling the model to treat sentiment as a continuous probability of positive polarity.
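As a minimal sketch of this rescaling, we assume the linear map sends 1 star to 0 and 10 stars to 1. The function name `scale_rating` is ours and does not come from the paper.

```python
def scale_rating(stars: int) -> float:
    """Map a 1-10 star rating linearly onto [0, 1].

    Assumed mapping (not confirmed by the source): 1 star -> 0.0, 10 stars -> 1.0.
    """
    if not 1 <= stars <= 10:
        raise ValueError(f"expected a rating between 1 and 10, got {stars}")
    return (stars - 1) / 9


# Negative reviews (1-4 stars) land below 0.5, positive ones (7-10) above it.
for stars in (1, 4, 7, 10):
    print(stars, round(scale_rating(stars), 3))
```

Under this mapping, the 1 to 4 and 7 to 10 rating ranges fall on opposite sides of 0.5, which is what lets the scaled value act as a probability of positive polarity.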

    aclImdb/
    ├── train/
    │   ├── pos/    "0_10.txt"   -> review #0, 10 stars, very positive
    │   │           "1_7.txt"    -> review #1, 7 stars, positive
    │   ├── neg/    "10_2.txt"   -> review #10, 2 stars, very negative
    │   │           "25_4.txt"   -> review #25, 4 stars, negative
    │   └── unsup/  "938_0.txt"  -> review #938, 0 stars, unlabeled
    └── test/
        ├── pos/    positive reviews, never seen during training
        └── neg/    negative reviews, never seen during training

We can represent each document using a Review class with the following fields: text, stars, label, and bucket.

Of course, it doesn’t have to be a class named Review—any data structure works as long as it includes these attributes.

    from dataclasses import dataclass
    from typing import Optional

    @dataclass
    class Review:
        text: str
        stars: int
        label: str
        bucket: str

Vocabulary construction

The paper constructs a fixed vocabulary by first excluding the 50 most common words, then selecting the next 5,000 most frequent tokens.

No stemming is applied, and stop words are not removed. This matters because certain stop words, especially negations like “not” or “never”, can carry important sentiment cues.

Before building the vocabulary, we first examine the raw data.

We observed that the reviews aren’t fully preprocessed. Some contain HTML tags, which we strip during data loading. We also remove punctuation attached to words, such as ".", ",", "!", or "?".

This differs slightly from the original paper. The authors retain certain non-word tokens because they may help capture sentiment—for example, "!" or ":-)" can signal emotional tone. In our version, we remove such punctuation and later assess how much this choice affects model performance.
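The cleaning step we describe can be sketched as follows. This is an illustration rather than our exact loader: the regular expressions, the lowercasing, and the name `tokenize` are assumptions made for the example.

```python
import re
from typing import List

# Matches HTML tags such as <br />, which appear in the raw reviews.
TAG_RE = re.compile(r"<[^>]+>")
# Keeps alphanumeric tokens, optionally with an apostrophe suffix ("didn't").
TOKEN_RE = re.compile(r"[a-z0-9]+(?:'[a-z]+)?")


def tokenize(text: str) -> List[str]:
    """Strip HTML tags, lowercase (an assumption), and drop punctuation."""
    text = TAG_RE.sub(" ", text).lower()
    return TOKEN_RE.findall(text)


print(tokenize("Not great!<br /><br />I didn't like it, at all."))
```

Note that negations such as "not" and "didn't" survive this step, while tokens like "!" or ":-)" are discarded, which is exactly the deviation from the paper discussed above.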

When working with text data, one question always arises:

How should we numerically represent documents and words?

The authors begin by gathering all tokens from the training set—both labeled and unlabeled reviews. Think of this as collecting every word from all training documents into a single pool.

Next, to enable numerical modeling, they create a vocabulary—a curated set of words.

They build a dictionary mapping each token (which we’ll loosely refer to as a “word”) to its frequency—the total number of times it appears across the entire training set, including both labeled and unlabeled reviews.

From this, they select the 5,000 most frequent words after discarding the top 50 most common terms.

These 5,000 words form the vocabulary V.
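A minimal sketch of this selection, assuming the documents are already tokenized. The function name `build_vocabulary` and its parameters are illustrative, and the toy call uses small values instead of 50 and 5,000.

```python
from collections import Counter
from typing import Dict, Iterable, List


def build_vocabulary(
    token_lists: Iterable[List[str]], skip_top: int = 50, size: int = 5000
) -> Dict[str, int]:
    """Map each kept word to a column index, skipping the most frequent terms."""
    counts: Counter = Counter()
    for tokens in token_lists:
        counts.update(tokens)
    # Words sorted from most to least frequent.
    ranked = [word for word, _ in counts.most_common()]
    kept = ranked[skip_top:skip_top + size]
    return {word: index for index, word in enumerate(kept)}


docs = [
    ["the", "film", "was", "great"],
    ["the", "film", "was", "dull"],
    ["the", "plot", "was", "great"],
]
# Toy setting: drop the 2 most frequent words, keep the next 3.
vocab = build_vocabulary(docs, skip_top=2, size=3)
print(vocab)
```

The returned index of each word is the column it occupies in the matrix R.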

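Each word in V corresponds to one column in the representation matrix R. The authors choose to embed each word in a 50-dimensional space. As a result, the matrix R has the following dimensions:

$$R \in \mathbb{R}^{\beta \times |V|}, \qquad \beta = 50, \quad |V| = 5000$$

Each column in the matrix R represents a vector for a single word:

$$\phi_w = R\,e_w \in \mathbb{R}^{\beta}$$

where $e_w$ is the one-hot indicator vector of word w.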

The model’s objective is to learn this matrix R so that the resulting word vectors capture two key aspects simultaneously:

- Semantic information — words appearing in similar contexts should have similar vectors;

- Sentiment information — words expressing similar emotional tone or polarity should also be close together.

This dual objective forms the core idea of the paper.

After loading and cleaning the data and building the vocabulary, we can proceed to constructing the model itself.

The first stage of the model is unsupervised. It learns semantic word representations from both labeled and unlabeled reviews.

The second stage then introduces supervision by leveraging star ratings to inject sentiment information into the same vector space.

Semantic component

The semantic component defines a probabilistic model for documents.

Each document is linked to a hidden vector theta, which captures the overall semantic direction of that document.

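Each word is represented by a vector $\phi_w$, stored as a column within the matrix R.

The likelihood of seeing a word w in a document is computed using a softmax model:

$$p(w \mid \theta; R, b) = \frac{\exp\left(\theta^{\top}\phi_w + b_w\right)}{\sum_{w' \in V} \exp\left(\theta^{\top}\phi_{w'} + b_{w'}\right)}$$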

In simple terms, a word is more likely to appear when its vector aligns well with the document’s vector theta.
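To make this concrete, here is a small NumPy sketch of the softmax over the vocabulary. The random initialization of R and the helper name `word_log_probs` are assumptions for illustration only.

```python
import numpy as np


def word_log_probs(theta: np.ndarray, R: np.ndarray, b: np.ndarray) -> np.ndarray:
    """Return log p(w | theta) for every word in the vocabulary."""
    scores = R.T @ theta + b          # one score per word: theta . phi_w + b_w
    scores = scores - scores.max()    # shift for numerical stability
    return scores - np.log(np.exp(scores).sum())


rng = np.random.default_rng(0)
R = rng.normal(scale=0.1, size=(50, 5000))  # 50-dimensional vectors, 5,000 words
b = np.zeros(5000)                           # one bias per word
theta = rng.normal(size=50)                  # document vector

probs = np.exp(word_log_probs(theta, R, b))
print(probs.shape, probs.sum())
```

The word with the highest probability is the one whose score, the dot product with theta plus its bias, is largest.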

MAP estimation of theta

The model alternates between two steps.

First, it holds R and b fixed and estimates one theta vector for each document.

Then, it holds theta fixed and updates R and b.

The theta vectors are not kept as permanent parameters. They are temporary, document-specific variables used to refine the word representations.
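The first of these two steps can be sketched with plain gradient ascent. This is an assumption on our part: the optimizer, the learning rate, the step count, and the names `estimate_theta` and `theta_objective` are illustrative, and the regularizer is written as a penalty weighted by `lam`.

```python
import numpy as np


def log_softmax(scores: np.ndarray) -> np.ndarray:
    scores = scores - scores.max()
    return scores - np.log(np.exp(scores).sum())


def theta_objective(theta, counts, R, b, lam):
    """Log-likelihood of the document's words minus an L2 penalty on theta."""
    return counts @ log_softmax(R.T @ theta + b) - lam * theta @ theta


def estimate_theta(counts, R, b, lam=0.01, lr=0.05, steps=200):
    """MAP estimate of theta for one document, with R and b held fixed."""
    theta = np.zeros(R.shape[0])
    n_tokens = counts.sum()
    for _ in range(steps):
        probs = np.exp(log_softmax(R.T @ theta + b))
        # Gradient: observed word vectors minus expected ones, minus the penalty.
        grad = R @ (counts - n_tokens * probs) - 2 * lam * theta
        theta = theta + lr * grad
    return theta


rng = np.random.default_rng(0)
R = rng.normal(scale=0.5, size=(5, 20))                     # toy sizes
b = np.zeros(20)
counts = np.bincount([1, 3, 3, 7], minlength=20).astype(float)  # word counts of one document

theta_hat = estimate_theta(counts, R, b)
print(theta_objective(np.zeros(5), counts, R, b, 0.01))
print(theta_objective(theta_hat, counts, R, b, 0.01))
```

Because theta is recomputed for each document on every pass, nothing about it needs to be stored once R and b have been updated.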

To estimate the model’s parameters, the authors use maximum likelihood.

The concept is straightforward: we aim to find the parameters R and b that make the observed documents as probable as possible under the model.

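Starting from the probabilistic formulation of a document, they introduce a MAP estimate θ̂ₖ for each document dₖ. Then, by taking the logarithm of the likelihood and adding regularization terms, they derive the objective function used to learn the word representation matrix R and the bias vector b:

$$\max_{R,\,b}\; \sum_{d_k \in D} \left[ \sum_{i=1}^{N_k} \log p(w_i \mid \hat{\theta}_k; R, b) - \lambda \lVert \hat{\theta}_k \rVert_2^2 \right] - \nu \lVert R \rVert_F^2$$

Here $N_k$ is the number of words in document $d_k$, and the regularization terms are written as penalties.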

This function is maximized with respect to R and b. The model’s hyperparameters include the regularization weights (λ and ν) and the word vector dimensionality β.

During this step, we learn the semantic representation matrix. This matrix captures how words relate to one another based on the contexts in which they appear.
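The update of R and b with the document vectors held fixed can be sketched as a single gradient ascent step. This is a simplified illustration under our own assumptions (full-batch gradient, fixed learning rate, regularization written as a penalty), and the function names are hypothetical.

```python
import numpy as np


def softmax(scores: np.ndarray) -> np.ndarray:
    e = np.exp(scores - scores.max())
    return e / e.sum()


def objective(R, b, thetas, doc_counts, lam, nu):
    """Regularized log-likelihood of all documents."""
    value = -nu * np.sum(R ** 2)
    for theta, counts in zip(thetas, doc_counts):
        value += counts @ np.log(softmax(R.T @ theta + b)) - lam * theta @ theta
    return value


def update_R_b(R, b, thetas, doc_counts, nu, lr):
    """One gradient ascent step on R and b with every theta held fixed."""
    grad_R = -2 * nu * R
    grad_b = np.zeros_like(b)
    for theta, counts in zip(thetas, doc_counts):
        # Observed counts minus the counts the model expects for this document.
        residual = counts - counts.sum() * softmax(R.T @ theta + b)
        grad_R += np.outer(theta, residual)
        grad_b += residual
    return R + lr * grad_R, b + lr * grad_b


rng = np.random.default_rng(0)
R = rng.normal(scale=0.1, size=(5, 20))          # toy sizes
b = np.zeros(20)
thetas = rng.normal(scale=0.5, size=(3, 5))      # one fixed theta per document
doc_counts = [
    np.bincount(words, minlength=20).astype(float)
    for words in ([1, 3, 3, 7], [0, 2, 2, 9], [4, 4, 5, 11])
]

before = objective(R, b, thetas, doc_counts, lam=0.01, nu=0.01)
R_new, b_new = update_R_b(R, b, thetas, doc_counts, nu=0.01, lr=0.01)
after = objective(R_new, b_new, thetas, doc_counts, lam=0.01, nu=0.01)
print(before, after)
```

Alternating this step with a fresh estimate of each theta gives the two-step procedure described earlier.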

Sentiment component

The semantic model alone can identify that words appear in similar contexts. However, this is insufficient for capturing sentiment.

For instance, “wonderful” and “terrible” may both frequently appear in movie reviews, yet they convey opposite opinions.

To address this, the paper introduces a supervised sentiment objective:

$$p(s = 1 \mid w; R, \psi) = \sigma\left(\psi^{\top}\phi_w + b_c\right)$$

where σ is the logistic function and $b_c$ is a scalar bias.

The vector ψ defines a sentiment direction within the word vector space. Only the labeled data is used in this component.

If a word vector falls on one side of the hyperplane, it is classified as positive. If it falls on the other side, it is classified as negative.
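A tiny sketch of this idea, assuming a logistic model on top of the word vector (a sentiment direction ψ plus a scalar bias). The two-dimensional vectors and the name `sentiment_prob` are made up for illustration.

```python
import numpy as np


def sentiment_prob(phi: np.ndarray, psi: np.ndarray, b_c: float) -> float:
    """Probability that a word vector phi lies on the positive side."""
    return float(1.0 / (1.0 + np.exp(-(psi @ phi + b_c))))


psi = np.array([1.0, -0.5])   # hypothetical sentiment direction
b_c = 0.0                     # hypothetical bias

positive_side = sentiment_prob(np.array([2.0, 0.0]), psi, b_c)
negative_side = sentiment_prob(np.array([-2.0, 0.0]), psi, b_c)
print(positive_side, negative_side)
```

The hyperplane is the set of vectors where the probability equals 0.5, that is, where the dot product with ψ plus the bias is zero.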

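They merge the sentiment objective with the semantic component to create the complete learning objective:

$$\max_{R,\,b,\,\psi,\,b_c}\; \sum_{d_k \in D} \left[ \sum_{i=1}^{N_k} \log p(w_i \mid \hat{\theta}_k; R, b) - \lambda \lVert \hat{\theta}_k \rVert_2^2 \right] - \nu \lVert R \rVert_F^2 + \sum_{d_k \in D} \frac{1}{|S_k|} \sum_{i=1}^{N_k} \log p(s_k \mid w_i; R, \psi, b_c)$$

The first component captures semantic relationships between words. The second component incorporates sentiment signals. The regularization terms keep the vector values from becoming excessively large.

$|S_k|$ represents the number of documents in the dataset that share the same rounded value of $s_k$ (that is, $s_k < 0.5$ or $s_k \geq 0.5$). The weight $1/|S_k|$ is applied to address the common imbalance in ratings found in review datasets.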
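The per-document weight can be sketched as follows, assuming it equals one over the number of documents that share the same rounded label, with labels already scaled to [0, 1]. The function name `document_weights` is ours.

```python
from collections import Counter
from typing import List


def document_weights(scaled_labels: List[float]) -> List[float]:
    """Weight each document by the inverse size of its rounded-label group."""
    rounded = [1 if s >= 0.5 else 0 for s in scaled_labels]
    group_sizes = Counter(rounded)
    return [1.0 / group_sizes[r] for r in rounded]


# Two negative and three positive documents.
labels = [0.0, 0.33, 0.67, 1.0, 0.89]
weights = document_weights(labels)
print(weights)
```

With this weighting, each polarity group contributes the same total weight to the sentiment term, regardless of how many reviews it contains.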

Classification and results

After learning the word representation matrix R, we can use it to construct features at the document level.

The goal now is to determine whether each movie review expresses a positive or negative opinion.

To accomplish this, the researchers train a linear SVM using 25,000 labeled training reviews and test its performance on 25,000 labeled test reviews.

The key question is not just whether the word vectors are meaningful, but whether they actually enhance sentiment classification accuracy.

To investigate this, we test several document representation methods and compare the outcomes with those shown in Table 2 of the paper.

The only variation across different configurations is how each review is encoded before being fed into the classifier.

1. Bag of Words baseline

The first approach uses a standard Bag of Words model. In the paper, this baseline is labeled Bag of Words (bnc). The notation indicates:

- b = binary weighting

- n = no IDF weighting

- c = cosine normalization

Each review is encoded as a vector v with 5,000 dimensions, matching the vocabulary size of 5,000 words.

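For each word j in the vocabulary:

$$v_j = \begin{cases} 1 & \text{if word } j \text{ appears in the review} \\ 0 & \text{otherwise} \end{cases}$$

This method simply tracks whether a word is present at least once, without considering its frequency.

The vector is then normalized using its Euclidean norm:

$$\frac{v}{\lVert v \rVert_2}$$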

This normalized vector serves as the Bag of Words baseline for training the SVM.
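A short sketch of the bnc encoding, using a dense NumPy vector for readability (a sparse matrix would be the practical choice at full scale). The toy vocabulary and the name `bow_bnc` are illustrative.

```python
import numpy as np
from typing import Dict, List


def bow_bnc(tokens: List[str], vocab: Dict[str, int]) -> np.ndarray:
    """Binary Bag of Words vector, no IDF, scaled to unit Euclidean norm."""
    v = np.zeros(len(vocab))
    for token in set(tokens):          # presence only, counts are ignored
        index = vocab.get(token)
        if index is not None:          # out-of-vocabulary tokens are skipped
            v[index] = 1.0
    norm = np.linalg.norm(v)
    return v / norm if norm > 0 else v


vocab = {"great": 0, "boring": 1, "plot": 2, "acting": 3}
v = bow_bnc(["great", "great", "plot", "unknownword"], vocab)
print(v)
```

Repeating "great" has no effect on the result, which is the point of binary weighting.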

This baseline performs well because sentiment classification often depends on clear lexical indicators. Terms like excellent, boring, awful, or great already convey strong sentiment cues.

2. Semantic-only word vector representation

The second approach employs word vectors trained using the semantic-only model.

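The authors first encode a document as a Bag of Words vector v. Next, they derive a dense document representation by multiplying this vector by the learned matrix:

$$R\,v$$

where $R \in \mathbb{R}^{50 \times 5000}$ and $v \in \mathbb{R}^{5000}$, so the product is a 50-dimensional vector.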

This vector can be understood as a weighted blend of the word vectors found in the review.

In the paper, when creating document features using the product Rv, the authors apply bnn weighting to v. This stands for:

- b = binary weighting

- n = no IDF weighting

- n = no cosine normalization before projection

After calculating Rv, they then apply cosine normalization to the resulting dense vector.

The final representation is therefore:

$$\frac{R\,v}{\lVert R\,v \rVert_2}$$

This representation draws on semantic knowledge extracted from the training reviews, covering both labeled and unlabeled documents.
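The projection and normalization can be sketched in a few lines of NumPy. The matrix here is random and only stands in for a learned one, and the name `dense_features` is ours.

```python
import numpy as np


def dense_features(v_binary: np.ndarray, R: np.ndarray) -> np.ndarray:
    """Project a binary Bag of Words vector through R, then cosine-normalize."""
    z = R @ v_binary                   # sum of the vectors of the words present
    norm = np.linalg.norm(z)
    return z / norm if norm > 0 else z


rng = np.random.default_rng(0)
R = rng.normal(scale=0.1, size=(50, 5000))   # placeholder for the learned matrix
v = np.zeros(5000)
v[[10, 42, 977]] = 1.0                        # a review containing three vocabulary words

features = dense_features(v, R)
print(features.shape, np.linalg.norm(features))
```

Because v is binary and unnormalized, the product is simply the sum of the columns of R for the words that occur in the review.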

3. Full semantic + sentiment representation

The third representation is built in the same way, but uses the full matrix Rfull.

This matrix is trained using both parts of the model:

- the semantic objective, which captures contextual relationships between words;

- the sentiment objective, which incorporates polarity signals from the star ratings.

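For each document, we calculate:

$$R_{\text{full}}\,v$$

Then we normalize it:

$$\frac{R_{\text{full}}\,v}{\lVert R_{\text{full}}\,v \rVert_2}$$

The idea behind $R_{\text{full}}$ is that it should generate document features that reflect both the topic of the review and whether the tone is positive or negative.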

This is the central contribution of the paper: learning word vectors that merge semantic similarity with sentiment orientation.

4. Full representation + Bag of Words

The last configuration merges the learned dense representation with the original Bag of Words representation.

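We join the two representations together to get:

$$\left[\; \frac{R_{\text{full}}\,v}{\lVert R_{\text{full}}\,v \rVert_2} \;;\; \frac{v}{\lVert v \rVert_2} \;\right] \in \mathbb{R}^{5050}$$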

This gives the classifier two complementary types of information:

- a dense 50-dimensional representation learned by the model;

- a sparse lexical representation that retains exact word-presence details.

This pairing is effective because word vectors can generalize across related words, while Bag of Words features maintain precise lexical evidence.

For instance, the dense representation may recognize that wonderful and amazing are similar, while the Bag of Words representation still tracks the exact occurrence of each word.
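In code, the combination is a plain concatenation. The sketch below uses placeholder vectors in place of real features, and `combined_features` is a hypothetical helper name.

```python
import numpy as np


def combined_features(dense: np.ndarray, bow: np.ndarray) -> np.ndarray:
    """Stack the dense representation and the Bag of Words vector end to end."""
    return np.concatenate([dense, bow])


dense = np.full(50, 1.0 / np.sqrt(50))   # placeholder unit-norm dense vector
bow = np.zeros(5000)
bow[[3, 17]] = 1.0 / np.sqrt(2)          # placeholder unit-norm Bag of Words vector

x = combined_features(dense, bow)
print(x.shape)
```

Since both parts have unit norm before stacking, neither block dominates the other purely because of scale.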

We then train a linear SVM on the labeled training set and test it on the test set.

This lets us address two questions.

First, do the learned word vectors enhance sentiment classification?

Second, does incorporating sentiment information into the word vectors provide an advantage over using semantic information alone?
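A minimal evaluation sketch, assuming scikit-learn's `LinearSVC` as the linear SVM (the choice of library and the value of `C` are our assumptions). The features are synthetic stand-ins, so the printed accuracy says nothing about IMDb.

```python
import numpy as np
from sklearn.svm import LinearSVC

rng = np.random.default_rng(0)

# Synthetic stand-in for document features: two well-separated clusters.
X_train = np.vstack([
    rng.normal(+1.0, 0.3, size=(40, 5)),
    rng.normal(-1.0, 0.3, size=(40, 5)),
])
y_train = np.array([1] * 40 + [0] * 40)
X_test = np.vstack([
    rng.normal(+1.0, 0.3, size=(10, 5)),
    rng.normal(-1.0, 0.3, size=(10, 5)),
])
y_test = np.array([1] * 10 + [0] * 10)

clf = LinearSVC(C=1.0)
clf.fit(X_train, y_train)
accuracy = clf.score(X_test, y_test)
print(f"test accuracy: {accuracy:.3f}")
```

Swapping in each of the four document representations for `X_train` and `X_test`, while keeping the classifier unchanged, isolates the effect of the representation itself.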

Implementation in Python

We build the model in five steps:

- Load and clean the IMDb dataset

- Build the vocabulary

- Train the semantic component

- Train the full semantic + sentiment model

- Evaluate the learned representations using SVM

The table below shows the nearest neighbors of selected target words in the learned vector space.

For every target word, we list the top five most similar words based on cosine similarity. The complete model, which merges both the semantic and sentiment objectives, generally retrieves words that are similar in both meaning and emotional tone. The semantic-only model identifies contextual and lexical similarities but does not directly incorporate sentiment labels during the training process.
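The neighbor lookup can be sketched as follows. The four two-dimensional word vectors are invented for the example and are not learned values, and the code assumes no column of R is all zeros.

```python
import numpy as np
from typing import Dict, List, Tuple


def nearest_neighbors(
    word: str, vocab: Dict[str, int], R: np.ndarray, k: int = 5
) -> List[Tuple[str, float]]:
    """Return the k words whose vectors have the highest cosine similarity."""
    index_to_word = {i: w for w, i in vocab.items()}
    unit = R / np.linalg.norm(R, axis=0, keepdims=True)   # unit-length columns
    sims = unit.T @ unit[:, vocab[word]]
    sims[vocab[word]] = -np.inf                            # exclude the query word
    top = np.argsort(-sims)[:k]
    return [(index_to_word[int(i)], float(sims[i])) for i in top]


vocab = {"great": 0, "wonderful": 1, "terrible": 2, "plot": 3}
R = np.array([
    [1.0, 0.9, -1.0, 0.0],
    [0.1, 0.2, 0.1, 1.0],
])  # columns are toy word vectors

neighbors = nearest_neighbors("great", vocab, R, k=3)
print(neighbors)
```

In this toy space "wonderful" ranks first and "terrible" last for the query "great", which mirrors the behavior we hope to see from the full model.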

The table below presents a comparison between our findings and those documented in the original paper. For each representation, we train a linear SVM on the labeled training reviews and measure the classification accuracy on the test set. This approach helps us assess how effectively each document representation handles the IMDb sentiment classification challenge.

The full model achieves results very close to those reported in the paper, which suggests that the sentiment objective has been implemented correctly.

The most significant difference occurs in the semantic-only model. This discrepancy may stem from optimization specifics, preprocessing steps, or the method used to construct document-level features for classification.

Conclusion

In this article, we recreated the core components of the model introduced by Maas et al. (2011).

We built the semantic objective, incorporated the sentiment objective, and tested the learned word vectors on the IMDb sentiment classification task.

The model demonstrates how unlabeled data can assist in learning semantic structure, while labeled data can embed sentiment information within the same vector space.

This is a straightforward yet impactful concept: word vectors should capture not only the meaning of words but also their emotional tone.

Although this post does not address every aspect of the paper, we strongly encourage reading the authors’ original work. Our intention was to convey the ideas that motivated us and the satisfaction we experienced both in studying the paper and crafting this article.

We hope you find it as enjoyable as we did.

Image Credits

All images and visualizations in this article were generated by the author using Python (pandas, matplotlib, seaborn, and plotly) and Excel, unless otherwise noted.

References

[1] Andrew L. Maas, Raymond E. Daly, Peter T. Pham, Dan Huang, Andrew Y. Ng, and Christopher Potts. 2011. Learning Word Vectors for Sentiment Analysis. In Proceedings of the 49th Annual Meeting of the Association for Computational Linguistics: Human Language Technologies, pages 142–150, Portland, Oregon, USA. Association for Computational Linguistics.

Dataset: IMDb Large Movie Review Dataset (CC BY 4.0).