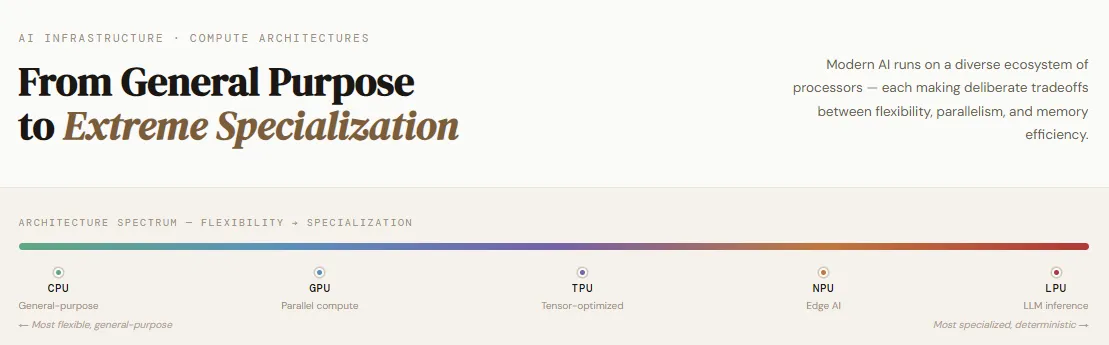

Fashionable AI is now not powered by a single sort of processor—it runs on a various ecosystem of specialised compute architectures, every making deliberate tradeoffs between flexibility, parallelism, and reminiscence effectivity. Whereas conventional programs relied closely on CPUs, immediately’s AI workloads are distributed throughout GPUs for enormous parallel computation, NPUs for environment friendly on-device inference, and TPUs designed particularly for neural community execution with optimized knowledge movement.

Rising improvements like Groq’s LPU additional push the boundaries, delivering considerably sooner and extra energy-efficient inference for big language fashions. As enterprises shift from general-purpose computing to workload-specific optimization, understanding these architectures has develop into important for each AI engineer.

On this article, we’ll discover a number of the commonest AI compute architectures and break down how they differ in design, efficiency, and real-world use instances.

Central Processing Unit (CPU)

The CPU (Central Processing Unit) stays the foundational constructing block of recent computing and continues to play a crucial position even in AI-driven programs. Designed for general-purpose workloads, CPUs excel at dealing with advanced logic, branching operations, and system-level orchestration. They act because the “brain” of a pc—managing working programs, coordinating {hardware} parts, and executing a variety of functions from databases to internet browsers. Whereas AI workloads have more and more shifted towards specialised {hardware}, CPUs are nonetheless indispensable as controllers that handle knowledge movement, schedule duties, and coordinate accelerators like GPUs and TPUs.

From an architectural standpoint, CPUs are constructed with a small variety of high-performance cores, deep cache hierarchies, and entry to off-chip DRAM, enabling environment friendly sequential processing and multitasking. This makes them extremely versatile, simple to program, extensively accessible, and cost-effective for normal computing duties.

Nonetheless, their sequential nature limits their skill to deal with massively parallel operations resembling matrix multiplications, making them much less appropriate for large-scale AI workloads in comparison with GPUs. Whereas CPUs can course of various duties reliably, they usually develop into bottlenecks when coping with huge datasets or extremely parallel computations—that is the place specialised processors outperform them. Crucially, CPUs aren’t changed by GPUs; as a substitute, they complement them by orchestrating workloads and managing the general system.

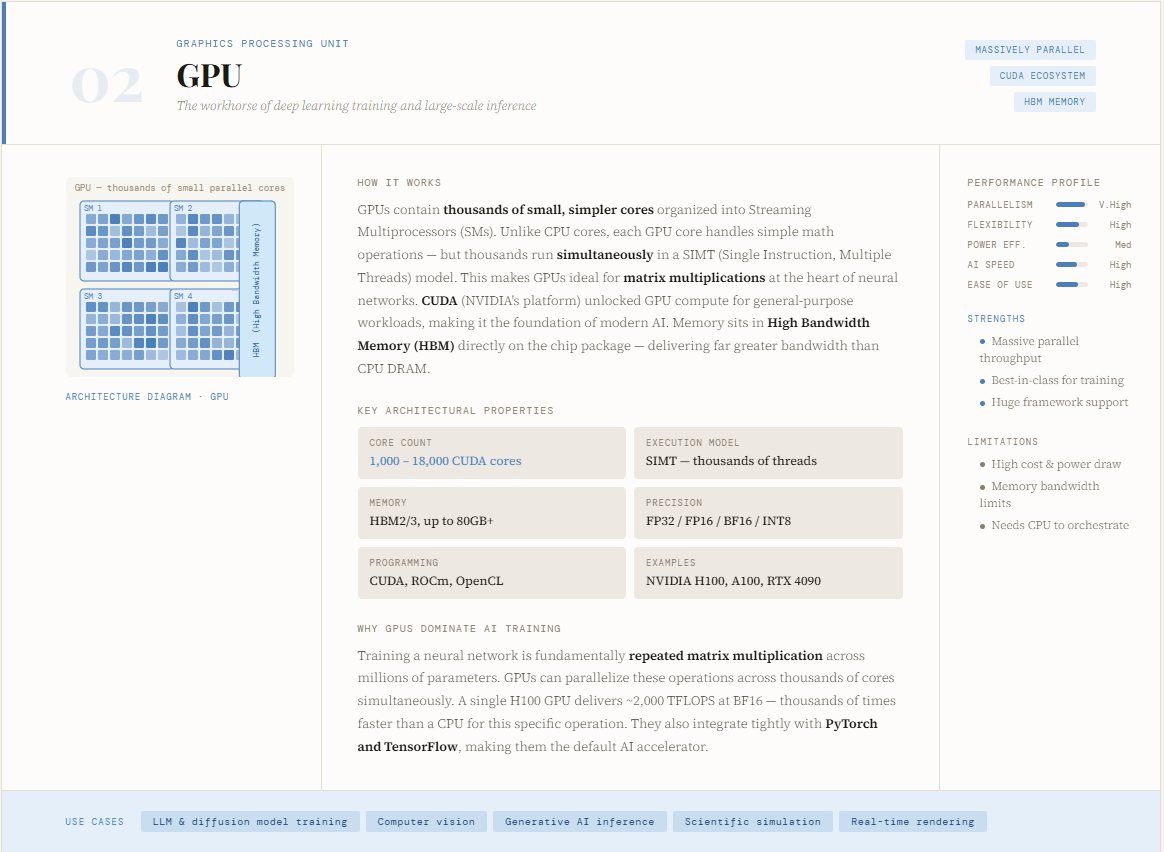

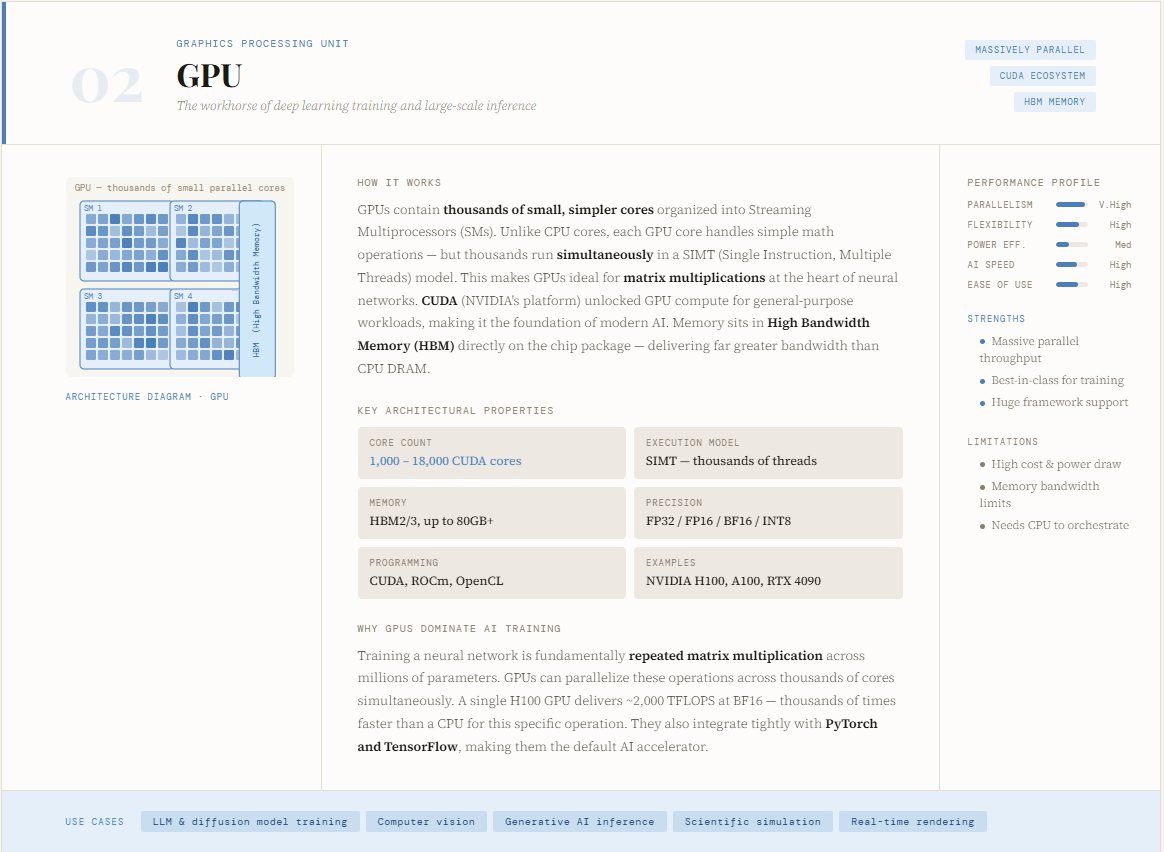

Graphics Processing Unit (GPU)

The GPU (Graphics Processing Unit) has develop into the spine of recent AI, particularly for coaching deep studying fashions. Initially designed for rendering graphics, GPUs advanced into highly effective compute engines with the introduction of platforms like CUDA, enabling builders to harness their parallel processing capabilities for general-purpose computing. Not like CPUs, which deal with sequential execution, GPUs are constructed to deal with hundreds of operations concurrently—making them exceptionally well-suited for the matrix multiplications and tensor operations that energy neural networks. This architectural shift is exactly why GPUs dominate AI coaching workloads immediately.

From a design perspective, GPUs encompass hundreds of smaller, slower cores optimized for parallel computation, permitting them to interrupt massive issues into smaller chunks and course of them concurrently. This allows huge speedups for data-intensive duties like deep studying, laptop imaginative and prescient, and generative AI. Their strengths lie in dealing with extremely parallel workloads effectively and integrating properly with standard ML frameworks like Python and TensorFlow.

Nonetheless, GPUs include tradeoffs—they’re dearer, much less available than CPUs, and require specialised programming data. Whereas they considerably outperform CPUs in parallel workloads, they’re much less environment friendly for duties involving advanced logic or sequential decision-making. In apply, GPUs act as accelerators, working alongside CPUs to deal with compute-heavy operations whereas the CPU manages orchestration and management.

Tensor Processing Unit (TPU)

The TPU (Tensor Processing Unit) is a extremely specialised AI accelerator designed by Google particularly for neural community workloads. Not like CPUs and GPUs, which retain some stage of general-purpose flexibility, TPUs are purpose-built to maximise effectivity for deep studying duties. They energy lots of Google’s large-scale AI programs—together with search, suggestions, and fashions like Gemini—serving billions of customers globally. By focusing purely on tensor operations, TPUs push efficiency and effectivity additional than GPUs, notably in large-scale coaching and inference eventualities deployed through platforms like Google Cloud.

On the architectural stage, TPUs use a grid of multiply-accumulate (MAC) models—also known as a matrix multiply unit (MXU)—the place knowledge flows in a systolic (wave-like) sample. Weights stream in from one facet, activations from one other, and intermediate outcomes propagate throughout the grid with out repeatedly accessing reminiscence, drastically bettering velocity and vitality effectivity. Execution is compiler-controlled somewhat than hardware-scheduled, enabling extremely optimized and predictable efficiency. This design makes TPUs extraordinarily highly effective for big matrix operations central to AI.

Nonetheless, this specialization comes with tradeoffs: TPUs are much less versatile than GPUs, depend on particular software program ecosystems (like TensorFlow, JAX, or PyTorch through XLA), and are primarily accessible by way of cloud environments. In essence, whereas GPUs excel at parallel general-purpose acceleration, TPUs take it a step additional—sacrificing flexibility to realize unmatched effectivity for neural community computation at scale.

Neural Processing Unit (NPU)

The NPU (Neural Processing Unit) is an AI accelerator designed particularly for environment friendly, low-power inference—particularly on the edge. Not like GPUs that focus on large-scale coaching or knowledge heart workloads, NPUs are optimized to run AI fashions immediately on gadgets like smartphones, laptops, wearables, and IoT programs. Firms like Apple (with its Neural Engine) and Intel have adopted this structure to allow real-time AI options resembling speech recognition, picture processing, and on-device generative AI. The core design focuses on delivering excessive throughput with minimal vitality consumption, usually working inside single-digit watt energy budgets.

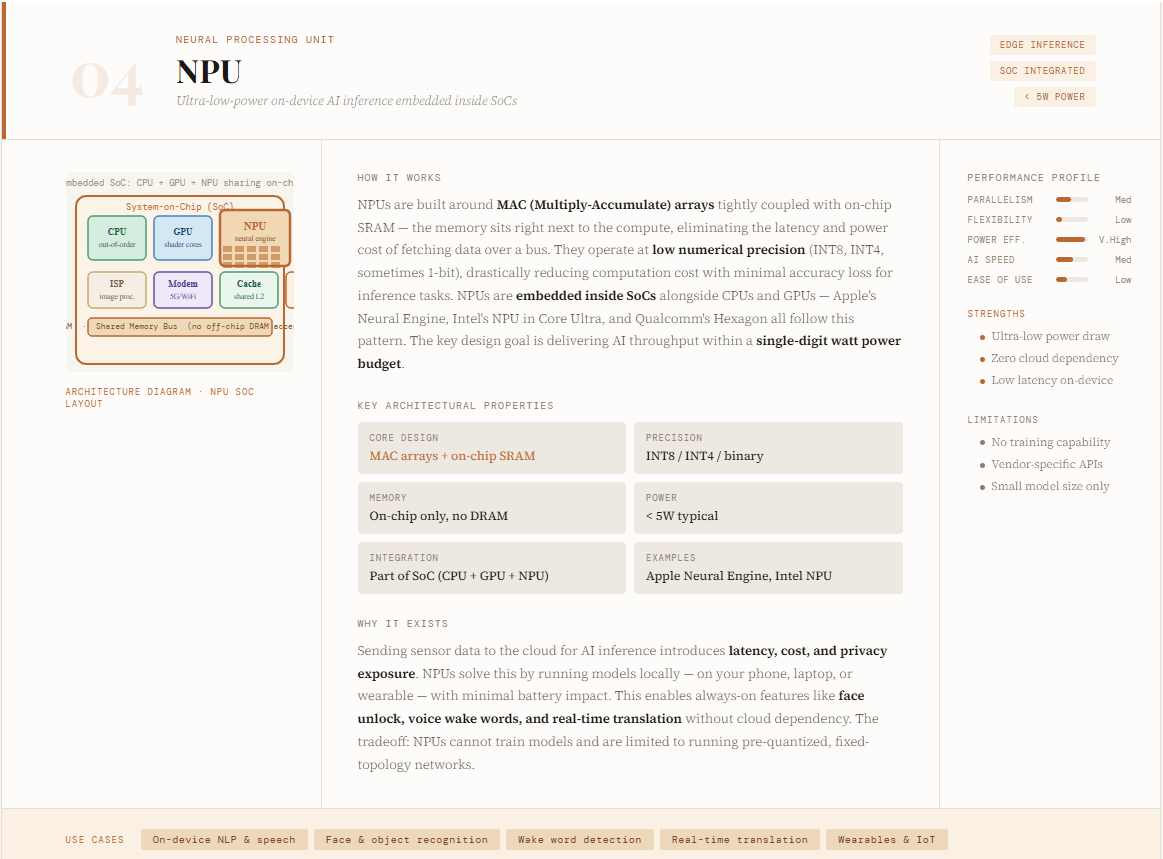

Architecturally, NPUs are constructed round neural compute engines composed of MAC (multiply-accumulate) arrays, on-chip SRAM, and optimized knowledge paths that decrease reminiscence motion. They emphasize parallel processing, low-precision arithmetic (like 8-bit or decrease), and tight integration of reminiscence and computation utilizing ideas like synaptic weights—permitting them to course of neural networks extraordinarily effectively. NPUs are sometimes built-in into system-on-chip (SoC) designs alongside CPUs and GPUs, forming heterogeneous programs.

Their strengths embrace ultra-low latency, excessive vitality effectivity, and the flexibility to deal with AI duties like laptop imaginative and prescient and NLP regionally with out cloud dependency. Nonetheless, this specialization additionally means they lack flexibility, aren’t suited to general-purpose computing or large-scale coaching, and sometimes rely upon particular {hardware} ecosystems. In essence, NPUs carry AI nearer to the person—buying and selling off uncooked energy for effectivity, responsiveness, and on-device intelligence.

Language Processing Unit (LPU)

The LPU (Language Processing Unit) is a brand new class of AI accelerator launched by Groq, purpose-built particularly for ultra-fast AI inference. Not like GPUs and TPUs, which nonetheless retain some general-purpose flexibility, LPUs are designed from the bottom as much as execute massive language fashions (LLMs) with most velocity and effectivity. Their defining innovation lies in eliminating off-chip reminiscence from the crucial execution path—maintaining all weights and knowledge in on-chip SRAM. This drastically reduces latency and removes frequent bottlenecks like reminiscence entry delays, cache misses, and runtime scheduling overhead. Because of this, LPUs can ship considerably sooner inference speeds and as much as 10x higher vitality effectivity in comparison with conventional GPU-based programs.

Architecturally, LPUs observe a software-first, compiler-driven design with a programmable “assembly line” mannequin, the place knowledge flows by way of the chip in a deterministic, completely scheduled method. As a substitute of dynamic {hardware} scheduling (like in GPUs), each operation is pre-planned at compile time—guaranteeing zero execution variability and absolutely predictable efficiency. The usage of on-chip reminiscence and high-bandwidth knowledge “conveyor belts” eliminates the necessity for advanced caching, routing, and synchronization mechanisms.

Nonetheless, this excessive specialization introduces tradeoffs: every chip has restricted reminiscence capability, requiring a whole bunch of LPUs to be linked for serving massive fashions. Regardless of this, the latency and effectivity positive aspects are substantial, particularly for real-time AI functions. In some ways, LPUs symbolize the far finish of the AI {hardware} evolution spectrum—transferring from general-purpose flexibility (CPUs) to extremely deterministic, inference-optimized architectures constructed purely for velocity and effectivity.

Evaluating the completely different architectures

AI compute architectures exist on a spectrum—from flexibility to excessive specialization—every optimized for a distinct position within the AI lifecycle. CPUs sit on the most versatile finish, dealing with general-purpose logic, orchestration, and system management, however wrestle with large-scale parallel math. GPUs transfer towards parallelism, utilizing hundreds of cores to speed up matrix operations, making them the dominant alternative for coaching deep studying fashions.

TPUs, developed by Google, go additional by specializing in tensor operations with systolic array architectures, delivering increased effectivity for each coaching and inference in structured AI workloads. NPUs push optimization towards the sting, enabling low-power, real-time inference on gadgets like smartphones and IoT programs by buying and selling off uncooked energy for vitality effectivity and latency. On the far finish, LPUs, launched by Groq, symbolize excessive specialization—designed purely for ultra-fast, deterministic AI inference with on-chip reminiscence and compiler-controlled execution.

Collectively, these architectures aren’t replacements however complementary parts of a heterogeneous system, the place every processor sort is deployed primarily based on the precise calls for of efficiency, scale, and effectivity.

I’m a Civil Engineering Graduate (2022) from Jamia Millia Islamia, New Delhi, and I’ve a eager curiosity in Knowledge Science, particularly Neural Networks and their utility in varied areas.