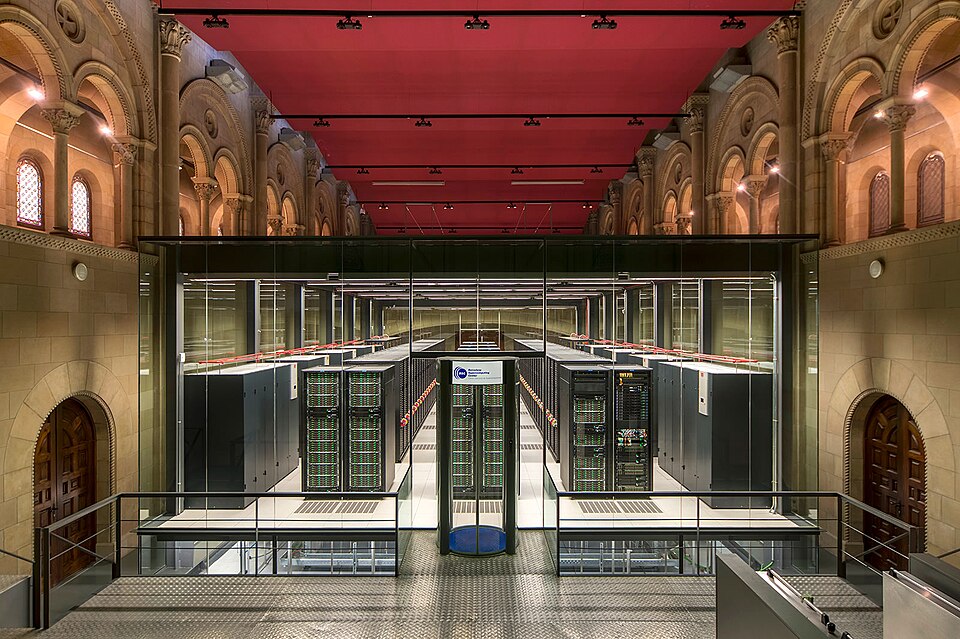

If you stroll through the campus of the Polytechnic University of Catalonia in Barcelona, you might come across the Torre Girona chapel in a beautiful park. Built in the 19th century, it features a huge cross, high arches, and stained glass. But inside the main hall, encased in an enormous illuminated glass box, sits a very different kind of architecture.

This is the historic home of MareNostrum. While the original 2004 racks remain on display in the chapel as a museum piece, the latest iteration, MareNostrum V, one of the fifteen most powerful supercomputers in the world, occupies a dedicated, heavily cooled facility right next door.

Most data scientists are used to spinning up a heavy EC2 instance on AWS or using distributed frameworks like Spark or Ray. High-Performance Computing (HPC) at the supercomputer level is a different beast entirely. It operates on different architectural rules and different schedulers, at a scale that is difficult to fathom until you use it.

I recently had the chance to use MareNostrum V to generate massive amounts of synthetic data for a machine learning surrogate model. What follows is a look under the hood of a 200M€ machine: what it is, why its architecture looks the way it does, and how you actually interact with it.

The Architecture: Why You Should Care About the Wiring

The mental model that causes the most confusion when approaching HPC is this: you are not renting time on a single, impossibly powerful computer. You are submitting work to be distributed across thousands of independent computers that happen to share an extremely fast network.

Why should a data scientist care about the physical networking? Anyone who has tried to train a huge neural network across multiple AWS instances has probably watched expensive GPUs sit idle while a data batch transferred. That experience teaches the lesson directly: in distributed computing, the network is the computer.

To prevent bottlenecks, MareNostrum V uses an InfiniBand NDR200 fabric organized in a fat-tree topology. In a typical office network, bandwidth gets congested when many computers try to talk through the same main switch. A fat-tree topology solves this by increasing the bandwidth of the links as you move up the network hierarchy, literally making the "branches" thicker near the "trunk." The result is non-blocking bandwidth: any of the roughly 8,000 nodes can talk to any other node at full link speed. Because every path crosses only a few switch hops, latency also stays low and nearly uniform across the machine.

The machine itself is a joint investment by the EuroHPC Joint Undertaking, Spain, Portugal, and Turkey. It is split into two main computational partitions:

General Purpose Partition (GPP):

This partition is designed for highly parallel CPU workloads. It contains 6,408 nodes, each packing 112 Intel Sapphire Rapids cores, with a combined peak performance of 45.9 PFlops. It is the partition you will use most often for general-purpose computing.

Accelerated Partition (ACC):

This one is more specialized, designed with AI training, molecular dynamics, and similar workloads in mind. It contains 1,120 nodes, each with four NVIDIA H100 SXM GPUs, for 4,480 GPUs in total. A single H100 retails for roughly $25,000, so the GPUs alone cost over $110 million (4,480 × $25,000 ≈ $112 million).

The GPUs give it a much higher peak performance than the GPP, reaching up to 260 PFlops.

There is also a special type of node called the login node, which acts as the front door to the supercomputer. When you SSH into MareNostrum, this is where you land. Login nodes are strictly for lightweight tasks: moving files, compiling code, and submitting job scripts to the scheduler. They are not for computation.

Quantum Infrastructure: Classical nodes are no longer the only hardware inside the glass box. MareNostrum 5 has recently been physically and logically integrated with Spain's first quantum computers. These include a digital gate-based quantum system and the newly acquired MareNostrum-Ona, a state-of-the-art quantum annealer based on superconducting qubits. Rather than replacing the classical supercomputer, these quantum processing units (QPUs) act as highly specialized accelerators.

Some workloads hit fiercely complex optimization problems or quantum chemistry simulations that would choke even the H100 GPUs. For those, the supercomputer can offload the specific calculations to the quantum hardware, forming a hybrid classical-quantum computing platform.

Airgaps, Quotas, and the Reality of HPC

Understanding the hardware is only half the battle. The operational rules of a supercomputer are entirely different from those of a commercial cloud provider. MareNostrum V is a shared public resource, so the environment is heavily restricted to ensure security and fair play.

The Airgap: One of the biggest shocks for data scientists moving to HPC is the network restriction. You can reach the supercomputer from the outside world via SSH, but the compute nodes cannot reach the outside world at all, because there is no outbound internet connection. You cannot pip install a missing library, wget a dataset, or connect to an external HuggingFace repository on a whim. Everything your script needs must be pre-downloaded, compiled, and sitting in your storage directory before you submit your job.

In practice, this is less of an issue than it seems, since the MareNostrum administrators provide most of the libraries and software you may need through a module system.
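Sometimes a package is missing from the module system (check with module avail first). One common workaround is to download wheels on a machine that does have internet access and install them offline on the cluster.

The sketch below makes a few assumptions:

- a requirements.txt file lists your packages;
- the cluster is Linux x86_64 running CPython 3.11;
- the function names fetch_wheels and install_offline are hypothetical;
- the platform tag may need changing (for instance to manylinux_2_28_x86_64) for packages that only publish newer wheels.

```shell
#!/bin/bash
# Sketch: stage Python dependencies for an air-gapped cluster.
# Assumptions (adjust to your setup): requirements.txt lists your packages and
# the cluster runs Linux x86_64 with CPython 3.11.

# Step 1, on your LOCAL machine (internet access): download wheels built for
# the cluster's platform and Python version rather than your laptop's.
fetch_wheels() {
    local req_file="$1" dest="$2"
    pip download \
        --requirement "$req_file" \
        --dest "$dest" \
        --only-binary=:all: \
        --platform manylinux2014_x86_64 \
        --python-version 3.11
}

# Step 2: copy the wheel directory to the cluster (scp or rsync via a login node).

# Step 3, on the LOGIN node: install without contacting PyPI.
# --no-index disables the package index; --find-links points pip at the local wheels.
install_offline() {
    local req_file="$1" wheel_dir="$2"
    pip install --no-index --find-links "$wheel_dir" --requirement "$req_file"
}
```

The workflow runs in three steps:

1. Run fetch_wheels requirements.txt wheels on your local machine.
2. Copy the wheels directory to the cluster.
3. Run install_offline requirements.txt wheels on the login node, ideally inside a virtual environment created from one of the provided Python modules.

Packages that only ship source distributions will not download under --only-binary=:all:. Those have to be built on the cluster instead.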

Moving Data: Because of this strict boundary, data ingress and egress happen via scp or rsync through the login nodes. You push your raw datasets in over SSH, wait for the compute nodes to chew through the simulations, and pull the processed tensors back out to your local machine. One surprising consequence is that, because the computation itself can be so fast, the bottleneck becomes extracting the finished results to your local machine for post-processing and visualization.
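The transfer step might look like the sketch below. A few details are placeholders or hypothetical:

- the host login.example.org, the user name, and the project directory are placeholders; use the login address from your access instructions;
- push_inputs and pull_results are hypothetical helper names.

Since egress is the bottleneck, the pull helper uses rsync filter rules to fetch only files matching a pattern, rather than every raw field the solver wrote.

```shell
#!/bin/bash
# Sketch: move data through a login node with rsync.
# REMOTE and REMOTE_DIR are placeholders: use your own user name, the login
# host from your access instructions, and a path on the cluster's filesystem.
REMOTE="user@login.example.org"
REMOTE_DIR="project"   # relative remote paths resolve against your home directory

# Push local inputs (e.g. meshes) to the cluster.
# -a preserves permissions and timestamps, -v lists files, -z compresses in transit,
# --partial keeps partially transferred files so an interrupted copy can resume.
push_inputs() {
    local local_dir="$1"
    rsync -avz --partial "$local_dir" "${REMOTE}:${REMOTE_DIR}/"
}

# Pull back only files matching a pattern (e.g. '*.csv') to keep egress small.
# The filters keep every directory ('*/'), keep matching files, and drop the rest;
# -m (--prune-empty-dirs) removes directories that end up empty.
pull_results() {
    local pattern="$1" dest="$2"
    rsync -avzm --partial \
        --include='*/' --include="$pattern" --exclude='*' \
        "${REMOTE}:${REMOTE_DIR}/" "$dest"
}
```

For example:

- push_inputs meshes uploads the meshes folder into the project directory. With a trailing slash (meshes/), rsync would copy the folder's contents instead.
- pull_results '*.csv' results/ fetches only CSV files while preserving the directory layout.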

Limits and Quotas: You cannot simply launch a thousand jobs and monopolize the machine. Your project is assigned a specific CPU-hour budget. In addition, there are hard limits on how many concurrent jobs a single user can have running or queued at any given time.

You must also specify a strict wall-time limit for every job you submit. Supercomputers do not tolerate loitering. If you request two hours of compute time and your script needs two hours and one second, the scheduler will kill your process mid-calculation to make room for the next researcher.
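To track spending against the CPU-hour budget, SLURM's accounting tool sacct can report the core-seconds your jobs have consumed. This is a minimal sketch that assumes two things:

- job accounting is enabled;
- usage is counted as allocated cores × elapsed time, which is sacct's CPUTimeRAW field.

A centre's official billing can be computed differently, so treat the result as an estimate. The helper name cpu_hours_since is hypothetical.

```shell
#!/bin/bash
# Sketch: estimate the CPU-hours consumed by your own jobs since a given date.
# Assumption: usage = allocated CPUs x elapsed time, which is what sacct's
# CPUTimeRAW field reports (in seconds). Your centre's billing may differ.
cpu_hours_since() {
    local since="$1"   # e.g. 2024-06-01
    # -X: one line per job allocation (skip job steps, avoiding double counting)
    # -n: no header line; -P: machine-parsable output
    sacct -X -n -P -S "$since" --format=CPUTimeRAW \
        | awk '{ total += $1 } END { printf "%.1f\n", total / 3600 }'
}
```

Running cpu_hours_since 2024-06-01 prints the total for your own jobs since that date. Adding -a -A <account> to the sacct call would cover every user on the project instead, if the site's privacy settings allow it.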

Logging in the Dark: Because you submit these jobs to a scheduler and walk away, there is no live terminal output to stare at. Instead, all standard output (stdout) and standard error (stderr) are automatically redirected into log files (e.g., sim_12345.out and sim_12345.err). When your job completes, or if it crashes overnight, you have to comb through these text files to verify the results or debug your code. You do, however, have tools to monitor your submitted jobs, such as squeue, or the classic tail -f on the log files.
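After an overnight sweep, sacct can also tell you which jobs need their logs read. The sketch below (helper names are hypothetical) does two things:

- counts final job states, such as COMPLETED, FAILED, or TIMEOUT for a job that hit its wall-time limit;
- lists the IDs of any job that did not complete.

```shell
#!/bin/bash
# Sketch: summarise how your jobs ended, e.g. after an overnight sweep.
# States such as COMPLETED, FAILED or TIMEOUT (wall-time limit hit) come from
# SLURM's accounting database via sacct.
job_state_counts() {
    local since="$1"   # e.g. 2024-06-01
    # -X: allocations only; -n: no header; -P: '|'-separated parsable output
    sacct -X -n -P -S "$since" --format=State | sort | uniq -c
}

# Print the IDs of jobs whose state is anything other than COMPLETED
# (failed, timed out, cancelled, or still pending/running).
not_completed_jobs() {
    local since="$1"
    sacct -X -n -P -S "$since" --format=JobID,State \
        | awk -F'|' '$2 != "COMPLETED" { print $1 }'
}
```

For jobs that are still queued or running, squeue -u "$USER" shows their status. To follow a log as it is written, use tail -f logs/sim_<jobid>.out.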

Understanding the SLURM Workload Manager

When you finally get your research allocation approved and log into MareNostrum V via SSH, your reward is… a completely standard Linux terminal prompt.

After months of writing proposals for access to a 200M€ machine, it is, frankly, a bit underwhelming. There are no flashing lights and no holographic progress bars, nothing to signal just how powerful the engine behind the wheel is.

Because thousands of researchers use the machine simultaneously, you cannot simply execute a heavy Python or C++ script directly in the terminal. If you do, it will run on the login node and quickly grind it to a halt for everyone else. It will also earn you a very polite but rather firm email from the system administrators.

Instead, HPC relies on a workload manager called SLURM. You write a bash script detailing exactly what hardware you need, which software environments to load, and what code to execute. SLURM puts your job in a queue, finds the hardware when it becomes available, executes your code, and releases the nodes.

SLURM stands for Simple Linux Utility for Resource Management. It is free, open-source software that handles job scheduling on many computer clusters and supercomputers.

Before looking at a complex pipeline, it is important to understand how to communicate with the scheduler. You do this with #SBATCH directives placed at the top of your submission script. These directives act as your shopping list for resources:

- `--nodes`: The number of distinct physical machines you need.
- `--ntasks`: The total number of separate MPI processes (tasks) you want to spawn. SLURM handles distributing these tasks across your requested nodes.
- `--time`: The strict wall-clock time limit for your job (HH:MM:SS). If your script runs even one second past it, SLURM kills the job.
- `--account`: The specific project ID that will be billed for your CPU-hours.
- `--qos`: The "Quality of Service," or specific queue, you are targeting. For example, a debug queue grants faster access but limits you to short runtimes for testing.

A Practical Example: Orchestrating an OpenFOAM Sweep

To ground this in reality, here is how I actually used the machine. I was building an ML surrogate model to predict aerodynamic downforce, which required ground-truth data from 50 high-fidelity computational fluid dynamics (CFD) simulations across 50 different 3D meshes.

Here is the SLURM job script for a single OpenFOAM CFD case on the General Purpose Partition:

```bash
#!/bin/bash
#SBATCH --job-name=cfd_sweep
# %j expands to the job ID; the logs/ directory must exist before submission
#SBATCH --output=logs/sim_%j.out
#SBATCH --error=logs/sim_%j.err
#SBATCH --qos=gp_debug
#SBATCH --time=00:30:00
#SBATCH --nodes=1
# Must match numberOfSubdomains in system/decomposeParDict
#SBATCH --ntasks=6
#SBATCH --account=nct_293

module purge
module load OpenFOAM/11-foss-2023a
source "$FOAM_BASH"

# Serial pre-processing: run once, not once per task
# (srun would otherwise launch 6 identical copies of each utility)
surfaceFeatureExtract
blockMesh
decomposePar -force

# Parallel steps: srun starts one MPI rank per task and handles core mapping
srun --mpi=pmix snappyHexMesh -parallel -overwrite
srun --mpi=pmix potentialFoam -parallel
srun --mpi=pmix simpleFoam -parallel

# Serial post-processing: merge the per-rank results into a single case
reconstructPar
```

Rather than submitting this script 50 times by hand and flooding the scheduler, I used SLURM dependencies to chain each job behind the previous one. This creates a clean, automated data pipeline:

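```bash
#!/bin/bash
# Submit one job per case directory, each waiting for the previous one to end.
PREV_JOB_ID=""

for CASE_DIR in cases/case_*; do
    cd "$CASE_DIR" || exit 1

    if [ -z "$PREV_JOB_ID" ]; then
        OUT=$(sbatch run_all.sh)
    else
        # afterany: start once the previous job has ended, whether it succeeded or not
        OUT=$(sbatch --dependency=afterany:"$PREV_JOB_ID" run_all.sh)
    fi

    # sbatch prints "Submitted batch job <id>"; the 4th field is the job ID
    PREV_JOB_ID=$(echo "$OUT" | awk '{print $4}')

    cd ../..
done
```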

This orchestrator drops a chain of 50 jobs into the queue in seconds. I walked away, and by the next morning my 50 aerodynamic evaluations had been processed, logged, and were ready to be formatted into tensors for ML training.
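For comparison, SLURM job arrays are another common way to run a sweep like this. A single submission expands into 50 indexed tasks, and a %N suffix on the index range caps how many run at the same time. This is not what I used above. It is a sketch with the following assumptions:

- the same cases/case_* layout, with a run_all.sh inside each case directory;
- run_all.sh's own #SBATCH lines are ignored when it is run with bash, so the array script requests the resources itself;
- the script and function names are hypothetical;
- sites set their own limits on array sizes per QoS.

```shell
#!/bin/bash
#SBATCH --job-name=cfd_array
# 50 array tasks (indices 0-49); "%1" lets only one run at a time, like the chain above
#SBATCH --array=0-49%1
# %A is the array's master job ID, %a the task index
#SBATCH --output=logs/sim_%A_%a.out
#SBATCH --error=logs/sim_%A_%a.err
#SBATCH --qos=gp_debug
#SBATCH --time=00:30:00
#SBATCH --nodes=1
#SBATCH --ntasks=6
#SBATCH --account=nct_293

# Map a task index to a case directory. Bash sorts glob results lexically
# (case_10 before case_2), which is fine as long as the order is stable.
run_case() {
    local index="$1"
    local cases=(cases/case_*)
    local case_dir="${cases[$index]}"
    [ -d "$case_dir" ] || { echo "no case for index $index" >&2; return 1; }
    # Subshell keeps the cd local; srun calls inside run_all.sh
    # reuse this task's 6-task allocation.
    (cd "$case_dir" && bash ./run_all.sh)
}

# Only do work when SLURM launched this script as an array task.
if [ -n "${SLURM_ARRAY_TASK_ID:-}" ]; then
    run_case "$SLURM_ARRAY_TASK_ID"
fi
```

Submitted once with sbatch from the directory that contains cases/, this replaces the 50 separate submissions. Raising %1 lets several cases run in parallel if your QoS allows it.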

Parallelism Limits: Amdahl’s Law

A common question from newcomers is: if you have 112 cores per node, why did you only request 6 tasks (--ntasks=6) for your CFD simulation?

The answer is Amdahl’s Law. Almost every program has a serial fraction that cannot be parallelized. Amdahl’s Law states that the theoretical speedup from running a program across multiple processors is strictly limited by that fraction. It is a very intuitive law, and mathematically it is expressed as:

$$
S = \frac{1}{(1-p) + \frac{p}{N}}
$$

where S is the overall speedup, p is the fraction of the execution time that can be parallelized, 1 − p is the strictly serial fraction, and N is the number of processing cores.

Because of that (1 − p) term in the denominator, you face an insurmountable ceiling. As N grows, p/N shrinks toward zero, but 1 − p does not. If just 5% of your program is fundamentally sequential, the maximum theoretical speedup you can achieve is 20x, even if you use every single core in MareNostrum V.
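Plugging numbers into the formula makes the ceiling concrete. Assume, purely for illustration, the same 5% serial fraction (p = 0.95); it was not measured for the CFD runs. Here is the speedup at the 6 tasks used above, at one full 112-core node, and in the limit of infinitely many cores:

$$
\begin{aligned}
S(6) &= \frac{1}{0.05 + \frac{0.95}{6}} = \frac{1}{0.2083\ldots} = 4.8 \\
S(112) &= \frac{1}{0.05 + \frac{0.95}{112}} \approx \frac{1}{0.0585} \approx 17.1 \\
\lim_{N \to \infty} S(N) &= \frac{1}{0.05} = 20
\end{aligned}
$$

Going from 6 to 112 cores means almost 19 times the hardware. In this idealized model it buys less than 4 times the speedup, and that is before any communication cost is counted.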

Furthermore, dividing a task across too many cores increases the communication overhead over the InfiniBand network discussed earlier. If the cores spend more time passing boundary conditions to each other than doing actual math, adding more hardware slows the program down.

As the figure shows, runtime for a small system (N=100, where N is the problem size rather than the core count) increases beyond 16 threads. Only at large scales (N=10k and above) does the hardware become fully productive. Writing code for a supercomputer is an exercise in managing this compute-to-communication ratio.
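A simple way to see why runtime eventually rises is to extend Amdahl's formula with a communication cost. Purely as an illustrative assumption, take N to be the number of cores, as in Amdahl's formula, and model a run with three terms:

- a serial time T_s;
- perfectly parallel work T_p split over N cores;
- a communication overhead that grows linearly as cN. Real codes often scale differently, for example logarithmically for collective operations.

Setting the derivative to zero gives the core count with the lowest runtime:

$$
T(N) = T_s + \frac{T_p}{N} + cN,
\qquad
\frac{dT}{dN} = -\frac{T_p}{N^2} + c = 0
\;\Rightarrow\;
N^{*} = \sqrt{\frac{T_p}{c}}
$$

Beyond N*, each extra core adds more communication time than it removes computation time, so runtime goes up. N* grows with the square root of T_p, so a larger problem, with more work to divide, pushes the optimum toward more cores. That is the pattern the figure shows: small systems peak early, while large ones keep benefiting from additional hardware.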

Getting Access to the Prompt

Despite the staggering cost of the hardware, access to MareNostrum V is free for researchers, because compute time is treated as a publicly funded scientific resource.

If you are affiliated with a Spanish institution, you can apply through the Spanish Supercomputing Network (RES). For researchers across the rest of Europe, the EuroHPC Joint Undertaking runs regular access calls. Its "Development Access" track is specifically designed for projects porting code or benchmarking ML models, which makes it highly accessible for data scientists.

When you sit at your desk staring at that utterly unremarkable SSH prompt, it is easy to forget what you are actually looking at. That blinking cursor does not show the roughly 8,000 nodes it connects to, or the fat-tree fabric routing messages between them at 200 Gb/s. Nor does it show the scheduler coordinating hundreds of concurrent jobs from researchers across six countries.

The "single powerful computer" image persists in our heads because it is simpler. But the distributed reality is what makes modern computing possible, and it is far more accessible than most people realize.
