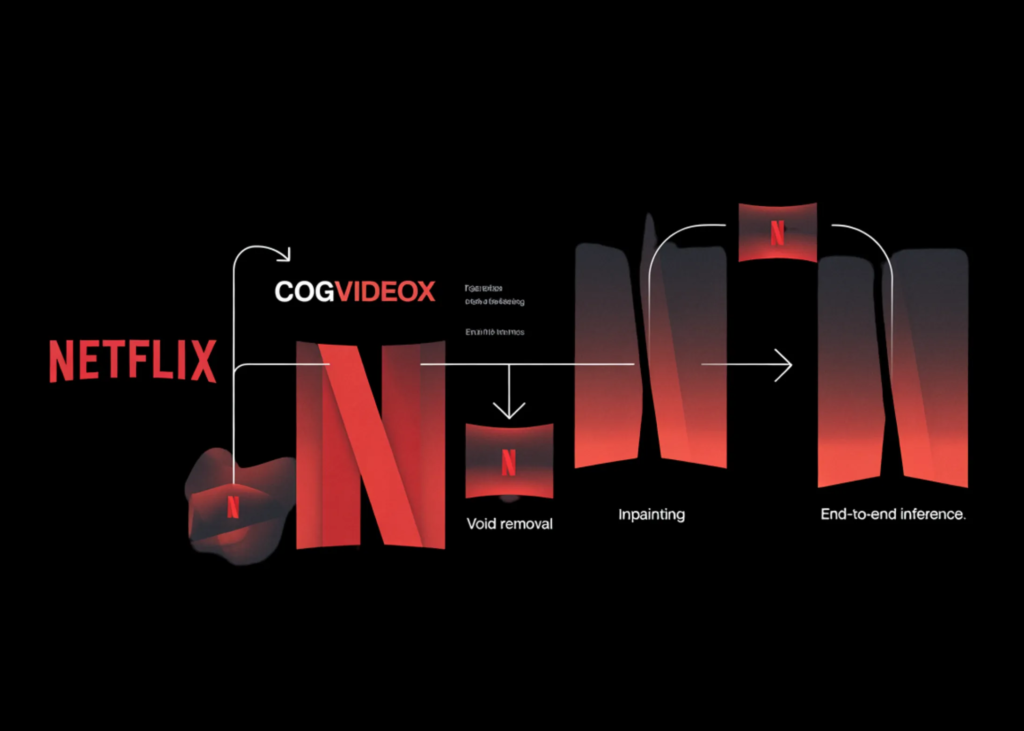

In this tutorial, we build and run an advanced pipeline for Netflix’s VOID model. We set up the environment, install all required dependencies, clone the repository, download the official base model and VOID checkpoint, and prepare the sample inputs needed for video object removal. We also make the workflow more practical by allowing secure terminal-style secret input for tokens and optionally using an OpenAI model to generate a cleaner background prompt. As we move through the tutorial, we load the model components, configure the pipeline, run inference on a built-in sample, and visualize both the generated result and a side-by-side comparison, giving us a full hands-on understanding of how VOID works in practice.

import os, sys, json, shutil, subprocess, textwrap, gc
from pathlib import Path
from getpass import getpass

def run(cmd, check=True):
    # Echo the shell command, run it, and fail loudly on a non-zero exit code.
    print(f"\n[RUN] {cmd}")
    result = subprocess.run(cmd, shell=True, text=True)
    if check and result.returncode != 0:
        raise RuntimeError(f"Command failed with exit code {result.returncode}: {cmd}")

print("=" * 100)
print("VOID — ADVANCED GOOGLE COLAB TUTORIAL")
print("=" * 100)

# Reuse the preinstalled PyTorch when available; install it otherwise.
try:
    import torch
    gpu_name = torch.cuda.get_device_name(0) if torch.cuda.is_available() else "CPU"
    print(f"PyTorch already available. CUDA: {torch.cuda.is_available()} | Device: {gpu_name}")
except Exception:
    run(f"{sys.executable} -m pip install -q torch torchvision torchaudio")
    import torch
    gpu_name = torch.cuda.get_device_name(0) if torch.cuda.is_available() else "CPU"
    print(f"CUDA: {torch.cuda.is_available()} | Device: {gpu_name}")

if not torch.cuda.is_available():
    raise RuntimeError("This tutorial needs a GPU runtime. In Colab, go to Runtime > Change runtime type > GPU.")

print("\nThis repo is heavy. The official notebook notes 40GB+ VRAM is recommended.")
print("A100 works best. T4/L4 may fail or be extremely slow even with CPU offload.\n")

# Hidden input keeps the secrets out of the notebook output.
HF_TOKEN = getpass("Enter your Hugging Face token (input hidden, press Enter if already logged in): ").strip()
OPENAI_API_KEY = getpass("Enter your OpenAI API key for OPTIONAL prompt assistance (press Enter to skip): ").strip()

run(f"{sys.executable} -m pip install -q --upgrade pip")
run(f"{sys.executable} -m pip install -q huggingface_hub hf_transfer")
run("apt-get -qq update && apt-get -qq install -y ffmpeg git")

run("rm -rf /content/void-model")
# Supply the VOID repository URL before the target path.
run("git clone /content/void-model")
os.chdir("/content/void-model")

if HF_TOKEN:
    os.environ["HF_TOKEN"] = HF_TOKEN
    os.environ["HUGGINGFACE_HUB_TOKEN"] = HF_TOKEN

os.environ["HF_HUB_ENABLE_HF_TRANSFER"] = "1"

run(f"{sys.executable} -m pip install -q -r requirements.txt")

if OPENAI_API_KEY:
    run(f"{sys.executable} -m pip install -q openai")
    os.environ["OPENAI_API_KEY"] = OPENAI_API_KEY

from huggingface_hub import snapshot_download, hf_hub_download

We set up the full Colab environment and prepare the system for running the VOID pipeline. We install the required tools, check whether GPU support is available, securely collect the Hugging Face token and the optional OpenAI API key, and clone the official repository into the Colab workspace. We also configure environment variables and install the project dependencies so the rest of the workflow can run smoothly without manual setup later.
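The secret-handling step can be wrapped in a small reusable function. The following is a minimal sketch, assuming a hypothetical helper named `resolve_secret` (it is not part of the VOID repository) that prefers a value already present in the environment and only falls back to a hidden prompt when none is set. The `env` and `ask` parameters are illustrative additions that make the helper testable without a terminal.

```python
import os
from getpass import getpass


def resolve_secret(env_name, prompt, env=None, ask=getpass):
    """Return a secret from the environment, or ask for it with hidden input.

    Hypothetical helper for illustration only. `env` defaults to os.environ
    and `ask` defaults to getpass; both can be swapped out in tests.
    """
    env = os.environ if env is None else env
    value = (env.get(env_name) or "").strip()
    if value:
        return value
    # Fall back to a hidden prompt; an empty answer means "skip".
    value = ask(prompt).strip()
    if value:
        # Export it so libraries that read the environment can find it.
        env[env_name] = value
    return value
```

In the notebook this could be used as `HF_TOKEN = resolve_secret("HF_TOKEN", "Enter your Hugging Face token: ")`, which avoids asking again when the cell is re-run in the same session.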

print("\nDownloading base CogVideoX inpainting model...")
snapshot_download(
    repo_id="alibaba-pai/CogVideoX-Fun-V1.5-5b-InP",
    local_dir="./CogVideoX-Fun-V1.5-5b-InP",
    token=HF_TOKEN if HF_TOKEN else None,
    local_dir_use_symlinks=False,
    resume_download=True,
)

print("\nDownloading VOID Pass 1 checkpoint...")
hf_hub_download(
    repo_id="netflix/void-model",
    filename="void_pass1.safetensors",
    local_dir=".",
    token=HF_TOKEN if HF_TOKEN else None,
    local_dir_use_symlinks=False,
)

sample_options = ["lime", "moving_ball", "pillow"]
print(f"\nAvailable built-in samples: {sample_options}")

# An empty answer selects the default; anything unknown falls back to it too.
sample_name = input("Choose a sample [lime/moving_ball/pillow] (default: lime): ").strip() or "lime"
if sample_name not in sample_options:
    print("Invalid sample selected. Falling back to 'lime'.")
    sample_name = "lime"

use_openai_prompt_helper = False
custom_bg_prompt = None

if OPENAI_API_KEY:
    ans = input("\nUse OpenAI to generate an alternative background prompt for the selected sample? [y/N]: ").strip().lower()

    use_openai_prompt_helper = ans == "y"

We download the base CogVideoX inpainting model and the VOID Pass 1 checkpoint required for inference. We then present the available built-in sample options and choose which sample video to process. We also initialize the optional prompt-helper flow to decide whether to generate a refined background prompt with OpenAI.
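The two input checks in this step (sample selection with a fallback, and a strict yes/no answer) can be isolated into pure functions, which makes them easy to verify outside Colab. This is a minimal sketch; `choose_sample` and `parse_yes_no` are hypothetical names introduced here for illustration and do not come from the VOID repository.

```python
def choose_sample(raw, options, default):
    """Normalize a typed answer and fall back to `default` when it is invalid."""
    choice = raw.strip() or default
    return choice if choice in options else default


def parse_yes_no(raw):
    """Treat only an explicit 'y' (any case, surrounding spaces ignored) as yes."""
    return raw.strip().lower() == "y"


if __name__ == "__main__":
    options = ["lime", "moving_ball", "pillow"]
    print(choose_sample(" pillow ", options, "lime"))
    print(parse_yes_no("Y"))
```

Keeping the default answer as "no" mirrors the `[y/N]` prompt in the tutorial: anything other than `y`, including `yes`, leaves the OpenAI helper disabled.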

if use_openai_prompt_helper:
    from openai import OpenAI
    client = OpenAI(api_key=OPENAI_API_KEY)

    # What each built-in sample removes, plus the scene hint from the repo.
    sample_context = {
        "lime": {
            "removed_object": "the glass",
            "scene_hint": "A lime falls on the table."
        },
        "moving_ball": {
            "removed_object": "the rubber duckie",
            "scene_hint": "A ball rolls off the table."
        },
        "pillow": {
            "removed_object": "the kettlebell being placed on the pillow",
            "scene_hint": "Two pillows are on the table."
        },
    }

    helper_prompt = f"""
You are helping prepare a clean background prompt for a video object removal model.
Rules:
- Describe only what should remain in the scene after removing the target object/action.
- Do not mention removal, deletion, masks, editing, or inpainting.
- Keep it short, concrete, and physically plausible.
- Return only one sentence.
Sample name: {sample_name}
Target being removed: {sample_context[sample_name]['removed_object']}
Known scene hint from the repo: {sample_context[sample_name]['scene_hint']}
"""

    try:
        response = client.chat.completions.create(
            model="gpt-4o-mini",
            temperature=0.2,
            messages=[
                {"role": "system", "content": "You write short, precise scene descriptions for video generation pipelines."},
                {"role": "user", "content": helper_prompt},
            ],
        )
        custom_bg_prompt = response.choices[0].message.content.strip()
        print(f"\nOpenAI-generated background prompt:\n{custom_bg_prompt}\n")
    except Exception as e:
        # Any API failure simply leaves the sample's original prompt in place.
        print(f"OpenAI prompt helper failed: {e}")
        custom_bg_prompt = None

prompt_json_path = Path(f"./sample/{sample_name}/prompt.json")
if custom_bg_prompt:
    # Back up the original prompt.json once, then overwrite it.
    backup_path = prompt_json_path.with_suffix(".json.bak")
    if not backup_path.exists():
        shutil.copy(prompt_json_path, backup_path)
    with open(prompt_json_path, "w") as f:
        json.dump({"bg": custom_bg_prompt}, f)

    print(f"Updated prompt.json for sample '{sample_name}'.")

We use the optional OpenAI prompt helper to generate a cleaner and more focused background description for the selected sample. We define the scene context, send it to the model, capture the generated prompt, and then update the sample’s prompt.json file when a custom prompt is available. This makes the pipeline a bit more flexible while still keeping the original sample structure intact.
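Because the step above overwrites the sample's prompt.json in place, it helps to be able to undo the change. The following is a minimal sketch of the same back-up-once pattern with a matching restore function. The names `write_bg_prompt` and `restore_bg_prompt` are hypothetical and introduced only for illustration; the `{"bg": ...}` layout is the one written by the tutorial code.

```python
import json
import shutil
from pathlib import Path


def write_bg_prompt(prompt_path, bg_prompt):
    """Overwrite prompt.json with a new background prompt, backing up once."""
    prompt_path = Path(prompt_path)
    backup_path = prompt_path.with_suffix(".json.bak")
    # Only the first call creates the backup, so it always holds the original.
    if not backup_path.exists():
        shutil.copy(prompt_path, backup_path)
    with open(prompt_path, "w") as f:
        json.dump({"bg": bg_prompt}, f)
    return backup_path


def restore_bg_prompt(prompt_path):
    """Copy the backup over prompt.json; return False when no backup exists."""
    prompt_path = Path(prompt_path)
    backup_path = prompt_path.with_suffix(".json.bak")
    if not backup_path.exists():
        return False
    shutil.copy(backup_path, prompt_path)
    return True
```

Calling `restore_bg_prompt("./sample/lime/prompt.json")` after an experiment would return the sample to the prompt shipped with the repository, assuming the backup was created by the step above.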

import numpy as np
import torch.nn.functional as F
from safetensors.torch import load_file
from diffusers import DDIMScheduler
from IPython.display import Video, display

from videox_fun.models import (
    AutoencoderKLCogVideoX,
    CogVideoXTransformer3DModel,
    T5EncoderModel,
    T5Tokenizer,
)
from videox_fun.pipeline import CogVideoXFunInpaintPipeline
from videox_fun.utils.fp8_optimization import convert_weight_dtype_wrapper
from videox_fun.utils.utils import get_video_mask_input, save_videos_grid, save_inout_row

BASE_MODEL_PATH = "./CogVideoX-Fun-V1.5-5b-InP"
TRANSFORMER_CKPT = "./void_pass1.safetensors"
DATA_ROOTDIR = "./sample"
SAMPLE_NAME = sample_name

SAMPLE_SIZE = (384, 672)  # (height, width)
MAX_VIDEO_LENGTH = 197
TEMPORAL_WINDOW_SIZE = 85
NUM_INFERENCE_STEPS = 50
GUIDANCE_SCALE = 1.0
SEED = 42
DEVICE = "cuda"
WEIGHT_DTYPE = torch.bfloat16

print("\nLoading VAE...")
vae = AutoencoderKLCogVideoX.from_pretrained(
    BASE_MODEL_PATH,
    subfolder="vae",
).to(WEIGHT_DTYPE)

# Round the frame count down so that (length - 1) is a multiple of the
# VAE's temporal compression ratio.
video_length = int(
    (MAX_VIDEO_LENGTH - 1) // vae.config.temporal_compression_ratio * vae.config.temporal_compression_ratio
) + 1
print(f"Effective video length: {video_length}")

print("\nLoading base transformer...")
transformer = CogVideoXTransformer3DModel.from_pretrained(
    BASE_MODEL_PATH,
    subfolder="transformer",
    low_cpu_mem_usage=True,
    use_vae_mask=True,

).to(WEIGHT_DTYPE)

We import the deep learning, diffusion, video display, and VOID-specific modules required for inference. We define key configuration values, such as model paths, sample dimensions, video length, inference steps, seed, device, and data type, and then load the VAE and base transformer components. This section sets up the core model objects that underpin the inpainting pipeline.
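The effective video length computed above rounds the frame count so that, after subtracting the first frame, the remainder is a whole multiple of the VAE's temporal compression ratio. A minimal standalone sketch of that arithmetic follows. The function name is hypothetical, and the ratio of 4 used in the example is an assumption for illustration; the tutorial reads the real value from `vae.config.temporal_compression_ratio`.

```python
def effective_video_length(max_video_length, temporal_compression_ratio):
    """Round (max_video_length - 1) down to a multiple of the ratio, then add 1."""
    usable_groups = (max_video_length - 1) // temporal_compression_ratio
    return usable_groups * temporal_compression_ratio + 1


if __name__ == "__main__":
    # Assumed ratio of 4, for illustration only.
    for frames in (197, 200, 85):
        print(frames, "->", effective_video_length(frames, 4))
```

Under the assumed ratio of 4, the configured `MAX_VIDEO_LENGTH` of 197 is already valid (196 is divisible by 4), while a request for 200 frames would be trimmed to 197.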

print(f"Loading VOID checkpoint from {TRANSFORMER_CKPT} ...")
state_dict = load_file(TRANSFORMER_CKPT)

# If the checkpoint's patch embedding has a different number of input
# channels, copy only the leading and trailing feature channels into the
# base model's weight and keep the rest of the base weight unchanged.
param_name = "patch_embed.proj.weight"
if state_dict[param_name].size(1) != transformer.state_dict()[param_name].size(1):
    latent_ch, feat_scale = 16, 8
    feat_dim = latent_ch * feat_scale
    new_weight = transformer.state_dict()[param_name].clone()
    new_weight[:, :feat_dim] = state_dict[param_name][:, :feat_dim]
    new_weight[:, -feat_dim:] = state_dict[param_name][:, -feat_dim:]
    state_dict[param_name] = new_weight
    print(f"Adapted {param_name} channels for VAE mask.")

missing_keys, unexpected_keys = transformer.load_state_dict(state_dict, strict=False)
print(f"Missing keys: {len(missing_keys)}, Unexpected keys: {len(unexpected_keys)}")

print("\nLoading tokenizer, text encoder, and scheduler...")
tokenizer = T5Tokenizer.from_pretrained(BASE_MODEL_PATH, subfolder="tokenizer")
text_encoder = T5EncoderModel.from_pretrained(
    BASE_MODEL_PATH,
    subfolder="text_encoder",
    torch_dtype=WEIGHT_DTYPE,
)
scheduler = DDIMScheduler.from_pretrained(BASE_MODEL_PATH, subfolder="scheduler")

print("\nBuilding pipeline...")
pipe = CogVideoXFunInpaintPipeline(
    tokenizer=tokenizer,
    text_encoder=text_encoder,
    vae=vae,
    transformer=transformer,
    scheduler=scheduler,
)

convert_weight_dtype_wrapper(pipe.transformer, WEIGHT_DTYPE)
pipe.enable_model_cpu_offload(device=DEVICE)
generator = torch.Generator(device=DEVICE).manual_seed(SEED)

print("\nPreparing sample input...")
input_video, input_video_mask, prompt, _ = get_video_mask_input(
    SAMPLE_NAME,
    sample_size=SAMPLE_SIZE,
    keep_fg_ids=[-1],
    max_video_length=video_length,
    temporal_window_size=TEMPORAL_WINDOW_SIZE,
    data_rootdir=DATA_ROOTDIR,
    use_quadmask=True,
    dilate_width=11,
)

negative_prompt = (
    "Watermark present in each frame. The background is solid. "
    "Strange body and strange trajectory. Distortion."
)

print(f"\nPrompt: {prompt}")
print(f"Input video tensor shape: {tuple(input_video.shape)}")
print(f"Mask video tensor shape: {tuple(input_video_mask.shape)}")

print("\nDisplaying input video...")
input_video_path = os.path.join(DATA_ROOTDIR, SAMPLE_NAME, "input_video.mp4")

display(Video(input_video_path, embed=True, width=672))

We load the VOID checkpoint, align the transformer weights when needed, and initialize the tokenizer, text encoder, scheduler, and final inpainting pipeline. We then enable CPU offloading, seed the generator for reproducibility, and prepare the input video, mask video, and prompt from the selected sample. By the end of this section, we have everything ready for actual inference, including the negative prompt and the input video preview.
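The weight-alignment step is easier to follow on a toy example. The sketch below reproduces only the slicing logic, using plain Python lists in place of tensors: each row stands for the input-channel axis of one output unit. The function name `adapt_input_channels` and the tiny sizes are assumptions made for illustration; the tutorial performs the same slice assignments on `patch_embed.proj.weight` with `feat_dim = 16 * 8`.

```python
def adapt_input_channels(base_rows, ckpt_rows, feat_dim):
    """Copy the first and last `feat_dim` channels of each checkpoint row
    into a copy of the matching base row, leaving the middle untouched."""
    if feat_dim <= 0:
        raise ValueError("feat_dim must be positive")
    adapted = []
    for base, ckpt in zip(base_rows, ckpt_rows):
        row = list(base)                     # start from the base model's weights
        row[:feat_dim] = ckpt[:feat_dim]     # leading feature channels
        row[-feat_dim:] = ckpt[-feat_dim:]   # trailing feature channels
        adapted.append(row)
    return adapted


if __name__ == "__main__":
    base = [[0, 0, 0, 0, 0, 0]]   # base model: 6 input channels
    ckpt = [[1, 2, 3, 4]]         # checkpoint: 4 input channels
    print(adapt_input_channels(base, ckpt, feat_dim=2))
```

With these toy inputs the two middle channels keep the base model's values, which is the effect the tutorial relies on when the checkpoint and the mask-enabled transformer disagree on the number of input channels.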

print("\nRunning VOID Pass 1 inference...")
with torch.no_grad():
    sample = pipe(
        prompt,
        num_frames=TEMPORAL_WINDOW_SIZE,
        negative_prompt=negative_prompt,
        height=SAMPLE_SIZE[0],
        width=SAMPLE_SIZE[1],
        generator=generator,
        guidance_scale=GUIDANCE_SCALE,
        num_inference_steps=NUM_INFERENCE_STEPS,
        video=input_video,
        mask_video=input_video_mask,
        strength=1.0,
        use_trimask=True,
        use_vae_mask=True,
    ).videos

print(f"Output shape: {tuple(sample.shape)}")

output_dir = Path("/content/void_outputs")
output_dir.mkdir(parents=True, exist_ok=True)

output_path = str(output_dir / f"{SAMPLE_NAME}_void_pass1.mp4")
comparison_path = str(output_dir / f"{SAMPLE_NAME}_comparison.mp4")

print("\nSaving output video...")
save_videos_grid(sample, output_path, fps=12)

print("Saving side-by-side comparison...")
save_inout_row(input_video, input_video_mask, sample, comparison_path, fps=12)

print(f"\nSaved output to: {output_path}")
print(f"Saved comparison to: {comparison_path}")

print("\nDisplaying generated result...")
display(Video(output_path, embed=True, width=672))

print("\nDisplaying comparison (input | mask | output)...")
display(Video(comparison_path, embed=True, width=1344))

print("\nDone.")

We run the actual VOID Pass 1 inference on the selected sample using the prepared prompt, mask, and model pipeline. We save the generated output video and also create a side-by-side comparison video so we can inspect the input, mask, and final result together. We display the generated videos directly in Colab, which helps us verify that the full video object-removal workflow works end to end.
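When running several samples in one session, the output-path logic is worth factoring out so each sample gets its own pair of files. This is a minimal sketch under that assumption; `build_output_paths` is a hypothetical helper, and only the file-name pattern (`<sample>_void_pass1.mp4` and `<sample>_comparison.mp4`) is taken from the tutorial code.

```python
from pathlib import Path


def build_output_paths(output_dir, sample_name):
    """Create the output directory and return (result_path, comparison_path)."""
    output_dir = Path(output_dir)
    # parents=True creates missing parent folders; exist_ok=True makes reruns safe.
    output_dir.mkdir(parents=True, exist_ok=True)
    result_path = output_dir / f"{sample_name}_void_pass1.mp4"
    comparison_path = output_dir / f"{sample_name}_comparison.mp4"
    return str(result_path), str(comparison_path)
```

For example, `build_output_paths("/content/void_outputs", "pillow")` would yield the two paths to pass to `save_videos_grid` and `save_inout_row` for the pillow sample.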

In conclusion, we created a complete, Colab-ready implementation of the VOID model and ran an end-to-end video inpainting workflow within a single, streamlined pipeline. We went beyond basic setup by handling model downloads, prompt preparation, checkpoint loading, mask-aware inference, and output visualization in a way that is practical for experimentation and adaptation. We also saw how the different model components come together to remove objects from video while preserving the surrounding scene as naturally as possible. In the end, we successfully ran the official sample and built a strong working foundation that helps us extend the pipeline to custom videos, prompts, and more advanced research use cases.
