Researchers at Meta’s FAIR lab have released NeuralSet, a Python framework designed to remove one of the most persistent bottlenecks in Neuro-AI research: the painful, fragmented process of getting brain data into a deep learning pipeline.

The Problem: Neuroscience Data Is Stuck in the Pre-Deep-Learning Era

Neuroscience already has excellent, battle-tested software. Tools like MNE-Python, EEGLAB, FieldTrip, Brainstorm, Nilearn, and fMRIPrep are the gold standard for signal processing across electrophysiology and neuroimaging. The issue is that these tools were designed for a pre-deep-learning world: they rely on eager loading, which assumes entire datasets fit into RAM, and they lack native abstractions for temporally aligning neural time series with high-dimensional embeddings from modern AI frameworks like HuggingFace Transformers.

The result? Researchers spend enormous effort building ad-hoc pipelines that require manual data wrangling, manual caching, and complex backend configuration, just to pair brain signals with, say, GPT-2 text embeddings for a single experiment. Now that public datasets on platforms like OpenNeuro reach the terabyte scale, and experimental protocols increasingly incorporate continuous speech and video stimuli, this infrastructure gap is no longer merely inconvenient: it is a scientific bottleneck.

What NeuralSet Actually Does

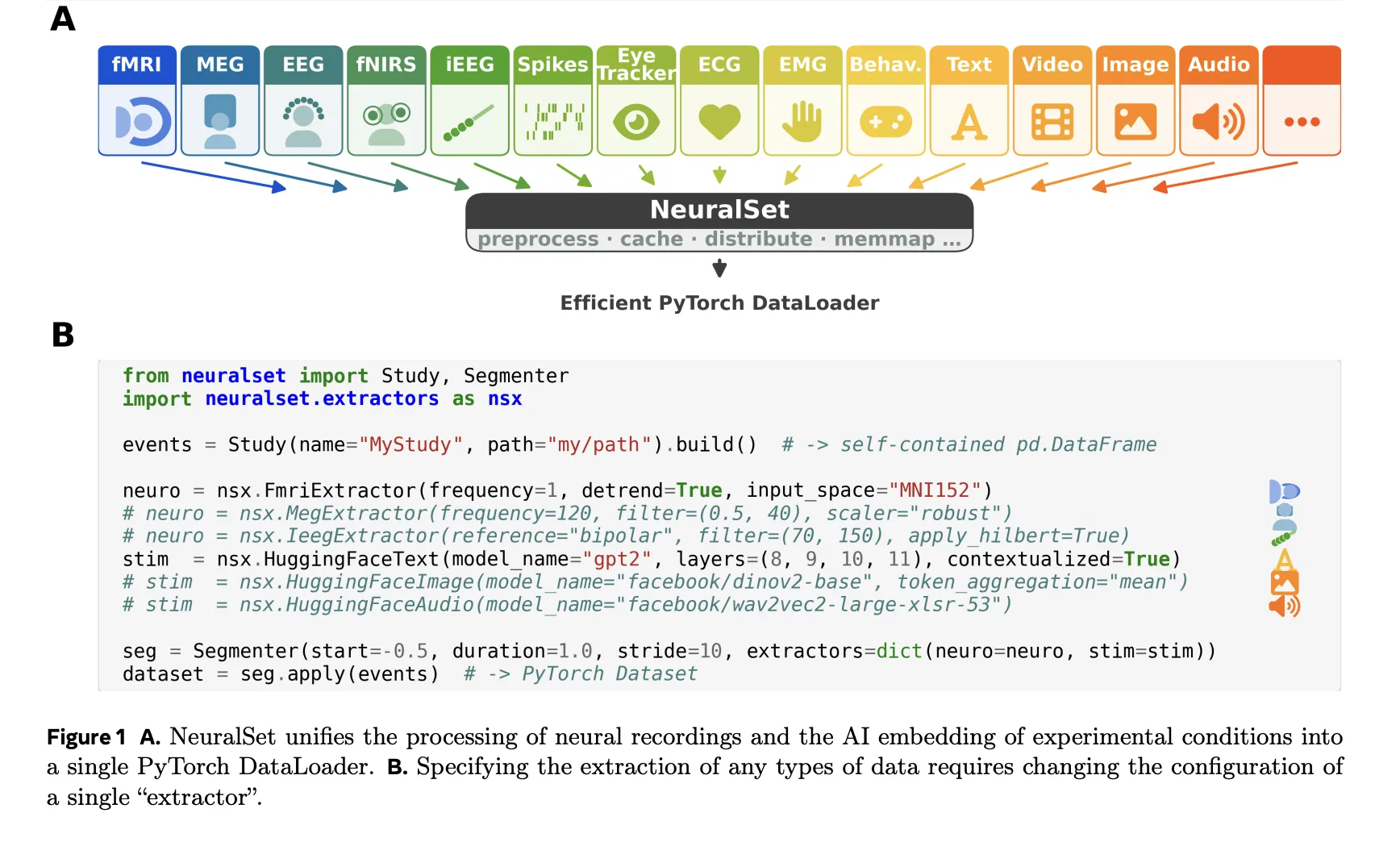

NeuralSet’s core design principle is structure–data decoupling. Instead of loading raw signals upfront, NeuralSet represents the logical structure of any experiment as lightweight, event-driven metadata, kept completely separate from the memory- and compute-intensive extraction of the actual signals. The framework is organized around five core abstractions: Events, Extractors, Segments, Batch Data, and a Backend layer.

In practice, everything in an experiment (an fMRI run, a word spoken during a task, a video stimulus) is modeled as an Event: a lightweight Python dictionary defined by a type, a start time, a duration, and a timeline (a unique identifier for a continuous recording session). A Study object assembles all events in an entire dataset into a single pandas DataFrame. NeuralSet supports BIDS-compliant datasets but is not limited to them. Because the DataFrame contains only lightweight metadata, not the raw signals themselves, engineers can filter, explore, and recombine massive datasets with standard pandas operations without loading a single byte of raw data into memory.
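To make the structure–data decoupling concrete, here is a minimal, dependency-free sketch of the idea. It is not NeuralSet’s API: the `Event` dataclass, its `text` and `filepath` fields, and the sample events are hypothetical, and a plain list stands in for the pandas DataFrame a Study produces. The point is that filtering touches only metadata; no raw file is ever opened.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    """Hypothetical lightweight event record: metadata only, no signal."""
    type: str           # e.g. "Fmri", "Word", "Video"
    start: float        # onset in seconds within the timeline
    duration: float     # length in seconds
    timeline: str       # identifier of one continuous recording session
    text: str = ""      # optional payload, e.g. the spoken word
    filepath: str = ""  # where raw data *would* be loaded from (never opened here)


events = [
    Event("Fmri", 0.0, 600.0, "sub-01_run-1", filepath="sub-01/func/run-1.nii.gz"),
    Event("Word", 12.3, 0.4, "sub-01_run-1", text="hello"),
    Event("Word", 12.8, 0.5, "sub-01_run-1", text="world"),
    Event("Fmri", 0.0, 600.0, "sub-02_run-1", filepath="sub-02/func/run-1.nii.gz"),
    Event("Word", 3.1, 0.3, "sub-02_run-1", text="hi"),
]

# Filtering and grouping operate on metadata alone; no raw file is read.
words = [e for e in events if e.type == "Word"]
sub01_words = [e.text for e in words if e.timeline == "sub-01_run-1"]
timelines = sorted({e.timeline for e in events})
```

On a real pandas DataFrame the same filter is a boolean mask such as `df[df["type"] == "Word"]`, which is equally cheap because only the metadata columns exist in memory.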

Composable EventsTransform operations can then be chained to enrich or filter events, for example by annotating words with their sentence context, assigning cross-validation splits, or chunking long audio and video events into shorter segments. Multiple Study and Transform steps can also be composed with a Chain, which produces a single reproducible, cacheable pipeline object.
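The transform-and-chain pattern can be sketched with plain functions. The three transforms below mirror the examples in the paragraph above (sentence context, cross-validation splits, chunking), but their names, signatures, and the dict-based event schema (including the `sentence_id` field) are illustrative assumptions, not NeuralSet’s EventsTransform or Chain classes.

```python
from functools import reduce
from typing import Callable

Events = list[dict]
Transform = Callable[[Events], Events]


def add_sentence_context(events: Events) -> Events:
    """Annotate each word with the full text of its sentence (hypothetical schema)."""
    sentences: dict[int, list[str]] = {}
    for e in events:
        if e["type"] == "Word":
            sentences.setdefault(e["sentence_id"], []).append(e["text"])
    return [
        {**e, "context": " ".join(sentences[e["sentence_id"]])} if e["type"] == "Word" else e
        for e in events
    ]


def assign_split(n_folds: int) -> Transform:
    """Assign a deterministic CV fold per timeline, so one session never spans folds."""
    def transform(events: Events) -> Events:
        timelines = sorted({e["timeline"] for e in events})
        fold = {t: i % n_folds for i, t in enumerate(timelines)}
        return [{**e, "split": fold[e["timeline"]]} for e in events]
    return transform


def chunk(max_duration: float) -> Transform:
    """Split events longer than max_duration into consecutive pieces."""
    def transform(events: Events) -> Events:
        out: Events = []
        for e in events:
            if e["duration"] <= max_duration:
                out.append(e)  # short events pass through unchanged
                continue
            start, end = e["start"], e["start"] + e["duration"]
            while end - start > max_duration:
                out.append({**e, "start": start, "duration": max_duration})
                start += max_duration
            out.append({**e, "start": start, "duration": end - start})
        return out
    return transform


def chain(*steps: Transform) -> Transform:
    """Compose transforms left to right into a single pipeline callable."""
    return lambda events: reduce(lambda acc, step: step(acc), steps, events)


pipeline = chain(add_sentence_context, assign_split(n_folds=2), chunk(max_duration=10.0))
events = [
    {"type": "Audio", "start": 0.0, "duration": 25.0, "timeline": "run-1"},
    {"type": "Word", "start": 1.0, "duration": 0.5, "timeline": "run-1", "sentence_id": 0, "text": "hello"},
    {"type": "Word", "start": 1.6, "duration": 0.5, "timeline": "run-1", "sentence_id": 0, "text": "world"},
    {"type": "Word", "start": 2.0, "duration": 0.4, "timeline": "run-2", "sentence_id": 1, "text": "bye"},
]
result = pipeline(events)
```

Because each step is a pure function from events to events, the composed pipeline is itself just another transform, which is what makes a chain easy to reuse and to cache as one unit.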

When it is time to work with the data itself, NeuralSet uses Extractors to bridge the gap between the metadata layer and the numerical arrays that machine learning models require. For neural recordings, NeuralSet wraps the preprocessing stacks of domain-specific libraries directly: an FmriExtractor delegates to Nilearn for signal cleaning, spatial smoothing, and surface- or atlas-based projection, while a MegExtractor or EegExtractor delegates to MNE-Python for filtering, re-referencing, and resampling. The same unified interface covers iEEG, fNIRS, EMG, and spike recordings, so switching modalities requires changing only a configuration parameter, not rewriting a pipeline.
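Switching modalities through one configuration parameter is essentially a registry pattern: a config field selects which backend-specific preprocessing runs, while the calling code stays the same. The sketch below illustrates only that pattern. The registry, the `ExtractorConfig` fields, and the step names are hypothetical; the real FmriExtractor and MegExtractor/EegExtractor delegate to Nilearn and MNE-Python instead of returning step labels.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ExtractorConfig:
    modality: str            # the single parameter that switches backends
    frequency: float = 50.0  # target sampling rate in Hz (illustrative)


# Hypothetical registry: modality name -> function describing its preprocessing plan.
REGISTRY: dict[str, Callable[[ExtractorConfig], list[str]]] = {}


def register(*modalities: str):
    """Decorator that files a plan function under one or more modality names."""
    def decorator(fn):
        for m in modalities:
            REGISTRY[m] = fn
        return fn
    return decorator


@register("fmri")
def nilearn_plan(cfg: ExtractorConfig) -> list[str]:
    # Stand-in for delegating to Nilearn (cleaning, smoothing, projection).
    return ["clean", "smooth", "project"]


@register("meg", "eeg")
def mne_plan(cfg: ExtractorConfig) -> list[str]:
    # Stand-in for delegating to MNE-Python (filtering, re-referencing, resampling).
    return ["filter", "re-reference", f"resample@{cfg.frequency}Hz"]


def build_pipeline(cfg: ExtractorConfig) -> list[str]:
    """Identical calling code for every modality; only cfg.modality differs."""
    try:
        plan = REGISTRY[cfg.modality]
    except KeyError:
        raise ValueError(f"unknown modality {cfg.modality!r}") from None
    return plan(cfg)


eeg_steps = build_pipeline(ExtractorConfig(modality="eeg", frequency=100.0))
fmri_steps = build_pipeline(ExtractorConfig(modality="fmri"))
```

Adding a new modality in this design means registering one more plan function; no existing caller has to change.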

For experimental stimuli, NeuralSet provides native integration with the HuggingFace ecosystem. A single HuggingFaceImage extractor can embed stimulus frames via DINOv2 or CLIP; analogous extractors exist for audio (Wav2Vec, Whisper), text (GPT-2, LLaMA), and video (VideoMAE). Critically, NeuralSet can expand a static embedding (say, a single vector per image) into a time series at an arbitrary frequency, so that stimulus representations are always temporally aligned with neural recordings.
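Expanding a static embedding into a time series amounts to sampling the window at the target frequency and repeating each event’s vector at every sample that falls inside that event. The function below is a minimal plain-Python sketch of the idea; its name, the zero fill for samples outside any event, and the half-open `[start, start + duration)` interval are assumptions, not NeuralSet’s documented behavior.

```python
def expand_to_timeseries(
    events: list[tuple[float, float, list[float]]],  # (start_s, duration_s, embedding)
    window_start: float,
    window_duration: float,
    frequency: float,
) -> list[list[float]]:
    """Return one embedding row per time sample at `frequency` Hz.

    Each sample at time t receives the embedding of the event whose
    [start, start + duration) interval contains t, or zeros if none does.
    `events` must be non-empty and share one embedding dimension.
    """
    dim = len(events[0][2])
    n_samples = int(round(window_duration * frequency))
    rows = []
    for i in range(n_samples):
        t = window_start + i / frequency
        row = [0.0] * dim  # zero fill when no stimulus is on screen
        for start, duration, emb in events:
            if start <= t < start + duration:
                row = list(emb)
                break
        rows.append(row)
    return rows


# Two images shown for 0.5 s each, with toy 3-d embeddings, sampled at 4 Hz.
images = [(0.0, 0.5, [1.0, 0.0, 0.0]), (0.5, 0.5, [0.0, 1.0, 0.0])]
ts = expand_to_timeseries(images, window_start=0.0, window_duration=1.5, frequency=4.0)
```

The result has one row per sample (six here), so it can be stacked directly against a neural recording resampled to the same frequency.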

Extractors follow a three-phase execution model: configure (parameter validation at construction time), prepare (pre-compute and cache heavy outputs for all events), and extract (lazy retrieval from the cache during model training). As a result, expensive computations, such as running a large language model over every word in a corpus, are performed once and reused across experiments. The Extractors’ output for a single segment is Batch Data: a dictionary of tensors keyed by extractor name, together with the corresponding segments.
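The configure/prepare/extract split can be illustrated with a small class that validates in its constructor, computes everything once in `prepare`, and only reads from a cache in `extract`. This sketch relies on several assumptions: the class, its in-memory dict cache, and the toy `embed` function (standing in for an expensive model such as an LLM) are hypothetical. NeuralSet itself caches through exca, and its Batch Data holds tensors rather than Python lists.

```python
from typing import Callable


class CachedExtractor:
    """Hypothetical three-phase extractor: configure -> prepare -> extract."""

    def __init__(self, name: str, embed: Callable[[str], list[float]], dim: int):
        # Phase 1, configure: validate parameters at construction time.
        if dim <= 0:
            raise ValueError("dim must be positive")
        self.name, self.embed, self.dim = name, embed, dim
        self._cache: dict[str, list[float]] = {}
        self.n_computed = 0  # counts expensive calls, to show each happens once

    def prepare(self, keys: list[str]) -> None:
        # Phase 2, prepare: run the expensive computation once per unique key.
        for key in keys:
            if key not in self._cache:
                vec = self.embed(key)
                if len(vec) != self.dim:
                    raise ValueError(f"{self.name}: expected dim {self.dim}, got {len(vec)}")
                self._cache[key] = vec
                self.n_computed += 1

    def extract(self, key: str) -> list[float]:
        # Phase 3, extract: cheap lookup during training; no recomputation.
        return self._cache[key]


def toy_embed(word: str) -> list[float]:
    """Stand-in for an expensive model call: (length, number of 'e's)."""
    return [float(len(word)), float(word.count("e"))]


words = ["the", "cat", "sees", "the", "tree"]
extractor = CachedExtractor("text", toy_embed, dim=2)
extractor.prepare(words)
# A Batch-Data-like dict keyed by extractor name.
batch = {extractor.name: [extractor.extract(w) for w in words]}
```

The repeated word "the" is embedded only once, so the four unique words cost four model calls no matter how many times the training loop asks for them.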

Segmenter, DataLoader, and Cluster-Ready Infrastructure

A Segmenter slices the events DataFrame into Segments, contiguous temporal windows that each represent a single training example, either on a sliding-window grid or anchored to specific trigger events such as image or word onsets. The resulting SegmentDataset is a standard PyTorch Dataset, directly compatible with DataLoader, PyTorch Lightning, or any other PyTorch-based framework.
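Both segmentation strategies reduce to generating (start, stop) windows: a regular grid over a recording, or fixed offsets around each trigger onset. A minimal sketch follows; the function names, the `Segment` dataclass, and the `tmin`/`tmax` offset convention (borrowed from MNE-style epoching) are assumptions. The final class mirrors the `__len__`/`__getitem__` protocol that a map-style PyTorch `Dataset` exposes, without importing torch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Segment:
    timeline: str
    start: float  # seconds
    stop: float   # seconds (exclusive)


def sliding_segments(timeline: str, duration: float, window: float, stride: float) -> list[Segment]:
    """Regular grid of windows that fit entirely inside [0, duration)."""
    segments, start = [], 0.0
    while start + window <= duration:
        segments.append(Segment(timeline, start, start + window))
        start += stride
    return segments


def trigger_segments(timeline: str, onsets: list[float], tmin: float, tmax: float) -> list[Segment]:
    """One window per trigger, from onset + tmin to onset + tmax (tmin may be negative)."""
    return [Segment(timeline, t + tmin, t + tmax) for t in onsets]


class SegmentDatasetSketch:
    """Map-style dataset: __len__ and __getitem__ are what a DataLoader indexes into."""

    def __init__(self, segments: list[Segment], load):
        # `load` is a hypothetical callback that extracts data for one segment.
        self.segments, self.load = segments, load

    def __len__(self) -> int:
        return len(self.segments)

    def __getitem__(self, i: int):
        # Extraction happens lazily, one segment at a time.
        return self.load(self.segments[i])


grid = sliding_segments("run-1", duration=4.0, window=2.0, stride=0.5)
locked = trigger_segments("run-1", onsets=[1.0, 2.5], tmin=-0.25, tmax=0.75)
dataset = SegmentDatasetSketch(locked, load=lambda seg: (seg.start, seg.stop))
```

Keeping segments as pure metadata means the same dataset object can be rebuilt with a different windowing strategy without touching any extracted signal.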

NeuralSet is built on the exca package, which handles deterministic, hash-based caching, full computational provenance, and hardware-agnostic execution. Changing a single preprocessing parameter invalidates only the affected downstream cache, leaving independent branches untouched. Because full provenance is maintained, any processed tensor can be traced back to the exact version of the raw data and the exact preprocessing chain used to generate it. Researchers can prototype on a single subject on their laptop, then dispatch 100 subjects to a SLURM-based HPC cluster by changing a single configuration flag, with no infrastructure-specific code required.
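Selective invalidation follows naturally when each step’s cache key hashes its own parameters together with the keys of its inputs. The sketch below uses only `hashlib` and `json` to demonstrate that property. It illustrates the general technique, not exca’s implementation, and the step names and parameters are hypothetical.

```python
import hashlib
import json


def cache_key(step: str, params: dict, upstream: tuple[str, ...] = ()) -> str:
    """Deterministic key: hash of step name, params (sorted), and upstream keys."""
    payload = json.dumps(
        {"step": step, "params": params, "upstream": list(upstream)}, sort_keys=True
    )
    return hashlib.sha256(payload.encode()).hexdigest()[:12]


def pipeline_keys(filter_hz: float, model: str) -> dict[str, str]:
    """Two independent branches (neural, stimulus) that meet in a final align step."""
    raw = cache_key("load_raw", {"dataset": "example"})
    neural = cache_key("bandpass", {"high_hz": filter_hz}, (raw,))
    stimulus = cache_key("embed_text", {"model": model})
    joined = cache_key("align", {"frequency": 50}, (neural, stimulus))
    return {"raw": raw, "neural": neural, "stimulus": stimulus, "joined": joined}


before = pipeline_keys(filter_hz=40.0, model="gpt2")
after = pipeline_keys(filter_hz=30.0, model="gpt2")
# Only steps downstream of the changed filter get new keys (i.e. cache misses).
changed = sorted(k for k in before if before[k] != after[k])
```

Changing the filter cutoff yields new keys for `neural` and `joined` only; the raw load and the expensive text embedding keep their keys and are served from cache.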

NeuralSet uses Pydantic to enforce strict schema validation at initialization time across every configurable object: Events, Studies, Extractors, Segmenters, and Transforms are all Pydantic BaseModel subclasses. A misconfigured parameter (for example, a negative filter frequency or an invalid BIDS directory path) therefore raises a clear error immediately, before any job is submitted, rather than failing hours into a processing run.
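NeuralSet relies on Pydantic for this. To keep the example dependency-free, the sketch below reproduces the same fail-fast behavior with a stdlib dataclass and `__post_init__`; in Pydantic, comparable checks are usually expressed with field constraints such as `Field(ge=0)` or a `DirectoryPath` type. The `FilterConfig` class and its fields are hypothetical, not NeuralSet’s actual schema.

```python
import tempfile
from dataclasses import dataclass
from pathlib import Path


@dataclass(frozen=True)
class FilterConfig:
    """Hypothetical preprocessing config validated at construction time."""
    bids_root: str
    l_freq: float  # high-pass cutoff in Hz
    h_freq: float  # low-pass cutoff in Hz

    def __post_init__(self):
        # Every check runs when the object is built, before any job is submitted.
        if self.l_freq < 0:
            raise ValueError(f"l_freq must be >= 0, got {self.l_freq}")
        if self.h_freq <= self.l_freq:
            raise ValueError("h_freq must be greater than l_freq")
        if not Path(self.bids_root).is_dir():
            raise ValueError(f"BIDS root {self.bids_root!r} is not a directory")


def try_config(**kwargs) -> str:
    """Return 'ok' or the validation message, for demonstration."""
    try:
        FilterConfig(**kwargs)
        return "ok"
    except ValueError as err:
        return str(err)


with tempfile.TemporaryDirectory() as root:
    good = try_config(bids_root=root, l_freq=0.1, h_freq=40.0)
    negative = try_config(bids_root=root, l_freq=-1.0, h_freq=40.0)
missing = try_config(bids_root="/no/such/bids/dir", l_freq=0.1, h_freq=40.0)
```

All three outcomes are decided in microseconds at construction, which is the point: a bad cutoff or path surfaces before any cluster time is spent.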

How It Stacks Up Against Existing Tools

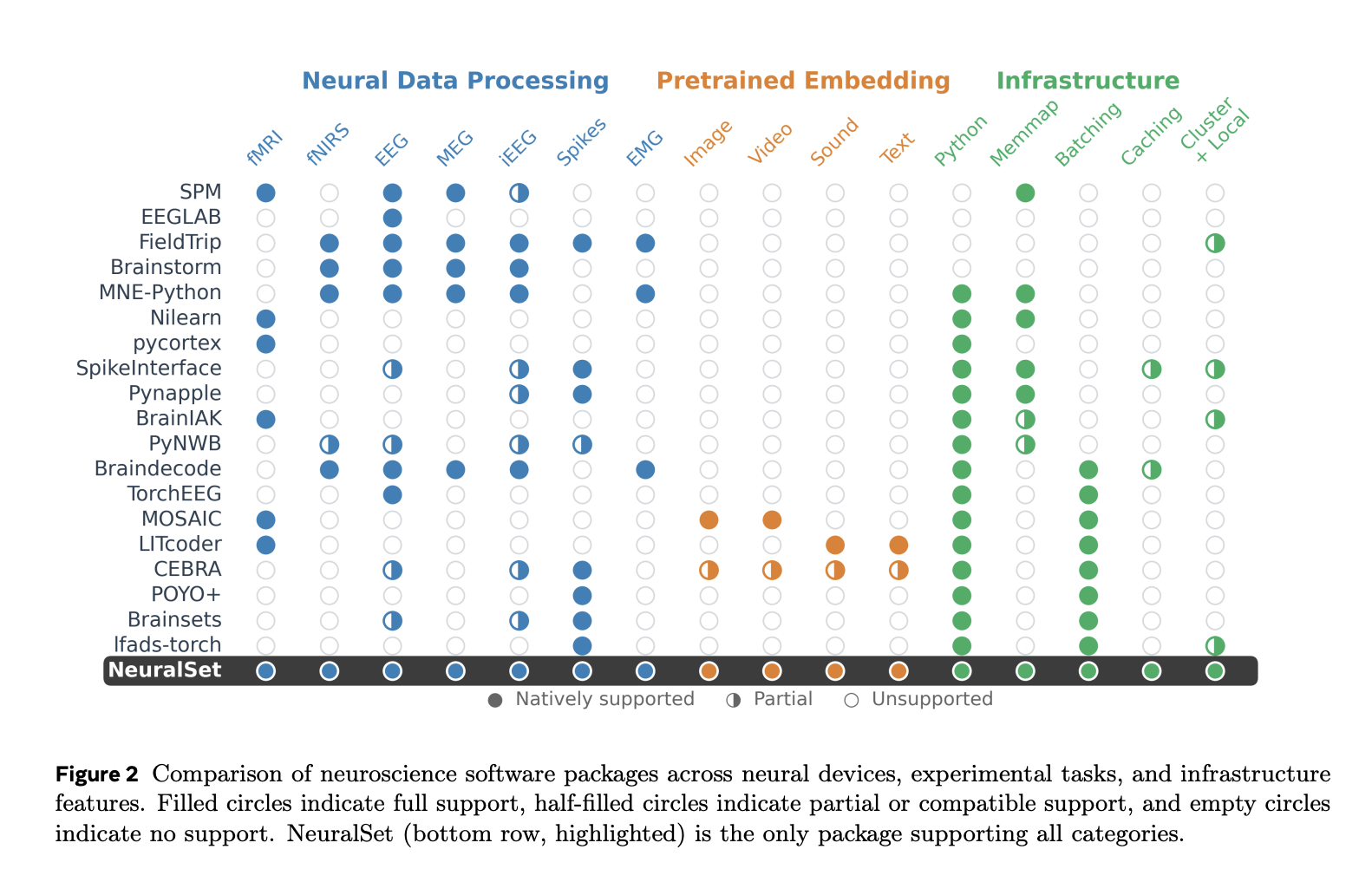

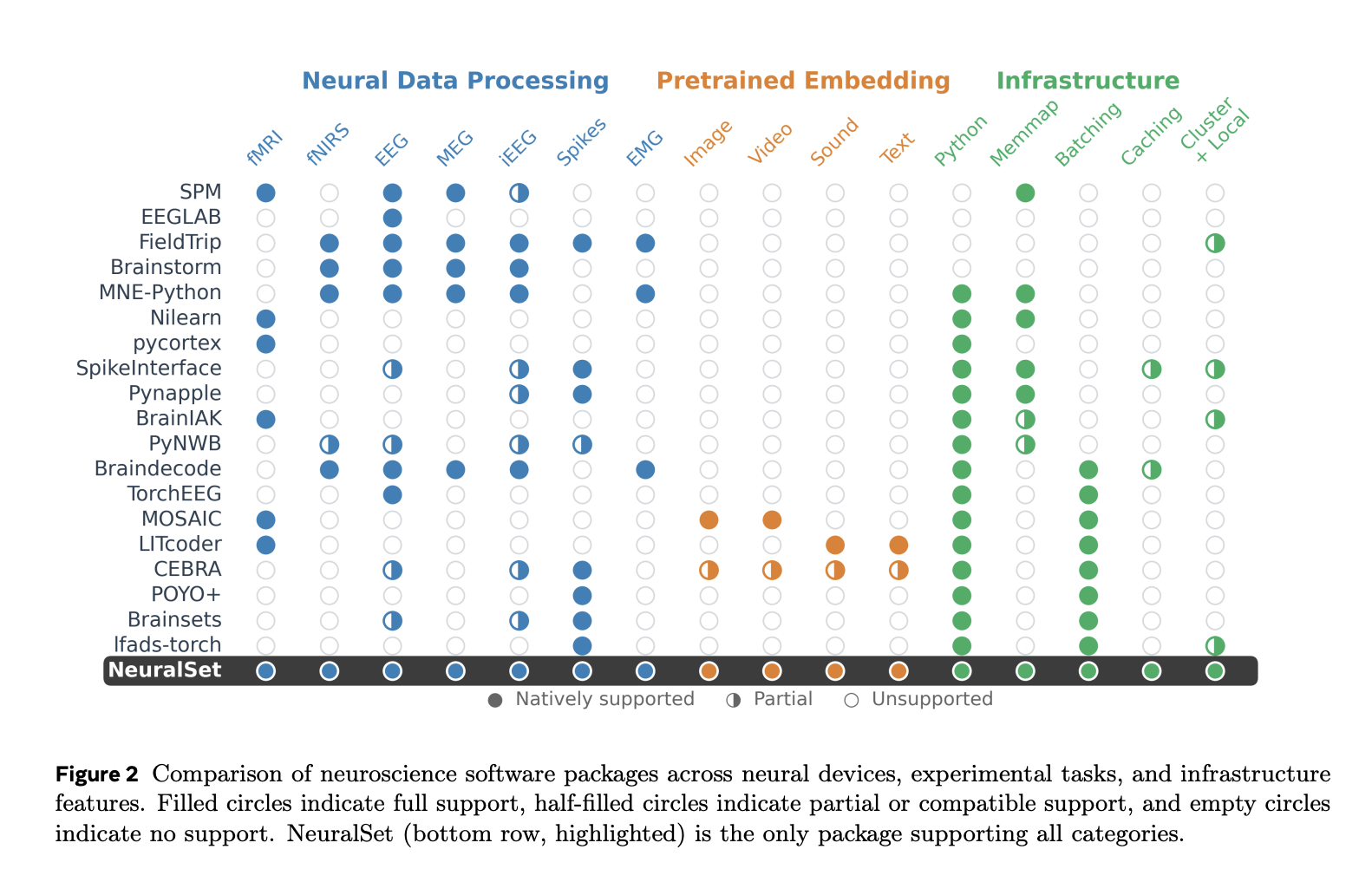

In the paper, the research team presents a detailed comparison of NeuralSet against 18 existing neuroscience software packages across neural devices (fMRI, EEG, MEG, iEEG, spikes, and more), experimental task types (image, video, sound, text), and infrastructure features (Python support, memmap, batching, caching, cluster execution). NeuralSet is the only package in the comparison with full support across all categories.

Key Takeaways

- NeuralSet unifies brain data and AI in a single pipeline. Researchers at Meta FAIR built NeuralSet to bridge the gap between diverse neural recordings (fMRI, M/EEG, spikes) and modern deep learning frameworks, delivering a single PyTorch-ready DataLoader for both.

- Structure–data decoupling eliminates memory bottlenecks. NeuralSet separates lightweight event metadata from heavy signal extraction, so AI devs and researchers can filter and explore terabyte-scale datasets without loading a single byte of raw data into RAM.

- Switching recording modalities requires changing only one config parameter. A unified Extractor interface wraps MNE-Python, Nilearn, and HuggingFace models, covering fMRI, EEG, MEG, iEEG, fNIRS, EMG, spikes, text, audio, and video, with no pipeline rewriting needed.

- Pydantic validation and deterministic caching prevent wasted compute. Configuration errors are caught at initialization, before any job runs, and a hash-based caching system ensures that expensive computations like LLM embeddings are performed once and reused across all experiments.

- The same code runs on a laptop or a SLURM cluster. NeuralSet’s hardware-agnostic backend, powered by the exca package, lets researchers and AI devs scale seamlessly from local prototyping to high-performance cluster execution by updating a single configuration flag.

Check out the Paper and GitHub Page.
