Researchers from China have introduced AntAngelMed, a large-scale open-source language model tailored for the medical field. According to the team, it is currently the largest and most capable open-source medical language model available.

What Is AntAngelMed?

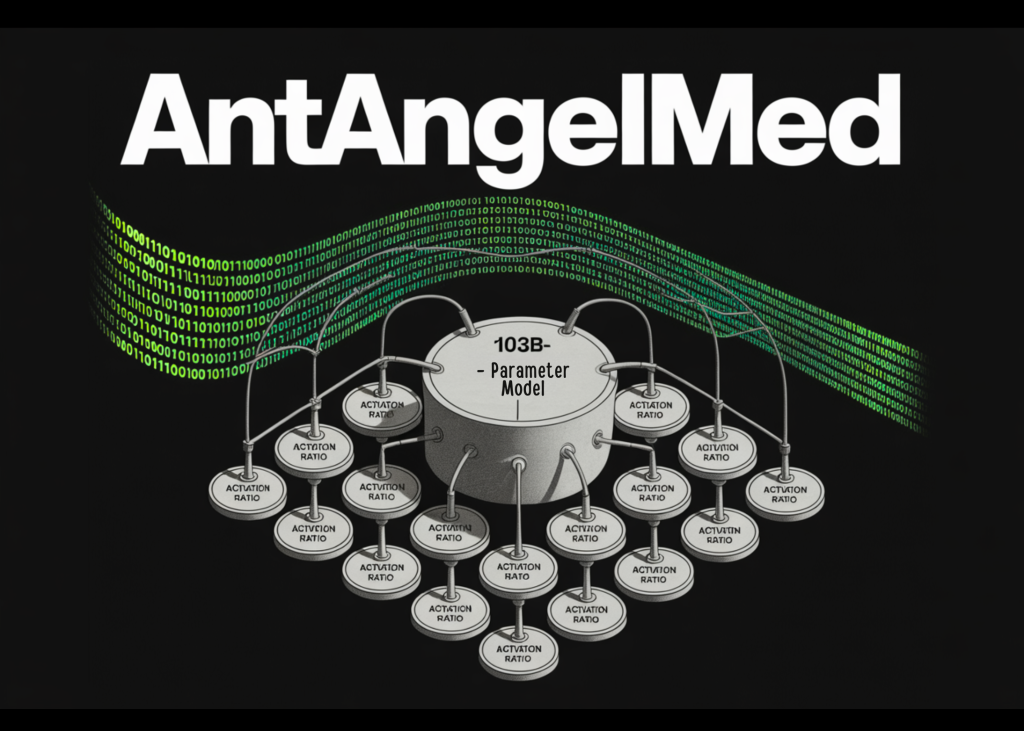

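AntAngelMed is a medical-focused language model with 103 billion total parameters, but it does not use all of them during inference. It relies on a Mixture-of-Experts (MoE) architecture with a 1/32 activation ratio, so only 6.1 billion parameters are engaged for each token it processes. The ratio describes how many of the routed experts fire; components that every token passes through, such as attention layers and embeddings, always stay active, which is why 6.1B is larger than 103B ÷ 32 (about 3.2B).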

To understand how MoE works: in a traditional dense model, every parameter is involved in processing each token. In an MoE setup, the network is split into multiple “expert” sub-networks, and a routing system picks only a small group of them for each input. This approach allows for a massive total parameter count — which generally means greater knowledge capacity — while keeping the actual computational cost tied to the much smaller number of active parameters.
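To make the routing idea concrete, here is a minimal NumPy sketch of a generic top-k MoE layer. It is not AntAngelMed's implementation: the softmax gate, the toy `tanh` experts, and the helper names `make_expert` and `moe_forward` are illustrative assumptions. With 32 experts and `k=1`, only one expert runs per token, a miniature version of a 1/32 expert activation ratio.

```python
import numpy as np

def make_expert(W):
    """Hypothetical toy expert: a single tanh layer with weight matrix W."""
    return lambda h: np.tanh(h @ W)

def moe_forward(x, gate_w, experts, k=2):
    """Route each token to its top-k experts and mix their outputs.

    x:       (num_tokens, d_model) token representations
    gate_w:  (d_model, num_experts) router weights
    experts: list of callables mapping (n, d_model) -> (n, d_model)
    """
    logits = x @ gate_w                                # (T, E) router scores
    topk = np.argsort(-logits, axis=-1)[:, :k]         # k highest-scoring experts per token
    sel = np.take_along_axis(logits, topk, axis=-1)    # (T, k) scores of the chosen experts
    weights = np.exp(sel - sel.max(axis=-1, keepdims=True))
    weights /= weights.sum(axis=-1, keepdims=True)     # softmax over the chosen experts only

    out = np.zeros_like(x)
    for e, expert in enumerate(experts):
        rows, slot = np.nonzero(topk == e)             # tokens that selected expert e
        if rows.size == 0:
            continue                                   # an unselected expert does no work
        out[rows] += weights[rows, slot][:, None] * expert(x[rows])
    return out

rng = np.random.default_rng(0)
d_model, num_experts, num_tokens = 8, 32, 4
experts = [make_expert(rng.normal(size=(d_model, d_model)) / np.sqrt(d_model))
           for _ in range(num_experts)]
x = rng.normal(size=(num_tokens, d_model))
gate_w = rng.normal(size=(d_model, num_experts))
y = moe_forward(x, gate_w, experts, k=1)   # each token touches 1 of 32 experts
print(y.shape)                             # (4, 8)
```

Production MoE kernels group tokens per expert on the accelerator instead of looping in Python, but the cost structure is the same: compute grows with `k`, not with the total number of experts.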

AntAngelMed builds on Ling-flash-2.0, a base model created by inclusionAI and designed according to what the team calls the Ling Scaling Laws. Key optimizations added on top include refined expert granularity, an adjusted shared-expert ratio, attention balance mechanisms, sigmoid routing without an auxiliary loss, a Multi-Token Prediction (MTP) layer, QK-Norm, and Partial-RoPE (where Rotary Position Embedding is applied to only a portion of each attention head's dimensions rather than to all of them).

The research team states that these combined design choices give small-activation MoE models up to 7× the efficiency of dense models: a model with a given number of active parameters can match a dense model roughly seven times that size. By that measure, AntAngelMed's 6.1B active parameters deliver performance comparable to a dense model of roughly 40B parameters (6.1B × 7 ≈ 43B). The team also reports that the speed advantage grows with output length during inference, reaching 7× or more over similarly sized dense models.
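"Sigmoid routing without an auxiliary loss" most likely refers to the scheme popularized by DeepSeek-V3: each expert receives an independent sigmoid score, and a per-expert bias that is used only for choosing experts gets nudged after each batch to even out the load, replacing a balancing loss term. The sketch below assumes that scheme; the source does not spell out Ling-flash-2.0's exact rule, and the function names, the step size `gamma`, and the deliberately skewed toy router are hypothetical.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def route(x, gate_w, bias, k):
    """Pick top-k experts per token from sigmoid scores plus a selection-only bias."""
    scores = sigmoid(x @ gate_w)                           # (T, E), independent per expert
    topk = np.argsort(-(scores + bias), axis=-1)[:, :k]    # the bias steers selection...
    w = np.take_along_axis(scores, topk, axis=-1)          # ...but weights use raw scores
    return topk, w / w.sum(axis=-1, keepdims=True)

def update_bias(bias, topk, num_experts, gamma=0.01):
    """Assumed update rule: lower the bias of overloaded experts, raise underloaded ones."""
    load = np.bincount(topk.ravel(), minlength=num_experts)
    return bias - gamma * np.sign(load - load.mean())

def max_load(topk, num_experts):
    """Number of tokens routed to the busiest expert."""
    return np.bincount(topk.ravel(), minlength=num_experts).max()

rng = np.random.default_rng(0)
T, d, E, k = 512, 16, 32, 2
x = rng.normal(size=(T, d))
x[:, 0] = 1.0                       # a constant input feature...
gate_w = 0.1 * rng.normal(size=(d, E))
gate_w[0, :3] += 2.0                # ...that makes the toy router strongly prefer experts 0-2

bias = np.zeros(E)
max_before = max_load(route(x, gate_w, bias, k)[0], E)
for _ in range(300):                # one bias update per batch (here the same batch repeated)
    topk, _ = route(x, gate_w, bias, k)
    bias = update_bias(bias, topk, E)
max_after = max_load(route(x, gate_w, bias, k)[0], E)
print(max_before, max_after)        # busiest expert's token count before vs. after balancing
```

Balancing happens through expert selection alone, so no extra loss term competes with the language-modeling objective during training.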

Training Pipeline

AntAngelMed follows a three-stage training process that layers broad language understanding with deep medical domain expertise.

In the first stage, the model undergoes continual pre-training on extensive medical corpora, including encyclopedias, web content, and academic publications. This phase starts from the Ling-flash-2.0 checkpoint, ensuring the model has a solid foundation in general reasoning before medical specialization begins.

The second stage involves Supervised Fine-Tuning (SFT) using a multi-source instruction dataset. This dataset blends general reasoning tasks — such as math, programming, and logic — to maintain chain-of-thought abilities, along with medical scenarios like doctor–patient Q&A, diagnostic reasoning, and safety and ethics cases.

The third stage applies Reinforcement Learning through the GRPO (Group Relative Policy Optimization) algorithm, paired with task-specific reward models. GRPO, first introduced in the DeepSeekMath paper, is a PPO variant that estimates baselines from group scores instead of a separate critic model, reducing computational overhead. Here, reward signals are crafted to guide the model toward empathy, structured clinical responses, safety boundaries, and evidence-based reasoning — all aimed at minimizing hallucinations on medical questions.
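The group-relative baseline at the heart of GRPO fits in a few lines. The NumPy sketch below follows the DeepSeekMath formulation in simplified, sequence-level form (the paper applies the clipped ratio per token). The reward values are hypothetical stand-ins for the task-specific reward models, and `clip=0.2` and `beta=0.04` are illustrative settings, not values reported for AntAngelMed.

```python
import numpy as np

def group_advantages(rewards, eps=1e-8):
    """GRPO baseline: score each completion relative to its own group.

    rewards: (G,) reward-model scores for G sampled answers to ONE prompt.
    No critic network is needed; the group mean plays the role of the baseline.
    """
    r = np.asarray(rewards, dtype=float)
    return (r - r.mean()) / (r.std() + eps)

def grpo_loss(logp_new, logp_old, logp_ref, adv, clip=0.2, beta=0.04):
    """Clipped surrogate plus KL penalty, averaged over the group (a loss to minimize).

    logp_*: (G,) log-probabilities of each completion under the current policy,
    the policy that sampled it, and the frozen reference model.
    """
    ratio = np.exp(logp_new - logp_old)
    surrogate = np.minimum(ratio * adv, np.clip(ratio, 1 - clip, 1 + clip) * adv)
    log_ref_ratio = logp_ref - logp_new
    kl = np.exp(log_ref_ratio) - log_ref_ratio - 1     # unbiased KL estimator, always >= 0
    return -(surrogate - beta * kl).mean()

# Hypothetical scores for four answers to one patient question,
# e.g. from reward models for safety, structure, and evidence-based reasoning.
rewards = [0.9, 0.2, 0.6, 0.3]
adv = group_advantages(rewards)
print(adv.round(2))   # the best-scoring answer gets the largest positive advantage
```

Because advantages are normalized within each group, a question where every sampled answer scores the same contributes no learning signal; the policy is pushed only toward answers that beat their siblings.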

Inference Performance

Running on NVIDIA H20 GPUs, AntAngelMed generates over 200 tokens per second, which the team reports is roughly 3× faster than a 36-billion-parameter dense model. With YaRN (Yet Another RoPE extensioN) extrapolation, it supports a 128K-token context window, enough to process full clinical documents, lengthy patient histories, or multi-turn medical conversations.
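YaRN extends context by rescaling RoPE frequencies unevenly: dimensions that complete many rotations within the original window keep their frequency, dimensions that complete less than one rotation are interpolated by the extension factor, and a linear ramp blends the two in between; attention logits also receive a mild temperature correction. A minimal sketch of that rule follows, using the YaRN paper's default ramp bounds (`alpha=1`, `beta=32`). The 32K original window, the 4× factor (32K × 4 = 128K), the rotated dimension count, and the RoPE base are hypothetical placeholders, not AntAngelMed's published configuration.

```python
import numpy as np

def yarn_inv_freq(rot_dim, base=10000.0, orig_ctx=32768, factor=4.0,
                  alpha=1.0, beta=32.0):
    """YaRN-scaled RoPE frequencies for the `rot_dim` dimensions RoPE is applied to."""
    theta = base ** (-np.arange(0, rot_dim, 2) / rot_dim)   # standard RoPE frequencies
    wavelength = 2 * np.pi / theta
    r = orig_ctx / wavelength                # full rotations inside the original window
    gamma = np.clip((r - alpha) / (beta - alpha), 0.0, 1.0)  # 0: interpolate, 1: keep
    return (1 - gamma) * theta / factor + gamma * theta

def yarn_attention_scale(factor):
    """Multiplier applied to queries and keys (sqrt(1/t) in the paper) to temper attention."""
    return 0.1 * np.log(factor) + 1.0

inv_freq = yarn_inv_freq(rot_dim=64)
print(inv_freq[:2], inv_freq[-2:])   # highest frequencies unchanged, lowest two divided by 4
print(yarn_attention_scale(4.0))
```

Leaving the fast-rotating dimensions untouched preserves the model's sense of nearby token order, while only the slow dimensions, which never completed a full cycle during training, are stretched to cover the longer window.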

The team has also released an FP8 quantized version of the model. When FP8 quantization is combined with EAGLE3 speculative decoding, inference throughput at a concurrency of 32 improves substantially over FP8 alone: by 71% on HumanEval, 45% on GSM8K, and 94% on Math-500. These benchmarks involve coding and math rather than medical tasks, so they show how well the speedup holds across different kinds of output rather than measuring medical answer quality.
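Why the gain differs from benchmark to benchmark follows from how speculative decoding works: a cheap drafter proposes several tokens, the full model verifies them in one pass, and every accepted token saves a full-model step, so outputs the drafter predicts well yield bigger speedups. The sketch below shows plain greedy speculative decoding with a separate draft function. It is not EAGLE3, which drafts from the target model's own hidden features and verifies a tree of candidates; the toy `target` and `draft` functions are hypothetical.

```python
from typing import Callable, List, Tuple

NextToken = Callable[[List[int]], int]    # greedy next-token function of a model

def speculative_decode(target: NextToken, draft: NextToken, prompt: List[int],
                       max_new: int, k: int = 4) -> Tuple[List[int], int]:
    """Greedy speculative decoding; returns (new tokens, number of target passes).

    The output is token-for-token identical to greedy decoding with `target` alone.
    """
    seq = list(prompt)
    target_passes = 0
    while len(seq) - len(prompt) < max_new:
        # 1) The draft model proposes k tokens autoregressively (cheap).
        proposal: List[int] = []
        for _ in range(k):
            proposal.append(draft(seq + proposal))
        # 2) The target checks all k positions; a real system does this in
        #    ONE batched forward pass, so it is counted once here.
        target_passes += 1
        accepted: List[int] = []
        for tok in proposal:
            expected = target(seq + accepted)
            accepted.append(expected)          # always keep the target's own choice
            if tok != expected:
                break                          # first disagreement ends this round
        else:
            accepted.append(target(seq + accepted))  # all k accepted: one bonus token
        seq.extend(accepted)
    return seq[len(prompt):len(prompt) + max_new], target_passes

def target(seq: List[int]) -> int:
    return (seq[-1] * 3 + 1) % 17

def draft(seq: List[int]) -> int:
    # Hypothetical drafter that disagrees with the target at every third position.
    return (target(seq) + 1) % 17 if len(seq) % 3 == 0 else target(seq)

out, passes = speculative_decode(target, draft, prompt=[1], max_new=20)
print(len(out), passes)   # prints: 20 7
```

Verification never changes what the target model would have produced; only the number of expensive passes shrinks, and it shrinks more when the drafter's guesses are accepted more often.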

Benchmark Results

In HealthBench — OpenAI’s open-source medical evaluation benchmark that uses simulated multi-turn medical conversations to assess real-world clinical performance — AntAngelMed holds the top position among all open-source models, outperforming several leading proprietary models as well, with its greatest advantage on the HealthBench-Hard subset.

In MedAIBench, an evaluation platform managed by China’s National Artificial Intelligence Medical Industry Pilot Facility, AntAngelMed places among the highest performers, especially excelling in medical knowledge Q&A and medical ethics and safety domains.

In MedBench, a Chinese healthcare LLM benchmark comprising 36 independently compiled datasets and roughly 700,000 samples across five areas (medical knowledge question answering, medical language understanding, medical language generation, complex medical reasoning, and safety and ethics), AntAngelMed ranks first overall.


Key Takeaways

- AntAngelMed is a 103-billion-parameter open-source medical large language model that activates only 6.1 billion parameters during inference, thanks to a 1/32 activation-ratio Mixture-of-Experts architecture inherited from Ling-flash-2.0.

- It follows a three-stage training pipeline: continual pre-training on medical corpora, supervised fine-tuning with a blend of general and clinical instruction data, and GRPO-based reinforcement learning to improve safety and diagnostic reasoning.

- On H20 hardware, the model delivers over 200 tokens per second, roughly three times faster than a comparable 36-billion-parameter dense model, and supports a 128K context window via YaRN extrapolation.

- AntAngelMed claims the top spot among open-source models on OpenAI's HealthBench, outperforms several proprietary models, ranks among the top performers on MedAIBench, and ranks first overall on MedBench.

- The model is accessible on Hugging Face, ModelScope, and GitHub; model weights are licensed under Apache 2.0, code under MIT, and an FP8 quantized version has also been released.
