On June 4, 1996, Ariane 5, a new European heavy-lift launcher designed to carry large payloads into orbit, made its maiden flight. Less than 40 seconds after liftoff the vehicle veered off course and was destroyed. The root cause was specification and design errors in the software of the inertial reference system. Code reused from Ariane 4 converted a 64-bit floating-point value related to the rocket's horizontal velocity into a 16-bit signed integer. Ariane 5's faster trajectory produced a value too large to fit, and the unhandled overflow shut down both the primary and the backup inertial reference units. Nobody had confirmed that the reused software's assumptions still held in the new environment. The failure remains one of the most expensive software bugs on record.

Why bring up an incident from three decades ago in a discussion about technical debt introduced by AI tools? Because it illustrates an important lesson: in complex systems, the danger lies not just in obviously broken code, but in code that appears fine yet is misaligned with its surroundings. AI-assisted development can produce a very similar problem.

I specialize in the Industrial Internet of Things (IIoT), particularly predictive maintenance, and here is what I observe: AI tools readily produce working code that seems to fit a given task perfectly, yet they do not check their own assumptions at the level of the whole system. In IIoT, this means a piece of code may be correct within a single function or service while ignoring the realities of specific hardware, data-flow patterns, architectural boundaries, or the conditions under which physical devices actually operate. Locally sound code then becomes the seed of systemic failures and expensive remediation, and it slows the evolution of the whole platform.

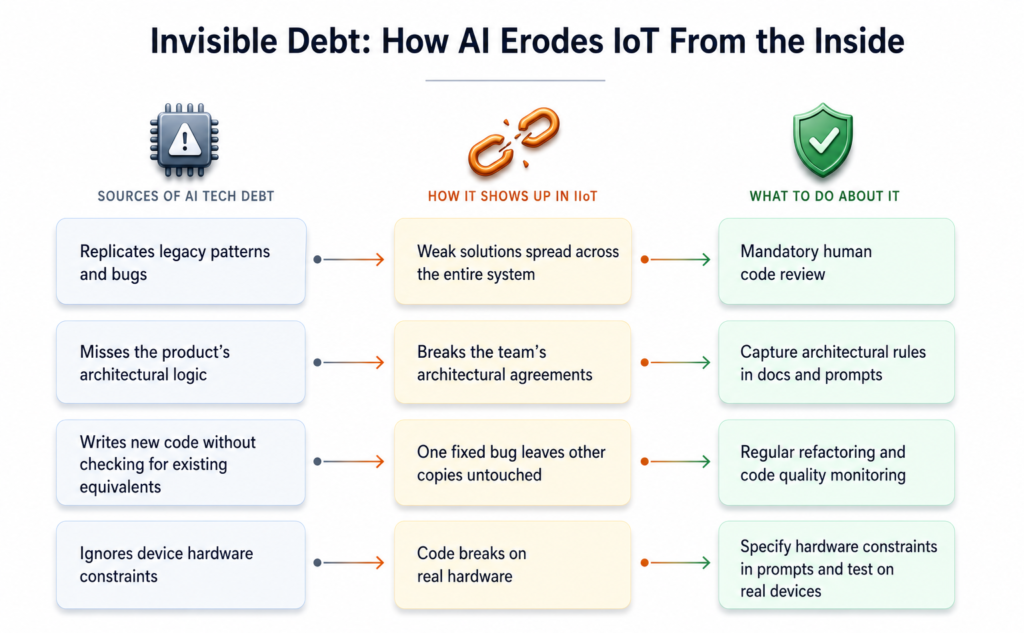

Four types of technical debt created by AI

Technical debt is any shortcut that speeds up progress today at the cost of higher expenses later. Below are four key ways AI tools can create it.

Carrying forward old patterns and mistakes

An AI assistant builds its suggestions from the surrounding code it can see at that moment and often misses broader design or architectural problems. GitHub itself acknowledges that Copilot has a limited scope, depends heavily on the context it is given, and may reproduce errors and biases present in the code it learned from. So if a project is already riddled with legacy approaches, unnecessary data duplication, or ad-hoc workarounds in place of well-thought-out architecture, the AI treats this as the baseline and keeps reproducing those patterns. The result is a feedback loop: bad habits are not merely preserved, they spread faster.

This is more than a theoretical concern. A study of some 304,000 verified AI-authored commits across more than 6,000 real-world projects found that, for each of the five AI tools analyzed, more than 15% of commits introduced at least one code quality issue, and about a quarter of those issues were still present, untouched, in the latest version of the code.

In IoT ecosystems this behavior is especially risky, because a legacy pattern seldom stays confined to a single module. When an assistant copies a flawed approach into firmware, gateway services, or telemetry handling, the flaw propagates through the entire pipeline, from the edge device all the way to the cloud layer.

Ignoring architectural context

AI excels at specific, localized engineering chores: drafting tests, wiring up boilerplate, or generating standard CRUD endpoints. What it lacks is a view of the bigger picture: which databases hold which kinds of data, where the operational boundaries lie, and how the parts of the system communicate. A study by Ox Security of 300 open-source projects, 50 of them wholly or partly AI-generated, found the code functional but consistently lacking in architectural awareness.

As a result, AI can pile up technical debt even without recycling outdated patterns. If architectural principles are not spelled out explicitly, in documentation, architecture decision records (ADRs), or the prompts themselves, the model optimizes each task in isolation. A mature IIoT platform shows the risk: time-series data, reference tables, and logs each live in a database tuned for its own workload. Asked to persist a new data type, an assistant unaware of this layout may, for example, write high-frequency sensor readings into the relational store meant for reference data, quietly breaking the agreements the team has put in place.
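One way to make the team's storage agreements enforceable, rather than tribal knowledge, is to write them down in code as well as in ADRs, where both reviewers and assistants will see them. The following is a minimal sketch, not a prescribed design: the three stores and the data-type names are hypothetical, and a real platform would attach actual database clients to each route. Every write goes through one explicit policy table, and an unknown data type fails loudly instead of landing in whichever database happens to be closest.

```python
from dataclasses import dataclass
from enum import Enum


class Store(Enum):
    """Hypothetical storage tiers, one per workload (names are illustrative)."""
    TIMESERIES = "timeseries"  # high-frequency sensor readings
    REFERENCE = "reference"    # slowly changing master data (assets, sites)
    LOGS = "logs"              # device and service logs


# The team's storage agreement, written down in one place. Adding a data type
# forces an explicit decision here instead of an ad-hoc choice in some handler.
STORAGE_POLICY: dict[str, Store] = {
    "vibration_sample": Store.TIMESERIES,
    "temperature_sample": Store.TIMESERIES,
    "asset_metadata": Store.REFERENCE,
    "gateway_log": Store.LOGS,
}


class UnroutedDataTypeError(LookupError):
    """Raised when code tries to persist a type the policy does not cover."""


@dataclass(frozen=True)
class Record:
    data_type: str
    payload: dict


def route(record: Record) -> Store:
    """Return the agreed store for a record, or fail loudly if no rule exists."""
    try:
        return STORAGE_POLICY[record.data_type]
    except KeyError:
        raise UnroutedDataTypeError(
            f"No storage rule for {record.data_type!r}; "
            "update STORAGE_POLICY (and the matching ADR) first"
        ) from None


if __name__ == "__main__":
    print(route(Record("vibration_sample", {"rms": 0.42})).value)  # timeseries
```

Calling `route(Record("pressure_sample", {...}))` raises `UnroutedDataTypeError` until someone adds a rule, which turns a silent architectural drift into a visible review question.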

Repeated logic and growing maintenance burden

An AI assistant usually does not know that the logic it is about to write already exists elsewhere in the system, so it produces a fresh copy. The outcome is several independent versions of the same logic, and when a change becomes necessary, engineers waste time hunting down every instance.

GitClear’s analysis of 211 million changed lines of code from 2020 to 2024 showed the share of duplicated code rising from 8.3% to 12.3%. In 2024, for the first time, the volume of duplicated code exceeded the volume of refactored code. AI tools are likely to intensify this trend: they let a developer drop in a new code block with a single keystroke, but rarely point to an existing function elsewhere in the project that could be reused, partly because the model's context window is limited.

In IoT deployments, when the same logic, such as message parsing or connection checks, exists in several independent places, fixing a bug in one copy while missing the others can make field devices respond differently to exactly the same input. Resolving these inconsistencies takes more than editing code: it means coordinating firmware updates across thousands of devices.
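A deliberately small, hypothetical illustration of that drift: two copies of a parser for an assumed `device_id;value` telemetry line, where a fix for devices that send a decimal comma reached only one copy. The same input then succeeds at the gateway and fails in the cloud. The shared `parse_reading` function is the remedy the paragraph implies: one implementation that every path imports. The line format and function names are invented for this sketch.

```python
def parse_reading_gateway(line: str) -> float:
    # Copy 1 (gateway): patched to accept a decimal comma, e.g. "21,5".
    _device_id, value = line.split(";", 1)
    return float(value.strip().replace(",", "."))


def parse_reading_cloud(line: str) -> float:
    # Copy 2 (cloud): duplicated before the fix and never updated.
    _device_id, value = line.split(";", 1)
    return float(value.strip())


def parse_reading(line: str) -> float:
    """Single shared parser: fix it once and every caller gets the fix."""
    _device_id, value = line.split(";", 1)
    return float(value.strip().replace(",", "."))


if __name__ == "__main__":
    line = "sensor-17;21,5"
    print(parse_reading_gateway(line))  # 21.5
    try:
        parse_reading_cloud(line)
    except ValueError as exc:
        # float() rejects the comma, so this copy behaves differently.
        print(f"cloud copy rejects the same input: {exc}")
    print(parse_reading(line))  # 21.5
```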

Overlooking hardware limitations

IoT devices do not enjoy the virtually unlimited resources of cloud infrastructure. A gateway has a fixed amount of memory, limited network bandwidth, and a finite power budget. An AI assistant can take these constraints into account, but only if the developer states them explicitly.

When that does not happen, the assistant generates code suited to the environment it was predominantly trained on: cloud and server platforms where memory is plentiful and connectivity is reliable. The outcome is predictable: retry loops with no cap on attempts or total time, heavyweight text formats instead of compact binary encodings, and code that compiles cleanly yet ignores the hardware realities of a particular board.
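A minimal sketch of the bounded alternative to an open-ended retry loop, assuming a generic `operation` callable that stands in for, say, publishing a batch of telemetry. Both the number of attempts and the total time are capped, and waits grow exponentially with random jitter so that many devices recovering from the same outage do not retry in lockstep. The default limits are placeholders, not recommendations; on real hardware they should come from the device's power and connectivity budget. `sleep` and `clock` are injectable so the policy can be tested without actually waiting.

```python
import random
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


def retry_bounded(
    operation: Callable[[], T],
    max_attempts: int = 5,
    base_delay_s: float = 0.5,
    max_delay_s: float = 8.0,
    deadline_s: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
    clock: Callable[[], float] = time.monotonic,
) -> T:
    """Call `operation` until it succeeds, within explicit hard limits.

    Gives up after `max_attempts` calls or once `deadline_s` seconds have
    elapsed, whichever comes first, and re-raises the last error.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    start = clock()
    last_error: Optional[Exception] = None
    for attempt in range(max_attempts):
        try:
            return operation()
        except Exception as exc:  # in real code, catch only transient I/O errors
            last_error = exc
        if attempt == max_attempts - 1:
            break  # attempt budget exhausted
        remaining = deadline_s - (clock() - start)
        if remaining <= 0:
            break  # time budget exhausted
        # Exponential backoff with full jitter; each wait is capped at
        # max_delay_s and never runs past the overall deadline.
        delay = random.uniform(0, min(max_delay_s, base_delay_s * 2 ** attempt))
        sleep(min(delay, remaining))
    raise last_error
```

A call might look like `retry_bounded(lambda: client.publish(batch), max_attempts=3, deadline_s=10.0)`, where `client.publish` is a hypothetical stand-in for the project's own transport call.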

A solution that passes every test inside an emulator may falter the moment it runs on a real device with constrained resources.
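One inexpensive safeguard is to turn the target hardware's limits into assertions that run with every build, so an emulator-friendly change fails in CI instead of on a board. The sketch below assumes a hypothetical 51-byte payload budget and a hypothetical four-field reading; it compares a JSON encoding with a fixed binary layout built with Python's standard `struct` module. The numbers are illustrative: the real budget has to come from the radio, protocol, or firmware buffer in use.

```python
import json
import struct

# Assumed maximum payload per message, in bytes. Hypothetical value:
# replace it with the limit of the actual link or firmware buffer.
MAX_PAYLOAD_BYTES = 51

# Fixed little-endian layout: uint32 device id, uint32 Unix timestamp,
# float32 temperature (deg C), float32 vibration RMS -> 16 bytes, no padding.
READING = struct.Struct("<IIff")


def encode_json(device_id: int, ts: int, temp_c: float, vib_rms: float) -> bytes:
    """Self-describing text encoding: convenient, but every key name is sent."""
    return json.dumps(
        {"device_id": device_id, "timestamp": ts,
         "temperature_c": temp_c, "vibration_rms": vib_rms}
    ).encode("utf-8")


def encode_binary(device_id: int, ts: int, temp_c: float, vib_rms: float) -> bytes:
    """Compact binary encoding; both ends must agree on READING's layout."""
    return READING.pack(device_id, ts, temp_c, vib_rms)


def decode_binary(frame: bytes) -> tuple:
    return READING.unpack(frame)


def fits_budget(payload: bytes) -> bool:
    return len(payload) <= MAX_PAYLOAD_BYTES


def test_reading_fits_device_payload_budget() -> None:
    # pytest-style test: fails the build if the wire format outgrows the budget.
    sample = (1017, 1_717_500_000, 21.5, 0.42)
    frame = encode_binary(*sample)
    assert fits_budget(frame)
    # float32 loses precision, so compare only fields that round-trip exactly.
    assert decode_binary(frame)[:3] == (1017, 1_717_500_000, 21.5)


if __name__ == "__main__":
    sample = (1017, 1_717_500_000, 21.5, 0.42)
    print(len(encode_json(*sample)), len(encode_binary(*sample)))  # 90 16
    test_reading_fits_device_payload_budget()
```

With this sample, the JSON message is 90 bytes and the binary frame is 16, so only the binary one fits the assumed budget. Text formats remain easier to inspect and evolve; the point is that the constraint becomes an explicit, tested number instead of an unstated assumption.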

How to prevent AI from generating technical debt in your project

Bringing AI into IIoT development demands even stricter engineering discipline than working without it. Below are four practices that help my team keep code quality in check.

Mandatory human code review

This may sound like common sense, yet when using AI assistants there is a real temptation to accept generated code at face value without deep scrutiny — especially since over half