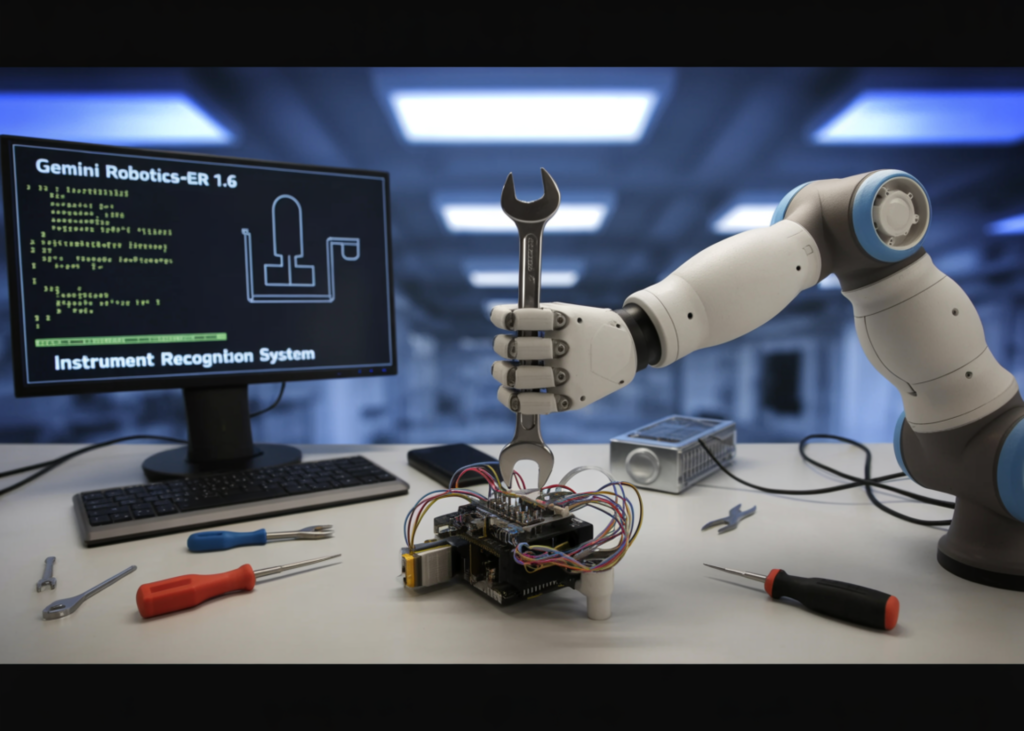

Google DeepMind's research team has released Gemini Robotics-ER 1.6, a major upgrade to its embodied reasoning model, designed to serve as the 'cognitive brain' of robots operating in real-world environments. The model focuses on reasoning capabilities critical for robotics, including visual and spatial understanding, task planning, and success detection. It acts as the high-level reasoning model for a robot and can execute tasks by natively calling tools such as Google Search, vision-language-action models (VLAs), or any other third-party user-defined functions.

Here is the key architectural idea to understand: Google DeepMind takes a dual-model approach to robotics AI. Gemini Robotics 1.5 is the vision-language-action (VLA) model. It processes visual inputs and user prompts and directly translates them into physical motor commands. Gemini Robotics-ER, on the other hand, is the embodied reasoning model. It focuses on understanding physical spaces, planning, and making logical decisions, but does not directly control robot limbs. Instead, it provides high-level insights that help the VLA model decide what to do next. Think of it as the difference between a strategist and an executor: Gemini Robotics-ER 1.6 is the strategist.
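To make the strategist/executor split concrete, here is a minimal Python sketch of an orchestration layer. It assumes the reasoning model emits its decisions as function calls, each with a tool name and keyword arguments. `ToolCall`, `ToolRegistry`, `run_vla`, and `web_search` are hypothetical names for illustration, not part of any Google API. The stubs only return strings where a real system would call a VLA or a search backend.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List


@dataclass
class ToolCall:
    """A function call emitted by the reasoning (ER) model. Hypothetical shape."""
    name: str
    args: Dict[str, Any] = field(default_factory=dict)


class ToolRegistry:
    """Maps tool names to Python callables that the orchestrator can invoke."""

    def __init__(self) -> None:
        self._tools: Dict[str, Callable[..., Any]] = {}

    def register(self, name: str, fn: Callable[..., Any]) -> None:
        self._tools[name] = fn

    def dispatch(self, call: ToolCall) -> Any:
        if call.name not in self._tools:
            raise KeyError(f"unknown tool: {call.name}")
        return self._tools[call.name](**call.args)


# Hypothetical stand-ins for the "executor" (a VLA) and a search tool.
def run_vla(instruction: str) -> str:
    # A real VLA would turn this instruction into motor commands.
    return f"executed: {instruction}"


def web_search(query: str) -> str:
    # A real tool would query a search backend.
    return f"results for: {query}"


def execute_plan(plan: List[ToolCall], registry: ToolRegistry) -> List[Any]:
    """The strategist decides what to do; each step is handed to a tool."""
    return [registry.dispatch(step) for step in plan]
```

For example, registering `run_vla` under `"vla"` and `web_search` under `"search"`, then executing a two-step plan, returns one result per step in order. Keeping the registry separate from the planner means new tools (a different VLA, a custom inspection routine) can be added without changing how plans are executed.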

What’s New in Gemini Robotics-ER 1.6

Gemini Robotics-ER 1.6 shows significant improvement over both Gemini Robotics-ER 1.5 and Gemini 3.0 Flash, particularly in spatial and physical reasoning capabilities such as pointing, counting, and success detection. But the key addition is a capability that did not exist in prior versions at all: instrument reading.

Pointing as a Basis for Spatial Reasoning

Pointing, the model's ability to identify precise pixel-level locations in an image, is far more powerful than it sounds. Points can be used to express spatial reasoning (precise object detection and counting), relational logic (making comparisons such as identifying the smallest item in a set, or defining from-to relationships like 'move X to location Y'), motion reasoning (mapping trajectories and identifying optimal grasp points), and constraint compliance (reasoning through complex prompts like "point to every object small enough to fit inside the blue cup").
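A downstream program has to turn those points into pixels before it can act on them. The sketch below assumes the model returns a JSON list of items shaped like `{"point": [y, x], "label": "..."}` with coordinates normalized to the 0-1000 range; treat that format as an assumption and check it against the current API documentation. `parse_points` and `from_to_vector` are hypothetical helpers, the second showing how a 'move X to location Y' relation reduces to a pixel displacement.

```python
import json
from typing import Dict, List, Tuple


def parse_points(raw: str, width: int, height: int) -> Dict[str, List[Tuple[int, int]]]:
    """Convert model points into pixel (x, y) coordinates grouped by label.

    Assumes each item looks like {"point": [y, x], "label": "..."} with
    coordinates normalized to the 0-1000 range (an assumed format).
    """
    grouped: Dict[str, List[Tuple[int, int]]] = {}
    for item in json.loads(raw):
        y_norm, x_norm = item["point"]
        # Multiply before dividing to keep the arithmetic exact for round numbers.
        x_px = round(x_norm * width / 1000)
        y_px = round(y_norm * height / 1000)
        grouped.setdefault(item["label"], []).append((x_px, y_px))
    return grouped


def from_to_vector(src: Tuple[int, int], dst: Tuple[int, int]) -> Tuple[int, int]:
    """Pixel displacement for a 'move X to location Y' instruction."""
    return (dst[0] - src[0], dst[1] - src[1])
```

On a 640x480 image, a cup at normalized `[500, 250]` lands at pixel `(160, 240)`. A tray at `[100, 900]` lands at `(576, 48)`, so the from-to vector is `(416, -192)`.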

In internal benchmarks, Gemini Robotics-ER 1.6 demonstrates a clear advantage over its predecessor. It correctly identifies the number of hammers, scissors, paintbrushes, pliers, and garden tools in a scene, and does not point to requested items that are absent from the image, such as a wheelbarrow and a Ryobi drill. By comparison, Gemini Robotics-ER 1.5 gets the number of hammers and paintbrushes wrong, misses the scissors altogether, and hallucinates a wheelbarrow. For AI robotics professionals this matters, because hallucinated object detections in robotic pipelines can cause cascading downstream failures: a robot that 'sees' an object that isn't there will try to interact with empty space.
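One simple defensive pattern on the consuming side is to count points per requested label, record absent items as an explicit zero, and only pass items that were actually located on to motion planning. This is a minimal sketch assuming the point labels have already been parsed into a list of strings; `count_requested` and `actionable` are hypothetical helpers. Note that such a gate cannot catch a hallucinated point by itself. It only ensures that nothing downstream acts on an item the model did not locate.

```python
from collections import Counter
from typing import Dict, Iterable, List


def count_requested(requested: Iterable[str], labels: List[str]) -> Dict[str, int]:
    """Count points per requested label; absent items get an explicit 0.

    Labels the model returned that were never requested are ignored.
    """
    counts = Counter(labels)
    return {name: counts.get(name, 0) for name in requested}


def actionable(counts: Dict[str, int]) -> List[str]:
    """Only items the model actually located should reach the motion planner."""
    return [name for name, n in counts.items() if n > 0]
```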

Success Detection and Multi-View Reasoning

In robotics, knowing when a task is finished is just as important as knowing how to start it. Success detection serves as a critical decision-making engine that lets an agent intelligently choose between retrying a failed attempt and progressing to the next stage of a plan.
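The retry-or-advance decision can be sketched as a small control loop. Here `attempt` stands in for whatever executes a step (for example a VLA call) and `detect` stands in for a success-detection query to the reasoning model. Both are hypothetical callables, and `max_retries` is an illustrative policy knob rather than anything the model defines.

```python
from enum import Enum
from typing import Callable, List


class Verdict(Enum):
    SUCCESS = "success"
    FAILURE = "failure"


def run_plan(
    steps: List[str],
    attempt: Callable[[str], None],
    detect: Callable[[str], Verdict],
    max_retries: int = 2,
) -> List[str]:
    """Execute steps in order, using a success detector to retry or advance.

    Returns a log of events. Raises RuntimeError if a step keeps failing.
    """
    log: List[str] = []
    for step in steps:
        for attempt_no in range(1, max_retries + 2):  # first try plus retries
            attempt(step)
            if detect(step) is Verdict.SUCCESS:
                log.append(f"{step}: done after {attempt_no} attempt(s)")
                break  # advance to the next step of the plan
            log.append(f"{step}: attempt {attempt_no} failed")
        else:
            # The inner loop never hit `break`: every attempt failed.
            raise RuntimeError(f"giving up on {step!r}")
    return log
```

Raising after the retry budget is exhausted is one design choice. A real system might instead escalate to a human operator or ask the reasoning model to re-plan.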

This is a harder problem than it seems. Most modern robotics setups include multiple camera views, such as an overhead feed and a wrist-mounted feed. This means a system needs to understand how different viewpoints combine to form a coherent picture, both at each moment and across time. Gemini Robotics-ER 1.6 advances multi-view reasoning, enabling it to better fuse information from multiple camera streams, even in occluded or dynamically changing environments.
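The model performs this fusion internally. The sketch below is only a hand-written illustration of the underlying idea, not how Gemini Robotics-ER works. It assumes each camera yields its own success judgment with a confidence weight, ignores views that cannot see the outcome, and takes a confidence-weighted vote. `ViewReport`, `fuse_views`, and the 0.5 threshold are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ViewReport:
    camera: str                 # e.g. "overhead", "wrist"
    success: Optional[bool]     # None means the view is occluded or uninformative
    confidence: float = 1.0     # weight in [0, 1]


def fuse_views(reports: List[ViewReport], threshold: float = 0.5) -> Optional[bool]:
    """Confidence-weighted vote across camera views.

    Occluded views (success is None) are ignored. Returns None when no view
    can see the outcome, so the caller can move a camera or look again.
    """
    visible = [r for r in reports if r.success is not None and r.confidence > 0]
    if not visible:
        return None
    total = sum(r.confidence for r in visible)
    score = sum(r.confidence for r in visible if r.success) / total
    return score >= threshold
```

If the overhead camera is occluded but the wrist camera confidently sees the object in the gripper, the fused verdict is success. If no camera can see the outcome, the function returns `None` rather than guessing.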

Instrument Reading: A Real-World Breakthrough

The genuinely new capability in Gemini Robotics-ER 1.6 is instrument reading: the ability to interpret analog gauges, pressure meters, sight glasses, and digital readouts in industrial settings. This task stems from facility inspection needs, a critical focus area for Boston Dynamics. Spot, a Boston Dynamics robot, can visit instruments throughout a facility and capture images of them for Gemini Robotics-ER 1.6 to interpret.

Instrument reading requires complex visual reasoning. The model must precisely perceive a variety of inputs, including needles, liquid levels, container boundaries, and tick marks, and understand how they all relate to one another. For sight glasses, this involves estimating how much liquid fills the glass while accounting for distortion from the camera perspective. Gauges often carry text describing the unit, which must be read and interpreted, and some have multiple needles for different decimal places that need to be combined.
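Once the visual elements have been located, the final arithmetic is simple. The sketch below assumes a gauge with a linear scale, whose end-point angles and values have already been read from the tick marks and printed labels, plus a multi-needle instrument where each needle reports one digit. `needle_to_value` and `combine_dials` are hypothetical helpers. Real gauges can be non-linear, and this ignores perspective correction entirely.

```python
from typing import Sequence


def needle_to_value(angle_deg: float, angle_min: float, angle_max: float,
                    value_min: float, value_max: float) -> float:
    """Linearly map a needle angle onto the gauge scale.

    Assumes a linear scale whose endpoints (angle and value) have already
    been read from the tick marks and printed labels.
    """
    fraction = (angle_deg - angle_min) / (angle_max - angle_min)
    return value_min + fraction * (value_max - value_min)


def combine_dials(digits: Sequence[int], decimals: int = 0) -> float:
    """Combine multi-needle readings, most significant digit first.

    `decimals` says how many of the trailing needles sit after the decimal
    point, e.g. [1, 2, 3] with decimals=1 -> 12.3.
    """
    value = 0
    for d in digits:
        if not 0 <= d <= 9:
            raise ValueError(f"dial digit out of range: {d}")
        value = value * 10 + d
    return value / 10 ** decimals
```

For a 0-300 psi gauge sweeping 270 degrees, a needle at 135 degrees reads 150 psi. Three needles pointing at 4, 0, and 7 combine to 407.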

Gemini Robotics-ER 1.6 achieves its instrument readings using agentic vision, a capability that combines visual reasoning with code execution, introduced with Gemini 3.0 Flash and extended in Gemini Robotics-ER 1.6. The model works through intermediate steps. It first zooms into an image to get a better read of small details in a gauge. It then uses pointing and code execution to estimate proportions and intervals, and finally applies world knowledge to interpret what the reading means.
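The zoom-then-measure pattern can be illustrated with the kind of helper code such a step might involve. This is a minimal sketch, not the code the model actually generates. It assumes the model has pointed at a gauge (to choose a crop), and then at the top, bottom, and liquid surface of a sight glass. `zoom_box`, `crop_to_full`, and `fill_fraction` are hypothetical names, and the fill estimate ignores the perspective distortion the article mentions.

```python
from typing import Tuple

Box = Tuple[int, int, int, int]  # (left, top, right, bottom) in pixels


def zoom_box(center: Tuple[int, int], half_size: int, width: int, height: int) -> Box:
    """Square crop around a pointed location, clamped to the image bounds."""
    cx, cy = center
    return (max(cx - half_size, 0), max(cy - half_size, 0),
            min(cx + half_size, width), min(cy + half_size, height))


def crop_to_full(point: Tuple[float, float], box: Box, scale: float = 1.0) -> Tuple[float, float]:
    """Map a point found in the zoomed crop back to full-image pixels.

    `scale` is the factor by which the crop was enlarged before analysis.
    """
    return (box[0] + point[0] / scale, box[1] + point[1] / scale)


def fill_fraction(top_y: float, bottom_y: float, surface_y: float) -> float:
    """Fraction of a sight glass filled with liquid.

    Image y grows downward, so the bottom of the glass has the largest y.
    The result is clamped to [0, 1] to absorb small pointing errors.
    """
    span = bottom_y - top_y
    if span <= 0:
        raise ValueError("bottom must be below top")
    return min(max((bottom_y - surface_y) / span, 0.0), 1.0)
```

With the glass spanning y = 100 to y = 300 and the liquid surface at y = 250, the glass is a quarter full.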

Gemini Robotics-ER 1.5 achieves a 23% success rate on instrument reading, Gemini 3.0 Flash reaches 67%, Gemini Robotics-ER 1.6 reaches 86%, and Gemini Robotics-ER 1.6 with agentic vision hits 93%. One important caveat: Gemini Robotics-ER 1.5 was evaluated without agentic vision because it does not support that capability. The other three models were evaluated with agentic vision enabled for the instrument reading task. That makes the 23% baseline less a performance gap and more a fundamental architectural difference. For AI developers comparing model generations, this distinction matters, because you are not comparing apples to apples across the full benchmark column.

Key Takeaways

- Gemini Robotics-ER 1.6 is a reasoning model, not an action model: It acts as the high-level 'brain' of a robot, handling spatial understanding, task planning, and success detection, while the separate VLA model (Gemini Robotics 1.5) handles the actual physical motor commands.

- Pointing is more powerful than it seems: Gemini Robotics-ER 1.6's pointing capability goes far beyond simple object detection. It enables relational logic, motion trajectory mapping, grasp point identification, and constraint-based reasoning, all of which are foundational to reliable robotic manipulation.

- Instrument reading is the biggest new capability: Built in collaboration with Boston Dynamics for industrial facility inspection with its Spot robot, Gemini Robotics-ER 1.6 can now read analog gauges, pressure meters, and sight glasses with a 93% success rate using agentic vision, up from just 23% for Gemini Robotics-ER 1.5, which lacked the capability entirely.

- Success detection is what enables true autonomy: Knowing when a task is actually complete, across multiple camera views and in occluded or dynamic environments, is what allows a robot to decide whether to retry or move on to the next step without human intervention.

Check out the Technical details and Model Information.
