Preparation of HT-SELEX data of TF-binding DNA for model training

The HT-SELEX datasets of ten TFs (Ar, Atf4, Dbp, Egr3, Foxg1, Klf12, Mlx, Nr2e1, Sox10 and Srebf1) used in this study were downloaded from the European Nucleotide Archive database (accession number: ERP001824)35. The FASTQ files were further processed by removing the variable-length and redundant sequences, to obtain the cleaned sequences used for further continual pretraining of NA-LLM. The lengths and numbers of cleaned unique DNA sequences in each TF dataset are summarized in Supplementary Fig. 1.

Evaluating the binding specificity of TF-binding DNA sequences

The binding specificity score of a TF to a DNA sequence is calculated using the 8mer_sum algorithm as described previously36:

$${S}_{\mathrm{total}}=\mathop{\sum }\limits_{i=1}^{n}{S}_{8\mathrm{mer}}^{i}$$

(1)

where Stotal is the binding specificity score of the full-length DNA sequence, \({S}_{8\mathrm{mer}}^{i}\) is the protein-binding microarray fluorescence intensity of the ith 8-mer motif, which is extracted using a sliding window of length 8 and stride 1, and n represents the total number of 8-mer motifs in the full-length DNA sequence.
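The sliding-window sum in equation (1) can be sketched in a few lines of Python. This is a minimal illustration, not the published 8mer_sum implementation: the function name `eight_mer_sum`, the lookup table `toy_intensity` and its values are hypothetical, and the sketch assumes that 8-mers missing from the table contribute zero and that reverse complements are not handled separately.

```python
def eight_mer_sum(sequence, intensity, k=8):
    """Sum the table score of every k-mer taken with a sliding window of stride 1."""
    kmers = [sequence[i:i + k] for i in range(len(sequence) - k + 1)]
    # Assumption: k-mers absent from the lookup table contribute 0.
    return sum(intensity.get(kmer, 0.0) for kmer in kmers)


# Hypothetical intensities for illustration only (not real microarray data).
toy_intensity = {"ACGTACGT": 1.5, "CGTACGTA": 2.0, "GTACGTAC": 0.5}

# A 10-nt sequence contains n = 10 - 8 + 1 = 3 overlapping 8-mers.
score = eight_mer_sum("ACGTACGTAC", toy_intensity)
```

A sequence of length L yields n = L - 7 motifs, so longer sequences accumulate more terms in the sum.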

The relative binding specificity is normalized to a range from 0 to 100 using min–max normalization of the binding specificity score:

$${S}_{\mathrm{norm}}=\frac{S-{S}_{\min }}{{S}_{\max }-{S}_{\min }}\times 100$$

(2)

where \({S}_{\min }\) and \({S}_{\max }\) represent the minimum and maximum binding specificity scores in the dataset, respectively. This scaling transforms the raw scores to the [0, 100] range.
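As a worked example of equation (2), the following sketch rescales a small list of raw scores. The function name `min_max_normalize` and the handling of the degenerate case (all scores equal) are assumptions made for illustration; the source does not describe that case.

```python
def min_max_normalize(scores):
    """Rescale raw scores to the [0, 100] range with min-max normalization."""
    s_min, s_max = min(scores), max(scores)
    if s_max == s_min:
        # Assumption: when all scores are identical, map every score to 0.
        return [0.0 for _ in scores]
    return [(s - s_min) / (s_max - s_min) * 100 for s in scores]


normalized = min_max_normalize([2.0, 4.0, 6.0])
```

Here the minimum maps to 0, the maximum to 100 and the midpoint to 50.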

InstructNA training and inference using DNABERT (3-mers)

Tokenization in DNABERT (3-mers)

In the field of language modeling, tokens represent the fundamental semantic units that a model employs to interpret linguistic data. They can represent discrete units such as words or even more granular semantic components such as individual characters. The act of tokenization involves transforming these linguistic units into unique integer identifiers, each corresponding to an entry within a reference lookup table. Subsequently, these integers are translated by embedding layers into vectorial representations, which the model then processes in a comprehensive, end-to-end manner. For the specific application within the InstructNA framework using DNABERT (3-mers), DNA sequences were tokenized using a trinucleotide (3-mers) resolution strategy. This approach results in a combinatorial diversity of 64 (\(4^{3}\)) possible distinct 3-mers. Additionally, special utility tokens such as [CLS], [UNK], [SEP], [MASK] and [PAD] were integrated into the model for specific functional roles within the modeling process.
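The tokenization step can be illustrated with a short sketch. It assumes overlapping 3-mers taken with a stride of 1 and a [CLS] … [SEP] wrapper; the id order in `VOCAB` is illustrative and is not the actual DNABERT vocabulary file, and the helper names `tokenize_3mers` and `encode` are hypothetical.

```python
from itertools import product

SPECIAL_TOKENS = ["[PAD]", "[UNK]", "[CLS]", "[SEP]", "[MASK]"]
# All 4^3 = 64 trinucleotides over the DNA alphabet.
KMERS = ["".join(p) for p in product("ACGT", repeat=3)]
# Assumption: this id order is illustrative, not the real DNABERT vocabulary.
VOCAB = {tok: idx for idx, tok in enumerate(SPECIAL_TOKENS + KMERS)}


def tokenize_3mers(sequence):
    """Split a DNA string into overlapping 3-mers and add [CLS]/[SEP]."""
    kmers = [sequence[i:i + 3] for i in range(len(sequence) - 2)]
    return ["[CLS]"] + kmers + ["[SEP]"]


def encode(tokens):
    """Map tokens to integer ids; unknown 3-mers fall back to [UNK]."""
    return [VOCAB.get(tok, VOCAB["[UNK]"]) for tok in tokens]


tokens = tokenize_3mers("ACGTN")
ids = encode(tokens)
```

The 3-mer `GTN` contains a non-ACGT character, so it is mapped to the [UNK] id in this sketch.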

Training

The training process of InstructNA using DNABERT (3-mers) consists of two stages. In the first stage, we performed continual pretraining on a pretrained DNABERT DNA-LLM using HT-SELEX data, with the training objective aligned with that of the original DNABERT pretraining (Supplementary Fig. 18). Specifically, 15% of the tokens were masked, and the model was trained to predict the masked tokens. To facilitate BO, a dimensionality reduction layer was incorporated during training, reducing the dimensionality of each token embedding to eight (Supplementary Fig. 19). This process results in a domain-adapted FNA-LLM (encoder).

In the second stage, we froze the encoder and introduced a randomly initialized decoder composed of six-layer transformer blocks. We then performed a sequence reconstruction task, where HT-SELEX sequence data are fed into the model again. The encoder transformed these sequences into embeddings, which were subsequently passed to the decoder, enabling it to reconstruct the original sequence tokens. The objective of this training stage is to reconstruct the original input sequence from the embeddings. Specifically, the decoder outputs a vector of logits for the ith position token based on the corresponding embedding ei, and a softmax layer is applied to obtain the probability distribution over the vocabulary. The loss function for this stage is

$${L}_{\mathrm{recon}}=-\sum \log \,P({x}_{i}|{\mathbf{e}}_{i})$$

(3)

where P(xi|ei) represents the probability assigned to the ground-truth token xi given the embedding ei after the softmax transformation.
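The reconstruction loss in equation (3) is a summed token-level negative log-likelihood. The sketch below computes it from per-position logits in plain Python; the function names and the toy logits are hypothetical, and a real training loop would use a deep-learning framework rather than this hand-written softmax.

```python
import math


def softmax(logits):
    """Numerically stable softmax over one position's logits."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def reconstruction_loss(logits_per_position, target_ids):
    """Sum of -log P(x_i | e_i) over all positions."""
    loss = 0.0
    for logits, target in zip(logits_per_position, target_ids):
        probs = softmax(logits)
        loss -= math.log(probs[target])
    return loss


# Toy case: two positions, vocabulary of 4, uniform logits.
toy_logits = [[0.0, 0.0, 0.0, 0.0], [0.0, 0.0, 0.0, 0.0]]
loss = reconstruction_loss(toy_logits, target_ids=[1, 3])
```

With uniform logits every token has probability 1/4, so each position contributes log 4 to the loss.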

Inference

Using embeddings derived from clustering centers or BO, the decoder processes these embeddings to produce an initial generated sequence. Next, the 15% of tokens with the lowest probabilities in this sequence were replaced with a special token, [MASK], and the modified sequence was fed back into the encoder to predict and refine the masked-out portions. This iterative refinement process is repeated multiple times, progressively enhancing the accuracy of the generated sequence.
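The masking step of this refinement loop can be sketched as follows. The function name `mask_least_confident` is hypothetical, and the rounding rule (round up, mask at least one token) is an assumption, since the source states only the 15% fraction. The encoder call that would fill in the masked positions is omitted.

```python
import math


def mask_least_confident(tokens, probs, fraction=0.15, mask_token="[MASK]"):
    """Replace the lowest-probability tokens with the mask token."""
    # Assumption: round up so that at least one token is masked.
    n_mask = max(1, math.ceil(fraction * len(tokens)))
    lowest = sorted(range(len(tokens)), key=lambda i: probs[i])[:n_mask]
    masked = list(tokens)
    for i in lowest:
        masked[i] = mask_token
    return masked


toy_tokens = ["ACG", "CGT", "GTA", "TAC", "ACG", "CGG", "GGT", "GTT", "TTA", "TAG"]
toy_probs = [0.9, 0.8, 0.2, 0.95, 0.7, 0.1, 0.85, 0.9, 0.6, 0.99]
masked = mask_least_confident(toy_tokens, toy_probs)
```

For ten tokens, two positions are masked here: the ones with probabilities 0.1 and 0.2.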

HC-HEBO in InstructNA

The HC-HEBO algorithm combines HC with HEBO to effectively optimize FNAs.

(1) Initial solution construction. Sequences from various sources are evaluated and their embeddings are denoted as E0 = {e0, e1, e2,…,en}. E0 is divided into n groups, and each ei ∈ E0 (0 ≤ i ≤ n) represents the initial solution for its respective group.

(2) Search space definition. For the n groups, define the initial search space as a neighborhood centered around E0, with a search radius ΔR0 (default ΔR0 = 5). The sensitivity experiment of the search radius and minimum threshold is shown in Supplementary Fig. 20. The n initial search spaces S0 = {s0, s1, s2, …, sn} are defined as

$${E}_{0}\,(\mathrm{initial}\,\mathrm{solution})$$

(4)

$${S}_{0}=\{\mathbf{e}|\Vert \mathbf{e}-{E}_{0}\Vert \le \Delta {R}_{0}\}\,(\mathrm{initial}\,\mathrm{search}\,\mathrm{space}).$$

(5)

(3) Searching and generation. Use HEBO to search for the next embeddings in the search space, with the hyperparameters in HEBO kept as default. InstructNA generates and evaluates a total of n new sequences, with one new sequence generated per group.

(4) Greedy refinement. At each iteration, the solution (embedding) with the best evaluated performance within its group is selected as the candidate solution for the next optimization. The ith group solution obtained at the tth iteration is Ei,t:

$${\mathbf{E}}_{i,t}=\text{arg}\mathop{\max }\limits_{\mathbf{e}\in {G}_{t,i}}f(\mathbf{e})$$

(6)

where Gt,i represents the ith group of evaluated solutions at iteration t and f(e) represents the evaluated performance.

(5) Shrinking search radius. After each iteration, the search radius is reduced by half, but once it reaches a minimum threshold ΔRmin (default ΔRmin = 1.25), the search radius no longer shrinks and remains constant:

$${S}_{t+1}=\left\{\mathbf{e}|\Vert \mathbf{e}-{E}_{t}\Vert \le \frac{\Delta {R}_{t}}{2}\right\},\,\mathrm{if}\,\Delta {R}_{t} > \Delta {R}_{\min }$$

(7)

$${S}_{t+1}=\{\mathbf{e}|\Vert \mathbf{e}-{E}_{t}\Vert \le \Delta {R}_{\min }\},\,\mathrm{if}\,\Delta {R}_{t}\le \Delta {R}_{\min }.$$

(8)

(6) Obtain the best solution Et and the search space St of the tth iteration. Repeat steps 3–5 for each iteration.
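The six steps above can be sketched as a small loop. This is a simplified illustration under stated assumptions, not the InstructNA implementation: HEBO is replaced by uniform random sampling inside the current search ball, the decoder and the experimental evaluation are collapsed into a toy `objective` on the embedding itself, and all function names are hypothetical. Only the default radius (5), the minimum threshold (1.25), the halving rule and the per-group greedy selection are taken from the text.

```python
import math
import random


def sample_in_ball(center, radius, rng):
    """Draw a point uniformly from the L2 ball of given radius around center."""
    direction = [rng.gauss(0.0, 1.0) for _ in center]
    norm = math.sqrt(sum(d * d for d in direction)) or 1.0
    scale = radius * rng.random() ** (1.0 / len(center)) / norm
    return [c + scale * d for c, d in zip(center, direction)]


def next_radius(radius, r_min=1.25):
    """Halve the radius while it exceeds the threshold, otherwise hold at r_min."""
    return radius / 2 if radius > r_min else r_min


def hc_search(initial_solutions, objective, n_iter=4, radius=5.0, r_min=1.25, seed=0):
    rng = random.Random(seed)
    # Steps 1-2: one initial solution (and one search ball) per group.
    best = [(list(e), objective(e)) for e in initial_solutions]
    radii = []
    for _ in range(n_iter):
        radii.append(radius)
        for g, (center, score) in enumerate(best):
            # Step 3: stand-in for HEBO, one random proposal per group.
            candidate = sample_in_ball(center, radius, rng)
            cand_score = objective(candidate)
            # Step 4: greedy refinement keeps the best solution seen in the group.
            if cand_score > score:
                best[g] = (candidate, cand_score)
        # Step 5: shrink the search radius down to the minimum threshold.
        radius = next_radius(radius, r_min)
    return best, radii


def toy_objective(e):
    """Hypothetical score: higher is better, maximum at the origin."""
    return -sum(x * x for x in e)


initial = [[3.0, 3.0], [-4.0, 1.0], [0.5, -2.0]]
best, radii = hc_search(initial, toy_objective)
```

With the defaults, the radius schedule is 5, 2.5, 1.25, 1.25, and each group's score can only stay the same or improve across iterations.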

Assessing correlation between embeddings and sequences

We randomly sampled 1,000 unique sequences from the HT-SELEX dataset. From these sequences, we generated all possible pairs (\({C}_{1,000}^{2}\)), resulting in a total of 499,500 sequence pairs. We explored the relationship between sequences and embeddings by analyzing the cosine similarity and pairwise alignment similarity of these sequence pairs.

Cosine similarity calculation

For InstructNA and DNABERT, we calculated the cosine similarity of token embeddings at corresponding positions for each pair of sequences, and then averaged the cosine similarities across all tokens to obtain the final cosine similarity score representing the full embedding similarity of the sequence pair.

$${\mathrm{Similarity}}_{\mathrm{sequence}\,\mathrm{pair}}({S}_{1},{S}_{2})=\frac{1}{N}\displaystyle \mathop{\sum }\limits_{i=1}^{N}\frac{{\mathbf{e}}_{i}^{1}\cdot {\mathbf{e}}_{i}^{2}}{\Vert {\mathbf{e}}_{i}^{1}\Vert \Vert {\mathbf{e}}_{i}^{2}\Vert }$$

(9)

where S1 and S2 represent the sequences in the sequence pair, \({\mathbf{e}}_{i}^{1}\) and \({\mathbf{e}}_{i}^{2}\) represent the embedding of the ith token in S1 and S2, N is the number of tokens and · denotes the vector inner product.

For RaptGen, we compute the cosine similarity between the 2D embeddings of sequence pairs directly.
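A minimal sketch of the token-averaged cosine similarity in equation (9) is given below, together with a check of the pair count. The function names and toy embeddings are hypothetical; the sketch assumes that both sequences have the same number of tokens and that no token embedding is the zero vector.

```python
import math


def cosine(u, v):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def sequence_pair_similarity(emb1, emb2):
    """Average the per-position cosine similarity over all N tokens."""
    if len(emb1) != len(emb2):
        raise ValueError("both sequences must have the same number of tokens")
    return sum(cosine(a, b) for a, b in zip(emb1, emb2)) / len(emb1)


# Toy embeddings: two tokens per sequence, two dimensions per token.
emb_a = [[1.0, 0.0], [0.0, 2.0]]
emb_b = [[3.0, 0.0], [4.0, 0.0]]
sim = sequence_pair_similarity(emb_a, emb_b)

# Number of unordered pairs among 1,000 sequences.
n_pairs = math.comb(1000, 2)
```

The first token pair is parallel (cosine 1) and the second is orthogonal (cosine 0), so the averaged similarity is 0.5.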

Sequence similarity

Sequence similarity is calculated using the EMBOSS Needle tool48 that creates an optimal global alignment of two sequences using the Needleman–Wunsch algorithm with default parameters: matrix = DNAfull, gap open penalty = 10, gap extend penalty = 0.5, end gap open penalty = 10 and end gap extend penalty = 0.5. For the DNA sequences binding to TFs, the sequence similarity is calculated on the 14-nucleotide or 20-nucleotide random DNA (Supplementary Fig. 1). For the DNA aptamers binding to LOX1 or CXCL5, the sequence similarity is calculated on the 36-nucleotide random DNA (Supplementary Table 2).

Binary classification of binding specificity

For the cleaned unique sequences in each TF dataset as shown in Supplementary Fig. 1, we cluster them using cd-hit49 at an 80% sequence similarity cutoff18,50, obtain representative sequences from each cluster and randomly split the collected sequences into training (40%), validation (10%) and test (50%) sets. The numbers of sequences in training, validation and test sets are summarized in Supplementary Table 1. On the basis of the median binding specificity score in the training and validation sets, we label each sequence as 0 (low binding specificity) or 1 (high binding specificity) and train the model.
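The split and labeling procedure can be sketched as follows. The clustering with cd-hit is not reproduced; the input is assumed to be a list of (sequence, score) pairs for the cluster representatives. The function name `split_and_label`, the toy records and the tie rule (a score strictly above the median is labeled 1) are assumptions, since the source does not state how scores equal to the median are treated.

```python
import random
import statistics


def split_and_label(records, seed=0):
    """Randomly split into 40/10/50 and label by the train+validation median."""
    rng = random.Random(seed)
    shuffled = list(records)
    rng.shuffle(shuffled)
    n_train = len(shuffled) * 40 // 100
    n_val = len(shuffled) * 10 // 100
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]
    # The threshold uses training and validation scores only, not the test set.
    threshold = statistics.median(score for _, score in train + val)

    def label(split):
        # Assumption: scores strictly above the median are labeled 1.
        return [(seq, int(score > threshold)) for seq, score in split]

    return label(train), label(val), label(test), threshold


# Hypothetical records: 20 placeholder sequences with distinct scores 0..19.
toy_records = [("seq%d" % i, float(i)) for i in range(20)]
train, val, test, threshold = split_and_label(toy_records)
```

Computing the median without the test set keeps the labeling threshold independent of the data used for evaluation.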

In the classification pipeline, encoders pretrained by InstructNA and RaptGen remain frozen, with a trainable linear layer appended for binary classification. Model performance is assessed on the test set using the following metrics:

$$\mathrm{Precision}=\frac{\mathrm{TP}}{\mathrm{TP}+\mathrm{FP}}$$

(10)

$$\mathrm{Accuracy}=\frac{\mathrm{TP}+\mathrm{TN}}{\mathrm{TP}+\mathrm{TN}+\mathrm{FP}+\mathrm{FN}}$$

(11)

$$\mathrm{Recall}=\mathrm{TPR}=\frac{\mathrm{TP}}{\mathrm{TP}+\mathrm{FN}}$$

(12)

$${F}_{1}=2\times \frac{\mathrm{Precision}\times \mathrm{Recall}}{\mathrm{Precision}+\mathrm{Recall}}$$

(13)

$$\mathrm{FPR}=\frac{\mathrm{FP}}{\mathrm{FP}+\mathrm{TN}}$$

(14)

$$\mathrm{AUROC}={\int }_{0}^{1}\mathrm{TPR}(\mathrm{FPR})\,\mathrm{d}(\mathrm{FPR})$$

(15)

where TP, TN, FP and FN represent true positive, true negative, false positive and false negative, respectively, in the confusion matrix.
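Equations (10)–(15) can be checked on a small example. The sketch below is illustrative only: the function names and toy labels are hypothetical, undefined ratios are reported as 0 by assumption, and AUROC is computed through its rank interpretation (the probability that a randomly chosen positive is scored above a randomly chosen negative, with ties counted as one half), which equals the area under the TPR-versus-FPR curve.

```python
def confusion_counts(y_true, y_pred):
    """Return (TP, TN, FP, FN) for binary labels in {0, 1}."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    return tp, tn, fp, fn


def safe_div(num, den):
    # Assumption: undefined ratios are reported as 0.0.
    return num / den if den else 0.0


def classification_metrics(y_true, y_pred):
    tp, tn, fp, fn = confusion_counts(y_true, y_pred)
    precision = safe_div(tp, tp + fp)
    recall = safe_div(tp, tp + fn)
    return {
        "precision": precision,
        "accuracy": safe_div(tp + tn, tp + tn + fp + fn),
        "recall": recall,
        "f1": safe_div(2 * precision * recall, precision + recall),
        "fpr": safe_div(fp, fp + tn),
    }


def auroc(y_true, scores):
    """Fraction of positive-negative pairs ranked correctly (ties count 0.5)."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


y_true = [1, 1, 1, 0, 0, 0, 1, 0]
y_pred = [1, 1, 0, 0, 0, 1, 1, 0]
y_score = [0.9, 0.8, 0.4, 0.3, 0.2, 0.6, 0.7, 0.1]
metrics = classification_metrics(y_true, y_pred)
area = auroc(y_true, y_score)
```

In this toy case TP = 3, TN = 3, FP = 1 and FN = 1, so precision, accuracy, recall and F1 all equal 0.75 and FPR equals 0.25.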

KDE perturbation generation experiment

A perturbation generation experiment was performed separately on the ten HT-SELEX datasets of TF-binding DNA sequences. The embeddings of all DNA sequences from each HT-SELEX dataset were obtained using the InstructNA and RaptGen encoders. These embeddings were then used to train a KDE37 model to learn the probability density distribution of the embeddings. Two thousand new embeddings were sampled from the trained KDE model based on the learned probability density function. These new embeddings were input into the decoder for inference. Finally, InstructNA and RaptGen each generated 2,000 sequences for each HT-SELEX dataset. For the real sequences, we performed stratified sampling to select 2,000 sequences on the basis of sequence frequency from each HT-SELEX dataset. Then, k-mer frequency and G/C content of these sequences were calculated. The k-mer counts were obtained using sliding windows of lengths 3–6 with a stride of 1 for each sequence, and frequency was calculated on the basis of the k-mer counts. The G/C content was determined by calculating the proportion of G and C nucleotides in each sequence.
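The two summary statistics used to compare generated and real sequences can be sketched as follows. The function names are hypothetical, and pooling the k-mer counts across all sequences before normalizing is an assumption; the source states only that frequency is calculated from the k-mer counts.

```python
from collections import Counter


def kmer_frequencies(sequences, k):
    """Pooled k-mer frequencies from sliding windows of length k and stride 1."""
    counts = Counter()
    for seq in sequences:
        counts.update(seq[i:i + k] for i in range(len(seq) - k + 1))
    total = sum(counts.values())
    if total == 0:
        return {}
    return {kmer: c / total for kmer, c in counts.items()}


def gc_content(seq):
    """Proportion of G and C nucleotides in one sequence."""
    return sum(1 for base in seq if base in "GC") / len(seq)


toy_sequences = ["ACGT", "GGCC"]
freqs_by_k = {k: kmer_frequencies(toy_sequences, k) for k in range(3, 7)}
gc_values = [gc_content(s) for s in toy_sequences]
```

For the two 4-nt toy sequences there are four 3-mers in total, each with frequency 0.25, and no 5-mers or 6-mers.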

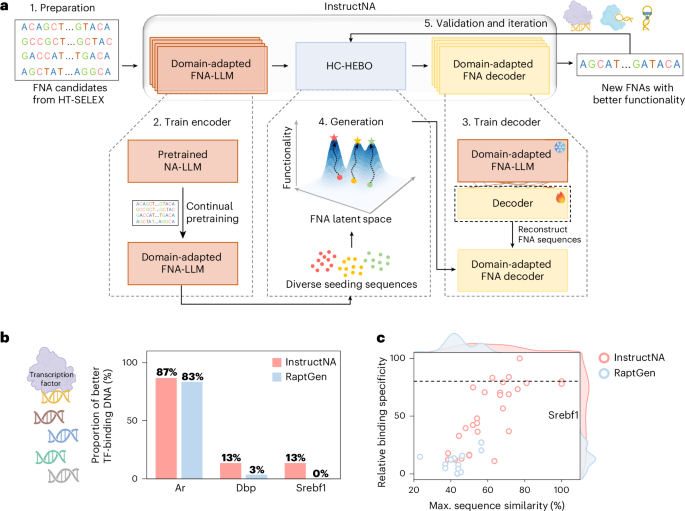

Pipeline of InstructNA to generate new DNA sequences for TF binding

To generate new DNA sequences that have high binding specificity to a TF, we trained InstructNA as stated above. During the generation and optimization by HC-HEBO, 30 starting seeds were collected from three sources, including ten top-frequency sequences in the original HT-SELEX data (S1), ten sequences decoded from each of the ten cluster centers of the entire HT-SELEX embeddings clustered by the Gaussian mixture model (GMM)51 (S2) and ten sequences with similar embeddings (calculated by the minimum Euclidean distance) to the DNAs with the highest binding specificity from S1 and S2 (S3). Notably, the number of seeding sequences can vary. The 30 candidates were then clustered into ten clusters using GMM, and the sequence with the highest binding specificity score in each group was selected to constitute the initial ten seeding sequences for HC-HEBO.

HT-SELEX for screening protein-binding DNA aptamers

The ssDNA library and primers shown in Supplementary Table 2 were purchased from Sangon Biotech. The ssDNA library was denatured at 95 °C for 10 min, 4 °C for 6 min and 25 °C for 2 min. COOH-modified magnetic beads (400 μl; Zecen Biotech) were washed with 1 ml of 10-mM NaOH once and 1 ml of double-distilled water twice, and activated in 800 μl of 0.4-M 1-(3-dimethylaminopropyl)-3-ethylcarbodiimide (EDC) and 0.1-M N-hydroxysuccinimide (NHS) (1:1, v/v) for 15 min. Then, ~80 μg of protein (PTM Bio) diluted in sodium acetate was added to the magnetic beads and incubated for 2 h. Supernatant was discarded and the beads were washed three times with 1 ml of Dulbecco’s phosphate-buffered saline (DPBS). Then, the beads were blocked in 1 ml of 10 mM ethanolamine (pH 8.0), incubated for 15 min, washed three times with 800 μl of 1× washing buffer and then resuspended in 400 μl of antibody diluent buffer.

Each round of counter-selection and positive selection was performed using the standard magnetic bead-based HT-SELEX method. The His–magnetic beads were washed with 1× DPBS, and then incubated with the ssDNA library for 1 h. The mixture was washed with 1× washing buffer, and then the His–magnetic beads were discarded, leaving the remaining DNA pool for positive selection. DNAs bound to the target protein were eluted by boiling the protein–magnetic beads in 100 μl of double-distilled water at 95 °C for 10 min, amplified with PCR using rTaq DNA polymerase (Takara) and used for the next round of selection. For PCR amplification, we used MIX configuration with the forward primer and biotin-modified reverse primer, and the ssDNA eluted from the positive selection as the template. Double-stranded DNA (1 ml) obtained from PCR was incubated with 80 μl of streptavidin-modified magnetic beads (Smart-Lifesciences Biotech) in 1-M NaCl for 30 min, washed with 1× washing buffer three times and treated with 100 μl of 40-mM NaOH. The biotin-modified strands left on streptavidin-modified magnetic beads were removed by magnetic suction, neutralized with 4 μl of 1-M HCl and combined with an equal volume of 2× DPBS buffer. For high-throughput sequencing, each round of the ssDNA library was amplified using the forward and reverse primers, followed by the use of a standard library construction kit (E7335S NEB). Polyclonal amplicons were purified using VAHTS DNA Clean Beads (Vazyme) following the manufacturer’s protocol and quantified with QUBIT (Invitrogen). The sequencing was performed using the GeneMind sequencer. The high-throughput sequencing data from the last round of SELEX were used for InstructNA.

Pipeline of InstructNA to generate DNA aptamers

The last round of aptamer HT-SELEX data was used to train InstructNA as stated above. The 30 seeding sequence candidates from S1, S2 and S3 were engineered in the same way as stated above for those of TF-binding DNAs. The 30 candidate sequences were clustered into ten clusters using GMM, and the sequence with the smallest KD measured by SPR to the target protein was selected to constitute the initial seeding sequences for HC-HEBO optimization.

SPR experiments

The DNA oligonucleotides used for SPR experiments shown in Supplementary Tables 3–6 were purchased from Sangon Biotech. The SPR experiments were performed on a Biacore 8K instrument (GE Healthcare). The sensor chip (CM5, Cytiva) was activated by injecting a 50:50 (v/v) mixture of NHS (0.1 M) and EDC (0.4 M) for 5 min. The protein (50 µg ml−1) was diluted in sodium acetate and injected into flow cell 2 at a flow rate of 10 µl min−1 for 5 min. Next, the His protein (5 µg ml−1) diluted in sodium acetate was injected into flow cell 1 as a control channel. Finally, the sensor chip was blocked using ethanolamine-HCl (1 M, pH 8.5) for 2 min. The DNA aptamers were diluted to different concentrations using 1× PBS containing 5-mM magnesium chloride. Aptamers at various concentrations in running buffer (DPBS supplemented with 5-mM MgCl2) were injected into the analyte channel at a flow rate of 30 μl min−1, a contact time of 120 s and a dissociation time of 120 s. After each analysis cycle, the analyte channels were regenerated via a 30-s injection of 1.5-M NaCl. The KD was obtained by fitting the sensorgram with a 1:1 binding model using Biacore evaluation software (GE Healthcare).

Docking and unrestrained MD simulations

The AlphaFold3 webserver was used to predict the 3D structures of DNA aptamers T1L, G1L (nucleotides 1–76) and LOX1 (amino acids 61–237), which are consistent with the sequence lengths used in SPR experiments. Initially, we attempted to predict the complex structure directly from the input protein and DNA sequences, but the resulting structures were visibly not bound together. Therefore, on the basis of the monomer structures of the protein and DNAs, the LOX1–T1L and LOX1–G1L complexes were obtained via molecular docking using HDOCKlite v.1.1, based on a hybrid algorithm of template-based modeling and ab initio free docking. The LOX1 protein was set as the receptor, and the DNA aptamer was treated as the ligand. Blind docking was performed, and the top docking pose based on the docking score was selected as the initial structure for MD simulations.

The complex structure was subjected to unrestrained MD simulations in the Amber22 package. The complex was modeled using the tleap module in Amber22. The Amber ff19SB force field was used for the protein and the OL15 force field was employed for DNA. After adding Na+ to neutralize the phosphate backbone charges, the system was solvated with TIP3P water in a 12-Å cuboid box. Initial minimizations were performed with 2,000 steps of steepest descent followed by 3,000 steps of conjugate gradient, during which the DNA positions were fixed. The particle mesh Ewald algorithm was employed to efficiently handle the long-range electrostatic interactions. Then the system was heated to 300 K under constant volume in 50 ps, followed by a 50-ps constant-pressure relaxation using the Langevin thermostat at 300 K. All covalent bonds involving hydrogen atoms were constrained using the SHAKE algorithm. The cutoff was set to 12.0 Å for the electrostatic and van der Waals interactions. The final production was performed at 300 K without restraints on DNA for 100 ns. Ten independent runs of MD simulations were performed for each complex.

Statistics and reproducibility

No statistical method was used to predetermine sample size. No data were excluded from the analyses. Training, validation and test sets for training models of binary classification were generated via random splits of the full datasets. No randomization was applied in the comparative experiments. Blinding was not relevant to this study.

Reporting summary

Further information on research design is available in the Nature Portfolio Reporting Summary linked to this article.