In our previous blog, we explored a GitOps use case for on-premises infrastructure, managing multiple clusters hosted on the k3s Kubernetes distribution using k0rdent.

But the platform engineering ecosystem is vast, and one blog barely scratches the surface of what it takes to manage multi-cluster environments comfortably, or to make the most of different Kubernetes distributions.

Ultimately, success isn’t about running Kubernetes, it’s about running it at scale, efficiently, and consistently.

That’s exactly what hosted control planes are designed to achieve.

The scale problem nobody talks about enough

How do you manage dozens or hundreds of clusters without costs and complexity spiralling out of control?

Open infrastructure is neither small nor shrinking. In fact, most practitioners I encounter day-to-day are running their workloads on OpenStack. And if you’re on OpenStack, the challenge of managing multi-cluster applications doesn’t just exist, it compounds. Every new cluster adds overhead, and that overhead adds up fast.

This blog explores how combining k0s, k0rdent, and Hosted Control Planes (HCP) can give you a scalable, cost-efficient, and production-ready Kubernetes platform on OpenStack.

What are we solving?

In a typical Kubernetes setup, every cluster ships with its own dedicated control plane, which means at least 3 nodes per cluster just for the control plane itself. Multiply that across dev, staging, and production environments, and you’re burning through resources before your first workload even lands.

This is the problem Hosted Control Planes were built to solve.
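To make the overhead concrete, here is a minimal sketch of the arithmetic, assuming the three control plane nodes per cluster mentioned above. The function name `dedicated_cp_nodes` is hypothetical and exists only for illustration.

```sh
#!/bin/sh
# Illustration only: how many VMs dedicated control planes consume.
# The default of 3 nodes per cluster is the figure quoted in the text.
dedicated_cp_nodes() {
    clusters=$1
    nodes_per_cluster=${2:-3}
    echo $((clusters * nodes_per_cluster))
}

# Three environments (dev, staging, production), one cluster each.
echo "Control plane VMs for 3 clusters: $(dedicated_cp_nodes 3)"
```

With hosted control planes, those components run inside the management cluster instead, so this count no longer grows with every new cluster.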

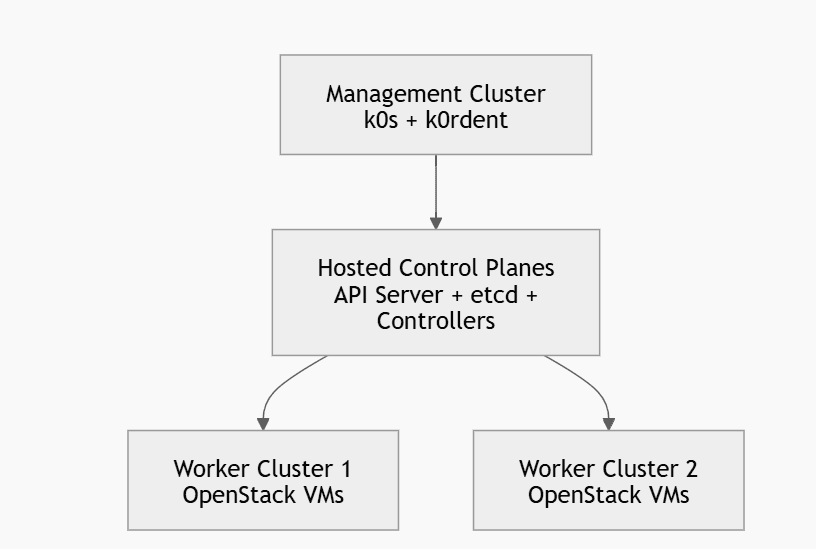

Instead of running the API server, etcd, and controllers on dedicated nodes per cluster, HCP runs all of them inside a central management cluster. The result is fewer VMs, lower costs, simpler upgrades, and a single pane of control across your entire fleet.

The combination of:

- k0s (lightweight Kubernetes)

- k0rdent (multi-cluster orchestration)

- OpenStack (private cloud infrastructure)

creates a powerful platform engineering stack.

1. Prepare your environment

Before you touch Kubernetes, make sure your base environment is correct. Most failures happen here.

Infrastructure requirements

You need:

- One Linux VM (Ubuntu 20.04/22.04 recommended) for the management cluster

- Minimum: 4 CPU, 8 GB RAM (more is better)

- Access to an OpenStack project with:

  - Enough quota (instances, volumes, floating IPs)

  - A working network + subnet + router

  - A usable image (Ubuntu cloud image)

  - At least one flavor (e.g., m1.medium)

Tools to install on your VM:

sudo apt update

sudo apt install -y curl wget jq unzip

Install kubectl:

curl -LO

chmod +x kubectl

sudo mv kubectl /usr/local/bin/

Install Helm:

curl | bash

Install OpenStack CLI:

pip install python-openstackclient

2. Create the management cluster using k0s

k0s is used because it’s lightweight and simple, which is ideal for a management cluster.

Install k0s:

curl -sSLf | sudo sh

Initialize and start the controller:

sudo k0s install controller --single

sudo k0s start

Wait about 30–60 seconds, then export the kubeconfig:

mkdir -p ~/.kube

sudo k0s kubeconfig admin > ~/.kube/config

Verify:

kubectl get nodes

You should see one node in Ready state.
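Rather than sleeping for a fixed interval, you could poll until the controller responds. The helper below is a minimal sketch: `retry` is a hypothetical function name, not part of k0s or kubectl, and the attempt counts and timeout in the commented usage are arbitrary assumptions.

```sh
#!/bin/sh
# retry ATTEMPTS DELAY_SECONDS COMMAND [ARGS...]
# Re-runs COMMAND until it exits 0 or ATTEMPTS runs have failed.
retry() {
    attempts=$1
    delay=$2
    shift 2
    tries=1
    while ! "$@"; do
        if [ "$tries" -ge "$attempts" ]; then
            return 1    # give up after the last allowed attempt
        fi
        tries=$((tries + 1))
        sleep "$delay"
    done
    return 0
}

# Possible usage on the management VM (assumed values: 12 tries, 5 s apart):
#   retry 12 5 sudo k0s kubeconfig admin > /dev/null
#   kubectl wait --for=condition=Ready node --all --timeout=120s
```

The `kubectl wait` line blocks until the node reports Ready or the timeout expires, which is a more reliable signal than a fixed sleep.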

3. Install k0rdent on the management cluster

k0rdent runs as controllers inside your management cluster and handles the cluster lifecycle.

Add Helm repository:

helm repo add k0rdent

helm repo update

Install k0rdent:

helm install kcm oci://ghcr.io/k0rdent/kcm/charts/kcm --version 1.8.0 -n kcm-system --create-namespace

Verify the install:

kubectl get pods -n kcm-system

Wait until all pods are in Running state. If they are not, check the logs before proceeding.

4. Configure OpenStack access

This step is critical. k0rdent needs credentials to create VMs on your behalf.

Load your OpenStack credentials

You should have an openrc.sh file from your OpenStack environment.

source openrc.sh

Verify access:

openstack server list

openstack network list

openstack image list

If these commands fail, fix OpenStack access before moving forward.
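When the commands above fail, a common cause is an incomplete environment. The check below is a minimal sketch, assuming a password-based openrc.sh that sets the four variables listed; files that use application credentials or project IDs set different variables. `missing_openstack_vars` is a hypothetical helper name.

```sh
#!/bin/sh
# missing_openstack_vars: prints the name of each unset or empty variable
# and returns non-zero if any are missing.
# Assumption: password-based auth using these four OS_* variables.
missing_openstack_vars() {
    missing=0
    for var in OS_AUTH_URL OS_USERNAME OS_PASSWORD OS_PROJECT_NAME; do
        # Indirect lookup of the variable named in $var.
        eval "value=\${$var:-}"
        if [ -z "$value" ]; then
            echo "$var"
            missing=1
        fi
    done
    return "$missing"
}

# Possible usage after "source openrc.sh":
#   missing_openstack_vars || echo "fix the variables listed above"
```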

5. Create Kubernetes secret for OpenStack credentials

k0rdent expects credentials in clouds.yaml format.

Create a file named cloud-config.yaml:

apiVersion: v1
kind: Secret
metadata:
  name: openstack-cloud-config
  namespace: kcm-system
type: Opaque
stringData:
  clouds.yaml: |
    clouds:
      openstack:
        auth:
          auth_url: https://
          username:
          password:
          project_name:
          user_domain_name: Default
          project_domain_name: Default
        region_name: RegionOne
        interface: public
        identity_api_version: 3

Apply it:

kubectl apply -f cloud-config.yaml

6. Create k0rdent credential object

This tells k0rdent to use the OpenStack secret. Save it as credential.yaml:

apiVersion: k0rdent.mirantis.com/v1beta1
kind: Credential
metadata:
  name: openstack-credential
  namespace: kcm-system
spec:
  type: openstack
  secretRef:
    name: openstack-cloud-config

Apply:

kubectl apply -f credential.yaml

7. Identify required OpenStack resources

You must use exact names from your OpenStack environment.

Run:

openstack network list

openstack subnet list

openstack router list

openstack image list

openstack flavor list

You will need:

- External network name (e.g., public)

- Image name (e.g., ubuntu-20.04)

- Flavor (e.g., m1.medium)

Note: If these values are wrong, cluster creation will fail silently or partially.

8. Creating your ClusterDeployment (core step)

This is where you define your Kubernetes cluster declaratively. This is how we defined our ClusterDeployment; adapt it to your needs and save it as clusterdeployment.yaml:

apiVersion: k0rdent.mirantis.com/v1beta1
kind: ClusterDeployment
metadata:
  name: openstack-hcp
  namespace: kcm-system
spec:
  template: openstack-hosted-cp
  credential: openstack-credential
  config:
    workersNumber: 2
    flavor: m1.medium
    image:
      filter:
        name: ubuntu-20.04
    externalNetwork:
      filter:
        name: public
    identityRef:
      name: openstack-cloud-config
      cloudName: openstack

Apply:

kubectl apply -f clusterdeployment.yaml

9. Observe cluster creation

The system now starts working in the background.

Watch resources:

kubectl get clusterdeployments -n kcm-system

kubectl get pods -n kcm-system

Also monitor OpenStack:

openstack server list

You should see worker VMs being created.

Important point:

- Control plane components run inside the management cluster

- Only worker nodes are created in OpenStack
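To track progress without reading the table by eye, one option is to count servers in the ACTIVE state. This is a sketch: `count_active` is a hypothetical helper, and it counts every ACTIVE server visible in the project, not only this cluster's workers.

```sh
#!/bin/sh
# count_active: reads one server status per line on stdin and prints how
# many are ACTIVE. grep -c exits non-zero on zero matches, hence "|| true".
count_active() {
    grep -c '^ACTIVE$' || true
}

# Possible usage (-f value -c Status prints only the Status column):
#   openstack server list -f value -c Status | count_active
```

With `workersNumber: 2` and no other servers in the project, the count should settle at 2, since the control plane does not appear as OpenStack instances.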

10. Retrieve kubeconfig of the new cluster

Once the cluster is ready, k0rdent creates a secret containing the kubeconfig.

kubectl get secret openstack-hcp-kubeconfig -n kcm-system -o jsonpath="{.data.value}" | base64 -d > kubeconfig.yaml

Use it:

kubectl get nodes --kubeconfig=kubeconfig.yaml

You should see your OpenStack worker nodes.

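11. Validate with a real workload

Deploy something simple to the new cluster:

kubectl run nginx --image=nginx --kubeconfig=kubeconfig.yaml

kubectl get pods --kubeconfig=kubeconfig.yaml

Expose it if needed:

kubectl expose pod nginx --port=80 --type=NodePort --kubeconfig=kubeconfig.yaml

This confirms the cluster is functional.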

12. Test scaling (important for demo and validation)

Edit the cluster:

kubectl edit clusterdeployment openstack-hcp -n kcm-system

Change:

workersNumber: 3

Watch OpenStack again:

openstack server list

A new VM should be created.

This proves:

- Declarative scaling works

- k0rdent reconciles desired state
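For scripted workflows, the same change can be made without an interactive editor. A minimal sketch, assuming the `spec.config.workersNumber` path used in the ClusterDeployment manifest from step 8; `workers_patch` is a hypothetical helper name.

```sh
#!/bin/sh
# workers_patch COUNT: prints a JSON merge patch that sets
# spec.config.workersNumber on a ClusterDeployment.
workers_patch() {
    printf '{"spec":{"config":{"workersNumber":%d}}}\n' "$1"
}

# Possible usage against the management cluster:
#   kubectl patch clusterdeployment openstack-hcp -n kcm-system \
#     --type=merge -p "$(workers_patch 3)"
```

A merge patch touches only the named field, so the rest of the spec stays as declared.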

It’s easy to walk away thinking you’ve just provisioned a cluster. You haven’t. What we built is fundamentally different:

A centralized control plane architecture

In a traditional setup, every cluster is an island: its own API server, its own etcd, its own everything. Multiply that by fifty and you don’t have a platform, you have sprawl. Teams spend more time keeping clusters alive than using them.

A centralized control plane architecture breaks this pattern. All control planes live inside one management cluster: one place for state, one place for API requests, one place to go when something needs attention. The management cluster becomes the brain. Workload clusters become its extensions.

This isn’t just an infrastructure optimization. It’s an architectural shift in how responsibility is distributed.

A declarative cluster provisioning system

In the old world, provisioning a cluster meant scripts, runbooks, and CLI commands fired in a specific order. It was imperative and it lived in someone’s head.

With k0rdent, you describe what you want. The system figures out how to get there. Provisioning becomes reproducible, auditable, and version-controlled by design, not by accident.

A multi-cluster platform foundation

A single cluster is a tool. A well-architected multi-cluster system is a platform built to serve many teams, many workloads, and many environments consistently, without every consumer needing to understand what’s underneath.

That’s what we’ve laid down here. Onboard new clusters without rethinking the architecture. Enforce policies from a single point. Upgrade and observe your entire fleet without treating each cluster as a unique snowflake.

The core shift: From cluster-centric to platform-centric

Before this architecture, each cluster managed itself, with your attention and tooling fragmented across however many clusters you had. After this architecture, one system manages all clusters. Every new cluster is just another expected, handled event.

You stop thinking of yourself as someone who manages clusters, and start thinking as someone who operates a system that manages clusters. That shift is what lets teams scale infrastructure without scaling their operational toil at the same rate.

You didn’t just build a cluster. You built the system that builds clusters.

Feel free to check out more from the k0s and k0rdent community

The stack we’ve walked through in this blog, k0s, k0rdent, and OpenStack, isn’t just a technical combination. It represents a growing ecosystem of open-source contributors, platform engineers, and infrastructure practitioners who are actively shaping what production Kubernetes looks like at scale.

Both projects are open source, actively maintained, and genuinely community-driven. If anything in this blog sparked a question, a use case idea, or even a disagreement, the communities are the right place to take it.

If you want to explore k0rdent further, dig into the project on GitHub and bring your questions or feedback to the #k0rdent channel on the CNCF Slack. It’s an active space where contributors and users are building in the open.

For k0s, the GitHub repository is the best place to follow development and contribute. On the Kubernetes Slack, the #k0s-users channel is where you’ll find practitioners sharing real-world experience, and #k0s-dev is where the core development conversation happens, worth joining if you want to go deeper or contribute upstream.

The best way to learn this stack isn’t just to read about it: it’s to build with it, break it, and ask questions alongside people who are doing the same.