Data and ethical approval

An ongoing microneurography study was conducted between 01/11/2019 and 31/12/2024 at the RWTH Aachen University Medical School (Namer’s Lab), approved by the Ethics Board of the University Hospital RWTH Aachen under numbers Vo-Nr. EK141-19 and Vo-Nr. EK143-21. The participants were comprehensively informed about the experimental procedure and provided written informed consent, and the study was conducted in accordance with the Declaration of Helsinki. Microneurography recordings were acquired during laboratory-based experiments designed to investigate peripheral nerve activity and to develop and evaluate signal processing and analysis methods. In this study, we analyze data from seven healthy volunteers (one male, six females). None of the participants had any neurological, dermatological, or other chronic medical conditions, and none had taken regular medication or any acute medication within 24 h prior to the experiments. Six recordings include fully available ground truth labels for all electrically evoked spikes, and one recording with chemical stimulation is included to demonstrate a proof-of-concept application.

Experimental setup

With microneurography, spikes from individual C-fibers within the cutaneous C-fiber fascicles of the superficial peroneal nerve were recorded. A detailed explanation of this technique can be found in8,11. For the experiments, a recording electrode (Frederick-Haer, Bowdoinham, ME, USA) was positioned near the nerve bundle, and innervation territories were mapped using a 0.5-diameter tipped electrode. Once the targeted territories had been identified, 0.2 mm diameter needle electrodes (Frederick-Haer) were inserted into the skin for intracutaneous electrical stimulation via a Digitimer DS7 constant current stimulator. Neural signals were amplified and processed in parallel using a Neuro Amp EX (ADInstruments) amplifier, followed by an additional bandpass filter (500–1000 Hz) and a 50 Hz notch filter to minimize electrical noise. Data acquisition was performed with two systems in parallel: (1) at a sampling rate of 10 kHz using an analog-to-digital converter from National Instruments and the software Dapsys (http://www.dapsys.net) by Brian Turnquist12, and (2) using a Power1401 analog-to-digital converter from Cambridge Electronic Design and the software Spike2 v10.08.
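The filtering described above was done in hardware. When raw data are reprocessed offline, it can be approximated in software. The sketch below is a hypothetical stand-in and not the configuration used here. Only three values come from the text: the 500–1000 Hz pass band, the 50 Hz notch, and the 10 kHz sampling rate. The following are all assumptions:

- the Butterworth order (4);
- the notch quality factor (Q = 30);
- zero-phase filtering with SciPy's `sosfiltfilt`/`filtfilt`, whereas analog filters are causal;
- the function name `filter_recording`.

```python
import numpy as np
from scipy import signal

# Hypothetical software approximation of the analog filter chain (assumed order, Q, zero phase).
FS = 10_000  # sampling rate in Hz


def filter_recording(raw, fs=FS, band=(500.0, 1000.0), notch_hz=50.0, notch_q=30.0):
    """Zero-phase Butterworth bandpass followed by a 50 Hz IIR notch."""
    # Second-order sections keep the bandpass numerically stable.
    sos = signal.butter(4, band, btype="bandpass", fs=fs, output="sos")
    filtered = signal.sosfiltfilt(sos, np.asarray(raw, dtype=float))
    # Narrow notch centred on the mains frequency.
    b, a = signal.iirnotch(notch_hz, notch_q, fs=fs)
    return signal.filtfilt(b, a, filtered)
```

Applied to one second of synthetic data (50 Hz hum plus a 700 Hz component), the filter removes the hum and keeps the in-band component.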

Marking method

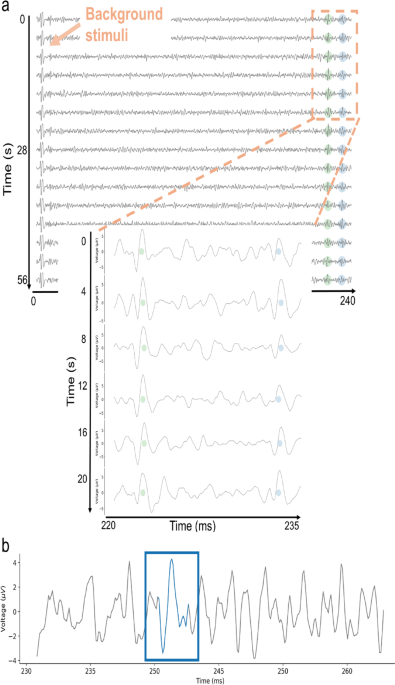

In microneurography, the marking method is routinely used as a standard technique to differentiate individual C-fiber responses and C-fiber types11,20. After the receptive field has been identified and the electrical threshold required to reliably activate the recorded fibers has been determined, low-frequency “background” electrical stimuli are applied. These background stimuli evoke exactly one spike per activated fiber and are delivered at a fixed interval (here, every 4 s). Because C-fibers conduct at a nearly constant velocity under low-frequency stimulation, this creates a predictable time window in which the evoked spike is expected. The signal is segmented relative to each background stimulus onset, so that spikes originating from a single C-fiber align temporally at a consistent latency. The recording is visualized in a raster-like “waterfall plot”, which displays spikes in temporal alignment regardless of their amplitude (see Fig. 1a, blue and green circles). These vertically aligned, repeated spikes are referred to as tracks.
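The waterfall segmentation amounts to measuring each spike's latency from the most recent background stimulus. Below is a minimal sketch, assuming spike and stimulus times in seconds. `waterfall_latencies` is a hypothetical helper and is not part of Dapsys or PyDapsys.

```python
import numpy as np

# Hypothetical helper illustrating fixed-interval (marking method) segmentation.


def waterfall_latencies(spike_times, stim_times):
    """Return each spike's segment index and its latency (s) within that segment."""
    spikes = np.asarray(spike_times, dtype=float)
    stim = np.sort(np.asarray(stim_times, dtype=float))
    # Index of the last stimulus at or before each spike.
    seg = np.searchsorted(stim, spikes, side="right") - 1
    valid = seg >= 0  # spikes before the first stimulus belong to no segment
    return seg[valid], spikes[valid] - stim[seg[valid]]


# Toy example: background pulses every 4 s, one fiber answering after about 350 ms.
background = np.arange(0.0, 16.0, 4.0)
segments, latencies = waterfall_latencies([0.350, 4.351, 8.349, 12.350], background)
```

Spikes from one fiber end up with nearly identical latencies in consecutive segments, which is what stacks them into a vertical track.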

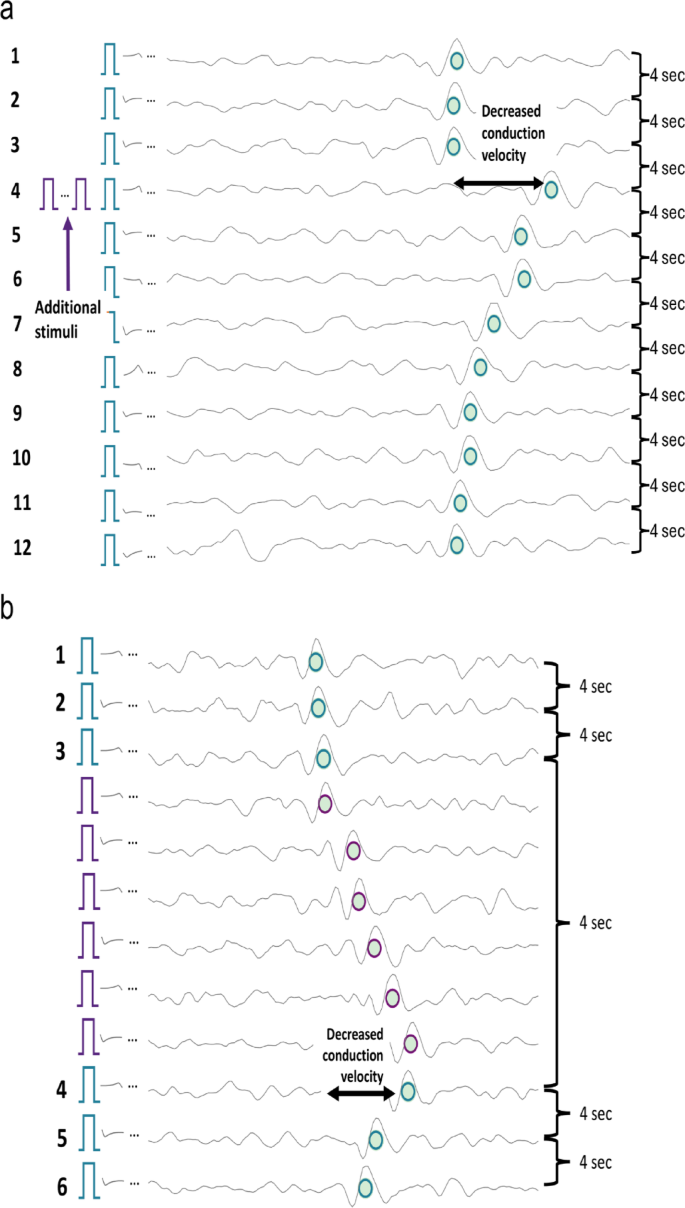

The resulting tracks enable reliable identification and sorting with tracking algorithms12, even for spikes near the noise level (see Fig. 1b, blue rectangle). Because a track reflects a consistent latency across many repetitions rather than a single response, it remains robust to individual missed spikes. When a fiber is activated shortly before an electrical background pulse, its conduction velocity temporarily decreases, leading to a delayed response to the next background pulse (see Fig. 2a, line 4). This latency shift, which scales roughly with the number of preceding spikes, is known as activity-dependent conduction velocity slowing (ADS)23. ADS serves as a useful “marker” of individual fibers and allows semi-quantitative estimation of fiber activity.

The marking method reliably differentiates spikes from individual C-fibers by assigning them to vertical tracks formed by responses time-locked to low-frequency stimulation. These tracks are routinely available and can provide labels for training supervised machine learning models, but their limited number motivates the use of lightweight algorithms. In typical experimental protocols, background stimulation is combined with additional stimuli applied in parallel to mimic fiber activity and evoked sensations such as itch and pain8,11. However, such spike identification and sorting are inherently limited to spikes evoked by background pulses. Spikes evoked independently of the background stimulation, such as electrically or mechanically evoked, spontaneous, or pruritogen-evoked activity, do not align with these tracks and therefore cannot be directly detected or assigned to specific fibers. Their influence is only indirectly observable through activity-dependent conduction velocity slowing.

To overcome this limitation without altering the foundation of the marking method, we implemented a hardware- and software-level extension of the stimulation protocol. The extension preserves the background stimuli but redefines how additional electrical stimuli are processed. The goal is to create recordings in which all electrically evoked spikes, including those mimicking further fiber activity, are time-locked and reliably traceable.

(a) Example waterfall plot of two tracks. The signal is segmented every 4 s, beginning with the electrical background stimulus. Two tracks are visible, marked by the green and blue dots, indicating the activity of two C-fibers. The conduction velocity remains almost constant, leading to vertical alignment of the elicited spikes. (b) An example spike at the noise level. The spike in the blue box would not be detectable without the vertical alignment of repeated responses enabled by the marking method and the waterfall representation. This temporal alignment facilitates the identification of low-amplitude spikes by exploiting the consistent latencies of responses evoked by background stimuli.

(a) Example of activity-dependent slowing with the marking method and additional stimuli. Each line begins with the periodic background pulse indicated by the blue rectangle, resulting in signal segments with a duration of 4 s. When no further activity occurs (lines 1–3), the spikes (green dots) align vertically due to the almost constant conduction velocity. When additional (electrical) stimuli are applied, conduction velocity decreases (line 4). Responses to background stimuli are marked by green dots with a blue circle. (b) Example of activity-dependent slowing with the ground truth protocol. As in the routinely used marking method, background stimuli are applied every 4 s, with additional pulses as in (a). In contrast to the “standard” marking method, the signal is cut before every stimulation pulse, whether background or additional. This ensures that all spikes align on the green track, not only those evoked by background stimuli. Responses to background stimuli remain marked by green dots with blue circles. Additional stimuli and the spikes they evoke are both indicated by purple rectangles and circles.

“Ground truth” stimulation protocol for model development and validation

In the “ground truth” protocol we developed, the stimulation pattern is identical to that of other electrical experiments: periodic 0.25 Hz background stimuli, with additional pulses inserted between background pulses to mimic further fiber activity. The key modification is how the hardware and software interpret these events. Unlike the routinely used marking method, the ground truth protocol is an engineered extension used solely for algorithm training and validation. It differs fundamentally in that all stimuli, including the inserted additional pulses, are treated as background stimuli. Each stimulus therefore defines its own segmentation window, which allows any evoked spike to be aligned to the corresponding fiber track.

As a result, the segmentation windows in the waterfall representation are cut dynamically at each stimulus. This produces variable-length intervals between consecutive stimuli rather than the fixed 4 s windows of the standard marking method (see Fig. 2b). Spikes occurring between two classical background pulses are thus projected onto the vertical tracks and become reliably detectable and sortable.
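Under the ground truth protocol, the only change is which events open a segment. The following sketch merges background and additional stimulus onsets so that every stimulus starts its own variable-length window. It illustrates the idea rather than the Dapsys implementation. The function names and the toy stimulus times (in seconds) are hypothetical.

```python
import numpy as np

# Hypothetical sketch of ground-truth segmentation: every stimulus opens a new window.


def ground_truth_segments(background, additional, recording_end):
    """Merge both stimulus lists; return segment starts and their variable durations (s)."""
    onsets = np.union1d(background, additional)  # sorted, duplicates removed
    ends = np.append(onsets[1:], recording_end)  # a segment ends at the next stimulus
    return onsets, ends - onsets


def latencies_from_onsets(spike_times, onsets):
    """Latency of each spike relative to the most recent stimulus onset."""
    spikes = np.asarray(spike_times, dtype=float)
    # Assumes every spike occurs after the first onset.
    idx = np.searchsorted(onsets, spikes, side="right") - 1
    return spikes - onsets[idx]


# Toy example: background pulses every 4 s plus two inserted pulses at 1.5 s and 2.0 s.
onsets, durations = ground_truth_segments([0.0, 4.0, 8.0], [1.5, 2.0], recording_end=12.0)
latencies = latencies_from_onsets([0.35, 1.85, 2.35, 4.36, 8.35], onsets)
```

In this toy example, the spikes evoked by the inserted pulses land on the same 0.35 s track. The response to the next background pulse (at 4 s) arrives slightly later, mimicking activity-dependent slowing.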

Importantly, as with the marking method, the approach is robust to occasionally missed spikes. A fiber may fail to respond to an additional pulse, but this only results in a smaller or absent latency shift and does not compromise the interpretability of the track. The essential objective of the protocol is that every electrically evoked spike is time-locked and can be correctly labeled.

Together, these modifications yield recordings with fully verified spike timestamps and fiber identities. These provide a reliable ground truth for validating spike detection and sorting algorithms under realistic single-electrode noise conditions.

Additional pulse parameters in the ground truth protocol

The number and timing of additional pulses in the ground truth protocol were not fixed but varied across recordings. The extended marking method is independent of the exact number of inserted pulses, because the hardware and software process every stimulus identically and each stimulus yields a time-locked response for fiber-specific spike assignment. Whether an interval contains two or ten pulses, the resulting latency tracks remain continuous, stable, and fully interpretable. Table 1 summarizes the range of additional pulses, the inter-stimulus frequency, and the distribution of distances to the next background stimulus. These values highlight the diversity of stimulation patterns. They show that the ground truth protocol is defined by its technical modifications, not by any single numerical parameter. The variability does not affect the outcome of the approach; rather, it captures the real variance in fiber behavior. Schematic representations of the stimulation protocol for all recordings are provided in Supplementary Figure S1.

Recording with pruritogen injection

To illustrate the practical applicability of our computational approach to spike detection and sorting, we included one representative recording in which electrical background stimulation was combined with intracutaneous injection of 50 µL bovine adrenal medulla peptide 8–22 (BAM 8–22, Cat. No. SML0729, Sigma, Taufkirchen, Germany), as previously described in8. Unlike the ground truth datasets, this recording does not include labels for spikes elicited by additional activity. It therefore realistically represents the challenges of detecting and sorting chemically induced spikes.

Ground truth labeling with Dapsys via manual spike sorting

Spikes evoked by background stimulation were identified and sorted using the built-in tracking algorithm in Dapsys24, followed by corrections by human experts. In the ground truth recordings, this approach enabled the detection and sorting of spikes elicited by both background and additional stimuli. In contrast, for the recording with chemical stimulation, only spikes time-locked to background stimuli could be reliably identified with Dapsys.

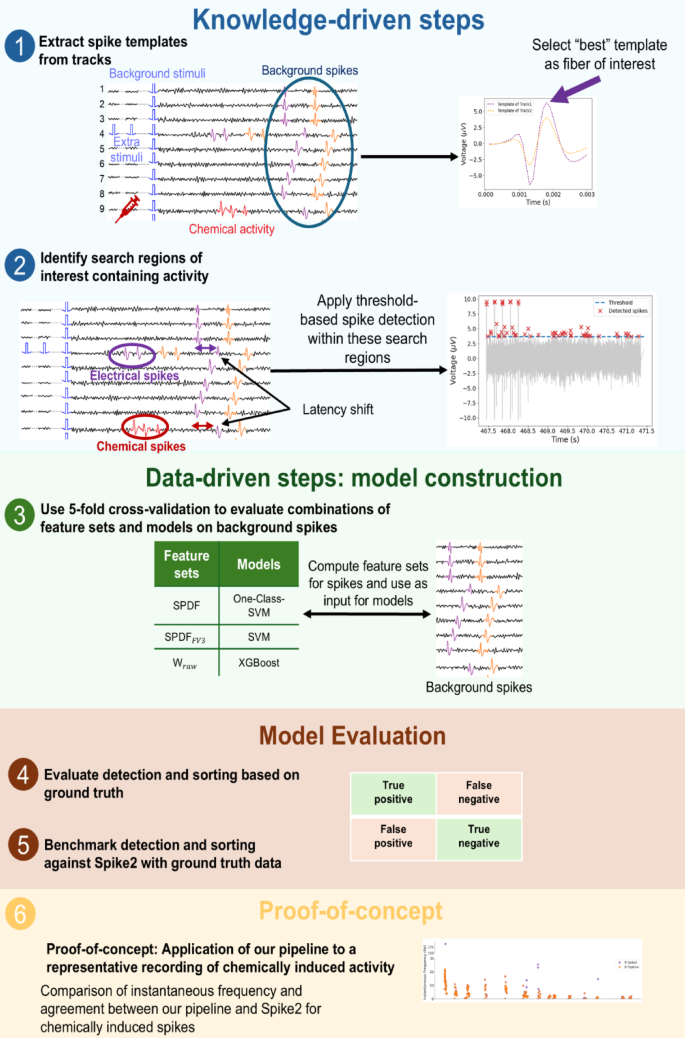

A knowledge- and data-driven spike sorting pipeline

We developed a Snakemake-based25 computational pipeline for microneurography recordings (see Fig. 3). It combines knowledge-driven steps, such as background spike alignment and constraining the detection search space, with data-driven machine learning for spike detection and classification.

Data pre-processing

All recordings were read in with our custom Python package PyDapsys26 and parsed into structured pandas DataFrames (v2.2.3) containing the raw signal, spike labels, and spike and stimulation timestamps.

Spike waveforms were extracted from the raw signal with a fixed window of 30 data points, corresponding to a 3 ms interval at a sampling frequency of 10,000 Hz. Waveforms were aligned according to the method described by Caro-Martín et al.27: each spike is temporally centered on the time point of the largest downward slope, i.e., the negative peak of the first derivative of the signal. This alignment step ensures that spike features are computed consistently across spikes.
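The extraction and alignment step can be sketched with NumPy. Two details come from the text: the 30-sample window and centering on the most negative first derivative. The rest are assumptions: the ±10-sample search radius around each detected index, the convention that the centre is the derivative index of the steepest drop, and the function name.

```python
import numpy as np

# Hypothetical sketch of derivative-based waveform alignment (search radius is assumed).
WINDOW = 30  # samples, i.e. 3 ms at 10 kHz


def extract_aligned_waveforms(sig, spike_idx, window=WINDOW, search=10):
    """Cut fixed-length waveforms centred on the steepest downward slope near each spike."""
    sig = np.asarray(sig, dtype=float)
    deriv = np.diff(sig)  # deriv[i] = sig[i + 1] - sig[i]
    half = window // 2
    waveforms = []
    for i in spike_idx:
        lo, hi = max(i - search, 0), min(i + search, deriv.size)
        centre = lo + int(np.argmin(deriv[lo:hi]))  # most negative first derivative
        start = centre - half
        if start < 0 or start + window > sig.size:
            continue  # skip spikes too close to the recording edges
        waveforms.append(sig[start:start + window])
    return np.array(waveforms).reshape(-1, window)
```

Two rough detections of the same spike (indices 97 and 103 around a negative deflection at sample 101) both yield the same aligned waveform. This makes the features that follow comparable across spikes.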

Template-based identification of optimal units

For each track, a template was computed by averaging all spikes aligned on that track. The template serves both as a visual representation of spike morphology and as a quantitative measure of spike amplitude. It thus helps identify high-amplitude tracks, for which threshold-based detection is more reliable. The track with the highest-amplitude template was selected as the track of interest for subsequent spike sorting.
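Template averaging and the choice of the track of interest can be sketched as follows. Measuring "amplitude" as peak-to-peak is our assumption; the text only states that the highest-amplitude template was chosen. The function names are hypothetical.

```python
import numpy as np

# Hypothetical sketch: per-track mean templates and highest-amplitude track selection.


def track_templates(waveforms, track_labels):
    """Average the aligned waveforms of each track into one template per track."""
    waveforms = np.asarray(waveforms, dtype=float)
    labels = np.asarray(track_labels)
    return {track: waveforms[labels == track].mean(axis=0) for track in np.unique(labels)}


def track_of_interest(templates):
    """Pick the track whose template has the largest peak-to-peak amplitude (assumed metric)."""
    return max(templates, key=lambda track: np.ptp(templates[track]))


wf = np.array([[0.0, -1.0, 0.0], [0.0, -1.2, 0.0], [0.0, -3.0, 1.0], [0.0, -2.8, 1.0]])
templates = track_templates(wf, ["A", "A", "B", "B"])
best = track_of_interest(templates)
```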

To assess overall recording quality, we computed the signal-to-noise ratio (SNR) for the track of interest. A 40 ms noise segment was extracted from the raw signal immediately preceding the first evoked spike, to ensure the absence of fiber activity. The SNR was calculated as the ratio of the spike template amplitude to the noise standard deviation. Following the implementation in SpikeInterface16, the noise standard deviation was estimated using the median absolute deviation (MAD).
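The MAD-based SNR can be written with NumPy alone. The sketch scales the MAD by 0.6745, the MAD of a standard normal distribution, to obtain a standard deviation; this is the usual robust estimator, which SpikeInterface's noise-level computation also relies on. Taking the template amplitude as its absolute peak is our assumption, as are the function names. At 10 kHz, the 40 ms noise segment is 400 samples.

```python
import numpy as np

# Hypothetical sketch of a MAD-based SNR (amplitude definition and names are assumptions).
MAD_TO_SD = 0.6744897501960817  # MAD of a standard normal distribution
FS = 10_000  # Hz


def noise_segment_before(sig, first_spike_idx, duration_s=0.040, fs=FS):
    """Return the 40 ms of raw signal immediately preceding the first evoked spike."""
    n = int(round(duration_s * fs))
    return np.asarray(sig, dtype=float)[max(first_spike_idx - n, 0):first_spike_idx]


def snr_mad(template, noise):
    """Absolute template peak divided by a MAD-based estimate of the noise SD."""
    noise = np.asarray(noise, dtype=float)
    sigma = np.median(np.abs(noise - np.median(noise))) / MAD_TO_SD
    return float(np.max(np.abs(template)) / sigma)
```

The MAD is preferred over the plain standard deviation because occasional large deflections in the noise segment barely shift it.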

Spikes were further categorized by the time differences between consecutive spikes. Background-evoked spikes were defined as those occurring at least 3.8 s apart, consistent with the 4 s interval of the background stimuli. Spikes with shorter inter-spike intervals were classified as responses to additional stimulation. Note that background-evoked spikes are always available in recordings obtained with the marking method, whereas spikes evoked by additional stimuli are exclusive to datasets acquired with the ground truth stimulation protocol.
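A minimal sketch of the interval rule follows. It reads "at least 3.8 s apart" as the interval to the preceding spike on the same track, which is our interpretation, and treats the first spike as background-evoked. The function name is hypothetical.

```python
import numpy as np

# Hypothetical sketch of the 3.8 s inter-spike-interval rule (preceding-interval reading assumed).


def classify_by_interval(spike_times, min_gap=3.8):
    """Label a spike 'background' if the preceding spike on the track is >= min_gap s earlier."""
    t = np.sort(np.asarray(spike_times, dtype=float))
    gaps = np.diff(t, prepend=-np.inf)  # first spike has no predecessor: infinite gap
    return np.where(gaps >= min_gap, "background", "additional")


labels = classify_by_interval([0.35, 4.35, 5.85, 12.36])
```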

Template distance analysis followed our previously described method for estimating template separability and expected sortability19. We quantified the similarity between spike templates across tracks by computing pairwise distances for all background-evoked templates using standard error-based metrics: mean squared error (MSE), root mean squared error (RMSE), and mean absolute error (MAE). Definitions of these metrics are provided in the Supplementary Methods. For each recording, the minimum distance across all template pairs was retained as an indicator of the most overlapping templates. Low template distances indicate highly similar spike waveforms and reduced separability, and were therefore interpreted as lower expected sortability. These distance measures served as a pre-sorting quality indicator and were later related to the F1-scores achieved by the sorting.
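The pairwise distance computation can be sketched with the textbook definitions of MSE, RMSE, and MAE over template samples. The exact definitions used in the study are in the Supplementary Methods, so treat these as assumptions. The function name is hypothetical.

```python
import itertools

import numpy as np

# Hypothetical sketch using standard MSE/RMSE/MAE definitions over template samples.


def pairwise_template_distances(templates):
    """Distances for every template pair, plus the minimum of each metric per recording."""
    rows = []
    for (a, ta), (b, tb) in itertools.combinations(templates.items(), 2):
        diff = np.asarray(ta, dtype=float) - np.asarray(tb, dtype=float)
        mse = float(np.mean(diff ** 2))
        rows.append({"pair": (a, b), "MSE": mse, "RMSE": mse ** 0.5,
                     "MAE": float(np.mean(np.abs(diff)))})
    # The smallest distance marks the most overlapping (least separable) template pair.
    minima = {metric: min(row[metric] for row in rows) for metric in ("MSE", "RMSE", "MAE")}
    return rows, minima


rows, minima = pairwise_template_distances(
    {"A": [0, 0, 0, 0], "B": [1, 1, 1, 1], "C": [0, 0, 0, 1]})
```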

Waveform drift test

In long recordings, waveform drift can affect spike classification. We therefore evaluated template stability over time for the background-evoked spikes of the selected track of interest. The track was divided into three subsets, and the spikes within each subset were averaged to generate templates representing the beginning, middle, and end of the recording. Comparing these templates served as a quality-control measure to confirm that spike shape remained stable throughout the recording.
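The three-part drift check can be sketched as follows. The text does not say how the beginning, middle, and end templates were compared. Using the largest pairwise RMSE between them is our assumption, and so are the function names.

```python
import numpy as np

# Hypothetical drift check: thirds of a track, compared by RMSE (comparison metric assumed).


def thirds_templates(track_waveforms):
    """Average a track's time-ordered waveforms separately for beginning, middle, and end."""
    parts = np.array_split(np.asarray(track_waveforms, dtype=float), 3)
    return [part.mean(axis=0) for part in parts]


def _rmse(a, b):
    return float(np.sqrt(np.mean((a - b) ** 2)))


def max_template_drift(track_waveforms):
    """Largest RMSE between any two of the beginning, middle, and end templates."""
    begin, middle, end = thirds_templates(track_waveforms)
    return max(_rmse(begin, middle), _rmse(middle, end), _rmse(begin, end))


stable = np.tile([0.0, -1.0, 0.0], (9, 1))
drifting = stable * np.linspace(1.0, 2.0, 9)[:, None]  # amplitude grows over time
```

A stable track gives a drift of zero. A track whose amplitude grows over the recording gives a clearly positive value.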

Spike detection with constrained search space

Spike detection thresholds for the fiber of interest were set manually, based on visual inspection of the peak amplitudes of its background-evoked spikes. The peak amplitudes were first sorted in ascending order to identify the minimum amplitude at which spikes occur, and the resulting distribution was examined for variance and outliers. In recordings with high variance, the threshold was selected manually; otherwise, the smallest amplitude value was used. The final thresholds are reported in Table 3. We deliberately avoided fully automated thresholding algorithms, because such methods are often sensitive to fluctuations and noise. Manual selection allowed the threshold to be tailored to the signal characteristics of each recording, thereby reducing false-positive detections.
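The default branch of this rule (take the smallest sorted amplitude unless the distribution looks too variable) can be sketched as a helper that also flags recordings needing manual review. The text does not quantify "high variance". The coefficient-of-variation cutoff `cv_flag=0.3` is a hypothetical choice, and so is the function name. The final thresholds in the study were chosen by inspection.

```python
import numpy as np

# Hypothetical helper: default threshold = smallest peak amplitude; cv_flag is an assumed cutoff.


def suggest_threshold(peak_amplitudes, cv_flag=0.3):
    """Return (smallest absolute peak amplitude, whether manual review is advised)."""
    amps = np.sort(np.abs(np.asarray(peak_amplitudes, dtype=float)))  # ascending order
    cv = amps.std() / amps.mean()  # relative spread of the amplitude distribution
    return float(amps[0]), bool(cv > cv_flag)


thr_ok, review_ok = suggest_threshold([10.0, 11.0, 12.0, 9.5])
thr_out, review_out = suggest_threshold([2.0, 10.0, 11.0, 12.0])  # one low outlier
```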

To constrain the search space for threshold-based spike detection, we first calculated the latencies of the track of interest relative to the background stimuli. From the distribution of these latencies, we identified the minimum latency deviation in the absence of additional stimulation, which was 0.9 ms. Any latency shift exceeding this value was used to mark candidate time windows within the 4 s signal intervals. Such shifts indicate the presence of spikes evoked by additional stimuli and therefore define where spike detection is performed.
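Turning latency shifts into search windows can be sketched as follows. We assume that the shift is measured between consecutive background responses. Under that reading, a jump larger than 0.9 ms at pulse k+1 flags the interval between pulses k and k+1. Times are in seconds, and the function name is hypothetical.

```python
import numpy as np

# Hypothetical sketch: flag inter-pulse intervals followed by a slowed background response.


def flag_search_windows(bg_stim_times, bg_latencies, shift_threshold=0.9e-3):
    """Return (start, end) intervals between background pulses that precede a latency jump."""
    stim = np.asarray(bg_stim_times, dtype=float)
    lat = np.asarray(bg_latencies, dtype=float)
    shifts = np.diff(lat)  # latency change from one background response to the next
    hits = np.flatnonzero(shifts > shift_threshold)
    # A delayed response at pulse k + 1 implies extra spikes between pulses k and k + 1.
    return [(stim[k], stim[k + 1]) for k in hits]


windows = flag_search_windows([0.0, 4.0, 8.0, 12.0], [0.3500, 0.3502, 0.3530, 0.3531])
```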

Spike detection was then performed with the find_peaks function from SciPy (v1.15.2)28, using the manually determined thresholds. To exclude stimulation artifacts, detected peaks occurring within 100 ms of a stimulation onset were discarded.
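The detection step can be sketched with `scipy.signal.find_peaks`. The sketch makes three assumptions:

- spikes are negative-going, so the signal is inverted before peak finding;
- thresholds are positive magnitudes;
- only peaks inside the flagged search windows and more than 100 ms after the most recent stimulus are kept.

The function name and the toy signal are hypothetical.

```python
import numpy as np
from scipy.signal import find_peaks

# Hypothetical sketch of constrained threshold detection (negative spike polarity assumed).
FS = 10_000  # Hz


def detect_spikes(sig, threshold, windows, stim_times, fs=FS, artifact_s=0.100):
    """Return times (s) of threshold-crossing negative peaks inside the search windows."""
    peaks, _ = find_peaks(-np.asarray(sig, dtype=float), height=threshold)
    t = peaks / fs
    in_window = np.zeros(t.shape, dtype=bool)
    for start, end in windows:
        in_window |= (t >= start) & (t < end)
    stim = np.sort(np.asarray(stim_times, dtype=float))
    # Time since the most recent stimulus; peaks before the first stimulus get +inf.
    idx = np.searchsorted(stim, t, side="right") - 1
    since = np.where(idx >= 0, t - stim[np.clip(idx, 0, None)], np.inf)
    return t[in_window & (since > artifact_s)]


sig = np.zeros(50_000)  # 5 s of toy signal
for i in (500, 5_000, 15_000, 20_500, 26_000):
    sig[i] = -5.0
found = detect_spikes(sig, threshold=3.0, windows=[(0.0, 1.0), (2.0, 3.0)], stim_times=[0.0, 2.0])
```

In the toy signal, the peaks at 0.05 s and 2.05 s fall within the artifact window, and the peak at 1.5 s lies outside both search windows. Only the peaks at 0.5 s and 2.6 s remain.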

Overview of a hybrid spike detection and evaluation pipeline combining knowledge- and data-driven steps. (1) Spike templates are extracted from background spikes (blue circle) evoked by low-frequency stimulation to identify the track of interest for further analysis. (2) Search spaces containing activity are identified, and spikes are detected using threshold-based methods. (3) Feature sets are computed from background spikes and used as input to different classification models (One-class SVM, SVM, XGBoost). Model performance is evaluated by 5-fold cross-validation to determine the optimal combination of model and feature set for the background spikes. (4) Evaluation of detection and sorting against ground truth. First, spike detection is assessed by comparing detected spikes with known ground truth spikes and classifying outcomes as true positives, false positives, false negatives, or true negatives. Next, sorting performance is evaluated by verifying whether detected spikes are correctly assigned to their originating fibers. (5) Benchmarking of detection and sorting against Spike2 using ground truth data. The pipeline and Spike2 are applied independently to the same recording segments, and their detection and sorting performance is compared directly. (6) Proof-of-concept analysis of chemically evoked spikes. For the recording with pruritogen injection, we compute the instantaneous frequency, the spike rate in 4 s windows, and the latency of chemically evoked spikes sorted by both the pipeline and Spike2. As no ground truth is available for these spikes, this analysis serves as a proof of concept. It compares the two methods with respect to the plausibility and physiological consistency of the detected activity and their mutual agreement.

Spike classification using machine learning

To assign detected spikes to their corresponding track, we tested three machine learning classifiers: a one-class support vector machine (One-class SVM) and a standard support vector classifier (SVM), both implemented in scikit-learn (v1.6.1)29, and an eXtreme Gradient Boosting classifier (XGBoost, v3.0.2)30. Feature vectors were derived from the 30 data points describing each raw waveform. Three feature sets were computed:

- SPDF, a 23-dimensional feature vector adapted from the SS-SPDF features introduced by Caro-Martín et al.27;
- \(\text{SPDF}_{\text{FV3}}\), a reduced three-dimensional subset of SPDF;
- \(W_{\text{raw}}\), the raw waveform feature vector, i.e., the 30 data points extracted from the raw signal.

Detailed definitions of these feature sets are given in our previous work19.

The SVM and XGBoost classifiers were trained on background-evoked spikes from all available tracks in a recording, enabling multi-class discrimination across fibers. The One-class SVM was trained only on background spikes from the track of interest, to determine whether newly detected spikes match the known distribution of that track. Hyperparameters of all models were optimized with the Optuna framework (v4.3.0)31 using 5-fold cross-validation on the background spikes, with the mean F1-score across folds as the optimization objective.
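The cross-validated objective can be sketched with scikit-learn. `svm_objective` returns the mean 5-fold F1-score, the quantity a tuner such as Optuna would maximize. `tune_svm` swaps Optuna for a plain loop over a tiny hypothetical grid. The following are all assumptions and not the study's settings:

- macro-averaged F1 for the multi-class case;
- feature standardization;
- the grid values;
- `nu=0.05` for the One-class SVM;
- the synthetic data.

```python
import numpy as np
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC, OneClassSVM

# Hypothetical sketch; a simple grid loop stands in for Optuna's search.


def svm_objective(X, y, C, gamma, seed=0):
    """Mean macro F1 over 5 stratified folds for an RBF SVC with the given hyperparameters."""
    model = make_pipeline(StandardScaler(), SVC(C=C, gamma=gamma))
    cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=seed)
    return cross_val_score(model, X, y, cv=cv, scoring="f1_macro").mean()


def tune_svm(X, y, grid=((0.1, "scale"), (1.0, "scale"), (10.0, "scale"))):
    """Return the (C, gamma) pair with the highest cross-validated F1."""
    return max(grid, key=lambda params: svm_objective(X, y, *params))


def fit_one_class(X_track, nu=0.05):
    """One-class SVM trained only on background spikes of the track of interest."""
    return make_pipeline(StandardScaler(), OneClassSVM(nu=nu, gamma="scale")).fit(X_track)


# Synthetic stand-in for feature vectors of two well-separated tracks.
rng = np.random.default_rng(0)
X = np.vstack([rng.normal(0.0, 1.0, (40, 3)), rng.normal(6.0, 1.0, (40, 3))])
y = np.repeat([0, 1], 40)
```

`OneClassSVM.predict` returns +1 for spikes resembling the training track and -1 otherwise. This is how the one-class model decides whether a detected spike belongs to the track of interest.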

Validation and evaluation methods

Detection and sorting performance were evaluated independently. For both, performance metrics included the numbers of true positives (TP), true negatives (TN), false positives (FP), and false negatives (FN), from which F1-score, precision, and recall were derived.

For detection, we additionally report the number of false positives per true positive (FP/TP) as an indicator of reliability. Detection performance was assessed by comparing the timestamps of detected spikes with ground truth spike annotations, using a temporal tolerance window of 2 ms.
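Tolerance-based matching can be sketched as a greedy one-to-one assignment. Each detected spike, in time order, claims the closest unmatched ground truth spike within ±`tol` seconds. The greedy strategy and the function name are assumptions. TN is not computed because it requires a definition of negative time windows, which this sketch does not assume.

```python
import numpy as np

# Hypothetical greedy matcher for detection evaluation (matching strategy assumed).


def match_spikes(detected, truth, tol=0.002):
    """Count TP/FP/FN with one-to-one matching within +/- tol seconds and derive metrics."""
    detected = np.sort(np.asarray(detected, dtype=float))
    truth = np.sort(np.asarray(truth, dtype=float))
    used = np.zeros(truth.size, dtype=bool)
    tp = 0
    for t in detected:
        # Unmatched ground truth spikes within the tolerance window.
        cand = np.flatnonzero(~used & (np.abs(truth - t) <= tol))
        if cand.size:
            used[cand[np.argmin(np.abs(truth[cand] - t))]] = True
            tp += 1
    fp, fn = detected.size - tp, truth.size - tp
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"TP": tp, "FP": fp, "FN": fn, "precision": precision, "recall": recall,
            "F1": f1, "FP/TP": fp / tp if tp else float("inf")}


res = match_spikes([1.001, 2.0025, 3.0, 5.0], [1.0, 2.0, 3.0])
```

Calling the same function with `tol=0.003` corresponds to the ±3 ms criterion used for the Spike2 comparison.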

For sorting, the final models were retrained on all background spikes using the previously selected optimal hyperparameters. Each of the three feature sets was extracted from the detected spikes, and each model was applied to them. Classifier performance was assessed against the ground truth labels of the evoked spikes.

Statistical analysis

Data were collected from multiple datasets, and several performance metrics (F1-score, precision, recall, TP, FP, TN, and FN) were calculated. F1-score, precision, and recall are bounded in [0, 1]. Because such proportions often deviate from normality, we considered models suitable for bounded data. TP, FP, TN, and FN are count data, for which we applied separate modeling strategies. All statistical analyses were performed in R.

Data inspection and preprocessing

We first performed exploratory analyses (histograms, boxplots) to examine the distribution of each metric and identify potential outliers or anomalies. The bounded metrics often approached the boundary values 0 or 1. To avoid problems with values lying exactly on the boundary, every 0 or 1 was shifted slightly (ε = 0.0001) into the open interval (0, 1).
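The boundary shift is a one-liner. The analysis itself was run in R; the Python sketch below is only for illustration and uses a hypothetical function name. It moves only exact 0s and 1s by ε and leaves all interior values unchanged.

```python
import numpy as np

# Hypothetical illustration of the epsilon shift (the study performed this step in R).
EPS = 1e-4


def squeeze_to_open_interval(values, eps=EPS):
    """Shift exact 0s up and exact 1s down by eps; interior values stay unchanged."""
    x = np.asarray(values, dtype=float)
    x = np.where(x == 0.0, eps, x)
    return np.where(x == 1.0, 1.0 - eps, x)
```

Unlike `np.clip`, this leaves values that are already inside (0, ε) untouched, which matches the rule of shifting only exact boundary values.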

Comparing statistical models

For the bounded metrics, we compared two statistical models: a linear mixed model on logit-transformed outcomes, and a beta mixed model fitted with a logit link, which is explicitly suited to proportions in the open interval \((0, 1)\). The model formula was \(\text{OutcomeMetric} \sim \text{Model} * \text{FeatureSet} + (1 \mid \text{Dataset})\). For the count data, we compared a Poisson mixed model with a log link to a negative binomial model. To test for overdispersion, we calculated the Pearson residuals and their dispersion ratio (the sum of squared Pearson residuals divided by the residual degrees of freedom). The model formula for the count data was \(\text{OutcomeMetric} \sim \text{Model} * \text{FeatureSet} + \text{offset}(\log(\text{RecordingDuration})) + (1 \mid \text{Dataset})\).
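The overdispersion check reduces to a short formula once the fitted means are available. In the study, the fitted means came from the R models; here they are passed in directly. For a Poisson fit, the Pearson residual is \((y - \mu)/\sqrt{\mu}\), since the variance equals the mean. Ratios well above 1 indicate overdispersion. Treating `n_params` as the number of estimated parameters is a simplification for mixed models, and the function name is hypothetical.

```python
import numpy as np

# Hypothetical illustration of the Pearson dispersion ratio for a Poisson fit.


def poisson_dispersion_ratio(y, mu, n_params):
    """Sum of squared Pearson residuals divided by residual degrees of freedom."""
    y = np.asarray(y, dtype=float)
    mu = np.asarray(mu, dtype=float)
    pearson = (y - mu) / np.sqrt(mu)  # Poisson variance equals the mean
    return float(np.sum(pearson ** 2) / (y.size - n_params))
```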

To compare the candidate models for both the bounded and the count data, we compared information criteria (AIC/BIC) and inspected plots of residuals versus fitted values.

Baseline comparison with Spike2

To establish a baseline for our algorithm, spike detection and sorting were also performed with Spike2 (v10.08), which implements a template-matching approach. The “New WaveMark” function was used with default parameters to define a reference spike based on negative peak thresholds. This reference spike corresponds to a response evoked by a background stimulus. It was located in time using the latency obtained from Dapsys, which ensured its correct association with a particular track. The identified waveform served as the initial template, which Spike2 then used to detect and sort all subsequent spikes. The data were exported as spreadsheets and further processed in pandas DataFrames.

To remove stimulation artifacts from the Spike2 output, spikes occurring within 10 ms after each stimulus onset were excluded. Simultaneously recorded Dapsys datasets provided the ground truth labels. A key challenge was the temporal misalignment between the two systems: Dapsys recording sessions were split across multiple files, whereas Spike2 produced a single recording file. A dynamic offset correction was therefore applied to align Spike2 spike timestamps with the corresponding Dapsys timestamps. The offset was computed separately for each stimulus to account for time drift between the systems.
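Artifact removal and the per-stimulus offset correction can be sketched as follows. We assume that the Spike2 and Dapsys stimulus lists pair up one-to-one in the same order. Under that assumption, each Spike2 spike is shifted by the offset of its preceding stimulus. The function names and toy times (in seconds) are hypothetical.

```python
import numpy as np

# Hypothetical sketch; assumes matching, equally ordered stimulus lists in both systems.


def remove_artifacts(spike_times, stim_times, window=0.010):
    """Drop spikes that fall within `window` seconds after the most recent stimulus onset."""
    spikes = np.asarray(spike_times, dtype=float)
    stim = np.sort(np.asarray(stim_times, dtype=float))
    idx = np.searchsorted(stim, spikes, side="right") - 1
    since = np.where(idx >= 0, spikes - stim[np.clip(idx, 0, None)], np.inf)
    return spikes[since > window]


def align_to_dapsys(spike2_spikes, spike2_stims, dapsys_stims):
    """Shift each Spike2 spike by the Dapsys-minus-Spike2 offset of its preceding stimulus."""
    s2_stim = np.asarray(spike2_stims, dtype=float)
    offsets = np.asarray(dapsys_stims, dtype=float) - s2_stim  # one offset per stimulus
    spikes = np.asarray(spike2_spikes, dtype=float)
    idx = np.clip(np.searchsorted(s2_stim, spikes, side="right") - 1, 0, None)
    return spikes + offsets[idx]


kept = remove_artifacts([10.005, 10.35], [10.0])
aligned = align_to_dapsys([10.35, 14.36], [10.0, 14.0], [0.0, 4.0005])
```

Recomputing the offset at every stimulus absorbs slow clock drift between the two acquisition systems, which a single global offset would not.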

After alignment, spike sorting performance was assessed by comparing the spikes detected by Spike2 with the Dapsys ground truth labels. A Spike2 spike was considered a true positive if it occurred within ±3 ms of a Dapsys spike. This tolerance window compensates for an inherent difference in timestamp definitions: Spike2 timestamps mark the spike onset, whereas Dapsys timestamps approximate the spike midpoint. This evaluation quantified Spike2’s template-based sorting performance relative to the manually labeled Dapsys results.

Proof-of-concept comparison of chemically evoked spikes in the absence of direct ground truth

To illustrate the application of the computational spike sorting pipeline under experimental conditions without direct ground truth, we performed a proof-of-concept comparison with Spike2 on a representative recording with pruritogen injection. The spikes that each method assigned to the fiber of interest were compared to assess the agreement between the two sorting approaches. The instantaneous firing frequency (IF) was calculated as the inverse of the interspike interval between consecutive spikes, and the spike rate was additionally quantified in 4 s time bins. These measures served as indirect indicators of fiber activity evoked by chemical stimulation and allowed us to examine the consistency between the two approaches. To further characterize sorting quality, spike latency relative to the background stimuli was visualized as a physiological marker of fiber activation. Latency shifts were plotted alongside instantaneous frequency and spike rate to detect inconsistencies and to assess the plausibility of the bursts detected by each method.
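Both activity measures follow directly from the sorted spike times. The sketch assumes times in seconds and hypothetical function names. Each IF value is assigned to the later spike of its interval. In this sketch, the final 4 s bin may extend past `t_end` if the recording length is not a multiple of 4 s.

```python
import numpy as np

# Hypothetical sketch of instantaneous frequency and 4 s binned spike rate.


def instantaneous_frequency(spike_times):
    """IF (Hz) at each spike from the second onward: inverse of the preceding interspike interval."""
    t = np.sort(np.asarray(spike_times, dtype=float))
    return t[1:], 1.0 / np.diff(t)


def binned_rate(spike_times, t_start, t_end, bin_s=4.0):
    """Spike count per bin divided by bin width (spikes/s); returns bin starts and rates."""
    edges = np.arange(t_start, t_end + bin_s, bin_s)
    counts, _ = np.histogram(spike_times, bins=edges)
    return edges[:-1], counts / bin_s


times, freq = instantaneous_frequency([0.5, 1.0, 1.25, 6.0])
starts, rate = binned_rate([0.5, 1.0, 1.25, 6.0], 0.0, 12.0)
```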

Software availability

The complete spike sorting pipeline, including data preprocessing, feature extraction, and classification, is openly available at The repository includes source code, documentation, and an example recording.