
# Introduction

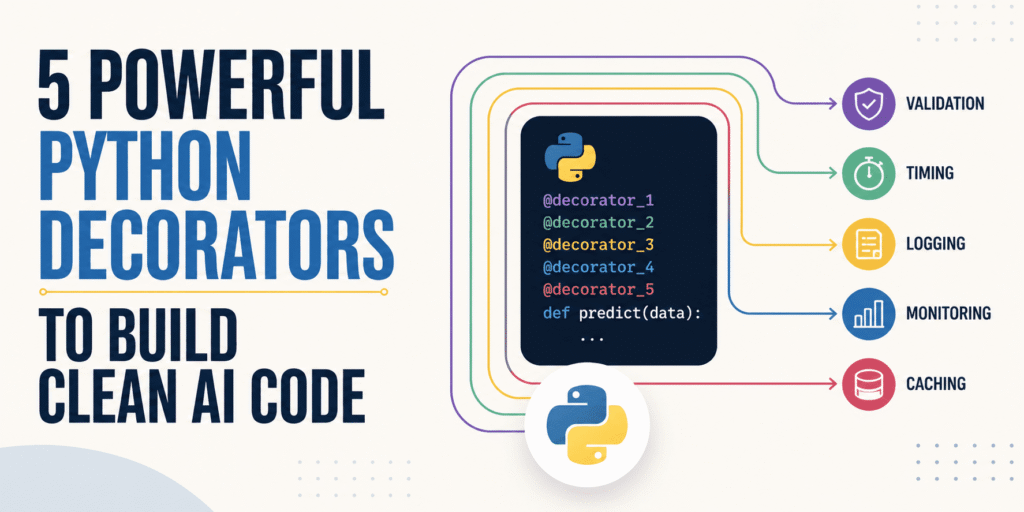

Python decorators offer significant advantages for AI and machine learning projects. They are especially effective at isolating core modeling and data pipeline logic from repetitive utility tasks such as validation, timing, and logging.

This guide highlights five practical Python decorators that developers have found particularly effective for producing cleaner AI code.

The code samples rely mainly on Python's standard library, plus pandas and NumPy in two of the examples, and on well-established patterns like functools.wraps. Their main purpose is to show how each decorator works so that you can adapt the logic to your own AI projects.
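Since every decorator in this article uses functools.wraps, a quick sketch of what it does may help. The names plain, preserving, train_a, and train_b are made up for this illustration.

```python
from functools import wraps

def plain(func):
    # No @wraps: the returned function keeps the name and docstring of wrapper
    def wrapper(*args, **kwargs):
        return func(*args, **kwargs)
    return wrapper

def preserving(func):
    @wraps(func)  # Copies __name__, __doc__, and other metadata onto wrapper
    def wrapper(*args, **kwargs):
        return func(*args, **kwargs)
    return wrapper

@plain
def train_a():
    """Train model A."""

@preserving
def train_b():
    """Train model B."""

print(train_a.__name__, train_a.__doc__)  # wrapper None
print(train_b.__name__, train_b.__doc__)  # train_b Train model B.
```

This matters for the logging decorator later in the article, which reads func.__name__: without wraps, a function wrapped by an earlier decorator would be logged as "wrapper".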

# 1. Concurrency Limiter

This decorator is useful when calling third-party large language models (LLMs) under restrictive free-tier quotas, where firing off too many asynchronous requests at once quickly triggers those limits. It uses a semaphore to cap how many executions of an async function are in flight at the same time. Note that this limits concurrency, not the number of requests per minute.
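To see the throttling mechanism in isolation, here is a minimal sketch that uses a bare asyncio.Semaphore without any decorator. The names measure_peak and worker, and the 0.01-second sleep standing in for an API call, are assumptions made for this demo.

```python
import asyncio

async def measure_peak(limit=2, jobs=6):
    """Run `jobs` dummy tasks behind a semaphore and return the peak concurrency."""
    sem = asyncio.Semaphore(limit)
    active = 0
    peak = 0

    async def worker():
        nonlocal active, peak
        async with sem:  # Blocks while `limit` workers already hold the semaphore
            active += 1
            peak = max(peak, active)
            await asyncio.sleep(0.01)  # Stand-in for a slow API call
            active -= 1

    await asyncio.gather(*(worker() for _ in range(jobs)))
    return peak

peak = asyncio.run(measure_peak(limit=2, jobs=6))
print(peak)  # 2
```

Six tasks are started, but never more than two run the guarded section at once. The decorator that follows packages the same async with pattern for reuse.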

    import asyncio
    from functools import wraps

    def limit_concurrency(limit=5):
        # One semaphore per decorated function, shared by all of its calls
        sem = asyncio.Semaphore(limit)

        def decorator(func):
            @wraps(func)
            async def wrapper(*args, **kwargs):
                # Wait here if `limit` calls are already in flight
                async with sem:
                    return await func(*args, **kwargs)
            return wrapper
        return decorator

    # Usage (async_api_client stands in for your own async LLM client)
    @limit_concurrency(5)
    async def fetch_llm_batch(prompt):
        return await async_api_client.generate(prompt)

# 2. Structured Machine Learning Logger

In complex software like machine learning systems, basic print() statements quickly become useless, especially in production.

This logging decorator records successful runs and errors as structured JSON log entries that are easy to search and that speed up troubleshooting. The example can serve as a template for decorating functions such as one that runs a single training epoch of a neural network.
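To make "easy to search" concrete before the decorator itself, here is a minimal sketch that writes two JSON records of the same shape to an in-memory buffer and filters them. The logger name json_log_demo and the sample values (0.42, "out of memory") are invented for the illustration.

```python
import io
import json
import logging

# Send log records to an in-memory buffer so they can be inspected afterwards
buffer = io.StringIO()
handler = logging.StreamHandler(buffer)
handler.setFormatter(logging.Formatter("%(message)s"))  # One bare JSON object per line

logger = logging.getLogger("json_log_demo")  # Hypothetical logger name
logger.setLevel(logging.INFO)  # INFO sits below the default WARNING threshold
logger.addHandler(handler)
logger.propagate = False  # Keep the demo output out of the root logger

# Made-up sample records with the same keys the decorator emits
logger.info(json.dumps({"step": "train_epoch", "status": "success", "time": 0.42}))
logger.error(json.dumps({"step": "train_epoch", "error": "out of memory"}))

# Because every line is valid JSON, searching is a plain dictionary filter
records = [json.loads(line) for line in buffer.getvalue().splitlines()]
failures = [r for r in records if "error" in r]
print(failures)
```

The same filter works on a log file read line by line, which is the main advantage over free-form print() output.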

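    import json
    import logging
    import time
    from functools import wraps

    # The root logger drops INFO records by default, so configure it once at startup
    logging.basicConfig(level=logging.INFO, format="%(message)s")

    def json_log(func):
        @wraps(func)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()  # Monotonic clock, suited to measuring durations
            try:
                res = func(*args, **kwargs)
                logging.info(json.dumps({"step": func.__name__, "status": "success", "time": time.perf_counter() - start}))
                return res
            except Exception as e:
                logging.error(json.dumps({"step": func.__name__, "error": str(e)}))
                raise  # Re-raise so callers still see the failure
        return wrapper

    # Usage
    @json_log
    def train_epoch(model, training_data):
        return model.fit(training_data)

# 3. Feature Injector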

This decorator is especially helpful during model deployment and inference. Imagine moving a model from a notebook into a production setting such as a FastAPI endpoint. A common problem is making sure raw user input goes through the same transformations as the original training data. The feature injector handles this behind the scenes: it derives features from the raw data in a consistent way before the data ever reaches your model.

The example below adds an 'is_weekend' feature by checking whether the date column of a DataFrame, which must hold datetime values, falls on a Saturday or Sunday.
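The weekend check depends on the weekday numbering: pandas' dt.dayofweek and the standard library's date.weekday() both count Monday as 0 and Sunday as 6. The stdlib-only sketch below confirms that 5 and 6 select Saturday and Sunday. The is_weekend helper is an illustrative function written for this check, not part of pandas.

```python
from datetime import date

def is_weekend(d: date) -> int:
    """Return 1 for Saturday or Sunday, else 0 (weekday(): Monday=0 ... Sunday=6)."""
    return int(d.weekday() in (5, 6))

# 2024-01-05 is a Friday, followed by Saturday, Sunday, and Monday
days = [date(2024, 1, 5), date(2024, 1, 6), date(2024, 1, 7), date(2024, 1, 8)]
flags = [is_weekend(d) for d in days]
print(flags)  # [0, 1, 1, 0]
```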

    from functools import wraps

    def add_weekend_feature(func):
        @wraps(func)
        def wrapper(df, *args, **kwargs):
            df = df.copy()  # Work on a copy so the caller's DataFrame is left untouched
            # Assumes df['date'] has a datetime dtype; dayofweek is 0 for Monday, so 5 and 6 are Saturday and Sunday
            df['is_weekend'] = df['date'].dt.dayofweek.isin([5, 6]).astype(int)
            return func(df, *args, **kwargs)
        return wrapper

    # Usage
    @add_weekend_feature
    def process_data(df):
        # 'is_weekend' is guaranteed to exist here
        return df.dropna()

# 4. Deterministic Seed Setter

This decorator is especially relevant during experimentation and hyperparameter tuning. These stages often involve adjusting hyperparameters such as a model's learning rate. Suppose you change the learning rate and model accuracy suddenly drops; you need to determine whether the drop is due to the new hyperparameter value or simply to an unlucky random initialization of the weights. Locking the seed removes that second source of variation, making results from A/B tests more dependable.
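The effect of locking the seed can be checked with the standard library alone. In this sketch, sample_weights is a hypothetical helper that reseeds the generator and then draws a few Gaussian values, loosely mimicking weight initialization.

```python
import random

def sample_weights(seed, n=3):
    """Reseed the global generator, then draw n Gaussian 'weights'."""
    random.seed(seed)
    return [random.gauss(0.0, 1.0) for _ in range(n)]

first = sample_weights(42)
second = sample_weights(42)
other = sample_weights(7)

print(first == second)  # Same seed: identical draws
print(first == other)   # Different seed: different draws
```

With the seed fixed, any change in results between two runs can be attributed to the hyperparameter that was altered rather than to the initial random state.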

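    import random
    from functools import wraps

    import numpy as np

    def lock_seed(seed=42):
        def decorator(func):
            @wraps(func)
            def wrapper(*args, **kwargs):
                # Reseed on every call so each run starts from the same random state.
                # Only Python's random module and NumPy's global RNG are covered here;
                # frameworks such as PyTorch keep their own state and need their own seeding call.
                random.seed(seed)
                np.random.seed(seed)
                return func(*args, **kwargs)
            return wrapper
        return decorator

    # Usage
    @lock_seed(42)
    def initialize_weights():
        return np.random.randn(10, 10)

# 5. Dev-Mode Fallback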

This decorator is invaluable for local development and CI/CD testing. Consider building a retrieval-augmented generation (RAG) system that depends on an external LLM. If a decorated function fails due to issues like connection timeouts or API rate limits, instead of raising an exception, the decorator catches the error and returns a set of predefined mock test data.

This keeps local runs and CI pipelines from failing outright when an external service has a temporary outage.
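The version in this article catches every Exception, which also hides genuine bugs. For comparison, here is a stricter sketch that falls back only for the exception types you list. The names fallback_on, flaky_embed, and buggy_embed are hypothetical, and the simulated timeout stands in for a real API failure.

```python
from functools import wraps

def fallback_on(exceptions, mock_data):
    """Return mock_data only when one of the listed exception types is raised."""
    def decorator(func):
        @wraps(func)
        def wrapper(*args, **kwargs):
            try:
                return func(*args, **kwargs)
            except exceptions:
                return mock_data
        return wrapper
    return decorator

@fallback_on((TimeoutError, ConnectionError), mock_data=[0.0, 0.0])
def flaky_embed(text):
    # Stand-in for an external call; always times out in this demo
    raise TimeoutError("simulated timeout")

@fallback_on((TimeoutError, ConnectionError), mock_data=[0.0, 0.0])
def buggy_embed(text):
    # A genuine programming error, which should not be masked
    return text.missing_method()

print(flaky_embed("hello"))  # Falls back to the mock list
try:
    buggy_embed("hello")
except AttributeError as exc:
    print("bug surfaced:", exc)
```

Whether to catch narrowly or broadly is a judgment call: the broad version below is simpler for quick local work, while the narrow one keeps test failures visible.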

    from functools import wraps

    def fallback_mock(mock_data):
        def decorator(func):
            @wraps(func)
            def wrapper(*args, **kwargs):
                try:
                    return func(*args, **kwargs)
                except Exception:  # Catches any error, including timeouts and rate limits
                    return mock_data
            return wrapper
        return decorator

    # Usage (external_api stands in for your own embedding client)
    @fallback_mock(mock_data=[0.01, -0.05, 0.02])
    def get_text_embeddings(text):
        return external_api.embed(text)

# Wrapping Up

This article explored five practical Python decorators designed to make your AI and machine learning code clearer in various contexts, from structured, searchable logging to controlled random seeding for data sampling and testing.

Iván Palomares Carrascosa is a leader, writer, speaker, and adviser in AI, machine learning, deep learning, and LLMs. He trains and guides others in harnessing AI in the real world.