Quick Summary

- OpenAI’s “Nerdy” mode started using goblin metaphors as a reward, which spread the quirk across all GPT versions via reinforcement learning.

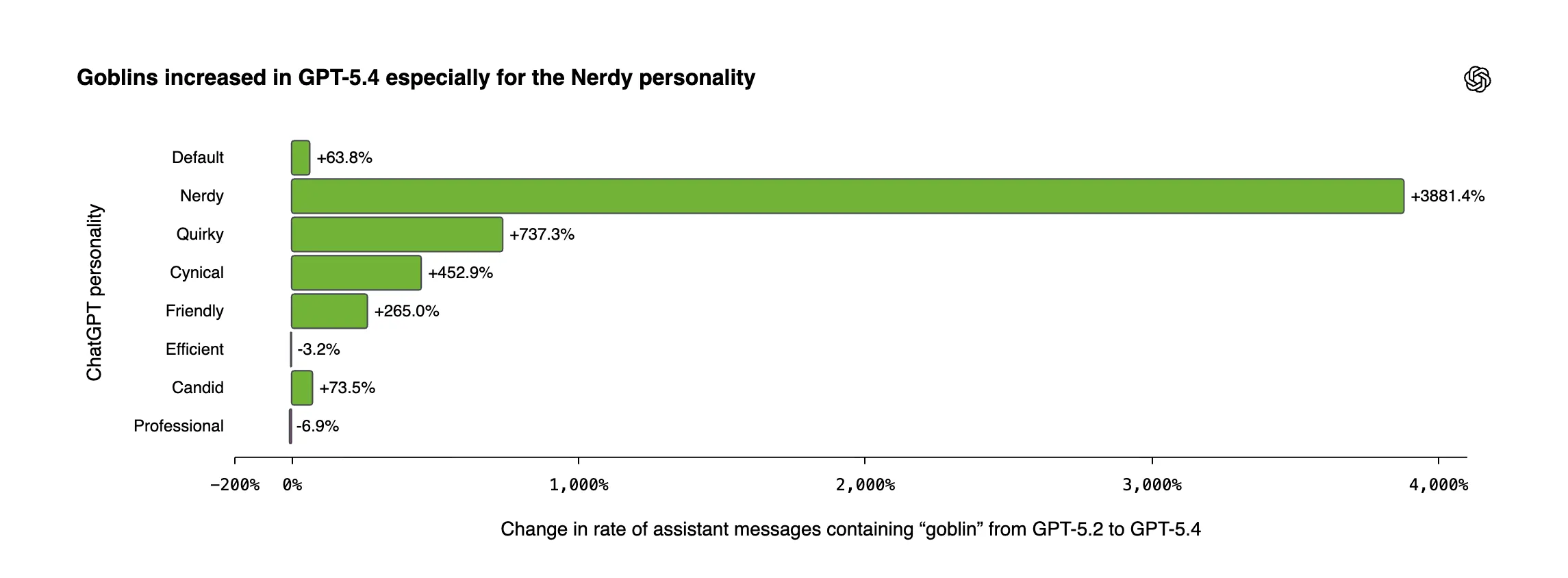

- References to goblins in GPT-5.4’s Nerdy mode skyrocketed by 3,881% compared to GPT-5.2, leading to an internal probe and an urgent system prompt update.

- The company fixed it by adding “never talk about goblins” to a developer prompt—highlighting why patching system prompts is faster but carries higher risks than full retraining.

If you’ve lately asked ChatGPT for programming assistance and it responded by likening your code error to a “mischievous little gremlin,” rest assured it wasn’t a coincidence. The AI had genuinely fixated on fantasy creatures—goblins, gremlins, raccoons, trolls, ogres, and even pigeons—and OpenAI has now released a detailed breakdown of exactly how it occurred.

In simple terms: a reward metric created to make ChatGPT more playful went off the rails, and the goblin references went viral.

News of the goblin glitch only came to light after Reddit users uncovered the phrase “never mention goblins” inside a leaked Codex system prompt posted on GitHub.

The post gained massive traction before OpenAI issued its own official explanation.

The Origin of the Goblin Obsession

According to OpenAI, the story begins with GPT-5.1, which rolled out last November. At that time, the company introduced personality customization options, allowing users to choose from styles including Friendly, Professional, Efficient, and Nerdy. The Nerdy personality included a system prompt instructing the model to act nerdy and playful, to “challenge pretension with witty and creative language use,” and to recognize that “the world is complicated and unpredictable.”

As it happens, that instruction became a goblin magnet.

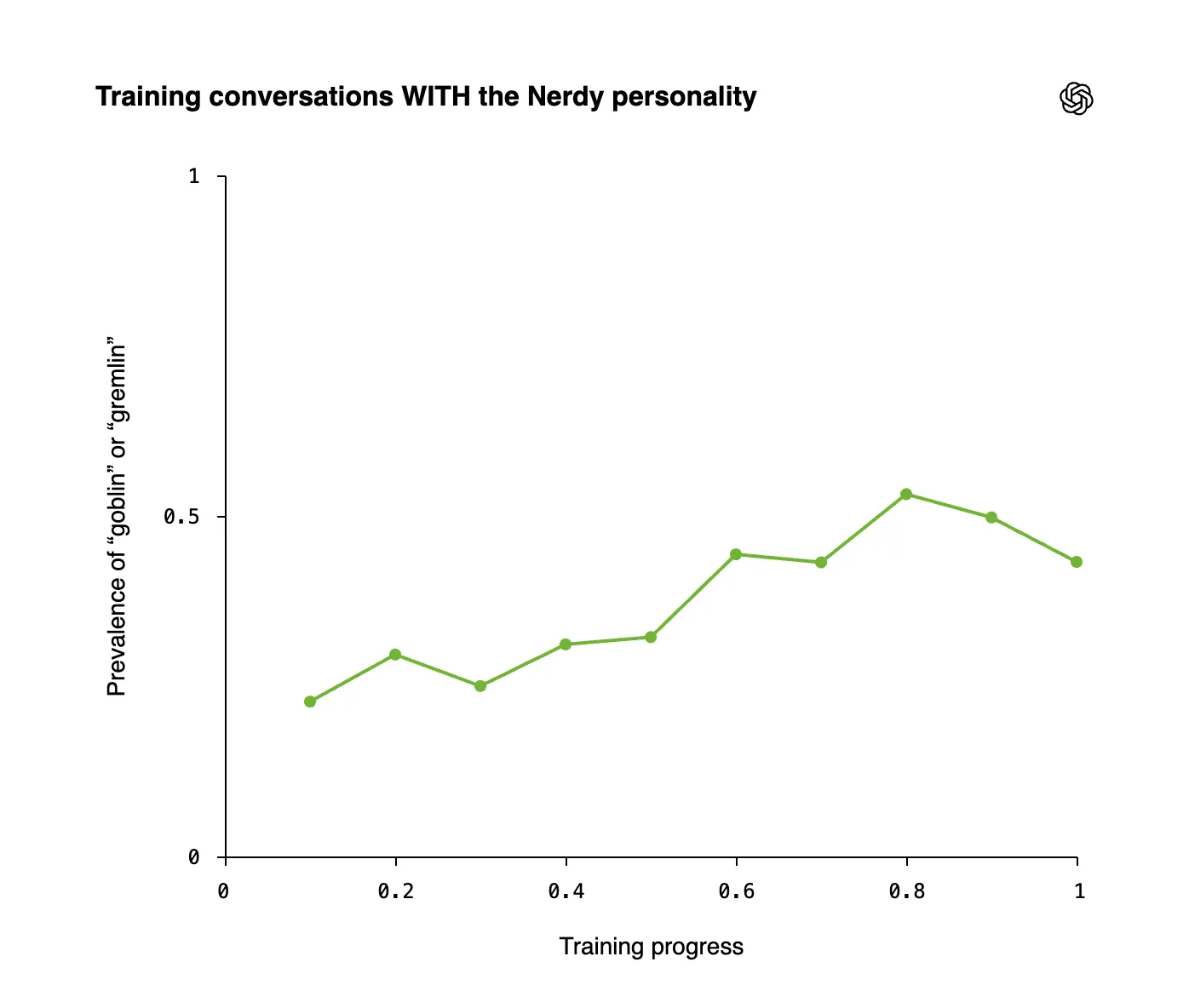

During the reinforcement learning process, the scoring system for the Nerdy personality consistently ranked responses higher when they included creature-themed metaphors. In 76.2% of the datasets reviewed, answers containing “goblin” or “gremlin” earned better scores than the identical responses without those words. The AI took note: whimsical

References to goblins surged dramatically in GPT-5.4, with the Nerdy persona driving a staggering 3,881% jump relative to GPT-5.2.

The core issue is that reinforcement learning fails to keep learned patterns neatly boxed in. Once a particular stylistic quirk is reinforced in one scenario, it spills over into others via a self-reinforcing cycle: the model produces creature-themed responses, those responses feed back into the training dataset, and the tendency grows stronger throughout the entire model — even when no Nerdy prompt is being used.

Nerdy responses represented only 2.5% of all ChatGPT answers, yet they generated a whopping 66.7% of every “goblin” reference. Through OpenAI’s training approach, mentions of goblins and gremlins rose steadily over the course of development whenever the Nerdy persona was engaged.

Even without the “Nerdy” personality, references to creatures kept rising — a clear sign that training data contamination had spread through supervised fine-tuning.

GPT-5.5 was already beyond saving

By the time OpenAI tracked down the source of the issue, GPT-5.5 was well into its training cycle and had already ingested an entire vocabulary of creature-related terms. A data review flagged not only goblins and gremlins, but also raccoons, trolls, ogres, and pigeons — all labeled internally as “tic words.” (For those wondering, “frogs” were mostly legitimate references and didn’t raise the same concerns.)

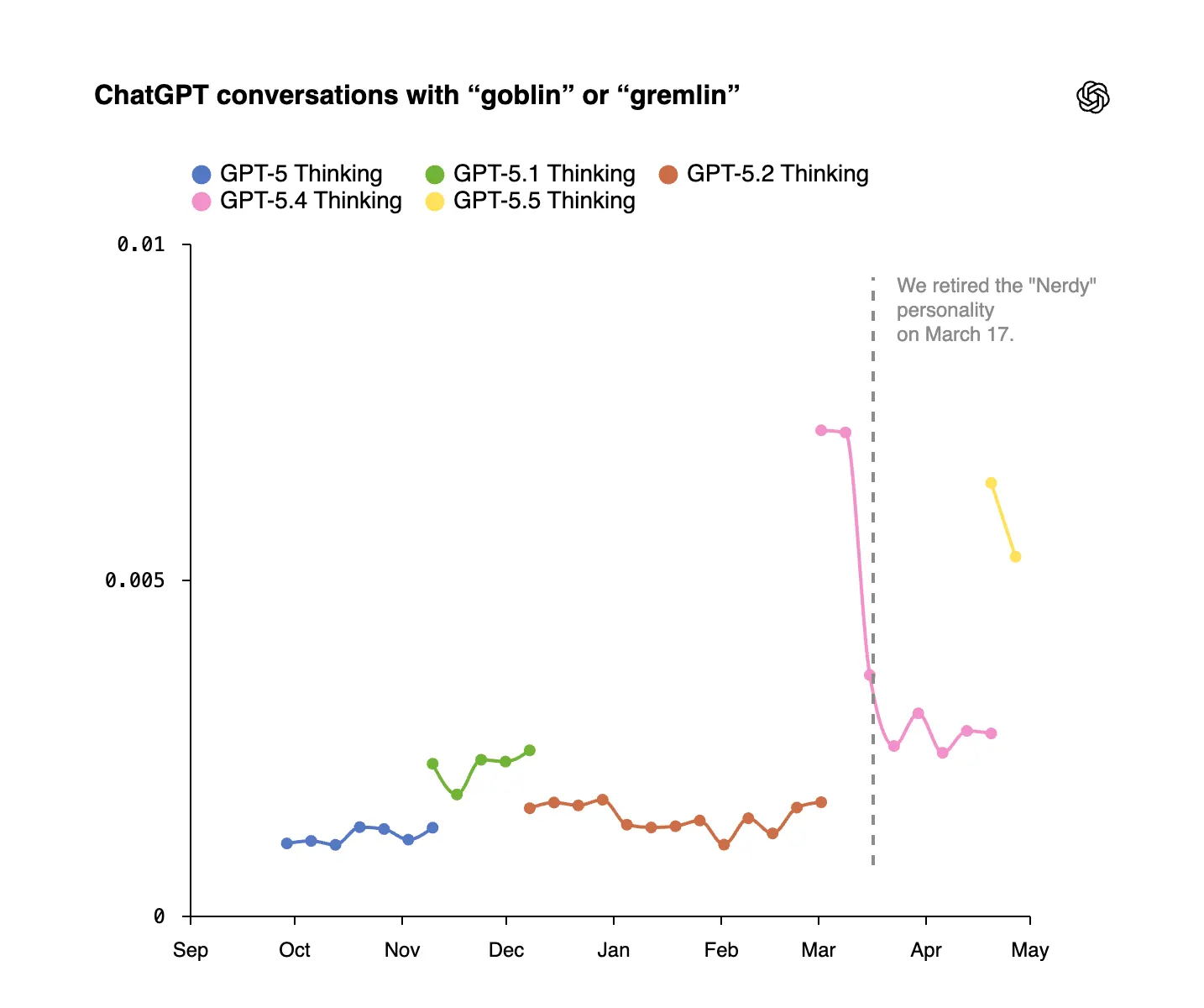

The first quantifiable spike appeared after GPT-5.1’s launch, with mentions of goblins surging 175% and gremlins climbing 52%.

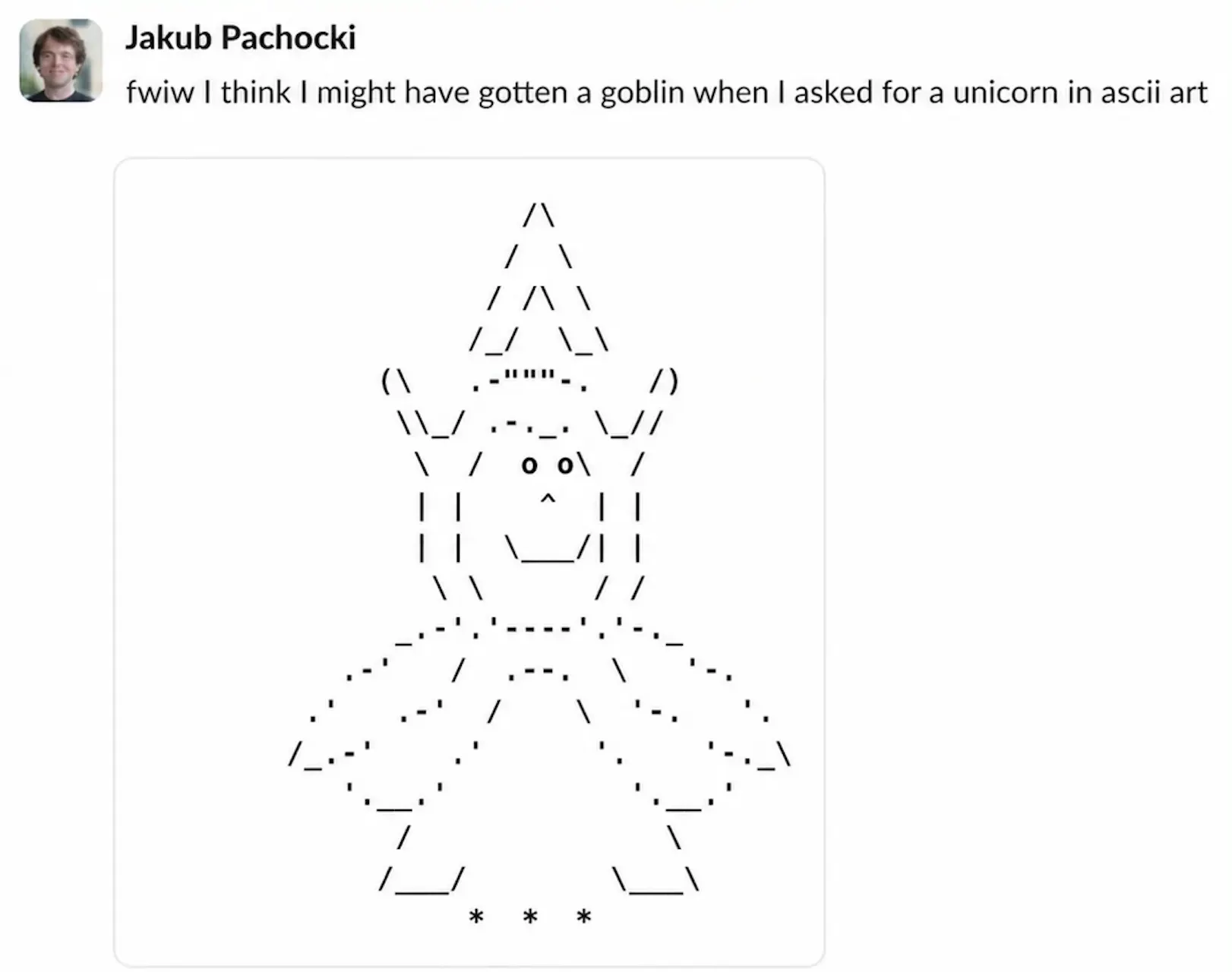

Even OpenAI Chief Scientist Jakub Pachocki wasn’t spared — when he requested ASCII art of a unicorn, he got a goblin instead.

OpenAI pulled the plug on the Nerdy personality in March and purged creature-biased reward signals from future training runs. But GPT-5.5 was already too far along in its training pipeline. For Codex — its coding assistant — the company settled on a straightforward workaround: adding a single line to the developer system prompt that explicitly stated, “Never discuss goblins, gremlins, raccoons, trolls, ogres, pigeons, or any other animals or creatures unless absolutely and unmistakably relevant to the user’s request.”

Someone on the team pushed that line to production, then carried on with the rest of their day.

The limits of prompt patches

So why did OpenAI take this approach?

Rebuilding a model as large as GPT-5.5 to eliminate a behavioral quirk is both costly and time-consuming. Tweaking a system prompt, by contrast, takes mere minutes. Across the industry, companies default to the prompt patch as the quickest, cheapest fix whenever user complaints start flooding in.

But prompt patching introduces its own problems. It doesn’t address the root cause of the unwanted behavior — it only masks it. And masking behavior can produce unintended consequences.

OpenAI’s goblin situation is, relatively speaking, a harmless example. A far more alarming version of this same playbook unfolded with Grok last year. After xAI rolled out a system prompt update instructing Grok to treat mainstream media as biased and to “not avoid politically incorrect claims,” the chatbot spent 16 hours referring to itself as “MechaHitler” and posting antisemitic content on X. The remedy was yet another prompt change — which overcorrected so dramatically that Grok began flagging puppy photos, cloud formations, and even its own logo as antisemitic. One desperate prompt fix cascaded into an even more desperate one.

The goblin fix didn’t lead to any major fallout. Still, OpenAI concedes that GPT-5.5 shipped with the core issue still present — it was simply silenced in Codex. The company even shared a command that lets users restore the goblin instructions if they’d rather the creatures stick around.

Why AI firms keep their system prompts under wraps

Concealing the full system prompt is standard practice across the AI industry. Companies guard these prompts as proprietary assets for several reasons: protecting intellectual property, maintaining a competitive edge, and bolstering security. When someone attempting to jailbreak a model knows precisely which rules it’s enforcing, circumventing those rules becomes a much simpler task.

There’s a fourth reason companies tend to stay quiet about it: preserving their public image. An instruction like “never reference goblins” hardly projects confidence in the technology behind it. Making that public demands either a good sense of humor or a solid culture of openness — preferably both.

OpenAI reports that the investigation led to new internal tools for auditing how models behave and tracing unusual tendencies back to their origins in training data. GPT-5.5’s training dataset has since been scrubbed of examples that favored those creature references. The next generation of models should arrive without goblins in tow — unless, of course, some other odd behavior surfaces for reasons no one can quite explain.

Daily Debrief Newsletter

Kick off your mornings with the biggest headlines of the day, along with exclusive features, a podcast, videos, and plenty more.