I recently opened one of my old Pandas notebooks and immediately closed it.

Not because it was wrong. The code worked. The numbers checked out.

But I had no idea what was going on.

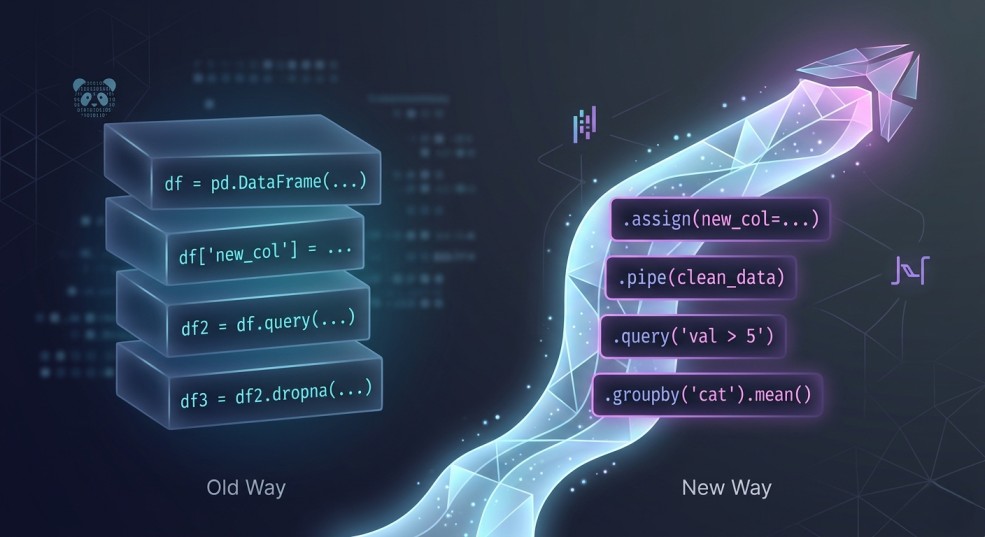

There were variables everywhere. df1, df2, final_df, final_final. Each step made sense in isolation, but as a whole it felt like I was tracing a maze. I had to read line by line just to understand what I had already done.

And the funny thing is, this is how most of us start with Pandas.

You learn a few operations. You filter here, create a column there, group and aggregate. It gets the job done. But over time, your code starts to feel harder to trust, harder to revisit, and definitely harder to share.

That was the point I realized something.

The gap between beginner and intermediate Pandas users is not about knowing more functions. It’s about how you structure your transformations.

There’s a pattern that quietly changes everything once you see it. Your code becomes easier to read. Easier to debug. Easier to build on.

It’s called method chaining.

In this article, I’ll walk through how I started using method chaining properly, along with assign() and pipe(), and how it changed the way I write Pandas code. If you have ever felt like your notebooks are getting messy as they grow, this will probably click for you.

The Shift: What Intermediate Pandas Users Do Differently

At first, I thought getting better at Pandas meant learning more functions.

More tricks. More syntax. More ways to manipulate data.

But the more I built, the more I noticed something. The people who were actually good at Pandas weren’t necessarily using more functions than I was. Their code just looked… different.

Cleaner. More intentional. Easier to follow.

Instead of writing step-by-step code with lots of intermediate variables, they wrote transformations that flowed into each other. You could read their code from top to bottom and understand exactly what was happening to the data at each stage.

It almost felt like reading a story.

That’s when it clicked for me. The real upgrade is not about what you use. It’s about how you structure it.

Instead of thinking:

“What do I do next to this DataFrame?”

You start thinking:

“What transformation comes next?”

That small shift changes everything.

And this is where method chaining comes in.

Method chaining is not just a cleaner way to write Pandas. It’s a different way to think about working with data. Each step takes your DataFrame, transforms it, and passes it along. No unnecessary variables. No jumping around.

Just a clear, readable flow from raw data to final result.

In the next section, I’ll show you exactly what this looks like using a real example.

The “Before”: How Most of Us Write Pandas

To make this concrete, let’s say we want to answer a simple question:

Which product categories are generating the most revenue each month?

I pulled a small sales dataset with order details, product categories, prices, and dates. Nothing fancy.

```python
import pandas as pd

df = pd.read_csv("sales.csv")

print(df.head())
```

Output:

```
   order_id customer_id     product     category  quantity  price  order_date
0      1001        C001      Laptop  Electronics         1   1200  2023-01-05
1      1002        C002  Headphones  Electronics         2    150  2023-01-07
2      1003        C003    Sneakers      Fashion         1     80  2023-01-10
3      1004        C001     T-Shirt      Fashion         3     25  2023-01-12
4      1005        C004     Blender         Home         1     60  2023-01-15
```

Now, here is how I would have written this not long ago:

```python
# Create a new column for revenue
df["revenue"] = df["quantity"] * df["price"]

# Filter for orders from 2023 onwards
# (.copy() avoids a SettingWithCopyWarning when a column is added below)
df_filtered = df[df["order_date"] >= "2023-01-01"].copy()

# Convert order_date to datetime and extract month
df_filtered["month"] = pd.to_datetime(df_filtered["order_date"]).dt.to_period("M")

# Group by category and month, then sum revenue
grouped = df_filtered.groupby(["category", "month"])["revenue"].sum()

# Convert Series back to DataFrame
result = grouped.reset_index()

# Sort by revenue descending
result = result.sort_values(by="revenue", ascending=False)

print(result)
```

This works. You get your answer.

```
      category    month  revenue
1  Electronics  2023-02     2050
2  Electronics  2023-03     1590
0  Electronics  2023-01     1500
8         Home  2023-03      225
6         Home  2023-01      210
5      Fashion  2023-03      205
7         Home  2023-02      180
4      Fashion  2023-02      165
3      Fashion  2023-01      155
```

But there are a few problems that start to show up as your analysis grows.

First, the flow is hard to follow. You have to keep track of df, df_filtered, grouped, and result. Each variable represents a slightly different state of the data.

Second, the logic is scattered. The transformation is happening step by step, but not in a way that feels connected. You are mentally stitching things together as you read.

Third, it’s harder to reuse or test. If you want to tweak one part of the logic, you now have to trace where everything is being modified.

This is the kind of code that works fine today… but becomes painful when you come back to it a week later.

Now compare that to how the same logic looks when you start thinking in transformations instead of steps.

The “After”: When Everything Clicks

Now let’s solve the exact same problem again.

Same dataset. Same goal.

Which product categories are generating the most revenue each month?

Here’s what it looks like when you start thinking in transformations:

```python
result = (
    pd.read_csv("sales.csv")  # Start with raw data
    .assign(
        # Create revenue column
        revenue=lambda df: df["quantity"] * df["price"],
        # Convert order_date to datetime
        order_date=lambda df: pd.to_datetime(df["order_date"]),
        # Extract month from order_date
        month=lambda df: df["order_date"].dt.to_period("M"),
    )
    # Filter for orders from 2023 onwards
    .loc[lambda df: df["order_date"] >= "2023-01-01"]
    # Group by category and month, then sum revenue
    .groupby(["category", "month"], as_index=False)["revenue"]
    .sum()
    # Sort by revenue descending
    .sort_values(by="revenue", ascending=False)
)

print(result)
```

Same output. Completely different feel.

```
      category    month  revenue
1  Electronics  2023-02     2050
2  Electronics  2023-03     1590
0  Electronics  2023-01     1500
8         Home  2023-03      225
6         Home  2023-01      210
5      Fashion  2023-03      205
7         Home  2023-02      180
4      Fashion  2023-02      165
3      Fashion  2023-01      155
```

The first thing you notice is that everything flows. There is no jumping between variables or trying to remember what df_filtered or grouped meant.

Every step builds on the final one.

You start with the raw data, then:

- create revenue

- convert dates

- extract the month

- filter

- group

- aggregate

- sort

All in one continuous pipeline.

You can read it top to bottom and understand exactly what is happening to the data at each stage.

That’s the part that surprised me the most.

It’s not just shorter code. It’s clearer code.

And once you get used to this, going back to the old way feels… uncomfortable.

There are a couple of things happening here that make this work so well.

We are not just chaining methods. We are using a few specific tools that make chaining actually practical.

In the next section, let’s break these down.

Breaking Down the Pattern

When I first saw this style of Pandas code, it looked a bit intimidating.

Everything was chained together. No intermediate variables. A lot happening in a small space.

But once I slowed down and broke it into pieces, it started to make sense.

There are really just three ideas carrying everything here:

- method chaining

- assign()

- pipe()

Let’s go through them one by one.

Method Chaining (The Foundation)

At its core, method chaining is simple. Each step takes a DataFrame, applies a transformation, and returns a new DataFrame. That new DataFrame is immediately passed into the next step.

So instead of this:

```python
# step1, step2, step3 are placeholders for any DataFrame method
df = df.step1()
df = df.step2()
df = df.step3()
```

You do this:

```python
df = df.step1().step2().step3()
```

That’s really it.

But the impact is bigger than it looks.

It forces you to think in terms of flow. Each line becomes one transformation. You are not jumping around or storing temporary states. You are just moving forward.

That is why the code starts to feel more readable. You can follow the transformation from start to finish without holding multiple versions of the data in your head.
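To make the "returns a new DataFrame" part concrete, here is a minimal sketch. It assumes a tiny in-memory DataFrame built from two rows of the sample output instead of sales.csv, and the names sales and renamed are just for illustration.

```python
import pandas as pd

# Two rows taken from the sample output shown earlier
sales = pd.DataFrame({
    "product": ["Laptop", "Headphones"],
    "quantity": [1, 2],
    "price": [1200, 150],
})

# Each call returns a new DataFrame, which becomes the input of the next call
renamed = (
    sales
    .rename(columns={"price": "unit_price"})
    .sort_values(by="unit_price")
    .reset_index(drop=True)
)

print(list(renamed.columns))  # ['product', 'quantity', 'unit_price']
print(list(sales.columns))    # ['product', 'quantity', 'price']
```

The original sales frame keeps its price column because none of these methods modify their input by default, which is what makes it safe to string them together.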

assign(): Keeping Everything in the Flow

This is the one that really unlocked chaining for me.

Before this, anytime I wanted to create a new column, I would break the flow:

```python
df["revenue"] = df["quantity"] * df["price"]
```

That works, but it interrupts the pipeline.

assign() lets you do the same thing without breaking the chain:

```python
.assign(revenue=lambda df: df["quantity"] * df["price"])
```

At first, the lambda df: part felt weird.

But the idea is simple. You’re saying:

“Take the current DataFrame, and use it to define this new column.”

The key benefit is that everything stays in one place. You can see where the column is created and how it is used, all within the same flow.

It also encourages a cleaner style where transformations are grouped logically instead of scattered across the notebook.
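One detail that is easy to miss: inside a single assign() call, the keyword arguments are evaluated in order, so a later lambda can use a column created by an earlier one. A small sketch, assuming three rows from the sample output and the illustrative names orders and enriched:

```python
import pandas as pd

orders = pd.DataFrame({
    "quantity": [1, 2, 1],
    "price": [1200, 150, 80],
    "order_date": ["2023-01-05", "2023-01-07", "2023-01-10"],
})

enriched = orders.assign(
    revenue=lambda df: df["quantity"] * df["price"],
    order_date=lambda df: pd.to_datetime(df["order_date"]),
    # This lambda already sees the converted order_date from the line above
    month=lambda df: df["order_date"].dt.to_period("M"),
)

print(enriched["revenue"].tolist())   # [1200, 300, 80]
print("revenue" in orders.columns)    # False
```

The second print shows that assign() returned a new DataFrame and left orders untouched.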

pipe(): Where Things Start to Feel Powerful

pipe() is the one I ignored at first.

I thought, “I can already chain methods, why do I need this?”

Then I ran into a problem.

Some transformations are just too complex to fit neatly into a chain.

You either:

- write messy inline logic

- or break the chain entirely

That’s where pipe() comes in.

It lets you pass your DataFrame into a custom function without breaking the flow.

For example:

```python
def filter_high_value_orders(df):
    # Keep only orders whose revenue exceeds 500
    return df[df["revenue"] > 500]

df = (
    pd.read_csv("sales.csv")
    .assign(revenue=lambda df: df["quantity"] * df["price"])
    .pipe(filter_high_value_orders)
)
```

Now your logic is cleaner, reusable, and easier to test.

This is the point where things started to feel different for me.

Instead of writing long scripts, I was starting to build small, reusable transformation steps.

And that’s when it clicked.

This is not just about writing cleaner Pandas code. It’s about writing code that scales as your analysis gets more complex.
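One way these steps become more reusable is by taking parameters, since pipe() forwards any extra arguments to the function. The sketch below is only an illustration: filter_min_revenue and its min_revenue parameter are hypothetical names, and the data is three rows from the sample output.

```python
import pandas as pd

def filter_min_revenue(df, min_revenue=500):
    """Keep rows whose revenue is strictly above min_revenue."""
    return df.loc[df["revenue"] > min_revenue]

orders = pd.DataFrame({
    "product": ["Laptop", "Headphones", "Sneakers"],
    "quantity": [1, 2, 1],
    "price": [1200, 150, 80],
})

high_value = (
    orders
    .assign(revenue=lambda df: df["quantity"] * df["price"])
    # Extra keyword arguments are passed straight through to the function
    .pipe(filter_min_revenue, min_revenue=250)
)

print(high_value["product"].tolist())  # ['Laptop', 'Headphones']
```

The same function can then serve several analyses with different thresholds instead of being copied and edited each time.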

In the next section, I want to show how this changes the way you think about working with data entirely.

Thinking in Pipelines (The Real Upgrade)

Up until this point, it might feel like we just made the code look nicer.

But something deeper is happening here.

When you start using method chaining consistently, the way you think about working with data starts to change.

Before, my approach was very step-by-step.

I would look at a DataFrame and think:

“What do I do next?”

- Filter it.

- Modify it.

- Store it.

- Move on.

Each step felt a bit disconnected from the last.

But with method chaining, that question changes.

Now it becomes:

“What transformation comes next?”

That shift is small, but it changes how you structure everything.

You stop thinking in terms of isolated steps and start thinking in terms of a flow. A pipeline. Data comes in, gets transformed stage by stage, and produces an output.

And the code reflects that.

Each line is not just doing something. It is part of a sequence. A clear progression from raw data to insight.

This also makes your code easier to reason about.

If something breaks, you do not have to scan the entire notebook. You can look at the pipeline and ask:

- which transformation might be wrong?

- where did the data change in an unexpected way?

It becomes easier to debug because the logic is linear and visible.
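One way to answer those two questions without breaking the chain is a small pass-through helper dropped in with pipe(). This is a sketch, not a built-in Pandas feature: log_shape is a hypothetical helper, and the data is the five rows from the sample output.

```python
import pandas as pd

def log_shape(df, label):
    """Print the row and column count at this stage, then return the data unchanged."""
    print(f"{label}: {df.shape[0]} rows, {df.shape[1]} columns")
    return df

orders = pd.DataFrame({
    "category": ["Electronics", "Electronics", "Fashion", "Fashion", "Home"],
    "quantity": [1, 2, 1, 3, 1],
    "price": [1200, 150, 80, 25, 60],
})

summary = (
    orders
    .pipe(log_shape, "raw")
    .assign(revenue=lambda df: df["quantity"] * df["price"])
    .loc[lambda df: df["revenue"] > 100]
    .pipe(log_shape, "after filter")
    .groupby("category", as_index=False)["revenue"]
    .sum()
)
```

If a filter silently drops more rows than expected, the printed counts show which stage did it, and the helper lines can be deleted afterwards without touching the rest of the chain.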

Another thing I noticed is that it naturally pushes you toward better habits.

- You start writing smaller transformations.

- You start naming things more clearly.

- You start thinking about reuse without even trying.

And that’s where it starts to feel less like “just Pandas” and more like building actual data workflows.

At this point, you are not just analyzing data.

You are designing how data flows.

Real-World Refactor: From Messy to Clean

Let me show you how this actually plays out.

Instead of jumping straight from messy code to a perfect chain, I want to walk through how I would refactor this step by step. This is usually how it happens in real life anyway.

Step 1: The Starting Point (Messy but Works)

```python
df = pd.read_csv("sales.csv")  # Load dataset

# Create revenue column
df["revenue"] = df["quantity"] * df["price"]

# Filter orders from 2023 onwards
# (.copy() avoids a SettingWithCopyWarning when a column is added below)
df_filtered = df[df["order_date"] >= "2023-01-01"].copy()

# Convert order_date and extract month
df_filtered["month"] = pd.to_datetime(df_filtered["order_date"]).dt.to_period("M")

# Group by category and month, then sum revenue
grouped = df_filtered.groupby(["category", "month"])["revenue"].sum()

# Convert to DataFrame
result = grouped.reset_index()

# Sort results
result = result.sort_values(by="revenue", ascending=False)
```

Nothing wrong here. This is how most of us start.

But we can already see:

- too many intermediate variables

- transformations are scattered

- harder to follow as it grows

Step 2: Reduce Unnecessary Variables

First, remove variables that are not really needed.

```python
df = pd.read_csv("sales.csv")  # Load dataset

# Create new columns upfront
df["revenue"] = df["quantity"] * df["price"]
df["month"] = pd.to_datetime(df["order_date"]).dt.to_period("M")

result = (
    # Filter relevant rows
    df[df["order_date"] >= "2023-01-01"]
    # Aggregate revenue by category and month
    .groupby(["category", "month"])["revenue"]
    .sum()
    # Convert to DataFrame
    .reset_index()
    # Sort results
    .sort_values(by="revenue", ascending=False)
)
```

Already better. There are fewer moving parts, and some flow is starting to appear.

Step 3: Introduce Basic Chaining

Now we start chaining more deliberately.

```python
result = (
    pd.read_csv("sales.csv")  # Start with raw data
    .assign(
        # Create revenue column
        revenue=lambda df: df["quantity"] * df["price"],
        # Extract month from order_date
        month=lambda df: pd.to_datetime(df["order_date"]).dt.to_period("M"),
    )
    # Filter for recent orders
    .loc[lambda df: df["order_date"] >= "2023-01-01"]
    # Group and aggregate
    .groupby(["category", "month"])["revenue"]
    .sum()
    # Convert to DataFrame
    .reset_index()
    # Sort results
    .sort_values(by="revenue", ascending=False)
)
```

At this point, the flow is clear, transformations are grouped logically, and we are no longer jumping between variables.

Step 4: Clean It Up Further

Small tweaks make a big difference.

```python
result = (
    pd.read_csv("sales.csv")  # Load data
    .assign(
        # Create revenue
        revenue=lambda df: df["quantity"] * df["price"],
        # Ensure order_date is datetime
        order_date=lambda df: pd.to_datetime(df["order_date"]),
        # Extract month from order_date
        month=lambda df: df["order_date"].dt.to_period("M"),
    )
    # Filter relevant time range
    .loc[lambda df: df["order_date"] >= "2023-01-01"]
    # Aggregate revenue
    .groupby(["category", "month"], as_index=False)["revenue"]
    .sum()
    # Sort results
    .sort_values(by="revenue", ascending=False)
)
```

Now the date is converted once and reused, as_index=False removes the separate reset_index() step, and the structure is more consistent.

Step 5: When pipe() Becomes Useful

Let’s say the logic grows. Maybe we only care about high-revenue rows.

Instead of stuffing that logic into the chain, we extract it:

```python
def filter_high_revenue(df):
    # Keep only rows where revenue is above threshold
    return df[df["revenue"] > 500]
```

Now we plug it into the pipeline:

```python
result = (
    pd.read_csv("sales.csv")  # Load data
    .assign(
        # Create revenue
        revenue=lambda df: df["quantity"] * df["price"],
        # Convert and extract time features
        order_date=lambda df: pd.to_datetime(df["order_date"]),
        month=lambda df: df["order_date"].dt.to_period("M"),
    )
    # Apply custom transformation
    .pipe(filter_high_revenue)
    # Filter by date
    .loc[lambda df: df["order_date"] >= "2023-01-01"]
    # Aggregate results
    .groupby(["category", "month"], as_index=False)["revenue"]
    .sum()
    # Sort output
    .sort_values(by="revenue", ascending=False)
)
```

This is where it starts to feel different. Your code is not just a script. Now, it’s a sequence of reusable transformations.

What I like about this process is that you do not need to jump straight to the final version.

You can evolve your code gradually.

- Start messy.

- Reduce variables.

- Introduce chaining.

- Extract logic when needed.

That’s how this pattern actually sticks.
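When refactoring gradually like this, it helps to confirm that each new version still produces the same result as the old one. Here is one possible check, sketched with pd.testing.assert_frame_equal on a simplified version of the analysis (grouping by category only). The five rows come from the sample output, and the variable names are illustrative.

```python
import pandas as pd

raw = pd.DataFrame({
    "category": ["Electronics", "Electronics", "Fashion", "Fashion", "Home"],
    "quantity": [1, 2, 1, 3, 1],
    "price": [1200, 150, 80, 25, 60],
})

# Step-by-step version
step_df = raw.copy()
step_df["revenue"] = step_df["quantity"] * step_df["price"]
grouped = step_df.groupby("category")["revenue"].sum()
step_result = grouped.reset_index().sort_values(by="revenue", ascending=False)

# Chained version
chain_result = (
    raw
    .assign(revenue=lambda df: df["quantity"] * df["price"])
    .groupby("category", as_index=False)["revenue"]
    .sum()
    .sort_values(by="revenue", ascending=False)
)

# Raises an AssertionError if the two results differ in values, columns, or index
pd.testing.assert_frame_equal(step_result, chain_result)
```

Keeping the old version around until this check passes makes each refactoring step low-risk.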

Next, let’s talk about a few mistakes I made while learning this so you do not run into the same issues.

Common Mistakes (I Made Most of These)

When I started using method chaining, I definitely overdid it.

Everything felt cleaner, so I tried to force everything into a chain. That led to some… questionable code.

Here are a few mistakes I ran into so you do not have to.

1. Over-Chaining Everything

At some point, I thought longer chains = better code.

Not true.

```python
# This gets hard to read very quickly
df = (
    df
    .assign(...)
    .loc[...]
    .groupby(...)
    .agg(...)
    .reset_index()
    .rename(...)
    .sort_values(...)
    .query(...)
)
```

Yes, it’s technically clean. But now it’s doing too much in one place.

Fix:

- Break your chain when it starts to feel dense.

- Group related transformations together.

- Split logically different steps.

- Think readability first, not cleverness.
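As a rough sketch of what splitting can look like, the example below breaks one long chain into two shorter ones with a named intermediate result. The stage names prepared and report, and the choice of where to split, are my own assumptions for illustration. The data is the five rows from the sample output.

```python
import pandas as pd

orders = pd.DataFrame({
    "category": ["Electronics", "Electronics", "Fashion", "Fashion", "Home"],
    "quantity": [1, 2, 1, 3, 1],
    "price": [1200, 150, 80, 25, 60],
})

# Stage 1: row-level preparation
prepared = (
    orders
    .assign(revenue=lambda df: df["quantity"] * df["price"])
    .loc[lambda df: df["revenue"] > 50]
)

# Stage 2: aggregation and presentation
report = (
    prepared
    .groupby("category", as_index=False)
    .agg(total_revenue=("revenue", "sum"))
    .sort_values(by="total_revenue", ascending=False)
)

print(report["total_revenue"].tolist())  # [1500, 155, 60]
```

One named intermediate per logical stage is very different from df1, df2, final_final: the name describes what the data is at that point, and each chain stays short enough to read in one pass.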

2. Forcing Logic Into One Line

I used to cram complex logic into assign() or loc[] just to keep the chain going.

That usually makes things worse.

```python
# Fragment of a longer chain; assumes `import numpy as np`
.assign(
    revenue_flag=lambda df: np.where(
        (df["quantity"] * df["price"] > 500) & (df["category"] == "Electronics"),
        "High",
        "Low",
    )
)
```

This works, but it’s not very readable.

Fix:

If the logic is complex, extract it.

```python
import numpy as np

def add_revenue_flag(df):
    # Flag Electronics orders with revenue above 500 as "High", everything else as "Low"
    is_high = (df["quantity"] * df["price"] > 500) & (df["category"] == "Electronics")
    # Return a new DataFrame instead of modifying the caller's df in place
    return df.assign(revenue_flag=np.where(is_high, "High", "Low"))

df = df.pipe(add_revenue_flag)
```

Cleaner. Easier to test. Easier to reuse.
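To show what "easier to test" can mean in practice, here is a minimal sketch of a test for the extracted function. The function is restated in a non-mutating form so the block runs on its own, and the three sample rows are made up purely to cover each branch of the condition.

```python
import numpy as np
import pandas as pd

def add_revenue_flag(df):
    """Label Electronics rows with revenue above 500 as "High", everything else "Low"."""
    is_high = (df["quantity"] * df["price"] > 500) & (df["category"] == "Electronics")
    return df.assign(revenue_flag=np.where(is_high, "High", "Low"))

def test_add_revenue_flag():
    # Illustrative rows: high Electronics, low Electronics, high non-Electronics
    sample = pd.DataFrame({
        "category": ["Electronics", "Electronics", "Fashion"],
        "quantity": [1, 2, 10],
        "price": [1200, 150, 80],
    })
    flagged = sample.pipe(add_revenue_flag)
    assert flagged["revenue_flag"].tolist() == ["High", "Low", "Low"]
    assert "revenue_flag" not in sample.columns  # input left untouched

test_add_revenue_flag()
```

Because the function takes a DataFrame and returns a DataFrame, the test needs no CSV file and no surrounding pipeline.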

3. Ignoring pipe() for Too Long

I avoided pipe() at first because it felt unnecessary. But without it, you hit a ceiling.

You either:

- break your chain

- or write messy inline logic

Fix:

- Use pipe() as soon as your logic stops being simple.

- It’s what turns your code from a script into something modular.

4. Losing Readability With Poor Naming

When you start using custom functions with pipe(), naming matters a lot.

Bad:

```python
def transform(df): ...
```

Better:

```python
def filter_high_revenue(df): ...
```

Now your pipeline reads like a story:

```python
.pipe(filter_high_revenue)
```

That small change makes a big difference.

5. Thinking This Is About Shorter Code

This one took me a while to realize. Method chaining is not about writing fewer lines. It’s about writing code that is easier to read, reason about, and come back to later.

Sometimes the chained version is longer. That’s fine. If it is clearer, it’s better.

Let’s wrap this up and tie it back to the “intermediate” idea.

Conclusion: Leveling Up Your Pandas Game

If you’ve followed along, you’ve seen a small shift with a big impact.

By thinking in transformations instead of steps, using method chaining, assign(), and pipe(), your code stops being just a collection of lines and becomes a clear, readable flow.

Here’s what changes when you internalize this pattern:

- You can read your code top to bottom without getting lost.

- You can reuse transformations easily, making your notebooks more modular.

- You can debug and test without tracing dozens of intermediate variables.

- You start thinking in pipelines, not just steps.

This is exactly what separates a beginner from an intermediate Pandas user.

You’re not just “making it work.” You’re designing your analysis in a way that scales, is maintainable, and looks good to anyone who reads it, even future you.

Try It Yourself

Pick a messy notebook you’ve been working on and refactor just one part using method chaining.

- Start with assign() for new columns

- Use loc[] to filter

- Introduce pipe() for any custom logic
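Of those three, loc[] is the one not broken down earlier, so here is a short sketch of why it takes a lambda inside a chain. It assumes three rows from the sample output, and the names orders and expensive are illustrative.

```python
import pandas as pd

orders = pd.DataFrame({
    "product": ["Laptop", "Headphones", "Sneakers"],
    "quantity": [1, 2, 1],
    "price": [1200, 150, 80],
})

expensive = (
    orders
    .assign(revenue=lambda df: df["quantity"] * df["price"])
    # "revenue" exists only on the intermediate frame, not on `orders`,
    # so the row filter is a callable that receives that intermediate frame
    .loc[lambda df: df["revenue"] >= 300, ["product", "revenue"]]
)

print(expensive["product"].tolist())  # ['Laptop', 'Headphones']
```

Writing orders["revenue"] in the filter would fail here, because the column was created mid-chain and the intermediate DataFrame has no variable name to refer to.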

You’ll be surprised how much clearer your notebook becomes, almost immediately.

That’s it. You’ve just unlocked intermediate Pandas.

The next step? Keep practicing, build your own pipelines, and notice how your thinking about data transforms along with your code.