Devices in a broad range of edge AI applications are increasingly at risk of hacking or tampering, with the stakes varying greatly depending on how much the device can impact and interact with human life. Design methods and protection techniques must now be included up front in the design cycle for optimal protection of consumers and companies as the quantum threat looms.

In today’s factories, homes, and streets, AI-enabled, Wi-Fi-connected, and mobile devices are promising ever greater convenience and optimization of routine tasks. These edge devices include the Internet of Things (IoT), home appliances, industrial devices, robots, and vehicles. Wherever they are used, edge AI devices are vulnerable to cyberattacks, malware, counterfeits, and more. Data security is also a big concern as consumers and companies feed increasing amounts of personal and proprietary data into hungry algorithms.

In the home, IoT includes the toaster found in almost every kitchen, which could run simple algorithms to determine if it’s achieving the right amount of browning on the bread. “But what if a hacker were to manipulate the weights in the model so that brown toast is no longer the desired output, and black toast is the desired output?” said John Weil, vice president of IoT and edge AI processors at Synaptics. “From a security point of view, IoT has been exposed for years now. You take IoT, and add the fact that this device is connected, so you add a model that is no longer a tightly coupled embedded model where you put in an input, and you get an output. It’s now a model that makes decisions on the fly, and security becomes paramount.”

Today’s home assistant hubs, such as Alexa and Nest, offer convenience, but that convenience comes at a price. “Security is a major topic, and privacy is a major topic,” said Thomas Rosteck, division president of connected secure systems at Infineon Technologies. “Why does everything that I say in my house need to be transferred to wherever, hoping it’s only used for what I want it to be used for? This is a privacy concern, and data in motion is always at risk.”

Others agree privacy is a concern, whether in smart glasses, cameras, or a speaker microphone. “People are afraid that their privacy or personal data has been taken,” said Michael Pan, senior director of engineering at Synaptics. “This is the same thing as the camera or speaker microphone in our house. We need some kind of morals in the AI industry. This is one thing. The second thing is that we need to have data control. We need to make users or end consumers understand how the data is being used, stored, and inferenced. In our edge AI products, we have secure inferencing, so I am not taking your raw data to do the AI inferencing. The AI inferencing results are not just sent out to the cloud in an open way that everybody can tap into. This is another beauty about edge AI, for example, in live translation. There’s no network connection to it.”

Edge AI security design

Designers of consumer and industrial IoT chips can include a secure root of trust, but there’s only so much they can do once the chip goes into the end-product edge device.

“We tell customers, if you follow this mindset and you use the security blocks in this way, then you have a pretty good chance of securing the overall product,” said Synaptics’ Weil. “An embedded edge AI MCU is the brain or the heart of the system, and the peripheral chips are the area where people will attack. Typically, wireless chips are one of the biggest threats. The MCU would be capable of saying, ‘No, leave me alone.’ The root of trust security is going to preserve the integrity of the original product. That’s the dream.”

Therefore, IoT and edge AI processor design teams need to build in security from day one. “It has to be at the DNA level,” said Weil. “We basically started with a software mindset and figured out how to make the hardware fit it.”

This means software engineers must work with hardware engineers from the get-go. “In the design stage, we have to align the hardware,” said Synaptics’ Pan. “For all of the register values, how do we do the pin locks? How do we do the strap tables? Our latest processor has three different cores, three different subsystems. We have the security subsystem, the M3, which handles the initial secure boot, so I need to make sure my device is securely booted. The M3 hands off the boot chain, or responsibility, to the M52, which runs our MCU. The MCU has a different set of subsystems that the software needs to drive as well. The M52 runs a real-time OS. The M52 will drive a dual-core A55 subsystem that runs Linux, and that Linux drives the rest of the graphics.”
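The hand-off Pan describes is a chain of trust: each stage checks the next one before passing control to it. The following is a minimal sketch, assuming each stage holds a reference SHA-256 digest for the next image. Production secure boot typically verifies a cryptographic signature against a public key anchored in boot ROM or fuses, which this simplification omits, and the stage names are hypothetical labels rather than Synaptics identifiers.

```python
import hashlib
import hmac
from dataclasses import dataclass


@dataclass
class BootStage:
    name: str              # hypothetical label for a boot stage
    image: bytes           # firmware image as loaded from flash
    expected_sha256: str   # reference digest held by the previous (trusted) stage


def verify_chain(stages: list[BootStage]) -> list[str]:
    """Check each stage's image before handing control to it.

    Returns the names of the stages that were allowed to run, in order.
    Raises RuntimeError at the first mismatch, so nothing after a
    tampered stage ever executes.
    """
    booted: list[str] = []
    for stage in stages:
        digest = hashlib.sha256(stage.image).hexdigest()
        # constant-time comparison avoids leaking how many characters matched
        if not hmac.compare_digest(digest, stage.expected_sha256):
            raise RuntimeError(f"integrity check failed at {stage.name}; boot halted")
        booted.append(stage.name)  # hand-off happens only after the check passes
    return booted


if __name__ == "__main__":
    images = {
        "security-subsystem": b"secure boot firmware",
        "rtos-mcu": b"real-time OS image",
        "linux-apps": b"linux kernel + rootfs",
    }
    chain = [BootStage(n, img, hashlib.sha256(img).hexdigest()) for n, img in images.items()]
    print(verify_chain(chain))  # ['security-subsystem', 'rtos-mcu', 'linux-apps']

    chain[2].image = b"tampered kernel"
    try:
        verify_chain(chain)
    except RuntimeError as err:
        print(err)  # integrity check failed at linux-apps; boot halted
```

Halting at the first failed check is the key design choice: a compromised stage never gets the chance to vouch for the stages after it.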

Designers must build layers of security to make the systems robust against intrusion. “Going forward, all our processor and connectivity technology will be PSA Level 3 compliant, and we are working with software partners to enable us to do that,” said Ananda Roy, senior product manager of low-power edge AI at Synaptics.

Devices can then be secured by the MCU, a secure element, or security services. IP protection is also important. “Many of our customers who are using AI are investing a lot of money into their AI models, and they want to have this IP protected,” noted Infineon’s Rosteck.

Fig. 1: Edge AI MCUs need to be built with security in the DNA. Source: Semiconductor Engineering/Infineon OktoberTech

An edge AI processor with security functionality built into it gives OEMs the possibility to make their end device secure. “But later on, more work needs to be done by engineers, not only on the hardware side but also on the software side and also on the connectivity side,” said Robert Otreba, co-founder and CEO of Grinn. “Security needs to be implemented on the whole chain of the system.”

Taking cues from the automotive sector

For physical AI, many of the safety and security requirements carry over from automotive. “As you’re building these SoCs for automotive, and you’re adding that robustness, you have to also incorporate that for a lot of the applications on the edge,” said Pallavi Sharma, director of product management at Imagination Technologies. “Especially with autonomous driving, they realize that there is this need for making sure that it’s protected from cyberattacks and hacks, and that’s going to be very similar to on the edge, because we’re going to have edge devices that are connected, processing data, and trying to make decisions on their own in an autonomous way.”

However, security risks increase when IoT and physical AI are connected to large language models. “In any physical AI application or robotics, to ensure the safety of people interacting, LLM systems must be trained from the start with the goal of safety and security embedded,” said Scott Best, senior technical director, Security IP at Rambus. “Asimov’s ‘Laws of Robotics’ may seem quaint, but it is dangerously naïve to assume that any safety/security considerations will fortuitously emerge in a purely mechanical, goal-oriented, agentic system.”

Both the hardware and the LLMs are vulnerable. “If the LLM is subject to tampering, it can make poor judgments about the information that it has or even produce the wrong answers because it’s being either trained to do it, or its behavior is being modified through its commands and instructions,” said Dana Neustadter, senior director of product management at Synopsys.

Protection techniques

Industrial IoT, consumer IoT, physical AI such as robotics, and automotive all share common attack vectors, including multi-modal sensors, wireless connectivity, and AI/LLMs processing data locally or sending it back to the cloud.

“That whole thing is an attack surface,” said Neustadter. “The first thing to protect is the integrity of data, which always involves cryptography such as message authentication codes (MACs) and hashing; those are crucial. A ton of that information is confidential. Another crucial aspect is that every device participating in the network must be authenticated before it’s allowed in. You need to know exactly what the device is, its identity, and where it’s supposed to be. You can do all kinds of logical checks if you know the identity of something, because you can ask questions about where it is. Is it coming at me from portals that it shouldn’t be? Or is this coming at me from a new network direction? These are all distinct networks, and even though you integrate them to be one large network, they have their own characteristics, and so you need to do all those logical kinds of checks.”
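Once a device's identity is known, the logical checks Neustadter mentions become straightforward comparisons. The sketch below assumes the identity has already been authenticated and compares a connection attempt against a hypothetical registry recording where each device is expected to connect from and which ports it uses. The device names, address ranges, and ports are invented for illustration.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class DeviceProfile:
    device_id: str
    allowed_networks: tuple[str, ...]  # CIDR blocks the device normally connects from
    allowed_ports: frozenset[int]      # destination ports the device legitimately uses


@dataclass(frozen=True)
class ConnectionAttempt:
    device_id: str    # identity assumed to be already authenticated
    source_ip: str
    dest_port: int


def logical_checks(attempt: ConnectionAttempt, registry: dict[str, DeviceProfile]) -> list[str]:
    """Return policy findings; an empty list means the attempt matches the profile."""
    profile = registry.get(attempt.device_id)
    if profile is None:
        return ["unknown identity"]
    findings = []
    src = ipaddress.ip_address(attempt.source_ip)
    # "Is this coming at me from a new network direction?"
    if not any(src in ipaddress.ip_network(net) for net in profile.allowed_networks):
        findings.append("unexpected network direction")
    # "Is it coming at me from ports that it shouldn't be?"
    if attempt.dest_port not in profile.allowed_ports:
        findings.append("unexpected port")
    return findings


if __name__ == "__main__":
    # hypothetical smart meter that should only speak MQTT over TLS (port 8883) from the plant network
    registry = {"meter-17": DeviceProfile("meter-17", ("10.20.0.0/16",), frozenset({8883}))}
    print(logical_checks(ConnectionAttempt("meter-17", "10.20.4.9", 8883), registry))
    print(logical_checks(ConnectionAttempt("meter-17", "192.168.1.5", 23), registry))
```

The first call yields no findings, while the second flags both an unexpected network and an unexpected port, either of which a policy engine could act on.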

A cryptographic identifier needs to be uniquely tied to that device. “Then you can identify it, and make it prove to you who it is,” said Synopsys’ Neustadter. “Once you’ve done that, then you can allow it into the network by negotiating the parameters for what its network security is going to look like. Then there’s the whole policy enforcement and following it over time, making sure that it doesn’t deviate from the behaviors and permissions tied to its unique identifier. That unique identifier, where you start the security in the system, also applies to other security solutions. It’s becoming very, very critical to protect it against cloning and tampering. It’s got to be very, very secure, using capabilities such as a physically unclonable function (PUF), as an example.”
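"Make it prove to you who it is" is commonly implemented as a challenge-response exchange. This minimal sketch assumes a symmetric per-device key shared with the verifier at enrollment and uses HMAC-SHA256. On real silicon that key might be regenerated from a PUF at power-up, and many deployments use asymmetric keys and certificates so the verifier never holds the device secret. The `Device` and `Verifier` classes are hypothetical.

```python
import hashlib
import hmac
import secrets


class Device:
    """Stand-in for a device whose unique key stays inside the chip.

    Here the key is random bytes; a PUF-derived key is assumed in real hardware.
    """

    def __init__(self, device_id: str):
        self.device_id = device_id
        self._key = secrets.token_bytes(32)

    def enrollment_record(self) -> tuple[str, bytes]:
        # exported once, in a trusted factory step, to the verifier's database
        return self.device_id, self._key

    def respond(self, challenge: bytes) -> bytes:
        return hmac.new(self._key, challenge, hashlib.sha256).digest()


class Verifier:
    def __init__(self):
        self._enrolled: dict[str, bytes] = {}

    def enroll(self, device_id: str, key: bytes) -> None:
        self._enrolled[device_id] = key

    def challenge(self) -> bytes:
        return secrets.token_bytes(16)  # fresh nonce, so old responses cannot be replayed

    def admit(self, device_id: str, challenge: bytes, response: bytes) -> bool:
        key = self._enrolled.get(device_id)
        if key is None:
            return False  # unknown identity
        expected = hmac.new(key, challenge, hashlib.sha256).digest()
        return hmac.compare_digest(expected, response)


if __name__ == "__main__":
    dev, ver = Device("sensor-42"), Verifier()
    ver.enroll(*dev.enrollment_record())
    c = ver.challenge()
    print(ver.admit("sensor-42", c, dev.respond(c)))   # True
    clone = Device("sensor-42")                         # same ID, different key
    c2 = ver.challenge()
    print(ver.admit("sensor-42", c2, clone.respond(c2)))  # False
```

A clone that copies the identifier but not the key fails, which is why protecting the key against extraction (the role the PUF plays) matters as much as the protocol.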

Fig. 2: IoT physically unclonable function. Source: Synopsys.

Also, according to Rambus, the Secure Hash Algorithm (SHA-x) family, together with hash-based message authentication codes (HMAC), can be used with symmetric keys, and the Elliptic Curve Digital Signature Algorithm (ECDSA) with asymmetric keys.
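To illustrate the symmetric case, and the manipulated-weights toaster scenario Weil describes earlier, the sketch below tags a serialized weights blob with HMAC-SHA256 at provisioning time and refuses to load it if the tag no longer verifies. The float32 serialization and function names are assumptions. The asymmetric ECDSA variant would need a third-party library such as `cryptography` and is not shown.

```python
import hashlib
import hmac
import struct


def pack_weights(weights: list[float]) -> bytes:
    """Serialize model weights as little-endian float32 (illustrative format)."""
    return struct.pack(f"<{len(weights)}f", *weights)


def tag_model(key: bytes, blob: bytes) -> bytes:
    """HMAC-SHA256 over the serialized weights, computed when the model is provisioned."""
    return hmac.new(key, blob, hashlib.sha256).digest()


def load_model(key: bytes, blob: bytes, tag: bytes) -> list[float]:
    """Refuse to load weights whose tag does not verify."""
    if not hmac.compare_digest(tag_model(key, blob), tag):
        raise ValueError("model weights failed integrity check")
    count = len(blob) // 4  # 4 bytes per float32
    return list(struct.unpack(f"<{count}f", blob))


if __name__ == "__main__":
    key = bytes(range(32))  # demo key only; a real key lives in secure storage
    blob = pack_weights([0.5, 0.25, -1.0])
    tag = tag_model(key, blob)
    print(load_model(key, blob, tag))  # [0.5, 0.25, -1.0]
    try:
        load_model(key, pack_weights([0.5, 0.25, 1.0]), tag)  # one weight flipped
    except ValueError as err:
        print(err)
```

Because the check runs before any weight reaches the inference engine, a flipped sign or altered value is rejected rather than silently changing the model's output.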

Industrial IoT is at extra risk

Built-in hardware security and cybersecurity best practices are especially important for the industrial IoT sector, as a breach or failure can take out an entire plant or even a network of plants across multiple countries.

“In industrial IoT, the consequence of failure could be catastrophic,” said Hezi Saar, executive director of product management, mobile, automotive, and consumer IP at Synopsys. “There is the potential for physical damage, massive financial loss, environmental hazard, or threat to human life and safety in critical infrastructure. That means availability and integrity are paramount. Security protocols must be highly stringent to protect physical processes and prevent unauthorized control or disruption.”

Attacks can be focused on either getting corporate data out, corrupting corporate data, or just holding it hostage. “Attackers can essentially encrypt all the data and threaten to throw away the keys if you don’t pay off,” Synopsys’ Neustadter explained. “Sometimes it comes in because there are vulnerable components to the system. There have been some big, big attacks that were the result of crucial security suppliers supplying products that weren’t actually secure and leaving all of their customers vulnerable. The quintessential incident is the SolarWinds attacks. A lot of it was just extremely poor security practices around how they deployed software and how they protected their updates.”

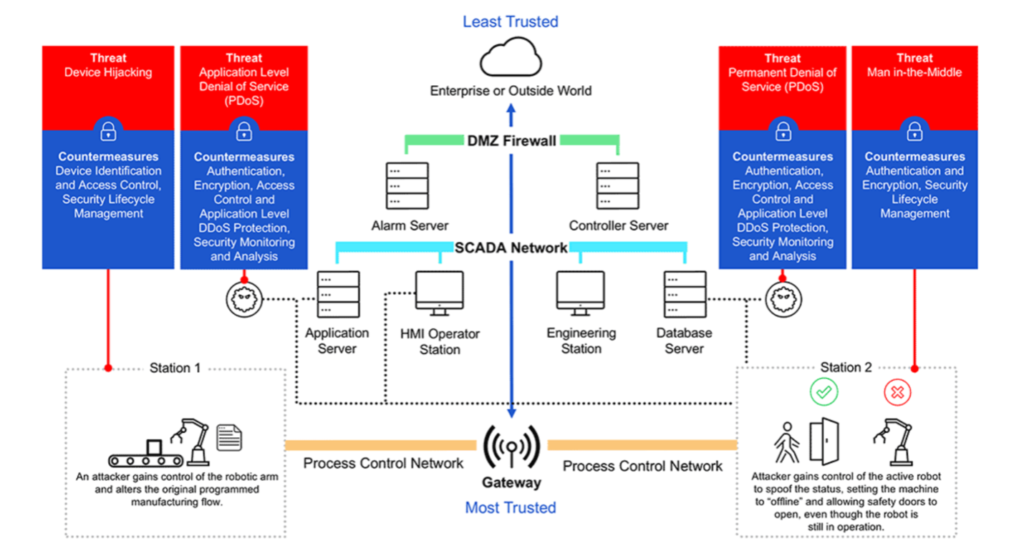

A summary of the types of security attacks on industrial IoT, according to Rambus:

- Man-in-the-middle: In an IIoT scenario, an attacker could assume control of a smart actuator and knock an industrial robot out of its designated lane and speed limit – potentially damaging an assembly line or injuring operators.

- Device hijacking: In an IIoT scenario, a hijacker could assume control of a smart meter and use the compromised device to launch ransomware attacks against Energy Management Systems (EMSs) or illegally siphon unmetered power lines.

- Distributed Denial of Service (DDoS): Traffic flooding the target originates from many sources, so the attack cannot be stopped by simply blocking a single source. In IIoT, DDoS attacks can cause serious disruptions for utility services and manufacturing facilities.

- Permanent Denial of Service (PDoS): Malware such as BrickerBot, which was coded to exploit hard-coded passwords in IoT devices and cause permanent denial of service, can be used to disable critical equipment on a factory floor, in a wastewater treatment plant, or in an electrical substation.

Fig. 3: Industrial IoT: Threats and Countermeasures. Source: Rambus
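BrickerBot worked because many devices shipped with hard-coded passwords. One countermeasure, sketched here under stated assumptions, is to refuse known factory defaults and provision a unique random secret per device, storing only a salted PBKDF2 hash on the device. The deny list, iteration count, and function names are illustrative and are not taken from Rambus.

```python
import hashlib
import hmac
import secrets

# Illustrative sample only; a real deny list of factory defaults would be much longer.
KNOWN_DEFAULTS = {("admin", "admin"), ("root", "root"), ("admin", "1234"), ("root", "")}
ITERATIONS = 600_000  # illustrative PBKDF2 work factor


def credential_is_acceptable(username: str, password: str, min_length: int = 12) -> bool:
    """Reject factory-default pairs and short passwords."""
    if (username, password) in KNOWN_DEFAULTS:
        return False
    return len(password) >= min_length


def provision_unique_credential() -> tuple[str, bytes, bytes]:
    """Create a random per-device password; the device keeps only (salt, hash)."""
    password = secrets.token_urlsafe(18)  # 24 URL-safe characters
    salt = secrets.token_bytes(16)
    digest = hashlib.pbkdf2_hmac("sha256", password.encode(), salt, ITERATIONS)
    return password, salt, digest


def verify_password(password: str, salt: bytes, stored: bytes) -> bool:
    candidate = hashlib.pbkdf2_hmac("sha256", password.encode(), salt, ITERATIONS)
    return hmac.compare_digest(candidate, stored)
```

Since every unit gets its own secret, a single leaked or guessed password no longer unlocks an entire product line, which is the property BrickerBot-style malware depends on.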

If a violation is detected, a broad range of actions formulated in the context of an overall system security policy should be executed, such as revoking device credentials or quarantining an IoT device based on anomalous behavior. It is critical to ensure that endpoint devices are secured from possible tampering and data manipulation, which could result in the incorrect reporting of events.
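A minimal sketch of mapping detections to policy actions follows. The rules are assumptions chosen for illustration: a credential seen active from two places at once suggests cloning and triggers revocation, while a message rate far outside the device's own baseline (a simple z-score test) triggers quarantine. Real deployments would use richer anomaly models and more actions.

```python
import statistics
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    QUARANTINE = "quarantine"  # isolate the device on the network, keep it for forensics
    REVOKE = "revoke"          # invalidate the device's credentials outright


def assess(baseline_rates: list[float], observed_rate: float,
           credential_seen_elsewhere: bool, z_limit: float = 3.0) -> Action:
    """Apply a hypothetical system security policy to one observation window."""
    if credential_seen_elsewhere:
        return Action.REVOKE  # same identity active twice suggests a cloned credential
    mean = statistics.mean(baseline_rates)
    stdev = statistics.stdev(baseline_rates)  # needs at least two baseline samples
    if stdev == 0:
        return Action.ALLOW if observed_rate == mean else Action.QUARANTINE
    z = (observed_rate - mean) / stdev
    return Action.QUARANTINE if abs(z) > z_limit else Action.ALLOW


if __name__ == "__main__":
    baseline = [10, 12, 11, 9, 10]         # messages per minute during normal operation
    print(assess(baseline, 11, False))     # Action.ALLOW
    print(assess(baseline, 50, False))     # Action.QUARANTINE
    print(assess(baseline, 11, True))      # Action.REVOKE
```

Quarantine is the softer response because anomalous traffic can have benign causes; revocation is reserved for evidence that the credential itself is compromised.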

Security lifecycle management is also key. Rapid over-the-air device key replacement during cyber disaster recovery ensures minimal service disruption. In addition, secure device decommissioning ensures that scrapped devices will not be repurposed and exploited to connect to a service without authorization.
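The two lifecycle operations above can be sketched from the backend's point of view: rotating a device key, with the new key assumed to be delivered over the air, so the old one stops working immediately, and decommissioning a device so it can never authenticate again. For brevity `accepts` compares a presented key directly; a real device would prove possession through a challenge-response exchange rather than transmit its key. All names are hypothetical.

```python
import secrets
from dataclasses import dataclass


@dataclass
class DeviceRecord:
    key: bytes
    epoch: int = 0               # increments on every rotation
    decommissioned: bool = False


class KeyLifecycle:
    """Hypothetical backend-side view of per-device key lifecycle."""

    def __init__(self):
        self._devices: dict[str, DeviceRecord] = {}

    def provision(self, device_id: str) -> bytes:
        key = secrets.token_bytes(32)
        self._devices[device_id] = DeviceRecord(key=key)
        return key

    def rotate(self, device_id: str) -> tuple[int, bytes]:
        """Replace the key (e.g. during disaster recovery); the old key stops working."""
        rec = self._devices[device_id]
        if rec.decommissioned:
            raise PermissionError("device is decommissioned")
        rec.key = secrets.token_bytes(32)
        rec.epoch += 1
        return rec.epoch, rec.key

    def decommission(self, device_id: str) -> None:
        rec = self._devices[device_id]
        rec.decommissioned = True
        rec.key = b""  # forget the secret so a scrapped unit can never re-authenticate

    def accepts(self, device_id: str, presented_key: bytes) -> bool:
        rec = self._devices.get(device_id)
        if rec is None or rec.decommissioned:
            return False
        return secrets.compare_digest(rec.key, presented_key)
```

Keeping the decommissioned record, rather than deleting it, means a scrapped unit's identifier cannot simply be re-provisioned by someone who finds it.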

A particular challenge in industrial IoT is that companies typically operate a wide mix of legacy and cutting-edge systems and equipment.

“One of the biggest pain points in industrial IoT integration is the fragmentation of data across silos and the inconsistent formats that come from mixing old and new systems,” said Amol Borkar, automotive segment senior director, DSP product management and marketing at Cadence. “Legacy machines often produce data in proprietary formats or through protocols that were never intended for cloud or AI-driven analytics, while modern sensors and robotics generate high-frequency, multi-modal data streams — think vibration readings, thermal images, and predictive insights — all in different structures. This creates a messy data landscape where interoperability becomes a nightmare. If these silos aren’t broken down, you end up with isolated pockets of information that can’t talk to each other, making holistic decision-making nearly impossible.”

To break down these isolated systems, Borkar said a step-by-step approach should be taken. “First, they can use middleware or edge gateways to convert old, proprietary protocols into modern standards like MQTT (message queuing telemetry transport) or OPC UA (open platform communications unified architecture),” he explained. “After that, they should set up an industrial data platform that brings all kinds of data together under a unified model, so everything fits into the same framework. With this foundation in place, AI and analytics tools can work across different data sources, providing valuable insights without losing context.”
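Borkar's gateway step can be sketched as follows: a frame from a legacy machine is decoded and re-emitted as a self-describing record under a unified model, paired with an MQTT-style topic. The binary frame layout, sensor codes, and topic scheme are invented for illustration. Actually publishing to a broker, for example with an MQTT client library, is left out.

```python
import json
import struct
from datetime import datetime, timezone

# Hypothetical legacy frame: big-endian uint16 machine id, uint8 sensor code,
# int32 reading in hundredths of the sensor's unit (7 bytes, no padding).
LEGACY_FRAME = struct.Struct(">HBi")
SENSOR_CODES = {1: ("temperature", "degC"), 2: ("vibration", "mm/s")}


def normalize(frame: bytes, site: str, received_at: datetime) -> tuple[str, str]:
    """Translate one legacy frame into an MQTT topic and a JSON payload in a unified model."""
    machine_id, code, raw = LEGACY_FRAME.unpack(frame)
    quantity, unit = SENSOR_CODES[code]  # unknown codes raise KeyError for upstream handling
    record = {
        "site": site,
        "asset": f"machine-{machine_id}",
        "quantity": quantity,
        "value": raw / 100,
        "unit": unit,
        "timestamp": received_at.isoformat(),
    }
    topic = f"{site}/machine-{machine_id}/{quantity}"
    return topic, json.dumps(record, sort_keys=True)


if __name__ == "__main__":
    frame = LEGACY_FRAME.pack(7, 1, 2345)  # machine 7 reports 23.45 degC
    ts = datetime(2024, 1, 1, tzinfo=timezone.utc)
    topic, payload = normalize(frame, "plant-a", ts)
    print(topic)    # plant-a/machine-7/temperature
    print(payload)
```

Making units and asset names explicit at the gateway is what lets downstream analytics treat data from old and new equipment the same way.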

Finally, interoperability frameworks and APIs allow robotics, sensors, and business systems to share information smoothly. “But it’s not just about having the right technology,” said Borkar. “It’s also crucial to have strong governance in place to ensure the data is high-quality, secure, and scalable.”

Evolving threats and regulations

Because the threat landscape keeps changing, new regulations are being rolled out in Europe, the U.S., and Singapore, requiring devices to provide a certain level of security and cyber resilience. “The semiconductors are the foundation for the security levels,” said Infineon’s Rosteck. PSA Certified, an industry security standard, defines four levels. The first two levels require relatively little physical protection, while Levels 3 and 4 also require protection against physical attacks. “It’s an industry-agreed standard against which you have to attest that your product has a certain resilience, and this is made for microcontrollers as a main target. If you have PSA Level 4, it’s way easier to achieve the other requirements.”

The EU’s Cyber Resilience Act (CRA) applies its security requirements both to products that connect physically via hardware interfaces and to products that connect logically, such as via network sockets, pipes, files, application programming interfaces, or any other type of software interface.

Meanwhile, the Security Evaluation Standard for IoT Platforms (SESIP) aims to make it easier for device manufacturers to comply. “By using certified components with in-built security assurances, device makers can integrate, manage, and demonstrate security without incurring additional cost, effort, or time-to-market,” according to its website.

Fig. 4: Different levels of CRA, PSA, and SESIP security in various edge product categories. Source: Infineon

A key part of complying with regulations is that devices can have their security software updated, over the air or by wire. “You must comply with new regulations, but because you don’t know what the entire threat surface looks like, you’re going to need to adapt over time to new threats,” said Synopsys’ Neustadter. “The term ‘over the air’ gets abused a bit in this regard, as it is just code for being able to update it from a network. You don’t care, actually, whether it comes over wires or whether it comes over the air. The principles and the specific protocols can be the same, regardless of what the network path is. Wireless networks have different physical characteristics about availability and reliability. Generally, they’re just part of a continuum of networks. That’s the thing about the Internet of Things. They’re networks of networks.”
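Neustadter's point that the update path does not matter can be made concrete: the device's acceptance checks operate on the received bytes, not on the link they arrived over. The sketch assumes a hypothetical package format (a 4-byte version followed by the firmware) and uses an HMAC tag as a stand-in for the vendor signature a production device would verify with a public key. It also rejects version rollbacks, so an attacker cannot reinstall an older, vulnerable image.

```python
import hashlib
import hmac
import struct
from dataclasses import dataclass

HEADER = struct.Struct(">I")  # hypothetical format: 4-byte big-endian version, then firmware


@dataclass
class UpdatableDevice:
    update_key: bytes       # stand-in for a vendor verification key
    installed_version: int

    def apply_update(self, package: bytes, tag: bytes) -> int:
        """Verify authenticity and version; identical for wired or wireless delivery."""
        expected = hmac.new(self.update_key, package, hashlib.sha256).digest()
        if not hmac.compare_digest(expected, tag):
            raise ValueError("update rejected: bad authentication tag")
        (version,) = HEADER.unpack_from(package)  # parsed only after authentication
        if version <= self.installed_version:
            raise ValueError("update rejected: version rollback")
        self.installed_version = version  # a real device would write the new image here
        return version


def build_package(key: bytes, version: int, firmware: bytes) -> tuple[bytes, bytes]:
    """Vendor side: prepend the version and compute the tag over the whole package."""
    package = HEADER.pack(version) + firmware
    return package, hmac.new(key, package, hashlib.sha256).digest()


if __name__ == "__main__":
    key = bytes(range(32))  # demo key only
    dev = UpdatableDevice(update_key=key, installed_version=3)
    pkg, tag = build_package(key, 4, b"firmware v4")
    print(dev.apply_update(pkg, tag))  # 4
```

Because the version is covered by the tag, an attacker cannot relabel an old image as new, and replaying the same package a second time is rejected as a rollback.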

Security also may be updated via a reprogrammable component such as an FPGA.

Conclusion

Attackers are working overtime to hack everything from critical infrastructure to car factories and consumer devices. They want to disrupt, steal, and advertise for free. Responsibility for warding off attacks lands on everyone in the supply chain, but starts with secure semiconductor processors in the IoT devices that are increasingly a part of industrial and domestic life.

The hardware security and cybersecurity requirements for physical AI and IoT are similar to those in the automotive sector, giving designers a good starting point.

“I don’t see a difference,” said Synopsys’ Saar. “Cybersecurity coming from automotive is being adopted and talked about also in physical AI. For example, MIPI introduced the Camera Service Extensions (CSE). It has a security component to it, like security for the camera interface. They’re jumping on it in the automotive side. It’s safety and security, and you need to have it so nobody is tampering with your system. We see edge AI developers asking for the same thing because they want to ensure that the person who is using the edge device and connecting to another edge is a reliable source, and nobody is hacking into it. The linkage between automotive safety and cybersecurity to edge and physical AI is very critical.”

Crucially, security and safety must go hand in hand. “For legal and ethical reasons, all AI and machine learning tools, products, and technology must be underpinned by the specifics of good, functional safety engineering standards,” said Andrew Johnston, engineering and technology leader, systems and functional safety engineering specialist at Imagination Technologies. “This includes life cycle practices, tool evaluation, tool qualification, and — increasingly more importantly — cybersecurity and cyber threat resilience.”

Related Reading

Physical AI Takes Functional Safety Cues From Automotive

The automotive industry has established safety standards, but rules concerning safety-critical physical AI are still evolving as more robots work alongside humans.

Edge AI Is Starting To Transform Industrial IoT

Multi-modal sensors generate data that edge AI can turn into actionable insights, provided new devices can be integrated with legacy equipment.

LLMs Add Safety Risks To Physical AI

Extra measures are needed to avoid accidents and bias with robots and drones.