Failure of confidence calibration in deep neural networks

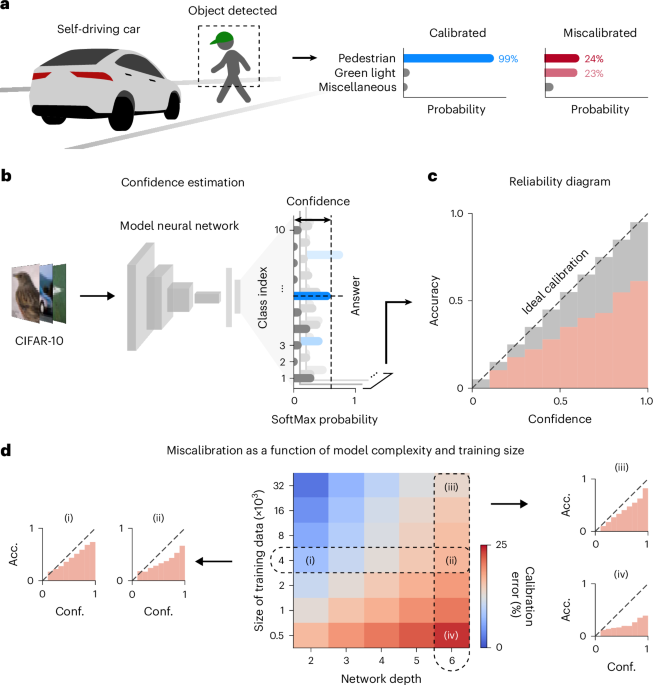

To investigate the pattern of confidence miscalibration in deep neural network models, we first used a feed-forward neural network designed for pattern classification of three-channel natural images (32 × 32 × 3) into ten categories (Fig. 1b and Methods). The network consists of multiple hidden layers with rectified linear unit nonlinearities and a final classification layer that uses the SoftMax function. For a given image sample, the model outputs probabilities for each object class, and the predicted label corresponds to the class with the maximum probability. The model also provides a measure of prediction confidence, which is derived from the probability assigned to the predicted class. The predictive uncertainty can be quantified as one minus the confidence value. Ideally, a neural network is expected to output calibrated confidence, where the confidence level accurately reflects the actual likelihood of the prediction being correct (Fig. 1c and Supplementary Fig. 1). By contrast, a poorly calibrated network may output confidence levels that do not correspond to the actual likelihood of correctness, leading to potential misinterpretations of the model’s predictions.
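As an illustration of the quantities defined above, the following minimal sketch derives the predicted label, the confidence and the predictive uncertainty from SoftMax outputs. The function names and the example logits are hypothetical and are not taken from the study’s code.

```python
import numpy as np

def softmax(logits):
    """Row-wise SoftMax, shifted by the row maximum for numerical stability."""
    shifted = logits - logits.max(axis=1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=1, keepdims=True)

def predict_with_confidence(logits):
    """Return predicted label, confidence and uncertainty for each sample."""
    probs = softmax(np.asarray(logits, dtype=float))
    labels = probs.argmax(axis=1)      # class with the maximum probability
    confidence = probs.max(axis=1)     # probability assigned to that class
    uncertainty = 1.0 - confidence     # one minus the confidence value
    return labels, confidence, uncertainty

# Hypothetical logits for two samples and three classes.
example_logits = np.array([[2.0, 0.5, -1.0],
                           [0.1, 0.0, 0.2]])
labels, confidence, uncertainty = predict_with_confidence(example_logits)
print(labels, confidence, uncertainty)
```

With K classes the confidence is bounded below by the chance level 1/K, so the uncertainty defined this way never exceeds 1 − 1/K.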

We trained the feed-forward neural network using a subset of the Canadian Institute For Advanced Research (CIFAR)-10 dataset35, which consists of natural images labelled with ten classes representing animals and objects. After training, we evaluated the model’s predictions and confidence using test images that were not part of the training set. Specifically, we investigated whether the confidence level of the trained network accurately reflects the likelihood of correctness (Supplementary Fig. 1). By binning predictions according to their confidence and measuring the expected accuracy within each bin, we constructed a reliability diagram25,36 to visualize the model’s calibration (Fig. 1c). In this diagram, ideal calibration appears as the identity function, for which the estimated confidence equals the actual likelihood of correctness.

We investigated the pattern of miscalibration by training neural networks of varying complexity with different training data sizes (Fig. 1d). To quantitatively measure the degree of miscalibration, we calculated the expected calibration error (ECE)37, which averages the difference between accuracy and confidence in each bin, weighted by the number of samples. In general, we observed a substantial gap between ideal calibration and the model’s outputs: the model’s accuracy was lower than its confidence level, demonstrating the model’s tendency for overconfidence in most conditions. We found that both model complexity and training data size systematically affect confidence calibration. Specifically, the degree of miscalibration increases as the training data size decreases and model complexity increases, suggesting that miscalibration occurs when the training data are insufficient in relation to the network’s complexity. This result explains why state-of-the-art models with high complexity struggle with a mismatch between confidence and accuracy. Recent network architectures often increase in depth to enhance learning capacity, but the available data size does not scale accordingly. In real-world applications, acquiring large datasets is costly and computationally demanding, making miscalibration inevitable in deep learning models.
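The ECE described above can be sketched as follows. This is a minimal implementation assuming equal-width confidence bins; the number of bins (ten here) and the function name are assumptions, because the binning scheme is not specified in this section.

```python
import numpy as np

def expected_calibration_error(confidence, correct, n_bins=10):
    """Sample-weighted mean of |accuracy - confidence| over confidence bins."""
    confidence = np.asarray(confidence, dtype=float)
    correct = np.asarray(correct, dtype=float)
    edges = np.linspace(0.0, 1.0, n_bins + 1)
    # Interior edges map each confidence to a bin index in 0 .. n_bins - 1.
    bin_index = np.digitize(confidence, edges[1:-1])
    ece = 0.0
    for b in range(n_bins):
        in_bin = bin_index == b
        if not in_bin.any():
            continue  # empty bins carry zero weight
        gap = abs(correct[in_bin].mean() - confidence[in_bin].mean())
        ece += in_bin.mean() * gap  # weight = fraction of samples in the bin
    return ece

# Hypothetical overconfident model: 95% confidence but only 60% accuracy.
conf = np.full(100, 0.95)
hits = np.array([1] * 60 + [0] * 40)
print(expected_calibration_error(conf, hits))
```

The per-bin accuracy and mean confidence computed inside the loop are the same values that a reliability diagram plots against each other.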

Warm-up training with random noise for confidence calibration

The biological brain begins its learning process even before birth, before encountering sensory inputs, through spontaneous neural activity38 (Fig. 2a). During this early stage, the brain optimizes its neural connections by transmitting this spontaneous activity to higher cortical areas, and this prenatal learning is observed across multiple brain regions involved in sensory systems38,39,40,41 (Fig. 2b). Notably, the spontaneous activities take on various spatiotemporal forms, such as waves39 or local synchronization40. Importantly, these random activities are independent of external stimuli and bear no association with the real world or specific objectives, yet they critically mediate the initial formation of neuronal circuits42,43. Thus, this process can be viewed as a form of warm-up training with intrinsic random noise34. Here we demonstrate that a warm-up training algorithm inspired by this biological process facilitates the preconditioning of networks, calibrating their initial confidence to the chance level.

a, Prenatal learning through spontaneous neural activity before sensory input in a fetal rat, before birth (left) and after eye opening (right)38. b, Spontaneous neural activity in the developing visual and auditory areas during embryonic (E) and postnatal (P) days41. c, Schematic of the random noise warm-up training algorithm, inspired by the developing brain before sensory experience. In the warm-up phase, the network receives Gaussian random inputs with unpaired labels sampled uniformly. d, Test loss during the warm-up phase and data training. w/, with; w/o, without. e, Reliability diagram showing the expected accuracy for samples binned by confidence. Inset: ECE. f, Effect of random noise warm-up training under varying conditions of training data size and network complexity on calibration error. The marker ▼ indicates the parameters used in d and e, where the six-layer networks are trained with a dataset size of 4,000. g,h, Effects of random noise warm-up when training ResNet-18: ((i) and (ii)) training the model from scratch (0.25M noise warm-up→2.5M task examples seen), and ((iii) and (iv)) applying the same noise warm-up during fine-tuning following large-scale conventional pretraining on ImageNet (120M pretraining examples→0.25M noise warm-up→1M task examples seen). g, Calibration. h, Test performance. Each box plot illustrates the interquartile range (IQR; Q1–Q3), with the central line indicating the median, and whiskers extending to Q1 − 1.5× IQR and Q3 + 1.5× IQR. *P < 0.001; NS, not significant (P > 0.05).

We trained neural networks using noise inputs randomly sampled from a Gaussian distribution and random labels sampled from a uniform distribution (Fig. 2c). During training with random noise, we observed that the loss gradually decreased, while accuracy remained at the chance level (Fig. 2d and Supplementary Fig. 2). When the network was subsequently trained with real data after the warm-up training, we observed a more substantial reduction in test loss, which was significantly lower compared with networks trained without random noise warm-up (Fig. 2d, without versus with random noise warm-up, n_net = 30 independently initialized networks, two-sided Wilcoxon signed-rank test for paired networks initialized with the same seed, without assuming normality, W = 0, P = 1.73 × 10^−6, r = 0.873). These results demonstrate that random noise training facilitates faster and more effective loss reduction during subsequent training with real data.
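The warm-up procedure can be sketched in a deliberately reduced setting. The example below is not the networks used in this study: it assumes a single linear SoftMax layer with a hypothetical wide initialization (so that the untrained model is overconfident), plain gradient descent on cross-entropy, and arbitrary choices of step count, batch size and learning rate. It draws fresh Gaussian inputs and unpaired uniform labels at every step.

```python
import numpy as np

def softmax(z):
    z = z - z.max(axis=1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=1, keepdims=True)

def noise_warmup(weights, n_classes, steps=500, batch_size=256, lr=0.5, seed=0):
    """Train a linear SoftMax layer on Gaussian noise with random labels."""
    rng = np.random.default_rng(seed)
    w = weights.copy()
    n_inputs = w.shape[0]
    for _ in range(steps):
        x = rng.standard_normal((batch_size, n_inputs))   # Gaussian noise inputs
        y = rng.integers(0, n_classes, size=batch_size)   # uniform labels, unpaired with x
        grad_logits = softmax(x @ w)
        grad_logits[np.arange(batch_size), y] -= 1.0      # d(cross-entropy)/d(logits)
        w -= lr * (x.T @ grad_logits) / batch_size        # gradient descent step
    return w

def mean_confidence(weights, x):
    return softmax(x @ weights).max(axis=1).mean()

rng = np.random.default_rng(42)
n_inputs, n_classes = 20, 10                              # hypothetical toy dimensions
w_init = rng.standard_normal((n_inputs, n_classes))       # assumed wide initialization
w_warm = noise_warmup(w_init, n_classes)

probe_x = rng.standard_normal((2000, n_inputs))           # fresh noise for evaluation
probe_y = rng.integers(0, n_classes, size=2000)
conf_before = mean_confidence(w_init, probe_x)
conf_after = mean_confidence(w_warm, probe_x)
acc_after = (softmax(probe_x @ w_warm).argmax(axis=1) == probe_y).mean()
print(conf_before, conf_after, acc_after)
```

Because the labels carry no information about the inputs, the loss is minimized by a uniform output distribution. In this sketch the mean confidence should therefore fall towards the chance level (1/10) while accuracy stays at chance.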

Next, we investigated whether random noise warm-up could improve the calibration of probabilities, aligning confidence with accuracy. Using each network’s predictions and the corresponding confidences, we constructed reliability diagrams (Fig. 2e), which showed that the network trained with random noise had outputs that were closer to ideal calibration than networks trained on data alone. Moreover, we confirmed that the networks exhibited significantly lower calibration error compared with those trained only with data (Fig. 2e (inset), without versus with random noise warm-up, n_net = 30, two-sided Wilcoxon signed-rank test, W = 0, P = 1.73 × 10^−6, r = 0.873). We validated that our random noise warm-up consistently improves model calibration across a range of tasks, including image classification (Supplementary Fig. 3 and Supplementary Table 1), image segmentation (Supplementary Fig. 4) and language generation (Supplementary Fig. 5). Beyond networks trained with standard backpropagation44, we also evaluated networks trained with a biologically plausible feedback alignment algorithm45 (Supplementary Fig. 6). Miscalibration was particularly pronounced under this approximated error backpropagation, yet random noise warm-up significantly improved confidence calibration. These results confirm that miscalibration is a widespread issue across datasets, task domains and training algorithms, and that random noise warm-up provides a general solution for improving confidence calibration.

We then extended this analysis to networks with varying depths and sizes of training data. We found that random noise warm-up had a significant effect, regardless of the network depth or training data size (Fig. 2f, without versus with random noise warm-up, n_net = 10, two-sided Wilcoxon signed-rank test, W = 0, P = 5.06 × 10^−3, r = 0.886; the statistical test was performed for every combination of network depth and training data size). Random noise training successfully reduced calibration error across all conditions tested. Notably, the effect was particularly pronounced for networks with deeper structures trained on smaller datasets, where confidence miscalibration is more common. We further extended the analysis to ResNet4 and DenseNet5 families, as well as vision transformers46, confirming that calibration error increases with model size. Random noise warm-up effectively mitigated this effect (Supplementary Fig. 7 and Supplementary Table 2 (baseline)). We also found that it prevents miscalibration when training data are limited, even in complex networks such as ResNet-18 (Supplementary Fig. 8 and Supplementary Table 3 (baseline)). Notably, the calibration effect of random noise warm-up is comparable with, or even surpasses, previous post-calibration methods9,23, despite requiring no hold-out dataset (Supplementary Fig. 9 and Supplementary Tables 2 and 3). Furthermore, because our approach functions not merely as a calibration method but as a general initialization strategy, it can be combined with existing state-of-the-art algorithms to achieve superior calibration. We confirmed that the effects of random noise warm-up are not sensitive to the specific choice of noise distribution and are consistently reproduced with various types of random or structured noise (Supplementary Fig. 10) or different input variances (Supplementary Fig. 11).

Additionally, we validated that these extra warm-up steps are substantially shorter than conventional large-scale data pretraining or downstream task training, imposing negligible computational overhead (Supplementary Fig. 12). We applied a short random noise warm-up (0.25M examples seen) when training ResNet-18 on CIFAR-10 in both the scratch-training scenario (2.5M examples seen) and the fine-tuning scenario (1M examples seen) (Fig. 2g,h). In the scratch-training scenario, incorporating random noise warm-up enabled the model to achieve superior calibration (Fig. 2g, task only versus random + task, n_net = 20, two-sided Wilcoxon signed-rank test, W = 0, P = 8.86 × 10^−5, r = 0.877), and a similar effect was observed in the fine-tuning scenario following large-scale ImageNet pretraining (120M examples seen) (Fig. 2g, pretraining + task versus pretraining + random + task, n_net = 20, two-sided Wilcoxon signed-rank test, W = 0, P = 8.86 × 10^−5, r = 0.877). In addition, random noise warm-up enabled networks to achieve comparable or higher test performance (Fig. 2h, task only versus random + task, n_net = 20, two-sided Wilcoxon signed-rank test, W = 64, P = 0.126, r = 0.342; pretraining + task versus pretraining + random + task, n_net = 20, two-sided Wilcoxon signed-rank test, W = 0, P = 8.85 × 10^−5, r = 0.877). These results suggest that random noise warm-up improves calibration without sacrificing test performance, adding only negligible computational cost. Moreover, the effect of random noise warm-up cannot be replicated by conventional large-scale data pretraining.

Initialization with random noise warm-up for uncertainty calibration

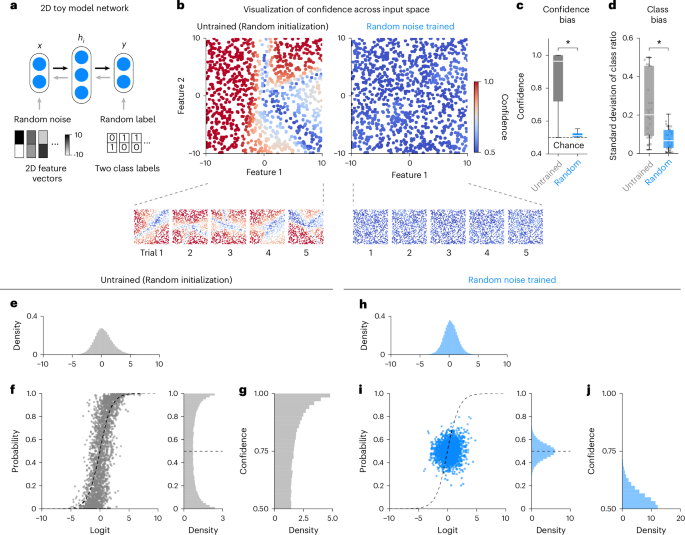

To better understand how warm-up training with random noise affects confidence calibration, we further explored the characteristics of randomly initialized, untrained networks and those that were randomly trained, before exposure to real data. For this purpose, we introduced a simplified toy model network with a two-dimensional (2D) input space (Fig. 3a), allowing for the visualization of the input feature space (Fig. 3b). Under these conditions, we observed that the initial state of a conventionally processed network (randomly initialized47 and untrained) displayed a highly biased distribution of confidence across the input space (Fig. 3b, left). Moreover, we confirmed that this initial miscalibration was consistent across multiple trials with different random initializations. By contrast, networks trained with random noise displayed a more homogeneous confidence distribution across the input space, effectively calibrating to the chance level (Fig. 3b, right). This result demonstrates that training with random noise helps mitigate overconfidence by calibrating confidence levels across the entire input space, bringing them closer to chance (Fig. 3c, untrained versus random noise trained, n_sample = 1,000 per group, two-sided Mann–Whitney U test for two independent groups without assuming normality, U = 975,129.5, P = 1.67 × 10^−296, r = 0.823). We also observed that untrained networks could exhibit initial prediction biases towards specific output classes due to fluctuations in probability. However, random noise training significantly reduced this initial class bias, leading to a more uniform probability distribution across the classes (Fig. 3d, untrained versus random noise trained, n_net = 30, two-sided Wilcoxon signed-rank test, W = 62, P = 4.53 × 10^−4, r = 0.640).

a, Diagram illustrating training on random noise in a toy model network with a 2D input space and binary output space. The network receives random noise with two input features and randomly assigned binary labels, and is trained using binary cross-entropy loss. b, Visualization of confidence over the input space. Left: confidence map of the untrained network. Right: confidence map after random noise training. c, Confidence distribution of the network for 2D noise. d, Class bias of the network for 2D noise, illustrating the level of bias in class prediction. e–g, Logit and SoftMax output distributions of randomly initialized, untrained networks. e, Logit histogram. f, Relationship between logits and SoftMax outputs (left) and histogram of SoftMax outputs (right). g, Confidence histogram. h–j, Logit and SoftMax output distributions of networks trained with random noise; same as in e–g. Each box plot illustrates the IQR (Q1–Q3), with the central line indicating the median, and whiskers extending to Q1 − 1.5× IQR and Q3 + 1.5× IQR. *P < 0.001.

We next examined this initial overconfidence of randomly initialized networks by analysing the relationship between network activation and SoftMax output probabilities (Fig. 3e–g; Supplementary Note 1 shows the corresponding theoretical analysis). In randomly initialized networks, logits were broadly distributed (Fig. 3e). Their large magnitudes, arising from high variance, caused saturation of the nonlinear SoftMax function, concentrating the output probabilities near 0 and 1 (Fig. 3f). This saturation indicates that untrained networks exhibit overconfidence, with probabilities biased towards 1 (Fig. 3g). Random noise warm-up effectively mitigated this initial overconfidence (Fig. 3h–j; Supplementary Note 2 shows the corresponding theoretical analysis). Random noise training reshaped the logit distribution by concentrating values in the linear region of the SoftMax function (Fig. 3h) and suppressing large-magnitude logits in the long tail (Fig. 3i), resulting in output probabilities peaking near the chance level (Fig. 3j). Importantly, random noise training did not appreciably alter the weight distribution but significantly modified the activation patterns across the network hierarchy (Supplementary Fig. 13). Unlike conventional initialization, random noise warm-up gradually reduced activation scales across the hierarchy, compressing the final-layer logits to produce outputs close to the chance level.
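The link between logit variance and SoftMax saturation can be illustrated numerically. The sketch below assumes independent zero-mean Gaussian logits for ten classes, a simplification that is not derived from the networks in this study, and compares the mean confidence for a small and a large logit standard deviation. Both scale values are arbitrary.

```python
import numpy as np

def mean_softmax_confidence(logit_std, n_classes=10, n_samples=5000, seed=0):
    """Mean maximum SoftMax probability for i.i.d. Gaussian logits of a given scale."""
    rng = np.random.default_rng(seed)
    logits = rng.normal(0.0, logit_std, size=(n_samples, n_classes))
    logits -= logits.max(axis=1, keepdims=True)   # numerical stability
    probs = np.exp(logits)
    probs /= probs.sum(axis=1, keepdims=True)
    return probs.max(axis=1).mean()

narrow = mean_softmax_confidence(logit_std=0.1)   # logits in the near-linear regime
wide = mean_softmax_confidence(logit_std=10.0)    # broadly distributed logits
print(f"std 0.1: {narrow:.3f}   std 10: {wide:.3f}   chance: {1 / 10:.3f}")
```

Under these assumptions, narrow logits keep the confidence close to the chance level, whereas wide logits push most of the probability mass onto a single class.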

Next, we extended this analysis to models with higher-dimensional input spaces (Supplementary Fig. 14a). Specifically, we used a model with input features of 32 × 32 × 3 dimensions and output SoftMax probabilities for ten classes, allowing it to train on the CIFAR-10 data. We first measured the probability outputs for random noise inputs in both untrained and randomly trained networks (Supplementary Fig. 14b). Consistent with the toy model results, we observed overconfidence and class bias in untrained networks (Supplementary Fig. 14b, left), whereas such biases were barely detectable in randomly trained networks (Supplementary Fig. 14b, right). We confirmed that these differences aligned with the toy model results, both in terms of confidence distribution (Supplementary Fig. 14c) and class bias (Supplementary Fig. 14d). We further extended our analysis to various network architectures with differing numbers of outputs (Supplementary Fig. 15a) and depths (Supplementary Fig. 15b). These results confirmed that the initial overconfidence is a general feature of randomly initialized networks, which random noise training effectively mitigates by reducing confidence to the chance level. Notably, the SoftMax outputs of modern architectures, including ResNet, DenseNet and vision transformers, were highly polarized in their untrained state, with most predictions being overconfident. Random noise training consistently eliminated this bias (Supplementary Fig. 16).

We further investigated whether the pre-calibration effect observed with random noise training extends to unseen, more realistic data. Specifically, we examined how the network’s confidence on the CIFAR-10 and Street View House Numbers (SVHN)48 datasets changes during random noise training (Supplementary Fig. 17a, left) by measuring the network’s predictions for real data after each epoch of training. Notably, we observed that the confidence for unseen CIFAR-10 data was properly calibrated throughout the random noise training phase, approaching the chance level, while accuracy remained at the chance level (Supplementary Fig. 17a, right). We confirmed that this trend was consistent across different unseen datasets, including CIFAR-10 and SVHN (Supplementary Fig. 17b). The network maximized uncertainty for unseen data, with accuracy remaining at the chance level, thereby aligning confidence with accuracy. As a result, the calibration error (the discrepancy between confidence and accuracy) was significantly reduced for unseen data (Supplementary Fig. 17c). These findings demonstrate that conventionally initialized networks exhibit overconfidence and class bias even before training, whereas random noise training effectively eliminates this initial bias and calibrates confidence to the chance level, maximizing uncertainty before actual learning. This suggests that random noise training can serve as an initialization strategy, offering an alternative to conventional random initialization47.

Finally, we confirmed that this initial calibration stabilizes gradients (Supplementary Fig. 18) and smooths the loss landscape at initialization (Supplementary Fig. 19). Consequently, uncertainty-calibrated initialization via random noise warm-up enables stable learning within a smoother loss landscape (Supplementary Fig. 20) and ultimately converges at flatter minima (Supplementary Fig. 21). We also visualized the relationship between loss landscape and calibration (Supplementary Fig. 22) and observed that random noise training rapidly shifts the network into a well-calibrated region. As a result, subsequent task learning occurs in a parameter space that is already well calibrated. This analysis demonstrates the role of random noise warm-up in shaping the calibrated learning landscape and highlights how its learning dynamics differ from those of conventionally initialized networks. Importantly, we confirmed that these benefits of random noise warm-up are not merely due to changes in the effective learning rate or weight distribution (Supplementary Fig. 23).

Aligning confidence levels with actual accuracy

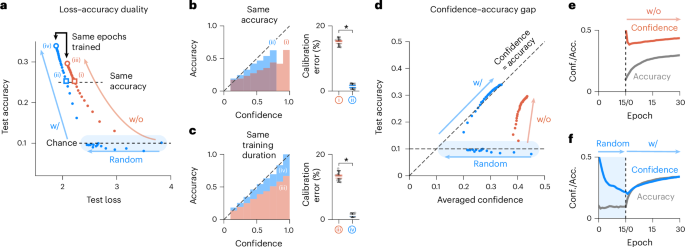

To better understand the impact of pre-calibration, we examined the learning dynamics of networks during data training, by analysing the training trajectory in a 2D plane of accuracy and loss (Fig. 4a). The trajectory showed clear differences between networks with and without random noise training, primarily due to the initial loss reduction during warm-up. Specifically, for points on both curves with the same accuracy, the randomly trained network exhibited lower loss than the network trained only on data. By selecting arbitrary points of identical accuracy (Fig. 4a, (i) and (ii)), we analysed the reliability diagram and calibration error for both networks. We found that networks with random noise warm-up had better confidence calibration compared with those without warm-up, even with the same accuracy (Fig. 4b, with versus without random noise warm-up, n_net = 30, two-sided Wilcoxon signed-rank test, W = 0, P = 1.73 × 10^−6, r = 0.873). Similarly, when selecting arbitrary points with the same total training epochs (Fig. 4a, (iii) and (iv)), warmed-up networks again demonstrated better confidence calibration (Fig. 4c, without versus with random noise warm-up, n_net = 30, two-sided Wilcoxon signed-rank test, W = 0, P = 1.73 × 10^−6, r = 0.873). These results highlight how random noise warm-up influences the dynamics of subsequent learning, particularly by reducing initial loss.

a, Visualization of loss-accuracy duality. Each dot represents the test loss and accuracy at each epoch of training. Four points are selected for calibration comparison, corresponding to networks reaching the same accuracy under both data-only training and random noise warm-up training ((i) and (ii)), and fully converged networks trained for the same number of epochs ((iii) and (iv)). b,c, Comparison between random-noise-trained networks and data-only networks. Left: reliability diagram, showing the relationship between predicted confidence and accuracy. Right: calibration error, measured as ECE. b, Accuracy-controlled comparison ((i) versus (ii)). c, Epoch-controlled comparison ((iii) versus (iv)). d, Scatter plot of accuracy versus confidence for the test dataset in a data-only training network and a random-noise-trained network. The diagonal line indicates perfect calibration in which confidence matches the expected accuracy. e,f, Averaged confidence and accuracy measured across training epochs for the test dataset. e, Data-only training network. f, Random-noise-trained network. Each box plot illustrates the IQR (Q1–Q3), with the central line indicating the median, and whiskers extending to Q1 − 1.5× IQR and Q3 + 1.5× IQR. *P < 0.001.

We next explored the relationship between confidence and accuracy throughout the training process (Fig. 4d). In random-noise-trained networks, confidence and accuracy remained well aligned, consistently following the diagonal line during training (Fig. 4d, with). By contrast, networks without warm-up did not exhibit this alignment (Fig. 4d (without) and Fig. 4e). Specifically, a substantial disparity between confidence and accuracy was initially observed due to early miscalibration, but this gap quickly diminished and disappeared during random noise warm-up (Fig. 4d, random). This alignment of confidence and accuracy supports more synchronized learning during subsequent data training (Fig. 4f). Thus, calibrating confidence to the chance level through random noise warm-up ensures accurate confidence calibration.

Detection of OOD samples using calibrated network confidence

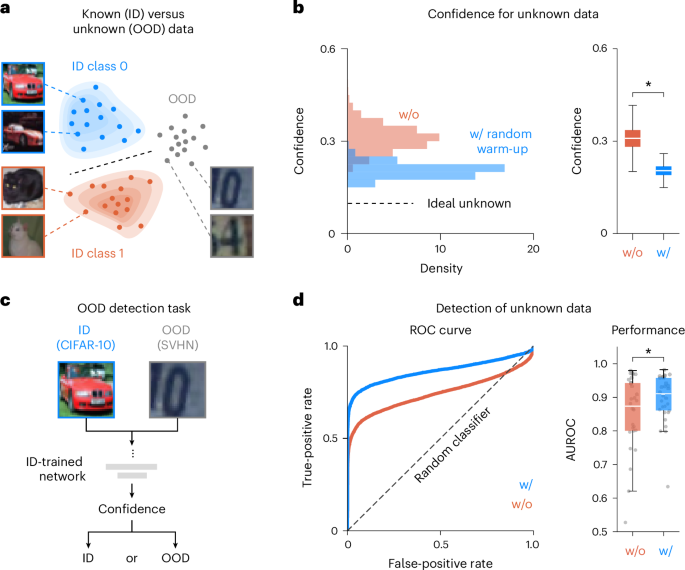

Finally, we examined the effect of random noise warm-up on detecting out-of-distribution (OOD) data. OOD samples refer to ‘unseen’ data the model has not been trained on, whereas in-distribution (ID) samples are those the model has been trained to recognize (Fig. 5a). After training on CIFAR-10 (ID), we evaluated the model’s response to unseen SVHN data (OOD). Networks without random noise warm-up exhibited high confidence on OOD samples, even though they had no prior knowledge of them (Fig. 5b, left). By contrast, networks with random noise warm-up showed significantly lower confidence on OOD samples, aligning closer to the chance level, which is ideal for unknown data. We confirmed that randomly trained networks had lower average confidence for OOD samples, closer to the chance level (Fig. 5b, right; without versus with random noise warm-up, n_sample = 10,000 per group, two-sided Mann–Whitney U test, U = 98,908,786, P = 4.94 × 10^−324, r = 0.847).

a, Conceptual illustration of ID and OOD samples. ID samples are those that the model has been trained on, whereas OOD samples are from a different distribution, not seen during training. CIFAR-10 is used as ID data to train the neural network, and SVHN is used as OOD data, which the network has not been trained on. b, Histogram of confidence for unseen OOD data (left). The distribution is also displayed as a box plot (right). The horizontal line represents the chance level, indicating ideally calibrated confidence for unknown data, where the model exhibits maximized uncertainty. c, Schematic of the OOD detection task. After training the network on ID data, both ID and OOD samples are presented to the network, and confidence is measured to determine whether the samples belong to the ID or OOD class. d, Performance of the OOD detection task using network confidence. Left: ROC curve in which the diagonal line represents random classification, and the point (0, 1) represents an ideal classifier. Right: performance of the OOD detection task, measured by the AUROC. Each box plot illustrates the IQR (Q1–Q3), with the central line indicating the median, and whiskers extending to Q1 − 1.5× IQR and Q3 + 1.5× IQR. *P < 0.001.

We further explored how calibrated confidence for both ID and OOD samples can improve OOD detection. To achieve this, we implemented a simple framework in which raw confidence values were used to distinguish ID from OOD samples (Fig. 5c), with samples below an arbitrary threshold being classified as OOD. We then assessed the OOD detection performance across various thresholds and used these results to plot the receiver operating characteristic (ROC) curve (Fig. 5d, left). To quantify performance, we calculated the area under the ROC curve (AUROC) (Fig. 5d, right) and found that random noise warm-up significantly improved OOD detection (Fig. 5d, right; without versus with random noise warm-up, n_net = 30, two-sided Wilcoxon signed-rank test, W = 60, P = 3.88 × 10^−4, r = 0.648).
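The threshold sweep described above can be sketched as follows. This is a minimal illustration in which ID is treated as the positive class and every observed confidence value serves as a threshold. The function names and the example confidences are hypothetical, and the exact evaluation code used in this study may differ.

```python
import numpy as np

def roc_from_confidence(conf_id, conf_ood):
    """ROC points for 'confidence >= threshold means ID, below means OOD'."""
    conf_id = np.asarray(conf_id, dtype=float)
    conf_ood = np.asarray(conf_ood, dtype=float)
    # Sweep thresholds from the highest to the lowest observed confidence.
    thresholds = np.unique(np.concatenate([conf_id, conf_ood]))[::-1]
    tpr = [(conf_id >= t).mean() for t in thresholds]    # ID correctly kept
    fpr = [(conf_ood >= t).mean() for t in thresholds]   # OOD wrongly kept
    # Start at (0, 0): a threshold above every confidence rejects everything.
    return np.concatenate([[0.0], fpr]), np.concatenate([[0.0], tpr])

def auroc(conf_id, conf_ood):
    """Area under the ROC curve by the trapezoidal rule."""
    fpr, tpr = roc_from_confidence(conf_id, conf_ood)
    return float(np.sum((fpr[1:] - fpr[:-1]) * (tpr[1:] + tpr[:-1]) / 2.0))

# Hypothetical confidences: ID samples mostly high, OOD samples mostly low.
id_conf = [0.95, 0.90, 0.80, 0.60]
ood_conf = [0.70, 0.40, 0.30, 0.20]
print(auroc(id_conf, ood_conf))
```

An AUROC of 1 corresponds to confidences that perfectly separate ID from OOD samples, and 0.5 corresponds to random classification.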

We extended our analysis to ResNet and DenseNet families, as well as vision transformers, and confirmed that random noise warm-up generally enhances OOD detection (Supplementary Fig. 24 and Supplementary Table 4 (baseline)). In addition, we confirmed that random noise warm-up is effective in improving OOD detection performance in complex networks, such as ResNet-18, regardless of the training data size (Supplementary Fig. 25 and Supplementary Table 5 (baseline)). Notably, beyond confidence-based OOD detection, networks trained with random noise significantly outperformed conventionally initialized networks in advanced OOD detection frameworks, including temperature scaling, ODIN13 and energy score28 methods (Supplementary Fig. 26 and Supplementary Tables 4 and 5). Moreover, random noise warm-up reduced predictive uncertainty and achieved superior OOD detection within the conformal prediction framework49,50 (Supplementary Fig. 27). Taken together, these results suggest that random noise warm-up and the resulting initial calibration provide a crucial foundation for meta-cognitive ability in neural networks, enabling robust discrimination between known and unknown data without additional processing or augmented learning.