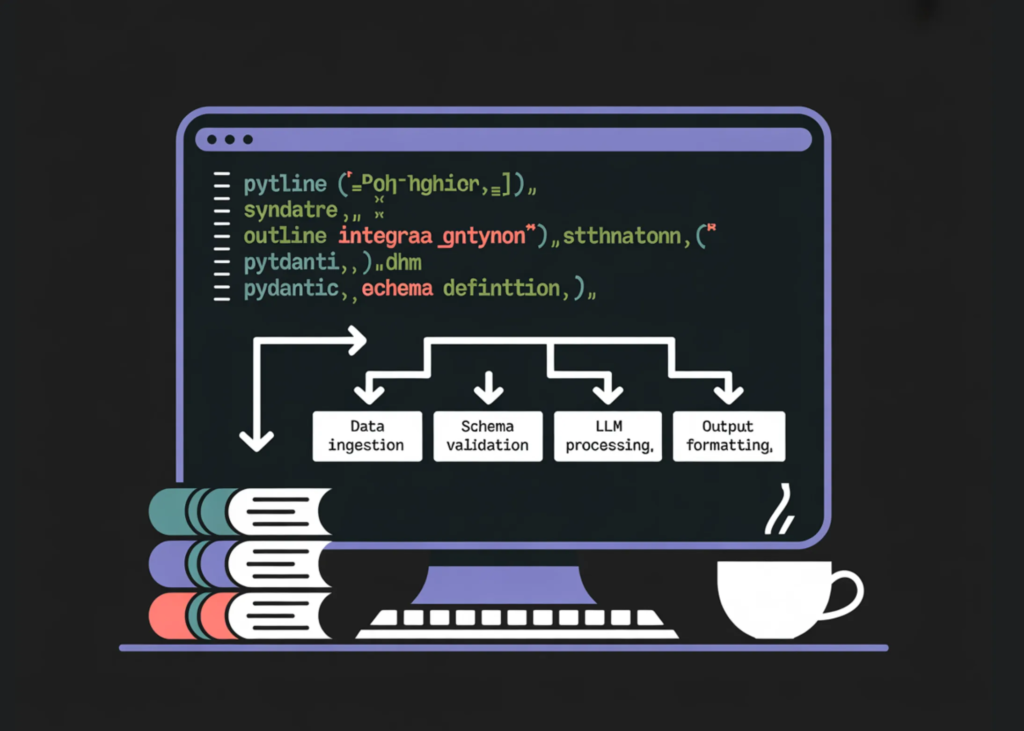

In this tutorial, we build a workflow using Outlines to generate structured, type-safe outputs from language models. We work with typed constraints such as Literal, int, and bool, design prompt templates using outlines.Template, and enforce strict schema validation with Pydantic models. We also implement robust JSON recovery and a function-calling style that generates validated arguments and executes Python functions safely. Throughout the tutorial, we focus on reliability, constraint enforcement, and production-grade structured generation.

```python
import os, sys, subprocess, json, textwrap, re

# Install the dependencies into the current interpreter's environment.
subprocess.check_call([sys.executable, "-m", "pip", "install", "-q",
                       "outlines", "transformers", "accelerate", "sentencepiece", "pydantic"])

import torch
import outlines
from transformers import AutoTokenizer, AutoModelForCausalLM

from typing import Literal, List, Union, Annotated
from pydantic import BaseModel, Field
from enum import Enum

print("Torch:", torch.__version__)
print("CUDA available:", torch.cuda.is_available())
print("Outlines:", getattr(outlines, "__version__", "unknown"))

device = "cuda" if torch.cuda.is_available() else "cpu"
print("Using device:", device)

MODEL_NAME = "HuggingFaceTB/SmolLM2-135M-Instruct"

tokenizer = AutoTokenizer.from_pretrained(MODEL_NAME, use_fast=True)
hf_model = AutoModelForCausalLM.from_pretrained(
    MODEL_NAME,
    torch_dtype=torch.float16 if device == "cuda" else torch.float32,
    device_map="auto" if device == "cuda" else None,
)

if device == "cpu":
    hf_model = hf_model.to(device)

# Wrap the Hugging Face model so it can be called with an output type.
model = outlines.from_transformers(hf_model, tokenizer)

def build_chat(user_text: str, system_text: str = "You are a precise assistant. Follow instructions exactly.") -> str:
    try:
        msgs = [{"role": "system", "content": system_text}, {"role": "user", "content": user_text}]
        return tokenizer.apply_chat_template(msgs, tokenize=False, add_generation_prompt=True)
    except Exception:
        # Fall back to a plain-text prompt if the tokenizer has no chat template.
        return f"{system_text}\n\nUser: {user_text}\nAssistant:"

def banner(title: str):
    print("\n" + "=" * 90)
    print(title)
    print("=" * 90)
```

We install all required dependencies and initialize the Outlines pipeline with a lightweight instruct model. We configure device handling so that the system automatically switches between CPU and GPU based on availability. We also build reusable helper functions for chat formatting and clean section banners to structure the workflow.

```python
def extract_json_object(s: str) -> str:
    """Return the first balanced {...} span in s, ignoring braces inside strings."""
    s = s.strip()
    start = s.find("{")
    if start == -1:
        return s
    depth = 0
    in_str = False
    esc = False
    for i in range(start, len(s)):
        ch = s[i]
        if in_str:
            if esc:
                esc = False
            elif ch == "\\":
                esc = True
            elif ch == '"':
                in_str = False
        else:
            if ch == '"':
                in_str = True
            elif ch == "{":
                depth += 1
            elif ch == "}":
                depth -= 1
                if depth == 0:
                    return s[start:i + 1]
    # Unbalanced input: return everything from the first brace onward.
    return s[start:]

def json_repair_minimal(bad: str) -> str:
    """Drop anything after the last closing brace."""
    bad = bad.strip()
    last = bad.rfind("}")
    if last != -1:
        return bad[:last + 1]
    return bad

def safe_validate(model_cls, raw_text: str):
    raw = extract_json_object(raw_text)
    try:
        return model_cls.model_validate_json(raw)
    except Exception:
        raw2 = json_repair_minimal(raw)
        return model_cls.model_validate_json(raw2)

banner("2) Typed outputs (Literal / int / bool)")

sentiment = model(
    build_chat("Analyze the sentiment: 'This product completely changed my life!'. Return one label only."),
    Literal["Positive", "Negative", "Neutral"],
    max_new_tokens=8,
)
print("Sentiment:", sentiment)

bp = model(build_chat("What's the boiling point of water in Celsius? Return integer only."), int, max_new_tokens=8)
print("Boiling point (int):", bp)

prime = model(build_chat("Is 29 a prime number? Return true or false only."), bool, max_new_tokens=6)
print("Is prime (bool):", prime)
```

We implement robust JSON extraction and minimal repair utilities to safely recover structured outputs from imperfect generations. We then demonstrate strongly typed generation using Literal, int, and bool, ensuring the model returns values that are strictly constrained. We validate how Outlines enforces these type-safe outputs directly at generation time.

```python
banner("3) Prompt templating (outlines.Template)")

tmpl = outlines.Template.from_string(textwrap.dedent("""
<|system|>
You are a strict classifier. Return ONLY one label.
<|user|>
Classify sentiment of this text:
{{ text }}
Labels: Positive, Negative, Neutral
<|assistant|>
""").strip())

templated = model(tmpl(text="The food was cold but the staff were kind."), Literal["Positive", "Negative", "Neutral"], max_new_tokens=8)
print("Template sentiment:", templated)
```

We use outlines.Template to build structured prompt templates with strict output control. We dynamically inject user input into the template while preserving role formatting and classification constraints. We demonstrate how templating improves reusability and ensures consistent, constrained responses.
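To make the rendering step concrete without loading a model, here is a minimal stand-in that substitutes `{{ name }}` placeholders using only the standard library. The `render` helper is hypothetical and supports plain variable substitution only; it illustrates what the rendered prompt looks like and is not how Outlines implements its templates.

```python
import re

TEMPLATE = """<|system|>
You are a strict classifier. Return ONLY one label.
<|user|>
Classify sentiment of this text:
{{ text }}
Labels: Positive, Negative, Neutral
<|assistant|>"""

def render(template: str, **values: str) -> str:
    """Replace each {{ name }} placeholder; raises KeyError if a value is missing."""
    return re.sub(
        r"\{\{\s*(\w+)\s*\}\}",
        lambda m: str(values[m.group(1)]),
        template,
    )

prompt = render(TEMPLATE, text="The food was cold but the staff were kind.")
print(prompt)
```

Keeping the role markers and label list in the template, and passing only the user text as a variable, means every call produces the same prompt shape.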

```python
banner("4) Pydantic structured output (advanced constraints)")

class TicketPriority(str, Enum):
    low = "low"
    medium = "medium"
    high = "high"
    urgent = "urgent"

# Regex-constrained string types: a dotted-quad IPv4 address and a YYYY-MM-DD date.
IPv4 = Annotated[str, Field(pattern=r"^((25[0-5]|2[0-4]\d|[01]?\d\d?)\.){3}(25[0-5]|2[0-4]\d|[01]?\d\d?)$")]
ISODate = Annotated[str, Field(pattern=r"^\d{4}-\d{2}-\d{2}$")]

class ServiceTicket(BaseModel):
    priority: TicketPriority
    category: Literal["billing", "login", "bug", "feature_request", "other"]
    requires_manager: bool
    summary: str = Field(min_length=10, max_length=220)
    action_items: List[str] = Field(min_length=1, max_length=6)

class NetworkIncident(BaseModel):
    affected_service: Literal["dns", "vpn", "api", "website", "database"]
    severity: Literal["sev1", "sev2", "sev3"]
    public_ip: IPv4
    start_date: ISODate
    mitigation: List[str] = Field(min_length=2, max_length=6)

email = """
Subject: URGENT - Cannot access my account after payment
I paid for the premium plan 3 hours ago and still can't access any features.
I have a client presentation in an hour and need the analytics dashboard.
Please fix this immediately or refund my payment.
""".strip()

ticket_text = model(
    build_chat(
        "Extract a ServiceTicket from this message.\n"
        "Return JSON ONLY matching the ServiceTicket schema.\n"
        "Action items must be distinct.\n\nMESSAGE:\n" + email
    ),
    ServiceTicket,
    max_new_tokens=240,
)

# The model call may return raw JSON text; validate it into a ServiceTicket.
ticket = safe_validate(ServiceTicket, ticket_text) if isinstance(ticket_text, str) else ticket_text
print("ServiceTicket JSON:\n", ticket.model_dump_json(indent=2))
```

We define advanced Pydantic schemas with enums, regex constraints, field limits, and structured lists. We extract a complex ServiceTicket object from raw email text and validate it using schema-driven decoding. We also apply safe validation logic to handle edge cases, such as stray text around the JSON, before the result is used.
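The regex constraints are easy to check in isolation. The sketch below compiles the IPv4 and date patterns with the standard `re` module and runs them over a few hypothetical sample strings. It also shows a limit worth knowing: the date pattern only checks the shape YYYY-MM-DD, so a separate calendar check (here via `datetime.date.fromisoformat`, an addition not present in the tutorial) is needed to reject impossible dates.

```python
import re
from datetime import date

IPV4 = re.compile(r"^((25[0-5]|2[0-4]\d|[01]?\d\d?)\.){3}(25[0-5]|2[0-4]\d|[01]?\d\d?)$")
ISO_DATE = re.compile(r"^\d{4}-\d{2}-\d{2}$")

def ipv4_ok(s: str) -> bool:
    """True if s is four dot-separated octets, each in 0..255."""
    return IPV4.match(s) is not None

def iso_shape_ok(s: str) -> bool:
    """True if s looks like YYYY-MM-DD (digits and dashes only)."""
    return ISO_DATE.match(s) is not None

def is_real_date(s: str) -> bool:
    """Shape check plus a calendar check, which the regex alone cannot do."""
    if not iso_shape_ok(s):
        return False
    try:
        date.fromisoformat(s)
        return True
    except ValueError:
        return False

for ip in ["192.168.1.10", "256.1.1.1", "10.0.0"]:
    print(ip, ipv4_ok(ip))

for d in ["2024-02-29", "2024-13-45", "2024-2-3"]:
    print(d, iso_shape_ok(d), is_real_date(d))
```

If calendar validity matters for a field like `start_date`, a `datetime.date` field type or a custom validator would be a stricter choice than a pattern-constrained string.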

```python
banner("5) Function-calling style (schema -> args -> call)")

class AddArgs(BaseModel):
    a: int = Field(ge=-1000, le=1000)
    b: int = Field(ge=-1000, le=1000)

def add(a: int, b: int) -> int:
    return a + b

args_text = model(
    build_chat("Return JSON ONLY with two integers a and b. Make a odd and b even."),
    AddArgs,
    max_new_tokens=80,
)

# Validate the generated arguments before passing them to the function.
args = safe_validate(AddArgs, args_text) if isinstance(args_text, str) else args_text
print("Args:", args.model_dump())
print("add(a,b) =", add(args.a, args.b))

print("Tip: For best speed and fewer truncations, switch Colab Runtime → GPU.")
```

We implement a function-calling style workflow by generating structured arguments that conform to a defined schema. We validate the generated arguments, then safely execute a Python function with those validated inputs. We demonstrate how schema-first generation enables controlled tool invocation and reliable LLM-driven computation.
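The validate-then-call pattern generalizes to a small tool registry. The sketch below is a standard-library stand-in: a frozen dataclass plays the role of the Pydantic `AddArgs` schema, and the `TOOLS` registry and `call_tool` helper are hypothetical names introduced for illustration. The point is that the function only ever runs after its arguments pass type and range checks.

```python
import json
from dataclasses import asdict, dataclass

@dataclass(frozen=True)
class AddArgs:
    a: int
    b: int

    def __post_init__(self):
        for name in ("a", "b"):
            value = getattr(self, name)
            # bool is a subclass of int, so reject it explicitly.
            if isinstance(value, bool) or not isinstance(value, int):
                raise TypeError(f"{name} must be an int")
            if not -1000 <= value <= 1000:
                raise ValueError(f"{name} must be between -1000 and 1000")

def add(a: int, b: int) -> int:
    return a + b

# Each tool name maps to its argument schema and the function to execute.
TOOLS = {"add": (AddArgs, add)}

def call_tool(name: str, raw_json: str):
    """Parse JSON arguments, validate them against the schema, then call the tool."""
    args_cls, fn = TOOLS[name]
    payload = json.loads(raw_json)
    args = args_cls(**payload)  # unexpected or missing keys raise TypeError
    return fn(**asdict(args))

print(call_tool("add", '{"a": 3, "b": 4}'))
```

Looking tools up by name in a fixed registry, rather than evaluating model output, keeps the set of callable functions explicit.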

In conclusion, we implemented a fully structured generation pipeline using Outlines with strong typing, schema validation, and controlled decoding. We demonstrated how to move from simple typed outputs to advanced Pydantic-based extraction and function-style execution patterns. We also built resilience through JSON salvage and validation mechanisms, making the system robust against imperfect model outputs. Overall, we created a practical, production-oriented framework for safe, schema-driven LLM applications.
