# Introduction

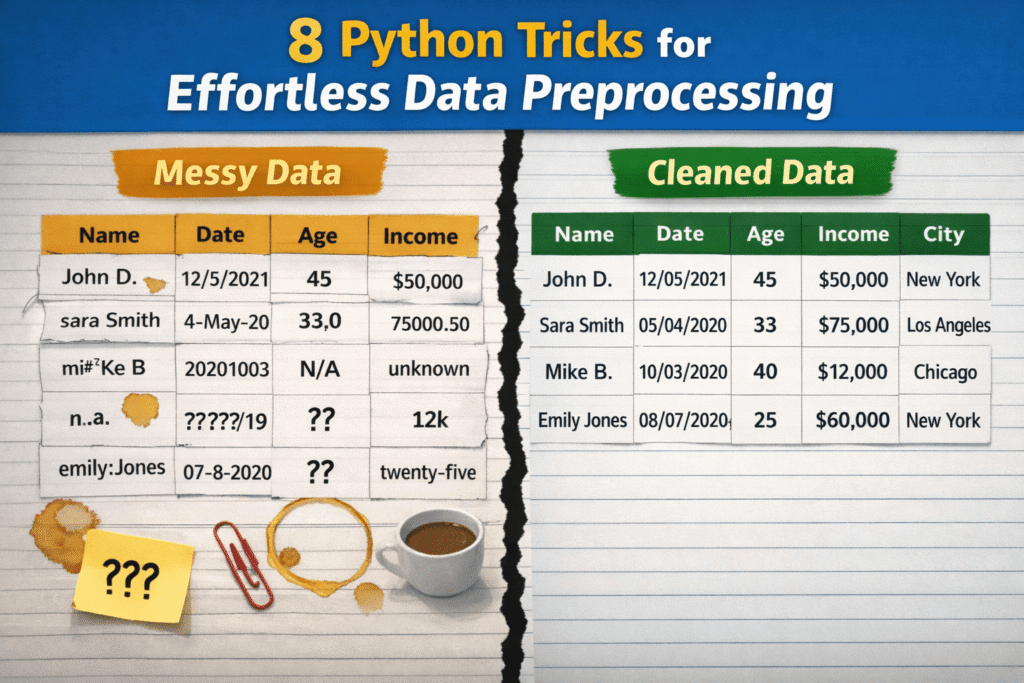

While data preprocessing is highly relevant in data science and machine learning workflows, these processes are often not carried out properly, largely because they are perceived as overly complex, time-consuming, or requiring extensive custom code. As a result, practitioners may delay essential tasks like data cleaning, rely on brittle ad-hoc solutions that are unsustainable in the long run, or over-engineer solutions to problems that are simple at their core.

This article presents 8 Python tricks to turn raw, messy data into clean, neatly preprocessed data with minimal effort.

Before looking at the specific tricks and accompanying code examples, the following preamble code sets up the necessary libraries and defines a toy dataset to illustrate each trick:

    import pandas as pd
    import numpy as np

    # A tiny, deliberately messy dataset
    df = pd.DataFrame({
        " User Name ": [" Alice ", "bob", "Bob", "alice", None],
        "Age": ["25", "30", "?", "120", "28"],
        "Income$": ["50000", "60000", None, "1000000", "55000"],
        "Join Date": ["2023-01-01", "01/15/2023", "not a date", None, "2023-02-01"],
        "City": ["New York", "new york ", "NYC", "New York", "nyc"],
    })

# 1. Normalizing Column Names Instantly

This is a very useful one-liner: in a single line of code, it normalizes the names of all columns in a dataset. The specifics depend on how exactly you want to normalize your attribute names, but the following example shows how to strip surrounding whitespace, lowercase everything, and replace inner spaces with underscores, thereby ensuring a consistent, standardized naming convention. This helps prevent annoying bugs in downstream tasks caused by typos or inconsistent names. No need to iterate column by column!

    df.columns = df.columns.str.strip().str.lower().str.replace(" ", "_")
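If you also want to collapse repeated spaces or drop symbols such as "$", the same idea extends naturally. The following is a minimal sketch, not part of the original trick: the helper name normalize_columns and the demo frame are hypothetical, and the rest of this article keeps the column name income$ as produced by the one-liner above.

```python
import pandas as pd

# Hypothetical frame with inconsistent headers (illustration only)
demo = pd.DataFrame(columns=[" User Name ", "Age", "Income$", "Join  Date"])


def normalize_columns(frame: pd.DataFrame) -> pd.DataFrame:
    """Return a copy with trimmed, lowercased, snake_case column names."""
    cleaned = (
        frame.columns.str.strip()
        .str.lower()
        # collapse any run of whitespace into a single underscore
        .str.replace(r"\s+", "_", regex=True)
        # drop every character that is not a digit, a-z, or underscore
        .str.replace(r"[^0-9a-z_]", "", regex=True)
    )
    out = frame.copy()
    out.columns = cleaned
    return out


demo = normalize_columns(demo)
print(list(demo.columns))
```

Whether stripping symbols is desirable depends on your naming rules; two different raw headers could collapse into the same cleaned name, so it is worth checking for duplicates afterwards.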

# 2. Stripping Whitespace from Strings at Scale

Sometimes you may only want to make sure that specific junk invisible to the human eye, like whitespace at the beginning or end of string (categorical) values, is systematically removed across an entire dataset. This approach does so for all columns containing strings, leaving other columns, like numeric ones, unchanged.

    df = df.apply(lambda s: s.str.strip() if s.dtype == "object" else s)
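One caveat worth knowing: an object column can hold a mix of strings and other values, and the .str accessor returns NaN for the non-string entries. The sketch below is one possible alternative under that assumption; it strips only values that are actually strings and skips numeric columns. The helper name strip_strings and the demo data are hypothetical.

```python
import pandas as pd

# Hypothetical data: "mixed" holds strings and a number in one column
demo = pd.DataFrame({
    "name": [" Alice ", "bob  ", None],
    "mixed": ["  x", 7, "y  "],
    "score": [1.5, 2.0, 3.25],
})


def strip_strings(frame: pd.DataFrame) -> pd.DataFrame:
    """Strip leading/trailing whitespace from string values only."""

    def strip_value(value):
        # Leave numbers, None, and NaN untouched
        return value.strip() if isinstance(value, str) else value

    def clean(col: pd.Series) -> pd.Series:
        if pd.api.types.is_numeric_dtype(col):
            return col  # numeric columns pass through unchanged
        return col.map(strip_value)

    return frame.apply(clean)


cleaned = strip_strings(demo)
print(cleaned)
```

This is slower than the vectorized one-liner on large frames, so it is mainly useful when you suspect mixed-type columns.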

# 3. Converting Numeric Columns Safely

If we are not 100% sure that all values in a numeric column follow the same format, it is generally a good idea to explicitly convert these values to a numeric type, turning what may be messy strings that look like numbers into actual numbers. In a single line, we can do what would otherwise require try-except blocks and a more manual cleaning procedure. Values that cannot be parsed, such as "?", become NaN.

    df["age"] = pd.to_numeric(df["age"], errors="coerce")
    df["income$"] = pd.to_numeric(df["income$"], errors="coerce")

Note that other classical approaches like df["column"].astype(float) raise an error as soon as they find a raw value that cannot be converted to a number.
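Because coercion silently turns bad values into NaN, it can help to check which raw values were lost. A minimal sketch, using a stand-alone series that mirrors the toy "age" column (the variable names are illustrative):

```python
import pandas as pd

raw_age = pd.Series(["25", "30", "?", "120", None], name="age")

# Safe conversion: unparseable strings become NaN instead of raising
age = pd.to_numeric(raw_age, errors="coerce")

# Values that were present in the raw data but could not be converted
newly_invalid = raw_age[age.isna() & raw_age.notna()]
print(newly_invalid.tolist())

# For comparison, the strict conversion fails on the same input
try:
    raw_age.dropna().astype(float)
    strict_failed = False
except (ValueError, TypeError) as exc:
    strict_failed = True
    print("astype(float) failed:", exc)
```

Inspecting newly_invalid before imputing anything makes it easier to tell genuine gaps apart from formatting problems.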

# 4. Parsing Dates with errors="coerce"

A similar validation-oriented procedure for a different data type. This trick converts date-time values that are valid and nullifies those that are not. Using errors="coerce" is key to telling Pandas that invalid, non-convertible values must be converted into NaT (Not a Time) instead of raising an error and crashing the program during execution.

    df["join_date"] = pd.to_datetime(df["join_date"], errors="coerce")
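The toy column mixes two date formats. Depending on the pandas version, to_datetime may infer a single format from the first value it can parse, in which case "01/15/2023" could also end up as NaT under errors="coerce". A minimal sketch that avoids relying on inference, assuming only these two formats occur, parses each format explicitly and combines the results:

```python
import pandas as pd

raw = pd.Series(["2023-01-01", "01/15/2023", "not a date", None, "2023-02-01"])

# Parse each expected format on its own; non-matching values become NaT
iso = pd.to_datetime(raw, format="%Y-%m-%d", errors="coerce")
us = pd.to_datetime(raw, format="%m/%d/%Y", errors="coerce")

# Prefer the ISO result and fall back to the US-style result
parsed = iso.fillna(us)
print(parsed)
```

Values that match neither format, along with missing ones, remain NaT and can be reviewed separately.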

# 5. Fixing Missing Values with Smart Defaults

For those unfamiliar with strategies to handle missing values other than dropping entire rows containing them, this approach imputes those values (fills the gaps) using statistically driven defaults like the median or mode. It is an efficient, one-liner-based strategy that can be adjusted with different default aggregates. The [0] index accompanying the mode selects a single value in case of ties between two or more "most frequent" values.

    df["age"] = df["age"].fillna(df["age"].median())
    df["city"] = df["city"].fillna(df["city"].mode()[0])
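The same pattern can be applied to every column at once. The sketch below is one possible generalization, not the article's method: the helper name impute and the demo frame are hypothetical. It also guards against a column that is entirely missing, where mode() returns an empty result and indexing it with [0] would fail.

```python
import numpy as np
import pandas as pd

# Hypothetical data, including a column with no values at all
demo = pd.DataFrame({
    "age": [25.0, 30.0, np.nan, 120.0, 28.0],
    "city": ["NYC", None, "NYC", "Boston", None],
    "notes": [None, None, None, None, None],
})


def impute(frame: pd.DataFrame) -> pd.DataFrame:
    """Fill numeric gaps with the median and other gaps with the mode."""
    out = frame.copy()
    for name in out.columns:
        col = out[name]
        if pd.api.types.is_numeric_dtype(col):
            out[name] = col.fillna(col.median())
        else:
            modes = col.mode()
            if not modes.empty:  # skip columns with nothing to learn from
                out[name] = col.fillna(modes.iloc[0])
    return out


filled = impute(demo)
print(filled)
```

The median is used for numeric columns because it is less sensitive to extreme values such as the 120 in "age".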

# 6. Standardizing Categories with Map

In categorical columns with varied values, such as cities, it is also necessary to standardize names and collapse inconsistencies to obtain cleaner group names and make downstream aggregations like groupby() reliable. Aided by a dictionary, this example maps the lowercased string values that refer to New York City to a single label, "NYC". Values without an entry in the dictionary come back from map() as NaN, so the trailing fillna(df["city"]) restores their original text.

    city_map = {"new york": "NYC", "nyc": "NYC"}
    df["city"] = df["city"].str.lower().map(city_map).fillna(df["city"])
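To see the fallback in action and to find values the dictionary does not cover yet, here is a minimal sketch on a stand-alone series. The "Boston" entry is made up for illustration and is not part of the toy dataset.

```python
import pandas as pd

city = pd.Series(["New York", "new york ", "NYC", "Boston", None])
city_map = {"new york": "NYC", "nyc": "NYC"}

# Build a normalized lookup key without touching the original values
key = city.str.strip().str.lower()

# Mapped values win; anything unmapped falls back to the trimmed original
standardized = key.map(city_map).fillna(city.str.strip())

# Keys with no dictionary entry, useful for extending city_map later
unmapped = sorted(set(key.dropna()) - set(city_map))

print(standardized.tolist())
print(unmapped)
```

Reviewing unmapped from time to time is a simple way to keep the dictionary in step with new spellings.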

# 7. Removing Duplicates Wisely and Flexibly

The key to this highly customizable duplicate removal strategy is the use of subset=["user_name"]. In this example, it tells Pandas to deem a row a duplicate only by looking at the "user_name" column and checking whether its value is identical to the one in an earlier row. It is a great way to ensure every unique user is represented only once in a dataset, preventing double counting, all in a single instruction. Note that the comparison is exact, so "bob" and "Bob" count as different users unless casing is normalized first.

    df = df.drop_duplicates(subset=["user_name"])
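If names that differ only in casing should be treated as the same user, one option is to deduplicate on a normalized key while leaving the stored names as they are. This is a sketch under that assumption; the users frame and variable names are illustrative, and rows with a missing name are deliberately kept rather than collapsed.

```python
import pandas as pd

users = pd.DataFrame({
    "user_name": ["Alice", "bob", "Bob", "alice", None],
    "age": [25, 30, 31, 120, 28],
})

# Exact matching sees five distinct names, so nothing is dropped
exact = users.drop_duplicates(subset=["user_name"])

# Case-insensitive key used only for the duplicate check
key = users["user_name"].str.lower()

# Keep the first row per key, and keep every row whose name is missing
deduped = users[~key.duplicated(keep="first") | key.isna()]

print(len(exact), len(deduped))
```

Which row to keep (first, last, or the most complete one) is a design choice; keep="first" simply mirrors the default behavior of drop_duplicates.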

# 8. Clipping Quantiles for Outlier Removal

The last trick consists of capping extreme values or outliers automatically, instead of removing them entirely. It is especially useful when outliers are assumed to come from manually introduced errors in the data, for instance. Clipping replaces the extreme values falling below the lower percentile or above the upper percentile (1 and 99 in the example) with those percentile values, leaving the original values that lie between the two percentiles unchanged. In simple terms, it keeps overly large or small values within limits.

    q_low, q_high = df["income$"].quantile([0.01, 0.99])
    df["income$"] = df["income$"].clip(q_low, q_high)
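To check what clipping actually changed, you can compare the series before and after. A minimal sketch on a stand-alone series that mirrors the toy income column (names are illustrative):

```python
import numpy as np
import pandas as pd

income = pd.Series([50000.0, 60000.0, np.nan, 1_000_000.0, 55000.0])

# quantile() ignores NaN by default and returns both bounds at once
q_low, q_high = income.quantile([0.01, 0.99])

clipped = income.clip(lower=q_low, upper=q_high)

# Rows whose value was moved to one of the bounds (NaN rows excluded)
changed = (clipped != income) & income.notna()

print(q_low, q_high)
print(clipped[changed])
```

On a sample this small, the 1st and 99th percentiles sit very close to the minimum and maximum, so the cap is mild; on larger datasets, or with tighter percentiles such as 5 and 95, the effect is more pronounced. Missing values stay missing after clipping.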

# Wrapping Up

This article illustrated eight useful tricks, tips, and strategies that can boost your data preprocessing pipelines in Python, making them more efficient, effective, and robust, all at the same time.

Iván Palomares Carrascosa is a leader, writer, speaker, and adviser in AI, machine learning, deep learning & LLMs. He trains and guides others in harnessing AI in the real world.