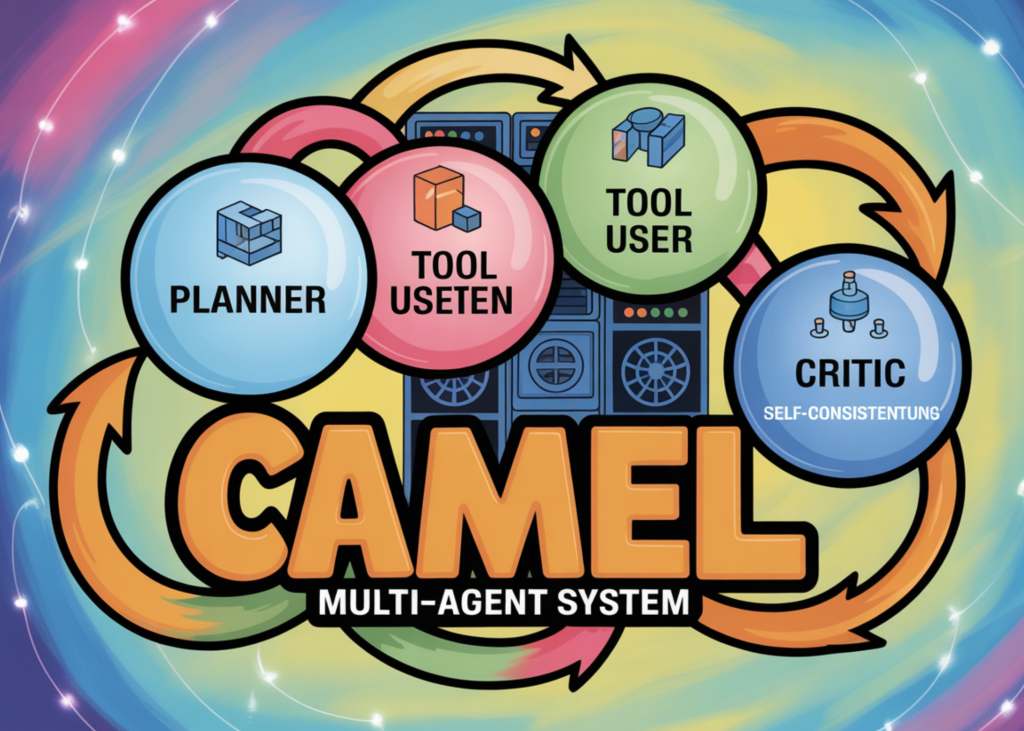

In this tutorial, we implement an advanced agentic AI system using the CAMEL framework, orchestrating multiple specialized agents to collaboratively solve a complex task. We design a structured multi-agent pipeline consisting of a planner, researcher, writer, critic, and rewriter, each with clearly defined responsibilities and schema-constrained outputs. We integrate tool usage, self-consistency sampling, structured validation with Pydantic, and iterative critique-driven refinement to build a robust, research-backed technical brief generator. Through this process, we demonstrate how modern agent architectures combine planning, reasoning, external tool interaction, and autonomous quality control within a single coherent workflow.

import os, sys, re, json, subprocess
from typing import List, Dict, Any, Optional, Tuple

def _pip_install(pkgs: List[str]):
    # Install or upgrade the given packages quietly in the current interpreter.
    subprocess.check_call([sys.executable, "-m", "pip", "install", "-q", "-U"] + pkgs)

_pip_install(["camel-ai[web_tools]~=0.2", "pydantic>=2.7", "rich>=13.7"])

from pydantic import BaseModel, Field
from rich.console import Console
from rich.panel import Panel
from rich.table import Table

console = Console()

def _get_colab_secret(name: str) -> Optional[str]:
    # Returns None outside Colab or when the secret is missing.
    try:
        from google.colab import userdata
        v = userdata.get(name)
        return v if v else None
    except Exception:
        return None

def ensure_openai_key():
    # Resolution order: environment variable, Colab secret, interactive prompt.
    if os.getenv("OPENAI_API_KEY"):
        return
    v = _get_colab_secret("OPENAI_API_KEY")
    if v:
        os.environ["OPENAI_API_KEY"] = v
        return
    try:
        from getpass import getpass
        k = getpass("Enter OPENAI_API_KEY (input hidden): ").strip()
        if k:
            os.environ["OPENAI_API_KEY"] = k
    except Exception:
        pass

ensure_openai_key()

if not os.getenv("OPENAI_API_KEY"):
    raise RuntimeError("OPENAI_API_KEY is not set. Add it via Colab Secrets (OPENAI_API_KEY) or paste it when prompted.")

We set up the execution environment and install all required dependencies directly within Colab. We securely configure the OpenAI API key using either Colab secrets or manual input. We also initialize the console utilities that allow us to render structured outputs cleanly during execution.
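The key lookup above follows a fallback chain: environment first, then other sources in order. As a minimal sketch of that pattern, the snippet below uses only the standard library. The names `resolve_secret`, `broken_provider`, `static_provider`, and the `DEMO_*` variables are hypothetical stand-ins for illustration, not part of CAMEL or Colab.

```python
import os
from typing import Callable, Iterable, Optional

def resolve_secret(
    name: str,
    fallbacks: Iterable[Callable[[str], Optional[str]]],
) -> Optional[str]:
    """Return the first non-empty value for `name`: environment first, then each fallback."""
    value = os.getenv(name)
    if value:
        return value
    for provider in fallbacks:
        try:
            value = provider(name)
        except Exception:
            value = None  # a failing provider should not abort the chain
        if value:
            return value
    return None

# Hypothetical providers standing in for a secret store and a manual prompt.
def broken_provider(name: str) -> Optional[str]:
    raise RuntimeError("secret store unavailable")

def static_provider(name: str) -> Optional[str]:
    return {"DEMO_API_KEY": "sk-demo"}.get(name)

# Make sure the demo variables are not already set in the environment.
os.environ.pop("DEMO_API_KEY", None)
os.environ.pop("DEMO_OTHER_KEY", None)

found = resolve_secret("DEMO_API_KEY", [broken_provider, static_provider])
missing = resolve_secret("DEMO_OTHER_KEY", [static_provider])
```

Keeping the providers as plain callables makes the chain easy to test without Colab or a terminal attached.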

from camel.models import ModelFactory
from camel.types import ModelPlatformType, ModelType
from camel.agents import ChatAgent
from camel.toolkits import SearchToolkit

def make_model(temperature: float = 0.2):
    # Shared model factory so every agent differs only in temperature.
    return ModelFactory.create(
        model_platform=ModelPlatformType.OPENAI,
        model_type=ModelType.GPT_4O,
        model_config_dict={"temperature": float(temperature)},
    )

def strip_code_fences(s: str) -> str:
    # Remove a leading ``` or ```json fence and a trailing ``` fence, if present.
    s = s.strip()
    s = re.sub(r"^```(?:json)?\s*", "", s, flags=re.IGNORECASE)
    s = re.sub(r"\s*```$", "", s)
    return s.strip()

def extract_first_json_object(s: str) -> str:
    # Scan for the first balanced {...} span; fall back to a greedy regex match.
    s2 = strip_code_fences(s)
    start = None
    stack = []
    for i, ch in enumerate(s2):
        if ch == "{":
            if start is None:
                start = i
            stack.append("{")
        elif ch == "}":
            if stack:
                stack.pop()
                if not stack and start is not None:
                    return s2[start:i+1]
    m = re.search(r"\{[\s\S]*\}", s2)
    if m:
        return m.group(0)
    return s2

We import the core CAMEL components and define the model factory used across all agents. We implement helper utilities to clean and extract JSON reliably from LLM responses. This ensures that our multi-agent pipeline remains structurally robust even when models return formatted text.
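Brace counting treats braces inside JSON string values as structural, so a value such as `"camel {agents}"` can throw the count off. One possible alternative, sketched here under that assumption, lets the standard library's `json.JSONDecoder.raw_decode` find the first decodable object instead. The helper name `first_json_object` is hypothetical and is not part of the tutorial's code.

```python
import json
from typing import Any, Optional

def first_json_object(text: str) -> Optional[Any]:
    """Return the first decodable JSON object in `text`, or None if there is none."""
    decoder = json.JSONDecoder()
    pos = text.find("{")
    while pos != -1:
        try:
            # raw_decode parses one JSON value starting at `pos` and ignores trailing text.
            obj, _end = decoder.raw_decode(text, pos)
            return obj
        except json.JSONDecodeError:
            # Not valid JSON from this brace; try the next opening brace.
            pos = text.find("{", pos + 1)
    return None

fence = "`" * 3  # build the markdown fence without writing it literally
reply = (
    "Sure, here is the result:\n"
    + fence + "json\n"
    + '{"query": "camel {agents}", "key_points": ["a", "b"]}'
    + "\n" + fence + "\nDone."
)
parsed = first_json_object(reply)
skipped = first_json_object('note {not json} then {"a": 1}')
```

Because the decoder understands string literals, no separate fence-stripping step is needed for this case; whether that trade-off suits a given pipeline depends on how malformed the model output tends to be.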

class PlanTask(BaseModel):
    id: str = Field(..., min_length=1)
    title: str = Field(..., min_length=1)
    objective: str = Field(..., min_length=1)
    deliverable: str = Field(..., min_length=1)
    tool_hints: List[str] = Field(default_factory=list)
    risks: List[str] = Field(default_factory=list)

class Plan(BaseModel):
    goal: str
    assumptions: List[str] = Field(default_factory=list)
    tasks: List[PlanTask]
    success_criteria: List[str] = Field(default_factory=list)

class EvidenceItem(BaseModel):
    query: str
    notes: str
    key_points: List[str] = Field(default_factory=list)

class Critique(BaseModel):
    score_0_to_10: float = Field(..., ge=0, le=10)
    strengths: List[str] = Field(default_factory=list)
    issues: List[str] = Field(default_factory=list)
    fix_plan: List[str] = Field(default_factory=list)

class RunConfig(BaseModel):
    goal: str
    max_tasks: int = 5
    max_searches_per_task: int = 2
    max_revision_rounds: int = 1
    self_consistency_samples: int = 2

DEFAULT_GOAL = "Create a concise, evidence-backed technical brief explaining CAMEL (the multi-agent framework), its core abstractions, and a practical recipe to build a tool-using multi-agent pipeline (planner/researcher/writer/critic) with safeguards."

cfg = RunConfig(goal=DEFAULT_GOAL)

# DuckDuckGo search exposed as a tool for the researcher agent.
search_tool = SearchToolkit().search_duckduckgo

We define all structured schemas using Pydantic for planning, evidence, critique, and runtime configuration. We formalize the agent communication protocol so that every step is validated and typed. This allows us to transform free-form LLM outputs into predictable, production-ready data structures.
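To make the validation step concrete, here is a small sketch of how a schema like `Critique` accepts a well-formed response and rejects an out-of-range score. It assumes Pydantic v2 is installed; `CritiqueSketch` is a trimmed, hypothetical copy of the schema used only for this illustration.

```python
from typing import List
from pydantic import BaseModel, Field, ValidationError

class CritiqueSketch(BaseModel):
    # Same constraints as the tutorial's Critique, reduced to two fields.
    score_0_to_10: float = Field(..., ge=0, le=10)
    issues: List[str] = Field(default_factory=list)

# A well-formed response parses into a typed object.
ok = CritiqueSketch.model_validate_json(
    '{"score_0_to_10": 7.5, "issues": ["thin evidence"]}'
)

# A score outside 0..10 is rejected instead of silently passing downstream.
try:
    CritiqueSketch.model_validate_json('{"score_0_to_10": 11}')
    rejected = False
    bad_fields = []
except ValidationError as exc:
    rejected = True
    bad_fields = [err["loc"][0] for err in exc.errors()]

# Omitted list fields fall back to an empty list via default_factory.
defaulted = CritiqueSketch(score_0_to_10=3)
```

Failing fast at the schema boundary means a malformed critique stops the pipeline at the point where it was produced, rather than surfacing later as a confusing error in the revision loop.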

# Literal braces in the schema are doubled because the string is passed through str.format.
planner_system = (
    "You are a senior agent architect. Produce a compact, high-leverage plan for achieving the goal.\n"
    "Return ONLY valid JSON that matches this schema:\n"
    "{{\"goal\": \"...\", \"assumptions\": [\"...\"], \"tasks\": "
    "[{{\"id\": \"T1\", \"title\": \"...\", \"objective\": \"...\", \"deliverable\": \"...\", "
    "\"tool_hints\": [\"...\"], \"risks\": [\"...\"]}}], "
    "\"success_criteria\": [\"...\"]}}\n"
    "Constraints: tasks length <= {max_tasks}. Each task should be executable with web search + reasoning."
).format(max_tasks=cfg.max_tasks)

planner = ChatAgent(system_message=planner_system, model=make_model(0.1))

researcher = ChatAgent(
    system_message=(
        "You are a meticulous research agent. Use the web search tool when useful.\n"
        "You must:\n"
        "- Search for authoritative sources (docs, official repos) first.\n"
        "- Write notes that are directly relevant to the task objective.\n"
        "- Return ONLY valid JSON:\n"
        "{\"query\": \"...\", \"notes\": \"...\", \"key_points\": [\"...\"]}\n"
        "Do not include markdown code fences."
    ),
    model=make_model(0.2),
    tools=[search_tool],
)

writer = ChatAgent(
    system_message=(
        "You are a technical writer agent. You will be given a goal, a plan, and evidence notes.\n"
        "Write a deliverable that is clear, actionable, and concise.\n"
        "Include:\n"
        "- A crisp overview\n"
        "- Key abstractions and how they connect\n"
        "- A practical implementation recipe\n"
        "- Minimal caveats/limitations\n"
        "Do NOT fabricate citations. If evidence is thin, state uncertainty.\n"
        "Return plain text only."
    ),
    model=make_model(0.3),
)

critic = ChatAgent(
    system_message=(
        "You are a strict reviewer. Evaluate the draft against the goal, correctness, and completeness.\n"
        "Return ONLY valid JSON:\n"
        "{\"score_0_to_10\": 0.0, \"strengths\": [\"...\"], \"issues\": [\"...\"], \"fix_plan\": [\"...\"]}\n"
        "Do not include markdown code fences."
    ),
    model=make_model(0.0),
)

rewriter = ChatAgent(
    system_message=(
        "You are a revising editor. Improve the draft based on critique. Preserve factual accuracy.\n"
        "Return the improved draft as plain text only."
    ),
    model=make_model(0.25),
)

We construct the specialized agents: planner, researcher, writer, critic, and rewriter. We define their system roles carefully to enforce task boundaries and structured behavior. This establishes the modular multi-agent architecture that enables collaboration and iterative refinement.
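One detail of the planner prompt is easy to get wrong: a JSON schema embedded in a string that is later passed through `str.format` needs its literal braces doubled. The sketch below illustrates this with a shortened, hypothetical template (`TEMPLATE` is not the tutorial's full prompt) and shows what happens when the braces are left single.

```python
import json

# Literal braces are doubled so that str.format fills only {max_tasks}.
TEMPLATE = (
    "Return ONLY valid JSON that matches this schema:\n"
    '{{"goal": "...", "tasks": [{{"id": "T1", "title": "..."}}]}}\n'
    "Constraints: tasks length <= {max_tasks}."
)

prompt = TEMPLATE.format(max_tasks=5)

# After formatting, the schema line contains single braces and is valid JSON.
schema_line = prompt.splitlines()[1]
example = json.loads(schema_line)

# With single braces, format() tries to treat '"goal"' as a field name and fails.
try:
    '{"goal": "..."} limit {max_tasks}'.format(max_tasks=5)
    unescaped_failed = False
except (KeyError, ValueError, IndexError):
    unescaped_failed = True
```

Prompts that are not formatted, like the researcher and critic system messages, keep single braces, which is why the two styles appear side by side in the agent definitions.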

def plan_goal(goal: str) -> Plan:
    resp = planner.step("GOAL:\n" + goal + "\n\nReturn JSON plan now.")
    raw = resp.msgs[0].content if hasattr(resp, "msgs") else resp.msg.content
    js = extract_first_json_object(raw)
    try:
        return Plan.model_validate_json(js)
    except Exception:
        # Fall back to Python-side parsing before validating.
        return Plan.model_validate(json.loads(js))

def research_task(task: PlanTask, goal: str, k: int) -> EvidenceItem:
    prompt = (
        "GOAL:\n" + goal + "\n\nTASK:\n" + task.model_dump_json(indent=2) + "\n\n"
        f"Perform research. Use at most {k} web searches. First search official documentation or GitHub if relevant."
    )
    resp = researcher.step(prompt)
    raw = resp.msgs[0].content if hasattr(resp, "msgs") else resp.msg.content
    js = extract_first_json_object(raw)
    try:
        return EvidenceItem.model_validate_json(js)
    except Exception:
        return EvidenceItem.model_validate(json.loads(js))

def draft_with_self_consistency(goal: str, plan: Plan, evidence: List[Tuple[PlanTask, EvidenceItem]], n: int) -> str:
    # Flatten (task, evidence) pairs into a JSON-serializable payload for the writer.
    packed_evidence = []
    for t, ev in evidence:
        packed_evidence.append({
            "task_id": t.id,
            "task_title": t.title,
            "objective": t.objective,
            "notes": ev.notes,
            "key_points": ev.key_points
        })
    payload = {
        "goal": goal,
        "assumptions": plan.assumptions,
        "tasks": [t.model_dump() for t in plan.tasks],
        "evidence": packed_evidence,
        "success_criteria": plan.success_criteria,
    }

    # Sample n candidate drafts from the writer.
    drafts = []
    for _ in range(max(1, n)):
        resp = writer.step("INPUT:\n" + json.dumps(payload, ensure_ascii=False, indent=2))
        txt = resp.msgs[0].content if hasattr(resp, "msgs") else resp.msg.content
        drafts.append(txt.strip())

    if len(drafts) == 1:
        return drafts[0]

    # A separate deterministic agent picks the best candidate.
    selector = ChatAgent(
        system_message=(
            "You are a selector agent. Choose the best draft among candidates for correctness, clarity, and actionability.\n"
            "Return ONLY the winning draft text, unchanged."
        ),
        model=make_model(0.0),
    )
    resp = selector.step("GOAL:\n" + goal + "\n\nCANDIDATES:\n" + "\n\n---\n\n".join([f"[DRAFT {i+1}]\n{d}" for i, d in enumerate(drafts)]))
    return (resp.msgs[0].content if hasattr(resp, "msgs") else resp.msg.content).strip()

We implement the orchestration logic for planning, research, and self-consistent drafting. We aggregate structured evidence and generate multiple candidate drafts to improve robustness. We then select the best draft through an additional evaluation agent, simulating ensemble-style reasoning.
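The sample-then-select control flow can be exercised without any model calls. The sketch below replaces the writer and selector with plain callables. It also makes one deliberate change from the code above: the selector returns an index rather than the draft text, so the winning draft cannot be reworded in transit. The names `draft_and_select`, `fake_writer`, and `longest_draft` are hypothetical and exist only for this illustration.

```python
from typing import Callable, List

def draft_and_select(
    generate: Callable[[int], str],
    select: Callable[[List[str]], int],
    n: int,
) -> str:
    """Generate up to n drafts and return the one the selector points at."""
    drafts = [generate(i).strip() for i in range(max(1, n))]
    if len(drafts) == 1:
        return drafts[0]  # nothing to choose between
    idx = select(drafts)
    # Guard against a selector returning an out-of-range index.
    if not 0 <= idx < len(drafts):
        idx = 0
    return drafts[idx]

# Stub generator standing in for repeated writer calls.
CANDIDATES = ["short", "  a much longer and more detailed draft  ", "mid draft"]

def fake_writer(i: int) -> str:
    return CANDIDATES[i]

# Stub selector standing in for an LLM judge: picks the longest draft.
def longest_draft(drafts: List[str]) -> int:
    return max(range(len(drafts)), key=lambda j: len(drafts[j]))

best = draft_and_select(fake_writer, longest_draft, n=3)
single = draft_and_select(fake_writer, longest_draft, n=0)
```

In a real pipeline the selector would still be an agent call; asking it for an index is one way to keep its output short and easy to validate.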

def critique_text(goal: str, draft: str) -> Critique:
    resp = critic.step("GOAL:\n" + goal + "\n\nDRAFT:\n" + draft + "\n\nReturn critique JSON now.")
    raw = resp.msgs[0].content if hasattr(resp, "msgs") else resp.msg.content
    js = extract_first_json_object(raw)
    try:
        return Critique.model_validate_json(js)
    except Exception:
        return Critique.model_validate(json.loads(js))

def revise(goal: str, draft: str, critique: Critique) -> str:
    resp = rewriter.step(
        "GOAL:\n" + goal +
        "\n\nCRITIQUE:\n" + critique.model_dump_json(indent=2) +
        "\n\nDRAFT:\n" + draft +
        "\n\nRewrite now."
    )
    return (resp.msgs[0].content if hasattr(resp, "msgs") else resp.msg.content).strip()

def pretty_plan(plan: Plan):
    # Render the plan as a rich table.
    tab = Table(title="Agent Plan", show_lines=True)
    tab.add_column("ID", style="bold")
    tab.add_column("Title")
    tab.add_column("Objective")
    tab.add_column("Deliverable")
    for t in plan.tasks:
        tab.add_row(t.id, t.title, t.objective, t.deliverable)
    console.print(tab)

def run(cfg: RunConfig):
    console.print(Panel.fit("CAMEL Advanced Agentic Tutorial Runner", style="bold"))
    plan = plan_goal(cfg.goal)
    pretty_plan(plan)

    # Research each planned task, capped at max_tasks.
    evidence = []
    for task in plan.tasks[: cfg.max_tasks]:
        ev = research_task(task, cfg.goal, cfg.max_searches_per_task)
        evidence.append((task, ev))

    console.print(Panel.fit("Drafting (self-consistency)", style="bold"))
    draft = draft_with_self_consistency(cfg.goal, plan, evidence, cfg.self_consistency_samples)

    # Critique, then revise until the score reaches 8.5 or the round budget is spent.
    for r in range(cfg.max_revision_rounds + 1):
        crit = critique_text(cfg.goal, draft)
        console.print(Panel.fit(f"Critique round {r+1} — score {crit.score_0_to_10:.1f}/10", style="bold"))
        if crit.strengths:
            console.print(Panel("Strengths:\n- " + "\n- ".join(crit.strengths), title="Strengths"))
        if crit.issues:
            console.print(Panel("Issues:\n- " + "\n- ".join(crit.issues), title="Issues"))
        if crit.fix_plan:
            console.print(Panel("Fix plan:\n- " + "\n- ".join(crit.fix_plan), title="Fix plan"))
        if crit.score_0_to_10 >= 8.5 or r >= cfg.max_revision_rounds:
            break
        draft = revise(cfg.goal, draft, crit)

    console.print(Panel.fit("FINAL DELIVERABLE", style="bold green"))
    console.print(draft)

run(cfg)

We implement the critique-and-revision loop to enforce quality control. We score the draft, identify weaknesses, and iteratively refine it as needed. Finally, we execute the full pipeline, producing a structured, research-backed deliverable through coordinated collaboration among agents.
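The stopping rule of the critique loop (stop once the score reaches the threshold or the round budget is exhausted) can be checked in isolation. The following sketch mirrors that rule with stub functions in place of the critic and rewriter agents. `refine`, `stub_critic`, and `stub_reviser` are hypothetical names, and the scoring formula is invented purely to make the behavior deterministic.

```python
from typing import Callable, List, Tuple

def refine(
    draft: str,
    critique: Callable[[str], float],
    revise: Callable[[str], str],
    max_rounds: int,
    threshold: float = 8.5,
) -> Tuple[str, List[float]]:
    """Critique, then revise, until the score passes the threshold or rounds run out."""
    history: List[float] = []
    for r in range(max_rounds + 1):
        score = critique(draft)
        history.append(score)
        # The final critique is still recorded, but no revision follows it.
        if score >= threshold or r >= max_rounds:
            break
        draft = revise(draft)
    return draft, history

# Stub critic: each "+" marker added by a revision is worth 1.5 points.
def stub_critic(text: str) -> float:
    return 6.0 + 1.5 * text.count("+")

# Stub reviser: appends one marker per revision.
def stub_reviser(text: str) -> str:
    return text + "+"

passed = refine("v0", stub_critic, stub_reviser, max_rounds=3)
capped = refine("v0", stub_critic, stub_reviser, max_rounds=1)
```

With `max_rounds=1` the loop critiques twice but revises only once, which matches the default `max_revision_rounds` in `RunConfig`: the last critique is reported even though no further rewrite follows.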

In conclusion, we built a production-style CAMEL-based multi-agent system that goes well beyond simple prompt chaining. We structured agent communication through validated schemas, incorporated web search tools for grounded reasoning, applied self-consistency to improve output reliability, and enforced quality using an internal critic loop. By combining these techniques, we showed how to assemble scalable, modular, and reliable agentic pipelines suitable for real-world AI applications.
