ZDNET’s key takeaways

- Gemini can analyze YouTube, MP4, and MOV files.

- Claude still cannot handle video content directly.

- ChatGPT requires assistance from Codex for more advanced video tasks.

AI tools are great at processing text from prompts and documents. Most handle image interpretation effectively, but what about video? Can your preferred AI actually make sense of video content? And if it can, what can you do with that capability?

In this piece, we evaluate ChatGPT, Claude, and Gemini to assess their ability to understand video content, whether from YouTube or locally stored files. The findings are unexpected.

The tests

I gave each AI a collection of three videos. The first is a YouTube video I posted last year explaining the science of annealing (yes, I am just as thrilling on camera as I am in ZDNET articles). I checked whether each AI could grasp the video’s content. After that, I attempted to see if it could design a better thumbnail than the one I used on my YouTube channel.

The second video is a motion test for the DJI Neo 2 drone. It simply shows me standing in front of the drone, using hand gestures to direct its flight path. There is no audio. I wanted to determine if the AI understood what was occurring. That file is in MP4 format.

Lastly, I have the original MOV file for a walk-and-talk about my YouTube content strategy, which I shared on YouTube last year. The local file is identical to the version I uploaded, but I used the local copy for my AI evaluation because I wanted to see how well the AI could figure out what I was discussing without any metadata, transcripts, or cues from YouTube. It was purely the video content itself. If you want to see the uploaded version, here is a link.

I tested the latest and strongest models on three plans: the $20-per-month ChatGPT Plus plan, the $20-per-month Gemini Pro plan, and the $100-per-month Claude Max plan, which I use for Claude Code.

My prompt was straightforward: “Can you watch this video?” I discovered that “watch this video” was more effective than “understand” or “summarize,” because both of those caused the AIs to search for metadata. On the other hand, when I asked the AIs to “watch” the videos, they didn’t all search for metadata. All three AIs seemed to recognize that “watch” meant I wanted them to actually view the videos themselves and show comprehension of what was included.

And with that, let’s dive in.

Claude: nope

Sometimes dragging out the disappointment is just unnecessary. Just rip that bandage off quickly. So, Claude is a flat no. Neither the app nor the web interface can do it. Well, let’s hear it say so, shall we?

Claude states, “I cannot view video content directly. I am unable to watch videos. I lack the capability to handle video or audio material from YouTube links. I do not have the functionality to process the visual or audio components of an MP4 file. Same response here — I cannot directly view or listen to a .mov file. I do not handle video or audio content.”

And that is how it goes.

On the plus side, Claude performs exceptionally well in other areas. For instance, I once used it to organize an entire hiking adventure to the Adirondack Mountains in 30 minutes – at no cost. Now that Claude gets right. But it simply cannot manage video.

Gemini: yes, indeed

Gemini does have a dedicated app, but I found it unnecessary. Gemini’s web interface handled all video formats wonderfully.

Whether I gave Gemini a YouTube URL, a 625MB MP4 file, or a massive 1.65GB MOV file, Gemini could manage it straight from a browser tab.

The most fascinating one is my drone test. That is because the video has no audio (not even ambient noise) and no context other than me standing there and waving my arms.

And yet, Gemini was able to figure out precisely what I was doing:

In the video, you are experimenting with some hand gestures — lifting your palm toward the camera as though telling it to stop or move. The camera responds to your signals, shifting its angle and distance as you direct it through the yard and eventually back toward the house.

Looks like a successful test of those drone gestures! Is there something specific about the footage or the camera’s behavior you wanted to discuss?

I mean, seriously, wow. Let me be clear. The drone itself was not visible in the video. It was functioning as the camera. I suspect there are plenty of people who would not understand what was happening there (I am looking at you, my neighbors!), let alone an AI.

It did successfully grasp my annealing video. It could identify segments, report on specific points I made verbally, and otherwise show its comprehension.

It also understood the uploaded walk-and-talk video, recognizing not only the location but also the various elements of my commentary throughout the video.

The one area where Gemini stumbled was in the shift from Gemini’s understanding mode to Nano Banana’s image mode. Despite Nano Banana’s ability to produce impressive images, it does not comprehend life and the world the way the new ChatGPT Images 2.0 does, and it shows.

I gave Gemini the original thumbnail for the video and instructed it, “Select a single frame for the greatest impact as a YouTube thumbnail, then, based on the context of the video and my existing YouTube thumbnail style, use Nano Banana to generate a high-click-value thumbnail.”

The image on the left is my original thumbnail. The next two were Gemini’s attempts. They are certainly eye-catching and might draw more clicks, but Gemini chose to invent a bearded man and insert him in the image rather than use my suave and sophisticated face. And it spelled “FIRE” as “FCIRE.” And that is how it goes.

If you want to quickly gain an understanding of a video or ask questions about it, Gemini is definitely the best option. If you want to create thoughtful imagery from the video, Gemini is not yet ready for that.

ChatGPT: partially, with some extra effort

Then there is ChatGPT, which is more like a bad news, good news situation.

First, the bad news. ChatGPT itself failed my tests. It could not read the YouTube link. And while it theoretically can understand videos, they must be under 500MB in size. Mine were not.
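To make the size problem concrete, here is a minimal Python sketch that compares the two local test files against the 500MB limit reported above. The file names are hypothetical placeholders, and it assumes decimal units (1GB = 1,000MB), which the article does not specify.

```python
# Limit reported in the article; treated here as a strict "under 500 MB" rule.
UPLOAD_LIMIT_MB = 500


def fits_upload_limit(size_mb: float, limit_mb: float = UPLOAD_LIMIT_MB) -> bool:
    """Return True when a file of size_mb megabytes is under the limit."""
    return size_mb < limit_mb


# File names are hypothetical; the sizes are the ones quoted in the article.
test_files_mb = {
    "drone_test.mp4": 625,
    "walk_and_talk.mov": 1650,  # 1.65 GB, assuming decimal megabytes
}

for name, size_mb in test_files_mb.items():
    verdict = "ok" if fits_upload_limit(size_mb) else "too large"
    print(f"{name}: {size_mb} MB -> {verdict}")
```

Both files come out as too large, which matches what happened in testing.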

Then, the good news. If you pair the OpenAI Codex app with ChatGPT, Codex can read local files and grasp their content. For the drone footage test, Codex described it as:

It appears to be a backyard drone test recording.

Here’s what’s going on:

- An individual stands in a residential backyard, facing the camera/drone.

- They make several gestures (including raising a hand or waving).

- The camera angle shifts around them over time, adjusting perspective and distance while keeping them mostly in the center of the frame.

- The clip ends in the same yard location, with no significant scene changes or additional people or activities.

Exactly. That’s precisely what it shows.

Codex was also able to view and interpret the walk-and-talk MOV file I uploaded. At first, it couldn’t handle the content, so Codex requested permission to install some Python code and libraries to enable audio transcription. Once it set all that up, it could not only watch the video but also make sense of its context.

However, Codex couldn’t view the YouTube stream directly. Fair enough. This is Codex. So I asked, “Can you download the full video and then process it locally?”

That did the trick. It automatically generated a Python script, installed some libraries, essentially built video-downloading capability on the spot, and then watched my YouTube video.
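The article does not show the script Codex wrote or name the libraries it installed. As a rough illustration of the download-then-process-locally idea, here is a minimal sketch that assumes the open-source yt-dlp command-line tool is installed. The URL and output folder are hypothetical placeholders, and the sketch only builds and prints the command rather than running it.

```python
import shlex
from pathlib import Path


def build_download_command(url: str, out_dir: str = "downloads") -> list[str]:
    """Build a yt-dlp command that saves the video as <video id>.<extension>.

    Assumption: yt-dlp is the downloader. The article does not say what
    Codex actually used.
    """
    output_template = str(Path(out_dir) / "%(id)s.%(ext)s")
    return ["yt-dlp", "--output", output_template, url]


# Hypothetical URL; substitute the real video link.
command = build_download_command("https://www.youtube.com/watch?v=VIDEO_ID")
print(shlex.join(command))
```

To actually download, you could pass the list to `subprocess.run(command, check=True)` and then hand the resulting local file to whatever does the analysis.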

Next, I wanted it to create a thumbnail. I first asked whether it had access to ChatGPT Images 2.0 (keep in mind, both are OpenAI products). It replied, “I have access to image generation tools in this session, but I don’t have a tool explicitly labeled Images 2.0 available to me.”

Let’s just overlook the awkward “available to me” wording. I had to explain to Codex that Images 2.0 existed and direct it to OpenAI’s website so it could understand. At that point, the agentic tool recognized the images tool but still couldn’t do much with it.

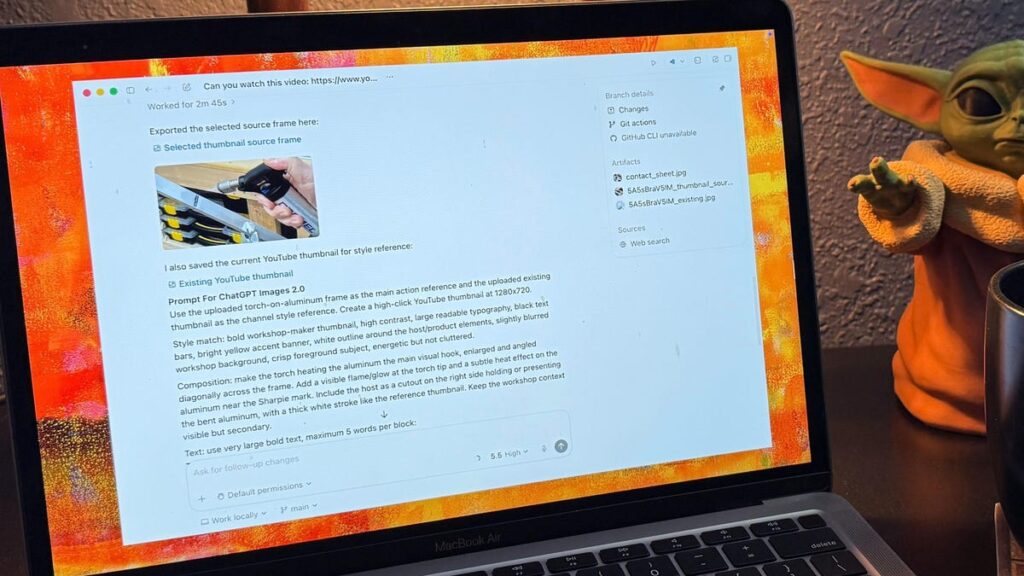

So that’s when I stepped in as the bridge between Codex and ChatGPT. I told Codex, “Select a single frame with the most impact for a YouTube thumbnail, export that thumbnail somewhere so ChatGPT can access it, or so I can upload it to ChatGPT, and then, based on the video’s context and my existing YouTube thumbnail style, write a prompt for ChatGPT to generate a high-click-through thumbnail.”
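How Codex picked and exported its frame isn't described. One simple way to approach the export step by hand is to sample a few evenly spaced moments and pull a still at each one, then choose among them. The sketch below assumes ffmpeg is installed and uses hypothetical file names; evenly spaced sampling is a stand-in technique, not Codex's method.

```python
def candidate_timestamps(duration_s: float, count: int) -> list[float]:
    """Return the midpoints of `count` equal slices of the video, in seconds."""
    step = duration_s / count
    return [round(step * (i + 0.5), 2) for i in range(count)]


def build_frame_command(video_path: str, timestamp_s: float, out_path: str) -> list[str]:
    """Build an ffmpeg command that seeks to timestamp_s and writes one frame."""
    return [
        "ffmpeg",
        "-ss", str(timestamp_s),  # seek to the chosen moment
        "-i", video_path,
        "-frames:v", "1",         # write a single video frame
        out_path,
    ]


# A roughly 15-minute video (900 seconds) sampled at five points.
for stamp in candidate_timestamps(900, 5):
    # "annealing.mp4" and the output names are hypothetical.
    print(" ".join(build_frame_command("annealing.mp4", stamp, f"frame_{int(stamp)}.jpg")))
```

The exported stills can then be uploaded to ChatGPT alongside an existing thumbnail, as described next.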

Then, in ChatGPT, I uploaded the original thumbnail image I showed you earlier along with the frame that Codex selected. I then pasted in the prompt Codex created. As you can see, Codex/ChatGPT got more details right than Gemini did.

It picked up on the white, yellow, and black color scheme I use for my lettering. It didn’t include my logo, and it missed the yellow stripe I use for my titles, but I actually quite like how my photo is positioned over the torch flame. ChatGPT and Codex actually incorporated my image, unlike Gemini. But I do have an issue with the aluminum bar. I used flat stock material. For some reason, the OpenAI tools rendered it as square tubing.

This is where ChatGPT’s stronger image understanding comes into play. I corrected it on the tubing versus flat bar issue and asked it to regenerate. I prompted, “That aluminum is flat bar material about 1/8-inch thick, not square tubing. Keep everything else the same, but please fix the aluminum.”

We were getting close. I didn’t like how it placed the Sharpie marks (which are used to indicate when the metal is hot enough to bend), and the actual bend was far too sharp. One more prompt: “Good, but the bend is too sharp. It’s not a perfect right angle. There’s a curve because the aluminum needs to flex as it bends. Please revise. Also, the Sharpie marks are perpendicular to the edge of the bar, not at an angle. They indicate where to bend.”

That’s good enough. I believe it’s possible to give Codex and ChatGPT a video with no additional context and get a YouTube thumbnail out of it. You could likely use it to analyze other types of videos and generate images from those as well.

It’s not super convenient, but it does work quite well.

AI can indeed watch video

There are a few things to note. First, the AI was able to fully interpret the videos in a fraction of their actual runtime. Both the science video and the walk-and-talk are about 15 minutes long, but both Gemini and ChatGPT were able to “watch” and analyze them for understanding in roughly two or three minutes each.

Second, both demonstrate fairly strong interpretation capabilities. I found their ability to recognize that the silent video I provided was a drone test to be rather impressive. The drone mostly stayed at human height, yet both were able to extract context from the video frames.

There are certainly some practical applications. I gave Gemini a YouTube video of a CBS report on the OpenAI trial and asked it to provide me with details about what was discussed. I can definitely see using it to scan through security camera footage to quickly locate a specific type of activity.

I can also definitely see giving the AI a longer video and having it extract the key points. What was particularly helpful is that Gemini time-stamped each of the main ideas, so I could simply click the timestamps and jump to that point in the video.
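If an AI hands back timestamps as plain text rather than clickable links, they are easy to turn into jump links yourself. Here is a minimal sketch using only Python's standard library. It relies on YouTube's `t` URL parameter for the start time, and the video URL is a hypothetical placeholder.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def timestamp_to_seconds(stamp: str) -> int:
    """Convert 'MM:SS' or 'HH:MM:SS' into total seconds."""
    seconds = 0
    for part in stamp.split(":"):
        seconds = seconds * 60 + int(part)
    return seconds


def link_at(video_url: str, stamp: str) -> str:
    """Return video_url with a t=<seconds>s parameter for the given timestamp."""
    parts = urlsplit(video_url)
    # Drop any existing start time so the new one is the only t parameter.
    query = [(key, value) for key, value in parse_qsl(parts.query) if key != "t"]
    query.append(("t", f"{timestamp_to_seconds(stamp)}s"))
    return urlunsplit(parts._replace(query=urlencode(query)))


# Hypothetical URL and timestamp.
print(link_at("https://www.youtube.com/watch?v=VIDEO_ID", "02:15"))
# prints https://www.youtube.com/watch?v=VIDEO_ID&t=135s
```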

Then, of course, there’s the actual use of these tools to create YouTube thumbnails. I still prefer to do it manually. But the fact that these AIs can extract usable frames and build thumbnails means that creators have a new tool at their disposal.

Overall, I’m impressed with Gemini and the combination of ChatGPT and Codex for video-watching capability. Isn’t it interesting that Gemini doesn’t need two tools (after all, it’s called “Gemini”), but ChatGPT needs Codex? Things like this amuse me.

Even though Claude failed at this test, it still has value. Claude is one of my favorites for vibe coding.

What productivity benefits do you see gaining from the video-watching capabilities of these AIs? Share your thoughts in the comments below.

You can follow my day-to-day project updates on social media. Be sure to subscribe to my weekly update newsletter, and follow me on Twitter/X at @DavidGewirtz, on Facebook at Facebook.com/DavidGewirtz, on Instagram at Instagram.com/DavidGewirtz, on Bluesky at @DavidGewirtz.com, and on YouTube at YouTube.com/DavidGewirtzTV.