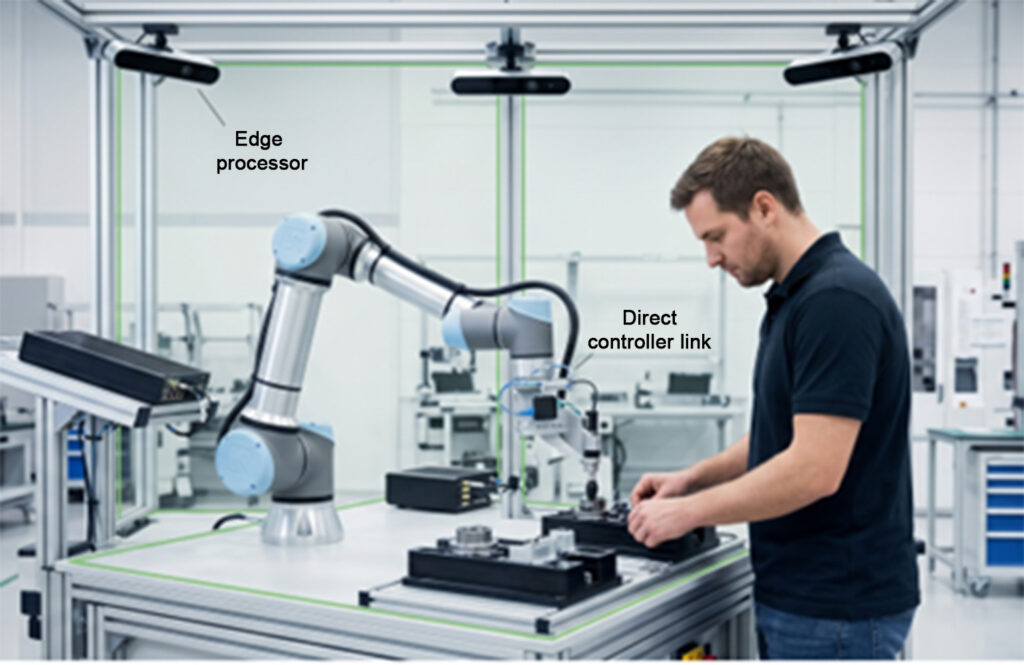

Latency can create safety hazards in collaborative assembly workcells. Image: Cogniedge.ai

Cloud-based vision systems have boosted industrial analytics and predictive maintenance, yet they struggle when real-time safety and throughput are critical on the factory floor. In high-mix collaborative assembly environments, even small network delays can transform a promising human-robot collaboration (HRC) setup into a stop-and-go bottleneck.

The industry’s growing reliance on collaborative robots demands more than just safer enclosures or slower operating speeds. It requires systems that allow cobots to adjust dynamically to human motion and fatigue while preserving both cycle time and safety.

The answer lies in shifting AI inference to the edge and creating a direct, low-latency link from the edge processor to the robot controller—cutting out the traditional PLC (programmable logic controller) when making real-time kinematic adjustments.

The physics of latency in speed and separation monitoring

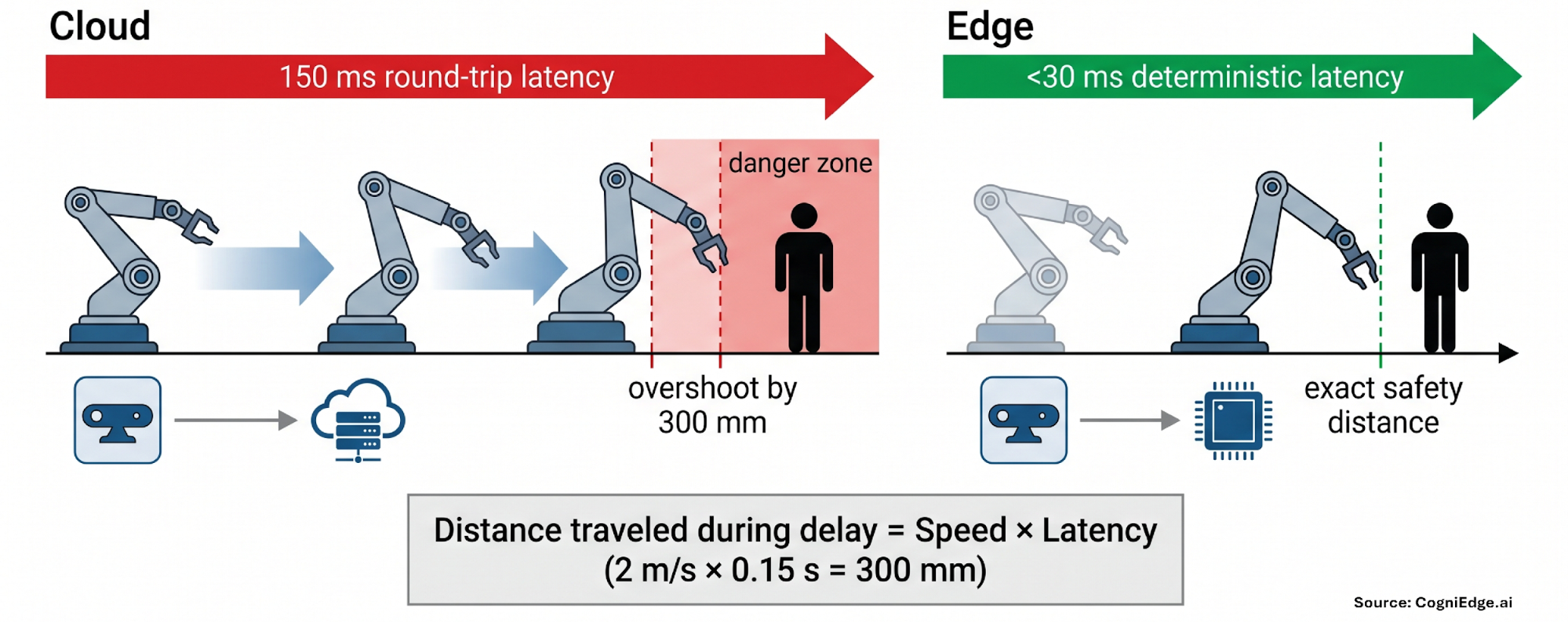

Image: Cogniedge.ai

ISO/TS 15066 identifies speed and separation monitoring (SSM) as a fundamental safety method for collaborative robots. The standard requires the robot to maintain a protective separation distance from the operator and to slow down or halt if that distance is violated.

Imagine a typical high-resolution depth camera sending skeletal tracking data to a remote cloud server. The round-trip delay—covering image transmission, inference processing, and command delivery—often falls between 100 and 200 milliseconds.

At a moderate robot arm speed of 2 m/s, the arm covers 200 to 400 mm (7.9 to 15.7 in.) during that lag. In a tight collaborative workspace, a 300 mm (11.8 in.) blind spot can be the difference between safe operation and a potential injury risk.
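The arithmetic behind those figures is simply speed multiplied by delay. A minimal Python sketch, using the 2 m/s speed and the 100 to 200 ms round trip quoted above (the function name is illustrative only):

```python
MM_PER_INCH = 25.4


def travel_during_latency_mm(speed_m_s: float, latency_ms: float) -> float:
    """Distance in mm the arm covers while the system is still reacting."""
    # m/s * ms = mm: the m -> mm factor (1000) cancels the ms -> s factor (1/1000).
    return speed_m_s * latency_ms


for latency_ms in (100, 200):
    mm = travel_during_latency_mm(2.0, latency_ms)
    print(f"{latency_ms} ms -> {mm:.0f} mm ({mm / MM_PER_INCH:.1f} in.)")
# 100 ms -> 200 mm (7.9 in.)
# 200 ms -> 400 mm (15.7 in.)
```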

To work around this, engineers expand safety zones and program conservative speeds or frequent protective stops. The result? Lower throughput that undermines the very purpose of collaborative automation.

Achieving true real-time SSM in dynamic environments requires deterministic end-to-end latency under 30 ms. That is practical only when data is processed close to the sensor and the decision path connects straight to the motion controller.
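To see why reaction time matters so much, it helps to look at how it enters the protective separation distance. The sketch below uses a simplified, constant-speed form of the ISO/TS 15066 SSM relation, S_p = S_h + S_r + S_s + C + Z_d + Z_r. The 1.6 m/s operator speed is the default walking speed commonly assumed when operator speed is not measured. The stopping time and stopping distance are placeholder assumptions, not values from a real robot datasheet.

```python
from dataclasses import dataclass


@dataclass
class SsmInputs:
    v_human: float      # operator speed toward the robot, m/s
    v_robot: float      # robot speed toward the operator, m/s
    t_react: float      # reaction time: sensing + inference + command delivery, s
    t_stop: float       # robot stopping time, s (assumed)
    s_stop: float       # robot stopping distance, m (assumed)
    c: float = 0.0      # intrusion distance, m
    z_d: float = 0.0    # operator position uncertainty, m
    z_r: float = 0.0    # robot position uncertainty, m


def protective_distance_m(p: SsmInputs) -> float:
    """Simplified constant-speed protective separation distance."""
    s_h = p.v_human * (p.t_react + p.t_stop)  # operator travel until the robot has stopped
    s_r = p.v_robot * p.t_react               # robot travel before it starts to stop
    return s_h + s_r + p.s_stop + p.c + p.z_d + p.z_r


cloud = SsmInputs(v_human=1.6, v_robot=2.0, t_react=0.150, t_stop=0.3, s_stop=0.3)
edge = SsmInputs(v_human=1.6, v_robot=2.0, t_react=0.030, t_stop=0.3, s_stop=0.3)

print(f"cloud path: {protective_distance_m(cloud):.3f} m")
print(f"edge path:  {protective_distance_m(edge):.3f} m")
```

Under these assumptions, cutting the reaction time from 150 ms to 30 ms shrinks the required separation by roughly 0.43 m, which is floor space the cell can reclaim without lowering robot speed.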

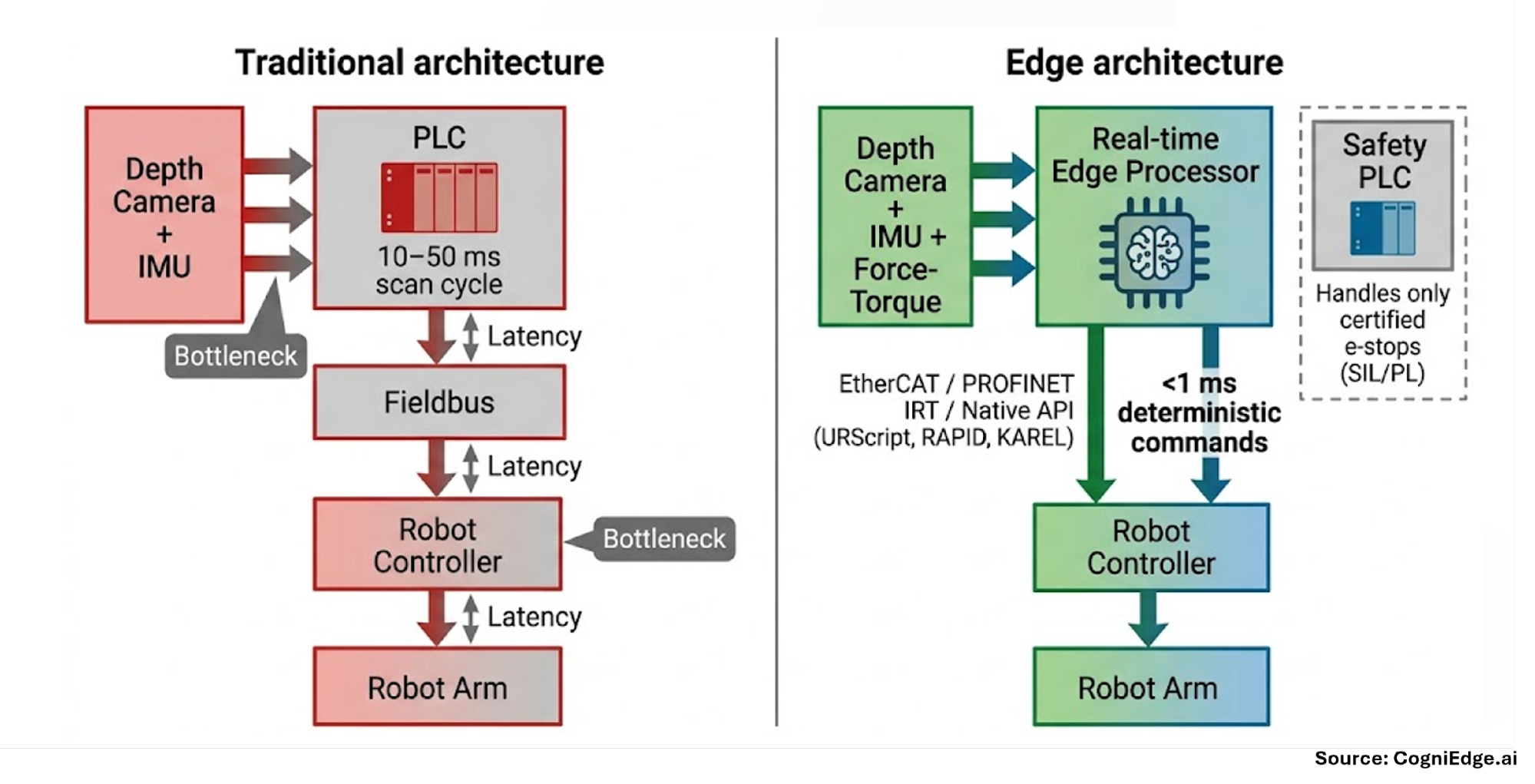

Why legacy PLCs create an unacceptable bottleneck

Most existing installations still depend on conventional PLCs for safety logic. These devices were built for deterministic, discrete I/O operations with scan cycles typically between 10 and 50 ms. They handle light curtains and emergency stops well, but they struggle to process the high-bandwidth, multidimensional data produced by modern vision systems—such as skeletal tracking, micro-movement analysis, and operator state estimation.

Running AI inference results through the PLC adds an extra full scan cycle on top of fieldbus communication overhead. This cumulative delay breaks the determinism needed for proactive speed and separation monitoring.
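A rough latency budget makes the effect concrete. In the sketch below, the 30 ms target and the 10 to 50 ms scan cycle come from the figures above. The sensing, inference, and fieldbus numbers are illustrative assumptions rather than measurements.

```python
BUDGET_MS = 30.0  # end-to-end target for real-time SSM


def worst_case_latency_ms(stages: dict[str, float]) -> float:
    """Sum the worst-case delay of each stage on the decision path."""
    return sum(stages.values())


via_plc = {
    "sensing_and_inference": 15.0,  # assumed edge processing time
    "fieldbus_to_plc": 2.0,         # assumed
    "plc_scan": 50.0,               # upper end of the 10-50 ms scan range
    "fieldbus_to_robot": 2.0,       # assumed
}
direct = {
    "sensing_and_inference": 15.0,  # assumed edge processing time
    "link_to_controller": 2.0,      # assumed
}

for name, stages in (("via PLC", via_plc), ("direct", direct)):
    total = worst_case_latency_ms(stages)
    verdict = "within" if total <= BUDGET_MS else "over"
    print(f"{name}: {total:.0f} ms ({verdict} the {BUDGET_MS:.0f} ms budget)")
```

Even with a 10 ms scan cycle, the PLC path in this example would total 29 ms and leave almost no margin.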

In practice, many integrators find themselves forced to operate robots at reduced speeds or accept frequent interruptions—even when the AI clearly determines the situation is safe.

Image: Cogniedge.ai

Building the direct edge-to-controller bridge

The solution involves deploying a localized real-time safety processor at the workcell that talks directly to the robot controller, bypassing the PLC for time-sensitive adjustments that aren’t classified as safety-critical.

This processing layer collects multi-modal sensor data—depth cameras, IMUs, and force-torque sensors—at the edge, performs low-latency AI inference, and feeds updated commands into the robot’s motion planner through high-speed industrial protocols. Common implementation approaches include:

- EtherCAT or PROFINET IRT for sub-millisecond deterministic cycles, provided the controller supports fieldbus extension.

- Real-time UDP or native robot programming interfaces (URScript for Universal Robots, RAPID for ABB, KAREL for FANUC) for direct socket communication with the motion controller.
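As an illustration of the UDP option, the sketch below packs a setpoint update into a fixed-size datagram with Python's standard `struct` and `socket` modules. The packet layout, field names, and the program on the controller side that would parse it are all hypothetical. A real deployment would follow the interface documented by the robot vendor.

```python
import socket
import struct

# Hypothetical wire format: little-endian uint32 sequence number followed by
# three float32 values (speed scale, acceleration limit, torque scale).
SETPOINT_FORMAT = "<Ifff"


def encode_setpoint(seq: int, speed_scale: float,
                    accel_limit: float, torque_scale: float) -> bytes:
    """Pack one setpoint update into a 16-byte datagram payload."""
    return struct.pack(SETPOINT_FORMAT, seq, speed_scale, accel_limit, torque_scale)


def decode_setpoint(payload: bytes) -> tuple:
    """Inverse of encode_setpoint, as the receiving side would apply it."""
    return struct.unpack(SETPOINT_FORMAT, payload)


def send_setpoint(sock: socket.socket, address: tuple, payload: bytes) -> None:
    """Send one datagram; UDP adds no handshake or retransmission delay."""
    sock.sendto(payload, address)


payload = encode_setpoint(seq=1, speed_scale=0.5, accel_limit=2.0, torque_scale=0.75)
print(len(payload), decode_setpoint(payload))
```

The sequence number lets the receiver discard stale or reordered datagrams, which matters because UDP does not guarantee ordering or delivery.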

The safety-rated PLC remains in charge of certified emergency stops and SIL/PL-rated functions. The edge processor serves as a parallel, high-speed pathway that continuously updates trajectory, speed, and force setpoints without waiting for the next PLC scan cycle. This “safety coprocessor” architecture ensures full compliance while enabling proactive robot behavior.
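One way to picture the division of responsibility is as a simple priority rule: the edge path may lower the robot's speed, but it can never exceed the ceiling set by the safety PLC, and a certified stop always wins. The function below only sketches that priority under assumed names. In a real cell the certified stop is enforced by the safety system itself, not by application code like this.

```python
def arbitrate_speed(edge_request: float, safety_limit: float,
                    estop_active: bool) -> float:
    """Return the speed fraction (0.0 to 1.0) to apply in this control cycle."""
    if estop_active:
        # The certified stop path always takes priority over the edge path.
        return 0.0
    # The edge path can reduce speed but cannot raise it above the PLC ceiling.
    return max(0.0, min(edge_request, safety_limit))


print(arbitrate_speed(0.9, 0.6, False))  # 0.6: edge request clamped to the PLC ceiling
print(arbitrate_speed(0.4, 0.6, False))  # 0.4: edge request honored
print(arbitrate_speed(0.4, 0.6, True))   # 0.0: stop overrides everything
```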

Adjusting kinematics on the fly in high-mix cells


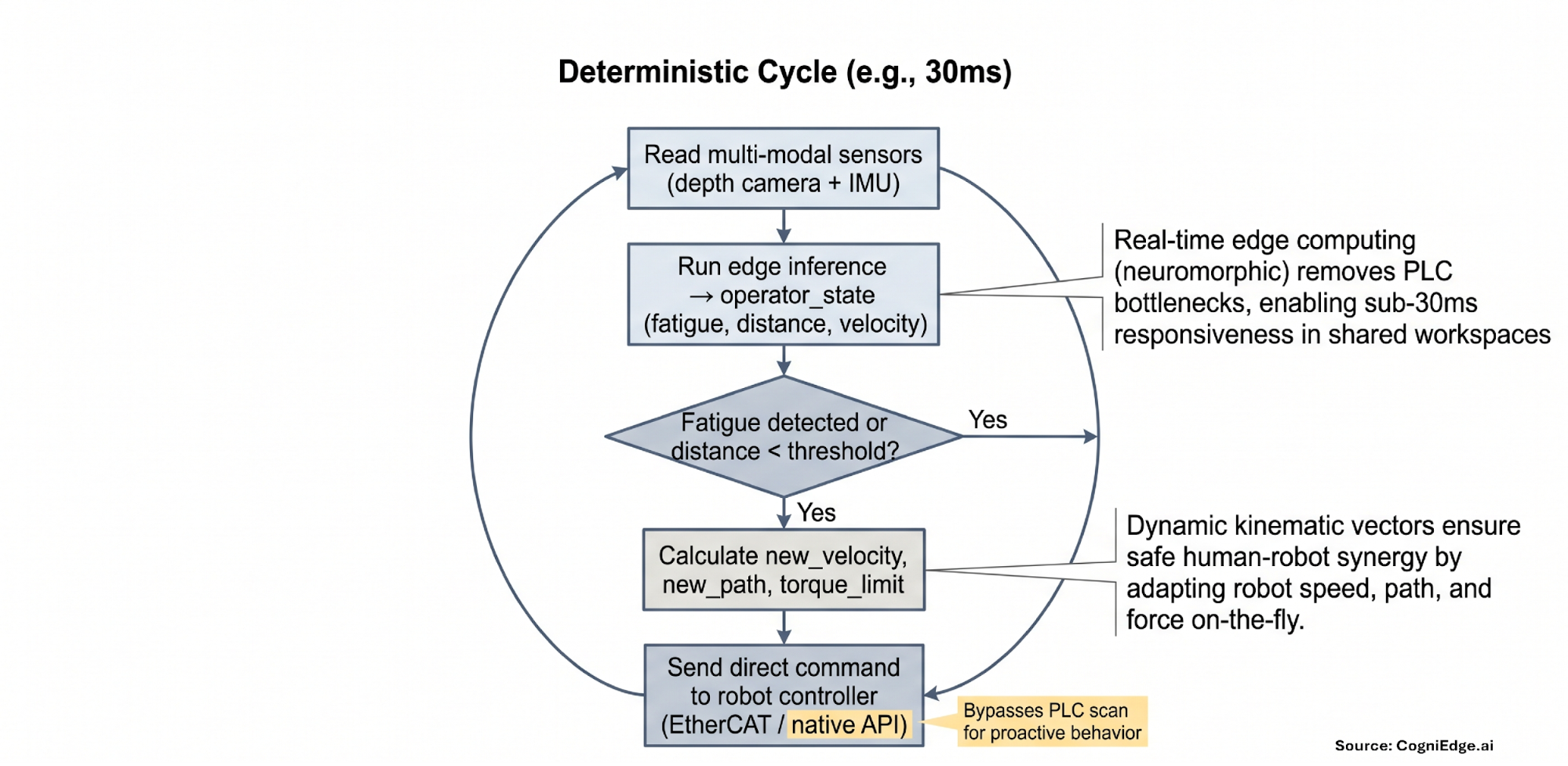

Once the communication delay has been cut to a few tens of milliseconds and a direct control link is established, the collaborative robot can transition from simply stopping reactively to engaging in fluid, real-time teamwork.

In a mixed-product assembly station, an operator’s movements might become slower or less consistent toward the end of a shift, serving as early signs of fatigue. The edge-based processor picks up on these subtle changes instantly through skeletal tracking and movement speed analysis.
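A very simple version of that movement-speed analysis compares a rolling average of the operator's hand speed with a baseline recorded earlier in the shift. The class below is a hypothetical sketch: the window size and the 80% threshold are arbitrary assumptions, and a production system would also look at movement consistency and other signals.

```python
from collections import deque
from statistics import mean


class FatigueMonitor:
    """Flags possible fatigue when recent hand speed drops below a baseline."""

    def __init__(self, baseline_speed: float, window: int = 5,
                 slow_ratio: float = 0.8) -> None:
        self.baseline_speed = baseline_speed  # m/s, measured early in the shift
        self.slow_ratio = slow_ratio          # assumed threshold fraction
        self.samples = deque(maxlen=window)   # most recent hand-speed samples

    def update(self, hand_speed: float) -> bool:
        """Add one sample; return True if the rolling mean looks slowed."""
        self.samples.append(hand_speed)
        if len(self.samples) < self.samples.maxlen:
            return False  # not enough data to judge yet
        return mean(self.samples) < self.slow_ratio * self.baseline_speed


monitor = FatigueMonitor(baseline_speed=1.0, window=3)
for speed in (1.0, 1.0, 1.0, 0.6, 0.6, 0.6):
    flagged = monitor.update(speed)
print(flagged)  # True once the window holds only the slower samples
```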

Rather than initiating a protective shutdown, the system makes immediate motion adjustments:

- Decrease peak acceleration from 5 m/s² to 2 m/s².

- Increase the approach angle by 15° to provide additional clearance for the operator.

- Reduce torque limits on approach axes to minimize collision impact.

This strategy keeps the workstation running without interruption. The robot fine-tunes its actions based on the worker’s current condition instead of resorting to a complete halt, ensuring both safety and efficiency are maintained. A simplified representation of the decision-making cycle is shown below:

Source: Cogniedge.ai
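The adjustment step of such a cycle could look roughly like the following sketch, which is an illustration written for this discussion and not Cogniedge.ai's implementation. The acceleration and approach-angle values come from the list above. The 0.7 torque scale and all names are assumptions, since no torque figure is given.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class MotionLimits:
    peak_accel: float = 5.0           # m/s^2, nominal value
    approach_offset_deg: float = 0.0  # extra approach angle for operator clearance
    torque_scale: float = 1.0         # fraction of nominal torque limit


def adjust_for_fatigue(nominal: MotionLimits, fatigued: bool,
                       fatigued_torque_scale: float = 0.7) -> MotionLimits:
    """Return gentler motion limits when fatigue is detected, else the nominal ones."""
    if not fatigued:
        return nominal
    return replace(
        nominal,
        peak_accel=min(nominal.peak_accel, 2.0),                  # 5 -> 2 m/s^2
        approach_offset_deg=nominal.approach_offset_deg + 15.0,   # +15 degrees
        torque_scale=min(nominal.torque_scale, fatigued_torque_scale),  # assumed value
    )


nominal = MotionLimits()
print(adjust_for_fatigue(nominal, fatigued=False))
print(adjust_for_fatigue(nominal, fatigued=True))
```

Keeping the limits in an immutable structure means each control cycle produces a fresh set of setpoints, so the robot returns to nominal behavior as soon as the fatigue flag clears.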

Hardware essentials for edge-based safety in collaborative robots

Factory environments have constrained space and power availability. Processing units designed for this purpose must consume less than 1 W while performing real-time analysis on time-series data streams. Neuromorphic processors paired with spiking neural networks (SNNs) suit this task because they compute only when their input changes, which keeps both power draw and delay low for change detection and temporal data processing.
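The event-driven behavior of a spiking network can be illustrated with a single leaky integrate-and-fire neuron, the basic building block of most SNNs. The toy sketch below runs in ordinary Python rather than on neuromorphic hardware, and its leak and threshold values are arbitrary assumptions. It shows that a steady signal produces no spikes, while a sudden change produces one.

```python
def change_signal(samples: list) -> list:
    """Absolute difference between consecutive samples."""
    return [abs(b - a) for a, b in zip(samples, samples[1:])]


def lif_spikes(inputs: list, leak: float = 0.9, threshold: float = 1.0) -> list:
    """Leaky integrate-and-fire neuron: returns 1 where the neuron fires, else 0."""
    potential = 0.0
    spikes = []
    for x in inputs:
        potential = leak * potential + x  # decay the old charge, add new input
        if potential >= threshold:
            spikes.append(1)
            potential = 0.0               # reset after firing
        else:
            spikes.append(0)
    return spikes


steady = [2.0] * 6
step = [0.0, 0.0, 0.0, 1.5, 1.5, 1.5]
print(lif_spikes(change_signal(steady)))  # [0, 0, 0, 0, 0]
print(lif_spikes(change_signal(step)))    # [0, 0, 1, 0, 0]
```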

These small, fanless units install directly inside or adjacent to the work area, link through standard industrial network connections, and interface with existing robot controllers without demanding additional enclosures or extensive wiring modifications.

Tangible advantages for systems integrators

By adopting direct edge-to-controller systems, the industry can finally fulfill the core goal of high-mix collaborative workcells: seamless interaction that sustains takt time while upholding safety. This evolution generates immediate benefits throughout the manufacturing ecosystem.

For systems integrators, it delivers a scalable solution suited for existing facilities, utilizes standard protocols across various robot brands, and maintains current investments in safety-certified PLCs. For manufacturers, it safeguards profitability by removing the frequent brief stops that traditionally erode cycle times. Most critically, for the workers on the production floor, it fosters a safer, fatigue-conscious workspace where the robot serves as an attentive, adaptive partner instead of an inflexible machine.

As collaborative automation continues to advance in complexity, resolving the latency issue at the controller level will be the key distinction separating successful, high-output operations from those constrained by outdated limitations.

About the author

Madhu Gaganam, founder and CEO of Cogniedge.ai, is an engineering technologist with more than three decades of industrial automation experience at companies including Rockwell Automation, Gartner, NXP, and Dell. A respected voice in the field, he ranks among Thinkers360’s Top 10 Robotics Thought Leaders, serves as co-chair of the Digital Twin Consortium, and is an active member of IEEE RAS.
