Achiam, J. et al. GPT-4 technical report. Preprint at arXiv (2023).

Touvron, H. et al. Llama 2: open foundation and fine-tuned chat models. Preprint at arXiv (2023).

Anil, R. et al. PaLM 2 technical report. Preprint at arXiv (2023).

Radford, A. et al. Learning transferable visual models from natural language supervision. In Proc. 38th International Conference on Machine Learning (eds Meila, M. & Zhang, T.) 8748–8763 (PMLR, 2021).

Tung, C., Lin, Y., Yin, J., Ye, Q. & Chen, H. Exploring vision language pretraining with knowledge enhancement via large language model. In International Workshop on Trustworthy Artificial Intelligence for Healthcare, 81–91 (Springer, 2024).

Xu, Y. et al. A multimodal knowledge-enhanced whole-slide pathology foundation model. Nat. Commun. 16, 11406 (2025).

Zhu, D., Chen, J., Shen, X., Li, X. & Elhoseiny, M. MiniGPT-4: enhancing vision-language understanding with advanced large language models. In Proc. 12th International Conference on Learning Representations (OpenReview.net, 2024).

Liu, H., Li, C., Wu, Q. & Lee, Y. J. Visual instruction tuning. Adv. Neural Inf. Process. Syst. 36, 34892–34916 (2023).

Chen, Z. et al. InternVL: scaling up vision foundation models and aligning for generic visual-linguistic tasks. In Proc. IEEE/CVF Conference on Computer Vision and Pattern Recognition 24185–24198 (IEEE, 2024).

Antol, S. et al. VQA: visual question answering. In Proc. IEEE International Conference on Computer Vision 2425–2433 (IEEE, 2015).

Lin, T.-Y. et al. Microsoft COCO: common objects in context. In Proc. 13th European Conference on Computer Vision (eds Fleet, D., Pajdla, T., Schiele, B. & Tuytelaars, T.) 740–755 (Springer, 2014).

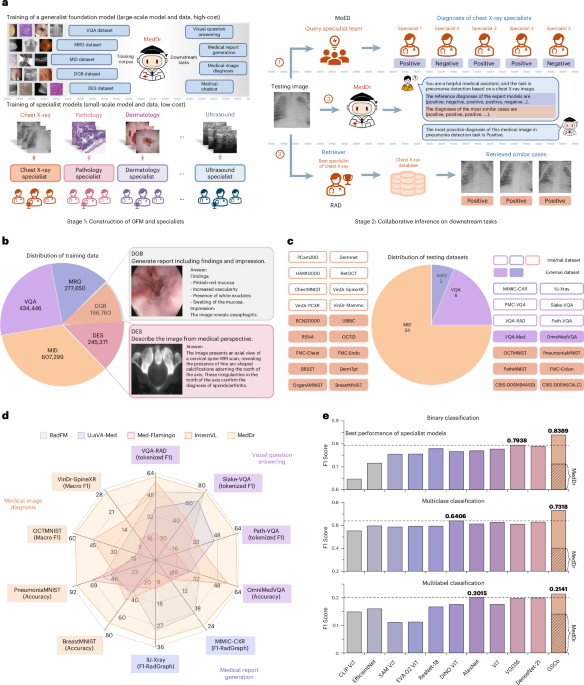

Li, C. et al. LLaVA-Med: training a large language-and-vision assistant for biomedicine in one day. Adv. Neural Inf. Process. Syst. 36, 28541–28564 (2023).

Moor, M. et al. Med-Flamingo: a multimodal medical few-shot learner. In Proc. 3rd Machine Learning for Health Symposium (eds Hegselmann, S. et al.) 353–367 (PMLR, 2023).

Wu, C. et al. Towards generalist foundation model for radiology by leveraging web-scale 2D&3D medical data. Nat. Commun. 16, 7866 (2025).

Tu, T. et al. Towards generalist biomedical AI. NEJM AI 1, AIoa2300138 (2024).

Moor, M. et al. Foundation models for generalist medical artificial intelligence. Nature 616, 259–265 (2023).

Zhou, H.-Y., Acosta, J. N., Adithan, S., Datta, S., Topol, E. J. & Rajpurkar, P. MedVersa: a generalist foundation model for diverse medical imaging tasks. NEJM AI 3, e2500595 (2026).

He, X. et al. Towards visual question answering on pathology images. In Proc. 59th Annual Meeting of the Association for Computational Linguistics and the 11th International Joint Conference on Natural Language Processing (Volume 2: Short Papers) (eds Zong, C., Xia, F., Li, W. & Navigli, R.) 708–718 (Association for Computational Linguistics, 2021).

Ben Abacha, A. et al. VQA-Med: overview of the medical visual question answering task at ImageCLEF 2019. In Working Notes of CLEF 2019 (eds Cappellato, L., Ferro, N., Losada, D. E. & Müller, H.) (CEUR-WS.org, 2019).

Johnson, A. E. W. et al. MIMIC-CXR, a de-identified publicly available database of chest radiographs with free-text reports. Sci. Data 6, 317 (2019).

Demner-Fushman, D. et al. Preparing a collection of radiology examinations for distribution and retrieval. J. Am. Med. Inform. Assoc. 23, 304–310 (2016).

Jin, H., Che, H., Lin, Y. & Chen, H. PromptMRG: diagnosis-driven prompts for medical report generation. In Proc. 38th AAAI Conference on Artificial Intelligence 2607–2615 (AAAI Press, 2024).

Chen, Z., Luo, L., Bie, Y. & Chen, H. Dia-LLaMA: towards large language model-driven CT report generation. In Proc. 28th International Conference on Medical Image Computing and Computer-Assisted Intervention (eds Gee, J. C. et al.) 141–151 (Springer, 2025).

Nguyen, H. T. et al. VinDr-SpineXR: a deep learning framework for spinal lesions detection and classification from radiographs. In Proc. 24th International Conference on Medical Image Computing and Computer-Assisted Intervention (eds de Bruijne, M. et al.) 291–301 (Springer, 2021).

Yang, J. et al. MedMNIST v2 – a large-scale lightweight benchmark for 2D and 3D biomedical image classification. Sci. Data 10, 41 (2023).

Wang, D. et al. A real-world dataset and benchmark for foundation model adaptation in medical image classification. Sci. Data 10, 574 (2023).

Hu, Y. et al. OmniMedVQA: a new large-scale comprehensive evaluation benchmark for medical LVLM. In Proc. IEEE/CVF Conference on Computer Vision and Pattern Recognition 22170–22183 (IEEE, 2024).

Zong, Z. et al. MoVA: adapting mixture of vision experts to multimodal context. Adv. Neural Inf. Process. Syst. 37, 103305–103333 (2024).

Nath, V. et al. VILA-M3: enhancing vision-language models with medical expert knowledge. In Proc. IEEE/CVF Conference on Computer Vision and Pattern Recognition 14788–14798 (IEEE, 2025).

Xiong, C. et al. MoME: mixture of multimodal experts for cancer survival prediction. In Proc. 27th International Conference on Medical Image Computing and Computer-Assisted Intervention (eds Linguraru, M. G. et al.) 318–328 (Springer, 2024).

Li, B. et al. MMedAgent: learning to use medical tools with multi-modal agent. In Findings of the Association for Computational Linguistics: EMNLP 2024 (eds Al-Onaizan, Y., Bansal, M. & Chen, Y.-N.) 8745–8760 (Association for Computational Linguistics, 2024).

Pham, H. H., Tran, T. T. & Nguyen, H. Q. VinDr-PCXR: an open, large-scale pediatric chest X-ray dataset for interpretation of common thoracic diseases. PhysioNet (version 1.0.0) (2022).

Tschandl, P., Rosendahl, C. & Kittler, H. The HAM10000 dataset, a large collection of multi-source dermatoscopic images of common pigmented skin lesions. Sci. Data 5, 1–9 (2018).

Panchal, S. et al. Retinal Fundus Multi-disease Image Dataset (RFMID) 2.0: a dataset of frequently and rarely identified diseases. Data 8, 29 (2023).

Wang, X. et al. ChestX-ray8: hospital-scale chest X-ray database and benchmarks on weakly-supervised classification and localization of common thorax diseases. In Proc. IEEE Conference on Computer Vision and Pattern Recognition 2097–2106 (IEEE, 2017).

Pacheco, A. G. C. et al. PAD-UFES-20: a skin lesion dataset composed of patient data and clinical images collected from smartphones. Data Br. 32, 106221 (2020).

Smedsrud, P. H. et al. Kvasir-Capsule, a video capsule endoscopy dataset. Sci. Data 8, 142 (2021).

Demner-Fushman, D., Antani, S., Simpson, M. & Thoma, G. R. Design and development of a multimodal biomedical information retrieval system. J. Comput. Sci. Eng. 6, 168–177 (2012).

Lau, J. J., Gayen, S., Ben Abacha, A. & Demner-Fushman, D. A dataset of clinically generated visual questions and answers about radiology images. Sci. Data 5, 1–10 (2018).

Jacobs, R. A., Jordan, M. I., Nowlan, S. J. & Hinton, G. E. Adaptive mixtures of local experts. Neural Comput. 3, 79–87 (1991).

Fedus, W., Zoph, B. & Shazeer, N. Switch Transformers: scaling to trillion parameter models with simple and efficient sparsity. J. Mach. Learn. Res. 23, 1–39 (2022).

Luo, L. et al. A large model for non-invasive and personalized management of breast cancer from multiparametric MRI. Nat. Commun. 16, 3647 (2025).

Lewis, P. et al. Retrieval-augmented generation for knowledge-intensive NLP tasks. Adv. Neural Inf. Process. Syst. 33, 9459–9474 (2020).

Subramanian, M., Shanmugavadivel, K., Naren, O. S., Premkumar, K. & Rankish, K. Classification of retinal OCT images using deep learning. In 2022 International Conference on Computer Communication and Informatics 1–7 (IEEE, 2022).

Liu, B. et al. SLAKE: a semantically-labeled knowledge-enhanced dataset for medical visual question answering. In IEEE 18th International Symposium on Biomedical Imaging 1650–1654 (IEEE, 2021).

Zhang, X. et al. Development of a large-scale medical visual question-answering dataset. Commun. Med. 4, 277 (2024).

Doerrich, S., Di Salvo, F., Brockmann, J. & Ledig, C. Rethinking model prototyping through the MedMNIST+ dataset collection. Sci. Rep. 15, 7669 (2025).

Wang, Z., Liu, L., Wang, L. & Zhou, L. R2GenGPT: radiology report generation with frozen LLMs. Meta-Radiol. 1, 100033 (2023).

Yang, A. et al. Qwen2.5 technical report. Preprint at arXiv (2024).

Tang, X. et al. MedAgents: large language models as collaborators for zero-shot medical reasoning. In Findings of the Association for Computational Linguistics: ACL 2024 (eds Ku, L.-W., Martins, A. & Srikumar, V.) 599–621 (Association for Computational Linguistics, 2024).

Zhou, J., Liu, Z., Xiao, S., Zhao, B. & Xiong, Y. VISTA: visualized text embedding for universal multi-modal retrieval. In Proc. 62nd Annual Meeting of the Association for Computational Linguistics (eds Ku, L.-W., Martins, A. & Srikumar, V.) 3185–3200 (Association for Computational Linguistics, 2024).

Yang, P. et al. LMKG: a large-scale and multi-source medical knowledge graph for intelligent medicine applications. Knowl. Based Syst. 284, 111323 (2024).

Ni, P., Okhrati, R., Guan, S. & Chang, V. Knowledge graph and deep learning-based text-to-graphQL model for intelligent medical consultation chatbot. Inform. Syst. Front. 26, 137–156 (2024).

Wei, J. et al. Chain-of-thought prompting elicits reasoning in large language models. Adv. Neural Inf. Process. Syst. 35, 24824–24837 (2022).

Snell, C., Lee, J., Xu, K. & Kumar, A. Scaling LLM test-time compute optimally can be more effective than scaling parameters for reasoning. In Proc. 13th International Conference on Learning Representations (OpenReview.net, 2025).

Hu, E. J. et al. LoRA: low-rank adaptation of large language models. In Proc. 10th International Conference on Learning Representations (OpenReview.net, 2022).

Rajbhandari, S., Ruwase, O., Rasley, J., Smith, S. & He, Y. ZeRO-Infinity: breaking the GPU memory wall for extreme scale deep learning. In Proc. International Conference for High Performance Computing, Networking, Storage and Analysis Article 59 (ACM, 2021).

Simonyan, K. & Zisserman, A. Very deep convolutional networks for large-scale image recognition. In 3rd International Conference on Learning Representations, ICLR 2015, San Diego, CA, USA, May 7–9, 2015, Conference Track Proceedings (eds Bengio, Y. & LeCun, Y.) (Computational and Biological Learning Society, 2015).

Krizhevsky, A., Sutskever, I. & Hinton, G. E. ImageNet classification with deep convolutional neural networks. Adv. Neural Inf. Process. Syst. 25, 1097–1105 (2012).

Kermany, D. S. et al. Identifying medical diagnoses and treatable diseases by image-based deep learning. Cell 172, 1122–1131 (2018).

Stein, A. et al. RSNA Pneumonia Detection Challenge (Kaggle, 2018).

Al-Dhabyani, W., Gomaa, M., Khaled, H. & Fahmy, A. Dataset of breast ultrasound images. Data Br. 28, 104863 (2020).

Kather, J. N. et al. Predicting survival from colorectal cancer histology slides using deep learning: a retrospective multicenter study. PLoS Med. 16, e1002730 (2019).

Sawyer-Lee, R., Gimenez, F., Hoogi, A. & Rubin, D. Curated Breast Imaging Subset of Digital Database for Screening Mammography (CBIS-DDSM) (The Cancer Imaging Archive, 2016).

Kawahara, J., Daneshvar, S., Argenziano, G. & Hamarneh, G. Seven-point checklist and skin lesion classification using multitask multimodal neural nets. IEEE J. Biomed. Health Inform. 23, 538–546 (2018).

Nakayama, L. F. et al. A Brazilian multilabel ophthalmological dataset (BRSET). PhysioNet (2023).