The problem: AI model distribution is broken at scale

Large-scale AI model distribution presents challenges in performance, efficiency, and cost.

Consider a typical scenario: an ML platform team manages a Kubernetes cluster with 200 GPU nodes. A new version of a 70B-parameter model becomes available, for example Llama-2-70B at roughly 130 GB. Every node needs a local copy, which means 26 TB of data transferred from a single model hub, often constrained by shared origin infrastructure, network bandwidth, and rate limits.

The scale of modern model hubs highlights these challenges:

- Hugging Face Hub serves over 1 million models, with individual files regularly exceeding 10 GB (safetensors, GGUF quantizations).

- ModelScope Hub hosts over 10,000 models, including large models such as Qwen, Yi, and inclusionAI’s Ling series, and supports a rapidly growing global user base.

These platforms have significantly improved access to open models, but distributing large artifacts across many nodes introduces system-level constraints:

- Git LFS, which underpins large-file storage on these platforms, is optimized for versioning and access rather than large-scale fan-out distribution.

- Rate limits can affect both unauthenticated and authenticated requests under burst traffic.

- Network costs increase as the same data is transferred repeatedly across environments.

Existing approaches, such as NFS mounts, pre-built container images, or object storage mirrors, can help mitigate these issues, but they may introduce operational complexity, stale-model risk, or additional storage overhead.

This raises an important question: how can infrastructure let model distribution scale efficiently, so that downloading to the 200th node is as fast as downloading to the first, regardless of the model hub?

That’s exactly what the new hf:// and modelscope:// protocol support in Dragonfly delivers.

What Is Dragonfly?

Dragonfly is a CNCF Graduated project that provides a P2P-based file distribution system. Originally built for container image distribution at Alibaba scale (processing billions of requests daily), Dragonfly turns every downloading node into a seed for its peers.

Core Architecture:

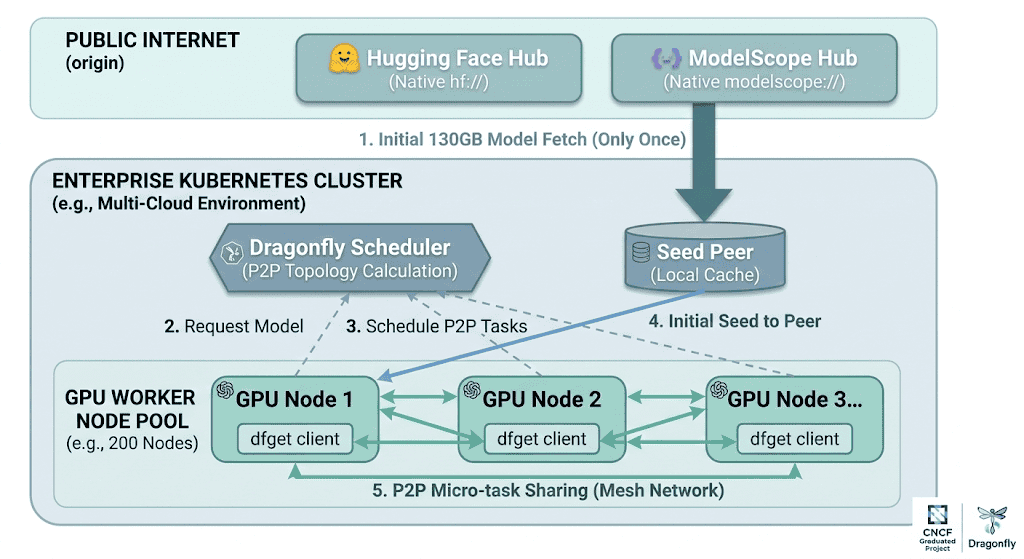

Figure 1: End-to-end flow of P2P model distribution in Dragonfly. The Seed Peer fetches the model from the origin hub once (Step 1), the Dragonfly Scheduler computes the P2P topology (Step 3), and GPU nodes share pieces via micro-task distribution (Step 5), reducing origin traffic from 26 TB to ~130 GB across a 200-node cluster.

The magic: Dragonfly splits files into small pieces and distributes them across the P2P mesh. The origin (Hugging Face Hub or ModelScope Hub) is hit only once, by the seed peer. Critically, the Seed Peer does not need to finish downloading the entire model before sharing it with other peers: as soon as any single piece is downloaded, it can be shared immediately. This piece-based streaming download means distribution begins in parallel with the initial fetch, dramatically reducing total transfer time. For a 130 GB model across 200 nodes, origin traffic drops from 26 TB to ~130 GB, a 99.5% reduction.
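The piece-based idea can be illustrated with a small, self-contained sketch. It splits a file into fixed-size byte ranges and reports which pieces a peer could already forward while the rest are still in flight. The 4 MiB piece size and the helper names piece_ranges and servable_pieces are illustrative assumptions, not Dragonfly's actual piece-sizing or scheduling logic.

```rust
use std::ops::Range;

/// Hypothetical piece size for illustration (4 MiB); Dragonfly's real
/// piece length is chosen by the client and may differ.
const PIECE_SIZE: u64 = 4 * 1024 * 1024;

/// Split a file of `content_length` bytes into contiguous byte ranges of at
/// most `piece_size` bytes. The last piece may be shorter.
fn piece_ranges(content_length: u64, piece_size: u64) -> Vec<Range<u64>> {
    assert!(piece_size > 0, "piece size must be non-zero");
    let mut ranges = Vec::new();
    let mut start = 0;
    while start < content_length {
        let end = (start + piece_size).min(content_length);
        ranges.push(start..end);
        start = end;
    }
    ranges
}

/// Indices of pieces that are complete and can therefore be served to other
/// peers, even though later pieces of the same file are still downloading.
fn servable_pieces(completed: &[bool]) -> Vec<usize> {
    completed
        .iter()
        .enumerate()
        .filter(|(_, done)| **done)
        .map(|(index, _)| index)
        .collect()
}
```

Under the assumed 4 MiB piece size, a 130 GB model becomes tens of thousands of independent pieces, each of which can start moving through the mesh as soon as the seed peer holds it.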

Until now, Dragonfly supported HTTP/HTTPS, S3, GCS, Azure Blob Storage, Alibaba OSS, Huawei OBS, Tencent COS, and HDFS backends. But the two largest sources of AI model artifacts, Hugging Face and ModelScope, required users to pre-resolve hub URLs into raw HTTPS links, losing authentication context, revision pinning, and awareness of repository structure.

Not anymore.

Introducing native model hub protocols in Dragonfly

With two new backends merged into the Dragonfly client, dfget (Dragonfly’s download tool) now natively understands both Hugging Face and ModelScope URLs. No proxies. No URL rewriting. No wrapper scripts.

The hf:// Protocol: Hugging Face Hub

Merged via PR #1665, this backend adds first-class support for downloading from the world’s largest open-source model repository.

URL format:

hf://[repository_type/]owner/repository[/path]

Components:

| Component | Required | Description | Default |
|---|---|---|---|
| repository_type | No | models, datasets, or spaces | models |
| owner/repository | Yes | Repository identifier (e.g., deepseek-ai/DeepSeek-R1) | n/a |
| path | No | File path within the repo | Entire repo |

Usage examples:

```shell
# Download a single model file with P2P acceleration
dfget hf://deepseek-ai/DeepSeek-R1/model.safetensors \
  -O /models/DeepSeek-R1/model.safetensors

# Download an entire repository recursively
dfget hf://deepseek-ai/DeepSeek-R1 \
  -O /models/DeepSeek-R1/ -r

# Download a specific dataset file
dfget hf://datasets/huggingface/squad/train.json \
  -O /data/squad/train.json

# Access private repositories with authentication
dfget hf://owner/private-model/weights.bin \
  -O /models/private/weights.bin \
  --hf-token=hf_xxxxxxxxxxxxx

# Pin to a specific model revision
dfget hf://deepseek-ai/DeepSeek-R1/model.safetensors --hf-revision v2.0 \
  -O /models/DeepSeek-R1/model.safetensors
```

The modelscope:// Protocol: ModelScope Hub

Merged via PR #1673, this backend brings the same P2P-accelerated experience to ModelScope Hub, Alibaba’s open model platform hosting thousands of models, with particularly strong coverage of Chinese-origin LLMs and multimodal models.

URL format:

modelscope://[repo_type/]owner/repo[/path]

Components:

| Component | Required | Description | Default |
|---|---|---|---|
| repo_type | No | models or datasets | models |
| owner/repo | Yes | Repository identifier (e.g., deepseek-ai/DeepSeek-R1) | n/a |
| path | No | File path within the repo | Entire repo |

Usage examples:

```shell
# Download a model repository with P2P acceleration
dfget modelscope://deepseek-ai/DeepSeek-R1 \
  -O /models/DeepSeek-R1/ -r

# Download a single file
dfget modelscope://deepseek-ai/DeepSeek-R1/config.json \
  -O /models/DeepSeek-R1/config.json

# Download with authentication for private repos
dfget modelscope://deepseek-ai/DeepSeek-R1/config.json \
  -O /tmp/config.json --ms-token=$MS_TOKEN

# Download a dataset file
dfget modelscope://datasets/damo/squad-zh/train.json \
  -O /data/squad-zh/train.json

# Download from a specific revision
dfget modelscope://deepseek-ai/DeepSeek-R1/config.json --ms-revision v2.0 \
  -O /models/DeepSeek-R1/config.json
```

Under the hood: Technical deep dive

Both implementations live in the Dragonfly Rust client as new backend modules. Here’s how they work at the systems level.

1. Pluggable backend architecture

Dragonfly uses a pluggable backend system. Each URL scheme (http, s3, gs, hf, modelscope, etc.) maps to a backend that implements the Backend trait:

```rust
#[tonic::async_trait]
pub trait Backend {
    // URL scheme this backend handles, e.g. "hf" or "modelscope".
    fn scheme(&self) -> String;

    // Metadata lookup for a URL (content length, existence).
    async fn stat(&self, request: StatRequest) -> Result<StatResponse>;

    // Stream the content behind a URL.
    async fn get(&self, request: GetRequest) -> Result<GetResponse<Body>>;

    // Upload content to the backend.
    async fn put(&self, request: PutRequest) -> Result<PutResponse>;

    // Check whether a URL exists.
    async fn exists(&self, request: ExistsRequest) -> Result<bool>;
}
```

Both the hf and modelscope backends are registered as built-in backends in the BackendFactory, alongside HTTP, object storage, and HDFS:

```rust
// Hugging Face backend
self.backends.insert(
    "hf".to_string(),
    Box::new(hugging_face::HuggingFace::new(self.config.clone())?),
);

// ModelScope backend
self.backends.insert(
    "modelscope".to_string(),
    Box::new(modelscope::ModelScope::new()?),
);
```

This means both schemes are available wherever dfget or the Dragonfly daemon runs, with no extra configuration needed.
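The registration pattern above amounts to a map from URL scheme to a boxed trait object. The following sketch reproduces that dispatch with only the standard library. The trait is reduced to a synchronous scheme() method, and HuggingFace, ModelScope, BackendFactory, and backend_for here are simplified, hypothetical stand-ins rather than Dragonfly's real types.

```rust
use std::collections::HashMap;

/// Simplified, synchronous stand-in for the Backend trait: only the scheme
/// is kept, because this sketch is about dispatch, not I/O.
trait Backend {
    fn scheme(&self) -> String;
}

/// Placeholder backends (hypothetical; real ones would wrap HTTP clients).
struct HuggingFace;
struct ModelScope;

impl Backend for HuggingFace {
    fn scheme(&self) -> String {
        "hf".to_string()
    }
}

impl Backend for ModelScope {
    fn scheme(&self) -> String {
        "modelscope".to_string()
    }
}

/// Maps a URL scheme to the backend that handles it.
struct BackendFactory {
    backends: HashMap<String, Box<dyn Backend>>,
}

impl BackendFactory {
    fn new() -> Self {
        let mut backends: HashMap<String, Box<dyn Backend>> = HashMap::new();
        // Same shape as the registration code above.
        backends.insert("hf".to_string(), Box::new(HuggingFace));
        backends.insert("modelscope".to_string(), Box::new(ModelScope));
        Self { backends }
    }

    /// Select a backend from the text before "://", e.g. "hf" in "hf://owner/repo".
    fn backend_for(&self, url: &str) -> Option<&dyn Backend> {
        let (scheme, _) = url.split_once("://")?;
        self.backends.get(scheme).map(|backend| &**backend)
    }
}
```

In this model, supporting another hub is one more insert call; every caller that dispatches through backend_for picks it up without further changes.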

2. URL parsing: Same grammar, different conventions

Both backends share the same URL grammar, scheme://[type/]owner/repo[/path], but respect each platform’s conventions:

| Aspect | Hugging Face (hf://) | ModelScope (modelscope://) |
|---|---|---|
| Repository types | models, datasets, spaces | models, datasets |
| Download API | huggingface.co/ | modelscope.cn/models/ |
| File listing API | huggingface.co/api/models/ | modelscope.cn/api/v1/models/ |
| API response format | Flat JSON with siblings array | Structured JSON with Code, Data, Message envelope |
| Large file handling | Git LFS with HTTP redirects | Direct API download |
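A parser for the shared scheme://[type/]owner/repo[/path] grammar can be sketched as follows. The allowed repository types come from the table above. The rule that a leading segment naming a known type is read as the type prefix only when at least owner and repo follow it is an assumption made for this sketch; the real backends may resolve edge cases differently, for example an owner literally named datasets.

```rust
/// Repository types accepted per scheme, as listed in the comparison table.
const HF_TYPES: &[&str] = &["models", "datasets", "spaces"];
const MODELSCOPE_TYPES: &[&str] = &["models", "datasets"];

/// Parsed form of scheme://[type/]owner/repo[/path].
#[derive(Debug, PartialEq)]
struct HubUrl {
    scheme: String,
    repo_type: String,
    owner: String,
    repo: String,
    /// None means "the whole repository" (repository mode).
    path: Option<String>,
}

fn parse_hub_url(url: &str) -> Result<HubUrl, String> {
    let (scheme, rest) = url
        .split_once("://")
        .ok_or_else(|| format!("missing scheme in {url}"))?;
    let allowed = match scheme {
        "hf" => HF_TYPES,
        "modelscope" => MODELSCOPE_TYPES,
        other => return Err(format!("unsupported scheme {other}")),
    };

    let mut segments: Vec<&str> = rest.split('/').filter(|s| !s.is_empty()).collect();

    // Assumption: a known type name is a prefix only when owner and repo
    // still follow it; otherwise the type defaults to "models".
    let repo_type = if segments.len() > 2 && allowed.contains(&segments[0]) {
        segments.remove(0).to_string()
    } else {
        "models".to_string()
    };

    if segments.len() < 2 {
        return Err(format!("expected owner/repo in {url}"));
    }
    // Anything after owner/repo is the file path (single-file mode).
    let path = (segments.len() > 2).then(|| segments[2..].join("/"));

    Ok(HubUrl {
        scheme: scheme.to_string(),
        repo_type,
        owner: segments[0].to_string(),
        repo: segments[1].to_string(),
        path,
    })
}
```

Returning path as an Option makes the two download modes described next fall directly out of the parse: Some selects single-file mode, None selects repository mode.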

3. Two download modes (both backends)

Single file mode (e.g., hf://owner/repo/file.bin or modelscope://owner/repo/file.bin):

- Parse the URL → extract the file path

- Build the platform-specific download URL

- stat() performs a HEAD request to get the content length and validate existence

- get() streams the file content through Dragonfly’s piece-based P2P network

- For HF: Git LFS redirects are handled transparently by the HTTP client
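Two of the single-file steps, building the download URL and reading the content length that stat() obtains from its HEAD request, can be sketched with plain string handling. The URL layout below follows Hugging Face's public resolve endpoint (huggingface.co/[type/]owner/repo/resolve/revision/path, with main as the default revision). It is an assumption about what the backend builds, percent-encoding is omitted, and the ModelScope equivalent is not shown.

```rust
/// Build a Hugging Face download URL for one file, assuming the public
/// layout https://huggingface.co/[<type>/]<owner>/<repo>/resolve/<revision>/<path>.
/// Model repos carry no type prefix; percent-encoding is omitted for brevity.
fn hf_resolve_url(
    repo_type: &str,
    owner: &str,
    repo: &str,
    revision: Option<&str>,
    path: &str,
) -> String {
    let prefix = match repo_type {
        "models" => String::new(),
        other => format!("{other}/"),
    };
    // "main" is the default branch on Hugging Face when no revision is pinned.
    let revision = revision.unwrap_or("main");
    format!("https://huggingface.co/{prefix}{owner}/{repo}/resolve/{revision}/{path}")
}

/// Read Content-Length from HEAD response headers (case-insensitive name),
/// which is what a stat() call needs to size the download.
fn content_length(headers: &[(&str, &str)]) -> Option<u64> {
    headers
        .iter()
        .find(|(name, _)| name.eq_ignore_ascii_case("content-length"))
        .and_then(|(_, value)| value.trim().parse().ok())
}
```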

Repository mode (e.g., hf://owner/repo -r or modelscope://owner/repo -r):

- Parse the URL → no file path present

- Call the platform-specific API to list repository files

- Deserialize the repository metadata into a file listing

- For each file, construct a scheme-native URL (not a raw HTTPS URL), preserving backend semantics

- Dragonfly’s recursive download engine processes each file through the P2P mesh

This is a critical design decision: recursive downloads emit hf:// or modelscope:// URLs back into the download pipeline, not raw HTTPS URLs. This preserves authentication context and ensures every file in the recursive download goes through the correct backend, maintaining token forwarding and URL semantics.
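The design decision above, emitting scheme-native URLs for every listed file, is straightforward to express in code. In this sketch the listing has already been fetched; ListedEntry is a hypothetical simplification of the per-file metadata each hub returns, and directory entries are skipped on the assumption that the recursive listing already enumerates their contents.

```rust
/// Hypothetical, simplified listing entry; the real backends deserialize
/// this information from each hub's API response.
struct ListedEntry<'a> {
    path: &'a str,
    is_dir: bool,
}

/// Expand a repository URL into one scheme-native URL per file, so that each
/// file re-enters the pipeline through the same backend (and the same token)
/// instead of as a raw HTTPS link.
fn expand_repository(base_url: &str, entries: &[ListedEntry]) -> Vec<String> {
    let base = base_url.trim_end_matches('/');
    entries
        .iter()
        // The recursive listing already enumerates directory contents,
        // so only file entries become download URLs.
        .filter(|entry| !entry.is_dir)
        .map(|entry| format!("{base}/{}", entry.path.trim_start_matches('/')))
        .collect()
}
```

Because the output keeps the hf:// or modelscope:// prefix, each child URL is routed back through the same backend lookup as the original request.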

4. Platform-specific API integration

Hugging Face uses a resolve-based download pattern in which the server may return the file directly or redirect to Git LFS storage for large model files. The reqwest HTTP client follows these redirects automatically, making LFS handling completely transparent.

ModelScope uses a structured REST API with explicit endpoints for file listing (/repo/files). The API returns a JSON envelope with Code, Data, and Message fields. The file listing endpoint supports recursive traversal natively via the Recursive=true parameter, returning structured RepoFile objects with name, path, type, and size metadata.
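Unwrapping ModelScope's Code/Data/Message envelope might look like the sketch below, which operates on already-deserialized structs so that it needs only the standard library. Two details are assumptions rather than facts from this post: that a Code of 200 signals success, and that file entries carry the type value blob (with tree for directories).

```rust
/// Mirror of the Code/Data/Message envelope described above.
struct Envelope<T> {
    code: i32,
    data: Option<T>,
    message: String,
}

/// Fields named after the RepoFile metadata described above.
#[allow(dead_code)]
struct RepoFile {
    name: String,
    path: String,
    file_type: String, // assumed: "blob" for files, "tree" for directories
    size: u64,
}

/// Unwrap the envelope and keep only file entries.
/// Assumption: code 200 signals success.
fn files_from_listing(envelope: Envelope<Vec<RepoFile>>) -> Result<Vec<RepoFile>, String> {
    if envelope.code != 200 {
        return Err(format!("ModelScope API error {}: {}", envelope.code, envelope.message));
    }
    let entries = envelope
        .data
        .ok_or_else(|| "response had no Data field".to_string())?;
    Ok(entries.into_iter().filter(|f| f.file_type == "blob").collect())
}

/// Total bytes to fetch across the listed files, e.g. for progress reporting.
fn total_size(files: &[RepoFile]) -> u64 {
    files.iter().map(|f| f.size).sum()
}
```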

5. Authentication

Both backends support token-based authentication via CLI flags, sent as bearer token headers:

```shell
# Hugging Face authentication
dfget hf://owner/private-model/weights.bin \
  --hf-token=hf_xxxxxxxxxxxxx

# ModelScope authentication
dfget modelscope://owner/private-model/config.json \
  --ms-token=$MS_TOKEN
```

Tokens propagate through all operations (stat, get, exists), enabling access to private repositories and gated models on both platforms.
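One simple way to guarantee that a token reaches stat, get, and exists alike is to hold it in a single client and derive every request's headers from it. The HubClient type and the PlannedRequest tuples below are hypothetical; the sketch only records what each operation would send instead of performing network I/O, and modeling exists as a HEAD request is an assumption.

```rust
/// (HTTP method, URL, headers) that an operation would send.
type PlannedRequest = (&'static str, String, Vec<(String, String)>);

/// Hypothetical client shared by all operations of one backend instance.
struct HubClient {
    /// Token from --hf-token or --ms-token, if one was given.
    token: Option<String>,
}

impl HubClient {
    /// Headers common to every request; adds a bearer token when present.
    fn headers(&self) -> Vec<(String, String)> {
        let mut headers = Vec::new();
        if let Some(token) = &self.token {
            headers.push(("Authorization".to_string(), format!("Bearer {token}")));
        }
        headers
    }

    /// stat(): a HEAD request, as in the single-file mode steps.
    fn stat(&self, url: &str) -> PlannedRequest {
        ("HEAD", url.to_string(), self.headers())
    }

    /// get(): a GET request whose body would be streamed into pieces.
    fn get(&self, url: &str) -> PlannedRequest {
        ("GET", url.to_string(), self.headers())
    }

    /// exists(): modeled here as another HEAD request (an assumption).
    fn exists(&self, url: &str) -> PlannedRequest {
        ("HEAD", url.to_string(), self.headers())
    }
}
```

Centralizing header construction in one method means a new operation cannot accidentally skip authentication.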

Real-world impact: Where this matters

1. Multi-node GPU cluster model deployment

In large-scale enterprise environments, the kind I architect and operate daily, distributing a 130 GB model like meta-llama/Llama-2-70b across 50 GPU nodes creates a debilitating network bottleneck. I’ve seen this pattern cripple deployment velocity firsthand.

Before: Each of your 50 GPU nodes downloads the model independently.

- Total bandwidth: 6.5 TB from the model hub (130 GB × 50)

- Time: Limited by origin server throughput and rate limits

- Cost: Full internet egress × 50

After: The seed peer fetches once, and P2P distributes across the cluster.

- Origin bandwidth: ~130 GB (once)

- Time: Near wire speed from local peers after the initial seed

- Cost: Minimal egress; heavy intra-cluster traffic, which is often free or far cheaper than internet egress
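The before/after numbers follow from simple arithmetic, reproduced here so they can be checked for other cluster sizes. The model is idealized: it counts one origin fetch per node without P2P and exactly one with P2P, ignoring retries, multiple seed peers, and re-fetches after cache eviction.

```rust
/// Origin traffic in GB when `nodes` each need a copy of a `model_gb` model.
/// Idealized: without P2P every node pulls from the hub; with P2P the seed
/// peer pulls once and the rest of the cluster is served by peers.
fn origin_traffic_gb(model_gb: u64, nodes: u64, p2p: bool) -> u64 {
    if nodes == 0 {
        0
    } else if p2p {
        model_gb
    } else {
        model_gb * nodes
    }
}

/// Percentage of origin traffic saved by P2P for the same cluster.
fn reduction_percent(model_gb: u64, nodes: u64) -> f64 {
    let without_p2p = origin_traffic_gb(model_gb, nodes, false) as f64;
    if without_p2p == 0.0 {
        return 0.0;
    }
    let with_p2p = origin_traffic_gb(model_gb, nodes, true) as f64;
    100.0 * (1.0 - with_p2p / without_p2p)
}
```

For the 50-node example this yields 6.5 TB versus ~130 GB, a 98% reduction; for the 200-node cluster in Figure 1, 26 TB versus ~130 GB, or 99.5%.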

When you’re managing self-healing, multi-cloud Kubernetes clusters at enterprise scale, this kind of origin traffic reduction isn’t an optimization; it’s a prerequisite for operational sanity.

2. Multi-hub model sourcing

Teams increasingly source models from multiple hubs. A team might use Llama from Hugging Face and Qwen from ModelScope. With both backends built in, Dragonfly becomes the unified distribution layer regardless of origin:

```shell
# From Hugging Face
dfget hf://meta-llama/Llama-2-7b -O /models/llama2/ -r

# From ModelScope
dfget modelscope://qwen/Qwen-7B -O /models/qwen/ -r
```

Same P2P mesh. Same caching layer. Same operational model. Different origins.

3. CI/CD for ML pipelines

Model evaluation pipelines that spin up ephemeral runners to test against specific model versions benefit from revision pinning:

```shell
# Deterministic model versions in CI, from either hub
dfget hf://org/model --hf-revision abc123def -O /workspace/model/ -r
dfget modelscope://org/model --ms-revision v1.0 -O /workspace/model/ -r
```

Combined with Dragonfly’s caching layer, repeated CI runs across different runners pull from the local P2P cache instead of remote hubs. In the enterprise CI/CD systems I’ve built, this eliminates one of the last remaining sources of non-deterministic pipeline failures: flaky model downloads.
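Pinning only makes runs reproducible if the pinned revision cannot move. A branch name such as main advances with every commit, while a full Git commit hash always names the same snapshot. The guard below is a hypothetical pre-flight check a CI job could run before calling dfget, not a dfget feature. It accepts only full 40-character hexadecimal hashes, so the short placeholder abc123def in the example above would be rejected, and hubs or teams that pin by tag would need a looser rule.

```rust
/// Hypothetical CI guard: only a full 40-character hexadecimal Git commit
/// hash counts as an immutable pin. Branch names (e.g. "main") can move, and
/// short hashes are rejected to keep the check simple and unambiguous.
fn is_immutable_revision(revision: &str) -> bool {
    revision.len() == 40 && revision.chars().all(|c| c.is_ascii_hexdigit())
}

/// Fail fast on the first revision that is not a full commit hash.
fn check_pins(revisions: &[&str]) -> Result<(), String> {
    match revisions.iter().find(|rev| !is_immutable_revision(rev)) {
        Some(rev) => Err(format!("revision {rev:?} is not a full commit hash")),
        None => Ok(()),
    }
}
```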

4. Cross-platform model sourcing

For organizations running global infrastructure, Hugging Face serves as the primary hub. Dragonfly’s dual-hub support enables a single distribution platform that routes to the optimal origin:

```shell
# Global clusters pull from Hugging Face
dfget hf://deepseek-ai/DeepSeek-R1 -O /models/DeepSeek-R1/ -r
```

5. Air-gapped and edge deployments

For environments with restricted or no internet access, common in regulated enterprise and financial services infrastructure, Dragonfly’s seed peer can be pre-loaded from an internet-connected staging area. Once seeded, internal nodes use P2P to distribute models without any external connectivity.

6. Dataset distribution for training

Large-scale training jobs often need the same dataset replicated across data-parallel workers:

```shell
# From Hugging Face
dfget hf://datasets/allenai/c4/en/train-00000-of-01024.json.gz \
  -O /data/c4/train-00000.json.gz

# From ModelScope
dfget modelscope://datasets/damo/squad-zh/train.json \
  -O /data/squad-zh/train.json
```

P2P distribution turns O(N) origin fetches into O(1) origin fetches, plus P2P propagation that grows roughly as O(log N) rounds when every peer holding a piece also serves it.
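The O(log N) figure comes from an idealized doubling argument, which this sketch makes explicit: the seed peer starts with a piece, and in each round every peer that holds it passes it to one peer that does not. Real transfers are bounded by bandwidth and by how Dragonfly's scheduler assigns parents, so this counts rounds under that simplified model only; propagation_rounds and origin_fetches are illustrative names.

```rust
/// Idealized doubling model of P2P propagation for one piece: the seed peer
/// starts with it, and each round every holder sends it to one non-holder.
/// Returns the rounds needed until all `nodes` plus the seed hold the piece.
fn propagation_rounds(nodes: u64) -> u32 {
    let total = nodes.saturating_add(1); // +1 for the seed peer
    let mut holders: u64 = 1;
    let mut rounds = 0;
    while holders < total {
        holders = holders.saturating_mul(2);
        rounds += 1;
    }
    rounds
}

/// Origin fetches for the same piece: one per node without P2P, one with it.
fn origin_fetches(nodes: u64, p2p: bool) -> u64 {
    if p2p {
        nodes.min(1)
    } else {
        nodes
    }
}
```

With 200 nodes plus the seed, the model needs 8 rounds (2^8 = 256 ≥ 201), while the origin is still contacted only once.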

Comparison: Why not just use platform CLIs?

| Capability | huggingface-cli / modelscope CLI | dfget hf:// / dfget modelscope:// |
|---|---|---|
| Single-source download | Yes | Yes |
| P2P acceleration | No | Yes |
| Piece-level parallelism | No | Yes |
| Cluster-wide caching | No | Yes |
| Origin traffic for N nodes | N × model size | ~1 × model size |
| Multi-hub unified interface | No (separate CLIs) | Yes (single tool) |
| Private repo auth | Yes | Yes |
| Revision pinning | Yes | Yes |
| Recursive download | Yes | Yes |
| Kubernetes-native integration | No | Yes (DaemonSet) |
| Pluggable backend system | No | Yes |

Platform-specific CLIs are excellent for individual developer workflows. The native protocol support in Dragonfly is built for infrastructure-scale model distribution.

Getting started

Prerequisites

- A Dragonfly cluster deployed (scheduler + seed peer + peer on each node)

- The dfget CLI available on target machines

Quick Start

1. Install Dragonfly (via Helm for Kubernetes):

```shell
helm repo add dragonfly
helm install dragonfly dragonfly/dragonfly \
  --namespace dragonfly-system --create-namespace
```

2. Download models with P2P from either hub:

```shell
# From Hugging Face
dfget hf://deepseek-ai/DeepSeek-R1/model.safetensors -O ./model.safetensors

# From ModelScope
dfget modelscope://deepseek-ai/DeepSeek-R1/config.json -O ./config.json

# Recursive repository download (works with both)
dfget hf://deepseek-ai/DeepSeek-R1 -O ./DeepSeek-R1/ -r --hf-token=$HF_TOKEN
dfget modelscope://deepseek-ai/DeepSeek-R1 -O ./DeepSeek-R1/ -r --ms-token=$MS_TOKEN
```

3. Verify P2P is working:

```shell
# Check Dragonfly daemon logs for peer transfer activity
journalctl -u dfdaemon | grep "peer task"
```

What’s next

These two backends are just the beginning. The architecture is designed for extensibility: adding support for another model hub follows the same pattern. Implement the Backend trait, register the scheme, and the entire P2P mesh immediately serves the new source. Potential future enhancements include:

- Intelligent pre-warming: Automatically seed popular models across clusters based on usage patterns.

- Deduplication across revisions: Share common pieces between model versions (e.g., shared tokenizer files).

- Cross-hub deduplication: When the same model exists on both Hugging Face and ModelScope, share pieces across download sources.

- Integration with Kubernetes model serving frameworks: Native support in KServe, Triton Inference Server, and vLLM for P2P model loading.

- Bandwidth-aware scheduling: Prioritize P2P transfers based on GPU node topology and network proximity.

Contributing

The PRs that brought these features to life: PR #1665 (hf://) and PR #1673 (modelscope://).

Dragonfly is a CNCF Graduated project and welcomes contributions. If you’re working on AI infrastructure and have ideas for improving model distribution, check out the Dragonfly GitHub repository and join the community.

Conclusion

The AI industry’s model distribution problem doesn’t need another wrapper script or another S3 bucket. It needs infrastructure-level P2P distribution with a first-class understanding of where models live, whether that’s Hugging Face, ModelScope, or the next model hub to emerge.

Dragonfly now speaks both hf:// and modelscope:// natively: authenticated, revision-aware, P2P-accelerated paths from the world’s two largest model hubs to every node in your cluster. One origin fetch per hub. Peer-distributed propagation. No operational overhead.

The models are getting bigger. The clusters are getting larger. The hubs are multiplying. The distribution layer needs to keep up.

Now it can.

Pavan Madduri is a Senior Cloud Platform Engineer at W.W. Grainger and a CNCF Golden Kubestronaut. He focuses on architecting large-scale, self-healing multi-cloud infrastructure and pioneering ‘Agentic Ops’ for enterprise Kubernetes environments. He’s an active contributor to the cloud-native ecosystem, focusing on observability and high-performance container distribution. Follow his work on GitHub.