In the current AI landscape, the ‘context window’ has become a blunt instrument. We’ve been told that if we simply expand the memory of a frontier model, the retrieval problem disappears. But as any AI professional building RAG (Retrieval-Augmented Generation) systems knows, stuffing a million tokens into a prompt often leads to higher latency, astronomical costs, and a ‘lost in the middle’ reasoning failure that no amount of compute seems to fully solve.

Chroma, the company behind the popular open-source vector database, is taking a different, more surgical approach. It has released Context-1, a 20B parameter agentic search model designed to act as a specialized retrieval subagent.

Rather than trying to be a general-purpose reasoning engine, Context-1 is a highly optimized ‘scout.’ It’s built to do one thing: find the right supporting documents for complex, multi-hop queries and hand them off to a downstream frontier model for the final answer.

The Rise of the Agentic Subagent

Context-1 is derived from gpt-oss-20B, a Mixture of Experts (MoE) architecture that Chroma has fine-tuned using a combination of Supervised Fine-Tuning (SFT) and Reinforcement Learning (RL) via CISPO, applied over a staged curriculum.

The goal isn’t just to retrieve chunks; it’s to execute a sequential reasoning task. When a user asks a complex question, Context-1 doesn’t just hit a vector index once. It decomposes the high-level query into targeted subqueries, executes parallel tool calls (averaging 2.56 calls per turn), and iteratively searches the corpus.
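
The loop described above can be sketched roughly as follows. This is a minimal illustration, not Chroma's harness: the corpus, the `search` function, and the pre-scripted `turns` are all hypothetical stand-ins. In the real system the model itself would decide each turn's subqueries based on what earlier turns returned.

```python
from concurrent.futures import ThreadPoolExecutor

# Toy corpus; document ids and texts are made up for illustration.
CORPUS = {
    "d1": "Acme Corp acquired Widget Labs in 2019.",
    "d2": "Widget Labs was founded by Jane Doe.",
    "d3": "Jane Doe studied physics in Zurich.",
}


def search(subquery: str) -> list[str]:
    """Hypothetical search tool: ids of documents sharing a word with the subquery."""
    terms = set(subquery.lower().split())
    return [
        doc_id
        for doc_id, text in CORPUS.items()
        if terms & set(text.lower().rstrip(".").split())
    ]


def run_turn(subqueries: list[str]) -> dict[str, list[str]]:
    """Issue all subqueries of one turn in parallel and collect hits per subquery."""
    with ThreadPoolExecutor(max_workers=max(1, len(subqueries))) as pool:
        results = list(pool.map(search, subqueries))
    return dict(zip(subqueries, results))


def multi_hop(turns: list[list[str]]) -> list[str]:
    """Run several turns in sequence, accumulating unique document ids in order."""
    seen: list[str] = []
    for subqueries in turns:
        for hits in run_turn(subqueries).values():
            for doc_id in hits:
                if doc_id not in seen:
                    seen.append(doc_id)
    return seen
```

Calling `multi_hop([["acme", "widget"], ["jane"]])` runs two subqueries in parallel in the first turn, then follows up with a second turn that reaches the document the first turn could not.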

For AI professionals, the architectural shift here is the most important takeaway: Decoupling Search from Generation. In a traditional RAG pipeline, the developer manages the retrieval logic. With Context-1, that responsibility is shifted to the model itself. It operates inside a specific agent harness that allows it to interact with tools like search_corpus (hybrid BM25 + dense search), grep_corpus (regex), and read_document.
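
To make the harness idea concrete, here is a small sketch of how tool calls emitted by a model might be routed to functions. Only the three tool names come from the release; the signatures, the `dispatch` helper, the call format, and the toy corpus are assumptions. In particular, `search_corpus` below ranks by plain word overlap and is only a placeholder for real hybrid BM25 + dense search.

```python
import re

# Toy corpus; ids and texts are invented for illustration.
DOCS = {
    "a": "Form 10-K filed by Acme Corp for fiscal year 2023.",
    "b": "Patent US1234567 describes a widget fastener.",
    "c": "Acme Corp disclosed a widget recall in its 20-F.",
}


def search_corpus(query: str, top_k: int = 2) -> list[str]:
    """Placeholder for hybrid search: ranks by shared lowercase words only."""
    terms = set(query.lower().split())
    scored = [
        (len(terms & set(re.findall(r"\w+", text.lower()))), doc_id)
        for doc_id, text in DOCS.items()
    ]
    ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: (-s[0], s[1]))
    return [doc_id for _, doc_id in ranked[:top_k]]


def grep_corpus(pattern: str) -> list[str]:
    """Return ids of documents whose text matches the regular expression."""
    compiled = re.compile(pattern)
    return [doc_id for doc_id, text in DOCS.items() if compiled.search(text)]


def read_document(doc_id: str) -> str:
    """Return the full text of one document."""
    return DOCS[doc_id]


TOOLS = {
    "search_corpus": search_corpus,
    "grep_corpus": grep_corpus,
    "read_document": read_document,
}


def dispatch(call: dict):
    """Route a tool call (a name plus keyword arguments) to its function."""
    name = call["name"]
    if name not in TOOLS:
        raise ValueError(f"unknown tool: {name}")
    return TOOLS[name](**call["arguments"])
```

For example, `dispatch({"name": "grep_corpus", "arguments": {"pattern": r"\d{2}-[A-Z]"}})` finds the documents that mention a filing type such as 10-K or 20-F, which keyword or embedding search can easily miss.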

The Killer Feature: Self-Modifying Context

The most technically significant innovation in Context-1 is Self-Modifying Context.

As an agent gathers information over multiple turns, its context window fills up with documents, many of which become redundant or irrelevant to the final answer. General-purpose models eventually ‘choke’ on this noise. Context-1, however, has been trained to prune its own context, and Chroma reports a pruning accuracy of 0.94.

Mid-search, the model reviews its accumulated context and proactively executes a prune_chunks command to discard irrelevant passages. This ‘soft limit pruning’ keeps the context window lean, freeing up capacity for deeper exploration and preventing the ‘context rot’ that plagues longer reasoning chains. This allows a specialized 20B model to maintain high retrieval quality within a bounded 32k context, even when navigating datasets that would normally require much larger windows.
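
A rough sketch of what soft-limit pruning could look like in a harness is shown below. The `Context` and `Chunk` classes, the whitespace token count, and the `is_relevant` judge are all assumptions made for illustration. In Context-1 the model itself decides which chunks to drop, and the actual prune_chunks interface may differ.

```python
from dataclasses import dataclass, field


@dataclass
class Chunk:
    chunk_id: str
    text: str

    @property
    def tokens(self) -> int:
        # Whitespace word count as a crude stand-in for a real tokenizer.
        return len(self.text.split())


@dataclass
class Context:
    soft_limit: int  # token count above which pruning is triggered
    chunks: list[Chunk] = field(default_factory=list)

    def used(self) -> int:
        return sum(c.tokens for c in self.chunks)

    def add(self, chunk: Chunk) -> None:
        self.chunks.append(chunk)

    def over_soft_limit(self) -> bool:
        return self.used() > self.soft_limit

    def prune_chunks(self, chunk_ids: set[str]) -> int:
        """Drop the named chunks and return how many tokens were freed."""
        before = self.used()
        self.chunks = [c for c in self.chunks if c.chunk_id not in chunk_ids]
        return before - self.used()


def maybe_prune(ctx: Context, is_relevant) -> int:
    """If over the soft limit, prune every chunk the judge marks irrelevant."""
    if not ctx.over_soft_limit():
        return 0
    doomed = {c.chunk_id for c in ctx.chunks if not is_relevant(c)}
    return ctx.prune_chunks(doomed)
```

The point of the soft limit is that pruning happens before the hard window is exhausted, so the agent always has room left for the next round of search results.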

Building the ‘Leak-Proof’ Benchmark: context-1-data-gen

To train and evaluate a model on multi-hop reasoning, you need data where the ‘ground truth’ is known and requires multiple steps to reach. Chroma has open-sourced the tool it used to solve this: the context-1-data-gen repository.

The pipeline avoids the pitfalls of static benchmarks by generating synthetic multi-hop tasks across four specific domains:

- Web: Multi-step research tasks from the open web.

- SEC: Finance tasks involving SEC filings (10-K, 20-F).

- Patents: Legal tasks focusing on USPTO prior-art search.

- Email: Search tasks using the Epstein files and Enron corpus.

The data generation follows a rigorous Explore → Verify → Distract → Index pattern. It generates ‘clues’ and ‘questions’ where the answer can only be found by bridging information across multiple documents. By mining ‘topical distractors’ (documents that look relevant but are logically useless), Chroma ensures that the model cannot ‘hallucinate’ its way to a correct answer through simple keyword matching.
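
The properties this paragraph describes can be illustrated with a toy version of the Verify, Distract, and Index stages. Nothing here is taken from the context-1-data-gen code: the `Task` structure, the substring-based clue check, and the word-overlap test for "topical" are simplifying assumptions, and the Explore stage (which needs an LLM agent) is left out entirely.

```python
from dataclasses import dataclass


@dataclass
class Task:
    question: str
    answer: str
    clues: list[str]            # facts that must be combined to reach the answer
    supporting: dict[str, str]  # doc id -> text of documents holding the clues


def words(text: str) -> set[str]:
    return set(text.lower().replace("?", "").replace(".", "").split())


def verify(task: Task) -> bool:
    """Every clue appears in some supporting document, but no single document holds them all."""
    texts = [t.lower() for t in task.supporting.values()]
    each_found = all(any(c.lower() in t for t in texts) for c in task.clues)
    none_complete = not any(all(c.lower() in t for c in task.clues) for t in texts)
    return each_found and none_complete


def mine_distractors(task: Task, pool: dict[str, str], min_overlap: int = 2) -> list[str]:
    """Pick documents that share question vocabulary yet contain none of the clues."""
    q = words(task.question)
    picked = []
    for doc_id, text in pool.items():
        on_topic = len(q & words(text)) >= min_overlap
        has_clue = any(c.lower() in text.lower() for c in task.clues)
        if on_topic and not has_clue:
            picked.append(doc_id)
    return picked


def build_index(task: Task, distractor_ids: list[str], pool: dict[str, str]) -> dict[str, str]:
    """Final searchable corpus: supporting documents mixed with distractors."""
    index = dict(task.supporting)
    index.update({d: pool[d] for d in distractor_ids})
    return index
```

A task whose clues all sit in one document fails `verify`, which is the check that forces genuine multi-hop retrieval. The distractors then make sure that matching question keywords alone surfaces plausible but useless documents.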

Performance: Faster, Cheaper, and Competitive with GPT-5

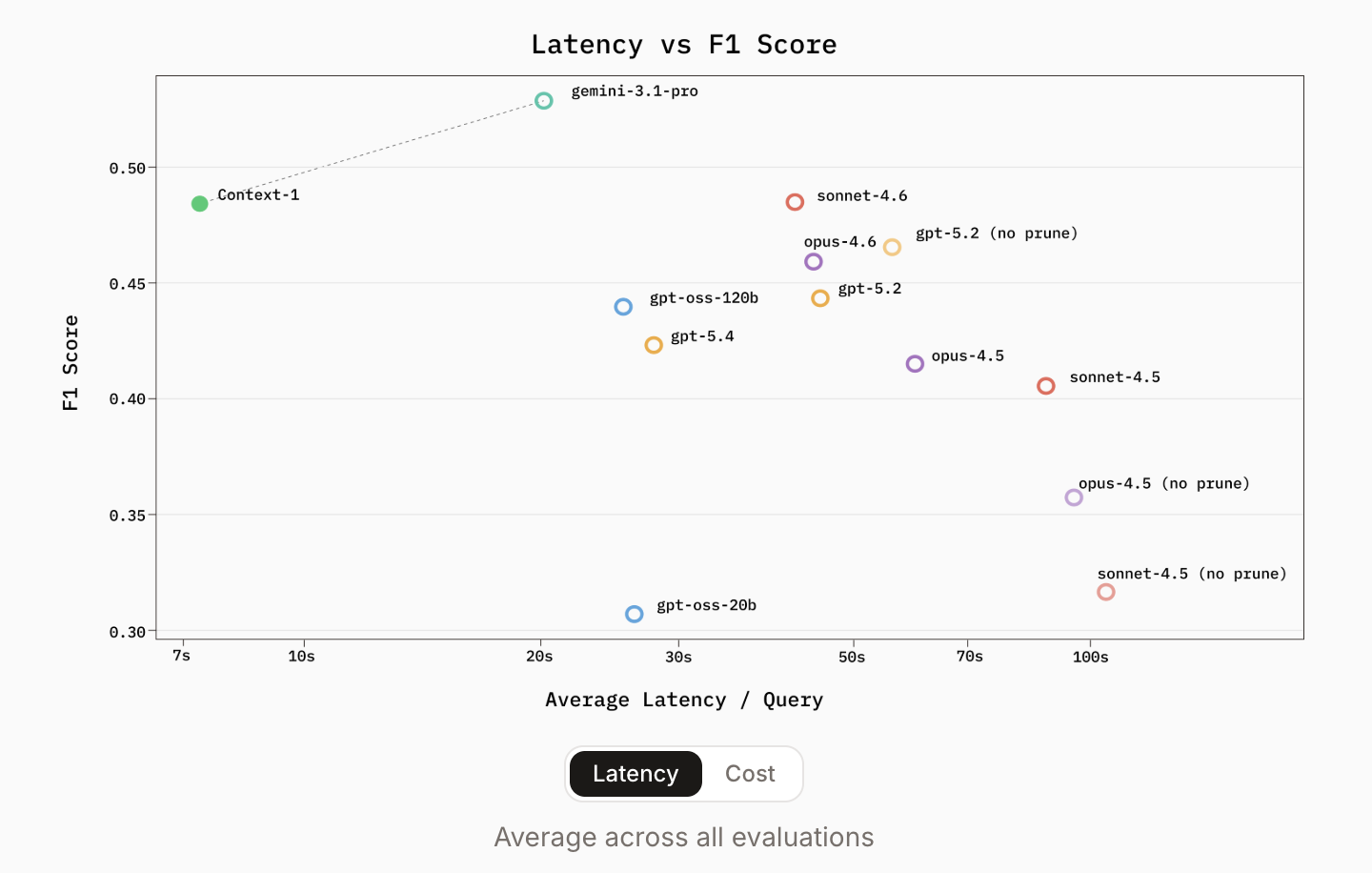

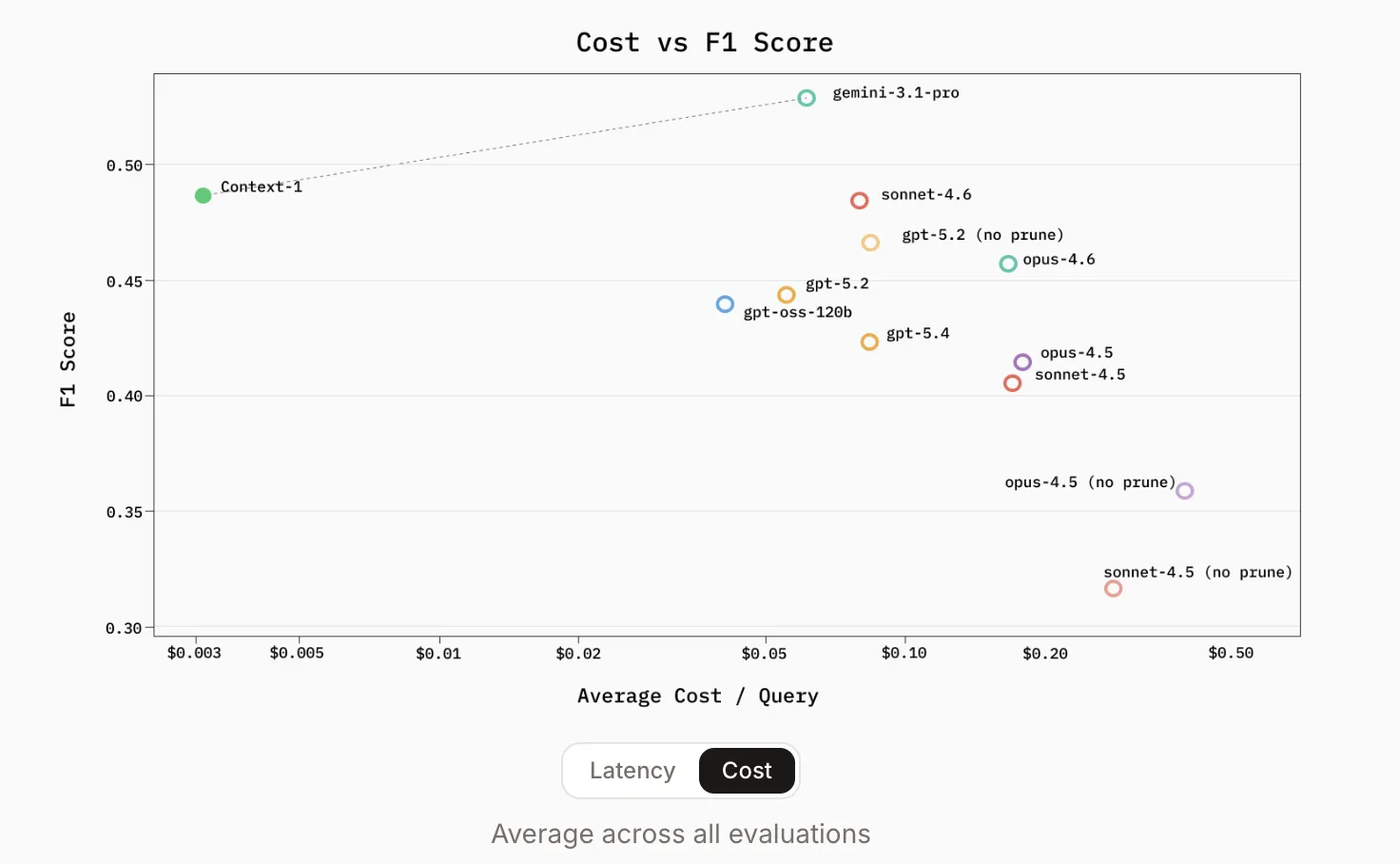

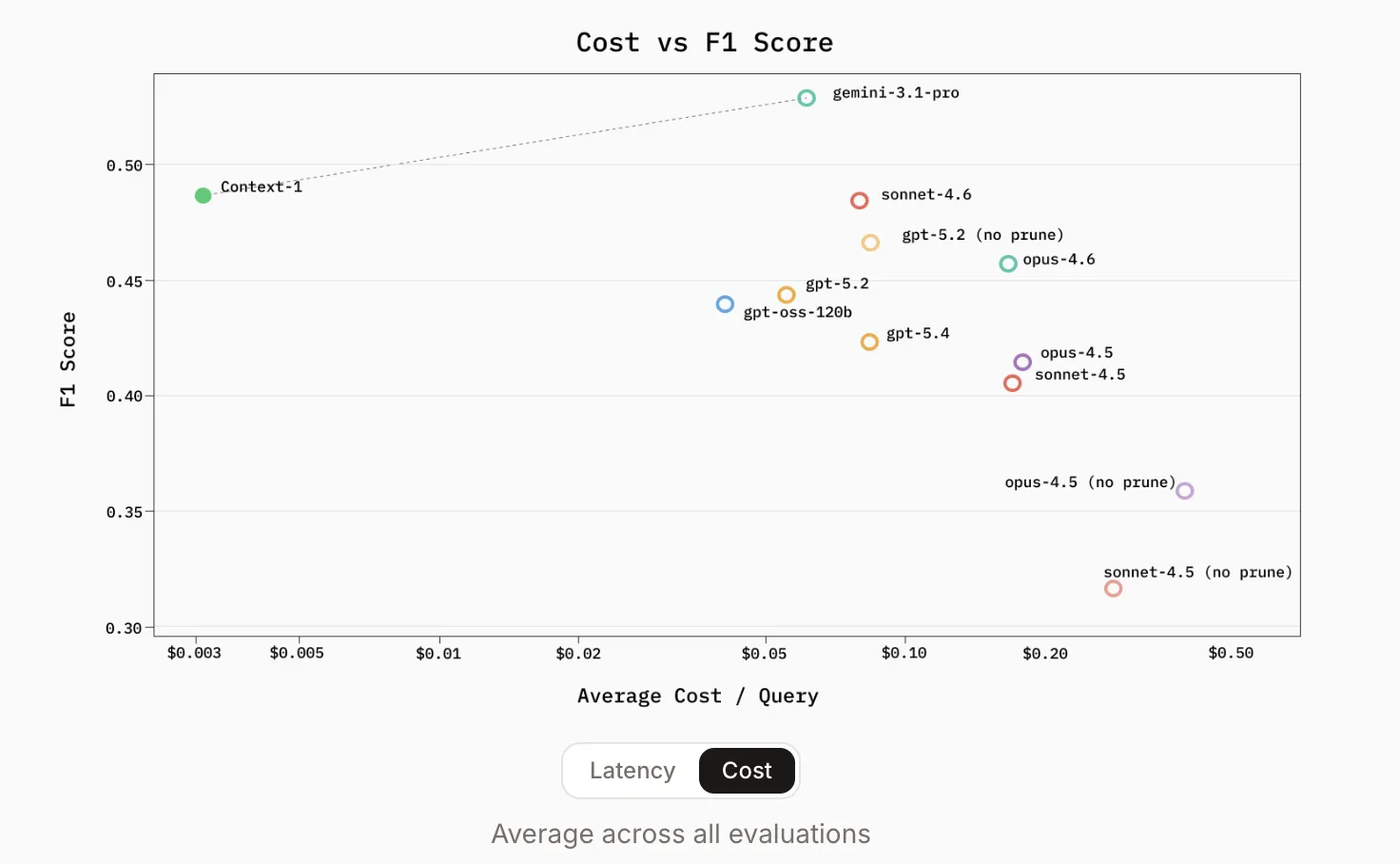

The benchmark results released by Chroma are a reality check for the ‘frontier-only’ crowd. Context-1 was evaluated against 2026-era heavyweights including gpt-oss-120b, gpt-5.2, gpt-5.4, and the Sonnet/Opus 4.5 and 4.6 families.

Across public benchmarks like BrowseComp-Plus, SealQA, FRAMES, and HotpotQA, Context-1 demonstrated retrieval performance comparable to frontier models that are orders of magnitude larger.

The most compelling metrics for AI devs are the efficiency gains:

- Speed: Context-1 offers up to 10x faster inference than general-purpose frontier models.

- Cost: It’s roughly 25x cheaper to run for the same retrieval tasks.

- Pareto Frontier: By using a ‘4x’ configuration (running four Context-1 agents in parallel and merging results via reciprocal rank fusion), it matches the accuracy of a single GPT-5.4 run at a fraction of the compute.
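
Reciprocal rank fusion, the merging step mentioned in the ‘4x’ configuration, is simple enough to show in full. Each document receives a score of 1 / (k + rank) from every ranked list it appears in, and the scores are summed. The sketch below uses k = 60, a common default for this technique. The value Chroma uses is not stated, so treat it as an assumption, along with the function name and the tie-breaking rule.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge ranked lists: each document scores sum(1 / (k + rank)) over the lists it appears in."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Sort by descending score; break ties by document id for determinism.
    return sorted(scores, key=lambda d: (-scores[d], d))
```

Because only ranks are used, the four agents' outputs can be merged without calibrating their raw relevance scores against each other. Documents that several agents surface near the top rise above documents that only one agent found.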

The ‘performance cliff’ identified isn’t about token length alone; it’s about hop-count. As the number of reasoning steps increases, general models often fail to sustain the search trajectory. Context-1’s specialized training allows it to navigate these deeper chains more reliably because it isn’t distracted by the ‘answering’ task until the search is concluded.

Key Takeaways

- The ‘Scout’ Model Strategy: Context-1 is a specialized 20B parameter agentic search model (derived from gpt-oss-20B) designed to act as a retrieval subagent, showing that a lean, specialized model can rival massive general-purpose LLMs in multi-hop search.

- Self-Modifying Context: To solve the problem of ‘context rot,’ the model prunes its own context with a reported accuracy of 0.94, allowing it to proactively discard irrelevant documents mid-search and keep its context window focused and high-signal.

- Leak-Proof Benchmarking: The open-sourced context-1-data-gen tool uses a synthetic ‘Explore → Verify → Distract → Index’ pipeline to create multi-hop tasks in Web, SEC, Patent, and Email domains, ensuring models are tested on reasoning rather than memorized data.

- Decoupled Efficiency: By focusing solely on retrieval, Context-1 achieves up to 10x faster inference and roughly 25x lower costs than frontier models like GPT-5.4, while matching their accuracy on complex benchmarks like HotpotQA and FRAMES.

- The Tiered RAG Future: This release champions a tiered architecture where a high-speed subagent curates a ‘golden context’ for a downstream frontier model, addressing the latency and reasoning failures of massive, unmanaged context windows.
