AI tools have quickly become a part of everyday life, powering everything from content creation and software development to research and business workflows.

Platforms such as ChatGPT, Claude, Microsoft Copilot, Perplexity and many others are now widely used by individuals and organizations alike, often assisting with tasks that involve internal documents, research material, software code, or other potentially sensitive information.

In many organizations, these tools are already embedded into daily workflows, making them not only convenient but also operationally essential.

As reliance on these services continues to grow, so does their value, not only for legitimate users but also within the cybercrime ecosystem. Access to advanced AI models can significantly reduce effort, improve output quality, and accelerate tasks that previously required expertise or time.

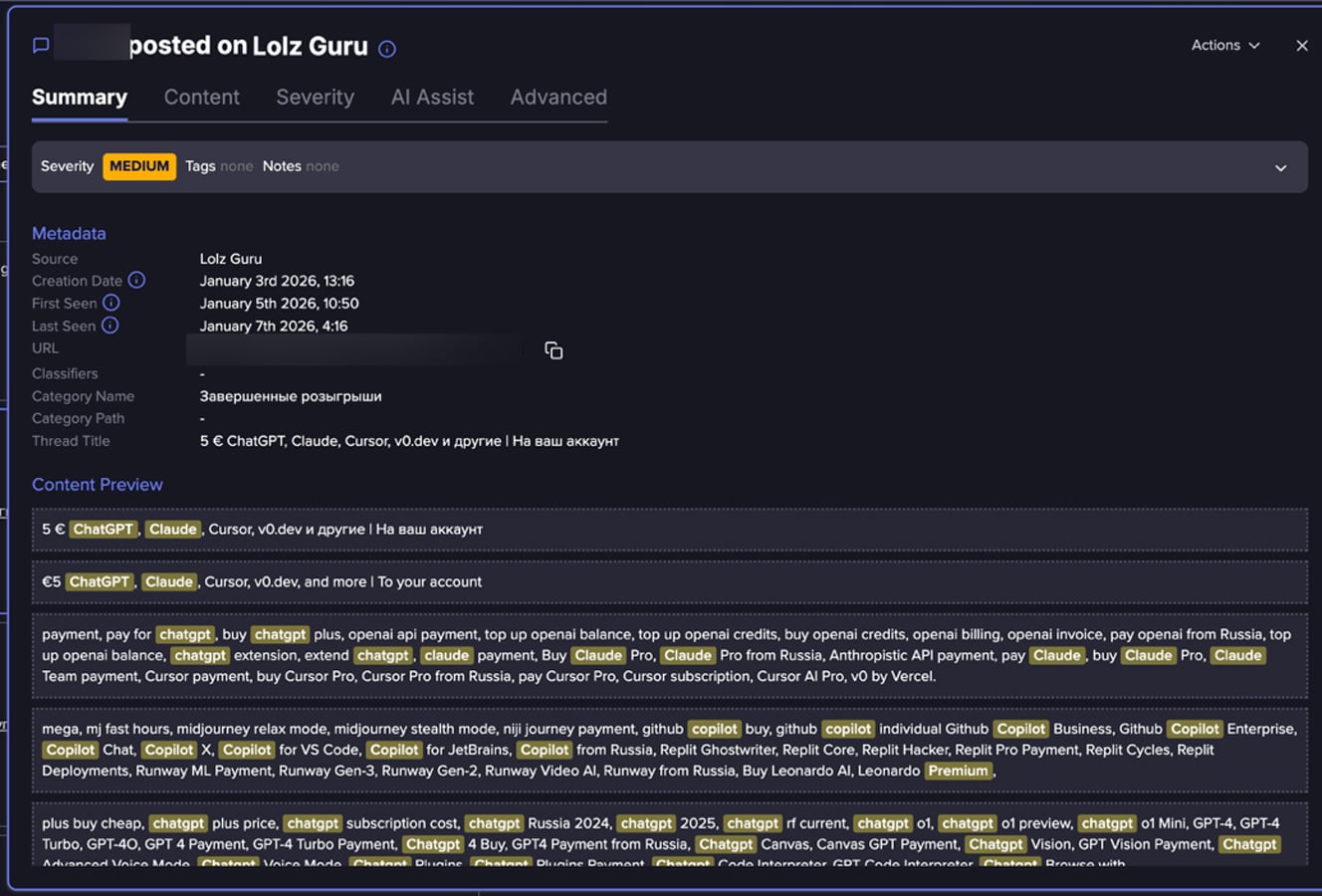

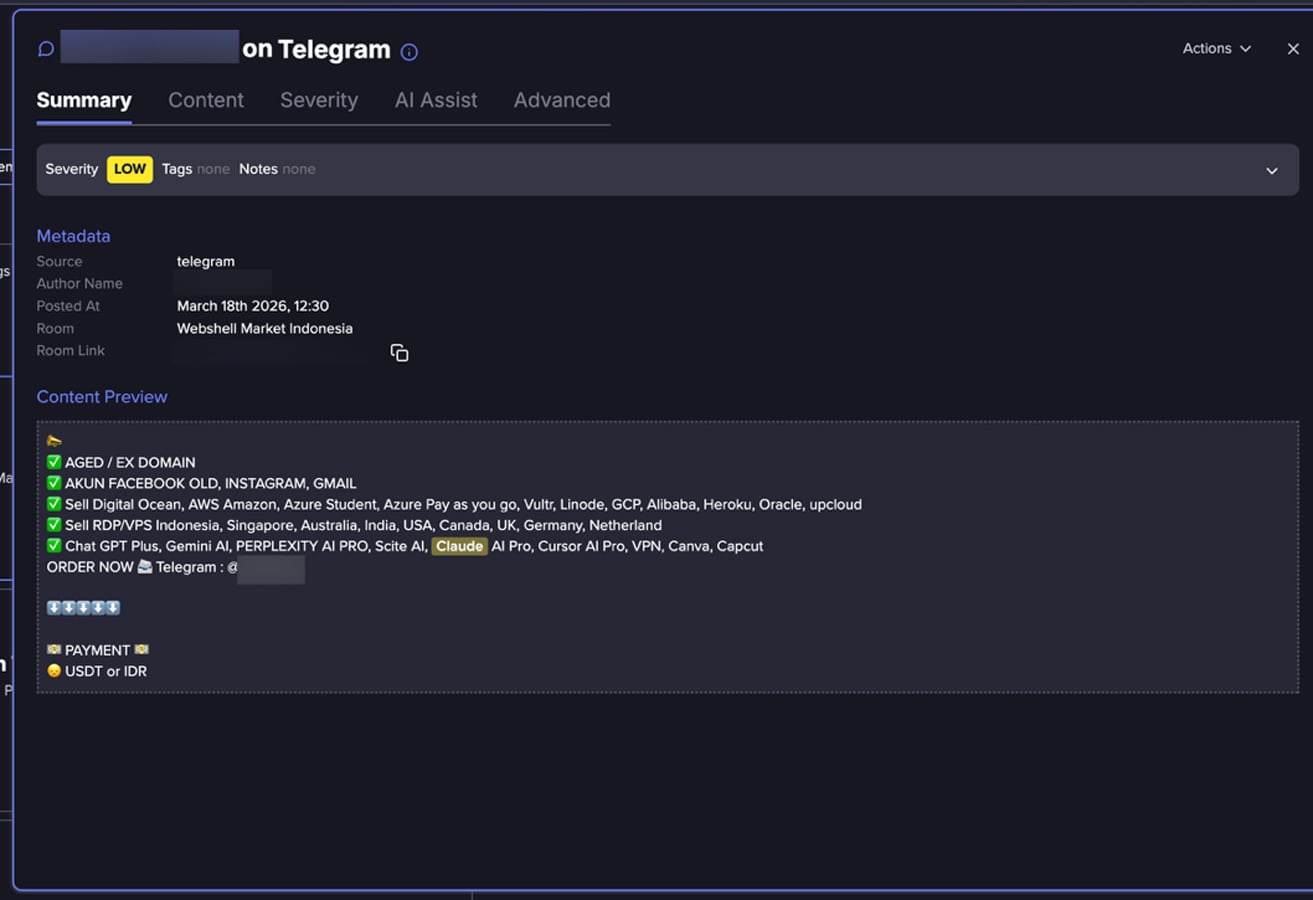

An analysis conducted by Flare analysts of hundreds of posts collected from fraud-oriented online communities reveals a growing underground market centered on premium AI platform access.

Rather than isolated cases of account misuse, the data points to a recurring pattern in which access to AI platforms is repeatedly advertised and redistributed through resale-style listings. Many of these listings promote discounted subscriptions, bundled access to multiple AI tools, or usage models that claim to remove typical platform limitations.

This may suggest a broader trend in underground markets, where access to digital services can be bundled, repackaged, and resold across a wider buyer base.

How Do Threat Actors Obtain AI Accounts?

While the dataset analyzed by Flare’s researchers doesn’t directly document acquisition methods, patterns in the data may suggest several pathways:

- Exposed keys and secrets: In recent research conducted by Flare, researchers showed how exposed keys can be found in Docker Hub.

- Credential theft and account takeover: Listings that include aged Gmail or Outlook accounts may indicate that compromised credentials are being reused to access AI platforms.

- Bulk account creation and verification bypass: References to virtual phone numbers may suggest that actors create accounts at scale while attempting to bypass verification controls.

- Abuse of trials and promotional programs: Mentions of gift codes or trial access may indicate that onboarding incentives are being exploited.

- Shared or resold subscriptions: Some listings and buyer discussions may suggest that access is distributed across multiple users rather than tied to a single owner.

- Potential API key or developer access resale: Mentions of API keys may indicate that backend or programmatic access is also being marketed.

Taken together, these methods may indicate a mix of account compromise, large-scale provisioning, and policy abuse.
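The "exposed keys and secrets" pathway can be illustrated with a minimal sketch of how a defender might scan text, such as a Dockerfile or an extracted image layer, for strings that look like API keys before publishing it. The two regular expressions and the function name `find_candidate_secrets` are illustrative assumptions, not Flare's methodology or any provider's official key format; real key formats vary by provider and change over time.

```python
import re

# Hypothetical, illustrative patterns only. They are NOT authoritative key
# formats for any provider and will produce false positives and negatives.
SECRET_PATTERNS = {
    # A token beginning with "sk-" followed by at least 20 key-like characters.
    "generic_sk_key": re.compile(r"\bsk-[A-Za-z0-9_-]{20,}"),
    # An assignment to a variable whose name ends in API_KEY.
    "env_api_key": re.compile(
        r"(?i)\b[A-Z0-9_]*API_KEY\s*=\s*['\"]?[^\s'\"]{16,}"
    ),
}


def find_candidate_secrets(text):
    """Return (line_number, pattern_name) pairs for lines that look like secrets."""
    findings = []
    for line_number, line in enumerate(text.splitlines(), start=1):
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((line_number, name))
    return findings


if __name__ == "__main__":
    # An obviously fake placeholder value, built at runtime.
    fake_key = "sk-" + "x" * 24
    dockerfile = (
        "FROM python:3.12\n"
        f'ENV EXAMPLE_API_KEY="{fake_key}"\n'
        "RUN pip install requests\n"
    )
    for line_number, name in find_candidate_secrets(dockerfile):
        print(f"line {line_number}: possible secret ({name})")
```

Pattern matching of this kind is only a first filter. Purpose-built secret scanners add entropy checks and provider-side validation, and any key that is found exposed should be revoked rather than merely removed from the file.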

You can monitor underground markets and Telegram channels where threat actors buy and sell stolen AI platform access, before attackers use it against your organization.

Search for your AI accounts for free

Why does underground AI access attract buyers?

- Cost: Official subscriptions for many premium AI services typically start around $20 per month and can increase significantly depending on usage or enterprise features. In contrast, underground listings frequently emphasize cheaper access or bundled offerings. While exact pricing is not always clearly stated, the consistent focus on affordability suggests a meaningful price gap.

- Scale: Buyers who require multiple accounts for automation, testing, or evasion purposes may find it easier to purchase ready-made access rather than create accounts individually, particularly where verification and payment requirements introduce friction.

- Sanctions bypass: In some countries, such as Russia, Iran or North Korea, access to ChatGPT, Claude and similar services, and payment for them with local credit cards, may be restricted. Underground markets offer ready-to-use accounts that remove onboarding steps or know-your-customer (KYC) checks and provide immediate access.

- Model restrictions: Some posts advertise “fewer restrictions,” appealing to users looking to bypass safeguards or usage limits. While these claims often read like exaggerated advertising and may seem impractical, they reflect a common reality in underground markets, where accounts or API keys are resold with the promise of reduced controls or oversight.

Flare link to post. Sign up for the free trial to access it if you aren’t already a customer.

How Threat Actors Are Using AI Platforms

Access to AI platforms may enable a range of activities, some of which extend beyond simple misuse of the services themselves.

In fraud-related scenarios, generative AI tools may be used to produce phishing messages, scam scripts, and multilingual social engineering content at scale. AI-generated text can improve the realism and effectiveness of fraudulent communications.

For example, Europol’s 2025 threat assessment warns that criminal groups are increasingly using generative AI to automate phishing and fraud operations at scale, noting that these tools enable attackers to produce convincing content with greater speed and sophistication than previously possible.

Similarly, Palo Alto Networks’ Unit 42 reported that attackers are leveraging AI to craft highly personalized social engineering campaigns, allowing malicious messages to be tailored more precisely to individual targets and contexts.

In August 2025, Anthropic released a report covering misuse of AI, and in November 2025 it released another report on an AI-orchestrated cyber espionage campaign, both illustrating how attackers can misuse AI.

AI tools may also support automation, coding, and content generation tasks, allowing actors to operate more efficiently. Even individuals without strong technical backgrounds can leverage these tools to perform complex tasks.

Some platforms also include image, audio, or video generation capabilities, which may be used to create synthetic content for impersonation or deception.

The Growing Underground Market for AI Accounts

Flare researchers’ findings suggest that threat actors and underground sellers perceive AI accounts as a valuable black market commodity, and that these accounts are integrated into the existing ecosystem that trades access, identity, and digital services. These offerings often appear alongside email accounts, developer tools, and verification infrastructure.

The analysis reveals several types of AI-related offerings, ranging from direct resale of premium subscriptions to claims of unrestricted or extended access. These offers are often presented in simple, product-like language, making them accessible even to buyers without technical expertise.

Flare’s data contains offers such as:

- ChatGPT Plus and Pro subscriptions
- Claude Pro access
- Microsoft Copilot bundled with Office 365 accounts
- Perplexity AI Pro
- API-related offerings

In some cases, multiple services are advertised together as a single package.

Some posts use promotional language such as “premium access,” “no limits,” or “full API access.” While these claims can’t always be verified, they may indicate attempts to attract buyers seeking fewer restrictions or greater flexibility than official plans provide.


This trend may lower the barrier to entry and expand misuse across a broader range of actors. As AI services continue to evolve and gain adoption, their value within underground markets may also increase.

Addressing this shift will likely require stronger account protections, improved monitoring for suspicious activity, and greater awareness of how these services are being incorporated into broader fraud ecosystems.

How organizations can mitigate the risk

- Enable multi-factor authentication (MFA) on all AI accounts
- Avoid sharing sensitive data unless using approved enterprise environments
- Monitor login behavior and usage anomalies
- Use enterprise-grade accounts with better controls
- Rotate and secure API keys regularly
- Monitor underground activity to identify exposed accounts, keys and secrets
- Educate employees about the risks of shared or purchased accounts
- Implement governance policies for AI tool usage
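As one illustration of monitoring login behavior, the sketch below flags accounts that log in from more than one country within a short time window, a pattern that could indicate access being shared or resold. It is a minimal example under stated assumptions: the event shape `(account, country, timestamp)`, the function name `flag_multi_country_logins`, and the one-hour default window are all hypothetical, and real audit logs from an AI platform or identity provider will look different.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def flag_multi_country_logins(events, window=timedelta(hours=1), max_countries=1):
    """Return accounts seen in more than `max_countries` countries within `window`.

    `events` is an iterable of (account, country, timestamp) tuples.
    This event shape is a hypothetical stand-in for real audit log records.
    """
    logins_by_account = defaultdict(list)
    for account, country, timestamp in events:
        logins_by_account[account].append((timestamp, country))

    flagged = set()
    for account, logins in logins_by_account.items():
        logins.sort()  # chronological order
        start = 0
        for end in range(len(logins)):
            # Shrink the sliding window until it spans at most `window`.
            while logins[end][0] - logins[start][0] > window:
                start += 1
            countries = {country for _, country in logins[start:end + 1]}
            if len(countries) > max_countries:
                flagged.add(account)
                break
    return flagged


if __name__ == "__main__":
    day = datetime(2025, 1, 15)
    sample_events = [
        ("alice", "DE", day.replace(hour=9, minute=0)),
        ("alice", "BR", day.replace(hour=9, minute=20)),
        ("bob", "US", day.replace(hour=9, minute=0)),
        ("bob", "US", day.replace(hour=9, minute=30)),
    ]
    print(sorted(flag_multi_country_logins(sample_events)))
```

A rule like this is deliberately coarse. VPN use and travel can trigger it, so it is better treated as a signal for review than as proof of compromise.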

Learn more by signing up for our free trial.

Sponsored and written by Flare.