ZDNET’s key takeaways

- Nvidia launched new models for autonomous robots, cars, and more.

- Uber will add Nvidia-powered robotaxis to cities as early as 2027.

- More realistic robotics could mean robot characters at Disney World.

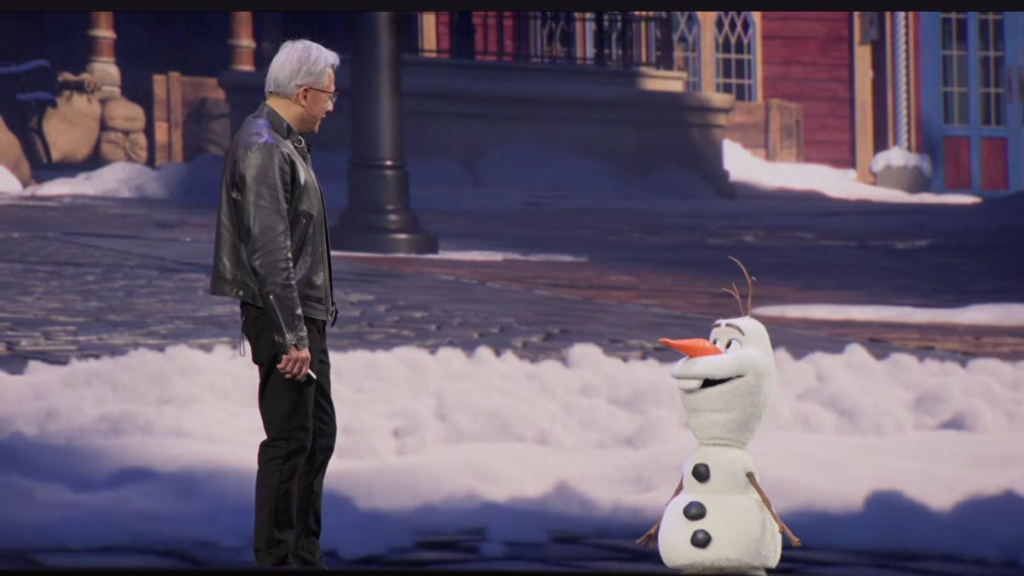

To close out his Nvidia GTC keynote on Monday, CEO Jensen Huang brought out an unexpected guest: a walking, talking robot version of Olaf, the animated snowman from Disney’s Frozen movie. Huang explained to robo-Olaf that he runs on Nvidia’s Jetson platform and learned to walk inside the company’s Omniverse simulator.

Olaf’s responses didn’t always make sense, and the conversation was awkward, but the idea was clear: eventually, robot characters could be wandering around Disneyland using Nvidia’s tech.

Physical AI, meaning AI systems embedded in machines like robots or cars that navigate real-world environments, as opposed to models stuck in the cloud or on your phone, has been gaining steam over the last year and was all over CES this past January. At GTC, Nvidia made several investments in the technology, ranging from new models to support for the data that makes or breaks physical AI systems.

Here’s what’s new.

New models for physical AI

Nvidia launched several new foundation models geared toward improving how robots and vehicles function in the real world. They include Cosmos 3, which generates synthetic worlds to help physical AI navigate complex environments; Isaac GR00T N1.7, an “open reasoning vision language action (VLA) model” built for humanoid robots, which the company says is “commercially viable for real-world deployment”; and Alpamayo 1.5, another reasoning VLA model that gives self-driving vehicles better navigation guidance and prompt specification.

Nvidia called Alpamayo 1.5 “a major upgrade” within its existing autonomous vehicle model family, noting it “takes driving video, ego-motion history, navigation guidance and natural language prompts as inputs.” It turns those inputs into driving trajectories that let developers closely observe a vehicle’s behavior and create safety guardrails through prompts. Nvidia said Alpamayo 1.5 can help take autonomous driving to the next level by making it easier to learn from unpredictable road events, weather conditions, or pedestrian activity.

Currently, Nvidia said, its customers are using Cosmos 3 to train physical AI systems and GR00T N1.7 to “scale humanoid robot deployment.”

Autonomous vehicles

With an image of 110 different robots behind him, Nvidia CEO Jensen Huang described the present moment, saying the “ChatGPT moment of self-driving cars has arrived.”

Nvidia is broadening its partnership with Uber, saying it will “launch a fleet of autonomous vehicles” powered entirely by Nvidia’s Drive AV software in 28 cities across four continents by 2028, with Los Angeles and San Francisco starting earlier, in 2027. Presumably, that means users will be able to book self-driving cars in the Uber app on a much larger scale.

“This DRIVE Hyperion-powered fleet will tap into NVIDIA Alpamayo open models and the NVIDIA Halos operating system to accelerate the development and deployment of safe, scalable robotaxi services worldwide,” the company said in the release.

The company is also adding several automakers, including BYD, Hyundai, Nissan, and Geely, to its robotaxi initiative, which already includes GM, Mercedes, and Toyota. Several of the newly added companies are continuing to use Nvidia’s Drive Hyperion platform, alongside its Alpamayo models, to scale “level 4” vehicle training, the second-highest level of automated driving on the SAE scale (a self-driving car that needs essentially no direction from human passengers within its defined operating conditions).

Edge AI and space computing

Nvidia is also working with T-Mobile and Nokia to speed up physical AI using AI radio access network (AI-RAN) infrastructure in remote areas. The company says this could support real-world data collection for physical AI across unconnected, isolated, or overcrowded zones using (but without disrupting) 5G connectivity.

“By turning the 5G network into a distributed AI computer with T-Mobile and Nokia, we’re creating a scalable blueprint for the world’s edge AI infrastructure,” Huang said in the announcement.

The benefit of edge AI is low latency: Local hubs allow information to move more quickly than when it has to cross the entire internet. Nvidia’s partnership uses T-Mobile’s existing infrastructure to support that for the development of physical AI. The company said utility and operations companies are already using physical AI agents, systems, and digital twins across this infrastructure for use cases like optimizing traffic light timing or fixing transmission lines.

In another announcement, Nvidia also nodded to space computing. The company said its new platforms, including Vera Rubin, are “unlocking a new era of space innovation, bringing AI compute to orbital data centers (ODCs), geospatial intelligence and autonomous space operations.”

What that means in practice: Nvidia is on the way to AI applications that can operate between Earth and space, as well as between one point in space and another. Nvidia said its IGX Thor and Jetson Orin platforms offer the energy-efficient inference and data processing required to do anything in orbit. That is edge AI, functioning as a local hub in space, outside the cloud.

“As we deploy satellite constellations and explore deeper into space, intelligence must live wherever data is generated,” Huang said in the release.

But orbital data centers are still theoretical: not impossible, but not yet a full reality. While Nvidia’s IGX Thor and Jetson Orin platforms are available today, the Vera Rubin Space-1 component of the company’s space initiative, announced today, will be “available at a later date.”

A new ‘factory’ for physical AI data

Physical AI lives in robotics, autonomous vehicles, and other real-world applications, which can mean higher stakes if something goes mechanically or computationally wrong. That problem is best avoided with high-quality training data that prepares physical AI systems for as many situations as possible, so they take safer, more predictable, and more effective action.

To accompany its focus on physical AI, Nvidia also announced its Physical AI Data Factory Blueprint, an “open reference architecture that unifies and automates how training data is generated, augmented and evaluated, reducing the costs, time and complexity of training physical AI systems at scale.”

Set to be available next month on GitHub, Blueprint lets companies use Nvidia’s Cosmos family of world foundation models to process real-world data and generate synthetic data at scale to train physical AI systems. It also supports reinforcement learning and testing processes for autonomous vehicles and other physical AI systems. According to Nvidia, Blueprint ensures datasets are diverse by including synthetic examples of edge cases and other rare scenarios that are harder or more expensive to document in the real world.

While it won’t be broadly available until April, Nvidia said Uber is already using Blueprint to develop autonomous vehicles, and Skild AI is using it for general-purpose robotics.

The big picture

Advancements in physical AI have consumer applications, like Waymo cars and the viral household chore robots you’ve likely come across, but are most immediately relevant to industrial engineering. More capable, autonomous robots will have the biggest impact on our public and industrial landscapes: on roads, in factories, and, evidently, walking across theme parks.