State-sponsored hackers are exploiting highly advanced tooling to accelerate their particular flavours of cyberattack, with threat actors from Iran, North Korea, China, and Russia using models like Google’s Gemini to further their campaigns. They can craft sophisticated phishing campaigns and develop malware, according to a new report from Google’s Threat Intelligence Group (GTIG).

The quarterly AI Threat Tracker report, released today, reveals how government-backed attackers have begun to use artificial intelligence across the attack lifecycle: reconnaissance, social engineering, and, eventually, malware development. This activity became apparent through GTIG’s work during the final quarter of 2025.

“For government-backed threat actors, large language models have become essential tools for technical research, targeting, and the rapid generation of nuanced phishing lures,” GTIG researchers said in their report.

Reconnaissance by state-sponsored hackers targets the defence sector

Iranian threat actor APT42 reportedly used Gemini to enhance its reconnaissance and targeted social engineering operations. The group used AI to create official-looking email addresses for specific entities and then conducted research to establish credible pretexts for approaching targets.

APT42 crafted personas and scenarios designed to better elicit engagement from its targets, translating between languages and deploying natural, native phrasing that helped it get around traditional phishing red flags, such as poor grammar or awkward syntax.

North Korean government-backed actor UNC2970, which focuses on defence targeting and impersonating corporate recruiters, used Gemini to help it profile high-value targets. The group’s reconnaissance included searching for information on major cybersecurity and defence companies, mapping specific technical job roles, and gathering salary information.

“This activity blurs the distinction between routine professional research and malicious reconnaissance, as the actor gathers the necessary components to create tailored, high-fidelity phishing personas,” GTIG noted.

Model extraction attacks surge

Beyond operational misuse, Google DeepMind and GTIG identified an increase in model extraction attempts, also known as “distillation attacks”, aimed at stealing intellectual property from AI models.

One campaign targeting Gemini’s reasoning abilities involved collecting and using over 100,000 prompts designed to coerce the model into outputting its reasoning processes. The breadth of the questions suggested an attempt to replicate Gemini’s reasoning ability in non-English target languages across a range of tasks.

While GTIG observed no direct attacks on frontier models from advanced persistent threat (APT) actors, the team identified and disrupted frequent model extraction attacks from private-sector entities around the world and from researchers seeking to clone proprietary logic.

Google’s systems recognised these attacks in real time and deployed defences to protect internal reasoning traces.

AI-integrated malware emerges

GTIG observed malware samples, tracked as HONESTCUE, that use Gemini’s API to outsource the generation of their functionality. The malware is designed to undermine traditional network-based detection and static analysis through a multi-layered obfuscation approach.

HONESTCUE functions as a downloader and launcher framework that sends prompts through Gemini’s API and receives C# source code in response. The fileless second stage compiles and executes payloads directly in memory, leaving no artefacts on disk.

Separately, GTIG identified COINBAIT, a phishing kit whose construction was likely accelerated by AI code generation tools. The kit, which masquerades as a major cryptocurrency exchange to harvest credentials, was built using the AI-powered platform Lovable AI.

ClickFix campaigns abuse AI chat platforms

In a novel social engineering campaign first seen in December 2025, Google observed threat actors abusing the public sharing features of generative AI services, including Gemini, ChatGPT, Copilot, DeepSeek, and Grok, to host deceptive content that distributes ATOMIC malware targeting macOS systems.

Attackers manipulated AI models into producing realistic-looking instructions for common computer tasks, embedding malicious command-line scripts as the “solution.” By creating shareable links to these AI chat transcripts, the threat actors used trusted domains to host the initial stage of their attack.

Underground market thrives on stolen API keys

GTIG’s observations of English- and Russian-language underground forums indicate persistent demand for AI-enabled tools and services. However, state-sponsored hackers and cybercriminals struggle to develop custom AI models, relying instead on mature commercial products accessed through stolen credentials.

One toolkit, “Xanthorox,” marketed itself as a custom AI for autonomous malware generation and phishing campaign development. GTIG’s investigation revealed that Xanthorox was not a bespoke model but was actually powered by several commercial AI products, including Gemini, accessed through stolen API keys.

Google’s response and mitigations

Google has taken action against identified threat actors by disabling accounts and assets associated with malicious activity. The company has also applied this intelligence to strengthen both its classifiers and its models, enabling them to refuse assistance with similar attacks going forward.

“We are committed to developing AI boldly and responsibly, which means taking proactive steps to disrupt malicious activity by disabling the projects and accounts associated with bad actors, while continuously improving our models to make them less susceptible to misuse,” the report said.

GTIG emphasised that, despite these developments, no APT or information operations actors have achieved breakthrough capabilities that fundamentally alter the threat landscape.

The findings underscore the evolving role of AI in cybersecurity, as both defenders and attackers race to harness the technology’s capabilities.

For enterprise security teams, particularly in the Asia-Pacific region, where Chinese and North Korean state-sponsored hackers remain active, the report is an important reminder to strengthen defences against AI-augmented social engineering and reconnaissance operations.

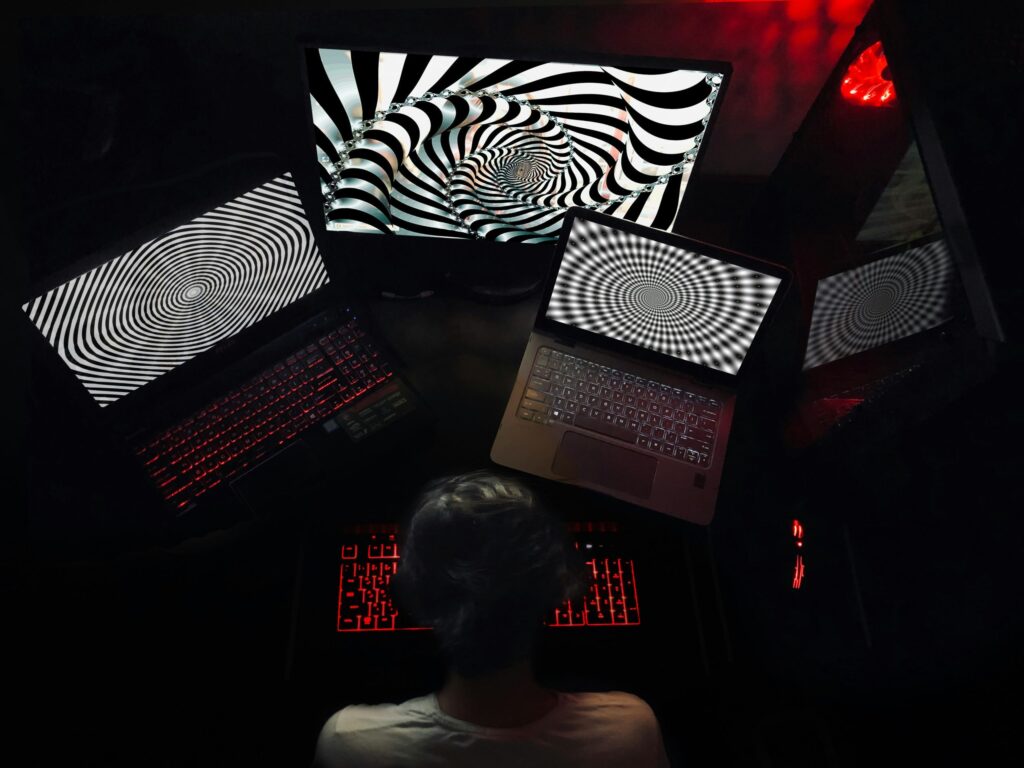

(Image by SCARECROW artworks)

AI News is powered by TechForge Media.