
# Introduction

Getting labeled data (that is, data with ground-truth target labels) is a fundamental step for building most supervised machine learning models like random forests, logistic regression, or neural network-based classifiers. Even though one major challenge in many real-world applications lies in obtaining a sufficient amount of labeled data, there are times when, even after having checked that box, there might still be one more important challenge: class imbalance.

Class imbalance occurs when a labeled dataset contains classes with very disparate numbers of observations, usually with one or more classes vastly underrepresented. This issue often gives rise to problems when building a machine learning model. Put another way, training a predictive model like a classifier on imbalanced data yields issues like biased decision boundaries, poor recall on the minority class, and misleadingly high accuracy, which in practice means the model performs well “on paper” but, once deployed, fails in the critical cases we care about most. Fraud detection in bank transactions is a clear example of this: transaction datasets are extremely imbalanced because about 99% of transactions are legitimate.
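To make the “misleadingly high accuracy” point concrete, here is a minimal sketch. The labels are made up for illustration (990 legitimate and 10 fraudulent transactions, mirroring the 99% figure above), and the “model” is a hypothetical one that always predicts the majority class.

```python
import numpy as np
from sklearn.metrics import accuracy_score, recall_score

# Hypothetical labels: 990 legitimate (0) and 10 fraudulent (1) transactions
y_true = np.array([0] * 990 + [1] * 10)

# A "model" that ignores its input and always predicts the majority class
y_pred = np.zeros_like(y_true)

accuracy = accuracy_score(y_true, y_pred)
fraud_recall = recall_score(y_true, y_pred, zero_division=0)

print(f"Accuracy: {accuracy:.2f}")             # Accuracy: 0.99
print(f"Recall on fraud: {fraud_recall:.2f}")  # Recall on fraud: 0.00
```

The accuracy looks excellent, yet not a single fraudulent transaction is caught.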

Synthetic Minority Over-sampling Technique (SMOTE) is a data-focused resampling technique that tackles this issue by synthetically generating new samples belonging to the minority class, e.g. fraudulent transactions, via interpolation between existing real instances.

This article briefly introduces SMOTE and then focuses on explaining how to apply it correctly, why it is often used incorrectly, and how to avoid these situations.

# What SMOTE is and How it Works

SMOTE is a data augmentation technique for addressing class imbalance problems in machine learning, specifically in supervised models like classifiers. In classification, when at least one class is significantly under-represented compared to others, the model can easily become biased toward the majority class, leading to poor performance, especially when it comes to predicting the rare class.

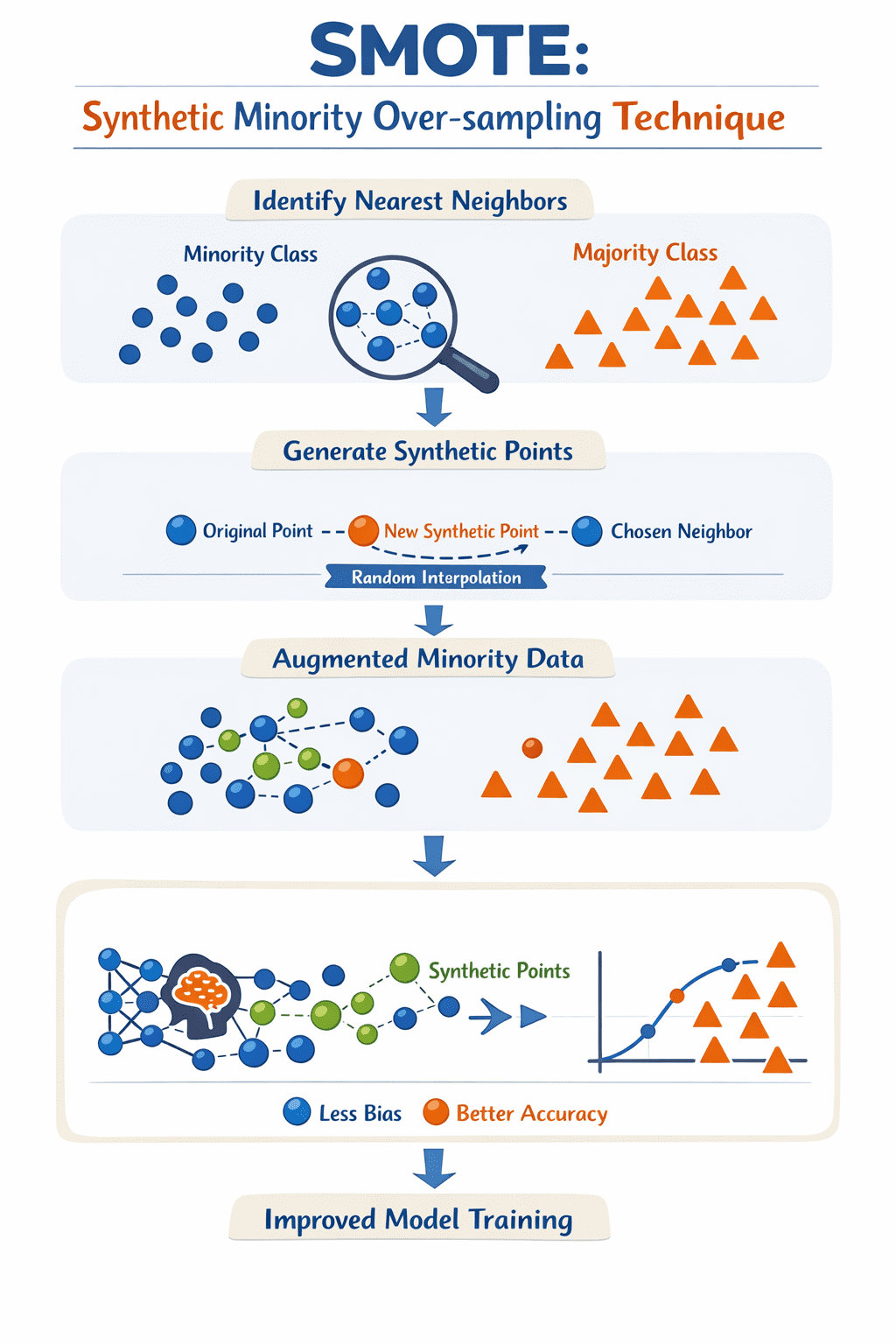

To handle this challenge, SMOTE creates synthetic data examples for the minority class, not by simply replicating existing instances as they are, but by interpolating between a sample from the minority class and its nearest neighbors in the space of available features. In essence, this process “fills in” gaps in the regions where existing minority instances lie, thus helping balance the dataset as a result.

SMOTE iterates over each minority example, identifies its k nearest neighbors, and then generates a new synthetic point along the “line” between the sample and a randomly chosen neighbor. The result of applying these simple steps iteratively is a new set of minority class examples, so that the model is trained on a richer representation of the minority class(es) in the dataset, resulting in a more effective, less biased model.

How SMOTE works | Image by Author
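The interpolation step can be sketched in a few lines of NumPy. This is an illustrative simplification, not the imbalanced-learn implementation: the function name `smote_sketch`, its parameters, and the toy data are all assumptions made for this example.

```python
import numpy as np
from sklearn.neighbors import NearestNeighbors


def smote_sketch(X_minority, n_new, k=5, seed=0):
    """Create n_new synthetic points by interpolating between minority samples."""
    rng = np.random.default_rng(seed)
    # Ask for k + 1 neighbors because each point is returned as its own nearest neighbor
    nn = NearestNeighbors(n_neighbors=k + 1).fit(X_minority)
    neighbor_idx = nn.kneighbors(X_minority, return_distance=False)[:, 1:]

    synthetic = np.empty((n_new, X_minority.shape[1]))
    for i in range(n_new):
        base = i % len(X_minority)                 # cycle over the minority samples
        neighbor = rng.choice(neighbor_idx[base])  # pick one of its k neighbors at random
        gap = rng.random()                         # position along the segment, in [0, 1)
        synthetic[i] = X_minority[base] + gap * (X_minority[neighbor] - X_minority[base])
    return synthetic


# Hypothetical 2-D minority class with 20 points
rng = np.random.default_rng(42)
X_min = rng.normal(loc=[2.0, 2.0], scale=0.5, size=(20, 2))
X_new = smote_sketch(X_min, n_new=30, k=5)
print(X_new.shape)  # (30, 2)
```

Because every synthetic point is a convex combination of two real minority points, it always lies on the segment joining them. This is also why SMOTE can place points in regions where no real data exists when those two points sit on opposite sides of another class.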

# Implementing SMOTE Correctly in Python

To avoid data leakage, where synthetic samples end up influencing the evaluation data, it is best to use a pipeline. The imbalanced-learn library provides a pipeline object that ensures SMOTE is only applied to the training data during each fold of a cross-validation or during a simple hold-out split, leaving the test set untouched and representative of real-world data.

The following example demonstrates how to integrate SMOTE into a machine learning workflow using scikit-learn and imblearn:

```python
from imblearn.over_sampling import SMOTE
from imblearn.pipeline import Pipeline
from sklearn.model_selection import train_test_split
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import classification_report

# X and y are assumed to be an existing feature matrix and label vector

# Split data into training and testing sets first
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3, random_state=42)

# Define the pipeline: resampling, then modeling
# The imblearn Pipeline only applies SMOTE to the training data
pipeline = Pipeline([
    ('smote', SMOTE(random_state=42)),
    ('classifier', RandomForestClassifier(random_state=42))
])

# Fit the pipeline on training data
pipeline.fit(X_train, y_train)

# Evaluate on the untouched test data
y_pred = pipeline.predict(X_test)
print(classification_report(y_test, y_pred))
```

By using the Pipeline, you ensure that the transformation happens within the training context only. This prevents synthetic information from “bleeding” into your evaluation set, providing a much more honest assessment of how your model will handle imbalanced classes in production.

# Common Misuses of SMOTE

Let’s look at three common ways SMOTE is misused in machine learning workflows, and how to avoid these improper uses:

- Applying SMOTE before partitioning the dataset into training and test sets: This is a very common error that inexperienced data scientists may frequently (and sometimes accidentally) make. SMOTE generates new synthetic examples based on all the available data, and injecting synthetic points into what will later be both the training and test partitions is the “not-so-perfect” recipe for unrealistically inflating model evaluation metrics. The right approach is simple: split the data first, then apply SMOTE only on the training set. Thinking of applying k-fold cross-validation as well? Even better, as long as SMOTE is applied only to the training portion of each fold.

- Over-balancing: Blindly resampling until there is an exact match among class proportions is another common error. In many cases, achieving that perfect balance is not only unnecessary but can also be counterproductive and unrealistic given the domain or class structure. This is particularly true in multiclass datasets with several sparse minority classes, where SMOTE may end up creating synthetic examples that cross class boundaries or lie in regions where no real data examples are found: in other words, noise may be inadvertently introduced, with possible undesired consequences like model overfitting. The general approach is to act gently and try training your model with subtle, incremental increases in minority class proportions.

- Ignoring the context around metrics and models: The overall accuracy of a model is an easy and interpretable metric to obtain, but it can also be a misleading, “hollow” metric that does not reflect your model’s inability to detect cases of the minority class. This is a critical issue in high-stakes domains like banking and healthcare, with scenarios like the detection of rare diseases. Meanwhile, SMOTE can help improve metrics like recall, but it can decrease its counterpart, precision, by introducing noisy synthetic samples, which may misalign with business goals. To properly evaluate not only your model but also SMOTE’s contribution to its performance, jointly focus on metrics like recall, F1-score, Matthews correlation coefficient (MCC, a “summary” of the whole confusion matrix), or precision-recall area under the curve (PR-AUC). Likewise, consider alternative strategies like class weighting or threshold tuning alongside SMOTE to further enhance effectiveness.

# Concluding Remarks

This article revolved around SMOTE: a commonly used technique to address class imbalance when building machine learning classifiers based on real-world datasets. We identified some common misuses of this technique and offered practical advice to help avoid them.

Iván Palomares Carrascosa is a leader, writer, speaker, and adviser in AI, machine learning, deep learning & LLMs. He trains and guides others in harnessing AI in the real world.