“Vibe coding”, using AI models to help write code, has become part of everyday development for a lot of teams. It can be a huge time-saver, but it can also lead to over-trusting AI-generated code, which creates room for security vulnerabilities to be introduced.

Intruder’s experience serves as a real-world case study in how AI-generated code can impact security. Here’s what happened and what other organizations should watch for.

When We Let AI Help Build a Honeypot

To deliver our Rapid Response service, we set up honeypots designed to collect early-stage exploitation attempts. For one of them, we couldn’t find an open-source option that did exactly what we wanted, so we did what plenty of teams do these days: we used AI to help draft a proof-of-concept.

It was deployed as deliberately vulnerable infrastructure in an isolated environment, but we still gave the code a quick sanity check before rolling it out.

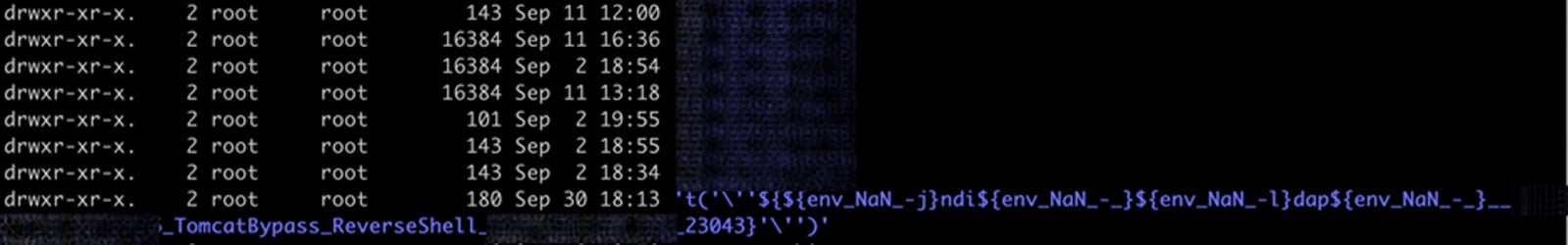

A few weeks later, something odd started showing up in the logs. Files that should have been saved under attacker IP addresses were appearing under payload strings instead, which made it clear that user input was ending up somewhere we didn’t intend.

The Vulnerability We Didn’t See Coming

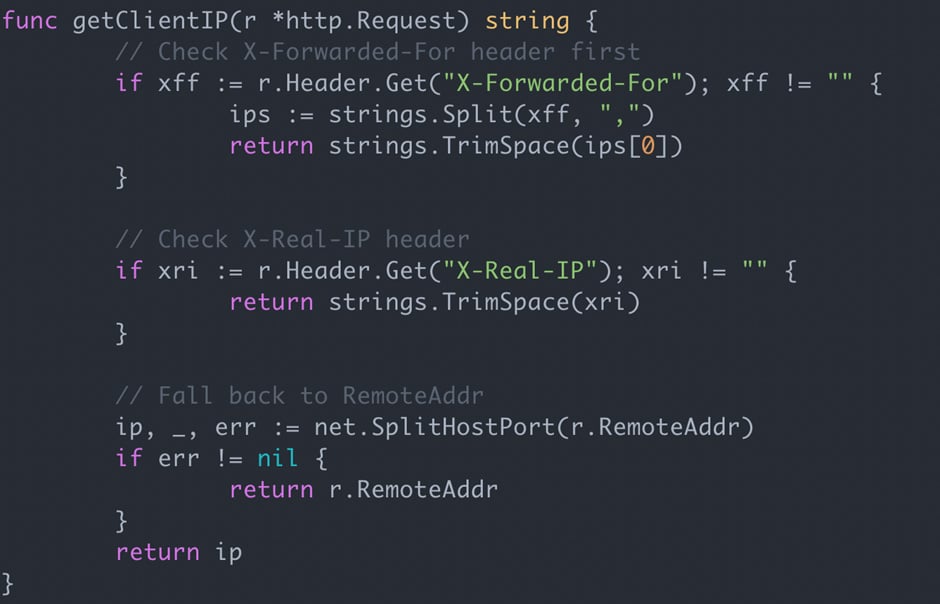

A closer inspection of the code showed what was happening. The AI had added logic that read client-supplied IP headers and treated their contents as the visitor’s IP address.

That is only safe if the headers come from a proxy you control. Otherwise, the client effectively controls them.

This means a site visitor can easily spoof their IP address or use the header to inject payloads. It is a vulnerability we frequently find in penetration tests.

In our case, the attacker had simply placed their payload in the header, which explained the unusual directory names. The impact here was low and there was no sign of a full exploit chain, but it did give the attacker some influence over how the program behaved.

It could have been much worse. Had we been using the IP address in a different way, the same mistake could easily have led to Local File Disclosure or Server-Side Request Forgery.


Why SAST Missed It

We ran Semgrep OSS and Gosec on the code. Neither flagged the vulnerability, although Semgrep did report a few unrelated improvements. That isn’t a failure of those tools; it’s a limitation of static analysis.

Detecting this particular flaw requires contextual understanding. A tool would need to recognize that the client-supplied IP headers were being used without validation and that no trust boundary was enforced.

It’s the kind of nuance that’s obvious to a human pentester, but easily missed when reviewers place a little too much confidence in AI-generated code.

AI Automation Complacency

There’s a well-documented idea from aviation that supervising automation takes more cognitive effort than performing the task manually. The same effect seemed to show up here.

Because the code wasn’t ours in the strict sense (we didn’t write the lines ourselves), our mental model of how it worked was weaker, and the review suffered.

The comparison to aviation ends there, though. Autopilot systems have decades of safety engineering behind them, while AI-generated code does not. There isn’t yet an established safety margin to fall back on.

This Wasn’t an Isolated Case

This wasn’t the only case where AI confidently produced insecure results. We used the Gemini reasoning model to help generate custom IAM roles for AWS, and those roles turned out to be vulnerable to privilege escalation. Even after we pointed out the issue, the model politely agreed and then produced another vulnerable role.

It took four rounds of iteration to arrive at a safe configuration. At no point did the model independently recognize the security problem; it needed human steering the entire way.
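We aren’t reproducing the roles Gemini generated here. Still, a small automated check can catch the most obvious escalation paths before a human review. The following Go sketch is a hypothetical helper, not part of our pipeline. `flagRisky` parses a policy document and reports `Allow`ed actions that match a short, non-exhaustive list of actions commonly cited as privilege-escalation vectors, such as `iam:PassRole` and `iam:CreatePolicyVersion`.

The check has clear limits. It only understands trailing `*` wildcards, and it ignores `Resource`, `Condition` and `NotAction`. It complements human review rather than replacing it.

```go
package main

import (
	"encoding/json"
	"fmt"
	"strings"
)

// riskyActions is a small, non-exhaustive sample of IAM actions (lowercase,
// since IAM action names are case-insensitive) often abused for escalation.
var riskyActions = []string{
	"iam:createpolicyversion",
	"iam:setdefaultpolicyversion",
	"iam:attachrolepolicy",
	"iam:attachuserpolicy",
	"iam:putrolepolicy",
	"iam:putuserpolicy",
	"iam:passrole",
	"iam:updateassumerolepolicy",
	"iam:createaccesskey",
}

type statement struct {
	Effect string          `json:"Effect"`
	Action json.RawMessage `json:"Action"`
}

type policy struct {
	Statement json.RawMessage `json:"Statement"`
}

// oneOrMany decodes a value that IAM allows to be a single item or an array
// (this applies to both Statement and Action).
func oneOrMany[T any](raw json.RawMessage) []T {
	var many []T
	if json.Unmarshal(raw, &many) == nil {
		return many
	}
	var one T
	if json.Unmarshal(raw, &one) == nil {
		return []T{one}
	}
	return nil
}

// isRisky matches exact names and trailing wildcards such as "iam:Put*" or "*".
func isRisky(action string) bool {
	a := strings.ToLower(action)
	for _, r := range riskyActions {
		if a == r {
			return true
		}
		if strings.HasSuffix(a, "*") && strings.HasPrefix(r, strings.TrimSuffix(a, "*")) {
			return true
		}
	}
	return false
}

// flagRisky returns every Allow-ed action in the policy that isRisky matches.
func flagRisky(doc []byte) ([]string, error) {
	var p policy
	if err := json.Unmarshal(doc, &p); err != nil {
		return nil, err
	}
	var found []string
	for _, s := range oneOrMany[statement](p.Statement) {
		if s.Effect != "Allow" {
			continue
		}
		for _, a := range oneOrMany[string](s.Action) {
			if isRisky(a) {
				found = append(found, a)
			}
		}
	}
	return found, nil
}

func main() {
	doc := []byte(`{
  "Version": "2012-10-17",
  "Statement": [
    {"Effect": "Allow", "Action": ["s3:GetObject", "iam:Put*"], "Resource": "*"},
    {"Effect": "Allow", "Action": "iam:PassRole", "Resource": "*"}
  ]
}`)
	found, err := flagRisky(doc)
	fmt.Println(found, err) // [iam:Put* iam:PassRole] <nil>
}
```

A check like this could run in CI against every generated policy. A hit means a human looks at the role before it ships.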

Experienced engineers will usually catch these issues. But AI-assisted development tools are making it easier for people without security backgrounds to produce code, and recent research has already found thousands of vulnerabilities introduced by such platforms.

Yet as we’ve shown, even experienced developers and security professionals can overlook flaws when the code comes from an AI model that seems confident and behaves correctly at first glance. End users have no way to tell whether the software they rely on contains AI-generated code, which places the responsibility firmly on the organizations shipping it.

Takeaways for Teams Using AI

At a minimum, we don’t recommend letting non-developers or non-security staff rely on AI to write code.

If your organization does allow experts to use these tools, it’s worth revisiting your code review process and CI/CD detection capabilities to make sure this new class of issues doesn’t slip through.

We expect AI-introduced vulnerabilities to become more common over time.

Few organizations will openly admit when an issue came from their use of AI, so the scale of the problem is likely larger than what’s reported. Ours won’t be the last example, and we doubt it’s an isolated one.

Book a demo to see how Intruder uncovers exposures before they become breaches.

Author

Sam Pizzey is a Security Engineer at Intruder. Previously a pentester a little too obsessed with reverse engineering, he is currently focused on ways to detect application vulnerabilities remotely at scale.

Sponsored and written by Intruder.