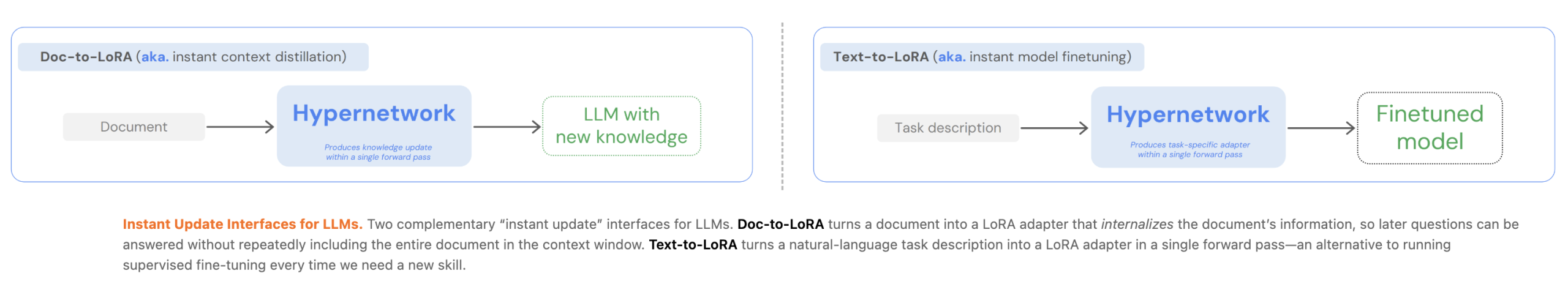

Customizing Large Language Models (LLMs) currently presents a significant engineering trade-off between the flexibility of In-Context Learning (ICL) and the efficiency of Context Distillation (CD) or Supervised Fine-Tuning (SFT). Tokyo-based Sakana AI has proposed a new approach to bypass these constraints through cost amortization. In two recent papers, they introduced Text-to-LoRA (T2L) and Doc-to-LoRA (D2L), lightweight hypernetworks that meta-learn to generate Low-Rank Adaptation (LoRA) matrices in a single forward pass.

The Engineering Bottleneck: Latency vs. Memory

For AI developers, the primary limitation of standard LLM adaptation is computational overhead:

- In-Context Learning (ICL): While convenient, ICL suffers from quadratic attention costs and linear KV-cache growth, which increase latency and memory consumption as prompts lengthen.

- Context Distillation (CD): CD transfers information into model parameters, but per-prompt distillation is often impractical due to high training costs and update latency.

- SFT: Requires task-specific datasets and expensive re-training if the information changes.

Sakana AI’s methods amortize these costs by paying a one-time meta-training fee. Once trained, the hypernetwork can instantly adapt the base LLM to new tasks or documents without additional backpropagation.

Text-to-LoRA (T2L): Adaptation via Natural Language

Text-to-LoRA (T2L) is a hypernetwork designed to adapt LLMs on the fly using only a natural language description of a task.

Architecture and Training

T2L uses a task encoder to extract vector representations from text descriptions. This representation, combined with learnable module and layer embeddings, is processed through a series of MLP blocks to generate the A and B low-rank matrices for the target LLM.
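The data flow described above can be sketched in a few lines of NumPy. This is a minimal illustration, not Sakana AI's implementation: the dimensions, the single ReLU block, the two output heads, and the names `generate_lora` and `generate_all` are all assumptions made for the example, and the random vector `task_vec` stands in for the output of a real task encoder.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes, chosen only for illustration.
TASK_DIM = 32    # size of the task-description embedding
EMB_DIM = 8      # size of each learnable module/layer embedding
HIDDEN = 64      # hidden width of the MLP
D_MODEL = 16     # input/output width of the adapted linear layer
RANK = 4         # LoRA rank
N_LAYERS = 3
MODULES = ("q_proj", "v_proj")

# Learnable embeddings (randomly initialised here; trained in practice).
layer_emb = rng.normal(size=(N_LAYERS, EMB_DIM))
module_emb = {m: rng.normal(size=EMB_DIM) for m in MODULES}

# MLP weights: one shared trunk and two output heads, one each for A and B.
in_dim = TASK_DIM + 2 * EMB_DIM
W1 = rng.normal(scale=0.1, size=(in_dim, HIDDEN))
W_A = rng.normal(scale=0.1, size=(HIDDEN, RANK * D_MODEL))
W_B = rng.normal(scale=0.1, size=(HIDDEN, D_MODEL * RANK))


def generate_lora(task_vec, layer, module):
    """Map (task embedding, layer, module) to one pair of LoRA matrices."""
    x = np.concatenate([task_vec, layer_emb[layer], module_emb[module]])
    h = np.maximum(x @ W1, 0.0)             # a single ReLU MLP block
    A = (h @ W_A).reshape(RANK, D_MODEL)    # down-projection
    B = (h @ W_B).reshape(D_MODEL, RANK)    # up-projection
    return A, B


def generate_all(task_vec):
    """Query every (layer, module) slot to assemble a full adapter."""
    return {
        (layer, m): generate_lora(task_vec, layer, m)
        for layer in range(N_LAYERS)
        for m in MODULES
    }


task_vec = rng.normal(size=TASK_DIM)   # stand-in for the task encoder output
adapter = generate_all(task_vec)
A, B = adapter[(0, "q_proj")]
delta_W = B @ A                        # low-rank update added to a frozen weight
```

Conditioning on layer and module embeddings lets one small network emit every adapter matrix, so the hypernetwork's parameter count does not grow with the number of adapted layers.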

The system can be trained via two primary schemes:

- LoRA Reconstruction: Distilling existing, pre-trained LoRA adapters into the hypernetwork.

- Supervised Fine-Tuning (SFT): Optimizing the hypernetwork end-to-end on multi-task datasets.
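The difference between the two schemes is mainly what the loss is computed against. The sketch below contrasts them on toy data; the mean-absolute-error reconstruction loss and the toy linear classifier are assumptions for illustration, and the papers' exact objectives may differ.

```python
import numpy as np


def reconstruction_loss(generated, target):
    """Distance between generated LoRA matrices and a pre-trained adapter."""
    (A_g, B_g), (A_t, B_t) = generated, target
    return (np.abs(A_g - A_t).mean() + np.abs(B_g - B_t).mean()) / 2


def sft_loss(W0, generated, x, labels):
    """Cross-entropy of a toy linear model whose frozen weight W0 is
    adapted by the generated LoRA. No target adapter is needed."""
    A, B = generated
    logits = x @ (W0 + B @ A).T
    logits = logits - logits.max(axis=1, keepdims=True)   # numerical stability
    log_probs = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    return -log_probs[np.arange(len(labels)), labels].mean()


rng = np.random.default_rng(0)
d, r, n = 8, 2, 5                       # hypothetical width, rank, batch size
generated = (rng.normal(size=(r, d)), rng.normal(size=(d, r)))
target = (rng.normal(size=(r, d)), rng.normal(size=(d, r)))
W0 = rng.normal(size=(d, d))
x = rng.normal(size=(n, d))
labels = rng.integers(0, d, size=n)

loss_recon = reconstruction_loss(generated, target)
loss_sft = sft_loss(W0, generated, x, labels)
```

In the reconstruction scheme the gradient pulls the hypernetwork toward specific adapter weights; in the SFT scheme it flows from the downstream task loss through the generated matrices, so any adapter that solves the task is acceptable.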

The research indicates that SFT-trained T2L generalizes better to unseen tasks because it implicitly learns to cluster related functionalities in weight space. In benchmarks, T2L matched or outperformed task-specific adapters on tasks like GSM8K and Arc-Challenge, while reducing adaptation costs by over 4x compared to 3-shot ICL.

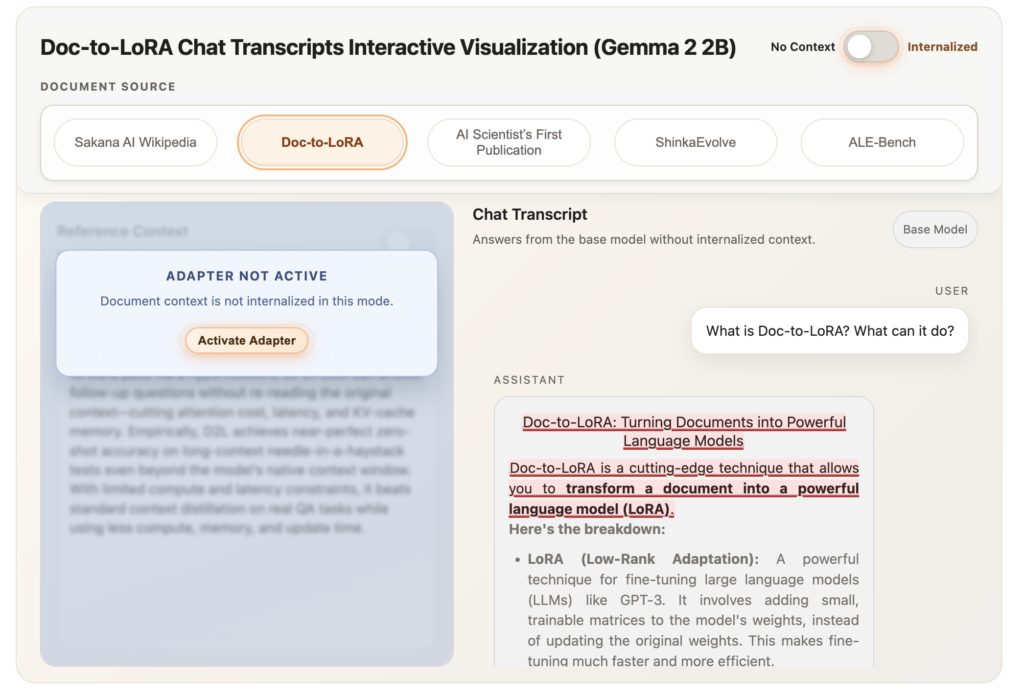

Doc-to-LoRA (D2L): Internalizing Context

Doc-to-LoRA (D2L) extends this concept to document internalization. It allows an LLM to answer subsequent queries about a document without re-consuming the original context, effectively removing the document from the active context window.

Perceiver-Based Design

D2L uses a Perceiver-style cross-attention architecture. It maps variable-length token activations (Z) from the base LLM into a fixed-shape LoRA adapter.
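The reason cross-attention yields a fixed-shape output is that the queries come from a fixed set of learned latent vectors rather than from the input. A minimal single-head NumPy sketch follows; the sizes, the choice of one latent per LoRA rank row, and the name `perceiver_to_lora` are assumptions for illustration, not details from the paper.

```python
import numpy as np

rng = np.random.default_rng(0)

D = 16       # activation width (hypothetical)
RANK = 4     # number of latent queries; one per LoRA rank row in this sketch

latents = rng.normal(size=(RANK, D))          # learned queries, fixed count
W_q = rng.normal(scale=0.1, size=(D, D))
W_k = rng.normal(scale=0.1, size=(D, D))
W_v = rng.normal(scale=0.1, size=(D, D))
W_A = rng.normal(scale=0.1, size=(D, D))      # head producing rows of A
W_B = rng.normal(scale=0.1, size=(D, D))      # head producing columns of B


def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def perceiver_to_lora(Z):
    """Map activations Z of shape (seq_len, D) to fixed-shape (A, B)."""
    Q = latents @ W_q                          # (RANK, D)
    K = Z @ W_k                                # (seq_len, D)
    V = Z @ W_v                                # (seq_len, D)
    attn = softmax(Q @ K.T / np.sqrt(D))       # (RANK, seq_len)
    H = attn @ V                               # (RANK, D), seq_len is summed out
    A = H @ W_A                                # (RANK, D)
    B = (H @ W_B).T                            # (D, RANK)
    return A, B


A_short, B_short = perceiver_to_lora(rng.normal(size=(10, D)))
A_long, B_long = perceiver_to_lora(rng.normal(size=(500, D)))
```

Both calls return matrices of identical shape even though the inputs differ in length by 50x, because the sequence dimension only appears inside the attention-weighted sum.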

To handle documents exceeding the training length, D2L employs a chunking mechanism. Long contexts are partitioned into K contiguous chunks, each processed independently to produce per-chunk adapters. These are then concatenated along the rank dimension, allowing D2L to generate higher-rank LoRAs for longer inputs without changing the hypernetwork’s output shape.
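Concatenating along the rank dimension is equivalent to summing the per-chunk weight updates, which the following NumPy sketch verifies. The function `toy_hypernet` is a hypothetical stand-in for the real hypernetwork (it just projects the mean activation), and all sizes are assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)
D, RANK, K = 8, 2, 3     # hypothetical width, per-chunk rank, chunk count

# Fixed random projections so the stand-in hypernetwork is deterministic.
P_A = rng.normal(size=(D, RANK * D))
P_B = rng.normal(size=(D, D * RANK))


def toy_hypernet(chunk):
    """Stand-in for D2L: always returns a rank-RANK adapter for one chunk."""
    pooled = chunk.mean(axis=0)
    A = (pooled @ P_A).reshape(RANK, D)
    B = (pooled @ P_B).reshape(D, RANK)
    return A, B


def internalize(Z, num_chunks):
    """Split Z into contiguous chunks and stack the per-chunk adapters."""
    adapters = [toy_hypernet(c) for c in np.array_split(Z, num_chunks, axis=0)]
    A_cat = np.concatenate([A for A, _ in adapters], axis=0)   # (K*RANK, D)
    B_cat = np.concatenate([B for _, B in adapters], axis=1)   # (D, K*RANK)
    return A_cat, B_cat, adapters


Z = rng.normal(size=(30, D))             # activations of a "long" document
A_cat, B_cat, adapters = internalize(Z, K)

# The stacked adapter applies the sum of the per-chunk updates.
delta_cat = B_cat @ A_cat
delta_sum = sum(B @ A for A, B in adapters)
```

Each hypernetwork call still emits a rank-`RANK` adapter; only the stacked result grows, to rank `K * RANK`, which is why longer inputs need no architectural change.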

Performance and Memory Efficiency

On a Needle-in-a-Haystack (NIAH) retrieval task, D2L maintained near-perfect zero-shot accuracy on context lengths exceeding the base model’s native window by more than 4x.

- Memory Impact: For a 128K-token document, a base model requires over 12 GB of VRAM for the KV cache. Internalized D2L models handled the same document using less than 50 MB.

- Update Latency: D2L internalizes information in sub-second regimes (<1s), whereas traditional CD can take between 40 and 100 seconds.
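The memory gap follows from how each quantity scales: a KV cache grows linearly with the number of tokens, while a LoRA adapter's size depends only on its rank and the shapes of the adapted layers. The back-of-the-envelope calculation below uses an illustrative model configuration and adapter layout; these numbers are assumptions, not the setup reported in the papers.

```python
def kv_cache_bytes(seq_len, n_layers, n_kv_heads, head_dim, bytes_per_value=2):
    """Keys and values stored for every token, layer, and KV head."""
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * bytes_per_value


def lora_bytes(rank, n_layers, module_dims, bytes_per_value=2):
    """A is (rank, d_in) and B is (d_out, rank) for each adapted module."""
    per_layer = sum(rank * (d_in + d_out) for d_in, d_out in module_dims)
    return n_layers * per_layer * bytes_per_value


# Illustrative configuration (assumed): 26 layers, 4 KV heads of width 256,
# 16-bit values, and a 128K-token document.
SEQ_LEN = 128 * 1024
kv = kv_cache_bytes(SEQ_LEN, n_layers=26, n_kv_heads=4, head_dim=256)

# Assumed adapter: rank 8 on one 2304 -> 9216 projection per layer.
lora = lora_bytes(rank=8, n_layers=26, module_dims=[(2304, 9216)])

print(f"KV cache: {kv / 1e9:.2f} GB")
print(f"LoRA adapter: {lora / 1e6:.2f} MB")
```

Under these assumptions the KV cache lands in the tens-of-gigabytes range and the adapter in the single-digit-megabyte range. With chunking, the adapter's rank grows with document length, so the adapter is not strictly constant in size, but it grows from a far smaller base.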

Cross-Modal Transfer

A significant finding in the D2L research is the ability to perform zero-shot internalization of visual information. By using a Vision-Language Model (VLM) as the context encoder, D2L mapped visual activations into a text-only LLM’s parameters. This allowed the text model to classify images from the Imagenette dataset with 75.03% accuracy, despite never seeing image data during its primary training.

Key Takeaways

- Amortized Customization via Hypernetworks: Both methods use lightweight hypernetworks to meta-learn the adaptation process, paying a one-time meta-training cost to enable sub-second generation of LoRA adapters for new tasks or documents.

- Significant Memory and Latency Reduction: Doc-to-LoRA internalizes context into parameters, reducing KV-cache memory consumption from over 12 GB to less than 50 MB for long documents and lowering update latency from the 40 to 100 seconds of traditional CD to less than a second.

- Effective Long-Context Generalization: Using a Perceiver-based architecture and a chunking mechanism, Doc-to-LoRA can internalize information at sequence lengths more than 4x the native context window of the base LLM with near-perfect accuracy.

- Zero-Shot Task Adaptation: Text-to-LoRA can generate specialized LoRA adapters for entirely unseen tasks based solely on a natural language description, matching or exceeding the performance of task-specific ‘oracle’ adapters.

- Cross-Modal Knowledge Transfer: The Doc-to-LoRA architecture enables zero-shot internalization of visual information from a Vision-Language Model (VLM) into a text-only LLM, allowing the latter to classify images with high accuracy without having seen pixel data during its primary training.
