Anthropic’s Claude Mythos Preview has dominated security discussions since its April 7 announcement. Early reporting describes a powerful cybersecurity-focused AI model able to discover vulnerabilities at scale, raising critical questions about how quickly organizations can validate, prioritize, and remediate what it finds.

The debate that followed has mostly centered on the right questions: Is this a step-change or an incremental advance? Does restricting access to Microsoft, Apple, AWS, and JPMorgan actually reduce risk, or does it just concentrate defensive advantage among the already-well-defended? What happens when adversaries (state actors, criminal enterprises) build equivalent capability?

These are important. But there’s a quieter operational problem that’s getting less airtime, and it’s the one that will actually determine whether most organizations survive this shift.

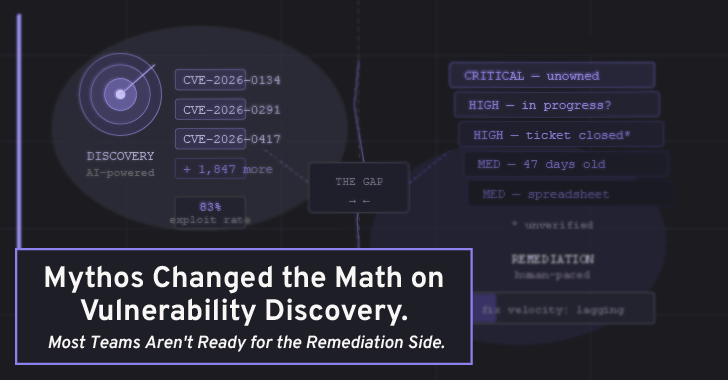

The Discovery-to-Remediation Gap

The Mythos announcement, and the broader AI security conversation it kicked off, is fundamentally about finding vulnerabilities faster. That’s valuable. But finding a vulnerability and fixing it are two entirely different workflows, and the gap between them is where most security programs quietly bleed out. That’s exactly the gap PlexTrac was built to close.

Consider what typically happens after a penetration test or a vulnerability scan surfaces a critical finding: it goes into a spreadsheet, or a ticket, or a PDF report that lands in someone’s inbox. The security team knows about it. The engineering team may or may not know about it. Remediation ownership is ambiguous. There’s no clear way to track whether the patch actually shipped, or whether it was deprioritized, or whether a re-test was ever scheduled. Meanwhile, the findings are.

AI models like Mythos will accelerate the input side of this pipeline dramatically. They’ll discover vulnerabilities at a pace and depth that human red teams simply can’t match. But if the organizational infrastructure for triaging, prioritizing, communicating, and verifying fixes hasn’t kept pace, faster discovery just means a faster-growing backlog of unresolved critical issues.

This is the problem that a model like Mythos actually makes more acute. If your current pentest process takes three weeks to surface ten high-severity findings, and remediation is already struggling to keep up, what happens when that same surface area is scanned continuously and generates findings at ten times the rate?
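The arithmetic behind that question can be sketched in a few lines. The discovery rates (10 and 100 findings per three-week cycle) come from the scenario above; the remediation capacity of 8 verified fixes per cycle is a made-up assumption, chosen only to show the shape of the curve.

```python
def backlog_over_time(found_per_cycle, fixed_per_cycle, cycles, start=0):
    """Return the open-finding backlog after each cycle.

    A cycle is one three-week testing window. The backlog never drops
    below zero: a team cannot fix findings that do not exist yet.
    """
    backlog = start
    history = []
    for _ in range(cycles):
        backlog = max(0, backlog + found_per_cycle - fixed_per_cycle)
        history.append(backlog)
    return history


# Hypothetical capacity: the team verifies 8 fixes per three-week cycle.
FIXED_PER_CYCLE = 8

today = backlog_over_time(found_per_cycle=10, fixed_per_cycle=FIXED_PER_CYCLE, cycles=4)
ai_era = backlog_over_time(found_per_cycle=100, fixed_per_cycle=FIXED_PER_CYCLE, cycles=4)

print(today)   # [2, 4, 6, 8]
print(ai_era)  # [92, 184, 276, 368]
```

With remediation capacity held constant, a tenfold increase in discovery turns a slowly growing backlog into one that grows by dozens of findings per cycle.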

Schneier’s False Positive Problem Is Real

Bruce Schneier raised a sharp point in his writeup: we don’t know Mythos’s false positive rate on unfiltered output. Anthropic reports 89% severity agreement with human contractors on the findings they showcased, but that’s a curated sample, not a full-run distribution. AI systems that detect nearly every real bug also tend to generate plausible-sounding vulnerabilities in patched or corrected code.

This matters operationally. A tool that generates high-confidence-sounding false positives at scale doesn’t reduce security team burden; it increases it. Every spurious critical finding that has to be triaged and dismissed is time a security engineer isn’t spending on a real one. The value of AI-assisted vulnerability discovery is only realized if the findings that come out of it can be efficiently evaluated, contextualized against actual business risk, and routed to the right people.
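To make the triage cost concrete, here is a rough sketch of how engineer hours split between real findings and false positives. Every input is an illustrative assumption: the real false positive rate is unknown, and the finding count and triage time per finding are invented for the example.

```python
def triage_hours(total_findings, false_positive_rate, hours_per_triage):
    """Split triage effort between real findings and false positives.

    Assumes every finding costs the same time to evaluate, whether it
    turns out to be real or spurious.
    """
    false_positives = total_findings * false_positive_rate
    real = total_findings - false_positives
    return {
        "hours_on_real": real * hours_per_triage,
        "hours_on_false_positives": false_positives * hours_per_triage,
    }


# Hypothetical batch: 1,000 findings at 30 minutes of triage each,
# evaluated under three assumed false positive rates.
for rate in (0.1, 0.3, 0.5):
    cost = triage_hours(total_findings=1000, false_positive_rate=rate, hours_per_triage=0.5)
    print(f"rate={rate:.0%} -> {cost}")
```

Under these assumptions the total triage bill stays fixed while the share spent on findings that lead nowhere scales directly with the false positive rate.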

What the Infrastructure Problem Actually Looks Like

The teams best positioned to absorb Mythos-era discovery velocity are the ones that already have three things in place:

Centralized findings management. Not a ticket system, not a JIRA board bolted onto a spreadsheet. A purpose-built place where vulnerability findings from multiple sources (scanner output, pentest reports, red team engagements) live in a normalized, queryable format. Without this, integrating AI-generated findings just adds another data silo.

Risk-contextualized prioritization. Raw CVSS scores are a starting point, not a decision. A critical finding in a system that’s air-gapped and internal is not the same risk as the same finding in a customer-facing API. Organizations that can only sort by severity score will be overwhelmed when AI discovery starts producing findings at volume; organizations that can score against asset criticality, business impact, and exposure context can triage intelligently.

Dynamic, Risk-Based Remediation via Configurable Scoring

Closed-loop remediation tracking. This is where most programs actually fail. A finding that isn’t verified as fixed is just a liability that has a name. Continuous re-testing, structured remediation workflows, and clear ownership handoffs aren’t exciting features—they’re the difference between a security program that improves over time and one that just accumulates documented risk.
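A minimal sketch of how those three pieces might fit together in one data model follows. It is not PlexTrac’s schema or scoring method: the `Finding` fields, the `Exposure` weights, and the multiplicative `priority` formula are all hypothetical, chosen only to illustrate a normalized record, a context-aware score, and a status that closes only after a passing re-test.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    OPEN = "open"
    FIX_SHIPPED = "fix_shipped"  # engineering reports a fix
    VERIFIED = "verified"        # a re-test confirmed the fix


class Exposure(Enum):
    # Hypothetical weights; a real program would calibrate its own.
    AIR_GAPPED = 0.2
    INTERNAL = 0.5
    INTERNET_FACING = 1.0


@dataclass
class Finding:
    """One normalized record, whatever tool or team produced it."""
    title: str
    source: str               # e.g. "scanner", "pentest", "red_team", "ai_model"
    asset: str
    cvss: float               # 0.0-10.0 base score
    asset_criticality: float  # 0.0-1.0, assigned by the business
    exposure: Exposure
    owner: str = ""
    status: Status = Status.OPEN

    def priority(self) -> float:
        """Illustrative score: CVSS scaled by criticality and exposure."""
        return round(self.cvss * self.asset_criticality * self.exposure.value, 2)

    def mark_fix_shipped(self) -> None:
        if not self.owner:
            raise ValueError("a finding needs an owner before a fix can be claimed")
        self.status = Status.FIX_SHIPPED

    def record_retest(self, passed: bool) -> None:
        """Only a passing re-test closes the loop; a failing one reopens it."""
        if self.status is not Status.FIX_SHIPPED:
            raise ValueError("re-test only applies after a fix has shipped")
        self.status = Status.VERIFIED if passed else Status.OPEN


# Same CVSS score, very different context (asset names are made up).
findings = [
    Finding("SQL injection", "scanner", "lab-historian", 9.8, 0.6, Exposure.AIR_GAPPED),
    Finding("SQL injection", "pentest", "billing-api", 9.8, 1.0, Exposure.INTERNET_FACING),
]
queue = sorted(findings, key=lambda f: f.priority(), reverse=True)
for f in queue:
    print(f.asset, f.priority(), f.status.value)
```

Sorting by `priority()` instead of raw CVSS puts the customer-facing API ahead of the air-gapped system even though both carry the same base score, and the separate `FIX_SHIPPED` state keeps a closed engineering ticket from being mistaken for a verified fix.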

PlexTrac is a pentest reporting and exposure management platform that’s been building in exactly this direction—centralized findings data, contextual risk prioritization, and structured remediation workflows.

Mythos (and tools like it) is going to be very good at telling you your house has structural problems. PlexTrac is the operational layer that makes sure those problems actually get fixed, the right contractor gets assigned, and someone verifies the work before closing the job. Both are necessary. Most organizations have invested in the equivalent of better home inspections while letting the repair tracking system stay in a shared Google Doc.

The Access Problem Schneier Identified Is Also a Workflow Problem

One critique of Project Glasswing is that concentrating Mythos access among 50 large vendors means the organizations best-equipped to act on findings get them first. Fortune 500 enterprises, as the Fortune piece from the former national cyber director noted, are better positioned to absorb and remediate; it’s SMEs, regional infrastructure operators, and specialized industrial systems that are most exposed and least resourced.

This is a structural access problem that policy will have to address. But embedded in it is also a workflow problem: even if access were democratized, many smaller organizations don’t have the operational infrastructure to turn AI-generated security findings into executed remediations. Tooling that reduces the overhead of that process—faster reporting, clearer findings communication, lower-friction remediation handoffs—is arguably more important for those organizations than it is for the enterprises that can already throw headcount at the problem.

The Practical Takeaway

The Mythos moment is a useful forcing function. Not because it means your systems will definitely be compromised tomorrow, but because it makes visible a gap that’s been quietly growing for years: security teams are getting better at finding problems while the organizational machinery for fixing them has evolved much more slowly.

The right response isn’t panic, and it isn’t waiting to see whether Glasswing access eventually expands to include you. It’s taking the Mythos announcement as a prompt to audit your own remediation pipeline: How long does it take a critical finding to go from discovery to verified fix? How many open high-severity findings are currently in some ambiguous state of “being worked on”? Can you actually re-test after remediation, or do you just trust the engineering ticket was closed?

Those questions don’t require access to Mythos to answer. And for most teams, the answers will be more uncomfortable than anything in Anthropic’s 245-page technical document.
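As a starting point for that audit, the first two questions can be answered from any export of findings that records discovery and verification dates. The sketch below assumes a hypothetical list of dictionaries with `cvss`, `discovered_at`, and `verified_at` keys, and uses invented sample records. The 7.0 threshold follows the CVSS v3 convention that scores of 7.0 and above are rated High or Critical.

```python
from datetime import datetime
from statistics import median


def days_to_verified_fix(findings):
    """Median days from discovery to verified fix, over re-tested findings only."""
    durations = [
        (f["verified_at"] - f["discovered_at"]).days
        for f in findings
        if f.get("verified_at") is not None
    ]
    return median(durations) if durations else None


def unverified_high_severity(findings, threshold=7.0):
    """Findings at or above the CVSS threshold with no verified fix on record."""
    return [
        f for f in findings
        if f["cvss"] >= threshold and f.get("verified_at") is None
    ]


# Made-up sample export; field names are assumptions for this sketch.
records = [
    {"id": "F-1", "cvss": 9.1, "discovered_at": datetime(2025, 1, 6), "verified_at": datetime(2025, 1, 20)},
    {"id": "F-2", "cvss": 7.5, "discovered_at": datetime(2025, 1, 6), "verified_at": None},
    {"id": "F-3", "cvss": 8.2, "discovered_at": datetime(2025, 2, 3), "verified_at": datetime(2025, 3, 17)},
    {"id": "F-4", "cvss": 4.3, "discovered_at": datetime(2025, 2, 3), "verified_at": None},
]

print(days_to_verified_fix(records))                        # 28.0
print([f["id"] for f in unverified_high_severity(records)])  # ['F-2']
```

If the export cannot populate `verified_at` at all, that absence is itself the answer to the third question.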