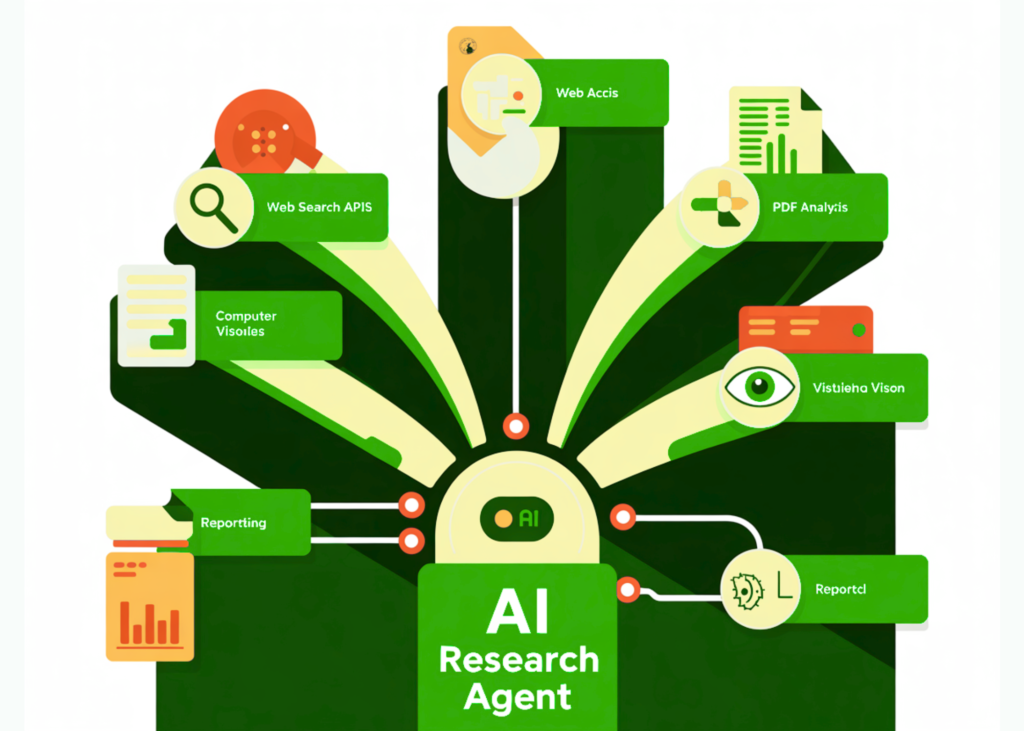

In this tutorial, we build a “Swiss Army Knife” research agent that goes far beyond simple chat interactions and actively solves multi-step research problems end-to-end. We combine a tool-using agent architecture with live web search, local PDF ingestion, vision-based chart analysis, and automated report generation to show how modern agents can reason, verify, and produce structured outputs. By wiring together smolagents, OpenAI models, and practical data-extraction utilities, we show how a single agent can explore sources, cross-check claims, and synthesize findings into professional-grade Markdown and DOCX reports.

%pip -q install -U smolagents openai trafilatura duckduckgo-search pypdf pymupdf python-docx pillow tqdm

import os, re, json, getpass
from typing import List, Dict, Any
from datetime import datetime, timezone

import requests
import trafilatura
from duckduckgo_search import DDGS
from pypdf import PdfReader
import fitz  # PyMuPDF
from docx import Document
from docx.shared import Pt
from openai import OpenAI
from smolagents import CodeAgent, OpenAIModel, tool

if not os.environ.get("OPENAI_API_KEY"):
    os.environ["OPENAI_API_KEY"] = getpass.getpass("Paste your OpenAI API key (hidden): ").strip()
print("OPENAI_API_KEY set:", "YES" if os.environ.get("OPENAI_API_KEY") else "NO")

if not os.environ.get("SERPER_API_KEY"):
    serper = getpass.getpass("Optional: Paste SERPER_API_KEY for Google results (press Enter to skip): ").strip()
    if serper:
        os.environ["SERPER_API_KEY"] = serper
print("SERPER_API_KEY set:", "YES" if os.environ.get("SERPER_API_KEY") else "NO")

client = OpenAI()  # reads OPENAI_API_KEY from the environment

def _now():
    """Return the current UTC time as a report-friendly timestamp."""
    return datetime.now(timezone.utc).strftime("%Y-%m-%d %H:%M:%SZ")

def _safe_filename(s: str) -> str:
    """Collapse runs of unsafe characters into underscores and cap the length."""
    s = re.sub(r"[^a-zA-Z0-9._-]+", "_", s).strip("_")
    return s[:180] if s else "file"

We set up the full execution environment and securely load all required credentials without hardcoding secrets. We import all dependencies required for web search, document parsing, vision analysis, and agent orchestration. We also initialize shared utilities to standardize timestamps and file naming throughout the workflow.
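To make the behavior of the shared utilities concrete, here is a minimal standalone sketch that uses only the standard library. The names `safe_filename` and `utc_stamp` are illustrative stand-ins for the helpers above, and the sample inputs are hypothetical.

```python
import re
from datetime import datetime, timezone

def safe_filename(s: str, max_len: int = 180) -> str:
    # Replace every run of characters outside [a-zA-Z0-9._-] with one underscore,
    # trim underscores at both ends, and fall back to "file" if nothing is left.
    cleaned = re.sub(r"[^a-zA-Z0-9._-]+", "_", s).strip("_")
    return cleaned[:max_len] if cleaned else "file"

def utc_stamp() -> str:
    # Timezone-aware UTC timestamp, formatted like "2025-01-31 12:00:00Z".
    return datetime.now(timezone.utc).strftime("%Y-%m-%d %H:%M:%SZ")

print(safe_filename("Q3 report (final).pdf"))  # -> Q3_report_final_.pdf
print(safe_filename("///"))                    # -> file
print(utc_stamp())
```

Only leading and trailing underscores are stripped, so an underscore produced in the middle of a name (as before `.pdf` above) is kept.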

try:
    from google.colab import files
    os.makedirs("/content/pdfs", exist_ok=True)
    uploaded = files.upload()
    for name, data in uploaded.items():
        if name.lower().endswith(".pdf"):
            with open(f"/content/pdfs/{name}", "wb") as f:
                f.write(data)
    print("PDFs in /content/pdfs:", os.listdir("/content/pdfs"))
except Exception as e:
    print("Upload skipped:", str(e))

def web_search(query: str, k: int = 6) -> List[Dict[str, str]]:
    """Search via Serper when SERPER_API_KEY is set, otherwise fall back to DuckDuckGo."""
    serper_key = os.environ.get("SERPER_API_KEY", "").strip()
    if serper_key:
        resp = requests.post(
            "",  # the Serper endpoint URL is missing from the source text; fill it in before use
            headers={"X-API-KEY": serper_key, "Content-Type": "application/json"},
            json={"q": query, "num": k},
            timeout=30,
        )
        resp.raise_for_status()
        data = resp.json()
        out = []
        for item in (data.get("organic") or [])[:k]:
            out.append({
                "title": item.get("title", ""),
                "url": item.get("link", ""),
                "snippet": item.get("snippet", ""),
            })
        return out
    out = []
    with DDGS() as ddgs:
        for r in ddgs.text(query, max_results=k):
            out.append({
                "title": r.get("title", ""),
                "url": r.get("href", ""),
                "snippet": r.get("body", ""),
            })
    return out

def fetch_url_text(url: str) -> Dict[str, Any]:
    """Download a page and extract its main text; errors are reported in the result dict."""
    try:
        # fetch_url takes no timeout argument; the download timeout comes from trafilatura's config
        downloaded = trafilatura.fetch_url(url)
        if not downloaded:
            return {"url": url, "ok": False, "error": "fetch_failed", "text": ""}
        text = trafilatura.extract(downloaded, include_comments=False, include_tables=True)
        if not text:
            return {"url": url, "ok": False, "error": "extract_failed", "text": ""}
        # Use the first non-empty line as a rough title
        title_guess = next((ln.strip() for ln in text.splitlines() if ln.strip()), "")[:120]
        return {"url": url, "ok": True, "title_guess": title_guess, "text": text}
    except Exception as e:
        return {"url": url, "ok": False, "error": str(e), "text": ""}

We enable local PDF ingestion and set up a flexible web search pipeline that works with or without a paid search API. We show how we gracefully handle optional inputs while maintaining a reliable research flow. We also implement robust URL fetching and text extraction to prepare clean source material for downstream reasoning.
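The provider fallback rests on mapping two differently shaped result lists onto one `title` / `url` / `snippet` schema. The following is a minimal offline sketch of that idea. `FIELD_MAPS`, `pick_provider`, and `normalize_results` are hypothetical names introduced here; the field names (`link`/`snippet` for Serper, `href`/`body` for DuckDuckGo) are taken from the code above, and the sample records are made up.

```python
from typing import Any, Dict, Iterable, List, Mapping

# Source-field names per provider, as used in the tutorial's web_search function.
FIELD_MAPS: Dict[str, Dict[str, str]] = {
    "serper": {"title": "title", "url": "link", "snippet": "snippet"},
    "ddg": {"title": "title", "url": "href", "snippet": "body"},
}

def pick_provider(env: Mapping[str, str]) -> str:
    # Prefer the paid API only when a non-blank key is present.
    return "serper" if env.get("SERPER_API_KEY", "").strip() else "ddg"

def normalize_results(raw: Iterable[Dict[str, Any]], provider: str, k: int = 6) -> List[Dict[str, str]]:
    # Keep at most k items and rename provider-specific fields to the shared schema.
    mapping = FIELD_MAPS[provider]
    return [
        {field: item.get(src, "") for field, src in mapping.items()}
        for item in list(raw)[:k]
    ]

serper_raw = [{"title": "A", "link": "https://example.com/a", "snippet": "first"}]
ddg_raw = [{"title": "B", "href": "https://example.com/b", "body": "second"}]

print(pick_provider({}))                      # -> ddg
print(normalize_results(serper_raw, "serper"))
print(normalize_results(ddg_raw, "ddg"))
```

Keeping the schema identical for both providers means the fetching and reasoning steps downstream never need to know which search backend produced a result.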

import base64
import mimetypes

def read_pdf_text(pdf_path: str, max_pages: int = 30) -> Dict[str, Any]:
    """Extract text from the first max_pages pages; unreadable pages become empty strings."""
    reader = PdfReader(pdf_path)
    pages = min(len(reader.pages), max_pages)
    chunks = []
    for i in range(pages):
        try:
            chunks.append(reader.pages[i].extract_text() or "")
        except Exception:
            chunks.append("")
    return {"pdf_path": pdf_path, "pages_read": pages, "text": "\n\n".join(chunks).strip()}

def extract_pdf_images(pdf_path: str, out_dir: str = "/content/extracted_images", max_pages: int = 10) -> List[str]:
    """Save every embedded image from the first max_pages pages as PNG and return the paths."""
    os.makedirs(out_dir, exist_ok=True)
    doc = fitz.open(pdf_path)
    saved = []
    pages = min(len(doc), max_pages)
    base = _safe_filename(os.path.basename(pdf_path).rsplit(".", 1)[0])
    for p in range(pages):
        page = doc[p]
        img_list = page.get_images(full=True)
        for img_i, img in enumerate(img_list):
            xref = img[0]
            pix = fitz.Pixmap(doc, xref)
            # CMYK (or other 4+ channel) pixmaps must be converted to RGB before saving as PNG
            if pix.n - pix.alpha >= 4:
                pix = fitz.Pixmap(fitz.csRGB, pix)
            img_path = os.path.join(out_dir, f"{base}_p{p+1}_img{img_i+1}.png")
            pix.save(img_path)
            saved.append(img_path)
    doc.close()
    return saved

def vision_analyze_image(image_path: str, question: str, model: str = "gpt-4.1-mini") -> Dict[str, Any]:
    """Ask a vision-capable model a question about one image file."""
    with open(image_path, "rb") as f:
        img_bytes = f.read()
    # Raw bytes are not JSON-serializable, so the image is sent as a base64 data URL
    mime = mimetypes.guess_type(image_path)[0] or "image/png"
    b64 = base64.b64encode(img_bytes).decode("ascii")
    resp = client.responses.create(
        model=model,
        input=[{
            "role": "user",
            "content": [
                {"type": "input_text", "text": f"Answer concisely and accurately.\n\nQuestion: {question}"},
                {"type": "input_image", "image_url": f"data:{mime};base64,{b64}"},
            ],
        }],
    )
    return {"image_path": image_path, "answer": resp.output_text}

We focus on deep document understanding by extracting structured text and visual artifacts from PDFs. We integrate a vision-capable model to interpret charts and figures instead of treating them as opaque images. We ensure that numerical trends and visual insights can be converted into explicit, text-based evidence.
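One way to hand a local image to a vision-capable model is to embed it as a base64 data URL in the request payload. The sketch below only builds and inspects that payload, so it runs offline. `build_vision_input` is a hypothetical helper, the four sample bytes stand in for a real image, and the `input_text` / `input_image` item shapes are an assumption about the OpenAI Responses API that should be checked against the current API reference.

```python
import base64
from typing import Any, Dict, List

def build_vision_input(img_bytes: bytes, question: str, mime: str = "image/png") -> List[Dict[str, Any]]:
    # Encode the raw bytes as ASCII base64 so the payload stays JSON-serializable.
    b64 = base64.b64encode(img_bytes).decode("ascii")
    return [{
        "role": "user",
        "content": [
            {"type": "input_text", "text": f"Answer concisely and accurately.\n\nQuestion: {question}"},
            {"type": "input_image", "image_url": f"data:{mime};base64,{b64}"},
        ],
    }]

# Placeholder bytes, not a valid image; a real call would read the file in binary mode.
payload = build_vision_input(b"\x89PNG", "What trend does the chart show?")
image_item = payload[0]["content"][1]
print(image_item["image_url"][:22])  # -> data:image/png;base64,
```

Building the payload in a separate function makes it easy to unit-test the encoding without spending API calls.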

def write_markdown(path: str, content: str) -> str:
    """Write Markdown text to path, creating the parent directory if needed."""
    os.makedirs(os.path.dirname(path) or ".", exist_ok=True)
    with open(path, "w", encoding="utf-8") as f:
        f.write(content)
    return path

def write_docx_from_markdown(docx_path: str, md: str, title: str = "Research Report") -> str:
    """Convert a Markdown string to DOCX line by line (headings, bullets, paragraphs)."""
    os.makedirs(os.path.dirname(docx_path) or ".", exist_ok=True)
    doc = Document()
    t = doc.add_paragraph()
    run = t.add_run(title)
    run.bold = True
    run.font.size = Pt(18)
    meta = doc.add_paragraph()
    meta.add_run(f"Generated: {_now()}").italic = True
    doc.add_paragraph("")
    for line in md.splitlines():
        line = line.rstrip()
        if not line:
            doc.add_paragraph("")
            continue
        if line.startswith("# "):
            doc.add_heading(line[2:].strip(), level=1)
        elif line.startswith("## "):
            doc.add_heading(line[3:].strip(), level=2)
        elif line.startswith("### "):
            doc.add_heading(line[4:].strip(), level=3)
        elif re.match(r"^\s*[-*]\s+", line):
            p = doc.add_paragraph(style="List Bullet")
            p.add_run(re.sub(r"^\s*[-*]\s+", "", line).strip())
        else:
            doc.add_paragraph(line)
    doc.save(docx_path)
    return docx_path

@tool
def t_web_search(query: str, k: int = 6) -> str:
    """Search the web and return a JSON list of results (title, url, snippet).

    Args:
        query: The search query.
        k: Maximum number of results to return.
    """
    return json.dumps(web_search(query, k), ensure_ascii=False)

@tool
def t_fetch_url_text(url: str) -> str:
    """Fetch a URL and return its extracted main text as JSON.

    Args:
        url: The page URL to fetch.
    """
    return json.dumps(fetch_url_text(url), ensure_ascii=False)

@tool
def t_list_pdfs() -> str:
    """List the uploaded PDFs in /content/pdfs as a JSON list of paths."""
    pdf_dir = "/content/pdfs"
    if not os.path.isdir(pdf_dir):
        return json.dumps([])
    paths = [os.path.join(pdf_dir, f) for f in os.listdir(pdf_dir) if f.lower().endswith(".pdf")]
    return json.dumps(sorted(paths), ensure_ascii=False)

@tool
def t_read_pdf_text(pdf_path: str, max_pages: int = 30) -> str:
    """Extract text from a PDF and return it as JSON.

    Args:
        pdf_path: Path to the PDF file.
        max_pages: Maximum number of pages to read.
    """
    return json.dumps(read_pdf_text(pdf_path, max_pages=max_pages), ensure_ascii=False)

@tool
def t_extract_pdf_images(pdf_path: str, max_pages: int = 10) -> str:
    """Extract embedded images from a PDF and return a JSON list of saved PNG paths.

    Args:
        pdf_path: Path to the PDF file.
        max_pages: Maximum number of pages to scan.
    """
    imgs = extract_pdf_images(pdf_path, max_pages=max_pages)
    return json.dumps(imgs, ensure_ascii=False)

@tool
def t_vision_analyze_image(image_path: str, question: str) -> str:
    """Ask a vision model a question about an image and return the answer as JSON.

    Args:
        image_path: Path to the image file.
        question: The question to answer about the image.
    """
    return json.dumps(vision_analyze_image(image_path, question), ensure_ascii=False)

@tool
def t_write_markdown(path: str, content: str) -> str:
    """Write Markdown content to a file and return the path.

    Args:
        path: Destination file path.
        content: Markdown text to write.
    """
    return write_markdown(path, content)

@tool
def t_write_docx_from_markdown(docx_path: str, md_path: str, title: str = "Research Report") -> str:
    """Convert a Markdown file to DOCX and return the DOCX path.

    Args:
        docx_path: Destination DOCX path.
        md_path: Path of the Markdown file to convert.
        title: Title placed at the top of the document.
    """
    with open(md_path, "r", encoding="utf-8") as f:
        md = f.read()
    return write_docx_from_markdown(docx_path, md, title=title)

We implement the full output layer by generating Markdown reports and converting them into polished DOCX documents. We expose all core capabilities as explicit tools that the agent can reason about and invoke step by step. We ensure that every transformation from raw data to final report remains deterministic and inspectable.
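The Markdown-to-DOCX step is deterministic because each line is classified independently by a few prefix rules. The sketch below isolates that classification so it can be inspected without `python-docx` installed. `classify_md_line` is a hypothetical helper that mirrors the rules described above (three heading levels, `-` or `*` bullets, everything else a paragraph); it does not handle inline formatting such as bold or links.

```python
import re
from typing import Tuple

BULLET = re.compile(r"^\s*[-*]\s+")

def classify_md_line(line: str) -> Tuple[str, str]:
    # Returns (kind, text), where kind is one of:
    # "blank", "heading1", "heading2", "heading3", "bullet", "paragraph".
    line = line.rstrip()
    if not line:
        return ("blank", "")
    for level, prefix in ((1, "# "), (2, "## "), (3, "### ")):
        if line.startswith(prefix):
            return (f"heading{level}", line[len(prefix):].strip())
    if BULLET.match(line):
        return ("bullet", BULLET.sub("", line).strip())
    return ("paragraph", line)

sample = "# Findings\n\n## Sources\n- first source\n**Note:** plain text"
for ln in sample.splitlines():
    print(classify_md_line(ln))
```

A line that starts with `**` is treated as a paragraph, not a bullet, because the bullet pattern requires whitespace right after the marker.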

model = OpenAIModel(model_id="gpt-5")

agent = CodeAgent(
    tools=[
        t_web_search,
        t_fetch_url_text,
        t_list_pdfs,
        t_read_pdf_text,
        t_extract_pdf_images,
        t_vision_analyze_image,
        t_write_markdown,
        t_write_docx_from_markdown,
    ],
    model=model,
    add_base_tools=False,
    additional_authorized_imports=["json", "re", "os", "math", "datetime", "time", "textwrap"],
)

SYSTEM_INSTRUCTIONS = """
You are a Swiss Army Knife Research Agent.
"""

def run_research(topic: str):
    """Run the full research workflow for one topic and return the agent's final answer."""
    os.makedirs("/content/report", exist_ok=True)
    prompt = f"""{SYSTEM_INSTRUCTIONS.strip()}

Research question:
{topic}

Steps:
1) List available PDFs (if any) and decide which are relevant.
2) Do web search for the topic.
3) Fetch and extract the text of the best sources.
4) If PDFs exist, extract text and images.
5) Visually analyze figures.
6) Write a Markdown report to /content/report/report.md and convert it to DOCX at /content/report/report.docx.
"""
    return agent.run(prompt)

topic = "Build a research brief on the most reliable design patterns for tool-using agents (2024-2026), focusing on evaluation, citations, and failure modes."
out = run_research(topic)
print(out[:1500] if isinstance(out, str) else out)

try:
    from google.colab import files
    files.download("/content/report/report.md")
    files.download("/content/report/report.docx")
except Exception as e:
    print("Download skipped:", str(e))

We assemble the complete research agent and define a structured execution plan for multi-step reasoning. We guide the agent to search, analyze, synthesize, and write using a single coherent prompt. We demonstrate how the agent produces a finished research artifact that can be reviewed, shared, and reused immediately.
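The single coherent prompt is just a system preamble, the research question, and a numbered plan joined into one string. As a minimal sketch, the assembly can be factored into a small pure function so the plan is easy to reorder or extend. `build_prompt` and the sample steps are illustrative assumptions, not part of the tutorial's code.

```python
from typing import Sequence

def build_prompt(system: str, topic: str, steps: Sequence[str]) -> str:
    # Number the steps "1) ...", "2) ..." and join the three sections with blank lines.
    numbered = "\n".join(f"{i}) {step}" for i, step in enumerate(steps, start=1))
    return f"{system.strip()}\n\nResearch question:\n{topic.strip()}\n\nSteps:\n{numbered}\n"

prompt = build_prompt(
    "You are a Swiss Army Knife Research Agent.",
    "Design patterns for tool-using agents",
    ["List available PDFs.", "Do web search for the topic.", "Write a Markdown report."],
)
print(prompt)
```

Because the function has no side effects, the exact prompt sent to the agent can be logged or diffed between runs.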

In conclusion, we demonstrated how a well-designed tool-using agent can function as a reliable research assistant rather than a conversational toy. We showcased how explicit tools, disciplined prompting, and step-by-step execution allow the agent to search the web, analyze documents and visuals, and generate traceable, citation-aware reports. This approach offers a practical blueprint for building trustworthy research agents that emphasize evaluation, evidence, and failure awareness, capabilities that are increasingly essential for real-world AI systems.
