Datasets

This study uses existing data from 225 datasets published on the OpenNeuro platform32, downloaded via version 2023.1.0 of the openneuro-py Python package. OpenNeuro data from adult HCs were used for training and validation with cross-validation, whereas OpenNeuro data from HCs aged 2–12 years were used for testing, together with a Synthetic Atrophy dataset and three patient datasets: the Münster Neuroimaging Cohort (MNC), the Marburg-Münster Affective Disorders Cohort Study (FOR2107/MACS) and the BiDirect study. Data availability is governed by the respective consortia. No new data were acquired for this study.

Training and validation datasets

OpenNeuro-Whole

Out of the more than 700 datasets available at OpenNeuro at the time of compilation (10 November 2021), every dataset that contained at least five T1w MRIs from at least five adult HCs was included, resulting in 208 datasets. Based on a subsequent visual quality check, 30 MRIs were excluded, primarily due to improper masking (Supplementary Fig. 23a) and incorrect orientation (Supplementary Fig. 23b). The remaining compilation of 8,279 T1w MRIs is used as the OpenNeuro-Whole dataset (Supplementary Fig. 24 and Supplementary Table 10).

OpenNeuro-HD

The 8,279 MRIs from OpenNeuro-Whole were preprocessed using the commonly used CAT12 toolbox with default parameters7. To ensure high quality of the training data, strict quality thresholds were set based on the preprocessing quality ratings provided by the toolbox. To be included, all ratings had to be at least a B− grade, resulting in the following thresholds: a surface Euler number below 25, a surface defect area below 5.0, a surface intensity root mean square error below 0.1, and a surface position root mean square error below 1.0. All OpenNeuro datasets that contained fewer than ten adult HCs after this quality control were excluded. In the remaining datasets, MRIs were ranked according to the surface defect number, and finally the top five MRIs per dataset that passed a visual quality check were included in the dataset. This results in a total of 685 MRIs from 137 datasets called OpenNeuro-HD (Supplementary Fig. 24, middle, Supplementary Table 10 and Source Data Fig. 2).
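The selection rule described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the record keys (`euler`, `defect_area`, `intensity_rmse`, `position_rmse`, `defect_number`, `visual_ok`) are hypothetical names rather than fields of the CAT12 report, each scan is assumed to belong to a different participant, and a lower surface defect number is assumed to rank higher.

```python
# Hypothetical record keys; the threshold values are the ones stated in the text.
THRESHOLDS = {"euler": 25, "defect_area": 5.0, "intensity_rmse": 0.1, "position_rmse": 1.0}


def passes_thresholds(scan):
    """Return True if every surface rating lies strictly below its threshold."""
    return all(scan[key] < limit for key, limit in THRESHOLDS.items())


def select_hd(scans_by_dataset, min_scans=10, top_k=5):
    """Pick up to top_k scans per dataset after automatic and visual quality control."""
    selected = {}
    for name, scans in scans_by_dataset.items():
        kept = [s for s in scans if passes_thresholds(s)]
        if len(kept) < min_scans:
            continue  # too few scans left after quality control: drop the dataset
        # Assumption: a lower surface defect number means a better scan.
        ranked = sorted(kept, key=lambda s: s["defect_number"])
        passed = [s for s in ranked if s["visual_ok"]]
        selected[name] = passed[:top_k]
    return selected
```

With 137 datasets surviving and five scans kept per dataset, this rule yields the 685 MRIs reported for OpenNeuro-HD.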

Test datasets

OpenNeuro-Youngsters

Among the more than 700 datasets available at OpenNeuro at the time of compilation (10 November 2021), every dataset that contained at least five T1w MRIs from at least five HCs in the age range from 2 to 12 years was included, resulting in 18 datasets. Based on a subsequent visual quality check, 300 MRIs were excluded, primarily due to strong motion artifacts (Supplementary Fig. 23c) and improper masking (Supplementary Fig. 23d). The remaining compilation of 867 T1w MRIs is used as the OpenNeuro-Youngsters dataset (Supplementary Fig. 24, right, Supplementary Table 10 and Source Data Fig. 1). The CAT12 preprocessing for OpenNeuro-Youngsters used the TMP_Age11.5 template.

Synthetic atrophy and synthetic artifacts

Published by Rusak et al.33, this dataset uses T1w MRIs of 20 HCs from the Alzheimer’s Disease Neuroimaging Initiative34 to synthetically introduce global neocortical atrophy. Simulating ten progressions of atrophy, ranging from 0.1 mm to 1 mm of global thickness reduction, the resulting dataset consists of 220 T1w MRIs (including the 20 originals) and their respective ground-truth tissue maps.

To additionally investigate the influence of scanner effects, we introduce artificial artifacts in the 20 original T1w MRIs using Rician noise, bias field, blurring, ghosting, motion, ringing and spike artifacts (Supplementary Fig. 25). Each of the seven artifacts is applied with medium and strong intensity, resulting in 480 synthetic MRIs.

VBM analysis datasets

For the VBM analyses, we use a total of 4,017 MRIs from three independent German cohorts (Supplementary Fig. 26): the Marburg-Münster Affective Disorders Cohort Study (MACS; N = 1,799), the Münster Neuroimaging Cohort (MNC; N = 1,194) and the BiDirect cohort (N = 1,024). All three cohorts include subsamples of both patients with MDD and HCs who are free from any lifetime mental disorder diagnoses according to DSM-IV criteria.

Marburg-Münster Affective Disorders Cohort Study (FOR2107/MACS)

Patients were recruited through psychiatric hospitals, whereas the control group was recruited via newspaper advertisements. Patients diagnosed with MDD showed varying levels of symptom severity and underwent various forms of treatment (inpatient, outpatient or none). The FOR2107/MACS was conducted at two scanning sites: University of Münster and University of Marburg. Inclusion criteria for the present study were availability of completed baseline MRI data with sufficient MRI quality. Further details about the structure of the FOR2107/MACS35 and the MRI quality assurance protocol36 are provided elsewhere.

Münster Neuroimaging Cohort (MNC)

In MNC, patients were recruited from local psychiatric hospitals and underwent inpatient treatment due to a moderate or severe depressive disorder. Further information regarding this study can be found in refs. 37,38.

BiDirect

The BiDirect Study is a prospective project that comprises three distinct cohorts: patients hospitalized for an acute episode of major depression, patients up to 6 months after an acute cardiac event, and HCs randomly drawn from the population register of the city of Münster, Germany. Further details on the rationale, design and recruitment procedures of the BiDirect study have been described in refs. 39,40.

Preprocessing

All datasets are preprocessed using the VBM pipeline of version 12.8.2 of the CAT12 toolbox, which was the latest version available at the time of analysis, with default parameters7. The affine transformation calculated during this initial CAT12 preprocessing is used such that tissue segmentation (see ‘Tissue segmentation’ section in the Methods) and image registration (see ‘Image registration’ section in the Methods) are consistently applied in the template coordinate space. Image registration is based on GM and WM probability maps in the standard resolution of 1.5 mm (113 × 137 × 113 voxels).

For tissue segmentation, unprocessed MRIs are affinely registered to the template at a high resolution of 0.5 mm (339 × 411 × 339 voxels) using B-spline interpolation, and the CAT12 preprocessing is repeated on the basis of these high-resolution MRIs. This circumvents any potential image degradation caused by additional resizing of the CAT12 tissue map. Because no ground-truth tissue maps exist, these high-resolution tissue maps are used as reference maps for model training and validation. Because the MRIs are skull-stripped before tissue segmentation in deepmriprep’s prediction pipeline (see ‘Prediction pipeline’ section in the Methods), all voxels in the MRI that do not contain tissue in the respective tissue map are set to zero. Additionally, the standard N4 bias correction41 is applied using the ANTS package4 to avoid interference with potential artificial bias fields introduced during data augmentation (see ‘Data augmentation’ section in the Methods). Finally, min–max scaling between the 0.5th and 99.5th percentile is used as proposed in ref. 23 with one modification: values above the maximum are not clipped to 1 but scaled via the function \(f(x)=1+{\log }_{10}x\) to prevent any loss of important information in regions with high intensity values (for example, blood vessels). The code for the input preprocessing is publicly available (ref. 42).
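The intensity scaling step can be illustrated with a short NumPy sketch. It is not the published preprocessing code: the function name `scale_intensity` is hypothetical, the compression above 1 is assumed to take the logarithmic form 1 + log10(x), and clipping values below the lower percentile to 0 is a further assumption.

```python
import numpy as np


def scale_intensity(image, low_pct=0.5, high_pct=99.5):
    """Min-max scale between two percentiles and compress values above 1."""
    low, high = np.percentile(image, [low_pct, high_pct])
    scaled = (image - low) / (high - low)
    # Assumption: values below the lower percentile are clipped to 0.
    scaled = np.clip(scaled, 0.0, None)
    # Assumption: values above 1 are compressed with 1 + log10(x) instead of being clipped.
    above = scaled > 1.0
    scaled[above] = 1.0 + np.log10(scaled[above])
    return scaled
```

Because the compression equals 1 at x = 1 and grows monotonically, very bright voxels stay ordered by intensity while occupying a much smaller value range.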

Data augmentation

Data augmentation is used during training to artificially introduce image artifacts that may occur in real-world datasets. This increases model generalizability, because effects that are infrequent in the training data can be systematically oversampled with any desired intensity. Data augmentations for the image registration step need to be consistent with equation (2), requiring specialized implementations. Hence, for the current version of deepmriprep, data augmentation is omitted during image registration model training.

The 12 different data augmentations used during model training (Supplementary Fig. 27) are implemented in version 0.3.2 of the niftiai Python package (https://github.com/codingfisch/niftiai)43 and introduce artificial bias fields, motion artifacts, noise, blurring, ghosting, spike artifacts, downsampling, translation, flipping, brightness, contrast and Gibbs ringing. Bias fields are generated by applying an inverse Fourier transform to low-frequency Gaussian noise, whereas motion artifacts, ghosting44, spike artifacts45 and Gibbs ringing46 are achieved by introducing artifacts in the k-space of the T1w MRI. To be MRI-specific, noise is sampled from a chi distribution47, a generalization of the Rician noise distribution48. Instead of using the full set of affine and nonlinear spatial transformations, only translation and flipping are applied via nearest-neighbor resampling to avoid any potential image degradation.
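As an illustration of the bias field idea (an inverse Fourier transform of low-frequency Gaussian noise), the following NumPy sketch may help. It does not reproduce the niftiai implementation: the function name and the parameters `cutoff` and `strength` are hypothetical choices made for this example.

```python
import numpy as np


def random_bias_field(shape, cutoff=2, strength=0.3, seed=0):
    """Smooth multiplicative bias field built from low-frequency Gaussian noise."""
    rng = np.random.default_rng(seed)
    spectrum = np.zeros(shape, dtype=complex)
    # Integer frequency index along each axis (0, 1, ..., -2, -1).
    freqs = np.meshgrid(*[np.fft.fftfreq(n) * n for n in shape], indexing="ij")
    # Keep only the lowest frequencies so that the field varies slowly in space.
    low = np.all([np.abs(f) <= cutoff for f in freqs], axis=0)
    count = int(low.sum())
    spectrum[low] = rng.normal(size=count) + 1j * rng.normal(size=count)
    field = np.fft.ifftn(spectrum).real
    field = field / np.abs(field).max()   # normalize to the range [-1, 1]
    return 1.0 + strength * field         # multiplicative field centered on 1
```

Multiplying an image by the returned array (for example `image * random_bias_field(image.shape)`) simulates a slowly varying intensity inhomogeneity.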

Tissue segmentation

To achieve high-quality tissue segmentation, a cascaded 3D UNet approach, inspired by Isensee et al.23, is applied to a cropped region of 336 × 384 × 336 voxels in the high-resolution MRI (see ‘Preprocessing’ section in the Methods). This specific cropping is chosen to make the image dimensions divisible by 16 (required by the UNet), without excluding voxels that potentially contain tissue. The first stage of the cascaded UNet processes the whole image at a reduced resolution of 0.75 mm (224 × 256 × 224 voxels). In the second stage, the original resolution of 0.5 mm is processed using a patchwise approach, which incorporates the prediction from the first stage in its model input. For each patch position, an individual UNet is trained (see ‘Training procedure’ section in the Methods). Both stages of the UNet architecture are identical with respect to the use of the rectified linear unit (ReLU) activation function, instance normalization49, a depth of 4, and the doubling of the number of channels with increasing depth, starting with 8 channels. The implementation of the model and the training procedure is publicly available via GitHub (ref. 42).

Patchwise UNet

The second stage of the cascaded UNet subdivides the 336 × 384 × 336 voxels of the high-resolution MRI into 27 patches, each containing 128 × 128 × 128 voxels (Supplementary Fig. 28). For each of these 27 patches, a specific UNet model is trained (see ‘Training procedure’ section in the Methods). To minimize the number of voxels in a patch that do not contain any tissue, the positions of the patches are optimized based on the tissue segmentation masks of the 685 MRIs in OpenNeuro-HD. This iterative optimization starts from a regular grid of 3 × 3 × 3 patches that covers the whole volume. Then, each patch is moved stepwise by one voxel toward the image center until a further step would leave a tissue voxel in one of the 685 MRIs uncovered by the patch. To exploit the brain’s bilateral symmetry, each patch in the left hemisphere is moved in lockstep with its corresponding patch in the right hemisphere.

Before passing patches from the right hemisphere to the UNet, we flip them along the sagittal axis such that they resemble left-hemisphere patches. The resulting prediction is then flipped back. This approach reduces the number of effective patch positions for which individual UNets need to be trained from 27 to 18.
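The patch bookkeeping can be checked with a small Python sketch. It covers only the regular starting grid (before the position optimization) and the count of effective patch positions; treating axis 0 as the left-right axis and grid index 2 as the right hemisphere is an assumption made for illustration.

```python
from itertools import product

IMAGE_SHAPE = (336, 384, 336)
PATCH_SIZE = 128


def regular_starts(length, patch=PATCH_SIZE, n=3):
    """Evenly spaced start indices so that n patches cover the full axis."""
    step = (length - patch) / (n - 1)
    return [round(i * step) for i in range(n)]


# Start indices of the regular 3 x 3 x 3 grid along each axis.
starts = [regular_starts(length) for length in IMAGE_SHAPE]

# All 27 grid positions (i, j, k).
grid = list(product(range(3), repeat=3))

# Assumption: index 2 on axis 0 marks right-hemisphere patches, which are
# flipped and handled by the model of the mirrored left-hemisphere position.
effective = {(0 if i == 2 else i, j, k) for i, j, k in grid}
```

Here `starts` evaluates to `[[0, 104, 208], [0, 128, 256], [0, 104, 208]]`, so neighboring patches overlap by 24 voxels along the 336-voxel axes and tile the 384-voxel axis exactly, and `len(effective)` is 18.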

Close to the border of a patch, the accuracy of the prediction typically decreases. Therefore, predictions close to the border of a patch are weighted less via Gaussian importance weighting23 during accumulation of the final prediction containing 336 × 384 × 336 voxels.
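A one-dimensional NumPy sketch illustrates how Gaussian importance weighting blends overlapping patch predictions. The function names are hypothetical, and the width of the Gaussian (`sigma_scale`, one eighth of the patch size) is an assumed setting, not a value taken from the text.

```python
import numpy as np


def gaussian_weight(size, sigma_scale=0.125):
    """1D Gaussian weight centered on the patch (sigma_scale is an assumption)."""
    x = np.arange(size) - (size - 1) / 2
    return np.exp(-0.5 * (x / (sigma_scale * size)) ** 2)


def accumulate(patches, starts, length):
    """Blend overlapping 1D patch predictions using Gaussian weights."""
    total = np.zeros(length)
    weight_sum = np.zeros(length)
    for patch, start in zip(patches, starts):
        w = gaussian_weight(len(patch))
        total[start:start + len(patch)] += w * patch
        weight_sum[start:start + len(patch)] += w
    # Normalize by the accumulated weights so that overlaps average correctly.
    return total / weight_sum
```

In three dimensions the same idea applies with a weight volume formed as the outer product of three such 1D profiles.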

Multilevel activation function

Based on SPM3, the tissue maps produced by CAT12 contain continuous values ranging from 0 to 3. The values 1, 2 and 3 correspond to CSF, GM and WM, whereas 0 indicates that the respective voxel does not contain any tissue. The histogram of the template tissue map (Supplementary Fig. 29, right) shows that values close to 0, 1, 2 and 3 are more frequent than intermediate values. Additionally, smaller peaks can be observed around the values 1.5 and 2.5, which correspond to the classes CSF–GM and GM–WM, respectively, which CAT12 introduces. To introduce an inductive bias toward this desired value distribution, the final layer of the tissue segmentation UNet uses a custom multilevel activation function inspired by Hu et al.50. This custom multilevel activation is achieved via the summation of six sigmoid functions,

$$f(x)=S(\alpha x)+\mathop{\sum }\limits_{\ldots }\ldots ,\,\,\,\,\mathrm{with}\,\,\,\,S(x)=\frac{1}{1+{e}^{-x}},$$

with α being a parameter of the neural network that is optimized during model training. This function (Supplementary Fig. 29, left) successfully maps a normal distribution (that is, the typical output distribution of a neural network) to the desired value distribution with peaks at 0, 1, 1.5, 2, 2.5 and 3 (Supplementary Fig. 29, middle). In combination with an MAE loss, this multilevel activation function facilitates the training of the tissue segmentation model.

Training procedure

The two stages of 3D UNets are trained in a cascaded fashion. The first-stage model is trained for 60 epochs on full-view MRIs with a resolution of 0.75 mm (224 × 256 × 224 voxels) using a batch size of 1.

The training of the 3 × 3 × 3 patchwise approach in the second stage is more complex and results in 18 models, each dedicated to one of the 18 effective patch positions (3 × 3 × 3 = 27 minus the 9 flipped right-hemisphere patches; see ‘Patchwise UNet’ section in the Methods). First, a UNet is trained on all effective patch positions for two epochs as a foundation model. For each of the 18 patch positions, this foundation model is then fine-tuned for 20 epochs, using only the respective patch position. For all patch-based training the batch size is set to 2, and the flip augmentation is disabled for patches located in the left and right hemispheres. The input of the second-stage model consists of patches of the original MRI, concatenated with the respective patch of the first-stage predictions upsampled to the image resolution of 0.5 mm. All models are trained with the one-cycle learning rate schedule51 using a maximal learning rate of 0.001, which follows the default settings of the fastai library52.

Image registration

To introduce the neural network-based image registration used for deepmriprep, we first introduce the standard image registration approaches. In standard image registration such as DARTEL27, given an input image I and a template J, the sum of image dissimilarity D and a regularization metric R weighted with a regularization parameter Λ,

$$L({\bf{I}},{\bf{J}},{\boldsymbol{\Phi }})=D({\bf{I}}\cdot {\boldsymbol{\Phi }},{\bf{J}})+\Lambda R({\boldsymbol{\Phi }})$$

is minimized via the deformation field Φ. In the loss function L used in this standard approach, the regularization parameter Λ controls the trade-off between image similarity and the regularity of the deformation field. In CAT12, the default metric for image similarity is simply the MSE between the moving and the target image

$$D({\bf{I}}\cdot {\boldsymbol{\Phi }},{\bf{J}})=\mathrm{MSE}({\bf{I}}\cdot {\boldsymbol{\Phi }},{\bf{J}})=\frac{1}{| \varOmega | }\mathop{\sum }\limits_{{\bf{p}}\in \varOmega }\| {\bf{I}}\cdot {\boldsymbol{\Phi }}({\bf{p}})-{\bf{J}}({\bf{p}})\| ^{2},$$

and the regularization term is the linear elasticity of the deformation field Φ

$$R({\boldsymbol{\Phi }})=\int \left(\mu \| \epsilon ({\boldsymbol{\Phi }})\| ^{2}+\frac{\lambda }{2}{(\mathrm{tr}(\epsilon ({\boldsymbol{\Phi }})))}^{2}\right){\rm{d}}{\bf{x}},$$

(1)

where μ is the weight of the zoom elasticity and λ is the weight of the shearing elasticity.
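A discrete version of the linear elasticity in equation (1) can be sketched with NumPy finite differences. This is an illustrative approximation, not the CAT12 or deepmriprep implementation: the strain tensor ε is assumed to be computed from the displacement u = Φ − id, and the default values of `mu`, `lam` and `spacing` are placeholders.

```python
import numpy as np


def linear_elasticity(disp, mu=1.0, lam=1.0, spacing=1.0):
    """Discrete linear elastic energy of a displacement field of shape (3, D, H, W)."""
    # grad[i, j] = d u_i / d x_j, estimated with finite differences.
    grad = np.stack([np.stack(np.gradient(disp[i], spacing), axis=0) for i in range(3)])
    # Symmetric strain tensor: 0.5 * (grad + grad^T) at every voxel.
    strain = 0.5 * (grad + grad.transpose(1, 0, 2, 3, 4))
    norm_sq = (strain ** 2).sum(axis=(0, 1))             # squared Frobenius norm
    trace = strain[0, 0] + strain[1, 1] + strain[2, 2]   # divergence of u
    density = mu * norm_sq + 0.5 * lam * trace ** 2
    # Approximate the integral by a sum over voxels times the voxel volume.
    return density.sum() * spacing ** 3
```

A pure translation gives an energy of zero, whereas a uniform scaling contributes to both terms, which makes the function a quick sanity check for predicted fields.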

To guarantee that the deformations are invertible, registration frameworks27,53,54 consider the deformation as the solution of an initial value problem of the form

$$\frac{{\rm{d}}{\boldsymbol{\Phi }}(s;{\bf{x}})}{{\rm{d}}s}={\bf{v}}({\boldsymbol{\Phi }}(s;{\bf{x}}),s),\,\,\,\,\mathrm{with}\,\,\,\,{\boldsymbol{\Phi }}(0;{\bf{x}})={\bf{x}}.$$

(2)

The mapping x → Φ(s; x) defines a family of diffeomorphisms for all time s ∈ [0, τ]. Hence, it is guaranteed that an inverse of the mapping exists, which can be computed via backward integration. As proposed in DARTEL (diffeomorphic anatomical registration through exponentiated Lie algebra), a stationary velocity field framework instead of the large deformation diffeomorphic metric mapping (LDDMM) model55,56 allows the velocity field v to be constant over time. Using this simplification, the regularity of the deformation field (that is, smoothness and invertibility) is automatically enforced via forward integration (also called shooting) of this constant velocity field. In this way, a smooth and invertible deformation field can be found by iteratively optimizing the velocity field v with respect to L using a gradient descent approach.

SyN registration57 additionally enforces symmetry between the forward (image to template) deformation field Φ and the backward (template to image) deformation field Φ−1. SyN considers the full forward and backward deformations to be compositions of half deformations \({{\boldsymbol{\Phi }}}^{\frac{1}{2}}\) and \({{\boldsymbol{\Phi }}}^{-\frac{1}{2}}\) via

$${\boldsymbol{\Phi }}={{\boldsymbol{\Phi }}}^{\frac{1}{2}}\cdot -{{\boldsymbol{\Phi }}}^{-\frac{1}{2}}\,\,\,\,{\mathrm{and}}\,\,\,\,{{\boldsymbol{\Phi }}}^{-1}={{\boldsymbol{\Phi }}}^{-\frac{1}{2}}\cdot -{{\boldsymbol{\Phi }}}^{\frac{1}{2}}.$$

Based on this consideration, SyN adds the dissimilarity between the image and the backward deformed template D(I, J ⋅ Φ−1) and the dissimilarity between the half forward deformed image and the half backward deformed template \(D({\bf{I}}\cdot {{\boldsymbol{\Phi }}}^{\frac{1}{2}},{\bf{J}}\cdot {{\boldsymbol{\Phi }}}^{-\frac{1}{2}})\) to arrive at the loss function

$$L({\bf{I}},{\bf{J}},\varPhi )=D({\bf{I}}\cdot \varPhi ,{\bf{J}})+D({\bf{I}},{\bf{J}}\cdot {\varPhi }^{-1})+D\left({\bf{I}}\cdot {\varPhi }^{\frac{1}{2}},{\bf{J}}\cdot {\varPhi }^{\frac{-1}{2}}\right)+\Lambda R(\varPhi ).$$

(3)

Using the diffeomorphic mapping in equation (2), velocity fields \({{\bf{v}}}^{\frac{1}{2}}\) and \({{\bf{v}}}^{-\frac{1}{2}}\) are used to generate the half deformations \({{\boldsymbol{\Phi }}}^{\frac{1}{2}}\) and \({{\boldsymbol{\Phi }}}^{-\frac{1}{2}}\).

Model architecture and training

The neural network-based image registration framework used for deepmriprep is based on SYMNet22 and uses a UNet to predict the forward and backward velocity fields \({{\bf{v}}}^{\frac{1}{2}}\) and \({{\bf{v}}}^{-\frac{1}{2}}\). Analogous to SyN registration, these velocity fields are integrated according to equation (2) to arrive at the half deformation fields \({{\boldsymbol{\Phi }}}^{\frac{1}{2}}\) and \({{\boldsymbol{\Phi }}}^{-\frac{1}{2}}\) via the scaling and squaring method27,53 with τ = 7 time steps (Supplementary Fig. 30).
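The scaling and squaring idea can be illustrated in one dimension with NumPy. This is a deliberately simplified sketch, not the PyTorch implementation used in the pipeline: it works on a 1D velocity field in voxel units, uses linear interpolation, and holds the displacement constant outside the grid.

```python
import numpy as np


def scaling_and_squaring_1d(velocity, steps=7):
    """Integrate a stationary 1D velocity field into a displacement field."""
    x = np.arange(len(velocity), dtype=float)
    # Scaling: start from a very small displacement, velocity / 2**steps.
    disp = np.asarray(velocity, dtype=float) / 2 ** steps
    for _ in range(steps):
        # Squaring: compose the map with itself, u(x) <- u(x) + u(x + u(x)).
        disp = disp + np.interp(x + disp, x, disp)
    return disp
```

Each squaring step doubles the integration time, so seven steps correspond to 2^7 = 128 small Euler steps at the cost of only seven interpolations. For a smooth velocity field, the resulting map x + u(x) stays strictly increasing, which is the 1D counterpart of invertibility.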

Similar to the neural network architecture used for tissue segmentation (see ‘Tissue segmentation’ section in the Methods), the UNet uses instance normalization49, a depth of 4, and a doubling of the number of channels with increasing depth, starting with 8 channels. However, we apply two modifications: (1) the use of LeakyReLU58 instead of ReLU activation layers, and (2) a hyperbolic tangent (tanh) activation function in the final layer, ensuring that the UNet’s output conforms to the value range of −1 to 1 used for image coordinates by PyTorch59. The model is trained for 50 epochs using the one-cycle learning rate schedule with a maximal learning rate of 0.001.

During initial tests, training with the standard SyN loss function (equation (3)) led to major artifacts in the predicted velocity and deformation fields (Supplementary Fig. 31b). To avoid these artifacts, we tested supervised approaches (Supplementary Fig. 31c–e) that utilize deformation fields created by CAT12 (Supplementary Fig. 31a). Using an iterative approach, we determined the velocity fields \({{\bf{v}}}_{{\rm{CAT}}}^{\frac{1}{2}}\) and \({{\bf{v}}}_{{\rm{CAT}}}^{-\frac{1}{2}}\) that produce these given deformation fields ΦCAT and \({{\boldsymbol{\Phi }}}_{{\rm{CAT}}}^{-1}\) in our PyTorch-based implementation and used these velocity fields as targets. Using the MSE, the resulting loss function Lv measures disagreements between the predicted velocity fields \({{\bf{v}}}^{\frac{1}{2}}\) and \({{\bf{v}}}^{-\frac{1}{2}}\) and the targets via

$${L}_{{\bf{v}}}\left({{\bf{v}}}^{\frac{1}{2}},{{\bf{v}}}^{-\frac{1}{2}}\right)=\frac{1}{| \varOmega | }\mathop{\sum }\limits_{{\bf{p}}\in \varOmega }\| {{\bf{v}}}_{\mathrm{CAT}}^{\frac{1}{2}}({\bf{p}})-{{\bf{v}}}^{\frac{1}{2}}({\bf{p}})\| ^{2}+\| {{\bf{v}}}_{\mathrm{CAT}}^{-\frac{1}{2}}({\bf{p}})-{{\bf{v}}}^{-\frac{1}{2}}({\bf{p}})\| ^{2}.$$

Using this loss function, the predicted velocity fields show fewer artifacts, but based on the resulting Jacobi determinant field JΦ, some inaccuracies remain (Supplementary Fig. 31c). The Jacobi determinant indicates the volume change caused by the deformation for each voxel. By explicitly adding the MSE between the predicted and ground-truth Jacobi determinant JΦ, the loss function

$$\begin{array}{l}{L}_{{\bf{v}},{{\bf{J}}}_{\Phi }}\left({{\bf{v}}}^{\frac{1}{2}},{{\bf{v}}}^{-\frac{1}{2}}\right)=\frac{1}{| \varOmega | }\mathop{\sum }\limits_{{\bf{p}}\in \varOmega }\| {{\bf{v}}}_{\mathrm{CAT}}^{\frac{1}{2}}({\bf{p}})-{{\bf{v}}}^{\frac{1}{2}}({\bf{p}})\| ^{2}+\| {{\bf{v}}}_{\mathrm{CAT}}^{-\frac{1}{2}}({\bf{p}})-{{\bf{v}}}^{-\frac{1}{2}}({\bf{p}})\| ^{2}+\| {{{\bf{J}}}_{{\boldsymbol{\Phi }}}}_{\mathrm{CAT}}({\bf{p}})-{{\bf{J}}}_{\Phi }({\bf{p}})\| ^{2}\end{array}$$

improves the regularity of the predicted velocity fields and the resulting Jacobi determinant field (Supplementary Fig. 31d). Finally, we reintroduce the original loss function LSyN as

$${L}_{\mathrm{supervised}}\left({{\bf{v}}}^{\frac{1}{2}},{{\bf{v}}}^{-\frac{1}{2}}\right)={L}_{{\bf{v}},{{\bf{J}}}_{\Phi }}\left({{\bf{v}}}^{\frac{1}{2}},{{\bf{v}}}^{-\frac{1}{2}}\right)+\beta {L}_{\mathrm{SyN}}$$

with β set to 2 × 10−5. The fields predicted with this approach, called supervised SYMNet or sSYMNet, do not show any apparent artifacts (Supplementary Fig. 31e). The implementation of the model and the training procedure is publicly available via GitHub (ref. 42).
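The Jacobi determinant term in the supervised loss can be illustrated with a NumPy sketch. It is an approximation written for this example rather than the published PyTorch code: the deformation is assumed to be given as a displacement field u in voxel units, so that Φ(x) = x + u(x), and derivatives are estimated with finite differences.

```python
import numpy as np


def jacobian_determinant(disp, spacing=1.0):
    """Jacobi determinant of x -> x + disp(x) for disp of shape (3, D, H, W)."""
    # grad[i, j] = d u_i / d x_j, estimated with finite differences.
    grad = np.stack([np.stack(np.gradient(disp[i], spacing), axis=0) for i in range(3)])
    # Add the identity to obtain the Jacobian of the full mapping x + u(x).
    jac = grad + np.eye(3)[:, :, None, None, None]
    jac = np.moveaxis(jac, (0, 1), (-2, -1))   # shape (D, H, W, 3, 3)
    return np.linalg.det(jac)


def jacobian_mse(disp_pred, disp_target):
    """Mean squared difference between two Jacobi determinant fields."""
    diff = jacobian_determinant(disp_pred) - jacobian_determinant(disp_target)
    return float(np.mean(diff ** 2))
```

Values above 1 indicate local expansion and values below 1 local compression, so a uniform scaling by 1.1 along every axis yields a determinant of 1.1³ ≈ 1.331 at every voxel.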

Cross-dataset validation and evaluation metrics

We follow best practices by applying a five-fold cross-dataset validation (that is, cross-validation with datasets grouped together) using the 137 datasets from OpenNeuro-HD (see ‘Training and validation datasets’ section in the Methods). Thereby, we ensure realistic performance measures because all reported results are achieved in datasets unseen during training of the respective model. We apply the same folds across tissue segmentation, image registration and GM masking (see ‘Grey matter masking’ in the Supplementary Information) to avoid data leakage between these processing steps. The test datasets (see ‘Test datasets’ section in the Methods) and the datasets in OpenNeuro-Whole and OpenNeuro-Youngsters that are not part of OpenNeuro-HD (see ‘OpenNeuro-Whole’ section in the ‘Results’) are evaluated with an additional model trained on the full OpenNeuro-HD dataset.
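Cross-validation with datasets grouped together can be sketched in plain Python as follows. The function name and the round-robin fold assignment are illustrative assumptions; the only property that matters here is that all scans of one dataset land in the same fold.

```python
import random


def dataset_folds(dataset_ids, n_folds=5, seed=0):
    """Yield (train, valid) index lists with whole datasets assigned to folds."""
    unique = sorted(set(dataset_ids))
    random.Random(seed).shuffle(unique)
    # Round-robin assignment of shuffled datasets to folds (an assumption).
    fold_of = {d: i % n_folds for i, d in enumerate(unique)}
    for fold in range(n_folds):
        train = [i for i, d in enumerate(dataset_ids) if fold_of[d] != fold]
        valid = [i for i, d in enumerate(dataset_ids) if fold_of[d] == fold]
        yield train, valid
```

Reusing the same `seed` for every processing step reproduces identical folds, which is one simple way to prevent leakage between models that are chained together.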

Given that the distribution of performance metrics across images is often skewed, the median is used as a measure of central tendency, complemented by a visual inspection of negative outliers.

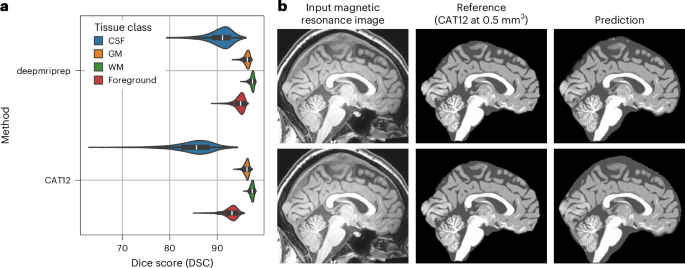

To evaluate tissue segmentation and GM masking performance, we use the Dice score DSC, the probabilistic Dice score pDSC and the Jaccard score JSC. The image registrations are evaluated based on the regularity of the deformation field and the dissimilarity between the warped input and the template image. This dissimilarity is measured using the voxel-wise MSE between the images. The deformation field’s regularity (that is, its physical plausibility) is quantified via the linear elasticity LE (equation (1)).
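For orientation, the overlap metrics can be written in a few lines of NumPy. The Dice and Jaccard scores follow their standard definitions for binary masks; the probabilistic Dice score below uses one common soft formulation (squared terms in the denominator), which is an assumption and may differ from the exact definition used for the reported results.

```python
import numpy as np


def dice(a, b):
    """Dice score of two binary masks: 2|A and B| / (|A| + |B|)."""
    a, b = np.asarray(a, dtype=bool), np.asarray(b, dtype=bool)
    return 2 * np.logical_and(a, b).sum() / (a.sum() + b.sum())


def jaccard(a, b):
    """Jaccard score of two binary masks: |A and B| / |A or B|."""
    a, b = np.asarray(a, dtype=bool), np.asarray(b, dtype=bool)
    return np.logical_and(a, b).sum() / np.logical_or(a, b).sum()


def probabilistic_dice(p, q):
    """Soft Dice on probability maps (assumed definition with squared terms)."""
    p, q = np.asarray(p, dtype=float), np.asarray(q, dtype=float)
    return 2 * (p * q).sum() / ((p ** 2).sum() + (q ** 2).sum())
```

The two binary scores are related by JSC = DSC / (2 − DSC), so they always rank segmentations in the same order.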

Prediction pipeline

The complete deepmriprep pipeline used before the GLM analysis in the ‘VBM analyses’ section in the ‘Results’ consists of six steps: brain extraction, affine registration, tissue segmentation, tissue separation, nonlinear registration and smoothing. After brain extraction using deepbet14 with default settings, affine registration is applied using the sum of the MSE (between image and template) and Dice loss (between image brain mask and template brain mask). The affine registration is implemented in torchreg60 with zero padding (sensible after brain extraction) and the default two-stage setting with 500 iterations at 12-mm3 and a subsequent 100 iterations at 6-mm3 image resolution. After tissue segmentation (see ‘Tissue segmentation’ section in the Methods) and before image registration (see ‘Image registration’ section in the Methods), we apply GM masking in the ventricles and around the brain stem to adapt the probability masks to an undocumented step in the existing CAT12 preprocessing (see ‘Grey matter masking’ section in the Supplementary Information). After image registration of the GM and WM probability masks, Gaussian smoothing with a 6 mm full width at half maximum kernel is applied. In line with all previous steps, smoothing (a simple convolution operation) is implemented in PyTorch, enabling graphics processing unit acceleration throughout the entire prediction pipeline.
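The smoothing kernel follows from the standard relation between the full width at half maximum (FWHM) and the standard deviation of a Gaussian, σ = FWHM / (2√(2 ln 2)). The sketch below computes σ in voxels and a normalized 1D kernel; the function names and the truncation at three standard deviations are assumptions made for illustration.

```python
import math


def fwhm_to_sigma(fwhm_mm, voxel_size_mm):
    """Convert a Gaussian FWHM in mm to a standard deviation in voxels."""
    return fwhm_mm / (2 * math.sqrt(2 * math.log(2))) / voxel_size_mm


def gaussian_kernel_1d(sigma, radius=None):
    """Normalized 1D Gaussian kernel, truncated at 3 sigma by default (assumption)."""
    if radius is None:
        radius = int(math.ceil(3 * sigma))
    weights = [math.exp(-0.5 * (i / sigma) ** 2) for i in range(-radius, radius + 1)]
    total = sum(weights)
    return [w / total for w in weights]
```

For a 6 mm FWHM at the 1.5 mm template resolution, σ is about 1.70 voxels. Because a 3D Gaussian is separable, the volume can be smoothed by convolving with this 1D kernel along each axis in turn.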

VBM analyses

To investigate the effect of different preprocessings on the VBM analyses, deepmriprep- and CAT12-preprocessed data are used to examine statistical associations with both biological variables (age, sex and BMI) and psychometric variables (years of education, MDD versus HC, and IQ). To ensure the reliability of results, each VBM analysis is repeated 100 times with a randomly selected 80% subset of the data. Finally, the median t-map across these 100 VBM analyses is used to compare the VBM results of deepmriprep and CAT12.
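The repeated subsampling scheme can be sketched with NumPy as follows. The function `median_tmap` and its `fit_tmap` argument are hypothetical: `fit_tmap` stands for whatever routine fits the GLM on a subset of subjects and returns a t-map, which is outside the scope of this sketch.

```python
import numpy as np


def median_tmap(data, fit_tmap, n_repeats=100, fraction=0.8, seed=0):
    """Median of t-maps computed on random subsets of the subjects (rows of data)."""
    rng = np.random.default_rng(seed)
    n = len(data)
    size = int(round(fraction * n))
    tmaps = []
    for _ in range(n_repeats):
        # Draw a random subset of subjects without replacement.
        idx = rng.choice(n, size=size, replace=False)
        tmaps.append(fit_tmap(data[idx]))
    # Voxel-wise median across all repetitions.
    return np.median(np.stack(tmaps), axis=0)
```

As a usage example, a one-sample t-statistic per voxel could be passed as `fit_tmap=lambda x: x.mean(0) / (x.std(0, ddof=1) / np.sqrt(len(x)))`.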

Statistics and reproducibility

We follow best practices by applying a five-fold cross-dataset validation (that is, cross-validation with datasets grouped together) during training and extensive testing of the models across multiple test datasets. In addition, the number of datasets was maximized by collecting MRI data from OpenNeuro and performing quality checks, resulting in 225 datasets. Each VBM analysis is repeated 100 times with a randomly selected 80% subset of the data. No statistical method was used to predetermine sample size.

Reporting summary

Further information on research design is available in the Nature Portfolio Reporting Summary linked to this article.