Cohere AI Labs has launched Tiny Aya, a family of small language models (SLMs) built for strong multilingual performance at a compact size. Rather than scaling up parameter counts, Tiny Aya uses a 3.35B-parameter architecture to deliver state-of-the-art translation and generation across 70 languages.

The release includes five models: Tiny Aya Base (pretrained), Tiny Aya Global (balanced, instruction-tuned), and three region-specific variants, Earth (Africa/West Asia), Fire (South Asia), and Water (Asia-Pacific/Europe).

The Architecture

Tiny Aya is built on a dense decoder-only Transformer architecture. Key specifications include:

- Parameters: 3.35B total (2.8B non-embedding)

- Layers: 36

- Vocabulary: 262k tokens, with a tokenizer designed to represent languages equitably.

- Attention: Interleaved sliding-window and full attention (3:1 ratio) with Grouped Query Attention (GQA).

- Context: 8,192 tokens for input and output.
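The 3:1 interleave and the parameter split can be made concrete with a little arithmetic. The sketch below is a minimal illustration under two assumptions the source does not confirm: the full-attention layer closes every group of four layers, and the input and output embeddings are tied (counted once). Under those assumptions, 36 layers split into 27 sliding-window and 9 full-attention layers, and the roughly 0.55B embedding parameters spread over a 262k vocabulary imply a hidden size of about 2,100.

```python
# Sketch of Tiny Aya's attention layout and parameter split, using only the
# figures listed above. ASSUMPTIONS (not stated in the source): the single
# full-attention layer closes each group of four, and the embedding matrix is
# tied (shared between input and output), so it is counted once.

NUM_LAYERS = 36
SLIDING_PER_GROUP, FULL_PER_GROUP = 3, 1  # the reported 3:1 ratio


def attention_schedule(num_layers: int = NUM_LAYERS) -> list[str]:
    """Label each layer 'sliding' or 'full' under the assumed 3:1 placement."""
    period = SLIDING_PER_GROUP + FULL_PER_GROUP
    return ["full" if (i + 1) % period == 0 else "sliding" for i in range(num_layers)]


def implied_hidden_size(total_params: float = 3.35e9,
                        non_embedding_params: float = 2.8e9,
                        vocab_size: int = 262_000) -> float:
    """Embedding params = total - non-embedding; divide by vocab size for width.

    Only meaningful under the tied-embedding assumption above.
    """
    return (total_params - non_embedding_params) / vocab_size


schedule = attention_schedule()
print(schedule.count("sliding"), schedule.count("full"))  # 27 9
print(f"implied hidden size: ~{implied_hidden_size():.0f}")  # roughly 2,100
```

Only the full-attention layers need a KV cache spanning the whole 8,192-token context; sliding-window layers keep just their window, and GQA further shrinks the cache by sharing key/value heads across query heads. That memory saving is the usual motivation for this kind of layout.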

The model was pretrained on 6T tokens using a Warmup-Stable-Decay (WSD) learning-rate schedule. To maintain training stability, the team used SwiGLU activations and removed all biases from dense layers.
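Both training details are easy to sketch. Below, `wsd_lr` ramps the learning rate up, holds it constant for most of training, then anneals it; `swiglu_ffn` is a bias-free SwiGLU feed-forward layer in plain Python. This is a minimal illustration assuming linear warmup and linear decay with made-up hyperparameters (`peak_lr`, `warmup_frac`, `decay_frac`); the source does not report Tiny Aya's actual values or decay shape.

```python
import math

# Minimal sketch of the two training details above. ASSUMPTIONS: linear warmup
# and linear decay, plus illustrative hyperparameters (peak_lr, warmup_frac,
# decay_frac); the source does not report these values.


def wsd_lr(step: int, total_steps: int, peak_lr: float = 3e-4,
           warmup_frac: float = 0.01, decay_frac: float = 0.1,
           min_lr: float = 0.0) -> float:
    """Warmup-Stable-Decay: ramp up, hold at peak_lr, then anneal to min_lr."""
    warmup_steps = int(total_steps * warmup_frac)
    decay_steps = int(total_steps * decay_frac)
    decay_start = total_steps - decay_steps
    if step < warmup_steps:                      # warmup phase
        return peak_lr * (step + 1) / warmup_steps
    if step < decay_start:                       # stable phase: constant LR
        return peak_lr
    progress = (step - decay_start) / max(1, decay_steps)  # decay phase
    return peak_lr + (min_lr - peak_lr) * min(progress, 1.0)


def silu(x: float) -> float:
    return x / (1.0 + math.exp(-x))


def matvec(w: list[list[float]], x: list[float]) -> list[float]:
    return [sum(wi * xi for wi, xi in zip(row, x)) for row in w]


def swiglu_ffn(x, w_gate, w_up, w_down):
    """Bias-free SwiGLU feed-forward: W_down (SiLU(W_gate x) * (W_up x))."""
    gate = [silu(g) for g in matvec(w_gate, x)]
    up = matvec(w_up, x)
    return matvec(w_down, [g * u for g, u in zip(gate, up)])


print(wsd_lr(500, 1000), wsd_lr(950, 1000))
print(swiglu_ffn([0.0, 0.0], [[1.0, 2.0]], [[3.0, 4.0]], [[5.0]]))  # [0.0]
```

Because no projection carries a bias term, a zero input maps to a zero output, which the last line checks. The long constant plateau is what distinguishes WSD from a cosine schedule, which starts decaying right after warmup.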

Advanced Post-training: FUSION and SimMerge

To close the gap in low-resource languages, Cohere used a synthetic data pipeline.

- Fusion-of-N (FUSION): Prompts are sent to a ‘team of teachers’ (Command A, Gemma3-27B-IT, DeepSeek-V3). A judge LLM, the Fusor, extracts and aggregates the strongest parts of their responses.

- Regional Specialization: Models were fine-tuned on five regional clusters (e.g., South Asia, Africa).

- SimMerge: To prevent ‘catastrophic forgetting’ of global safety behavior, regional checkpoints were merged with the global model using SimMerge, which selects the best merge operators based on similarity signals.
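The data flow of both steps can be sketched in a few lines. In the toy below, `fusion_of_n` queries every teacher and hands all candidates to a judge, and `similarity_guided_merge` compares task vectors (fine-tuned weights minus base weights) to choose how much of the regional model to blend into the global one. Everything here is hypothetical: the teachers and the Fusor are plain callables standing in for LLM calls, and the merge rule (cosine similarity against a threshold that picks an interpolation weight) is a simplified stand-in, not the published SimMerge algorithm.

```python
import math
from typing import Callable, Dict, List, Tuple

# Toy sketch of the two post-training ideas above. Everything here is
# HYPOTHETICAL: teachers and the Fusor are plain callables standing in for LLM
# calls, and the merge rule is a simple stand-in, not the published SimMerge.

Teacher = Callable[[str], str]
Fusor = Callable[[str, List[str]], str]


def fusion_of_n(prompt: str, teachers: Dict[str, Teacher], fusor: Fusor) -> str:
    """Collect one response per teacher, then let the judge synthesize them."""
    candidates = [ask(prompt) for ask in teachers.values()]
    return fusor(prompt, candidates)


def cosine(a: List[float], b: List[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def similarity_guided_merge(base: List[float], global_w: List[float],
                            regional_w: List[float],
                            threshold: float = 0.5) -> Tuple[List[float], float]:
    """Pick an interpolation weight from the similarity of the two task vectors.

    Task vector = fine-tuned weights minus base weights. If the regional update
    points the same way as the global one, blend evenly; otherwise stay closer
    to the global model to protect its behavior (e.g. safety).
    """
    tv_global = [g - b for g, b in zip(global_w, base)]
    tv_regional = [r - b for r, b in zip(regional_w, base)]
    sim = cosine(tv_global, tv_regional)
    alpha = 0.5 if sim >= threshold else 0.25   # weight given to the regional model
    merged = [(1 - alpha) * g + alpha * r for g, r in zip(global_w, regional_w)]
    return merged, sim


teachers = {
    "teacher_a": lambda p: f"Short answer to {p}",
    "teacher_b": lambda p: f"A longer, more detailed answer to {p}",
}
longest = lambda p, cands: max(cands, key=len)  # stand-in for the judge LLM
print(fusion_of_n("q1", teachers, longest))
print(similarity_guided_merge([0.0, 0.0], [1.0, 0.0], [0.0, 1.0]))  # ([0.75, 0.25], 0.0)
```

A real Fusor would be prompted to combine the best parts of several answers rather than pick one; choosing the longest candidate here only keeps the example runnable without a model.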

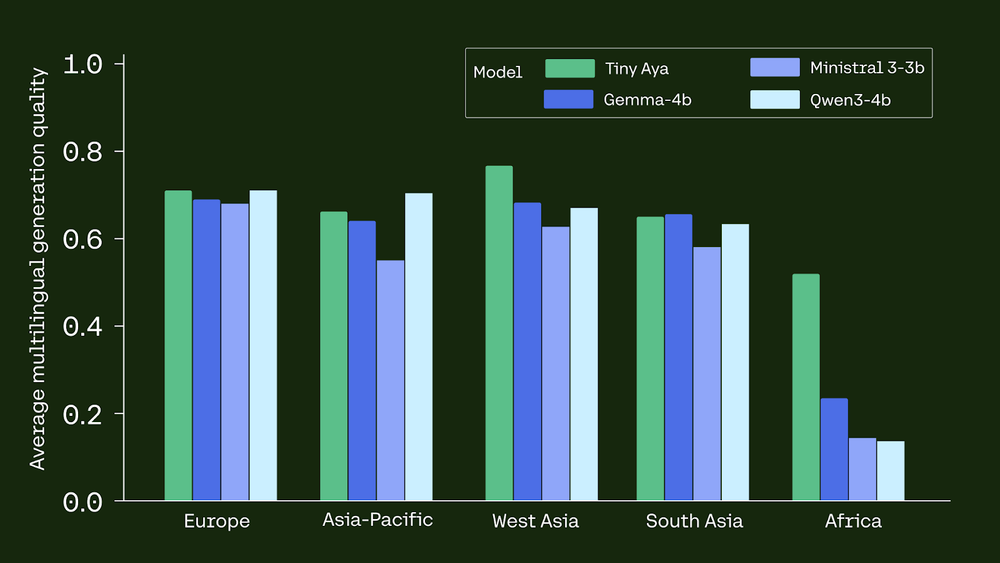

Performance Benchmarks

Tiny Aya Global consistently beats larger or same-scale competitors on multilingual tasks:

- Translation: It outperforms Gemma3-4B in 46 of 61 languages on WMT24++.

- Reasoning: On the GlobalMGSM (math) benchmark for African languages, Tiny Aya achieved 39.2% accuracy, far ahead of Gemma3-4B (17.6%) and Qwen3-4B (6.25%).

- Safety: It has the highest mean safe response rate (91.1%) on MultiJail among the compared models.

- Language Integrity: The model achieves 94% language accuracy, meaning it rarely switches to English when asked to respond in another language.
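The comparisons above read more clearly as ratios: 46 of 61 languages is about a 75% win rate, and 39.2% against 17.6% is roughly 2.2 times Gemma3-4B's score. The sketch below does that arithmetic and adds a hypothetical `language_accuracy` helper showing one common way such a score is computed (the share of responses detected in the requested language). The source does not describe Cohere's exact language-identification setup, and the language labels in the example are made up.

```python
# Arithmetic on the reported benchmark figures, plus a HYPOTHETICAL helper
# showing how a language-accuracy score like the 94% above is typically
# computed; the language labels in the example are made up.

def win_rate(wins: int, total: int) -> float:
    return wins / total


def ratio(a: float, b: float) -> float:
    return a / b


def language_accuracy(requested: list[str], detected: list[str]) -> float:
    """Share of responses whose detected language matches the requested one."""
    matches = sum(r == d for r, d in zip(requested, detected))
    return matches / len(requested)


print(f"WMT24++ wins vs Gemma3-4B: {win_rate(46, 61):.1%}")      # 75.4%
print(f"GlobalMGSM (African languages): {ratio(39.2, 17.6):.1f}x Gemma3-4B, "
      f"{ratio(39.2, 6.25):.1f}x Qwen3-4B")                      # 2.2x, 6.3x
print(language_accuracy(["sw", "sw", "hi", "hi"], ["sw", "en", "hi", "hi"]))  # 0.75
```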

On-Device Deployment

Tiny Aya is optimized for edge computing. With 4-bit quantization (Q4_K_M), the model fits in a 2.14 GB memory footprint, with the following reported generation speeds:

- iPhone 13: 10 tokens/s.

- iPhone 17 Pro: 32 tokens/s.

This quantization scheme costs only a 1.4-point drop in generation quality, making the model a viable option for offline, private, and localized AI applications.
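A quick back-of-the-envelope check relates the footprint to the parameter count and turns the throughput figures into wait times. This sketch assumes "2.14 GB" means 2.14e9 bytes covering the weights of all 3.35B parameters; the source does not break the footprint down, and the 256-token response length is an arbitrary illustrative choice.

```python
# Back-of-the-envelope numbers for the on-device figures above.
# ASSUMPTION: "2.14 GB" means 2.14e9 bytes and covers the weights of all
# 3.35B parameters; the source does not break down the footprint.

def effective_bits_per_weight(size_bytes: float, n_params: float) -> float:
    return size_bytes * 8 / n_params


def generation_seconds(n_tokens: int, tokens_per_s: float) -> float:
    return n_tokens / tokens_per_s


bpw = effective_bits_per_weight(2.14e9, 3.35e9)
print(f"effective bits per weight: {bpw:.2f}")          # 5.11
for device, tps in {"iPhone 13": 10, "iPhone 17 Pro": 32}.items():
    print(f"{device}: {generation_seconds(256, tps):.1f} s for 256 tokens")
```

The result lands above 4 bits because Q4_K_M, one of llama.cpp's k-quant formats, stores per-block scale factors and keeps some tensors at higher precision. At 256 tokens, the two phones differ by 25.6 s versus 8.0 s.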

Key Takeaways

- Efficient Multilingual Performance: Tiny Aya is a 3.35B-parameter model family that delivers state-of-the-art translation and high-quality generation across 70 languages. It shows that massive scale is not required for strong multilingual performance when models are built with intentional data curation.

- Innovative Training Pipeline: The models were developed with Fusion-of-N (FUSION), in which a ‘team of teachers’ (such as Command A and DeepSeek-V3) generates synthetic data. A judge model then aggregates the strongest parts of their responses to ensure high-quality training signals even for low-resource languages.

- Regional Specialization via Merging: Cohere released specialized variants (Tiny Aya Earth, Fire, and Water) tuned for specific regions such as Africa, South Asia, and the Asia-Pacific. These were created by merging regional fine-tuned models with a global model using SimMerge, preserving safety while boosting local-language performance.

- Superior Benchmark Performance: Tiny Aya Global outperforms competitors such as Gemma3-4B in translation quality for 46 of 61 languages on WMT24++. It also substantially reduces disparities in mathematical reasoning for African languages, reaching 39.2% accuracy compared with Gemma3-4B’s 17.6%.

- Optimized for On-Device Deployment: The model is highly portable and runs efficiently on edge devices, achieving ~10 tokens/s on an iPhone 13 and 32 tokens/s on an iPhone 17 Pro with Q4_K_M quantization. This 4-bit format maintains high quality, with only a 1.4-point degradation.
