Mistral AI has been steadily developing one of the most hands-on coding agent ecosystems around, and it has just rolled out its biggest infrastructure update to date. The Mistral team unveiled remote agents in Vibe, its coding agent platform, along with the public preview of Mistral Medium 3.5, a new 128B dense model that now serves as the default in both Vibe and Le Chat, the company’s all-purpose assistant.

What is Vibe, and Why Should You Care?

For those who haven’t tried it yet, Mistral Vibe is a CLI (command-line interface) coding agent that takes over software tasks for you — think writing code, restructuring modules, creating tests, diagnosing CI failures, and beyond. Picture it as a tireless junior developer who can navigate your entire codebase.

Previously, Vibe sessions operated locally on your machine, locking the agent to your laptop and terminal. That’s no longer the case.

Remote Agents: Let the Agent Work While You’re Away

Here’s the simplest way to think about it: coding sessions can now tackle lengthy tasks in the background while you’re gone. Multiple sessions can run simultaneously, so you are no longer the bottleneck at every step.

This marks the core workflow change. Rather than hovering over a terminal monitoring every move, you launch a task and let the cloud take it from there. You can spin up cloud agents through the Mistral Vibe CLI or directly from Le Chat. While agents are at work, you can peek in on their progress — reviewing file diffs, tracking tool calls, checking statuses, and responding to prompts along the way.

A particularly handy feature for anyone already deep in work: active local CLI sessions can be seamlessly shifted to the cloud so you can leave things running, with your session history, task context, and approval settings moving right along with them. You don’t lose any progress — the work simply moves off your machine.

Every session is fully isolated. Each agent runs in its own sandboxed environment, where it can safely make broad edits and install packages. Once the job is finished, the agent can open a pull request on GitHub and ping you, letting you review the outcome instead of micromanaging every keystroke that led there.

It’s also worth unpacking how Vibe ties into Le Chat. The team built the integration using Workflows orchestrated through Mistral Studio — the same system originally created for their own internal coding setup, then offered to enterprise clients, and now available to all users. What this means is the remote coding agent in Le Chat isn’t a disconnected add-on — it’s powered by Mistral’s native orchestration layer, which is useful to know if you’re considering how to build similar agent-driven systems.

On the connectivity front, Vibe integrates with GitHub for repository management and pull requests, Linear and Jira for issue tracking, Sentry for incident monitoring, and communication tools like Slack and Teams for updates.

Mistral Medium 3.5: The Engine Powering Everything

None of this would hold together without a strong AI model underneath it all. Enter Mistral Medium 3.5, which the Mistral team is calling its first flagship merged model.

It’s a 128B dense model with a 256k context window, covering instruction-following, reasoning, and coding all within a single set of weights. To put that into perspective, a 256k context window allows the model to ingest roughly 200,000 words at once — enough to reason across an entire large-scale codebase in one go.
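The words-per-context estimate above can be checked with quick arithmetic. The sketch below assumes a common rule of thumb of about 0.75 English words per token; that ratio is an assumption, not a figure from Mistral, and it varies with the tokenizer and with the content (source code typically yields fewer words per token).

```python
# Rule of thumb (an assumption, not a Mistral figure): English prose
# averages roughly 0.75 words per token. The true ratio depends on the
# tokenizer and on the text being encoded.
WORDS_PER_TOKEN = 0.75


def approx_words(context_tokens: int, words_per_token: float = WORDS_PER_TOKEN) -> int:
    """Estimate how many English words fit in a context window."""
    return round(context_tokens * words_per_token)


if __name__ == "__main__":
    # "256k" may mean 256,000 or 256 * 1024 tokens; show both readings.
    for label, tokens in (("decimal", 256_000), ("binary", 256 * 1024)):
        print(f"256k ({label}): {tokens:,} tokens ~ {approx_words(tokens):,} words")
```

Under either reading of "256k", the estimate lands just under 200,000 words, which is consistent with the rough figure quoted above.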

The model also supports multimodal input. The Mistral team built the vision encoder from the ground up to process variable image sizes and aspect ratios, a deliberate architectural decision. Building it in-house rather than relying on off-the-shelf pretrained encoders like CLIP indicates the team prioritized adaptability for real-world image handling over standard fixed-resolution approaches.

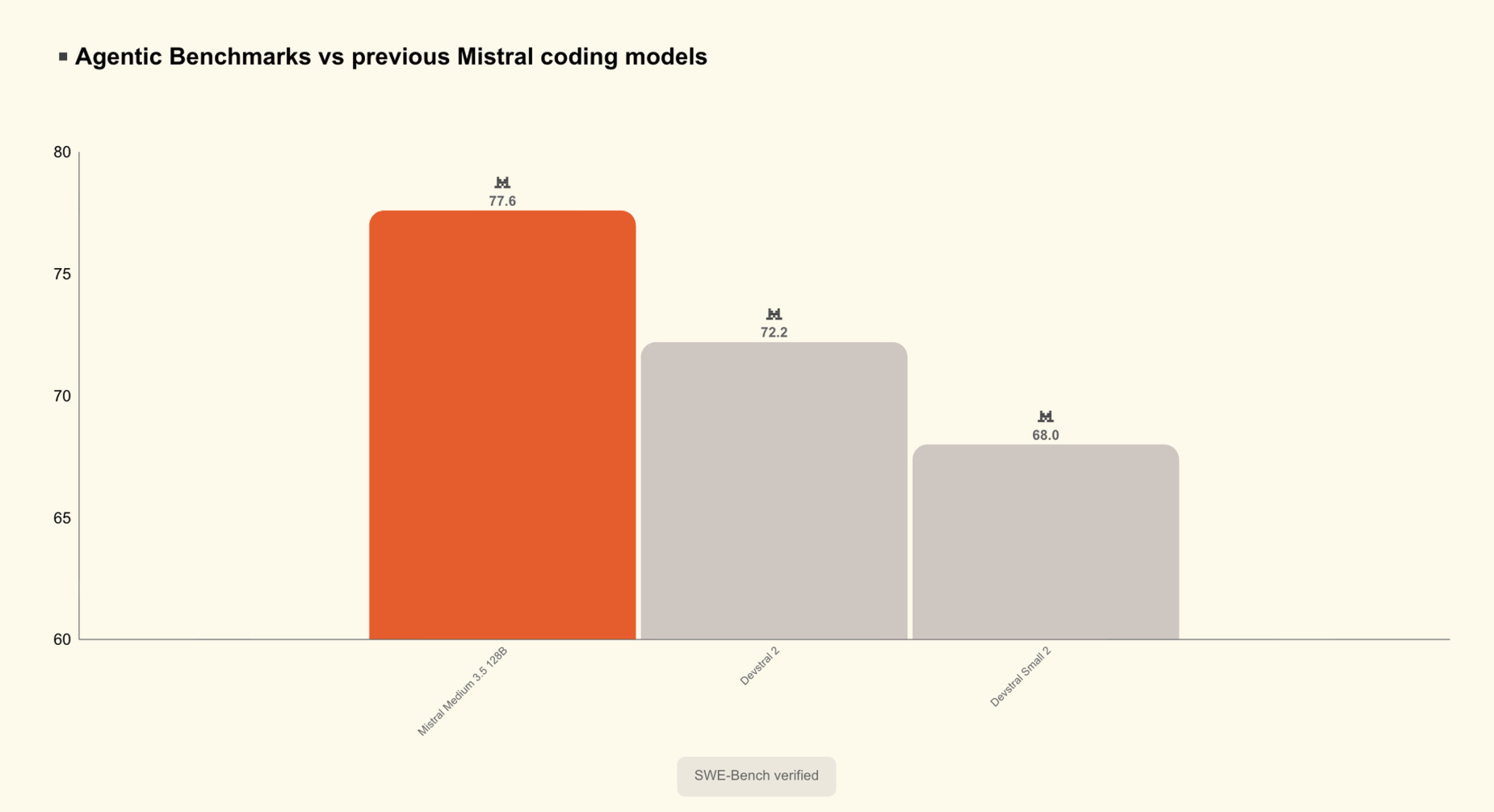

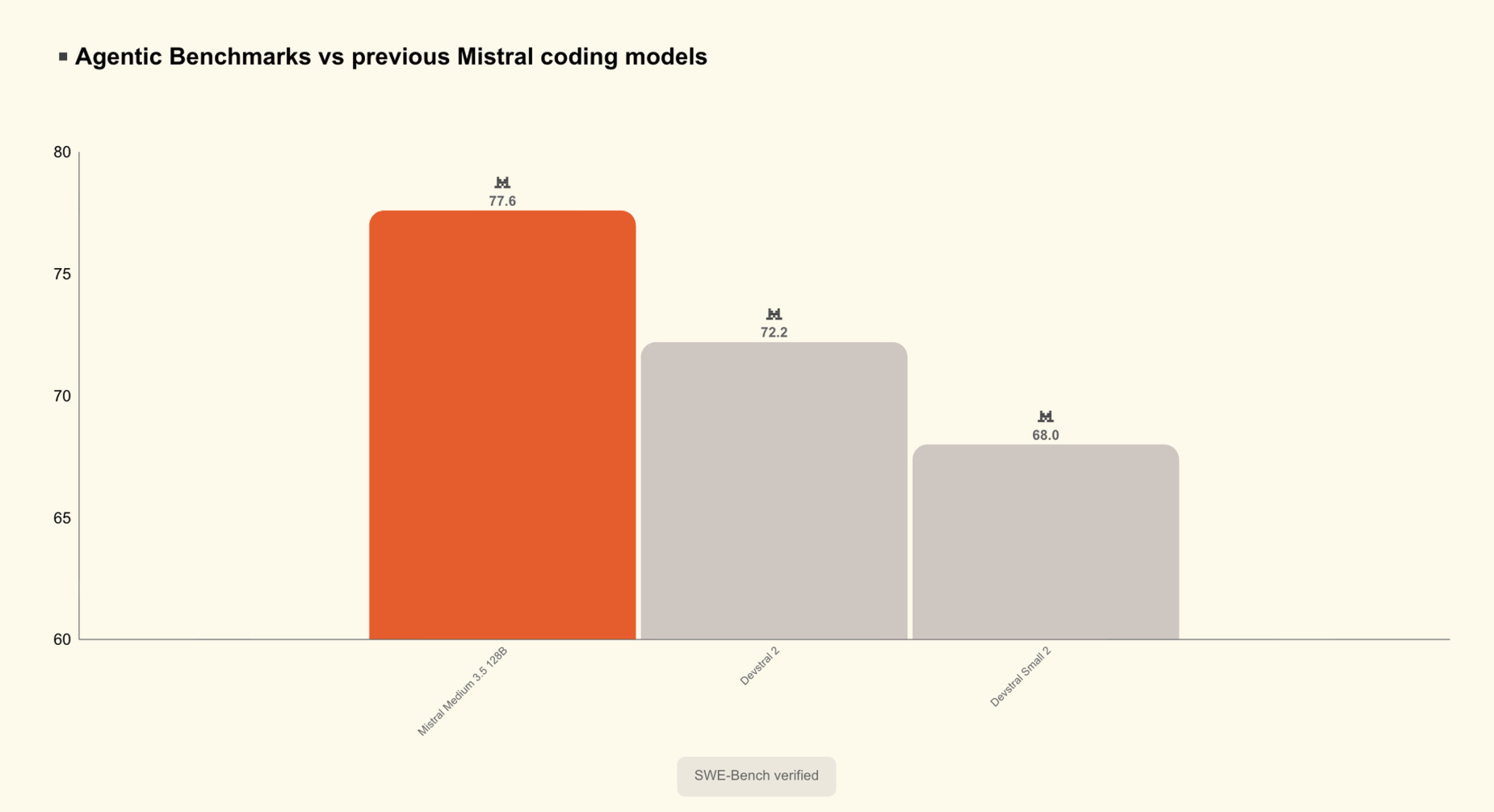

Mistral Medium 3.5 achieves a 77.6% score on SWE-Bench Verified, outperforming Devstral 2 and models like Qwen3.5 397B A17B. SWE-Bench Verified is a widely recognized benchmark that evaluates whether a model can resolve real GitHub issues drawn from popular open-source projects — making it one of the most dependable indicators of genuine software engineering skill. The model also posts a 91.4 on τ³-Telecom and shows strong agentic capabilities.

A notable design decision: reasoning effort can now be adjusted on a per-request basis, allowing the same model to handle a brief chat response or tackle a complex agentic workflow. This is especially valuable for developers using the API — you can reduce compute for straightforward queries and increase it for multi-step reasoning, all without swapping models.
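To make the per-request idea concrete, here is a minimal sketch of how a client might choose an effort level before building a request body. The model id, the `reasoning_effort` field name, and its `"low"`/`"high"` values are placeholders chosen for illustration; they are not confirmed parts of Mistral's API, so check the official API reference for the real parameter. The sketch only builds a payload and makes no network call.

```python
import json

# Assumed names: the model id, the "reasoning_effort" field, and its
# "low"/"high" values are illustrative placeholders, not confirmed API.
MODEL_ID = "mistral-medium-3.5"


def pick_effort(prompt: str, uses_tools: bool) -> str:
    """Toy heuristic: spend more reasoning on tool-using or long prompts."""
    return "high" if uses_tools or len(prompt.split()) > 50 else "low"


def build_request(prompt: str, uses_tools: bool = False) -> dict:
    """Build a chat-style request body with a per-request effort setting."""
    return {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
        "reasoning_effort": pick_effort(prompt, uses_tools),
    }


if __name__ == "__main__":
    quick = build_request("What does HTTP 404 mean?")
    heavy = build_request("Find and fix the failing CI job.", uses_tools=True)
    print(json.dumps(quick, indent=2))
    print(json.dumps(heavy, indent=2))
```

The point of the design is that only one field changes between the two requests; the model id stays the same.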

The model was engineered for long-running tasks, dependable multi-tool invocation, and generating structured outputs that downstream applications can easily process.

Work Mode in Le Chat: A New Agentic Layer

In addition to the coding agent improvements, Mistral is rolling out Work mode in Le Chat, a new agentic mode for broader, multi-step tasks, driven by a new harness and Mistral Medium 3.5. The agent acts as the execution engine for the assistant, enabling Le Chat to read and write files, use multiple tools concurrently, and carry a multi-step project through to completion.

In practice, this enables cross-tool workflows — such as catching up across email, messages, and calendar; getting ready for a meeting with context gathered from various sources; or sorting through an inbox and generating Jira tickets from team conversations.

With Work mode, connectors are enabled by default rather than requiring manual selection, giving the agent access to documents, mailboxes, calendars, and other systems to gather the context it needs to act accurately. This marks a major usability improvement over conventional chat assistants, where tools must be manually chosen before each session.

Transparency is woven into the experience from the start: every action the agent performs is displayed — you can see each tool call along with the reasoning behind it. Le Chat will request your explicit approval — based on your permission settings — before carrying out sensitive actions like sending a message, creating a document, or altering data.
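The approval behavior described above follows a familiar pattern: log every tool call, and pause for confirmation only on sensitive actions that the user has not pre-approved. The sketch below illustrates that pattern in general terms; the class, the action names, and the `auto_approved` setting are all hypothetical and say nothing about how Le Chat is actually implemented.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical action names, for illustration only.
SENSITIVE_ACTIONS = {"send_message", "create_document", "modify_data"}


@dataclass
class ToolCall:
    action: str
    reason: str  # the agent's stated reasoning, shown to the user


@dataclass
class ApprovalGate:
    ask_user: Callable[[ToolCall], bool]  # returns True if the user approves
    auto_approved: set = field(default_factory=set)  # user's permission settings
    log: list = field(default_factory=list)

    def run(self, call: ToolCall, execute: Callable[[ToolCall], str]) -> str:
        # Every call is recorded, whether or not it needs approval.
        self.log.append(f"{call.action}: {call.reason}")
        needs_ok = (
            call.action in SENSITIVE_ACTIONS
            and call.action not in self.auto_approved
        )
        if needs_ok and not self.ask_user(call):
            return "skipped"
        return execute(call)


if __name__ == "__main__":
    gate = ApprovalGate(ask_user=lambda call: False)  # user declines everything
    print(gate.run(ToolCall("read_calendar", "find free slots"), lambda c: "done"))
    print(gate.run(ToolCall("send_message", "confirm meeting"), lambda c: "done"))
    print(gate.log)
```

Read-only actions pass straight through, while a declined sensitive action is skipped but still appears in the log.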

Key Takeaways

Here’s a summary of the highlights:

- Mistral Medium 3.5 is now the default model in both Vibe and Le Chat — a dense 128B parameter model with a 256k context window, achieving 77.6% on SWE-Bench Verified, outperforming Devstral 2 and Qwen3.5 397B A17B, and available as open weights on Hugging Face.

- Vibe coding agents now operate in the cloud — sessions can be launched from the CLI or Le Chat, execute asynchronously in isolated sandboxes, and local sessions can be seamlessly moved to the cloud without losing session history or task progress.

- Le Chat’s new Work mode enables parallel, multi-step agentic task execution — powered by Mistral Medium 3.5, it can simultaneously work across email, calendar, documents, Jira, and Slack, with all tool calls and reasoning steps visible and explicit approval required before sensitive actions.

- Reasoning effort in Mistral Medium 3.5 is adjustable per API request — the same model efficiently handles lightweight chat responses and complex long-horizon agentic workflows.
