Learnable frontend (LEAF)

LEAF [22] is designed to replace explicit pre-processing steps for raw audio data (such as the extraction of frequency-based features in the form of spectrograms) by performing similar processing steps through parametrised operations within the network. The output of this learnable frontend, which has a similar form of representation to a spectrogram, then serves as input to a backend neural network, often a convolutional neural network (CNN), and the parameters of the frontend are learnt jointly with the parameters of the backend. For this purpose, LEAF takes in a one-dimensional waveform signal with \(T\) samples and transforms it into an output of size \(M\times N\), with \(N\) filters and \(M\) time windows, in three steps. The first step, which is the focus of this work, is the convolution of the input signal with a set of \(N\) 1-D Gabor filters \(\phi_n\), followed by a squared modulus operator. The Gabor filters [28] are characterised by the bandwidth \(1/\sigma_n\) of their Gaussian kernel and the centre frequency \(\eta_n\) of their sinusoidal signal, with both centre frequency and bandwidth being learnable parameters. The convolution of the input signal with the Gabor filters thus produces an output of size \(T\times N\), which can be seen as the signal passing through \(N\) bandpass filters. The second step in the LEAF pipeline is a Gaussian lowpass filter with a learnable bandwidth and a fixed window and hop size, which reduces the resolution of the signal from \(T\times N\) to \(M\times N\). Finally, additional learnable parameters are introduced by a per-channel energy normalisation (PCEN) layer, the behaviour of which during training has been analysed before [26].
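The first step can be illustrated with a minimal NumPy sketch. It is not the LEAF implementation: the parametrisation (centre frequency in Hz, Gaussian width in samples), the 401-sample window, and the function names `gabor_filter` and `gabor_energy` are assumptions chosen for readability, and nothing here is learnable.

```python
import numpy as np

def gabor_filter(centre_hz, sigma_samples, sample_rate=16000, width=401):
    """Complex 1-D Gabor filter: Gaussian envelope times a complex sinusoid."""
    t = np.arange(width) - width // 2  # sample offsets centred on zero
    envelope = np.exp(-0.5 * (t / sigma_samples) ** 2)
    envelope /= np.sqrt(2.0 * np.pi) * sigma_samples  # unit-area Gaussian
    carrier = np.exp(2j * np.pi * centre_hz * t / sample_rate)
    return envelope * carrier

def gabor_energy(signal, centres_hz, sigmas, sample_rate=16000):
    """Convolve with each filter and take the squared modulus, giving (T, N)."""
    channels = []
    for centre, sigma in zip(centres_hz, sigmas):
        kernel = gabor_filter(centre, sigma, sample_rate)
        response = np.convolve(signal, kernel, mode="same")  # complex output
        channels.append(np.abs(response) ** 2)
    return np.stack(channels, axis=1)
```

A larger `sigma_samples` gives a longer envelope in time and hence a narrower passband, which matches the \(1/\sigma_n\) bandwidth relation described above.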

As a backend for classification, we employ EfficientNet-B0 [29], a lightweight CNN architecture with roughly 4 million parameters, which has often served as a backbone for LEAF in other studies [22,26,30]. The number of output neurons is adjusted to the number of classes in each computer audition (CA) task.

Filterbank initialisation

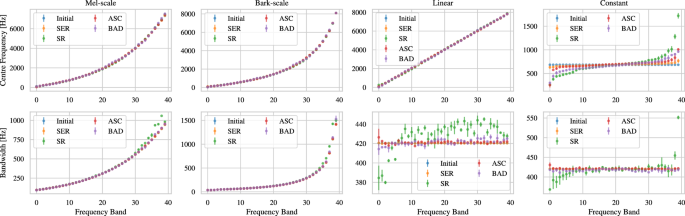

The main point of investigation in this contribution is the interplay between the Gabor filter parameters and the training for various computer audition tasks. For that purpose, we explore different initialisations of the Gabor filterbank to investigate how LEAF adjusts to different starting points or prior knowledge. Among other things, we aim to better understand whether different filterbanks are preferable across tasks and to what extent LEAF is able to converge to them. In addition to the initialisations explored in [27], we also include a deliberately suboptimal constant initialisation, which gives all channels of LEAF access to the same frequency range.

Mel-scale: The standard initialisation of LEAF’s Gabor filters uses centre frequencies and bandwidths initialised according to the Mel-scale [7]. With a long history of research, the Mel-scale is an attempt to quantify the sensitivity of human hearing in different frequency ranges. It is characterised by a higher frequency resolution in the lower frequencies and a lower density in the higher frequencies. The transformation from a given point \(m\) on the linear Mel-scale to a corresponding frequency \(\eta\) (in Hz) follows an exponential relationship [7]. The initialisation covers 40 filter bands with equidistant centre frequencies on the Mel-scale, ranging from \(65\,\textrm{Hz}\) to \(7800\,\textrm{Hz}\). Bandwidths are correspondingly initialised around each centre frequency, such that they roughly cover the range from the previous to the following centre frequency.
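The following sketch shows one way to obtain such centre frequencies and bandwidths. It assumes the widely used formula \(m = 2595\log_{10}(1 + \eta/700)\); the text only cites [7], so the exact constants used by the authors may differ. The edge handling in `neighbour_bandwidths` (mirroring the nearest spacing) and all function names are likewise illustrative assumptions.

```python
import math

def hz_to_mel(f_hz):
    # Assumed Mel formula (O'Shaughnessy/HTK variant); not confirmed by the text.
    return 2595.0 * math.log10(1.0 + f_hz / 700.0)

def mel_to_hz(mel):
    # Inverse of hz_to_mel: exponential in the Mel value.
    return 700.0 * (10.0 ** (mel / 2595.0) - 1.0)

def mel_centres(n_filters=40, f_min=65.0, f_max=7800.0):
    """Centre frequencies in Hz, equidistant on the Mel-scale."""
    m_min, m_max = hz_to_mel(f_min), hz_to_mel(f_max)
    step = (m_max - m_min) / (n_filters - 1)
    return [mel_to_hz(m_min + i * step) for i in range(n_filters)]

def neighbour_bandwidths(centres):
    """Width from the previous to the next centre; edges mirror their only neighbour."""
    widths = []
    for i, c in enumerate(centres):
        lower = centres[i - 1] if i > 0 else c - (centres[1] - c)
        upper = centres[i + 1] if i < len(centres) - 1 else c + (c - centres[-2])
        widths.append(upper - lower)
    return widths
```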

Bark-scale: The Bark-scale is a psychoacoustic scale that shares some similarities with the Mel-scale but focuses mainly on perceived loudness across the frequency spectrum. We once again derive an initialisation for the LEAF filters using equally spaced frequencies, in a similar frequency range to the Mel-scale, with bandwidths once more roughly spanning from one centre frequency to the next. Our conversion to Hertz (including error corrections) follows Traunmüller [31].
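As a rough illustration, the conversion can be sketched with the commonly quoted form of Traunmüller's approximation, \(z = 26.81\,\eta/(1960+\eta) - 0.53\), together with its corrections below 2 Bark and above 20.1 Bark. Whether the authors use exactly these constants is an assumption, and the function names and the 40-filter, 65 Hz to 7800 Hz defaults are taken over from the Mel case for illustration only.

```python
def hz_to_bark(f_hz):
    z = 26.81 * f_hz / (1960.0 + f_hz) - 0.53
    if z < 2.0:        # low-frequency correction
        z += 0.15 * (2.0 - z)
    elif z > 20.1:     # high-frequency correction
        z += 0.22 * (z - 20.1)
    return z

def bark_to_hz(z):
    # Undo the corrections first, then invert the main formula.
    if z < 2.0:
        z = (z - 0.3) / 0.85
    elif z > 20.1:
        z = (z + 0.22 * 20.1) / 1.22
    return 1960.0 * (z + 0.53) / (26.28 - z)

def bark_centres(n_filters=40, f_min=65.0, f_max=7800.0):
    """Centre frequencies in Hz, equidistant on the (corrected) Bark-scale."""
    z_min, z_max = hz_to_bark(f_min), hz_to_bark(f_max)
    step = (z_max - z_min) / (n_filters - 1)
    return [bark_to_hz(z_min + i * step) for i in range(n_filters)]
```

Both corrections are linear and leave the thresholds at 2 and 20.1 Bark unchanged, so the inverse can test the corrected value against the same thresholds.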

Linear: In contrast to the previously mentioned psychoacoustics-informed scales, we additionally define an initialisation covering the same frequency range, but with equally spaced centre frequencies sampled on a linear scale, thus omitting any prior bias from human hearing. The bandwidths are set to a constant value of 420 Hz, slightly below the median bandwidth of the Mel-initialisation, thus offering insights into whether smaller bandwidths for lower frequencies are learnt automatically.

Constant: As the final type of initialisation, we choose a constant centre frequency of 684 Hz, right at the centre of the Mel-scale, and the same constant bandwidth as in the linear case. This effectively amounts to one and the same bandpass filter across all channels. LEAF thus needs to adjust its parameters to gain access to information in different frequency ranges.
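For comparison, the linear and constant initialisations reduce to a few lines. The sketch below assumes that both use the same 40 filters as the Mel initialisation and returns plain (centre, bandwidth) pairs in Hz; how these values map onto LEAF's internal parameters is not covered, and the function names are hypothetical.

```python
def linear_init(n_filters=40, f_min=65.0, f_max=7800.0, bandwidth_hz=420.0):
    """Equally spaced centres on a linear Hz axis with one shared bandwidth."""
    step = (f_max - f_min) / (n_filters - 1)
    return [(f_min + i * step, bandwidth_hz) for i in range(n_filters)]

def constant_init(n_filters=40, centre_hz=684.0, bandwidth_hz=420.0):
    """Every channel starts as the same bandpass filter."""
    return [(centre_hz, bandwidth_hz) for _ in range(n_filters)]
```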

Datasets

Our selection of datasets encompasses a wide variety of auditory cues and target tasks, exhibiting considerable differences in how information is distributed across frequencies, which in turn might favour different frequency distributions in the filterbanks. We combine tasks covering previous related research in the form of linguistic and paralinguistic speech tasks, as well as a bird recognition task. We further extend our experiments to a more diverse acoustic scene classification task containing a large variety of audio events. The datasets are publicly available for research. The experiments do not pose ethical concerns that conflict with the conditions under which the datasets were released. All audio samples are resampled to \(16\,\textrm{kHz}\), if necessary, to match the input requirements of a standard LEAF model. All splits are reproducible, as we used and saved fixed random seeds to create splits where necessary; these are available in our repository.

Speech recognition (SR): The Speech Commands dataset [32] is a common benchmark dataset for SR, with data splits provided by torchaudio. The task is to assign clean speech recordings of around \(1\,\textrm{s}\) length to one out of 35 keywords.

Speech emotion recognition (SER): The FAU-AIBO corpus [33,34] was introduced as the first ever SER challenge and has a stronger focus on paralinguistic information in speech, giving insight into the subject’s emotions. We choose the 2-class version of the task, that is, a classification of utterances into one of two emotion classes. The dataset is split according to the Interspeech 2009 Emotion Challenge [35].

Acoustic scene classification (ASC): To investigate the behaviour of LEAF beyond speech-based tasks, we opt for Task 1 of the DCASE2020 challenge. The \(10\,\)s long audio chunks need to be classified into one out of 10 acoustic scenes and contain environmental noises, such as “natural” animal sounds, but also machine sounds, which are often engineered towards a low auditory disturbance of human hearing [36]; we use the official training/evaluation splits of the challenge data.

Bird activity detection (BAD): Finally, the BAD task contains the audio that is least adapted to human hearing, as bird vocalisations are believed to have evolved for bird-to-bird communication and evidently lie in higher frequency ranges than the majority of human communication [37], which was also the justification for including a bird-related task in [27]. Furthermore, LEAF has explicitly been shown to outperform non-learnable frontends for bird activity recognition [23]. We use the datasets from the DCASE 2018 Bird Audio Detection challenge [38], comprising data from three distinct sources. As the test set, we use the “warblrb10k” part of the data, while we perform a random 75%/25% split of the other two sources for training and validation.

Noise and bandpass filter augmentations

In order to exert further control over the frequency profile of our data in our second line of experiments, we incorporate three types of augmentation methods: one limiting the information content of the frequencies and two adding frequency-specific noise signatures to the original audio.

Bandpass filtering: We begin with bandpass filtering using 2nd-order Butterworth filters, which aim to remove the frequency content of the signal outside a certain range of frequencies. Specifically, we design 10 bandpass filters with their centres and bandwidths following the Mel-scale across the same frequency range as described for the Mel initialisation. We apply one bandpass filter at a time during the experiments, making sure that only frequency content within the selected bandpass filter remains. This aggressive removal of frequency content serves to limit the exploration landscape for LEAF; our hypothesis is that the model will adapt to the limited bandwidth by focusing on the available frequencies.
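A minimal sketch of such a filter with SciPy is given below. The band edges in the usage note are placeholders rather than the actual Mel-derived bands, and the helper name `bandpass` is hypothetical. Note that `scipy.signal.butter` with `N=2` and `btype="bandpass"` yields a filter of overall order 4; whether "2nd order" in the text refers to `N` or to the overall order is not stated, so this choice is an assumption.

```python
import numpy as np
from scipy.signal import butter, sosfilt

def bandpass(signal, low_hz, high_hz, sample_rate=16000, order=2):
    """Apply a Butterworth bandpass between low_hz and high_hz."""
    # Second-order sections are numerically more robust than (b, a) coefficients.
    sos = butter(order, [low_hz, high_hz], btype="bandpass",
                 fs=sample_rate, output="sos")
    return sosfilt(sos, signal)
```

For instance, `bandpass(x, 800.0, 1200.0)` passes a 1 kHz tone almost unchanged while strongly attenuating a 4 kHz tone.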

Low-passed noise: We add low-passed noise to the data, where we start from uniform white noise and low-pass it with 2nd-order Butterworth filters whose cutoff frequencies are the centres of the 10-part Mel-scale defined above (this is inspired by pink noise).

High-passed noise: Finally, we add high-passed noise to the signal, where we start from unfiltered broadband white noise and high-pass it in a similar fashion (this is inspired by blue noise). Note that we opted for these alternative definitions instead of actual pink/blue noise because we wanted zero noise (rather than attenuated noise) in the higher/lower frequencies.
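The two noise augmentations can be sketched as follows. The noise level is not specified in the text, so the `gain` parameter is a purely hypothetical placeholder, as are the function names and the fixed seed; uniform white noise is used for both variants for simplicity.

```python
import numpy as np
from scipy.signal import butter, sosfilt

def filtered_noise(n_samples, cutoff_hz, kind, sample_rate=16000, order=2, seed=0):
    """Uniform white noise passed through a Butterworth filter.

    kind is "lowpass" or "highpass".
    """
    rng = np.random.default_rng(seed)
    white = rng.uniform(-1.0, 1.0, n_samples)
    sos = butter(order, cutoff_hz, btype=kind, fs=sample_rate, output="sos")
    return sosfilt(sos, white)

def add_noise(signal, noise, gain=0.1):
    """Mix noise into the signal; gain is an assumed, untuned value."""
    return signal + gain * noise
```

Calling `filtered_noise(len(x), 1000.0, "lowpass")` concentrates the noise energy below roughly 1 kHz, while `"highpass"` with the same cutoff concentrates it above.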

Experiments

All experiments are performed on all four CA tasks with the same model architecture, consisting of a LEAF frontend and EfficientNet-B0, deviating only in the number of neurons in the classification layer and in the filterbank initialisation. We opt for a unified training setting across datasets and initialisations. We train all models for 50 epochs with a balanced cross-entropy loss, the Adam optimiser with a learning rate of \(3\cdot 10^{-4}\), and a batch size of 32. During training, the model is evaluated on the validation data after every epoch. The final evaluations on the test data are then performed for the model states with the best validation performance in each training run. The code is based on the autrainer package [39] and is publicly available. We note that, of the 48 experiments, only 5 models showed an improvement in development loss in the last three epochs of training, with the majority of experiments reaching their lowest development loss long before the final epoch. Overall, we thus assume that most models have reached a reasonable level of convergence, allowing us to further analyse their trained model states. Training was performed on an NVIDIA GeForce RTX 3090 with per-epoch training times of approximately 6:37 min (SR), 1:22 min (SER), 8:29 min (ASC), and 7:33 min (BAD).
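The text does not define "balanced" cross-entropy further. One common reading, assumed here, is a cross-entropy whose per-class weights are inversely proportional to class frequency. The sketch below illustrates this reading in plain Python; it is not taken from the autrainer package, and both function names are hypothetical.

```python
import math
from collections import Counter

def balanced_class_weights(labels):
    """Weight each class by n_samples / (n_classes * class_count)."""
    counts = Counter(labels)
    n_samples, n_classes = len(labels), len(counts)
    return {cls: n_samples / (n_classes * cnt) for cls, cnt in counts.items()}

def weighted_cross_entropy(probs, labels, weights):
    """Weighted mean of -log p(true class); probs[i][c] is the predicted probability."""
    total = sum(weights[y] * -math.log(p[y]) for p, y in zip(probs, labels))
    return total / sum(weights[y] for y in labels)
```

With three samples of class 0 and one of class 1, the rare class receives a weight of 2.0 and the frequent class about 0.67, so both classes contribute equally to the loss in aggregate.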