Image by Author

# Introduction

Running a top-performing AI model locally no longer requires a high-end workstation or an expensive cloud setup. With lightweight tools and smaller open-source models, you can now turn even an older laptop into a practical local AI environment for coding, experimentation, and agent-style workflows.

In this tutorial, you’ll learn how to run Qwen3.5 locally using Ollama and connect it to OpenCode to create a simple local agentic setup. The goal is to keep everything simple, accessible, and beginner-friendly, so you can get a working local AI assistant without dealing with a complicated stack.

# Installing Ollama

The first step is to install Ollama, which makes it easy to run large language models locally on your machine.

If you are using Windows, you can either download Ollama directly from the official Download Ollama on Windows page and install it like any other application, or run the following command in PowerShell:

irm | iex

The Ollama download page also includes installation instructions for Linux and macOS, so you can follow the steps there if you are using a different operating system.

Once the installation is complete, you’ll be ready to start Ollama and pull your first local model.

# Starting Ollama

In most cases, Ollama starts automatically after installation, especially when you launch it for the first time. This means you may not need to do anything else before running a model locally.
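
To find out whether the server is already up, a small script can probe it. The sketch below assumes Ollama is listening on its default local address, `http://localhost:11434`; the helper name `is_ollama_running` is hypothetical and used only for illustration.

```python
import http.client
import urllib.error
import urllib.request


def is_ollama_running(base_url: str = "http://localhost:11434", timeout: float = 2.0) -> bool:
    """Return True if an HTTP server answers with status 200 at base_url."""
    # Bypass any system proxy settings, since the target is a local address.
    opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
    try:
        with opener.open(base_url, timeout=timeout) as response:
            return response.status == 200
    except (urllib.error.URLError, http.client.HTTPException, OSError):
        # Connection refused, timeout, or an HTTP error reply: treat as not running.
        return False
```

Calling `is_ollama_running()` with no arguments checks the assumed default address. If it returns `False`, the server needs to be started manually.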

If the Ollama server is not already running, you can start it manually with the following command:

ollama serve

# Running Qwen3.5 Locally

Once Ollama is running, the next step is to download and launch Qwen3.5 on your machine.

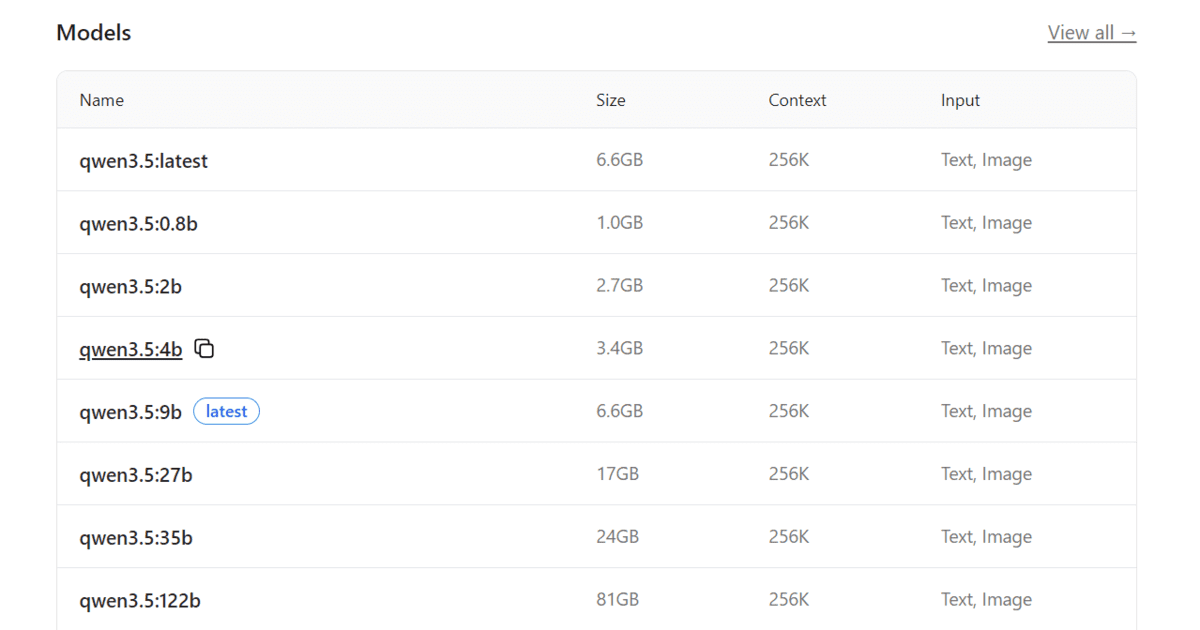

If you visit the Qwen3.5 model page in Ollama, you will see several model sizes, ranging from larger variants to smaller, more lightweight options.

For this tutorial, we will use the 4B version because it offers a good balance between performance and hardware requirements. It is a practical choice for older laptops and typically requires around 3.5 GB of random access memory (RAM).
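
As a rough sanity check on that figure, the memory taken by the weights alone can be estimated from the parameter count and the number of bits stored per weight. This is a back-of-the-envelope sketch, not a measurement: the value of 4 bits per weight is an assumption about the quantization level, and `weights_gb` is a hypothetical helper.

```python
def weights_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in gigabytes (1 GB = 1e9 bytes)."""
    # params_billion * 1e9 weights, each taking bits_per_weight / 8 bytes,
    # divided by 1e9 bytes per GB; the two 1e9 factors cancel.
    return params_billion * bits_per_weight / 8


# Assumed: a 4B-parameter model quantized to roughly 4 bits per weight.
print(weights_gb(4, 4))  # 2.0
```

Under that assumption, the weights account for about 2 GB. The rest of the roughly 3.5 GB would plausibly go to the context cache and runtime overhead, which vary with settings.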

To download and run the model from your terminal, use the following command:

ollama run qwen3.5:4b

The first time you run this command, Ollama will download the model files to your machine. Depending on your internet speed, this may take a few minutes.

After the download finishes, Ollama may take a moment to load the model and prepare everything needed to run it locally. Once ready, you will see an interactive terminal chat interface where you can begin prompting the model directly.

At this point, you can already use Qwen3.5 in the terminal for simple local conversations, quick tests, and lightweight coding help before connecting it to OpenCode for a more agentic workflow.
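
The same model can also be prompted from a script rather than the interactive chat. The following is a minimal sketch that assumes the Ollama server is reachable at its default address, `http://localhost:11434`, and uses its `/api/generate` endpoint with streaming turned off. The helper names `build_generate_request` and `ask` are hypothetical.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434"  # assumed default local address


def build_generate_request(prompt: str, model: str = "qwen3.5:4b") -> urllib.request.Request:
    """Build a POST request for Ollama's /api/generate endpoint."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        f"{OLLAMA_URL}/api/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str, model: str = "qwen3.5:4b", timeout: float = 300.0) -> str:
    """Send the prompt to the local server and return the generated text."""
    request = build_generate_request(prompt, model)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read())["response"]
```

For example, `ask("Write a one-line Python function that reverses a string.")` would return the model's reply as a string, provided the server is running and the model has already been downloaded.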

# Installing OpenCode

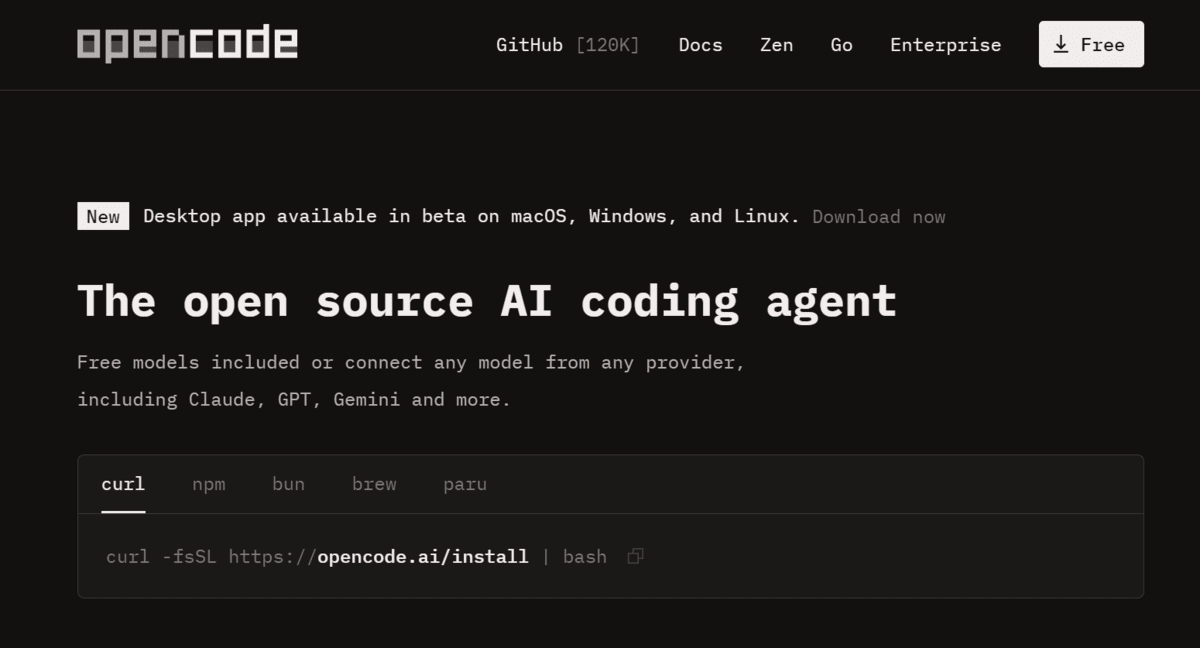

After setting up Ollama and Qwen3.5, the next step is to install OpenCode, a local coding agent that can work with models running on your own machine.

You can visit the OpenCode website to explore the available installation options and learn more about how it works. For this tutorial, we will use the quick install method because it is the simplest way to get started.

Run the next command in your terminal:

curl -fsSL | bash

This installer handles the setup process for you and installs the required dependencies, including Node.js when needed, so you do not have to configure everything manually.

# Launching OpenCode with Qwen3.5

Now that both Ollama and OpenCode are installed, you can connect OpenCode to your local Qwen3.5 model and start using it as a lightweight coding agent.

If you look at the Qwen3.5 page in Ollama, you’ll notice that Ollama now supports simple integrations with external AI tools and coding agents. This makes it much easier to use local models in a more practical workflow instead of only chatting with them in the terminal.

To launch OpenCode with the Qwen3.5 4B model, run the following command:

ollama launch opencode --model qwen3.5:4b

This command tells Ollama to start OpenCode using your locally available Qwen3.5 model. After it runs, you’ll be taken into the OpenCode interface with Qwen3.5 4B already connected and ready to use.

# Building a Simple Python Project with Qwen3.5

Once OpenCode is running with Qwen3.5, you can start giving it simple prompts to build software directly from your terminal.

For this tutorial, we asked it to create a small Python game project from scratch using the following prompt:

Create a new Python project and build a modern Guess the Word game with clean code, simple gameplay, score tracking, and an easy-to-use terminal interface.

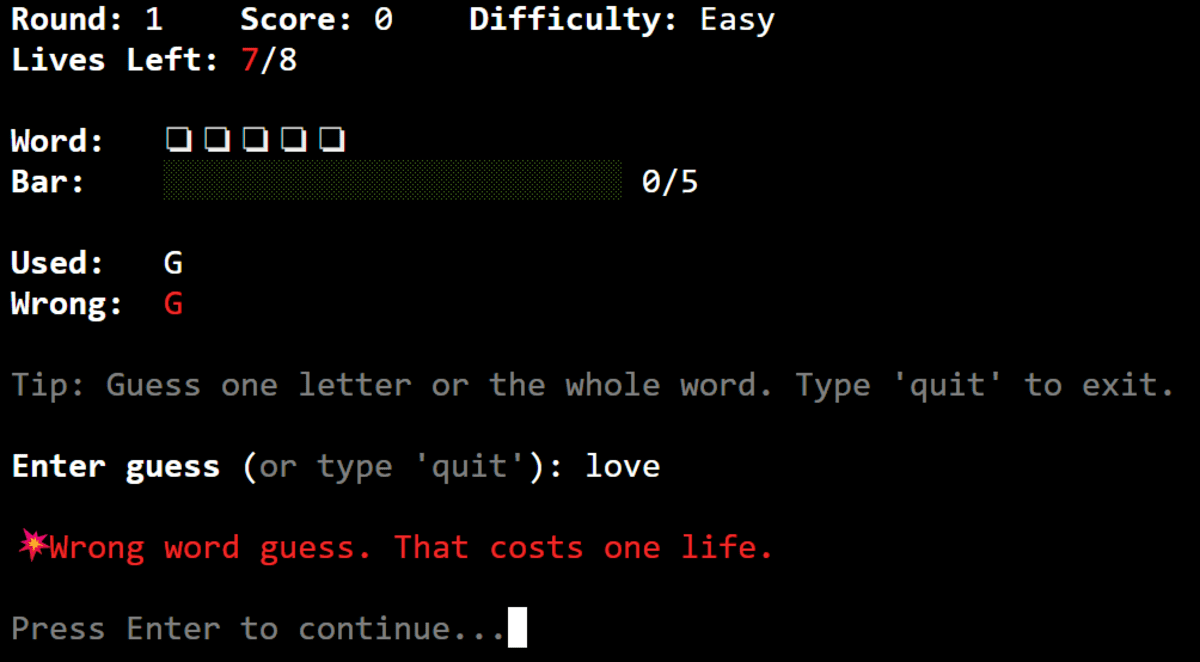

After a few minutes, OpenCode generated the project structure, wrote the code, and handled the setup needed to get the game running.

We also asked it to install any required dependencies and test the project, which made the workflow feel much closer to working with a lightweight local coding agent than a simple chatbot.

The final result was a fully working Python game that ran smoothly in the terminal. The gameplay was simple, the code structure was clean, and the score tracking worked as expected.

For example, when you enter a correct character, the game immediately reveals the matching letter in the hidden word, showing that the logic works properly right out of the box.
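
The reveal behavior described above can be sketched in a few lines. This is an illustrative sketch, not the code OpenCode generated: the function names `apply_guess` and `reveal` and the example word are hypothetical.

```python
def apply_guess(secret: str, guessed: set[str], letter: str) -> bool:
    """Record a guessed letter and report whether it occurs in the secret word."""
    letter = letter.lower()
    guessed.add(letter)
    return letter in secret.lower()


def reveal(secret: str, guessed: set[str]) -> str:
    """Show guessed letters in place and hide the rest as underscores."""
    return " ".join(ch if ch.lower() in guessed else "_" for ch in secret)


guessed: set[str] = set()
apply_guess("python", guessed, "p")  # correct guess
print(reveal("python", guessed))     # p _ _ _ _ _
```

Keeping the guessed letters in a set means a repeated guess changes nothing, and the displayed word is always recomputed from the secret rather than patched in place.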

# Final Thoughts

I was genuinely impressed by how easy it is to get a local agentic setup running on an older laptop with Ollama, Qwen3.5, and OpenCode. For a lightweight, low-cost setup, it works surprisingly well and makes local AI feel far more practical than many people expect.

That said, it is not all smooth sailing.

Because this setup relies on a smaller, quantized model, the results are not always strong enough for more complex coding tasks. In my experience, it can handle simple projects, basic scripting, research help, and general-purpose tasks fairly well, but it starts to struggle when the software engineering work becomes more demanding or multi-step.

One issue I ran into repeatedly was that the model would sometimes stop halfway through a task. When that happened, I had to manually type "continue" to get it to keep going and finish the job. That is manageable for experimentation, but it does make the workflow less reliable when you want consistent output for larger coding tasks.

Abid Ali Awan (@1abidaliawan) is a certified data scientist professional who loves building machine learning models. Currently, he is focusing on content creation and writing technical blogs on machine learning and data science technologies. Abid holds a Master’s degree in technology management and a bachelor’s degree in telecommunication engineering. His vision is to build an AI product using a graph neural network for students struggling with mental illness.