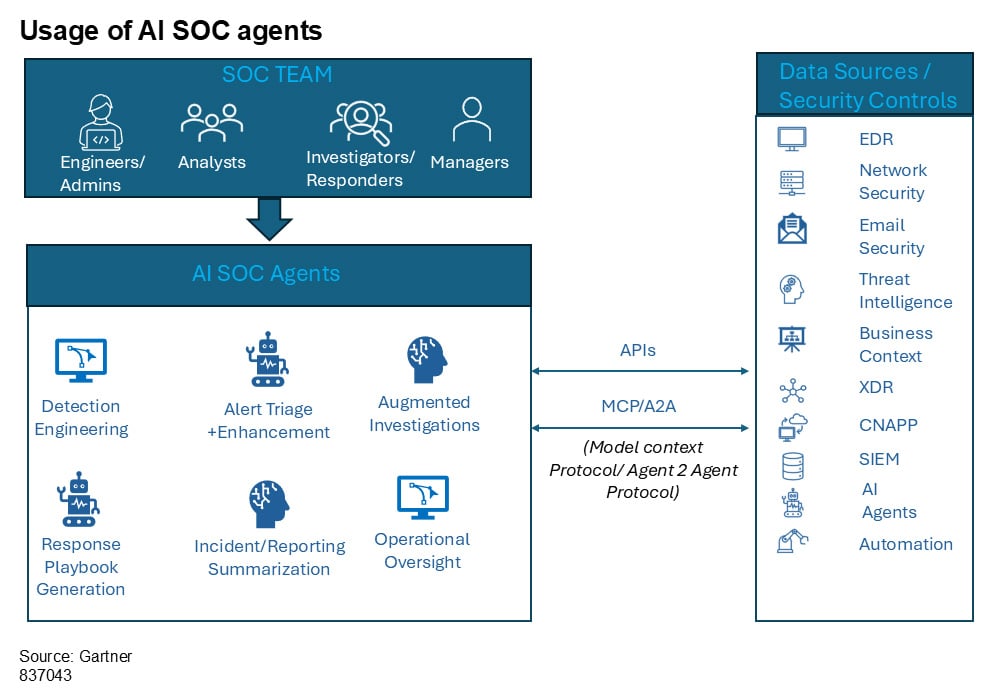

The market for agentic SOC tools, or AI SOC agents as Gartner calls them, is moving fast. Dozens of startups have entered the space in the past 18 months, each promising to transform how security operations teams handle alert triage, investigation, and response.

The pitch is usually some version of the same thing: deploy an AI agent, reduce your alert backlog, and free your analysts to focus on higher-value work.

Some of that promise is real. But Gartner’s latest research on the category suggests most organizations evaluating these tools are asking the wrong questions, or not asking enough of them.

In a recent Gartner report titled Validate the Promises of AI SOC Agents With These Key Questions, analysts Craig Lawson and Andrew Davies lay out a structured evaluation framework for cybersecurity leaders considering AI SOC agent deployments.

Their central finding is sobering: while 70% of large SOCs are expected to pilot AI agents for Tier 1 and Tier 2 operations by 2028, only 15% will achieve measurable improvements without structured evaluation. You can download a complimentary copy of the full report here.

That gap between adoption and outcomes is large. It suggests the problem facing most security teams is less about whether to adopt AI in the SOC and more about how to separate genuine operational improvement from marketing noise.

Here are the key areas Gartner recommends evaluating, and why each one is critical to success.

1. Does it actually reduce the work your team does today?

This sounds obvious, but Gartner frames it carefully. The first question is not “what can this tool do?” but rather “which SOC functions does your organization handle today that are repetitive time sinks of limited value in improving threat detection, investigation, and response?”

A tool might demonstrate impressive capabilities in a demo environment while addressing workflows your team has already solved through other means. The evaluation should start with your operational bottlenecks, not the vendor’s feature list.

Gartner also recommends asking which specific tasks are best suited to augmentation and whether the solution is purpose-built for specific SOC roles. A platform designed around alert triage and investigation will approach the problem differently than one built for creating if/then workflow rules.

Understanding that scope upfront prevents misaligned expectations later.

This Gartner report provides cybersecurity leaders with key questions and a pragmatic approach to evaluating AI SOC solutions, ensuring they actually improve Threat Detection, Investigation, and Response (TDIR) program efficiency and operational outcomes.

2. How do you measure outcomes beyond “alerts processed”?

Volume metrics can be misleading. Processing 10,000 alerts a month means very little if the quality of investigation degrades or if true positives slip through.

Gartner emphasizes that evaluation should center on improvements in TDIR metrics and outcomes: mean time to detect, mean time to respond, and false positive reduction. But the report goes further, noting that qualitative outcomes matter too.

Is the tool improving analyst satisfaction? Is it leading to better execution, not just faster execution?

The report also stresses that mean time to contain (MTTC) should be the overall end goal, since containment is where risk actually gets reduced. Any vendor conversation that stops at triage speed without addressing downstream investigation quality and containment timelines is leaving out the part that matters most.

Ask for real-world benchmarks from environments similar to yours. And ask whether those benchmarks were collected during a proof of concept or in sustained production use, because those are often very different numbers.
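
To make those metrics concrete, here is a minimal Python sketch of how a team might compute them from its own incident records before and after a pilot. The `Incident` fields, the function names, and the choice to measure response and containment from the moment of detection are all assumptions made for illustration; the Gartner report does not prescribe formulas, and organizations define these intervals differently.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean


@dataclass
class Incident:
    """One confirmed incident. All field names are hypothetical."""
    occurred_at: datetime   # when the malicious activity began
    detected_at: datetime   # when the first alert fired
    responded_at: datetime  # when investigation or response began
    contained_at: datetime  # when the threat was contained


def mean_minutes(deltas: list[timedelta]) -> float:
    """Average a list of durations and return the result in minutes."""
    return mean(d.total_seconds() for d in deltas) / 60


def tdir_metrics(incidents: list[Incident]) -> dict[str, float]:
    """MTTD is measured from occurrence; MTTR and MTTC from detection (an assumption)."""
    return {
        "mttd": mean_minutes([i.detected_at - i.occurred_at for i in incidents]),
        "mttr": mean_minutes([i.responded_at - i.detected_at for i in incidents]),
        "mttc": mean_minutes([i.contained_at - i.detected_at for i in incidents]),
    }


def false_positive_rate(false_positives: int, total_alerts: int) -> float:
    """Share of closed alerts that turned out to be benign."""
    return false_positives / total_alerts if total_alerts else 0.0


# Made-up sample data, purely for illustration.
t0 = datetime(2025, 1, 1, 9, 0)
sample = [
    Incident(t0, t0 + timedelta(minutes=10), t0 + timedelta(minutes=25), t0 + timedelta(minutes=70)),
    Incident(t0, t0 + timedelta(minutes=30), t0 + timedelta(minutes=35), t0 + timedelta(minutes=120)),
]
metrics = tdir_metrics(sample)
print(metrics)  # {'mttd': 20.0, 'mttr': 10.0, 'mttc': 75.0}
```

Running the same calculation separately on proof-of-concept data and on sustained production data is one simple way to see how far the two sets of numbers diverge.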

3. Is the vendor going to be around in two years?

This category is early-stage. Gartner’s report describes a market with large numbers of startups using different approaches and design principles. That diversity is healthy for innovation, but it introduces vendor risk that cybersecurity leaders need to evaluate honestly.

The report recommends asking when a vendor’s solution first became generally available, what their current customer base looks like, and what their funding and financial outlook is. Gartner also suggests accepting that acquisitions in this space are highly likely and treating that reality as a third-party vendor management risk rather than a disqualifying factor.

Pricing models deserve scrutiny too. Some AI SOC agents price based on alert volume, others on data volume or token usage.

The cost of processing high volumes of alerts through an LLM-backed system can scale in unexpected ways, and Gartner specifically cautions buyers to understand how costs behave under load.
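
A rough back-of-the-envelope model can show why behavior under load matters. The Python sketch below compares a per-alert price with a token-based price at a baseline month and during a surge. Every number in it (prices, alert counts, tokens per alert) is a made-up placeholder, and the assumption that investigations consume more tokens during a surge is illustrative only; substitute figures from an actual vendor quote.

```python
def per_alert_cost(alerts: int, price_per_alert: float) -> float:
    """Monthly cost when the vendor charges a flat fee per alert."""
    return alerts * price_per_alert


def token_cost(alerts: int, tokens_per_alert: int, price_per_million_tokens: float) -> float:
    """Monthly cost when the vendor charges for LLM tokens consumed."""
    return alerts * tokens_per_alert / 1_000_000 * price_per_million_tokens


# Hypothetical baseline month: 10,000 alerts, 20,000 tokens per investigation.
baseline_flat = per_alert_cost(10_000, 0.05)
baseline_tokens = token_cost(10_000, 20_000, 3.0)

# Hypothetical surge month: 5x the alerts, and each investigation pulls in
# twice as much context, so tokens per alert doubles as well.
surge_flat = per_alert_cost(50_000, 0.05)
surge_tokens = token_cost(50_000, 40_000, 3.0)

print(f"per-alert pricing: {baseline_flat:.0f} -> {surge_flat:.0f}")
print(f"token pricing:     {baseline_tokens:.0f} -> {surge_tokens:.0f}")
print(f"token cost multiplier under surge: {surge_tokens / baseline_tokens:.1f}x")
```

Under these placeholder inputs, alert volume grows fivefold while the token-based bill grows tenfold, which is the kind of nonlinear behavior worth asking a vendor to model explicitly.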

4. Does it make your analysts better, or just busier in a different way?

One of the more nuanced sections of Gartner’s framework focuses on analyst augmentation and upskilling. The question is not just whether the AI handles triage faster. Speed has never been in doubt with AI. It is whether the technology enhances human expertise over time.

Gartner recommends asking what training and enablement resources accompany the tool, whether the AI can create learning opportunities for analysts (such as suggesting threat hunts or recommending best practices), and whether it assists with detection engineering work.

This gets at a tension in the AI SOC market that does not get discussed enough. If the AI handles all the investigative legwork, do junior analysts ever develop the skills to become senior analysts?

The best implementations thread this needle by presenting their reasoning in a way that teaches while it triages, giving analysts a transparent investigation to review rather than a binary verdict to accept.

Prophet Security, for example, structures its investigations to show every query, data source, and analytical step the AI took to reach a conclusion. That gives junior analysts a model for how experienced investigators approach an alert, rather than just a yes-or-no answer to rubber-stamp.

5. What are the boundaries of AI autonomy?

Gartner draws a useful distinction between “human in the loop” and “human on the loop” models. The former requires human approval for each action. The latter gives the AI broader latitude to act, with human oversight at the strategic level rather than the tactical level.

Neither model is inherently correct. The right answer depends on your organization’s risk appetite, regulatory requirements, and the maturity of the AI system in question.

But Gartner’s framework pushes buyers to ask specific questions: What actions can the agent perform autonomously, and which require human approval? How do you implement guardrails for high-impact decisions like account disablement or network isolation? Can autonomy levels be customized based on task type or risk level?

The report also highlights the importance of fail-safe mechanisms. When an AI agent encounters ambiguity or conflicting signals, it should default to escalation rather than action. That design philosophy matters more than any individual feature because it reflects how the system behaves at the edges, which is where real damage can occur.
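
One way to picture such a guardrail is as a small policy function that runs before any action executes. The Python sketch below is a hypothetical illustration, not any vendor’s implementation: the action names, the two action sets, the `confidence` field, and the 0.9 threshold are all assumptions chosen for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    EXECUTE = "execute"            # agent may act on its own
    REQUIRE_APPROVAL = "approval"  # a human must approve first
    ESCALATE = "escalate"          # hand the case to an analyst


# Hypothetical policy: low-impact actions the agent may take without approval.
AUTONOMOUS_ACTIONS = {"enrich_alert", "close_benign_alert"}
# Hypothetical high-impact actions that always need a human decision.
HIGH_IMPACT_ACTIONS = {"disable_account", "isolate_host"}


@dataclass
class ProposedAction:
    name: str
    confidence: float          # agent's self-reported confidence, 0.0 to 1.0
    conflicting_signals: bool  # True if the evidence points in different directions


def decide(action: ProposedAction, min_confidence: float = 0.9) -> Decision:
    # Fail safe first: ambiguity or conflicting evidence always escalates.
    if action.conflicting_signals or action.confidence < min_confidence:
        return Decision.ESCALATE
    if action.name in HIGH_IMPACT_ACTIONS:
        return Decision.REQUIRE_APPROVAL
    if action.name in AUTONOMOUS_ACTIONS:
        return Decision.EXECUTE
    # Anything the policy does not recognize is treated as ambiguous.
    return Decision.ESCALATE


print(decide(ProposedAction("close_benign_alert", 0.97, False)))  # Decision.EXECUTE
print(decide(ProposedAction("isolate_host", 0.97, False)))        # Decision.REQUIRE_APPROVAL
print(decide(ProposedAction("close_benign_alert", 0.97, True)))   # Decision.ESCALATE
```

The ordering is the point of the sketch: the escalation check comes before any allow-list lookup, so an unfamiliar or uncertain situation can never fall through to an automatic action.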

6. Will it actually work with your existing stack?

Integration claims are easy to make and hard to validate. Gartner’s framework asks buyers to evaluate native integration depth across SIEM, EDR, SOAR, and identity platforms rather than accepting a logo wall at face value.

The report raises a question that often gets overlooked: does the solution require data centralization, or can it operate in any environment as a plug-and-play solution?

For organizations with complex or hybrid architectures, the difference between a tool that needs all your data in one place and one that can query across multiple security data sources is operationally significant.

7. Can you actually see what it is doing?

Transparency may be the most important evaluation criterion in the entire framework. Gartner asks: How does the solution provide explainability for decisions and actions taken by the AI agent? Do you offer human-readable audit trails for every automated action? How do you handle sensitive data, and what controls prevent model misuse or data leakage?

For regulated industries, these aren’t nice-to-haves. They’re requirements. But even organizations without strict compliance mandates should care about explainability because it directly affects whether analysts trust the tool enough to adopt it.

An AI agent that produces a verdict without showing its work puts the analyst in an uncomfortable position. They either accept the conclusion on faith, which is risky, or they redo the investigation themselves, which defeats the purpose.

This is why some vendors in the space have adopted what amounts to a “glass box” approach: documenting every query run against data sources, the specific data retrieved, and the logic used to reach a determination.

Prophet Security calls this their investigation timeline, where analysts can trace each conclusion back to the underlying evidence rather than trusting a confidence score.

The report emphasizes that buyers should look for this kind of clear explainability, safe handling of sensitive data, and mechanisms for human feedback that actually influence the system’s future behavior.
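
As a rough illustration of what a human-readable audit trail could look like as data, the Python sketch below records each investigative step with its data source, query, evidence, and reasoning, then renders the trail as text or JSON. The class names, fields, and sample values are all hypothetical; this is not Prophet Security’s schema or any other vendor’s format, only one plausible shape for the information buyers might ask to see.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class InvestigationStep:
    data_source: str  # e.g. "EDR" or "identity provider"
    query: str        # the exact query that was run
    evidence: str     # summary of the data that came back
    reasoning: str    # why this evidence matters to the verdict


@dataclass
class Investigation:
    alert_id: str
    steps: list[InvestigationStep] = field(default_factory=list)
    verdict: str = "undetermined"

    def record(self, data_source: str, query: str, evidence: str, reasoning: str) -> None:
        """Append one step so that every conclusion stays traceable."""
        self.steps.append(InvestigationStep(data_source, query, evidence, reasoning))

    def render(self) -> str:
        """Produce a plain-text trail an analyst can read top to bottom."""
        lines = [f"Alert {self.alert_id}: verdict = {self.verdict}"]
        for n, step in enumerate(self.steps, start=1):
            lines.append(f"{n}. [{step.data_source}] {step.query}")
            lines.append(f"   evidence:  {step.evidence}")
            lines.append(f"   reasoning: {step.reasoning}")
        return "\n".join(lines)

    def to_json(self) -> str:
        """Machine-readable form of the same trail, for retention or review."""
        return json.dumps(asdict(self), indent=2)


# Made-up example data.
inv = Investigation(alert_id="A-1001")
inv.record("identity provider", "logins for user=jdoe, last 24h",
           "3 logins, all from a known device", "no sign of credential misuse")
inv.verdict = "benign"
print(inv.render())
```

A record like this lets an analyst check the verdict against the evidence step by step instead of relying on a single score.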

The bigger picture

Gartner’s framework is valuable precisely because it resists the impulse to declare winners in a category that is still taking shape. The report’s cautions section warns against overreliance on marketing claims, notes that full autonomy is not viable today, and flags hidden costs around pricing models and integration complexity.

For security leaders evaluating AI SOC agents, the takeaway is simple: the technology has genuine potential to reduce investigation burden, improve response times, and extend coverage to alert volumes that human teams simply cannot process manually. But realizing that potential requires the kind of structured, outcomes-driven evaluation that most buying processes skip.

Prophet Security built its agentic AI SOC platform around many of the same principles Gartner outlines in this report: transparent investigations that show every step of the AI’s reasoning, integration across SIEM, EDR, identity, and cloud tools without requiring data centralization, and a human-on-the-loop model where analysts review completed investigations rather than raw alerts.

The platform is designed to augment existing teams, not replace them, completing investigations in minutes while giving analysts the evidence and context they need to make confident decisions.

For organizations looking to apply Gartner’s evaluation framework to their own buying process, the full report, Validate the Promises of AI SOC Agents With These Key Questions, is available for download.

Get the report here to access all seven evaluation categories, along with detailed guidance on what to look for in vendor responses.

Sponsored and written by Prophet Security.