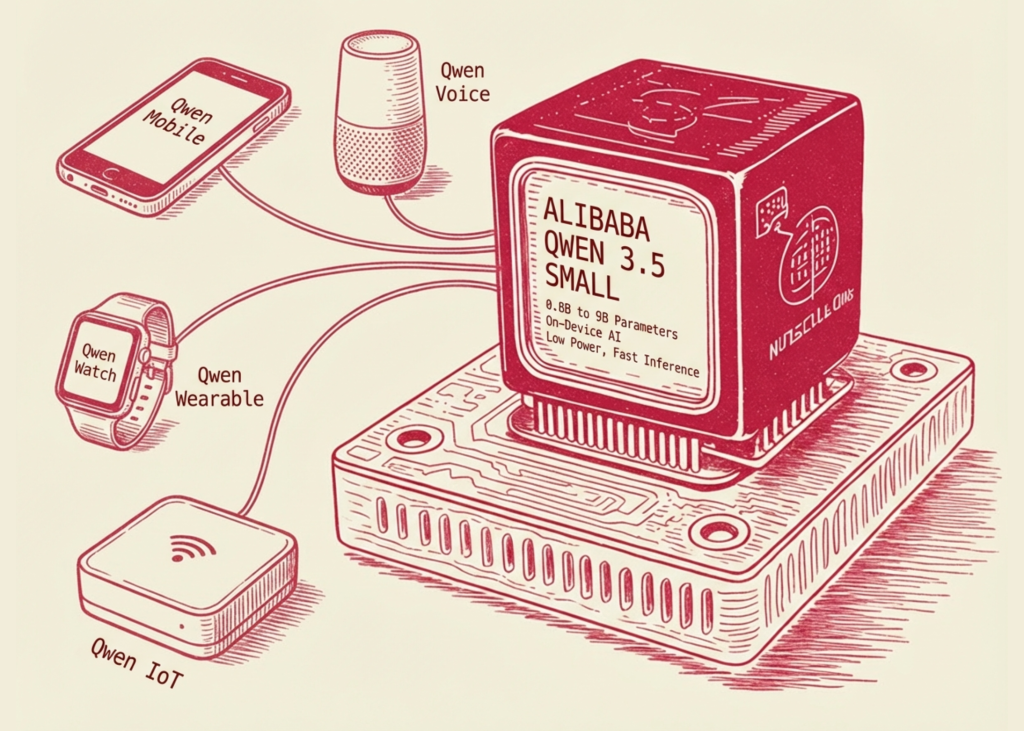

Alibaba’s Qwen team has released the Qwen3.5 Small Model Series, a set of Large Language Models (LLMs) ranging from 0.8B to 9B parameters. While the industry trend has historically favored increasing parameter counts to achieve ‘frontier’ performance, this release focuses on ‘More Intelligence, Less Compute.’ These models represent a shift toward deploying capable AI on consumer hardware and edge devices without the traditional trade-offs in reasoning or multimodality.

The series is currently available on Hugging Face and ModelScope, including both Instruct and Base versions.

The Model Hierarchy: Optimization by Scale

The Qwen3.5 small series is categorized into four distinct tiers, each optimized for specific hardware constraints and latency requirements:

- Qwen3.5-0.8B and Qwen3.5-2B: These models are designed for high-throughput, low-latency applications on edge devices. By optimizing the dense token training process, they provide a reduced VRAM footprint, making them compatible with mobile chips and IoT hardware.

- Qwen3.5-4B: This model serves as a multimodal base for lightweight agents. It bridges the gap between pure text models and complex visual-language models (VLMs), allowing for agentic workflows that require visual understanding, such as UI navigation or document analysis, while remaining small enough for local deployment.

- Qwen3.5-9B: The flagship of the small series, the 9B variant focuses on reasoning and logic. It is specifically tuned to close the performance gap with significantly larger models (such as 30B+ parameter variants) through advanced training techniques.
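To make the VRAM differences between the tiers concrete, here is a back-of-the-envelope sketch that multiplies each nominal parameter count by the bytes needed per parameter. The precisions listed (fp16, int8, int4) and the helper name `weight_memory_gib` are illustrative assumptions, not something the release specifies, and the figures cover weights only: activations, KV cache, and runtime overhead come on top.

```python
# Rough weight-memory estimate: parameters x bytes per parameter.
# Ignores activations, KV cache, and runtime overhead, so real usage is higher.

# Assumed storage precisions; the article does not say which ones ship.
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}

# Nominal parameter counts taken from the model names in the article.
MODEL_PARAMS = {
    "Qwen3.5-0.8B": 0.8e9,
    "Qwen3.5-2B": 2e9,
    "Qwen3.5-4B": 4e9,
    "Qwen3.5-9B": 9e9,
}


def weight_memory_gib(num_params: float, precision: str) -> float:
    """Return the approximate size of the weights in GiB."""
    return num_params * BYTES_PER_PARAM[precision] / 2**30


if __name__ == "__main__":
    for name, params in MODEL_PARAMS.items():
        sizes = ", ".join(
            f"{p}: {weight_memory_gib(params, p):.1f} GiB" for p in BYTES_PER_PARAM
        )
        print(f"{name:>13}  {sizes}")
```

Under these assumptions, the 9B weights alone take roughly 17 GiB at fp16 and about a quarter of that at 4-bit, which is why quantization usually decides whether a model of this size fits on a consumer GPU.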

Native Multimodality vs. Visual Adapters

One of the significant technical shifts in Qwen3.5-4B and above is the move toward native multimodal capabilities. In earlier iterations of small models, multimodality was often achieved through ‘adapters’ or ‘bridges’ that connected a pre-trained vision encoder (like CLIP) to a language model.

In contrast, Qwen3.5 incorporates multimodality directly into the architecture. This native approach allows the model to process visual and textual tokens within the same latent space from the early stages of training. This results in better spatial reasoning, improved OCR accuracy, and more cohesive visually grounded responses compared to adapter-based systems.
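For readers unfamiliar with the adapter pattern being contrasted here, the following toy sketch shows its general shape: features from a separate vision encoder are pushed through a small learned projection so they match the language model's embedding width, then placed in the same sequence as the text tokens. Every dimension, weight, and name below is made up for illustration, and nothing here describes Qwen3.5's actual architecture.

```python
# Toy illustration of the adapter pattern: a frozen vision encoder emits
# features in its own space, and a small learned projection maps them into
# the language model's embedding space. All sizes and values are made up.


def project(features, weight):
    """Multiply each feature vector (length d_in) by weight (d_in x d_out)."""
    return [
        [sum(f * w for f, w in zip(vec, col)) for col in zip(*weight)]
        for vec in features
    ]


# Two image-patch features from a hypothetical vision encoder (d_in = 4).
patch_features = [[1.0, 0.0, 2.0, 0.0], [0.0, 1.0, 0.0, 1.0]]

# Hypothetical adapter weights mapping d_in = 4 to the LM width d_out = 3.
adapter_weight = [
    [0.5, 0.0, 0.0],
    [0.0, 0.5, 0.0],
    [0.0, 0.0, 0.5],
    [0.1, 0.1, 0.1],
]

# Three text-token embeddings already in the LM space (d_out = 3).
text_embeddings = [[0.2, 0.1, 0.0], [0.0, 0.3, 0.1], [0.4, 0.0, 0.2]]

visual_tokens = project(patch_features, adapter_weight)

# The language model then consumes one combined sequence of 5 tokens.
sequence = visual_tokens + text_embeddings
```

In an adapter setup, the projection is typically the main component trained to align the two pre-trained models. The native approach described above instead trains on visual and textual tokens together from the early stages, so there is no separate alignment step bolted on afterward.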

Scaled RL: Enhancing Reasoning in Compact Models

The performance of the Qwen3.5-9B is largely attributed to the implementation of Scaled Reinforcement Learning (RL). Unlike standard Supervised Fine-Tuning (SFT), which teaches a model to mimic high-quality text, Scaled RL uses reward signals to optimize for correct reasoning paths.

The benefits of Scaled RL in a 9B model include:

- Improved Instruction Following: The model is more likely to adhere to complex, multi-step system prompts.

- Reduced Hallucinations: By reinforcing logical consistency during training, the model exhibits higher reliability in fact-retrieval and mathematical reasoning.

- Efficiency in Inference: The 9B parameter count allows for faster token generation (higher tokens-per-second) than 70B models, while maintaining competitive logic scores on benchmarks like MMLU and GSM8K.
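The distinction between imitating text and optimizing against a reward can be seen in a deliberately tiny example. The sketch below is not Qwen's training recipe, which the release does not detail. It is a generic policy-gradient update on a softmax policy over three hypothetical candidate reasoning paths, where only one earns a reward. The function names, reward values, learning rate, and step count are all assumptions chosen for illustration.

```python
import math


def softmax(logits):
    """Convert logits to probabilities in a numerically stable way."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def policy_gradient_step(logits, rewards, lr=1.0):
    """One exact policy-gradient ascent step on the expected reward.

    For a softmax policy, d E[R] / d logit_i = p_i * (r_i - E[R]),
    so paths with above-average reward gain probability mass.
    """
    probs = softmax(logits)
    expected = sum(p * r for p, r in zip(probs, rewards))
    return [
        logit + lr * p * (r - expected)
        for logit, p, r in zip(logits, probs, rewards)
    ]


# Three hypothetical candidate reasoning paths; only the last is correct.
rewards = [0.0, 0.0, 1.0]
logits = [0.0, 0.0, 0.0]  # start from a uniform policy

for _ in range(200):
    logits = policy_gradient_step(logits, rewards)

final_probs = softmax(logits)
print([round(p, 3) for p in final_probs])
```

No reference answer is ever shown to the policy. It shifts probability toward the rewarded path purely because that path scores higher than average, which is the basic mechanism that reward-driven training scales up.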

Summary Table: Qwen3.5 Small Series Specifications

| Model Size | Primary Use Case | Key Technical Feature |
| --- | --- | --- |
| 0.8B / 2B | Edge Devices / IoT | Low VRAM, high-speed inference |
| 4B | Lightweight Agents | Native multimodal integration |
| 9B | Reasoning & Logic | Scaled RL for frontier-closing performance |

By focusing on architectural efficiency and advanced training paradigms like Scaled RL and native multimodality, the Qwen3.5 series provides a viable path for developers to build sophisticated AI applications without the overhead of massive, cloud-dependent models.

Key Takeaways

- More Intelligence, Less Compute: The series (0.8B to 9B parameters) prioritizes architectural efficiency over raw parameter scale, enabling high-performance AI on consumer-grade hardware and edge devices.

- Native Multimodal Integration (4B Model): Unlike models that use ‘bolted-on’ vision towers, the 4B variant features a native architecture where text and visual data are processed in a unified latent space, significantly improving spatial reasoning and OCR accuracy.

- Frontier-Level Reasoning via Scaled RL: The 9B model leverages Scaled Reinforcement Learning to optimize for logical reasoning paths rather than just token prediction, effectively closing the performance gap with models 5x to 10x its size.

- Optimized for Edge and IoT: The 0.8B and 2B models are developed for ultra-low latency and minimal VRAM footprints, making them ideal for local-first applications, mobile deployment, and privacy-sensitive environments.
