Surveys are a cornerstone of social-science research. Over the past two decades, online recruitment platforms, such as Amazon Mechanical Turk, Prolific, CloudResearch’s Prime Panels and Cint’s Lucid, have become essential tools for helping researchers to reach large numbers of survey participants quickly and cheaply.

There have long been concerns, however, about inauthentic participation[1]. Some survey takers rush through the task simply to make money. Because they are usually paid a fixed amount based on the estimated time taken to complete the survey (typically US$6–12 per hour), the faster they complete the task, the more money they can make.

Studies suggest that between 30% and 90% of responses to social-science surveys can be inauthentic or fraudulent[2,3]. The problem is exacerbated in studies that target specialized populations or marginalized communities, because the intended participants are harder to reach and are often recruited online, which raises the risk of fraud and of interference by automated programs known as bots[4,5]. These percentages are much higher than the share of fraudulent responses that most studies can tolerate if they are to produce statistically valid results: even 3–7% of polluted data can distort results and render interpretations inaccurate[6]. And the problem is getting worse.


A parallel industry has emerged, offering scripts, bots and tutorials that (legitimately) make it easy to partially or fully automate form filling (see, for example, go.nature.com/4q8kftd). The use of artificial intelligence to craft responses is on the rise, too. For instance, answers mediated by large language models (LLMs) accounted for up to 45% of submissions in one 2025 study[7]. The advent of AI agents that can autonomously interact with websites is set to escalate the problem, because such agents make producing authentic-looking survey responses trivially easy, even for people without coding experience.

Researchers and survey providers have long developed tools to prevent, deter or detect inauthentic survey responses. CAPTCHA[8], for example, tests whether a user is human by requiring them to identify distorted text, sounds or images. Such methods might confuse unsophisticated bots (and inattentive humans), but not AI agents.

A few detection measures can distinguish agent-generated responses from genuine ones by exploiting the way LLMs rely on training data to produce responses, and their inability to reason contextually[9]. For example, LLMs might label an image of a distorted grid or colour-contrast pattern as an optical illusion even after the illusion-inducing elements have been digitally removed, relying on learnt associations rather than perception[7,10]. Humans, by contrast, respond to what they actually see, which creates a detectable difference between human and AI interpretations. However, these differences are likely to fade as AI advances, rendering such tests unreliable in the near future.

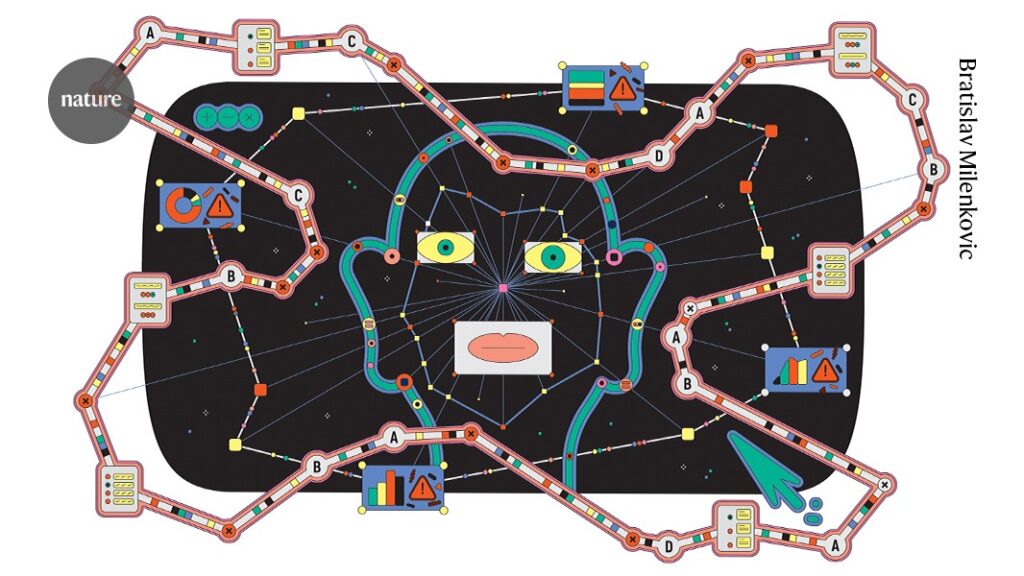

AI-agent detection has been described as a continuous game of cat and mouse, in which “the mouse never sleeps”[11]. Here, we lay out four steps to minimize the risk of survey pollution by AI agents. Using a combination of these detection strategies will probably be necessary to enable researchers to continue to separate authentic responses from those of AI bots (see ‘Outwitting AI agents’).

Look for response patterns

One approach is to assign probabilistic scores to survey submissions by comparing each response with known patterns in human and AI-generated answers[12]. LLMs tend to produce answers that have lower variability. For example, when asked to describe their political views by expressing their level of agreement with a series of statements, humans tend to use the extremes of the scale more often than bots do. When sufficient text is provided, open-ended responses can also reveal linguistic patterns[13,14].

Detection tools can exploit such differences. By comparing individuals’ responses with sample replies to the same questions answered by real humans and by AI, tools can flag responses in which patterns closely match the latter. The potential of such an ‘AI or human’ filter is buttressed by recent findings that LLMs continue to struggle to accurately simulate human psychology and behaviour[15].

Although promising, this method has one important limitation: because it is probabilistic rather than deterministic, it cannot reliably identify individual responses as bot-generated, so it is extremely difficult to flag a single survey entry with certainty.

Monitor paradata

Paradata refers to the information that describes how survey responses were generated, such as the number of keystrokes a respondent used, the use of copy–paste functionality or the time spent answering a given question. It is relatively simple to embed basic keystroke tracking into a survey (although appropriate ethical considerations, such as consent and justified use, must be taken into account).


Tracking paradata can help to identify potentially inauthentic responses by highlighting inconsistencies. For instance, if a 100-word open-text response took 5 seconds to enter, it is likely that the response was at least low effort and possibly AI-generated. Furthermore, if that response appeared in the survey window all at once (instead of gradually, stroke by stroke), this provides evidence that it was generated outside the survey environment, for example in another browser window[16]. Some survey companies have developed their own tools to flag open-text responses that are suspected to be inauthentic in such ways.

However, this approach is not always appropriate, because some survey tasks might require access to external information. Another drawback is that it might not work as well for survey questions that do not require text input, although suspicious click and mouse-movement patterns can still help to identify low-quality data. Importantly, newer LLMs and some AI browser agents are already capable of creating realistic paradata[6,17].
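The consistency checks described in this section can be sketched in a few lines of Python. This is an illustration under stated assumptions, not any survey company’s tool: the `Paradata` record, its field names and both thresholds are hypothetical, and a real deployment would need consent, validated cut-offs and exemptions for tasks that legitimately require pasted or externally sourced text.

```python
from dataclasses import dataclass

# Assumed thresholds for illustration; they are not taken from the literature.
MAX_PLAUSIBLE_WPM = 120      # sustained typing speeds above this are rare
MIN_KEYSTROKE_RATIO = 0.8    # keystrokes recorded per character of final text


@dataclass
class Paradata:
    text: str                   # the open-text answer as submitted
    seconds_on_question: float  # time between question display and submission
    keystrokes: int             # key presses recorded in the answer field
    paste_events: int           # paste actions recorded in the answer field


def paradata_flags(p: Paradata) -> list[str]:
    """Return the names of the inconsistencies found in one open-text response."""
    flags = []
    words = len(p.text.split())
    chars = len(p.text)
    if words:
        minutes = p.seconds_on_question / 60
        if minutes <= 0 or words / minutes > MAX_PLAUSIBLE_WPM:
            flags.append("implausibly_fast")
    if p.paste_events > 0:
        flags.append("pasted")
    if chars and p.keystrokes / chars < MIN_KEYSTROKE_RATIO:
        flags.append("appeared_without_typing")
    return flags


# The example from the text: a 100-word answer entered in 5 seconds.
suspicious = Paradata(" ".join(["word"] * 100), 5.0, keystrokes=2, paste_events=1)
typed = Paradata("I mostly agree, because local services have improved a lot.",
                 30.0, keystrokes=70, paste_events=0)

suspicious_flags = paradata_flags(suspicious)
typed_flags = paradata_flags(typed)
```

A 100-word answer in 5 seconds implies 1,200 words per minute, so it trips the speed check as well as the paste and keystroke checks, whereas the slowly typed answer raises no flags. Because some agents can already produce realistic paradata, flags of this kind are best treated as one signal among several.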

Find vetted survey populations

Researchers can rely on recruitment platforms that draw participants from census-based, probability-sampled pools. For example, panels in the Netherlands and France recruit households using official population registers maintained by national statistics agencies. Although this does not prevent participants from using AI to complete surveys, it ensures that responses come from real individuals, and often only one per household.

Social-science research relies on collecting online survey data for experiments. Credit: Milky Way/Getty

Collaborating with such panels can improve data integrity. However, these panels are usually more expensive to use than typical online platforms, because enrolment involves strict verification of identities and/or census-based selection, and the participant pool is typically vetted. Furthermore, data collection happens only a few times per year, often combining several surveys, which requires careful timing and limits the number of questions that can be included in any single study.

For survey platforms, there are several potential pathways forward as they try to adapt to AI. Platforms could consider capping the number of submissions allowed per day from a user; combined with identity verification, this could prove to be an effective deterrent against inauthentic submissions. Platforms could also implement a reputation or sanctions system to incentivize user authenticity.
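A daily cap of this kind is simple to express in code. The sketch below is a hypothetical in-memory version in Python: the class name, the default limit of five submissions and the assumption that each user ID has already passed identity verification are all illustrative, and a real platform would keep the counts in persistent storage.

```python
from collections import defaultdict
from datetime import date


class DailySubmissionCap:
    """Counts submissions per verified user per calendar day and enforces a cap."""

    def __init__(self, max_per_day: int = 5):
        self.max_per_day = max_per_day
        self._counts = defaultdict(int)  # (user_id, day) -> submissions so far

    def try_submit(self, verified_user_id: str, day: date) -> bool:
        """Record a submission and return True, or return False if the cap is reached."""
        key = (verified_user_id, day)
        if self._counts[key] >= self.max_per_day:
            return False
        self._counts[key] += 1
        return True


cap = DailySubmissionCap(max_per_day=2)
monday = date(2025, 1, 6)
tuesday = date(2025, 1, 7)
results = [cap.try_submit("user-a", monday) for _ in range(3)]
```

Here the third submission on the same day is refused, while the same user can submit again the next day. The cap deters inauthentic submissions only if one person cannot hold many accounts, which is why the text pairs it with identity verification.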