Image by Author

# Introduction

Every data science tutorial makes outlier detection look simple. Remove all values more than three standard deviations from the mean, and that's all there is to it. But once you start working with a real dataset where the distribution is skewed and a stakeholder asks, “Why did you remove that data point?”, you suddenly realize you don't have a good answer.

So we ran an experiment. We tested five of the most commonly used outlier detection methods on a real dataset (6,497 Portuguese wines) to find out: do these methods produce consistent results?

They didn't. What we learned from the disagreement turned out to be more valuable than anything we could have picked up from a textbook.

Image by Author

We built this analysis as an interactive Strata notebook, a format you can use for your own experiments through the Data Project feature on StrataScratch. You can view and run the full code here.

# Setting Up

Our data comes from the Wine Quality Dataset, which is publicly available through the UCI Machine Learning Repository. It contains physicochemical measurements for 6,497 Portuguese “Vinho Verde” wines (1,599 red, 4,898 white), along with quality scores from expert tasters.

We chose it for several reasons. It's real production data, not something generated artificially. The distributions are skewed (6 of the 11 features have skewness > 1), so the data don't meet textbook assumptions. And the quality scores let us check whether the detected “outliers” show up more often among wines with unusual scores.

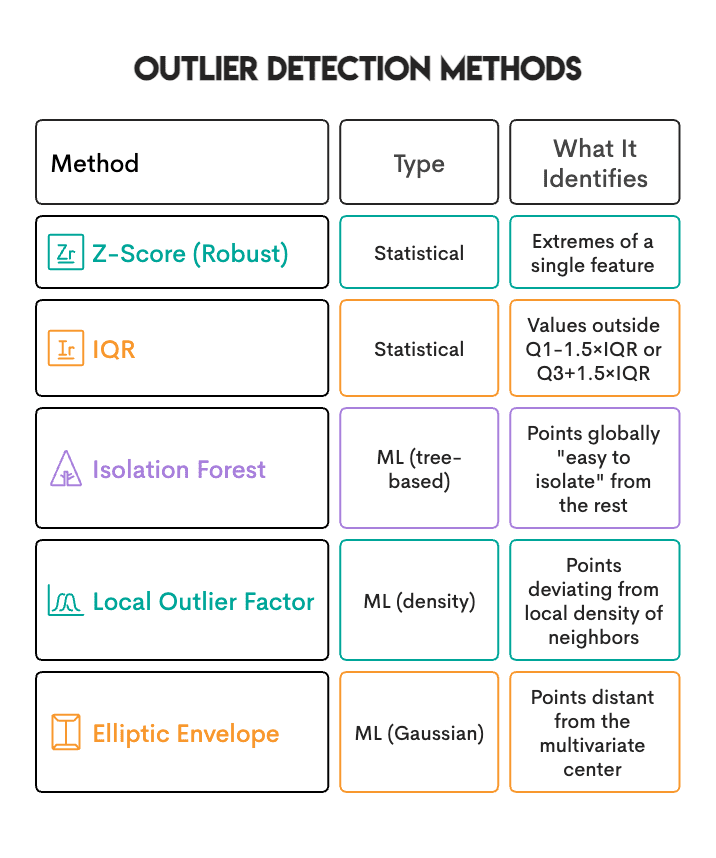

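Below are the five methods we tested:

- Z-Score (standard and robust variants)
- IQR
- Isolation Forest
- Local Outlier Factor (LOF)
- Elliptic Envelope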

# Discovering the First Surprise: Inflated Results From Multiple Testing

Before we could compare methods, we hit a wall. With 11 features, the naive approach (flagging a sample if it has an extreme value in at least one feature) produced heavily inflated results.

IQR flagged about 23% of wines as outliers. Z-Score flagged about 26%.

When nearly 1 in 4 wines gets flagged as an outlier, something is off. Real datasets don't have 25% outliers. The problem was that we were testing 11 features independently, and every extra test adds another chance of a false alarm.

The math is simple. If each feature has a 5% probability of producing a “random” extreme value, then with 11 independent features:

$$P(\text{at least one extreme}) = 1 - (0.95)^{11} \approx 43\%$$

In plain terms: even if every feature is perfectly normal, you'd expect nearly half of your samples to have at least one extreme value somewhere, purely by chance.
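A few lines of Python reproduce the same arithmetic. This sketch follows the text's assumptions: the features are independent, and each one has exactly a 5% chance of an extreme value. `prob_at_least_k` is a hypothetical helper name. It uses the binomial distribution, so it can also show what happens when we require two extreme features instead of one.

```python
from math import comb

def prob_at_least_k(p: float, m: int, k: int) -> float:
    """P(at least k of m independent features are extreme), each with probability p."""
    # Add up the binomial probabilities of 0..k-1 extreme features, then take the complement.
    below_k = sum(comb(m, j) * p**j * (1 - p) ** (m - j) for j in range(k))
    return 1 - below_k

print(f"{prob_at_least_k(0.05, 11, 1):.3f}")  # 0.431 (at least one extreme feature)
print(f"{prob_at_least_k(0.05, 11, 2):.3f}")  # 0.102 (at least two extreme features)
```

Under these assumptions, requiring two extreme features cuts the chance-driven flag rate from about 43% to about 10%. For normally distributed features, a |z| > 3.5 cutoff implies a per-feature rate far below 5%, so the reduction in practice is larger.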

To fix this, we changed the requirement: flag a sample only when at least 2 features are extreme at the same time.


Changing `min_features` from 1 to 2 changed the definition from “any feature of the sample is extreme” to “the sample is extreme across more than one feature.”

Here's the fix in code:

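```python
import numpy as np

# Count extreme features per sample (z_scores has shape (n_samples, n_features))
outlier_counts = (np.abs(z_scores) > 3.5).sum(axis=1)

# Flag a sample only when at least 2 of its features are extreme
outliers = outlier_counts >= 2
```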
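Wrapping the same rule in a function makes the `min_features` switch explicit. The name `flag_multivariate_extremes` and the toy z-score matrix are illustrative assumptions. In the notebook, `z_scores` would hold the z-scores of the 11 wine features.

```python
import numpy as np

def flag_multivariate_extremes(z_scores: np.ndarray, threshold: float = 3.5,
                               min_features: int = 2) -> np.ndarray:
    """Boolean mask of samples with at least `min_features` extreme features.

    z_scores: array of shape (n_samples, n_features).
    """
    extreme = np.abs(z_scores) > threshold   # per-cell extreme flags
    outlier_counts = extreme.sum(axis=1)     # number of extreme features per sample
    return outlier_counts >= min_features

# Toy z-scores: row 0 is extreme in one feature, row 1 in two, row 2 in none.
z = np.array([[4.0, 0.1, 0.2],
              [4.0, -3.8, 0.0],
              [0.5, 0.3, -0.2]])
print(flag_multivariate_extremes(z, min_features=1))  # [ True  True False]
print(flag_multivariate_extremes(z, min_features=2))  # [False  True False]
```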

# Comparing 5 Methods on 1 Dataset

Once the multiple-testing fix was in place, we counted how many samples each method flagged.

Here's how we set up the ML methods:

```python
from sklearn.ensemble import IsolationForest
from sklearn.neighbors import LocalOutlierFactor

iforest = IsolationForest(contamination=0.05, random_state=42)
lof = LocalOutlierFactor(n_neighbors=20, contamination=0.05)
```

Why do the ML methods all flag exactly 5%? Because of the `contamination` parameter, which forces them to flag that share of samples. It's a quota, not a threshold. In other words, Isolation Forest will flag 5% whether your data contains 1% true outliers or 20%.
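A quick synthetic check makes the quota visible. This sketch fits the same `IsolationForest` configuration to a clean dataset and to one with 20% planted outliers. The data and the seed are arbitrary assumptions, not the wine data. Both runs should come back with roughly 5% flagged.

```python
import numpy as np
from sklearn.ensemble import IsolationForest

rng = np.random.default_rng(0)

# Dataset 1: 1,000 ordinary points, no planted outliers.
clean = rng.normal(0, 1, size=(1000, 2))
# Dataset 2: 800 ordinary points plus 200 (20%) points scattered far from the bulk.
contaminated = np.vstack([rng.normal(0, 1, size=(800, 2)),
                          rng.uniform(-10, 10, size=(200, 2))])

shares = {}
for name, X in [("clean", clean), ("20% contaminated", contaminated)]:
    labels = IsolationForest(contamination=0.05, random_state=42).fit_predict(X)
    shares[name] = np.mean(labels == -1)  # fit_predict returns -1 for outliers, 1 for inliers
    print(f"{name}: {shares[name]:.1%} flagged")
```

If the flagged share stays pinned near the contamination value no matter how many outliers you plant, the parameter is setting the count, not the data.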

# Discovering the Real Difference: They Identify Different Things

Here's what surprised us most. When we measured how much the methods agreed, the pairwise Jaccard similarity ranged from only 0.10 to 0.30. That's poor agreement.
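Jaccard similarity is the size of the intersection of two flagged sets divided by the size of their union. A score of 1.0 means identical flags, and 0.0 means no overlap. Here's a minimal sketch, assuming each method's output has already been converted to a boolean mask. The masks below are toy values, not results from the wine data.

```python
import numpy as np
from itertools import combinations

def jaccard(a: np.ndarray, b: np.ndarray) -> float:
    """Jaccard similarity of two boolean outlier masks: intersection size / union size."""
    union = np.logical_or(a, b).sum()
    if union == 0:
        return 1.0  # neither method flagged anything; treat as full agreement
    return np.logical_and(a, b).sum() / union

def pairwise_jaccard(masks: dict) -> dict:
    """Jaccard similarity for every pair of named masks."""
    return {(m1, m2): jaccard(masks[m1], masks[m2])
            for m1, m2 in combinations(masks, 2)}

# Toy masks for six samples (True = flagged as an outlier).
masks = {
    "zscore":  np.array([True, True, False, False, False, True]),
    "iforest": np.array([True, False, True, False, False, False]),
    "lof":     np.array([False, False, True, True, False, False]),
}
for pair, score in pairwise_jaccard(masks).items():
    print(pair, round(float(score), 2))
```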

Out of 6,497 wines:

- Only 32 samples (0.5%) were flagged by all 4 main methods

- 143 samples (2.2%) were flagged by 3 or more methods

- The remaining “outliers” were flagged by only 1 or 2 methods

You might think this is a bug, but it's the whole point. Each method has its own definition of “unusual.”

If a wine's residual sugar level is much higher than average, it's a univariate outlier, and Z-Score or IQR will catch it. But if it's surrounded by other wines with similar sugar levels, LOF won't flag it, because it looks normal within its local neighborhood.
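The residual-sugar scenario is easy to reproduce on synthetic data. In this sketch, a tight group of high-“sugar” samples is flagged by a univariate robust z-score but mostly passes LOF, because each of those samples has close neighbors that look just like it. The data are toy values. The 3.5 cutoff matches the z-score threshold used earlier, and `n_neighbors=10` is an assumption chosen to be smaller than the group.

```python
import numpy as np
from sklearn.neighbors import LocalOutlierFactor

rng = np.random.default_rng(1)

# Toy 2-feature data: column 0 plays "residual sugar", column 1 another feature.
bulk = rng.normal(0, 1, size=(300, 2))
# A tight group of 30 high-sugar samples: extreme in column 0, typical in column 1.
sweet = np.column_stack([rng.uniform(5.8, 6.2, 30), rng.uniform(-0.2, 0.2, 30)])
X = np.vstack([bulk, sweet])
is_sweet = np.arange(len(X)) >= len(bulk)

# Univariate view: robust z-score of the sugar column alone.
sugar = X[:, 0]
median = np.median(sugar)
mad = np.median(np.abs(sugar - median))
z_flags = np.abs(0.6745 * (sugar - median) / mad) > 3.5

# Local view: LOF compares each sample's density with that of its 10 nearest neighbors.
lof_flags = LocalOutlierFactor(n_neighbors=10).fit_predict(X) == -1

print("sweet group flagged by robust z:", z_flags[is_sweet].mean())
print("sweet group flagged by LOF:     ", lof_flags[is_sweet].mean())
```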

So the real question isn't “which method is best?” It's “what kind of unusual am I looking for?”

# Checking Sanity: Do Outliers Correlate With Wine Quality?

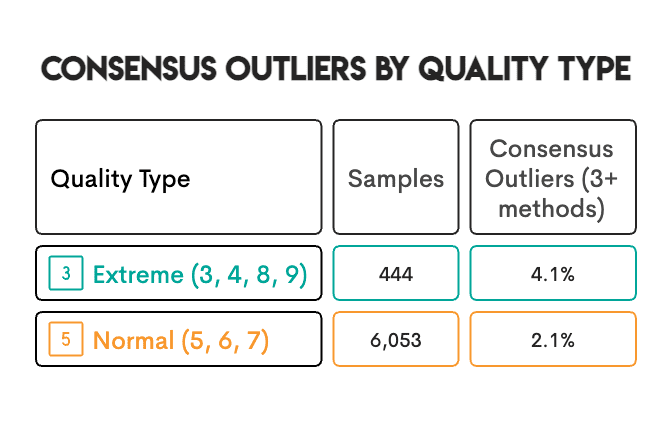

The dataset includes expert quality scores (3-9). We wanted to know: do detected outliers appear more often among wines with extreme quality scores?

Wines with extreme quality scores were twice as likely to be consensus outliers. That's a useful sanity check. In some cases, the relationship is clear: a wine with far too much volatile acidity tastes vinegary, gets rated poorly, and gets flagged as an outlier. The chemistry drives both outcomes. But we can't assume this explains every case. There may be patterns we aren't seeing, or confounding factors we haven't accounted for.
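A check like this takes a few lines of pandas. The sketch assumes a `consensus` column holding the number of methods that flagged each wine. It treats scores of 4 or below and 8 or above as “extreme.” Both that cutoff and the ten rows of data are illustrative, not taken from the notebook.

```python
import pandas as pd

# Toy frame: `quality` is the tasters' score, `consensus` the number of methods
# that flagged the wine (illustrative values, not the real results).
df = pd.DataFrame({
    "quality":   [3, 4, 5, 5, 6, 6, 6, 7, 8, 9],
    "consensus": [3, 1, 0, 0, 3, 0, 0, 0, 4, 3],
})

# Assumed cutoff: scores at either end of the 3-9 scale count as "extreme".
extreme_quality = (df["quality"] <= 4) | (df["quality"] >= 8)
is_outlier = df["consensus"] >= 3

# Share of consensus outliers within each group (False = typical score, True = extreme).
rates = is_outlier.groupby(extreme_quality).mean()
print(rates)
```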

# Making Three Decisions That Shaped Our Results

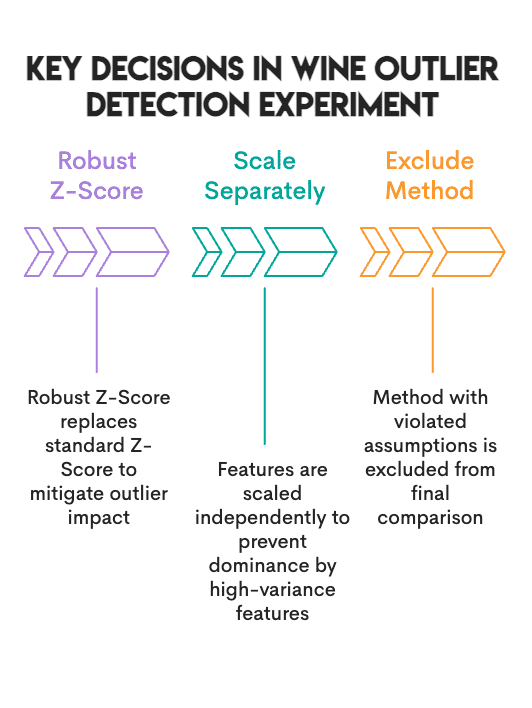

// 1. Using Robust Z-Score Rather Than Standard Z-Score

A Standard Z-Score uses the mean and standard deviation of the data, and both are pulled around by the very outliers we are trying to find. A Robust Z-Score uses the median and the Median Absolute Deviation (MAD) instead, and these barely move unless a large fraction of the data is extreme.

As a result, the Standard Z-Score flagged 0.8% of the data as outliers, while the Robust Z-Score flagged 3.5%.

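```python
import numpy as np

# Robust Z-Score using median and MAD (data has shape (n_samples, n_features))
median = np.median(data, axis=0)
mad = np.median(np.abs(data - median), axis=0)
# 0.6745 rescales MAD so scores match standard z-scores for normal data;
# a feature with MAD = 0 would produce inf/nan here
robust_z = 0.6745 * (data - median) / mad
```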
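A ten-value toy example shows the masking effect behind these numbers. The data are made up for illustration, and the 3.5 cutoff is the one used earlier in this article.

```python
import numpy as np

# Nine ordinary values and one gross outlier.
x = np.array([1, 2, 3, 4, 5, 6, 7, 8, 9, 100], dtype=float)

# Standard z-score: the outlier inflates both the mean and the standard deviation.
standard_z = (x - x.mean()) / x.std()

# Robust z-score: the median and MAD are barely moved by the outlier.
median = np.median(x)
mad = np.median(np.abs(x - median))
robust_z = 0.6745 * (x - median) / mad

print(round(standard_z[-1], 2))  # 2.99 -> below a 3.5 cutoff, so the outlier is missed
print(round(robust_z[-1], 2))    # 25.5 -> far above the cutoff
```

The outlier inflates the standard deviation enough to hide itself. This is why the standard version flags fewer points on contaminated data.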

// 2. Scaling Red And White Wines Separately

Red and white wines have different baseline chemical levels. When both are combined into a single dataset, a red wine whose chemistry is perfectly average for a red can be flagged as an outlier simply because its sulfur content differs from the pooled red-and-white average. We therefore scaled each wine type separately, using that type's median and Interquartile Range (IQR), and then combined the two.

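```python
# Scale each wine type separately (assumes df has a 'type' column and
# features lists the numeric feature columns)
import pandas as pd
from sklearn.preprocessing import RobustScaler

scaled_parts = []
for wine_type in ['red', 'white']:
    subset = df[df['type'] == wine_type][features]
    scaled = RobustScaler().fit_transform(subset)
    # Keep the original row index so the parts can be recombined in order
    scaled_parts.append(pd.DataFrame(scaled, columns=features, index=subset.index))

scaled_df = pd.concat(scaled_parts).loc[df.index]
```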
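If you prefer to stay in pandas, you can write the same per-type median/IQR scaling with `groupby().transform()`, which keeps rows in their original order. This sketches an equivalent approach rather than the notebook's code. RobustScaler's defaults also center on the median and scale by the 25th-75th percentile range, so the results should match except in edge cases such as a zero IQR. The helper name and the toy sulfur values are invented for illustration.

```python
import pandas as pd

def robust_scale_by_group(df: pd.DataFrame, group_col: str, features: list) -> pd.DataFrame:
    """Center each feature on its group median and divide by its group IQR."""
    def scale(s):
        iqr = s.quantile(0.75) - s.quantile(0.25)
        return (s - s.median()) / iqr
    # transform returns rows aligned with the original index
    return df.groupby(group_col)[features].transform(scale)

# Toy data: the two types have very different baselines for the same feature.
toy = pd.DataFrame({
    "type": ["red"] * 5 + ["white"] * 5,
    "total_sulfur": [30, 40, 45, 50, 60, 120, 130, 140, 150, 160],
})
print(robust_scale_by_group(toy, "type", ["total_sulfur"]))
```

After scaling, a white wine at 140 and a red wine at 45 both land at 0, because each sits at its own type's median.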

// 3. Knowing When To Exclude A Method

Elliptic Envelope assumes your data follow a multivariate normal distribution. Ours didn't. Six of the 11 features had skewness above 1, and one reached 5.4. We kept Elliptic Envelope in the comparison for completeness but left it out of the consensus vote.
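Checking skewness before choosing a method takes one call in pandas. Here's a minimal sketch, assuming a DataFrame of numeric features. `skewed_features` is a hypothetical helper, and the threshold of 1 mirrors the cutoff used above. The synthetic columns stand in for the wine features.

```python
import numpy as np
import pandas as pd

def skewed_features(df: pd.DataFrame, threshold: float = 1.0) -> pd.Series:
    """Return the features whose absolute sample skewness exceeds `threshold`."""
    skew = df.skew()  # pandas' bias-adjusted sample skewness, one value per column
    return skew[skew.abs() > threshold].sort_values(ascending=False)

rng = np.random.default_rng(0)
toy = pd.DataFrame({
    "symmetric": rng.normal(0, 1, 2000),
    "right_skewed": rng.exponential(1.0, 2000),  # theoretical skewness = 2
})
print(skewed_features(toy))
```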

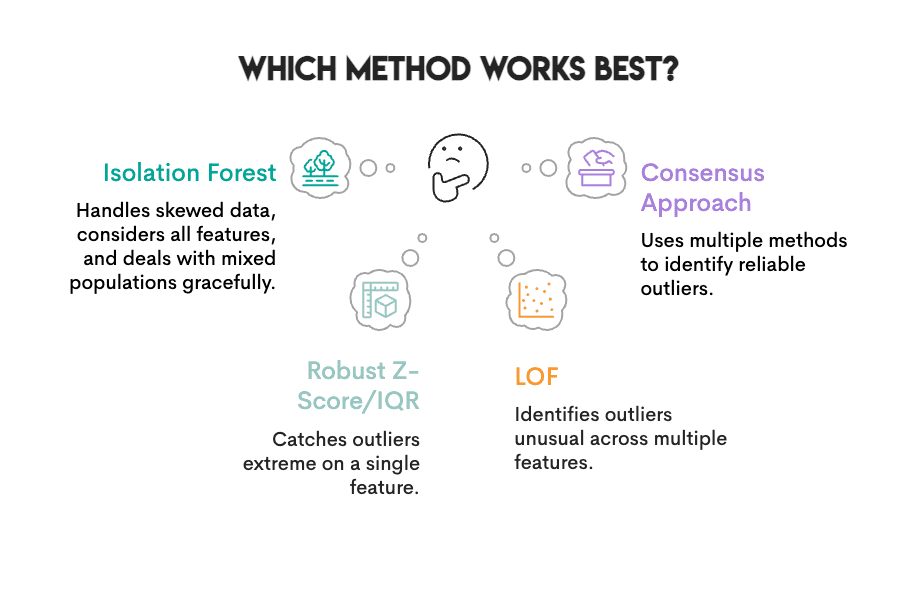

# Determining Which Method Performs Best For This Wine Dataset

Image by Author

Can we pick a “winner,” given the characteristics of our data (heavy skewness, a mixed population, and no known ground truth)?

Robust Z-Score, IQR, Isolation Forest, and LOF all handle skewed data reasonably well. If forced to pick one, we'd go with Isolation Forest: it makes no distribution assumptions, considers all features at once, and handles mixed populations gracefully.

But no single method does everything:

- Isolation Forest can miss outliers that are extreme on only one feature (Z-Score/IQR catches these)

- Z-Score/IQR can miss outliers that are unusual only in their combination of features (multidimensional outliers)

The better approach: use multiple methods and trust the consensus. The 143 wines flagged by 3 or more methods are far more reliable than anything flagged by a single method alone.

Here's how we calculated consensus:

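```python
import numpy as np

# Count how many methods flagged each sample (each *_out is a boolean mask).
# np.sum gives an integer count; `+` on NumPy boolean arrays is a logical OR instead.
consensus = np.sum([zscore_out, iqr_out, iforest_out, lof_out], axis=0)
high_confidence = df[consensus >= 3]  # Flagged by 3+ methods
```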

Without ground truth (as in most real-world projects), agreement between methods is the closest thing you have to a confidence measure.

# Understanding What All This Means For Your Own Projects

Define your problem before choosing your method. What kind of “unusual” are you actually looking for? Data entry errors look different from measurement anomalies, and both look different from genuinely rare cases. Each type of problem points to different methods.

Check your assumptions. If your data is heavily skewed, the Standard Z-Score and Elliptic Envelope will steer you wrong. Look at your distributions before committing to a method.

Use multiple methods. Samples flagged by three or more methods with different definitions of “outlier” are more trustworthy than samples flagged by just one.

Don't assume all outliers should be removed. An outlier could be an error. It could also be your most interesting data point. Domain knowledge makes that call, not algorithms.

# Concluding Remarks

The point here isn't that outlier detection is broken. It's that “outlier” means different things depending on who's asking. Z-Score and IQR catch values that are extreme on a single dimension. Isolation Forest and LOF find samples that stand out from the overall pattern. Elliptic Envelope works well when your data is actually Gaussian (ours wasn't).

Figure out what you're really looking for before you pick a method. And if you're not sure? Run multiple methods and go with the consensus.

# FAQs

// 1. Knowing Which Method I Should Start With

A good place to start is Isolation Forest. It makes no assumptions about how your data is distributed and uses all of your features at once. However, if you want to identify extreme values in one specific measurement (such as very high blood pressure readings), Z-Score or IQR may be a better fit.

// 2. Choosing a Contamination Rate For Scikit-learn Methods

It depends on the problem you're trying to solve. A commonly used value is 5% (0.05). But keep in mind that contamination is a quota: 5% of your samples will be classified as outliers whether your data actually contains 1% or 20% true outliers. Base the contamination rate on what you know about the proportion of outliers in your data.

// 3. Removing Outliers Before Splitting Train/Test Data

No. Fit the outlier-detection model on your training data only, then apply the fitted model to your test data. Otherwise, your test data influences your preprocessing, which introduces leakage.
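In scikit-learn terms, that means splitting first, calling `fit` on the training rows only, and reusing the same fitted model on the test rows. Here's a minimal sketch with random stand-in data. The feature matrix, split ratio, and seeds are assumptions, not the notebook's setup.

```python
import numpy as np
from sklearn.ensemble import IsolationForest
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)
X = rng.normal(0, 1, size=(1000, 4))  # stand-in for the numeric features

# Split first, so the test rows play no part in defining "normal".
X_train, X_test = train_test_split(X, test_size=0.2, random_state=42)

iforest = IsolationForest(contamination=0.05, random_state=42)
iforest.fit(X_train)                        # learn "normal" from training data only

train_mask = iforest.predict(X_train) == -1
test_mask = iforest.predict(X_test) == -1   # same fitted model, applied to unseen rows

X_train_clean = X_train[~train_mask]        # drop flagged rows from the training set
print(f"train flagged: {train_mask.mean():.1%}, test flagged: {test_mask.mean():.1%}")
```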

// 4. Handling Categorical Features

The methods covered here work on numerical data. For categorical features, you have three options:

- encode your categorical variables and proceed;

- use a method designed for mixed-type data (e.g. HBOS);

- run outlier detection on the numeric columns separately and use frequency-based methods for the categorical ones.
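For the third option, a simple frequency-based check flags rows whose category is rare. This is a minimal sketch. The helper name, the 1% cutoff, and the toy column are assumptions for illustration.

```python
import pandas as pd

def rare_categories(s: pd.Series, min_share: float = 0.01) -> pd.Series:
    """Boolean mask: True where the row's category makes up less than `min_share` of rows."""
    shares = s.map(s.value_counts(normalize=True))  # each row's category frequency
    return shares < min_share

# Toy column: "rose" appears once in 200 rows (0.5%).
wine_type = pd.Series(["white"] * 150 + ["red"] * 49 + ["rose"])
mask = rare_categories(wine_type, min_share=0.01)
print(wine_type[mask].tolist())  # ['rose']
```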

// 5. Knowing If A Flagged Outlier Is An Error Or Just Rare

The algorithm alone can't tell you whether a flagged outlier is an error or merely rare. It flags what's unusual, not what's wrong. For example, a wine with extremely high residual sugar might be a data entry error, or it might be a dessert wine that's meant to be that sweet. Ultimately, only your domain expertise can answer that. If you're unsure, mark it for review rather than removing it automatically.

Nate Rosidi is a data scientist and in product strategy. He's also an adjunct professor teaching analytics, and is the founder of StrataScratch, a platform helping data scientists prepare for their interviews with real interview questions from top companies. Nate writes on the latest trends in the career market, gives interview advice, shares data science projects, and covers everything SQL.