Picture by Writer

# Introduction

Everybody focuses on fixing the issue, however virtually nobody assessments the answer. Typically, a wonderfully working script can break with only one new row of information or a slight change within the logic.

On this article, we are going to remedy a Tesla interview query in Python and present how versioning and unit assessments flip a fragile script right into a dependable resolution by following three steps. We are going to begin with the interview query and finish with automated testing utilizing GitHub Actions.

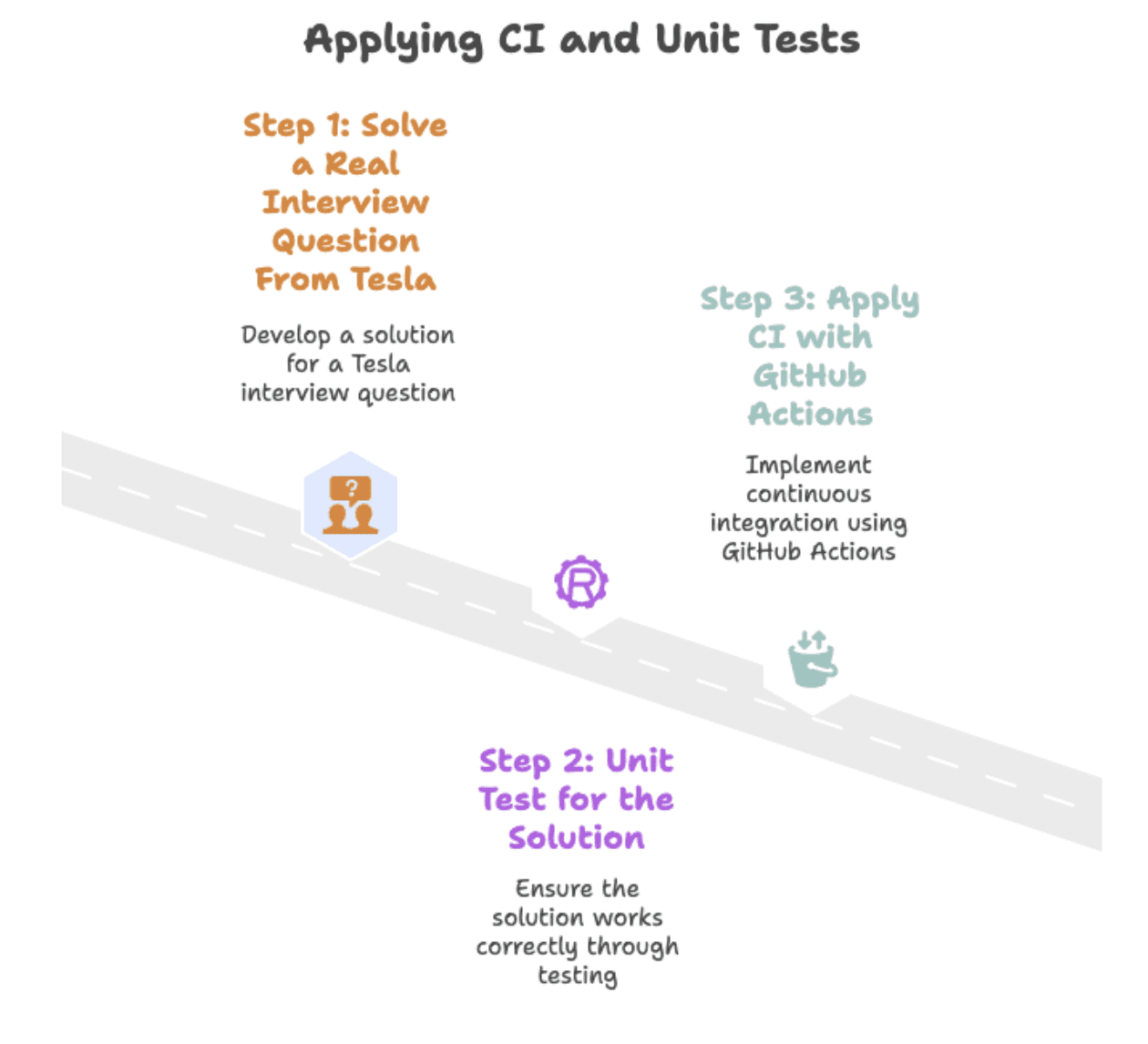

Picture by Writer

We are going to undergo these three steps to make an information resolution production-ready.

First, we are going to remedy an actual interview query from Tesla. Subsequent, we are going to add unit assessments to make sure the answer stays dependable over time. Lastly, we are going to use GitHub Actions to automate testing and model management.

# Fixing A Actual Interview Query From Tesla

New Merchandise

Calculate the online change within the variety of merchandise launched by corporations in 2020 in comparison with 2019. Your output ought to embody the corporate names and the online distinction.

(Web distinction = Variety of merchandise launched in 2020 – The quantity launched in 2019.)

On this interview query from Tesla, you might be requested to measure product progress throughout two years.

The duty is to return every firm’s identify together with the distinction in product rely between 2020 and 2019.

// Understanding The Dataset

Allow us to first take a look at the dataset we’re working with. Listed below are the column names.

| Column Identify | Knowledge Sort |

|---|---|

| yr | int64 |

| company_name | object |

| product_name | object |

Allow us to preview the dataset.

| Yr | Company_name | Product_name |

|---|---|---|

| 2019 | Toyota | Avalon |

| 2019 | Toyota | Camry |

| 2020 | Toyota | Corolla |

| 2019 | Honda | Accord |

| 2019 | Honda | Passport |

This dataset comprises three columns: yr, company_name, and product_name. Every row represents a automotive mannequin launched by an organization in a given yr.

// Writing The Python Resolution

We are going to use primary pandas operations to group, evaluate, and calculate the online product change per firm. The operate we are going to write splits the info into subsets for 2019 and 2020.

Subsequent, it merges them by firm names and counts the variety of distinctive merchandise launched every year.

import pandas as pd

import numpy as np

from datetime import datetime

df_2020 = car_launches[car_launches['year'].astype(str) == '2020']

df_2019 = car_launches[car_launches['year'].astype(str) == '2019']

df = pd.merge(df_2020, df_2019, how='outer', on=[

'company_name'], suffixes=['_2020', '_2019']).fillna(0)

The ultimate output subtracts 2019 counts from 2020 to get the online distinction. Right here is all the code.

import pandas as pd

import numpy as np

from datetime import datetime

df_2020 = car_launches[car_launches['year'].astype(str) == '2020']

df_2019 = car_launches[car_launches['year'].astype(str) == '2019']

df = pd.merge(df_2020, df_2019, how='outer', on=[

'company_name'], suffixes=['_2020', '_2019']).fillna(0)

df = df[df['product_name_2020'] != df['product_name_2019']]

df = df.groupby(['company_name']).agg(

{'product_name_2020': 'nunique', 'product_name_2019': 'nunique'}).reset_index()

df['net_new_products'] = df['product_name_2020'] - df['product_name_2019']

end result = df[['company_name', 'net_new_products']]

// Viewing The Anticipated Output

Right here is the anticipated output.

| Company_name | Net_new_products |

|---|---|

| Chevrolet | 2 |

| Ford | -1 |

| Honda | -3 |

| Jeep | 1 |

| Toyota | -1 |

# Making The Resolution Dependable With Unit Checks

Fixing an information downside as soon as doesn’t imply it would maintain working. A brand new row or a logic tweak can silently break your script. As an illustration, think about you by chance rename a column in your code, altering this line:

df['net_new_products'] = df['product_name_2020'] - df['product_name_2019']

to this:

df['new_products'] = df['product_name_2020'] - df['product_name_2019']

The logic nonetheless runs, however your output (and assessments) will all of a sudden fail as a result of the anticipated column identify not matches. Unit assessments repair that. They examine if the identical enter nonetheless offers the identical output, each time. If one thing breaks, the take a look at fails and exhibits precisely the place. We are going to do that in three steps, from turning the interview query’s resolution right into a operate to writing a take a look at that checks the output in opposition to what we anticipate.

Picture by Writer

// Turning The Script Into A Reusable Perform

Earlier than writing assessments, we have to make our resolution reusable and simple to check. Changing it right into a operate permits us to run it with completely different datasets and confirm the output robotically, with out having to rewrite the identical code each time. We modified the unique code right into a operate that accepts a DataFrame and returns a end result. Right here is the code.

def calculate_net_new_products(car_launches):

df_2020 = car_launches[car_launches['year'].astype(str) == '2020']

df_2019 = car_launches[car_launches['year'].astype(str) == '2019']

df = pd.merge(df_2020, df_2019, how='outer', on=[

'company_name'], suffixes=['_2020', '_2019']).fillna(0)

df = df[df['product_name_2020'] != df['product_name_2019']]

df = df.groupby(['company_name']).agg({

'product_name_2020': 'nunique',

'product_name_2019': 'nunique'

}).reset_index()

df['net_new_products'] = df['product_name_2020'] - df['product_name_2019']

return df[['company_name', 'net_new_products']]

// Defining Take a look at Knowledge And Anticipated Output

Earlier than operating any assessments, we have to know what “correct” appears to be like like. Defining the anticipated output offers us a transparent benchmark to check our operate’s outcomes in opposition to. So, we are going to construct a small take a look at enter and clearly outline what the proper output ought to be.

import pandas as pd

# Pattern take a look at knowledge

test_data = pd.DataFrame({

'yr': [2019, 2019, 2020, 2020],

'company_name': ['Toyota', 'Toyota', 'Toyota', 'Toyota'],

'product_name': ['Camry', 'Avalon', 'Corolla', 'Yaris']

})

# Anticipated output

expected_output = pd.DataFrame({

'company_name': ['Toyota'],

'net_new_products': [0] # 2 in 2020 - 2 in 2019

})

// Writing And Operating Unit Checks

The next take a look at code checks in case your operate returns precisely what you anticipate.

![]()

If not, the take a look at fails and tells you why, all the way down to the final row or column.

![]()

The take a look at beneath makes use of the operate from the earlier step (calculate_net_new_products()) and the anticipated output we outlined.

import unittest

class TestProductDifference(unittest.TestCase):

def test_net_new_products(self):

end result = calculate_net_new_products(test_data)

end result = end result.sort_values('company_name').reset_index(drop=True)

anticipated = expected_output.sort_values('company_name').reset_index(drop=True)

pd.testing.assert_frame_equal(end result, anticipated)

if __name__ == '__main__':

unittest.major()

# Automating Checks With Steady Integration

Writing assessments is an efficient begin, however provided that they really run. You possibly can run the assessments manually after each change, however that doesn’t scale, it’s simple to overlook, and workforce members could use completely different setups. Steady Integration (CI) solves this by operating assessments robotically every time code modifications are pushed to the repository.

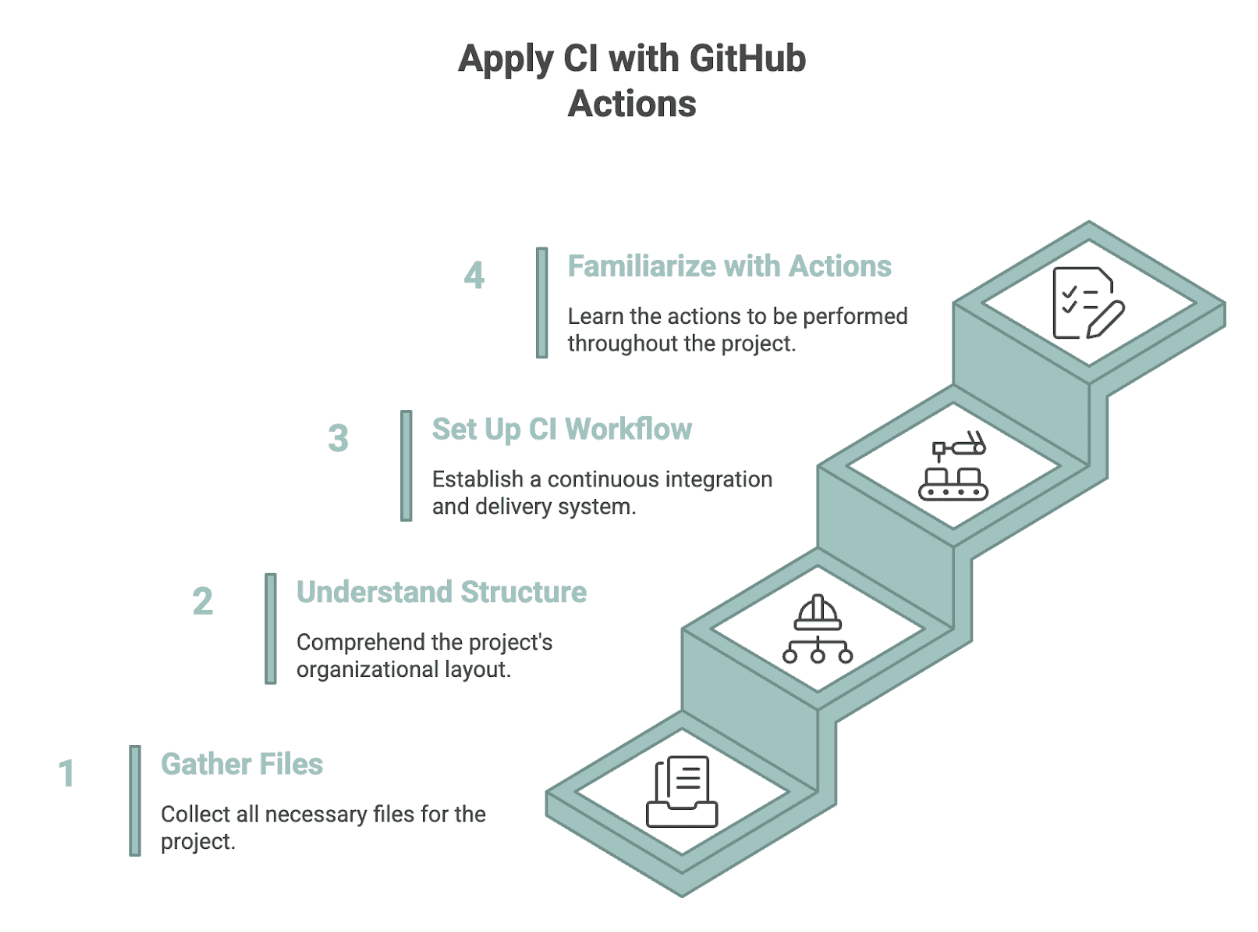

GitHub Actions is a free CI instrument that does this on each push, protecting your resolution dependable even when the code, knowledge, or logic modifications. It runs your assessments robotically on each push, so your resolution stays dependable even when the code, knowledge, or logic modifications. Right here is the way to apply CI with GitHub Actions.

Picture by Writer

// Organizing Your Undertaking Recordsdata

To use CI to an interview question, you first have to push your resolution to a GitHub repository. (To learn to create a GitHub repo, please learn this).

Then, arrange the next information:

resolution.py: Interview questions resolution from Step 2.1expected_output.py: Defines take a look at enter and anticipated output from Step 2.2test_solution.py: Unit take a look at utilizingunittestfrom Step 2.3necessities.txt: Dependencies (e.g., pandas).github/workflows/take a look at.yml: GitHub Actions workflow fileknowledge/car_launches.csv: Enter dataset utilized by the answer

// Understanding The Repository Structure

The repository is organized this manner so GitHub Actions can discover every part it wants in your GitHub repository with out further setup. It retains issues easy, constant, and simple for each you and others to work with.

my-query-solution/

├── knowledge/

│ └── car_launches.csv

├── resolution.py

├── expected_output.py

├── test_solution.py

├── necessities.txt

└── .github/

└── workflows/

└── take a look at.yml

// Creating A GitHub Actions Workflow

Now that you’ve all of the information, the final one you want is take a look at.yml. This file tells GitHub Actions the way to run your assessments robotically when code modifications.

First, we identify the workflow and inform GitHub when to run it.

identify: Run Unit Checks

on:

push:

branches: [ main ]

pull_request:

branches: [ main ]

This implies the assessments will run each time somebody pushes code or opens a pull request on the principle department. Subsequent, we create a job that defines what’s going to occur contained in the workflow.

jobs:

take a look at:

runs-on: ubuntu-latest

The job runs on GitHub’s Ubuntu surroundings, which supplies you a clear setup every time. Now we add steps inside that job. The primary one checks out your repository so GitHub Actions can entry your code.

- identify: Checkout repository

makes use of: actions/checkout@v4

Then we arrange Python and select the model we wish to use.

- identify: Arrange Python

makes use of: actions/setup-python@v5

with:

python-version: "3.10"

After that, we set up all of the dependencies listed in necessities.txt.

- identify: Set up dependencies

run: |

python -m pip set up --upgrade pip

pip set up -r necessities.txt

Lastly, we run all unit assessments within the venture.

- identify: Run unit assessments

run: python -m unittest uncover

This final step runs your assessments robotically and exhibits any errors if one thing breaks. Right here is the total file for reference:

identify: Run Unit Checks

on:

push:

branches: [ main ]

pull_request:

branches: [ main ]

jobs:

take a look at:

runs-on: ubuntu-latest

steps:

- identify: Checkout repository

makes use of: actions/checkout@v4

- identify: Arrange Python

makes use of: actions/setup-python@v5

with:

python-version: "3.10"

- identify: Set up dependencies

run: |

python -m pip set up --upgrade pip

pip set up -r necessities.txt

- identify: Run unit assessments

run: python -m unittest uncover

// Reviewing Take a look at Outcomes In GitHub Actions

After you have uploaded all of the information to your GitHub repository, go to the Actions tab by clicking Actions, as you possibly can see from the screenshot beneath.

![]()

When you click on on Actions, you will note a inexperienced checkmark if every part ran efficiently, like within the screenshot beneath.

![]()

Click on into the “Update test.yml” to see what truly occurred. You’ll get a full breakdown, from organising Python to operating the take a look at. If all assessments move:

- Every step may have a examine mark.

- That confirms every part labored as anticipated.

- It means your code behaves as meant at each stage, primarily based on the assessments you outlined.

- The output matches the objectives you set when creating these assessments.

Allow us to see:

![]()

As you possibly can see, our unit take a look at accomplished in simply 1 second, and all the CI course of completed in 17 seconds, verifying every part from setup to check execution.

// When A Small Change Breaks The Take a look at

Not each change will move the take a look at. Allow us to say you by chance rename a column in resolution.py, and ship the modifications to GitHub, for instance:

# Unique (works positive)

df['net_new_products'] = df['product_name_2020'] - df['product_name_2019']

# Unintended change

df['new_products'] = df['product_name_2020'] - df['product_name_2019']

Allow us to now see the take a look at ends in the motion tab.

![]()

We’ve an error. Allow us to click on it to see the main points.

![]()

The unit assessments didn’t move, so allow us to click on “Run unit tests” to see the total error message.

![]()

As you possibly can see, our assessments discovered the problem with a KeyError: 'net_new_products', as a result of the column identify within the operate not matches what the take a look at expects.

That’s how you retain your code beneath fixed examine. If you happen to or somebody in your workforce makes a mistake, the assessments act as your security web.

# Utilizing Model Management To Observe And Take a look at Adjustments

Versioning helps you monitor each change you make, whether or not it’s in your logic, your assessments, or your dataset. Say you wish to attempt a brand new approach to group the info. As a substitute of enhancing the principle script straight, create a brand new department:

git checkout -b refactor-grouping

Here’s what is subsequent:

- Make your modifications, commit them, and run the assessments.

- If all assessments move, which means the code works as anticipated, merge it.

- If not, revert the department with out affecting the principle code.

That’s the energy of model management: each change is tracked, testable, and reversible.

# Last Ideas

Most individuals cease after getting the suitable reply. However real-world knowledge options ask greater than that. They reward those that can construct queries that maintain up over time, not simply as soon as.

With versioning, unit assessments, and a easy CI setup, even a one-off interview query turns into a dependable, reusable a part of your portfolio.

Nate Rosidi is an information scientist and in product technique. He is additionally an adjunct professor educating analytics, and is the founding father of StrataScratch, a platform serving to knowledge scientists put together for his or her interviews with actual interview questions from prime corporations. Nate writes on the newest tendencies within the profession market, offers interview recommendation, shares knowledge science initiatives, and covers every part SQL.