AI tools are everywhere now and used by just about everyone in your org. For IT and security teams, that means the job has shifted from “should we allow AI?” to “how do we secure and govern it?” And that is no small task.

New AI tools and integrations are added constantly, usually without any knowledge or oversight from IT.

To address this new hidden source of risk, you need a system that gives you continuous discovery, real-time monitoring, and proactive governance without requiring a full-time team dedicated to tracking down every new AI tool. That is exactly what Nudge Security delivers.

Here’s how it works:

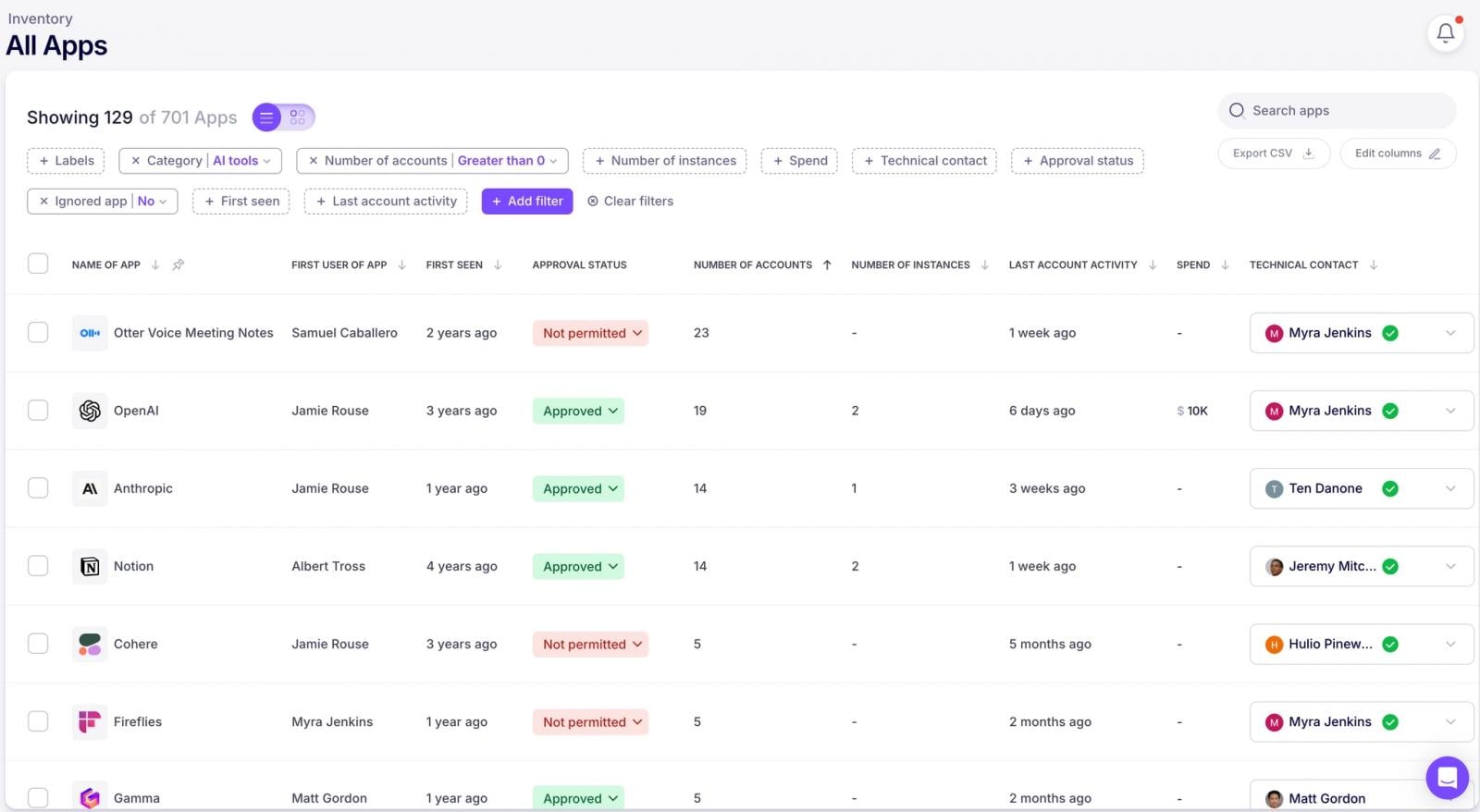

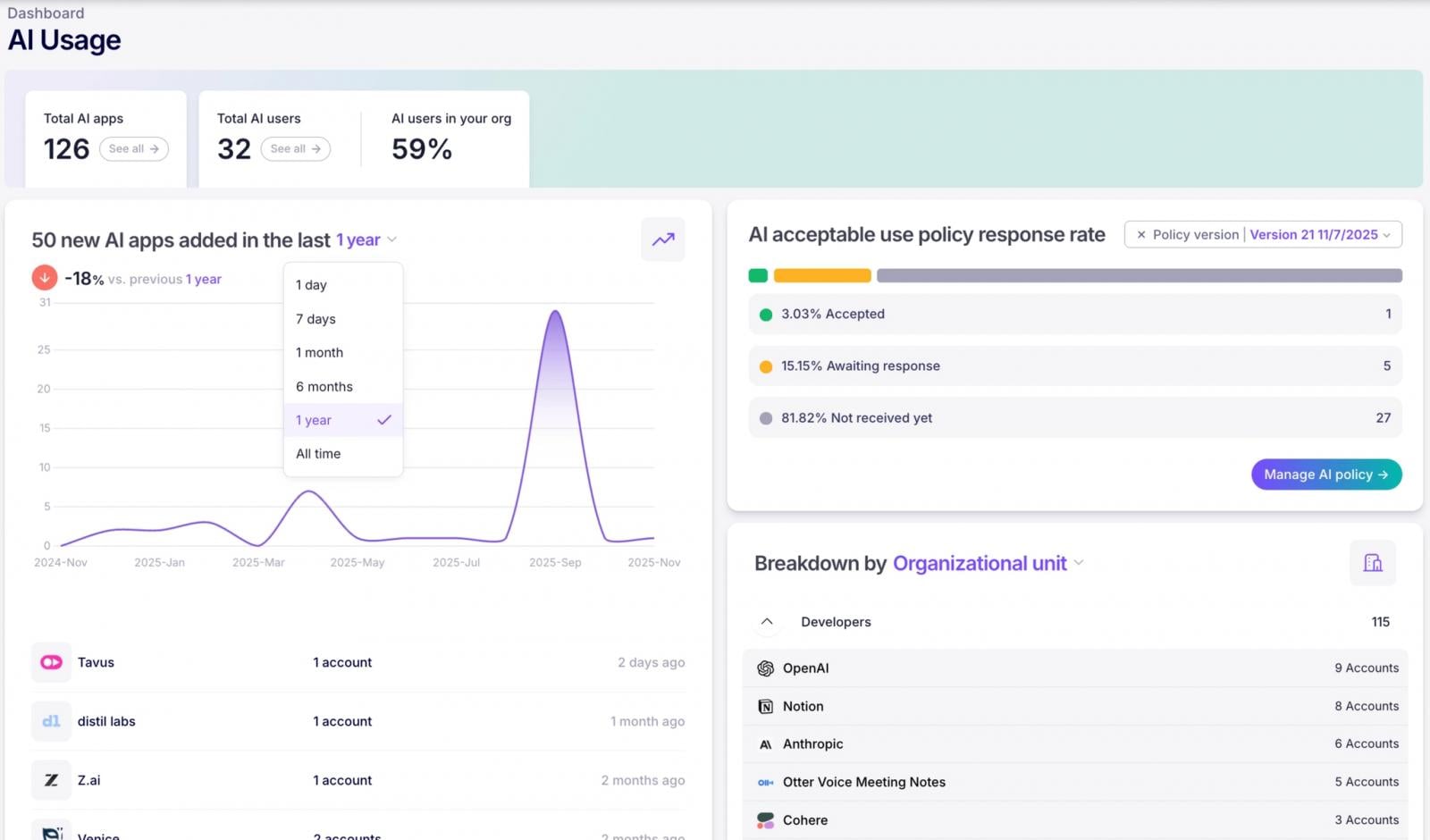

Day One: Get a full inventory of AI apps and users

First things first: you can’t secure what you can’t see. Nudge Security gives you Day One discovery of every AI app and account ever introduced to your org, even those added before you started using Nudge. No surveys, no guesswork, no relying on people to self-report (because let’s be honest, that never works).

You get a complete picture of your AI landscape from the moment you start.

How It Works

Nudge Security’s shadow AI discovery works through a lightweight integration with your IdP (Microsoft 365 or Google Workspace). It takes less than 5 minutes to enable this integration, and once that’s in place, Nudge Security analyzes the machine-generated emails sent by SaaS and AI app providers (think noreply@dropbox.com) to document activities like creation of new accounts, password changes, changes to security settings, and more.

Nudge taps into this signal (without ever storing email content) to automatically detect new accounts and tool adoption across your workforce. This means you get comprehensive visibility into AI tool sprawl as it happens, and a Day One inventory of everything that has been introduced up to that point.
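To make the idea concrete, here is a minimal sketch of how automated provider emails could be turned into account events using only sender, recipient, and subject metadata. It is not Nudge's implementation: the sender prefixes, subject patterns, and the `AppEvent` and `classify` names are all assumptions chosen for illustration, and real provider emails vary far more than this.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Hypothetical subject-line patterns; real provider emails vary widely.
EVENT_PATTERNS = {
    "account_created": re.compile(r"welcome to|verify your email|confirm your account", re.I),
    "password_changed": re.compile(r"password (was )?(changed|reset|updated)", re.I),
    "security_setting_changed": re.compile(r"two-factor|2fa|security settings", re.I),
}

# Hypothetical mailbox names typical of automated senders.
AUTOMATED_SENDER = re.compile(r"^(no-?reply|notifications?|accounts?|security)@", re.I)


@dataclass
class AppEvent:
    user: str        # recipient of the automated email
    app_domain: str  # domain of the provider that sent it
    event: str       # key from EVENT_PATTERNS


def classify(sender: str, recipient: str, subject: str) -> Optional[AppEvent]:
    """Return an AppEvent if the message looks like an automated account notice."""
    if not AUTOMATED_SENDER.match(sender):
        return None  # ignore mail that does not come from an automated mailbox
    domain = sender.split("@", 1)[1].lower()
    for event, pattern in EVENT_PATTERNS.items():
        if pattern.search(subject):
            return AppEvent(user=recipient, app_domain=domain, event=event)
    return None
```

Only metadata is inspected here and the message body is never read, which mirrors the point above about not storing email content.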

You can get expanded visibility by deploying the browser extension, which delivers real-time insights and alerts when risky behaviors are detected.

Additionally, you can “nudge” users via the browser extension (and via Slack, Teams, and email) to warn them of risky behaviors, remind them of secure practices, redirect them to approved tools, ask for additional context on new or unfamiliar tools, and more.

Let’s dive into how this works in practice.

Shadow AI is quietly accessing sensitive data across your SaaS environment. AI use now spans MCP servers, agentic AI, and SaaS apps with AI features. It’s more than just chat prompts.

Learn how to close AI blind spots and get ahead of data exposure risks with this new guide.

Get Your Free AI Discovery Guide →

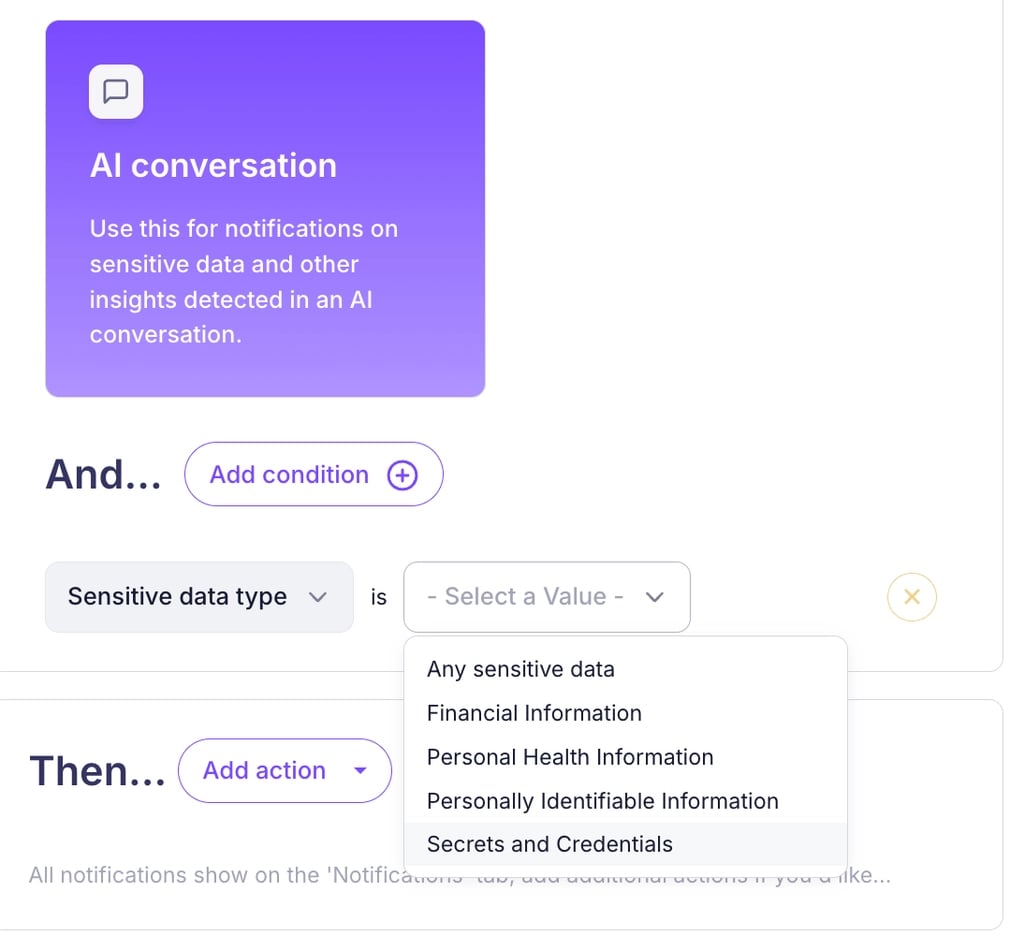

Monitor AI Conversations for Sensitive Data Sharing

AI tools are incredibly useful, but they’re also incredibly chatty. Employees paste all kinds of things into ChatGPT, Gemini, and the dozens of other AI assistants out there.

The Nudge Security browser extension monitors AI conversations and detects when sensitive data like PII, secrets, or financial information is shared.
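As a rough illustration of what this kind of detection involves, the sketch below scans prompt text for a few common sensitive-data patterns. It is a simplified stand-in, not the extension's actual logic: the detector names, the regular expressions, and the `scan_prompt` function are assumptions, and production detectors typically add context checks to reduce false positives.

```python
import re

# Illustrative patterns only; each is a simplification of a real-world format.
DETECTORS = {
    "email_address": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
}

# 13 to 19 digits, optionally separated by single spaces or hyphens.
CARD_CANDIDATE = re.compile(r"\b(?:\d[ -]?){13,19}\b")


def luhn_valid(digits: str) -> bool:
    """Luhn checksum, used to filter out digit runs that are not card numbers."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:  # double every second digit, counting from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def scan_prompt(text: str) -> set:
    """Return the names of the sensitive-data categories found in the text."""
    findings = {name for name, rx in DETECTORS.items() if rx.search(text)}
    for match in CARD_CANDIDATE.finditer(text):
        digits = re.sub(r"\D", "", match.group())
        if luhn_valid(digits):
            findings.add("payment_card")
    return findings
```

Returning category names rather than the matched values keeps the sensitive text itself out of any downstream log or alert.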

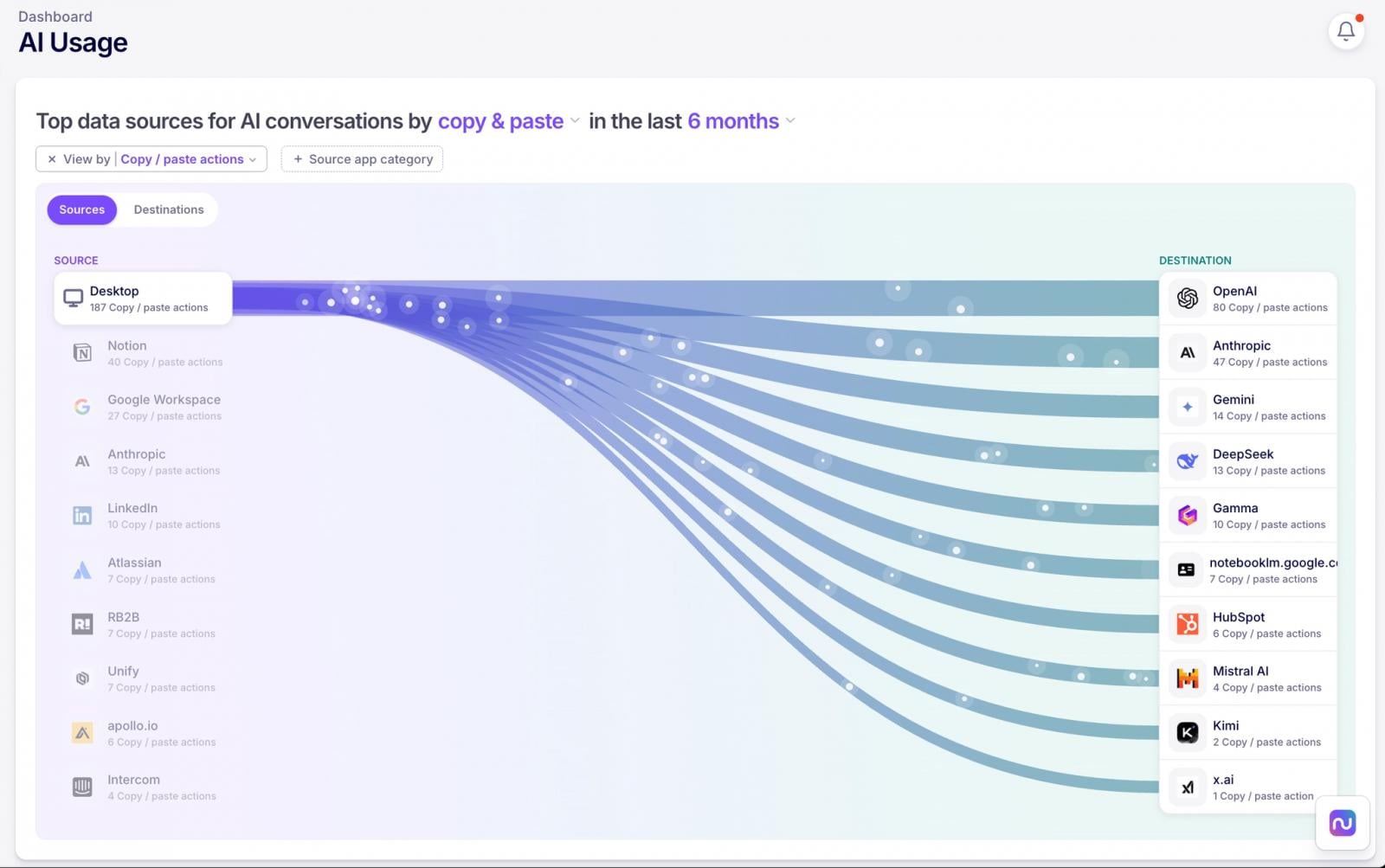

And it isn’t just text. Nudge also detects file uploads to AI tools, including context on who, what, when, and how. You’ll also see a visual summary of data flows between your systems and AI tools to quickly understand where the biggest data risks are likely to be.

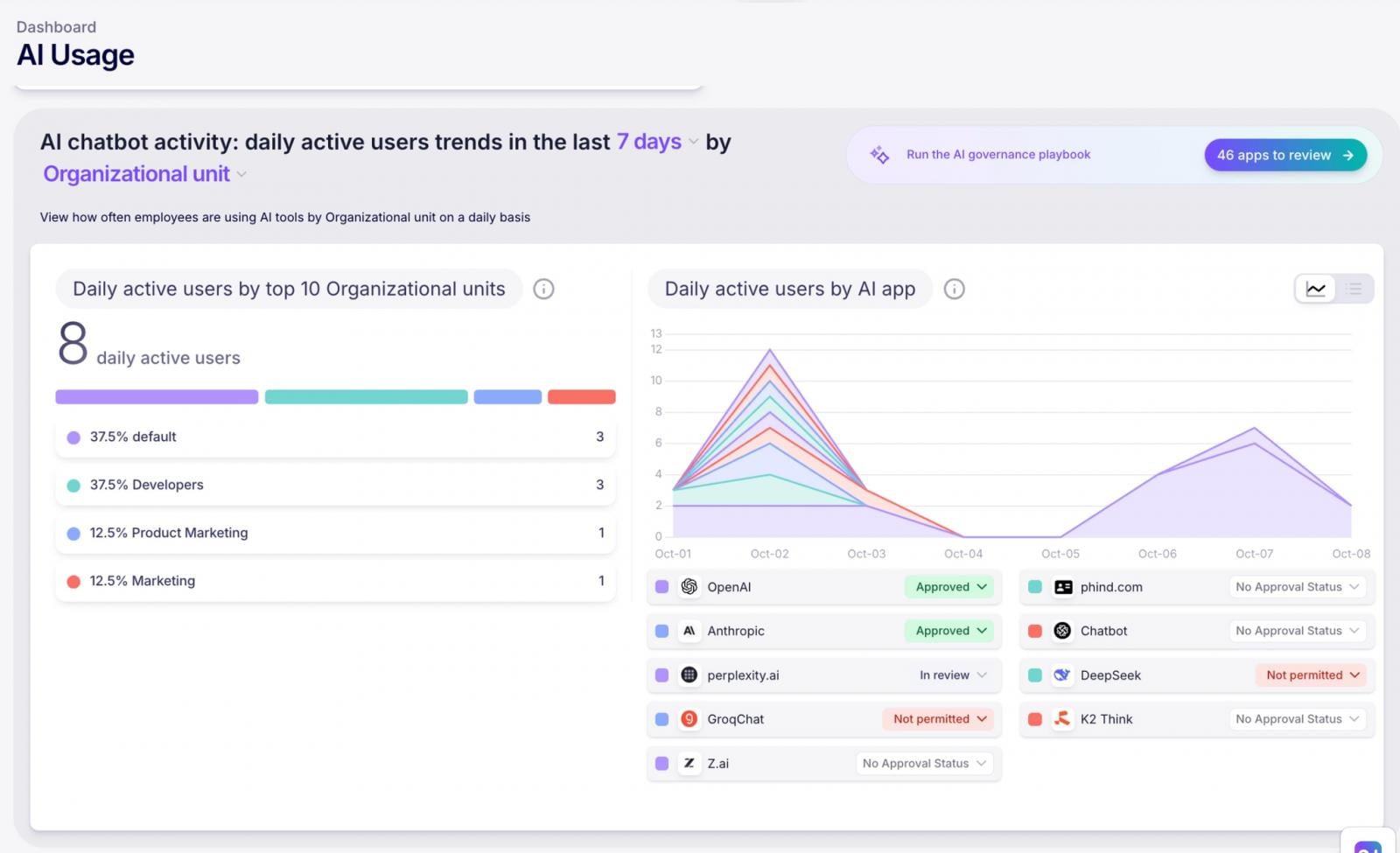

Monitor Usage of AI Tools

Want to know which departments are AI power users? Curious whether that unapproved tool is popping up again? Nudge tracks AI use by approved/unapproved app status, specific apps, and department.

You’ll finally have data showing what AI use actually looks like in practice, so you can focus your security efforts on the most-used tools and guide users toward the approved toolset.
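The breakdowns described above amount to counting usage events along a few dimensions. Here is a small sketch of that aggregation, assuming a hypothetical `UsageEvent` record and a hypothetical `APPROVED_APPS` allowlist. Neither reflects Nudge's actual data model.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class UsageEvent:
    user: str
    department: str
    app: str


# Hypothetical allowlist; in practice this would come from your AI policy.
APPROVED_APPS = {"ChatGPT"}


def summarize(events: list) -> tuple:
    """Count usage events by department, by app, and by approval status."""
    by_department = Counter(e.department for e in events)
    by_app = Counter(e.app for e in events)
    by_status = Counter(
        "approved" if e.app in APPROVED_APPS else "unapproved" for e in events
    )
    return by_department, by_app, by_status
```

Calling `by_app.most_common(5)` on the result gives the most-used tools, a reasonable place to focus review first.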

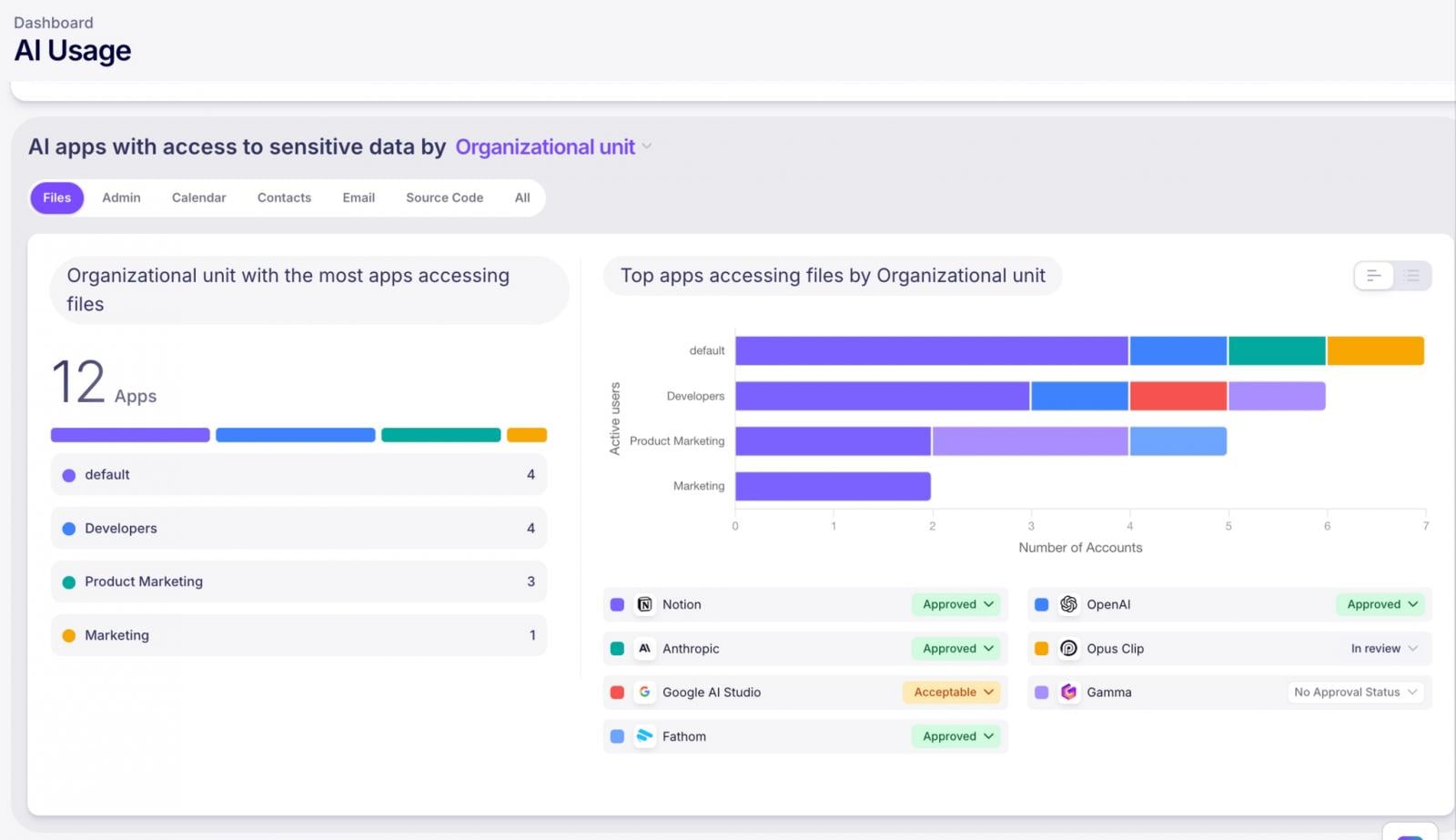

See Which AI Apps Have Access to Sensitive Data

AI tools love to integrate with your SaaS apps. MCP server connections, AI agents, Google Workspace add-ons, and Microsoft Copilot plugins are all requesting access to data.

Nudge maintains an inventory of SaaS-to-AI integrations and scopes, including MCP server connections, so you can see exactly where AI tools have been granted access to data and evaluate the risk.
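Evaluating that risk usually starts with the scopes an integration was granted. The sketch below ranks integrations by their Google OAuth scopes. The scope strings are real Google scopes, but the risk tiers, the `risk_level` function, and the sample inventory are illustrative assumptions rather than Nudge's scoring.

```python
# Real Google OAuth scope strings; the risk tiers are illustrative assumptions.
HIGH_RISK_SCOPES = {
    "https://mail.google.com/",               # full Gmail access
    "https://www.googleapis.com/auth/drive",  # full Drive access
}
MEDIUM_RISK_SCOPES = {
    "https://www.googleapis.com/auth/gmail.readonly",
    "https://www.googleapis.com/auth/drive.readonly",
}


def risk_level(scopes) -> str:
    """Classify an integration by the broadest scope it was granted."""
    granted = set(scopes)
    if granted & HIGH_RISK_SCOPES:
        return "high"
    if granted & MEDIUM_RISK_SCOPES:
        return "medium"
    return "low"


# Hypothetical inventory of AI integrations and their granted scopes.
inventory = {
    "notes-ai": ["openid", "email"],
    "inbox-assistant": ["https://www.googleapis.com/auth/gmail.readonly"],
    "doc-agent": ["openid", "https://www.googleapis.com/auth/drive"],
}

needs_review = sorted(
    name for name, scopes in inventory.items() if risk_level(scopes) == "high"
)
```

Here `needs_review` contains only `doc-agent`, since it is the only integration holding a read-write scope.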

Get Alerted to Risky Activities

You can’t watch everything all the time. That’s why Nudge provides configurable alerts that notify you when new AI tools show up or when policy violations occur, like sensitive data sharing or use of unapproved tools. Think of it as your early warning system.
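Conceptually, a configurable alert is a named condition evaluated against each incoming event. The following sketch shows one way to express that. The `Event` fields, event kinds, and rule names are hypothetical and do not describe Nudge's configuration format.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Event:
    kind: str       # hypothetical kinds: "new_app", "app_used", "sensitive_data_shared"
    app: str
    user: str
    approved: bool  # whether the app is on the approved list


@dataclass
class AlertRule:
    name: str
    matches: Callable[[Event], bool]


# Hypothetical rule set covering the three cases mentioned above.
RULES = [
    AlertRule("New AI tool discovered", lambda e: e.kind == "new_app"),
    AlertRule("Sensitive data shared", lambda e: e.kind == "sensitive_data_shared"),
    AlertRule("Unapproved tool used", lambda e: e.kind == "app_used" and not e.approved),
]


def triggered_alerts(event: Event, rules=RULES) -> list:
    """Return the names of all rules that the event satisfies."""
    return [rule.name for rule in rules if rule.matches(event)]
```

Keeping rules as data makes it straightforward to add, remove, or tune alerts without touching the code that evaluates them.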

Enforce Your AI Policy

You have an AI acceptable use policy, right? (If not, let’s talk.) Nudge automates the process of sharing your policy with employees as well as collecting and tracking acknowledgments.

But acknowledgment is only the beginning. Nudge delivers guardrails when and where employees are working in the form of friendly nudges (hence the name) that reinforce your policy and guide them toward safer AI use in real time.

It’s proactive governance that doesn’t require you to be the bad guy.

The Bottom Line

Your job isn’t to stop progress. It’s to make sure progress doesn’t come with a side of data breach. Nudge Security gives you the visibility, control, and automation you need to govern AI use effectively, so you can sleep a little better at night.

Interested in seeing it for yourself? Start a free 14-day trial of Nudge Security today.

Sponsored and written by Nudge Security.