When operating LLMs at scale, the actual limitation is GPU reminiscence slightly than compute, primarily as a result of every request requires a KV cache to retailer token-level information. In conventional setups, a big fastened reminiscence block is reserved per request primarily based on the utmost sequence size, which results in vital unused house and limits concurrency. Paged Consideration improves this by breaking the KV cache into smaller, versatile chunks which might be allotted solely when wanted, just like how digital reminiscence works. It additionally permits a number of requests with the identical beginning immediate to share reminiscence and solely duplicate it when their outputs begin to differ. This strategy significantly improves reminiscence effectivity, permitting considerably greater throughput with little or no overhead.

On this article, we simulate the naive KV cache allocator, construct a working Paged Consideration implementation with a block desk and Copy-on-Write prefix sharing, and measure the utilisation hole throughout batch sizes of 10 to 200 concurrent requests.

import math

import random

import numpy as np

import matplotlib.pyplot as plt

import matplotlib.patches as mpatches

from collections import defaultdict

random.seed(42)

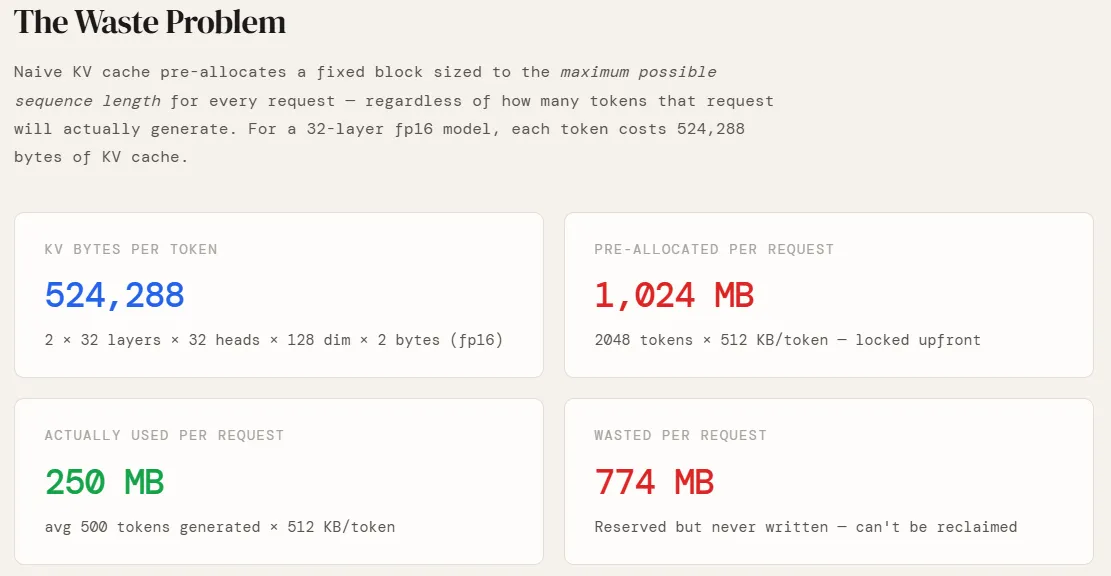

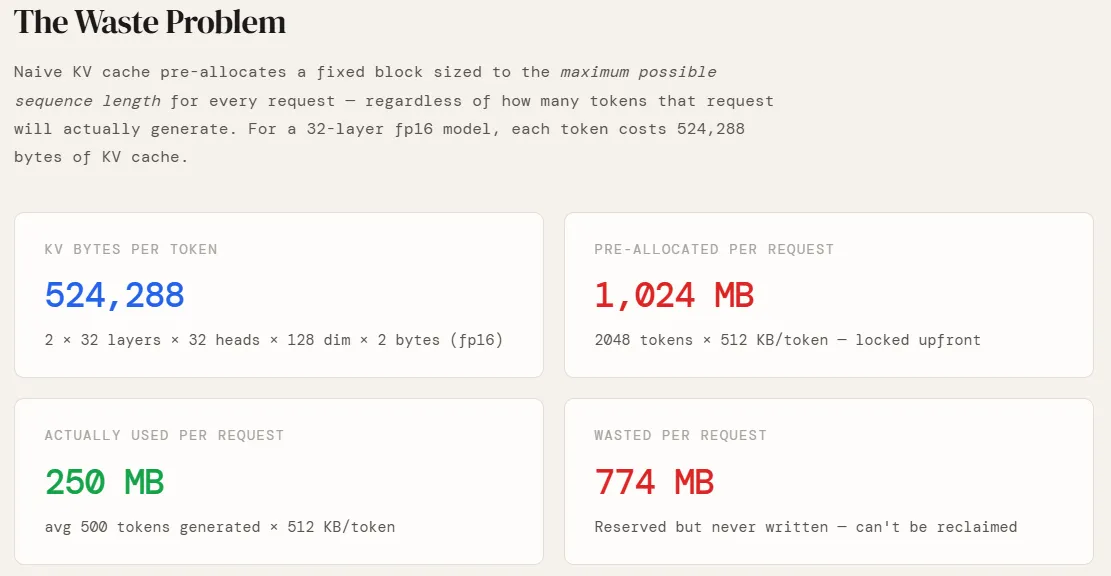

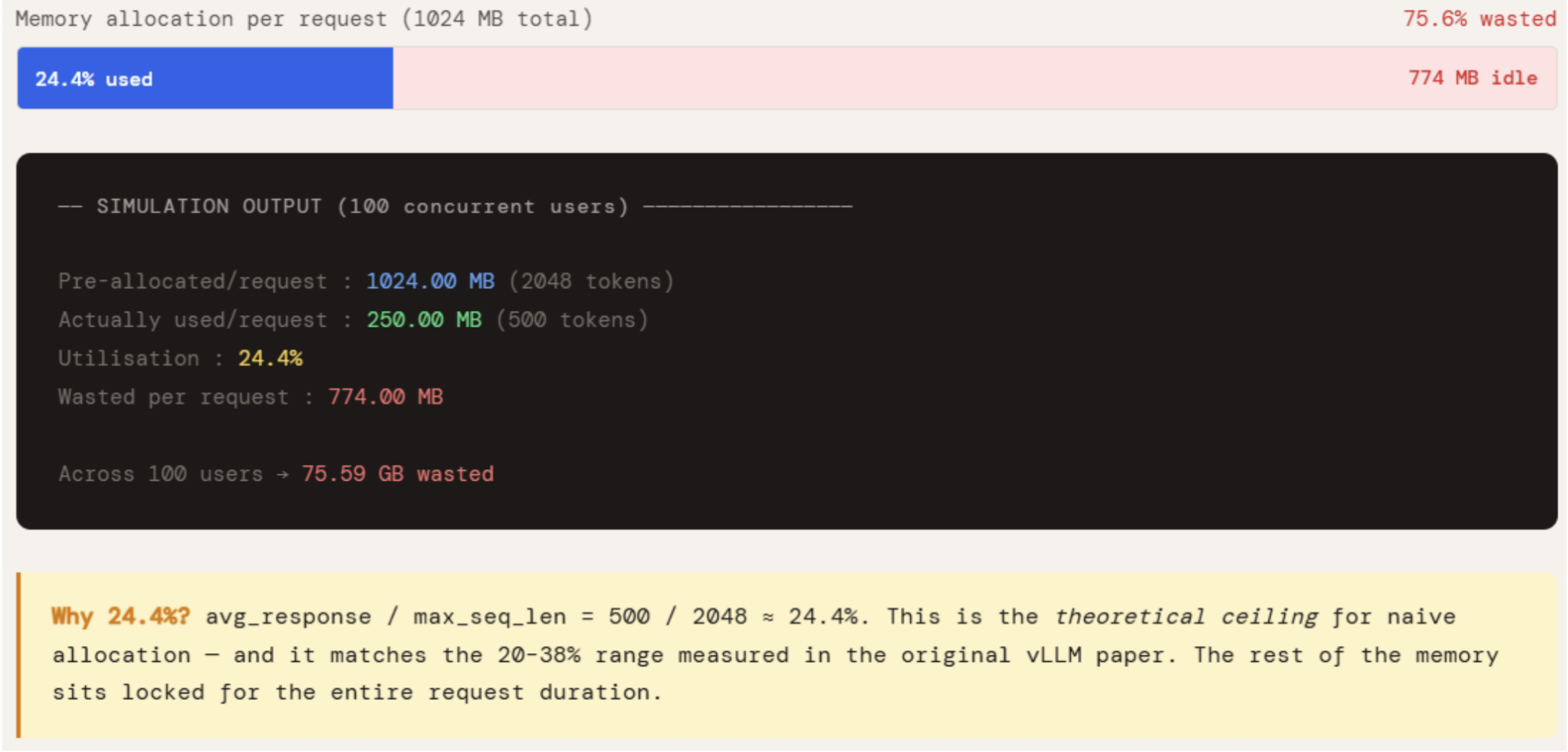

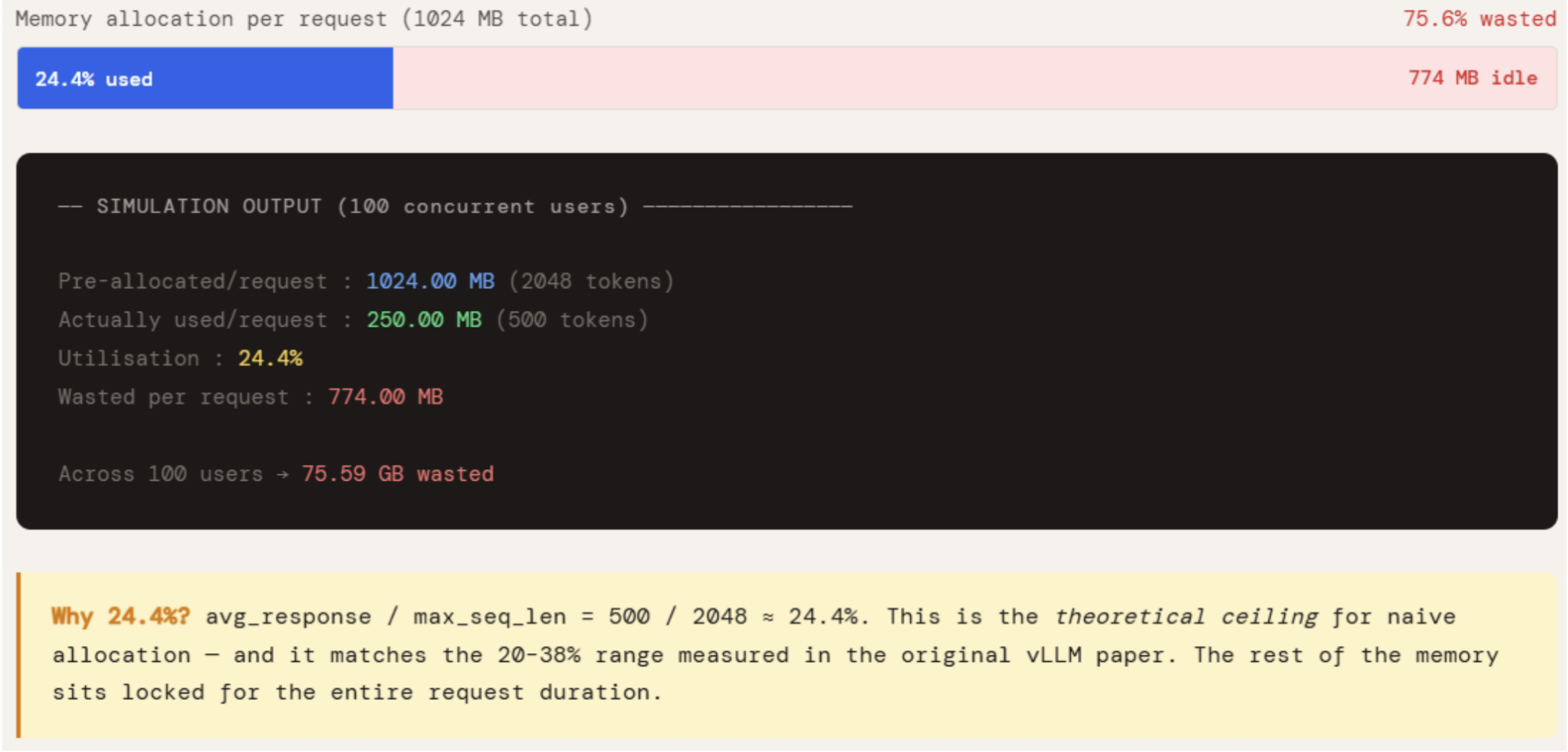

np.random.seed(42)Earlier than simulating something, we have to know the way a lot GPU reminiscence a single token really prices. This relies completely on the mannequin’s structure. We use a GPT-style configuration — 32 layers, 32 consideration heads, 128 dimensions per head, saved in fp16. The issue of two on the entrance accounts for each the Key and Worth projections (there is no such thing as a Q cache — queries are recomputed at every step). Multiplying these out provides us 524,288 bytes, or 512 KB, per token. That is the basic unit all the things else is constructed on — pre-allocation sizes, web page counts, and wasted reminiscence all scale instantly from this quantity.

NUM_LAYERS = 32

NUM_HEADS = 32

HEAD_DIM = 128

BYTES_FP16 = 2

PAGE_SIZE = 16 # tokens per web page (vLLM default)

MAX_SEQ_LEN = 2048

KV_BYTES_PER_TOKEN = 2 * NUM_LAYERS * NUM_HEADS * HEAD_DIM * BYTES_FP16

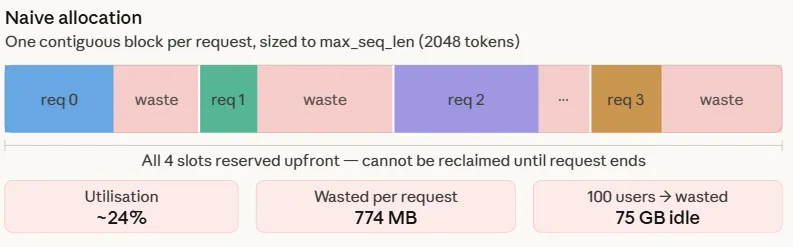

KV_MB_PER_TOKEN = KV_BYTES_PER_TOKEN / 1024 / 1024The naive strategy is easy: when a request arrives, a contiguous block of GPU reminiscence is allotted sized to the utmost sequence size — 2048 tokens on this case. This occurs as a result of the response size is unknown upfront, so the worst case is reserved.

AVG_RESPONSE is ready to 500, which is a sensible common for a manufacturing chatbot. Multiplying by KV_MB_PER_TOKEN provides what is definitely written versus what was locked. The hole is the waste.

The numbers make the issue concrete. Every request pre-allocates 1024 MB however makes use of solely 250 MB — 24.4% utilisation. The remaining 774 MB sits reserved for the complete period of the request, unavailable to every other request. Throughout 100 concurrent customers, that’s 75 GB of GPU reminiscence doing nothing. This isn’t an edge case — it’s the default conduct of each system that doesn’t implement paged allocation, and it’s precisely why naive serving methods hit an OOM wall lengthy earlier than the GPU is computationally saturated.

print("=" * 60)

print("SECTION 1 -- Naive KV Cache: The Waste Problem")

print("=" * 60)

AVG_RESPONSE = 500 # sensible common tokens generated

pre_allocated_mb = MAX_SEQ_LEN * KV_MB_PER_TOKEN

actually_used_mb = AVG_RESPONSE * KV_MB_PER_TOKEN

print(f"nKV cache per token : {KV_BYTES_PER_TOKEN:,} bytes")

print(f"Pre-allocated/request : {pre_allocated_mb:.2f} MB ({MAX_SEQ_LEN} tokens)")

print(f"Actually used/request : {actually_used_mb:.2f} MB ({AVG_RESPONSE} tokens)")

print(f"Utilisation : {actually_used_mb / pre_allocated_mb * 100:.1f}%")

print(f"Wasted per request : {pre_allocated_mb - actually_used_mb:.2f} MB")

NUM_USERS = 100

wasted_gb = (pre_allocated_mb - actually_used_mb) * NUM_USERS / 1024

print(f"nAcross {NUM_USERS} concurrent users → {wasted_gb:.2f} GB wasted")

print("n→ Naive systems utilise only 20-38% of allocated KV cache memory")

print(" (source: original Paged Attention / vLLM paper)")

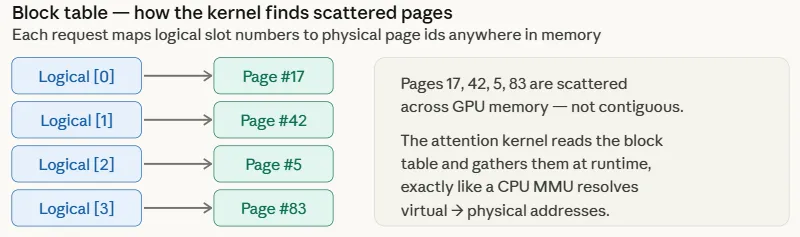

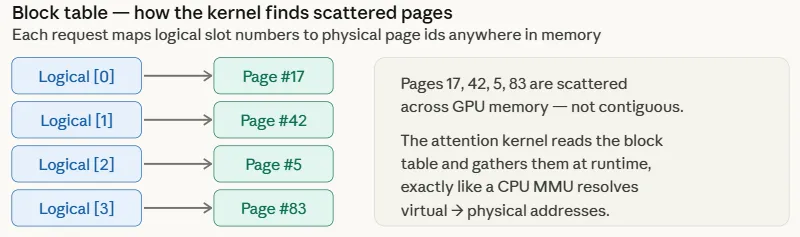

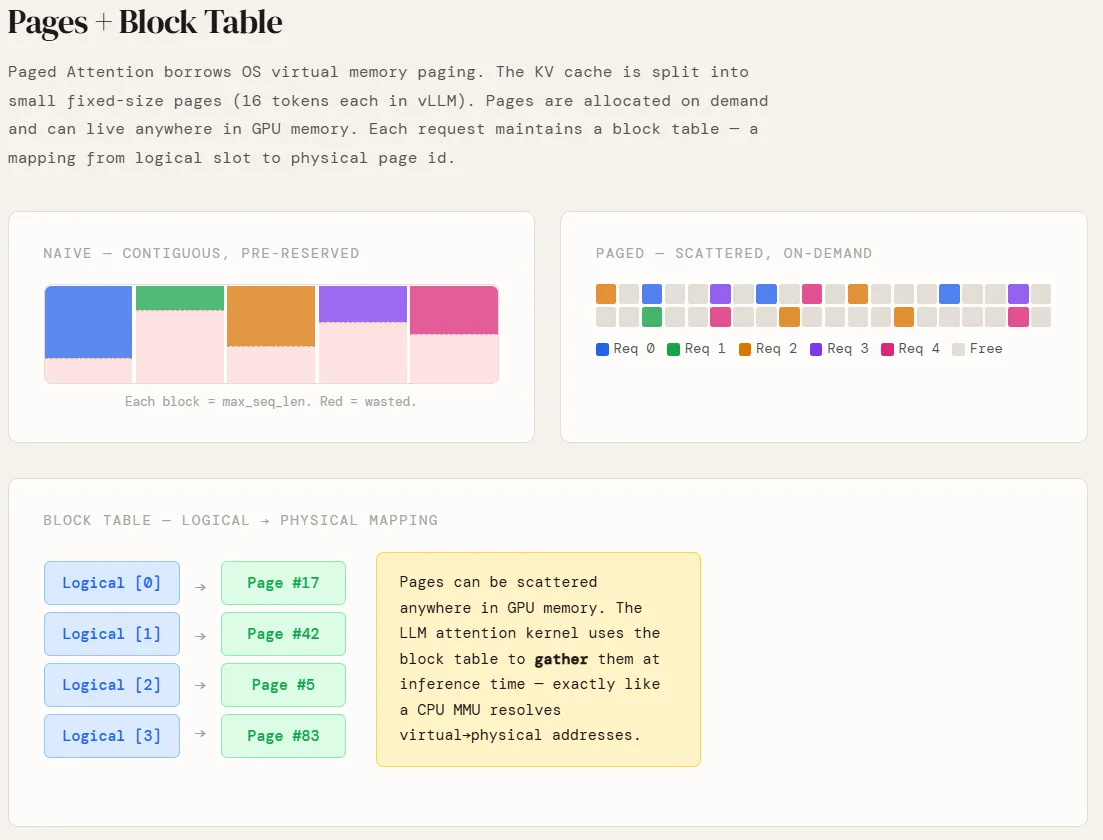

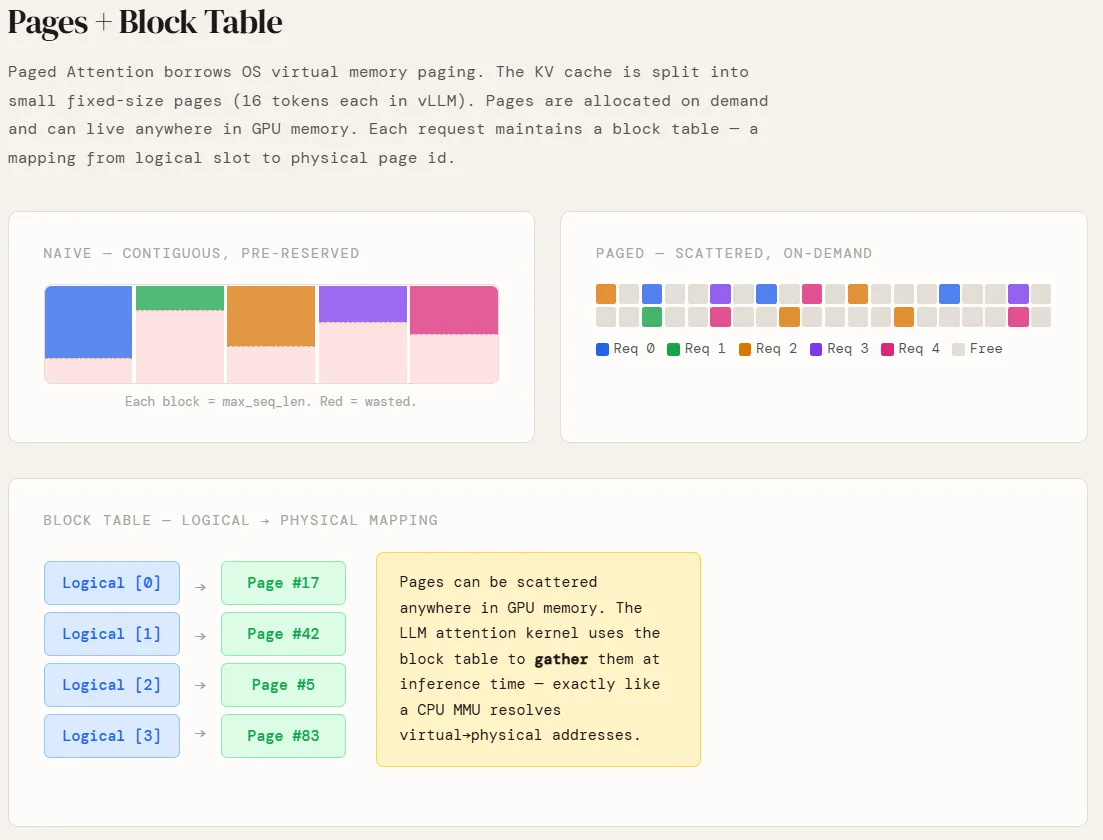

Two courses are launched right here to simulate how Paged Consideration really works on the reminiscence administration degree.

PagePool represents the bodily GPU reminiscence pool — a flat array of equal-size pages, every holding 16 tokens. It maintains a free checklist and a ref rely per web page. When a web page’s ref rely drops to zero, it’s instantly returned to the free checklist and turns into out there to any new request. That is the important thing distinction from naive allocation — there aren’t any reserved holes, no fragmentation, and no reminiscence tied to a completed request.

PagedRequest represents a single inference request. It holds a block_table — a listing that maps logical web page indices to bodily web page ids within the pool. Each time generate_token() is known as and the token rely crosses a web page boundary, a brand new bodily web page is claimed from the pool. No reminiscence is touched earlier than it’s wanted.

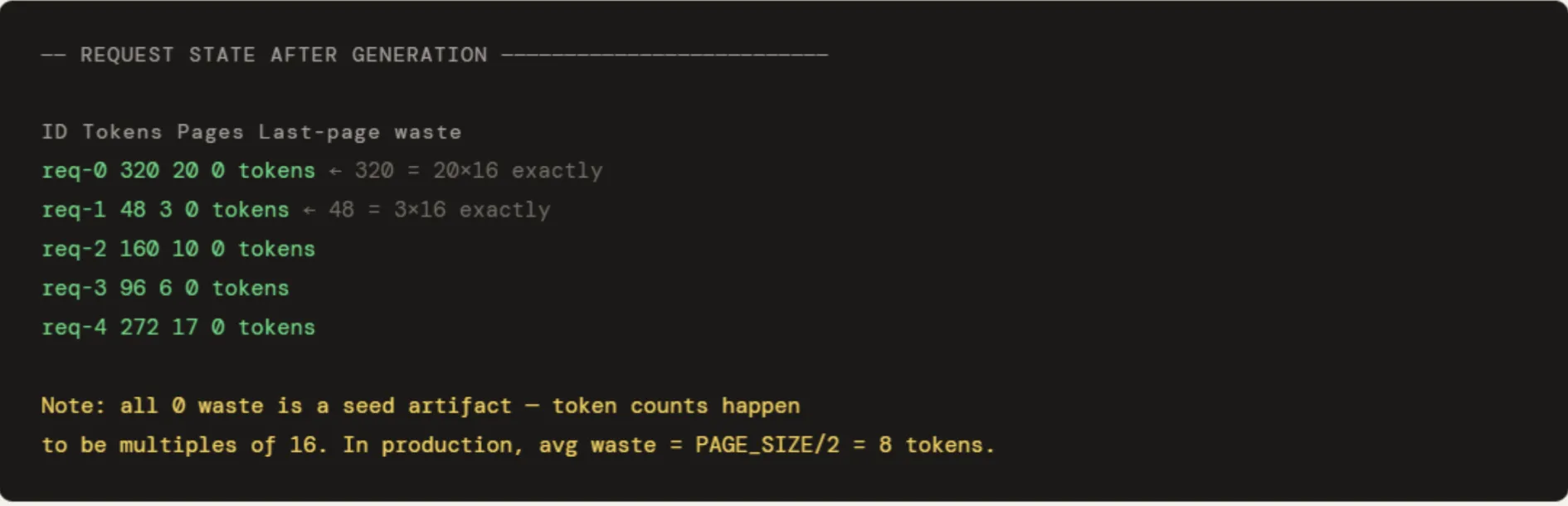

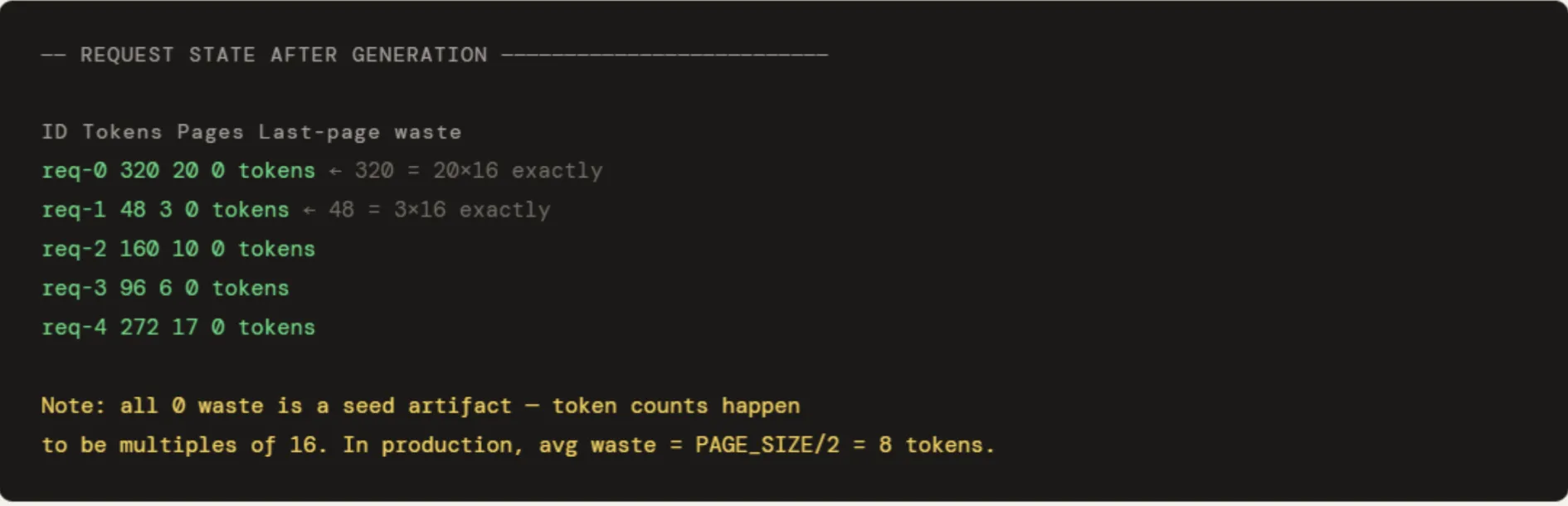

5 requests are run with token counts of 320, 48, 160, 96, and 272. The output exhibits pages allotted proportionally to precise utilization — req-1 with 48 tokens will get 3 pages, req-0 with 320 tokens will get 20. When req-1 is freed, its 3 pages go straight again to the pool and are instantly reusable. The pool utilisation at 10.9% appears to be like low solely as a result of 512 pages had been provisioned for five small requests — in a completely loaded manufacturing pool it could sit close to the 98% vary seen in Part 4. The “0 tokens wasted” within the last-page column is a seed artifact — all 5 token counts occur to be actual multiples of 16. In observe, the typical last-page waste is PAGE_SIZE / 2 = 8 tokens per request.

print("n" + "=" * 60)

print("SECTION 2 -- Paged Attention: Pages + Block Table")

print("=" * 60)

"""

As an alternative of 1 giant contiguous block per request:

- KV cache is break up into fixed-size pages (PAGE_SIZE tokens every)

- Pages are allotted on demand, can dwell anyplace in GPU reminiscence

- Every request retains a block_table: logical index → bodily web page id

"""

class PagePool:

def __init__(self, total_pages):

self.free = checklist(vary(total_pages))

self.whole = total_pages

self.ref_count = defaultdict(int)

def allocate(self):

if not self.free:

increase MemoryError("OOM -- no free pages")

pid = self.free.pop(0)

self.ref_count[pid] = 1

return pid

def launch(self, pid):

self.ref_count[pid] -= 1

if self.ref_count[pid] <= 0:

self.free.append(pid)

del self.ref_count[pid]

def share(self, pid):

"""Increment ref count -- another request is sharing this page."""

self.ref_count[pid] += 1

def cow_copy(self, pid):

"""CoW: allocate a new page, decrement ref on the old one."""

new_pid = self.allocate()

self.launch(pid)

return new_pid

@property

def utilisation(self):

return (self.whole - len(self.free)) / self.whole * 100

class PagedRequest:

def __init__(self, req_id, pool: PagePool):

self.id = req_id

self.pool = pool

self.block_table = [] # logical index → bodily web page id

self.tokens = 0

def generate_token(self):

if self.tokens % PAGE_SIZE == 0: # web page boundary → allocate new web page

self.block_table.append(self.pool.allocate())

self.tokens += 1

def free(self):

for pid in self.block_table:

self.pool.launch(pid)

self.block_table.clear()

pool = PagePool(total_pages=512)

requests = [PagedRequest(f"req-{i}", pool) for i in range(5)]

token_counts = [320, 48, 160, 96, 272]

for req, n in zip(requests, token_counts):

for _ in vary(n):

req.generate_token()

print("nRequest state after generation:")

print(f" {'ID':<10} {'Tokens':>8} {'Pages':>7} {'Last-page waste':>16}")

for req in requests:

waste = req.tokens % PAGE_SIZE

waste = PAGE_SIZE - waste if waste else 0

print(f" {req.id:<10} {req.tokens:>8} {len(req.block_table):>7} {waste:>16} tokens")

print(f"nPool utilisation : {pool.utilisation:.1f}%")

requests[1].free()

print(f"After freeing req-1 → utilisation: {pool.utilisation:.1f}% (pages immediately reusable)")

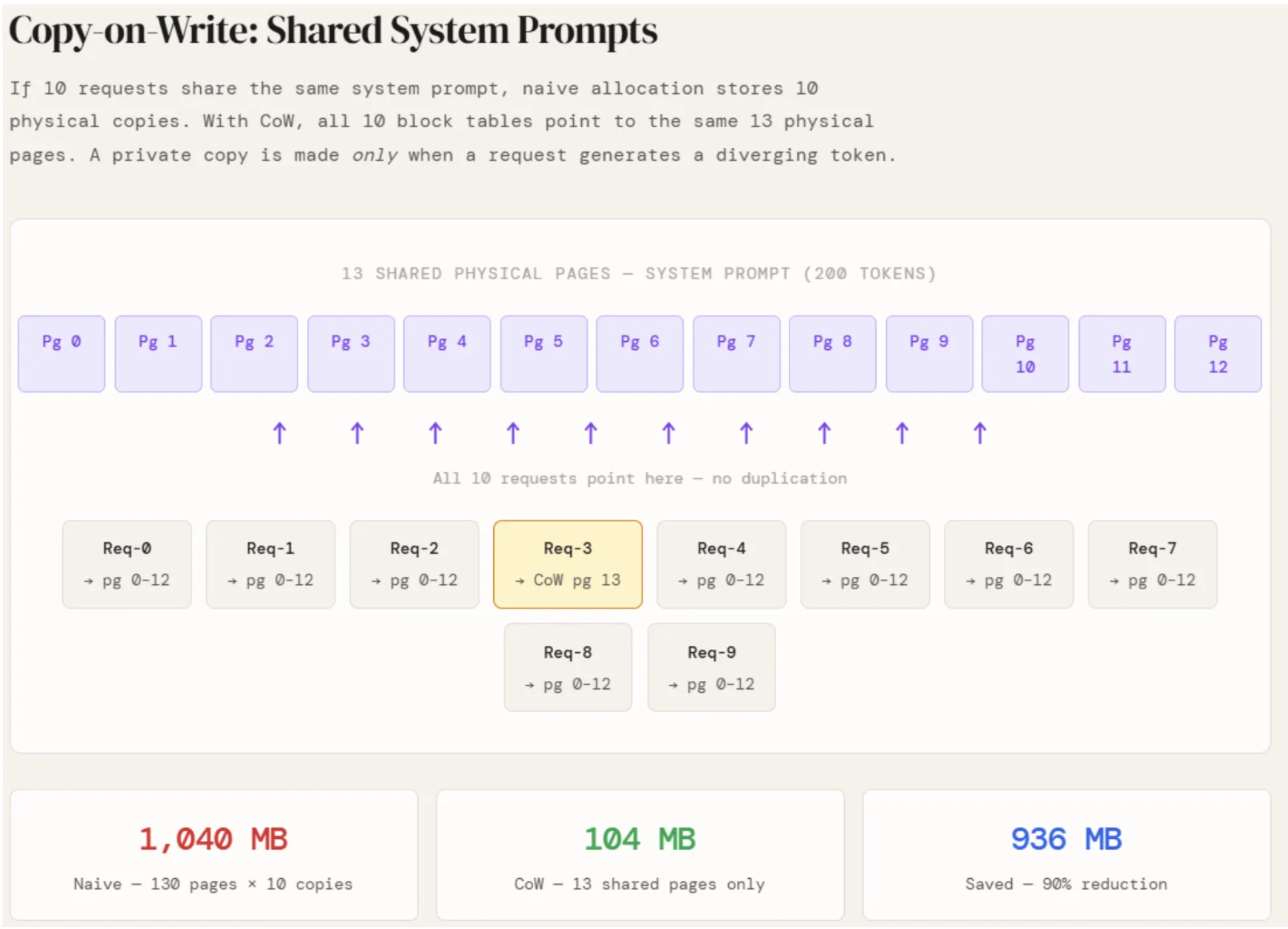

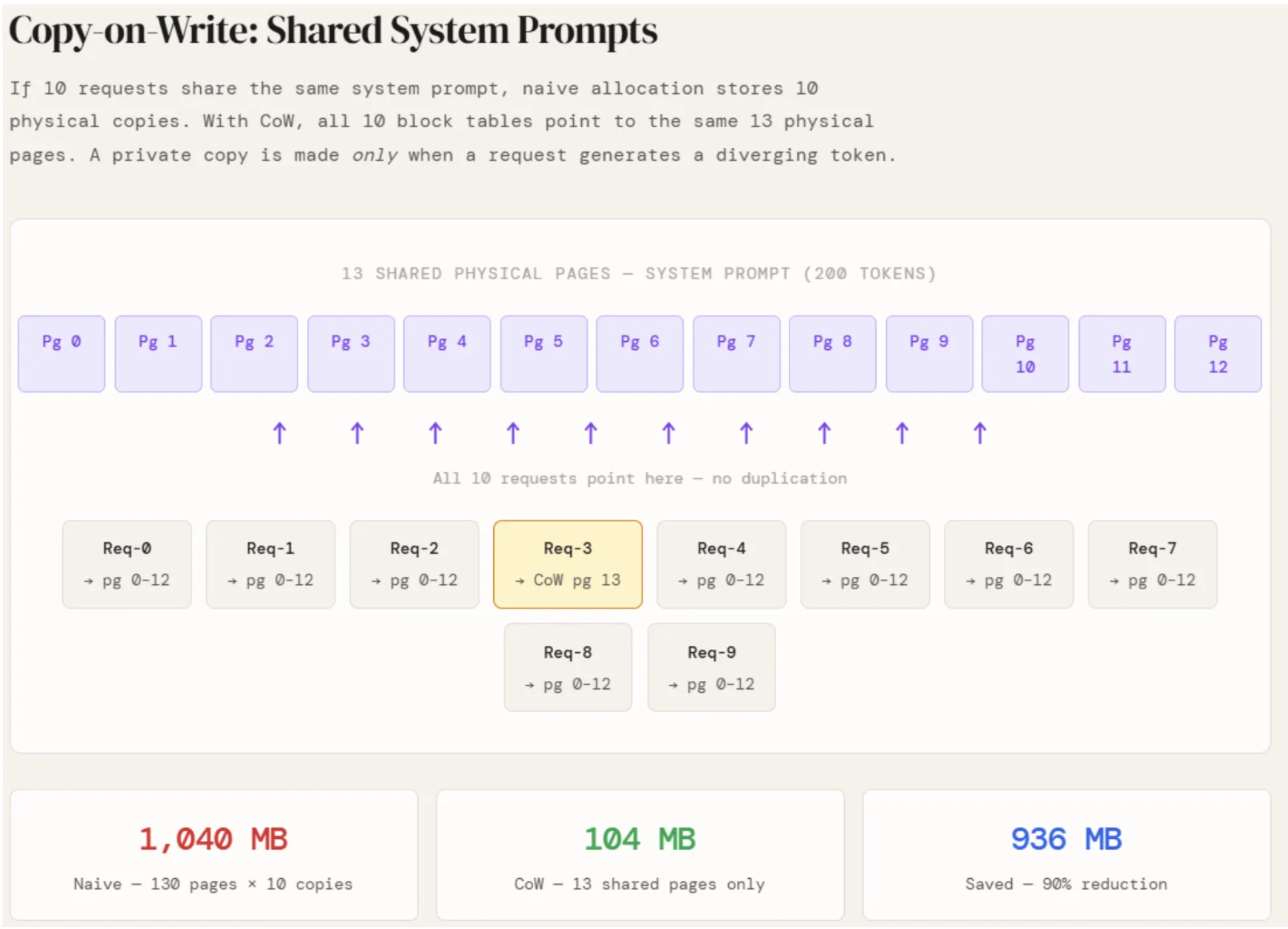

In manufacturing, practically each request to a deployed LLM carries the identical system immediate — the directions that outline the mannequin’s conduct. Beneath naive allocation, every of these requests shops its personal full copy of the system immediate’s KV cache. With 10 concurrent requests and a 200-token system immediate, that’s 10 an identical copies of the identical information occupying separate reminiscence areas.

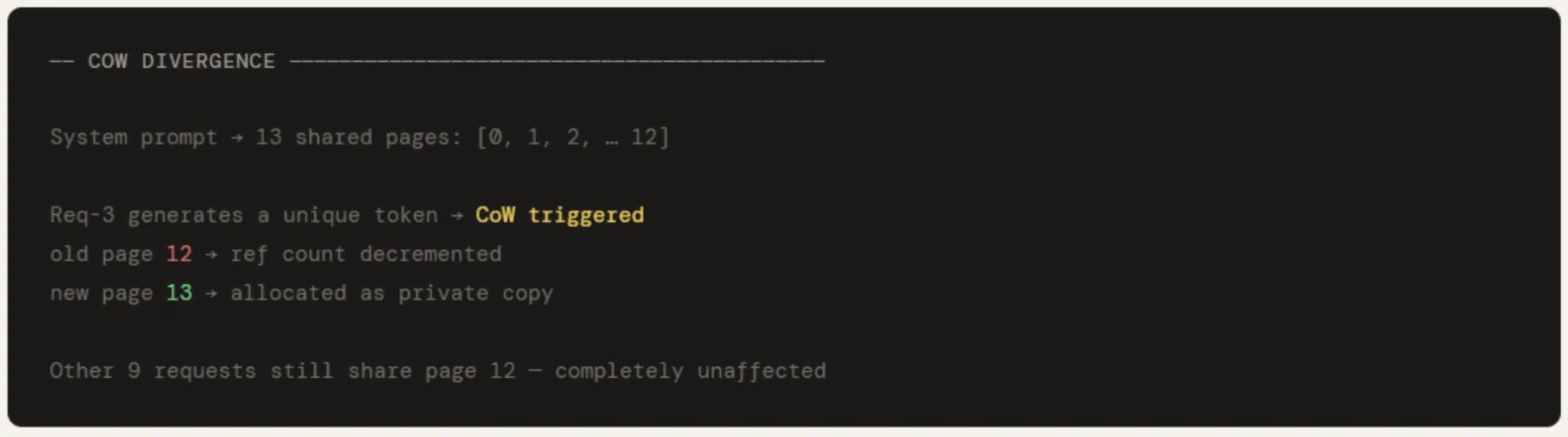

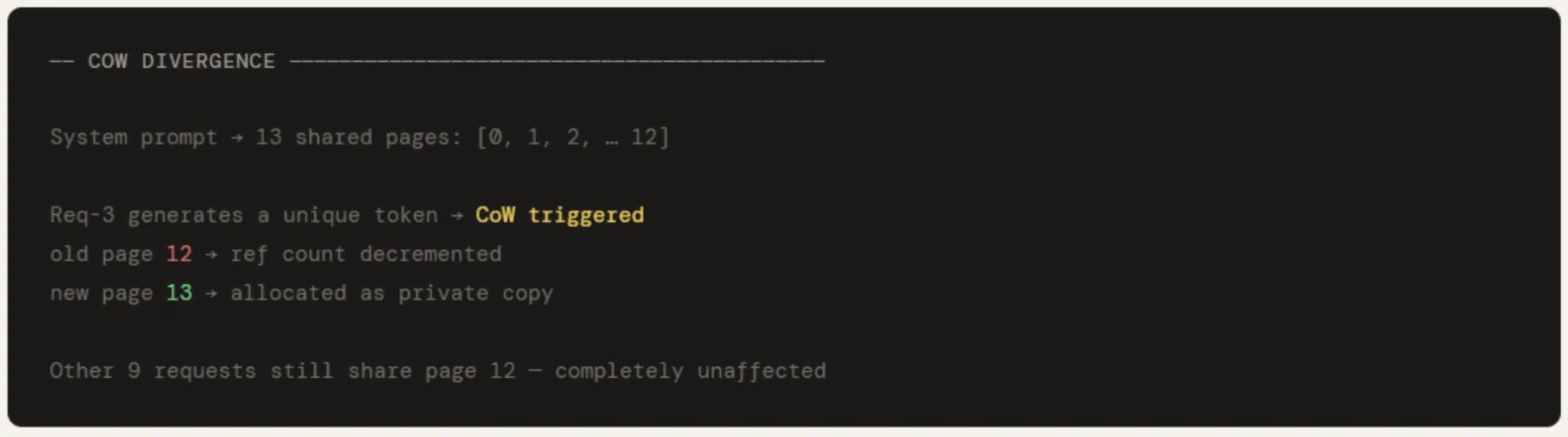

The identical PagePool from Part 2 is reused right here, prolonged with two strategies: share() increments a web page’s ref rely with out allocating something new, and cow_copy() allocates a recent web page and decrements the ref rely on the unique. A brand new pool is instantiated and the system immediate is encoded into 13 pages — math.ceil(200 / 16). Every of the ten person requests then calls share() on all 13 pages, pointing their block tables on the identical bodily reminiscence. No new pages are allotted. The ref rely on every shared web page merely rises to 11.

The financial savings are rapid: naive allocation would require 130 pages throughout 10 requests. With CoW, solely 13 bodily pages exist. That’s 936 MB saved from a single shared prefix.

When req-3 generates its first distinctive token, cow_copy() is known as on its final shared web page — web page 12. A brand new web page 13 is allotted as req-3’s non-public copy, and the ref rely on web page 12 drops by one. The opposite 9 requests proceed pointing at web page 12, utterly unaffected. That is the CoW contract: shared till divergence, non-public solely when obligatory.

print("n" + "=" * 60)

print("SECTION 3 -- Copy-on-Write: Shared System Prompts")

print("=" * 60)

"""

If N requests share a system immediate, naive allocation shops N copies.

With CoW, all requests level to the SAME bodily pages.

A non-public copy is made solely when a request writes a diverging token.

"""

cow_pool = PagePool(total_pages=512)

SYSTEM_TOKENS = 200

system_pages = math.ceil(SYSTEM_TOKENS / PAGE_SIZE)

shared_pids = [cow_pool.allocate() for _ in range(system_pages)]

print(f"nSystem prompt → {system_pages} shared pages: {shared_pids}")

N = 10

user_tables = []

for i in vary(N):

desk = checklist(shared_pids)

for pid in shared_pids:

cow_pool.share(pid) # ref rely up -- no bodily copy

user_tables.append(desk)

saved_mb = (system_pages * N - system_pages) * PAGE_SIZE * KV_MB_PER_TOKEN

print(f"nStoring system prompt for {N} requests:")

print(f" Naive : {system_pages * N} pages ({system_pages * N * PAGE_SIZE * KV_MB_PER_TOKEN:.1f} MB)")

print(f" CoW : {system_pages} pages ({system_pages * PAGE_SIZE * KV_MB_PER_TOKEN:.1f} MB)")

print(f" Saved : {saved_mb:.1f} MB")

old_pid = user_tables[3][-1]

new_pid = cow_pool.cow_copy(old_pid)

user_tables[3][-1] = new_pid

print(f"nReq-3 diverges → CoW: old page {old_pid} → new page {new_pid}")

print(f"All other {N-1} requests still share page {old_pid} unaffected")

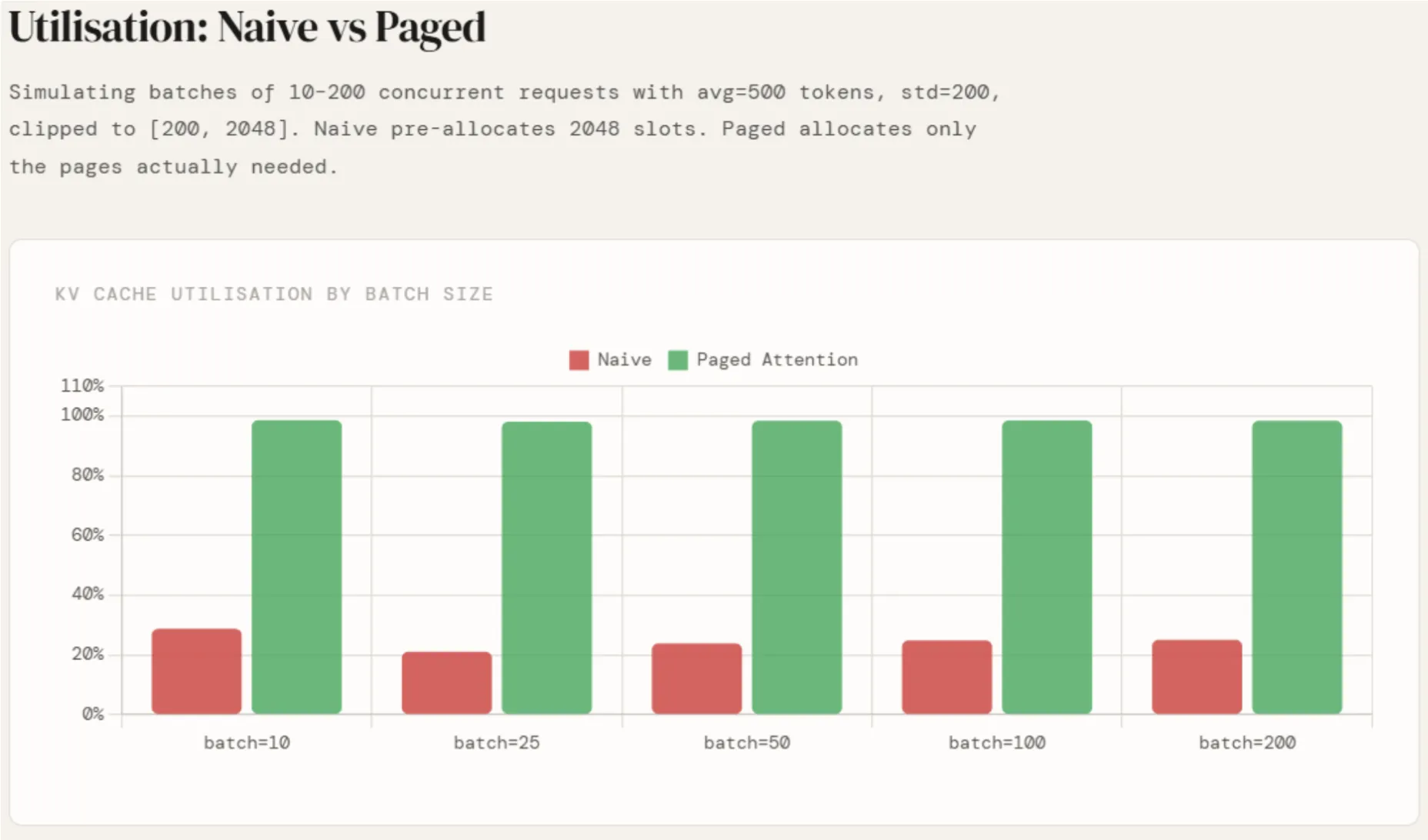

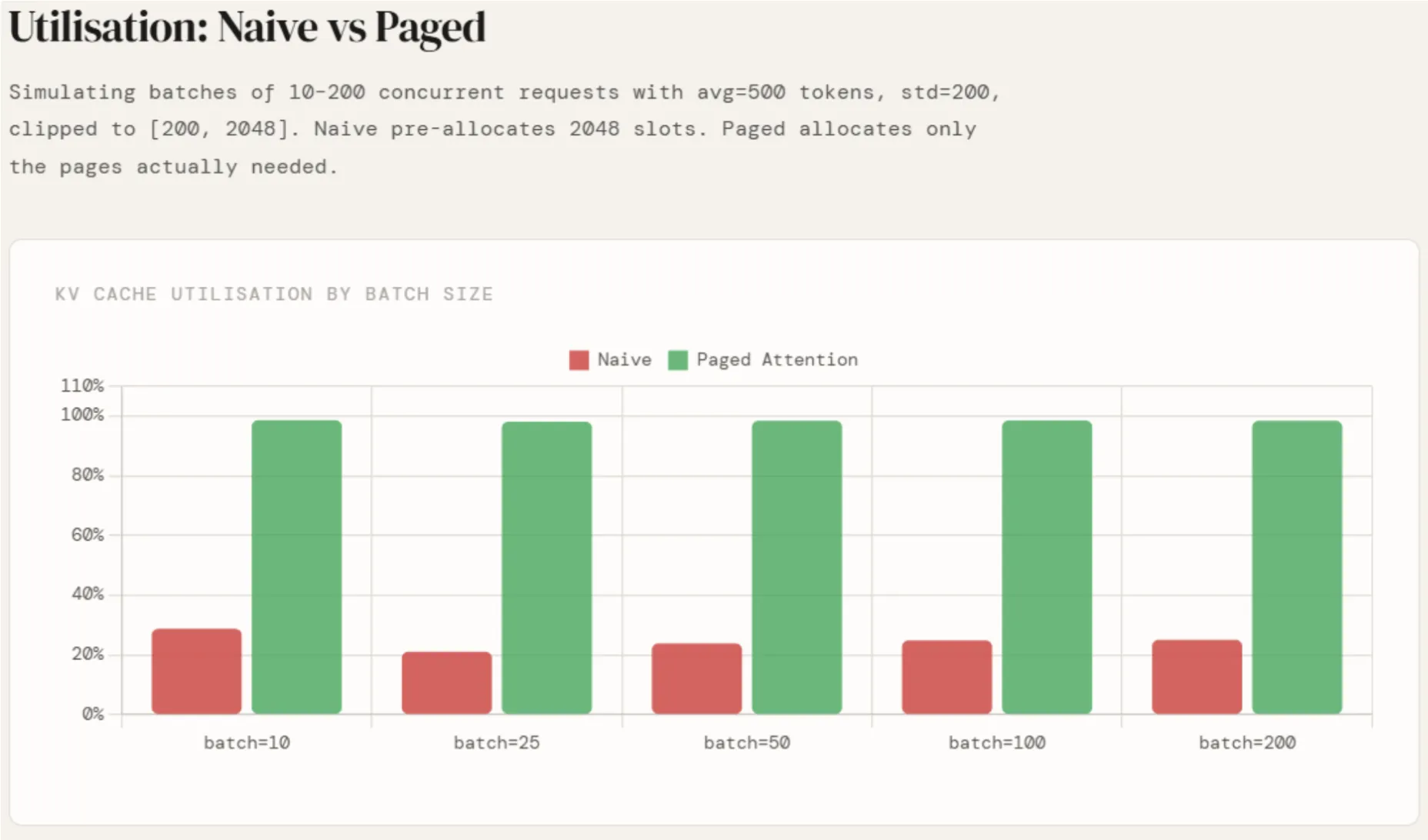

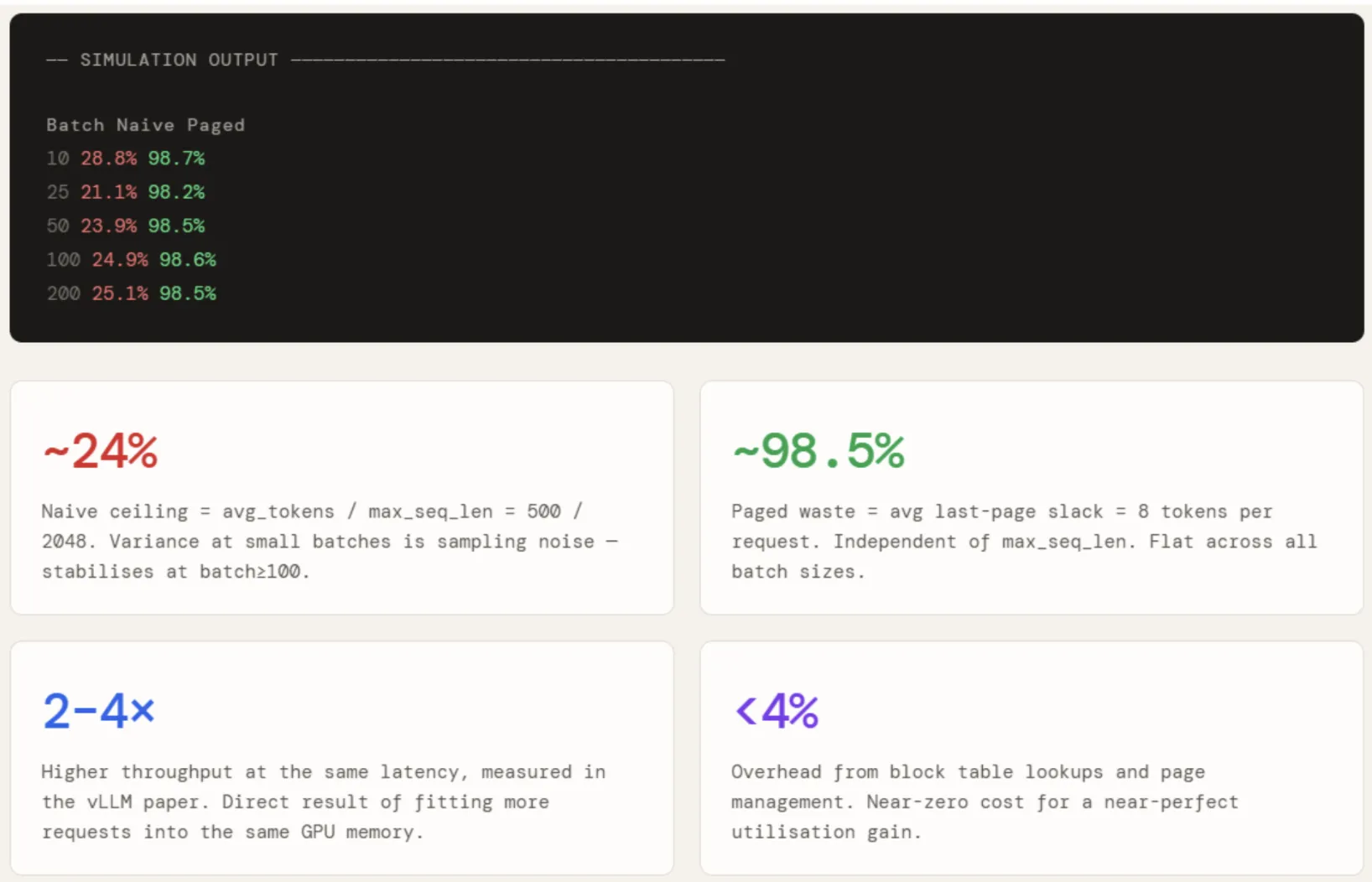

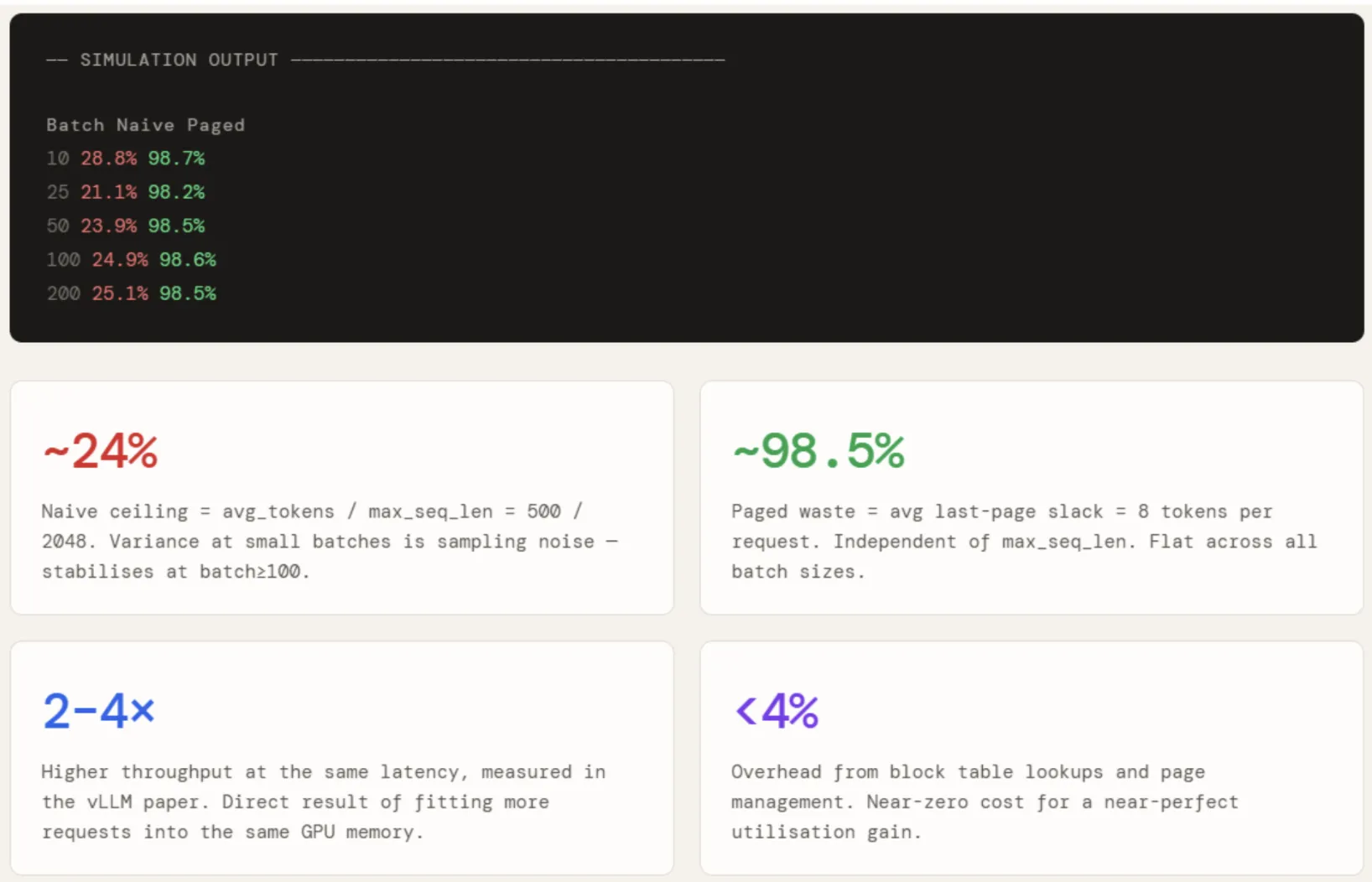

Two capabilities are outlined to measure utilisation beneath every strategy throughout totally different batch sizes.

naive_utilisation attracts token counts from a traditional distribution with avg=500 and std=200, clipped to [200, 2048]. This displays a sensible manufacturing distribution — most responses fall between 200 and 800 tokens, with occasional lengthy ones. For every request, the complete 2048-slot block is pre-allocated regardless. Utilisation is then actual_tokens_sum / (2048 × n) — the ratio of what was written to what was reserved.

paged_utilisation takes the identical precise token counts however computes what number of pages every request would wish — ceil(tokens / 16). The one waste is the unfilled tail of every request’s final web page, which averages 8 tokens. Utilisation is actual_tokens_sum / (pages_allocated × 16).

The outcomes are run throughout batch sizes of 10, 25, 50, 100, and 200. Naive utilisation hovers round 24% throughout all batch sizes — with some variance at smaller batches on account of sampling noise — which is precisely avg / max_seq = 500 / 2048. It doesn’t enhance with scale as a result of the waste is structural, not statistical.

Paged utilisation sits flat at ~98.5% no matter batch measurement, as a result of the waste per request is bounded by a single partial web page and doesn’t scale with max_seq_len in any respect. The hole between the 2 numbers — roughly 74 proportion factors — is instantly what allows vLLM to suit 2–4× extra concurrent requests into the identical GPU reminiscence.

print("n" + "=" * 60)

print("SECTION 4 -- Utilisation: Naive vs Paged")

print("=" * 60)

def naive_utilisation(n, max_seq=2048, avg=500, std=200):

precise = np.clip(np.random.regular(avg, std, n).astype(int), 200, max_seq)

return precise.sum() / (max_seq * n) * 100, precise

def paged_utilisation(actual_tokens, page_size=PAGE_SIZE):

pages = np.ceil(actual_tokens / page_size).astype(int)

return actual_tokens.sum() / (pages * page_size).sum() * 100

batch_sizes = [10, 25, 50, 100, 200]

naive_u, paged_u = [], []

print(f"n {'Batch':>6} {'Naive':>8} {'Paged':>8}")

for bs in batch_sizes:

nu, precise = naive_utilisation(bs)

pu = paged_utilisation(precise)

naive_u.append(nu)

paged_u.append(pu)

print(f" {bs:>6} {nu:>7.1f}% {pu:>7.1f}%")

Try the Full Pocket book right here. Additionally, be at liberty to observe us on Twitter and don’t neglect to affix our 120k+ ML SubReddit and Subscribe to our E-newsletter. Wait! are you on telegram? now you’ll be able to be part of us on telegram as properly.

I’m a Civil Engineering Graduate (2022) from Jamia Millia Islamia, New Delhi, and I’ve a eager curiosity in Knowledge Science, particularly Neural Networks and their software in numerous areas.