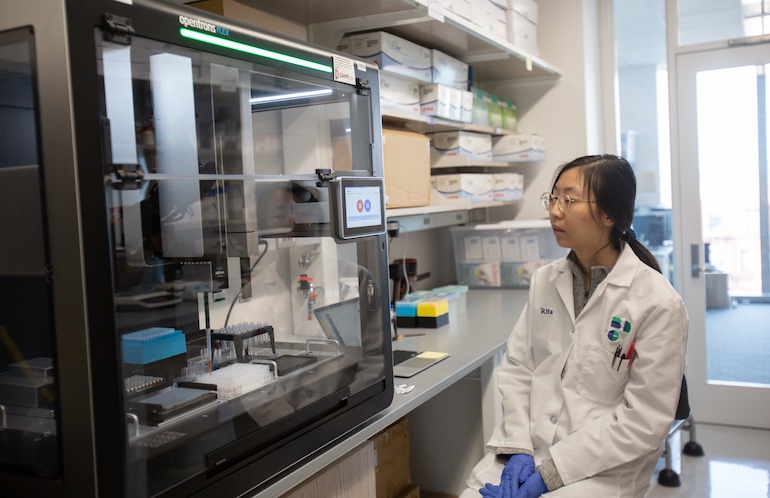

A researcher at Boston University’s DAMP Lab works with an Opentrons Flex robot. Credit: Opentrons Labworks Inc.

Pharmaceutical companies and research institutions are using artificial intelligence to design robotic experiments at scale, but they need to know whether AI-generated instructions will execute correctly before handling valuable samples and reagents. Opentrons Labworks Inc. today announced Protocol Visualization for Opentrons Flex, a new simulation and visualization capability.

The feature allows scientists to simulate and inspect robotic protocols in a dynamic digital environment before running them on the Flex system. The interface lets users observe each step of an automated workflow.

“This capability gives researchers a dynamic way to simulate and inspect robotic execution before an experiment begins, creating a clearer bridge between computational design and physical laboratory workflows,” said James Atwood, CEO of Opentrons. “As AI systems propose more experiments, researchers need infrastructure that makes those experiments understandable, inspectable, and repeatable before they reach the bench.”

Founded in 2013, Opentrons said it has more than 10,000 robotic systems deployed globally, including installations at major research universities and many of the world’s largest biopharma companies.

Visualization tool runs on existing protocols

Opentrons said the feature supports protocols authored across its software ecosystem, including OpentronsAI, the Python Protocol API, and the Protocol Designer application. Scientists can inspect workflows and observe changes in liquid levels at microliter scale.

The system also includes a Slot Highlight view that provides additional detail for individual deck locations. This allows users to monitor well volumes and module conditions throughout a run, the New York-based company explained.

For laboratories developing complex automation workflows, this level of inspection could support faster debugging and protocol refinement. Scientists can review workflows offline without interrupting active laboratory operations, Opentrons noted.

The new capability will be available through Opentrons App Version 9.0, scheduled for release in April 2026.

Opentrons CEO explains how lab feature works

Atwood replied to the following questions from The Robot Report:

Is Opentrons’ simulation and inspection layer hardware-agnostic? If not, is there a specific set of procedures it covers?

Atwood: The simulation and visualization environment is designed specifically for protocols written for the Opentrons Flex robotic platform. The system takes any valid Flex protocol and allows the user to simulate and inspect how the robot will execute it. That includes everything from simple liquid transfers to complex workflows with thousands of robotic actions.

Scientists can step through protocols of almost any size, from a handful of steps to workflows containing 10,000 or more actions, and observe pipetting, liquid handling, labware movements, and module states before running the experiment. Because the simulation environment mirrors the Flex execution environment, it allows researchers to understand exactly how the robot will behave before committing reagents, consumables, and instrument time.
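To make the idea of a pre-run inspection concrete, here is a minimal sketch in plain Python of replaying liquid transfers and tracking well volumes in microliters. It is not the Opentrons simulator and does not use the Opentrons Python Protocol API; the `Transfer` class, the `dry_run` function, the well names, and the capacity value are all hypothetical names invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Transfer:
    """One hypothetical liquid-transfer step: move volume_ul from source to dest."""
    source: str
    dest: str
    volume_ul: float


def dry_run(initial_ul, capacity_ul, steps):
    """Replay steps against a copy of the starting volumes.

    Returns (final_volumes, problems). problems is a list of readable
    strings describing steps that could not run as written.
    """
    volumes = dict(initial_ul)  # copy so the caller's data is untouched
    problems = []
    for index, step in enumerate(steps, start=1):
        available = volumes.get(step.source, 0.0)
        if step.volume_ul > available:
            problems.append(
                f"step {index}: {step.source} holds {available} uL, "
                f"cannot aspirate {step.volume_ul} uL"
            )
            continue  # skip this step but keep checking the rest
        new_dest = volumes.get(step.dest, 0.0) + step.volume_ul
        if new_dest > capacity_ul:
            problems.append(
                f"step {index}: {step.dest} would hold {new_dest} uL, "
                f"above the {capacity_ul} uL capacity"
            )
            continue
        volumes[step.source] = available - step.volume_ul
        volumes[step.dest] = new_dest
    return volumes, problems
```

Calling `dry_run({"A1": 100.0, "B1": 0.0}, 200.0, steps)` with two 60 uL transfers from A1 to B1 would report a problem on the second step, because only 40 uL remain in A1 by then. Catching that kind of error before reagents are committed is the general point of a pre-run check, whatever the real implementation looks like.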

Why has the pharmaceutical industry been slow to address AI verification concerns? How serious are they?

Atwood: Part of the challenge is that much of the expertise required to verify experiments has historically been tacit laboratory knowledge rather than formalized data. A lot of experimental troubleshooting relies on what experienced scientists notice at the bench: how a liquid behaves in a well plate, how a reaction looks as it proceeds, or whether something subtle seems off in the workflow. That kind of observational expertise is difficult to encode directly into AI systems.

In other words, the AI doesn’t know what it doesn’t know. Many of the verification challenges only become visible when experiments interact with the physical world. This is why the industry is now focusing on what we call physical AI: systems that combine language models with perception and real-world data.

Instead of relying solely on documentation or protocols, these systems increasingly need visual data, sensor data, and execution data from real experiments. The verification challenge is serious, but it’s also solvable as automation platforms generate more structured experimental data and as AI models begin interacting directly with laboratory environments.

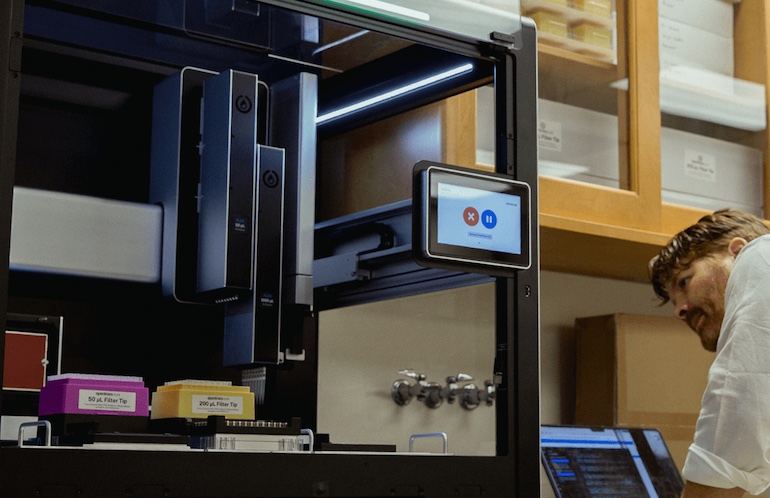

Protocol Visualization for Opentrons Flex is designed to test robotic biopharma experiments at scale before execution. Source: Opentrons

Can you describe how Opentrons’ generative AI works? How do you ensure repeatability and explainability?

Atwood: The generative AI behind OpentronsAI translates scientific intent into executable automation protocols.

At a high level, the system uses large language models combined with a retrieval-augmented generation (RAG) architecture. The models reference Opentrons’ documentation and a large internal knowledge base of laboratory protocols and automation workflows developed and verified by Opentrons over many years.

When a scientist describes an experiment in natural language, the system retrieves relevant examples and structured data from this database and uses that context to generate a protocol suitable for execution on the robot.
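The retrieve-then-generate pattern Atwood describes can be sketched in a few lines. The example below is a toy illustration only: it ranks a tiny in-memory knowledge base by word overlap and assembles a prompt, and it stops short of calling any language model. Production RAG systems typically use embedding-based search instead. The knowledge-base entries and the function names `tokenize`, `retrieve`, and `build_prompt` are assumptions invented for this sketch and say nothing about how OpentronsAI is built.

```python
import re


def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query, documents, top_k=2):
    """Rank documents by how many words they share with the query."""
    query_words = tokenize(query)
    scored = []
    for title, body in documents.items():
        overlap = len(query_words & tokenize(title + " " + body))
        scored.append((overlap, title))
    # Highest overlap first; ties are broken alphabetically for determinism.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [title for overlap, title in scored[:top_k] if overlap > 0]


def build_prompt(query, documents, top_k=2):
    """Combine the retrieved examples and the request into one prompt string."""
    titles = retrieve(query, documents, top_k)
    context = "\n".join(f"- {title}: {documents[title]}" for title in titles)
    return f"Reference examples:\n{context}\n\nRequest: {query}"


# Hypothetical entries, invented for illustration.
KNOWLEDGE_BASE = {
    "serial dilution": "dilute a sample across a 96 well plate in twofold steps",
    "pcr setup": "distribute master mix and add template dna to each well",
    "plate wash": "aspirate and dispense wash buffer across a plate",
}
```

Given the request "Set up a twofold serial dilution of my sample", the retrieval step ranks the "serial dilution" entry first. A model would then receive the assembled prompt and draft a protocol from it, which the scientist can still review before anything runs.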

Repeatability and explainability come from multiple layers of control. The protocols generated are fully inspectable Python-based automation workflows, meaning researchers can review, edit, and verify the steps before execution. Structured prompting also ensures that the input captures the information required to produce reliable automation protocols.

In practice, the AI helps scientists move faster from experimental idea to executable workflow, while still allowing human oversight and inspection before the experiment runs.

Did Opentrons work with specific lab automation vendors and end users to develop this offering, and if so, what did it learn?

Atwood: The AI capability was developed with extensive feedback from a cohort of beta users, including researchers building automated workflows on the Flex platform. One of the key insights was that as AI-generated protocols become more complex, researchers need better ways to inspect, understand, and debug workflows before execution.

In parallel, Opentrons is also collaborating with AI and robotics partners, including NVIDIA and HighRes Biosolutions, as part of broader efforts to connect AI systems with physical laboratory automation. These collaborations are helping push the ecosystem toward physical AI, where autonomous systems can reason about experiments, interact with robotic platforms, and adapt based on real-world feedback.

How is autonomous science evolving, and what challenges remain?

Atwood: The trajectory is toward increasingly autonomous laboratories, where AI systems can design experiments, execute them through robotics, observe results, and refine future experiments based on the outcomes.

Achieving that requires combining several capabilities:

- Reasoning systems, often powered by large language models, that can plan experiments

- Perception systems, such as vision-language models, that allow AI to observe what is happening in real experiments

- Physical AI systems that connect these models to laboratory automation platforms so experiments can be executed in the real world

Opentrons provides the infrastructure layer for this development, connecting AI-driven intent to reliable execution on automated lab hardware. The biggest challenge is building reliable feedback loops between digital intelligence and physical experiments. Unlike purely digital domains, biology requires capturing structured data from real laboratory environments: visual observations, instrument outputs, and environmental signals.
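The design, execute, observe, and refine cycle described above can be illustrated with a deliberately simple loop. In this sketch, `run_experiment` is a stand-in for robot execution plus measurement and assumes a made-up linear response. The function names, the 0.02 response factor, and the halfway-step refinement rule are all hypothetical choices for illustration, not a description of any Opentrons or NVIDIA system.

```python
def run_experiment(volume_ul):
    """Stand-in for robot execution plus measurement (hypothetical linear response)."""
    return 0.02 * volume_ul


def closed_loop(target_signal, first_guess_ul, tolerance=0.01, max_rounds=20):
    """Repeat execute, observe, refine until the reading is within tolerance.

    Returns (final_volume_ul, history), where history lists each
    (volume_ul, observed_signal) pair in the order it was tried.
    """
    if first_guess_ul <= 0:
        raise ValueError("first_guess_ul must be positive")
    volume_ul = first_guess_ul
    history = []
    for _ in range(max_rounds):
        observed = run_experiment(volume_ul)  # execute and observe
        history.append((volume_ul, observed))
        if abs(target_signal - observed) <= tolerance:
            break
        # Refine: move halfway toward the volume the last reading implies.
        implied_volume = volume_ul * target_signal / observed
        volume_ul += 0.5 * (implied_volume - volume_ul)
    return volume_ul, history
```

A real laboratory loop would replace `run_experiment` with noisy instrument readings, images, and environmental data, which is where the feedback problem Atwood describes becomes hard.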

There has been rapid progress in this area, including advances in simulation and digital twin environments like NVIDIA Isaac, which help train and test AI systems before they interact with real laboratory hardware.

The post Opentrons introduces dynamic simulation, visualization for AI-generated lab workflows appeared first on The Robot Report.