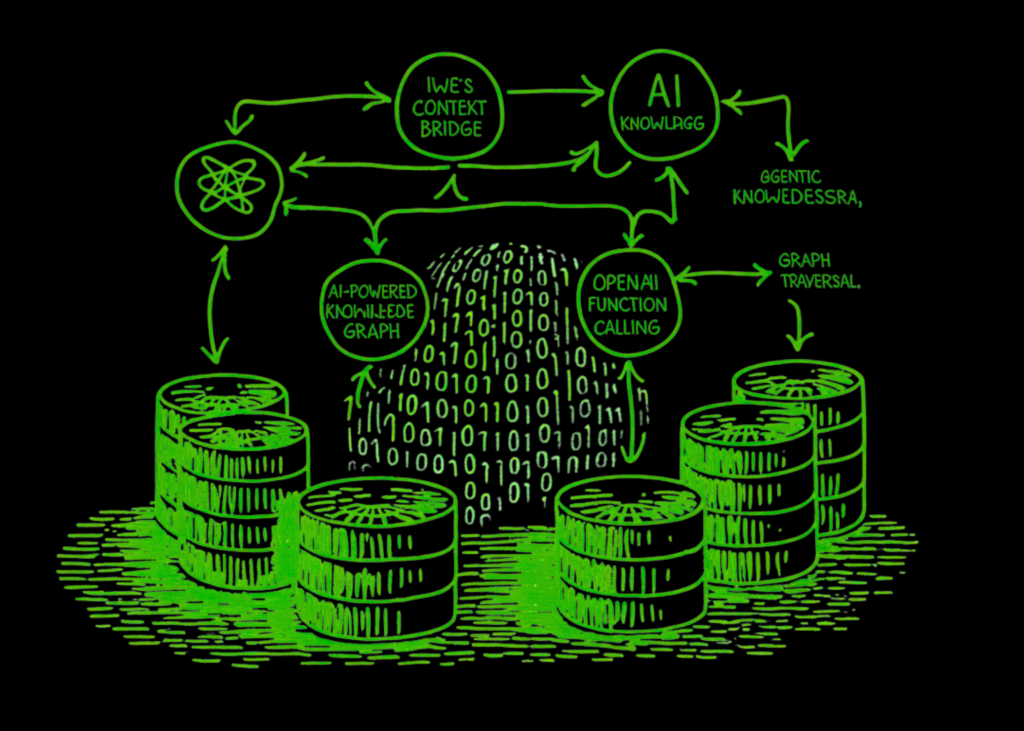

In this tutorial, we implement IWE: an open-source, Rust-powered personal knowledge management system that treats markdown notes as a navigable knowledge graph. Because IWE is a CLI/LSP tool designed for local editors, we reimplement its core ideas in Python so that everything runs in a notebook. We build a realistic developer knowledge base from scratch, wire up wiki-links and markdown links into a directed graph, and then walk through every major IWE operation: fuzzy search with find, context-aware retrieval with retrieve, hierarchy display with tree, document consolidation with squash, statistics with stats, and DOT graph export for visualization. We then go beyond the CLI by integrating OpenAI to power IWE-style AI transforms (summarization, link suggestion, and todo extraction) directly against our knowledge graph. Finally, we build a full agentic RAG pipeline in which an AI agent navigates the graph using function-calling tools, performs multi-hop reasoning across interconnected documents, identifies knowledge gaps, and even generates new notes that slot into the existing structure.

import subprocess, sys

def _install(pkg):
    # Install a package quietly with the interpreter that runs this notebook.
    subprocess.check_call([sys.executable, "-m", "pip", "install", "-q", pkg])

_install("openai")
_install("graphviz")

import re, json, textwrap, os, getpass
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Optional
from datetime import datetime

# Prefer a Colab secret; fall back to an interactive prompt anywhere else.
try:
    from google.colab import userdata
    OPENAI_API_KEY = userdata.get("OPENAI_API_KEY")
    if not OPENAI_API_KEY:
        raise ValueError
    print("✅ Loaded OPENAI_API_KEY from Colab secrets.")
except Exception:
    OPENAI_API_KEY = getpass.getpass("🔑 Enter your OpenAI API key: ")
    print("✅ API key received.")

os.environ["OPENAI_API_KEY"] = OPENAI_API_KEY

from openai import OpenAI
client = OpenAI(api_key=OPENAI_API_KEY)

print("\n" + "=" * 72)
print(" IWE Advanced Tutorial — Knowledge Graph + AI Agents")
print("=" * 72)

@dataclass
class Section:
    level: int
    title: str
    content: str
    children: list = field(default_factory=list)

@dataclass
class Document:
    key: str
    title: str
    raw_content: str
    sections: list = field(default_factory=list)
    outgoing_links: list = field(default_factory=list)
    tags: list = field(default_factory=list)
    created: str = ""
    modified: str = ""

class KnowledgeGraph:
    def __init__(self):
        self.documents: dict[str, Document] = {}
        self.backlinks: dict[str, set] = defaultdict(set)

    # [[target]] or [[target|alias]]
    _WIKI_LINK = re.compile(r"\[\[([^\]|]+)(?:\|([^\]]+))?\]\]")
    # [text](target)
    _MD_LINK = re.compile(r"\[([^\]]+)\]\(([^)]+)\)")
    # Markdown headers, levels 1-6
    _HEADER = re.compile(r"^(#{1,6})\s+(.+)", re.MULTILINE)
    # Inline hashtags such as #security
    _TAG = re.compile(r"#([a-zA-Z][\w/-]*)")

    def _extract_links(self, text: str) -> list[str]:
        links = []
        for match in self._WIKI_LINK.finditer(text):
            links.append(match.group(1).strip())
        for match in self._MD_LINK.finditer(text):
            target = match.group(2).strip()
            # Only local targets become graph edges; external URLs are skipped.
            if not target.startswith("http"):
                target = target.replace(".md", "")
                links.append(target)
        return links

    def _parse_sections(self, text: str) -> list[Section]:
        sections = []
        # split() with two capture groups yields [preamble, hashes, title, body, ...]
        parts = self._HEADER.split(text)
        i = 1
        while i < len(parts) - 1:
            level = len(parts[i])
            title = parts[i + 1].strip()
            body = parts[i + 2] if i + 2 < len(parts) else ""
            sections.append(Section(level=level, title=title, content=body.strip()))
            i += 3
        return sections

    def _extract_tags(self, text: str) -> list[str]:
        tags = set()
        for line in text.split("\n"):
            if line.strip().startswith("#") and " " in line.strip():
                # Blank out header lines so "# Title" is not read as a tag.
                stripped = re.sub(r"^#{1,6}\s+.*", "", line)
                for m in self._TAG.finditer(stripped):
                    tags.add(m.group(1))
            else:
                for m in self._TAG.finditer(line):
                    tags.add(m.group(1))
        return sorted(tags)

    def add_document(self, key: str, content: str) -> Document:
        sections = self._parse_sections(content)
        title = sections[0].title if sections else key
        links = self._extract_links(content)
        tags = self._extract_tags(content)
        now = datetime.now().strftime("%Y-%m-%d %H:%M")
        doc = Document(
            key=key, title=title, raw_content=content,
            sections=sections, outgoing_links=links, tags=tags,
            created=now, modified=now,
        )
        self.documents[key] = doc
        # Maintain the reverse index: target -> set of documents linking to it.
        for target in links:
            self.backlinks[target].add(key)
        return doc

    def get(self, key: str) -> Optional[Document]:
        return self.documents.get(key)

    def find(self, query: str, roots_only: bool = False, limit: int = 10) -> list[str]:
        q = query.lower()
        scored = []
        for key, doc in self.documents.items():
            score = 0
            if q in doc.title.lower():
                score += 10
            if q in doc.raw_content.lower():
                score += doc.raw_content.lower().count(q)
            if q in key.lower():
                score += 5
            for tag in doc.tags:
                if q in tag.lower():
                    score += 3
            if score > 0:
                scored.append((key, score))
        scored.sort(key=lambda x: -x[1])
        results = [k for k, _ in scored[:limit]]
        if roots_only:
            # A root is a document that no other document links to.
            results = [k for k in results if not self.backlinks.get(k)]
        return results

    def retrieve(self, key: str, depth: int = 1, context: int = 1,
                 exclude: set = None) -> str:
        exclude = exclude or set()
        parts = []
        if context > 0:
            # Prepend a short excerpt from up to `context` parent documents.
            parents_of = list(self.backlinks.get(key, set()) - exclude)
            for p in parents_of[:context]:
                pdoc = self.get(p)
                if pdoc:
                    parts.append(f"[CONTEXT: {pdoc.title}]\n{pdoc.raw_content[:300]}...\n")
                    exclude.add(p)
        doc = self.get(key)
        if not doc:
            return f"⚠ Document '{key}' not found."
        parts.append(doc.raw_content)
        exclude.add(key)
        if depth > 0:
            # Follow outgoing links, skipping anything already included.
            for link in doc.outgoing_links:
                if link not in exclude:
                    child = self.get(link)
                    if child:
                        parts.append(f"\n---\n[LINKED: {child.title}]\n")
                        parts.append(
                            self.retrieve(link, depth=depth - 1,
                                          context=0, exclude=exclude)
                        )
        return "\n".join(parts)

    def tree(self, key: str, indent: int = 0, _visited: set = None) -> str:
        _visited = _visited if _visited is not None else set()
        doc = self.get(key)
        if not doc:
            return ""
        prefix = " " * indent + ("└─ " if indent else "")
        if key in _visited:
            return f"{prefix}{doc.title} ({key}) ↩ (circular ref)"
        _visited.add(key)
        lines = [f"{prefix}{doc.title} ({key})"]
        for link in doc.outgoing_links:
            if self.get(link):
                lines.append(self.tree(link, indent + 1, _visited))
        return "\n".join(lines)

    def squash(self, key: str, visited: set = None) -> str:
        visited = visited or set()
        doc = self.get(key)
        if not doc or key in visited:
            return ""
        visited.add(key)
        parts = [doc.raw_content]
        for link in doc.outgoing_links:
            child_content = self.squash(link, visited)
            if child_content:
                parts.append(f"\n{'─' * 40}\n")
                parts.append(child_content)
        return "\n".join(parts)

    def stats(self) -> dict:
        total_words = sum(len(d.raw_content.split()) for d in self.documents.values())
        total_links = sum(len(d.outgoing_links) for d in self.documents.values())
        # Orphans have neither incoming nor outgoing links.
        orphans = [k for k in self.documents if not self.backlinks.get(k)
                   and not self.documents[k].outgoing_links]
        all_tags = set()
        for d in self.documents.values():
            all_tags.update(d.tags)
        return {
            "total_documents": len(self.documents),
            "total_words": total_words,
            "total_links": total_links,
            "unique_tags": len(all_tags),
            "tags": sorted(all_tags),
            "orphan_notes": orphans,
            "avg_words_per_doc": total_words // max(len(self.documents), 1),
        }

    def export_dot(self, highlight_key: str = None) -> str:
        lines = ['digraph KnowledgeGraph {',
                 ' rankdir=LR;',
                 ' node [shape=box, style="rounded,filled", fillcolor="#f0f4ff", '
                 'fontname="Helvetica", fontsize=10];',
                 ' edge [color="#666666", arrowsize=0.7];']
        for key, doc in self.documents.items():
            label = doc.title[:30]
            color = "#ffe4b5" if highlight_key == key else "#f0f4ff"
            lines.append(f' "{key}" [label="{label}", fillcolor="{color}"];')
        for key, doc in self.documents.items():
            for link in doc.outgoing_links:
                # Only draw edges whose target exists in the graph.
                if link in self.documents:
                    lines.append(f' "{key}" -> "{link}";')
        lines.append("}")
        return "\n".join(lines)

print("\n✅ Section 1 complete — KnowledgeGraph class defined.\n")

We install the required dependencies, securely accept the OpenAI API key through Colab secrets or a password prompt, and initialize the OpenAI client. We then define the three foundational classes, Section, Document, and KnowledgeGraph, that mirror IWE's arena-based graph architecture, where every markdown file is a node and every link is a directed edge. We implement the full suite of IWE CLI operations on the KnowledgeGraph class, including markdown parsing for wiki-links and headers, keyword-scored search with find (our simplified stand-in for IWE's fuzzy search), context-aware retrieval with retrieve, cycle-safe hierarchy display with tree, document consolidation with squash, knowledge base analytics with stats, and DOT graph export for Graphviz visualization.
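To make the node-and-edge idea concrete, here is a minimal standalone sketch of link extraction and backlink indexing. It is an illustration rather than IWE's actual parser: the helper names `extract_links` and `build_backlinks` and the three toy notes are assumptions introduced here, and the regexes are simplified versions of the patterns used in the class above.

```python
import re
from collections import defaultdict

# Simplified patterns: [[target]] / [[target|alias]] and [text](target).
WIKI_LINK = re.compile(r"\[\[([^\]|]+)(?:\|[^\]]+)?\]\]")
MD_LINK = re.compile(r"\[[^\]]+\]\(([^)]+)\)")

def extract_links(text: str) -> list[str]:
    """Return link targets: wiki-links first, then local markdown links."""
    links = [m.group(1).strip() for m in WIKI_LINK.finditer(text)]
    for m in MD_LINK.finditer(text):
        target = m.group(1).strip()
        if not target.startswith("http"):  # external URLs are not graph edges
            links.append(target.removesuffix(".md"))
    return links

def build_backlinks(notes: dict[str, str]) -> dict[str, set[str]]:
    """Invert outgoing links so each target maps to the notes pointing at it."""
    backlinks = defaultdict(set)
    for key, text in notes.items():
        for target in extract_links(text):
            backlinks[target].add(key)
    return dict(backlinks)

# Hypothetical toy notes, used only for this illustration.
notes = {
    "index": "# Index\n- [Auth](auth.md)\n- [[cache|Caching]]\n- [Docs](https://example.com)",
    "auth": "# Auth\nSee [[cache]] for sessions.",
    "cache": "# Cache\nNo links here.",
}
backlinks = build_backlinks(notes)
print(extract_links(notes["index"]))
print(backlinks)
```

Keeping the reverse index next to the forward links is what lets operations such as parent-context retrieval and root detection run as dictionary lookups instead of full scans.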

kg = KnowledgeGraph()

kg.add_document("project-index", """# Web App Project

This is the **Map of Content** for our web application project.

## Architecture
- [Authentication System](authentication)
- [Database Design](database-design)
- [API Design](api-design)

## Development
- [Frontend Stack](frontend-stack)
- [Deployment Pipeline](deployment)

## Research
- [[caching-strategies]]
- [[performance-notes]]
""")

kg.add_document("authentication", """# Authentication System

Our app uses **JWT-based authentication** with refresh tokens.

## Flow
1. User submits credentials to `/api/auth/login`
2. Server validates against [Database Design](database-design) user table
3. Returns short-lived access token (15 min) + refresh token (7 days)
4. Client stores refresh token in HTTP-only cookie

## Security Considerations
- Passwords hashed with bcrypt (cost factor 12)
- Rate limiting on login endpoint: 5 attempts / minute
- Refresh token rotation on each use
- See [[caching-strategies]] for session caching

#security #jwt #auth
""")

kg.add_document("database-design", """# Database Design

We use **PostgreSQL 16** with the following core tables.

## Users Table
```sql
CREATE TABLE users (
    id UUID PRIMARY KEY DEFAULT gen_random_uuid(),
    email VARCHAR(255) UNIQUE NOT NULL,
    password VARCHAR(255) NOT NULL,
    created_at TIMESTAMPTZ DEFAULT NOW()
);
```

## Sessions Table
```sql
CREATE TABLE sessions (
    id UUID PRIMARY KEY,
    user_id UUID REFERENCES users(id),
    token_hash VARCHAR(255) NOT NULL,
    expires_at TIMESTAMPTZ NOT NULL
);
```

## Indexing Strategy
- B-tree on `users.email` for login lookups
- B-tree on `sessions.token_hash` for token validation
- See [[performance-notes]] for query optimization

#database #postgresql #schema
""")

kg.add_document("api-design", """# API Design

RESTful API following OpenAPI 3.0 specification.

## Endpoints
| Method | Path | Description |
|--------|------|-------------|
| POST | /api/auth/login | Authenticate user |
| POST | /api/auth/refresh | Refresh access token |
| GET | /api/users/me | Get current user profile |
| PUT | /api/users/me | Update profile |

## Error Handling
All errors return JSON with `{ "error": "code", "message": "..." }`.

Authentication endpoints documented in [Authentication System](authentication).
Data models align with [Database Design](database-design).

#api #rest #openapi
""")

kg.add_document("frontend-stack", """# Frontend Stack

## Technology Choices
- **Framework**: React 19 with Server Components
- **Styling**: Tailwind CSS v4
- **State Management**: Zustand for client state
- **Data Fetching**: TanStack Query v5

## Auth Integration
The frontend consumes the [API Design](api-design) endpoints.
Access tokens are stored in memory (not localStorage) for security.
Refresh handled transparently via Axios interceptors.

#frontend #react #tailwind
""")

kg.add_document("deployment", """# Deployment Pipeline

## Infrastructure
- **Container Runtime**: Docker with multi-stage builds
- **Orchestration**: Kubernetes on GKE
- **CI/CD**: GitHub Actions → Google Artifact Registry → GKE

## Pipeline Stages
1. Lint & type-check
2. Unit tests (Jest + pytest)
3. Build Docker images
4. Push to Artifact Registry
5. Deploy to staging (auto)
6. Deploy to production (manual approval)

## Monitoring
- Prometheus + Grafana for metrics
- Structured logging with correlation IDs
- See [[performance-notes]] for SLOs

#devops #kubernetes #cicd
""")

kg.add_document("caching-strategies", """# Caching Strategies

## Application-Level Caching
- **Redis** for session storage and rate limiting
- Cache-aside pattern for frequently accessed user profiles
- TTL: 5 minutes for profiles, 15 minutes for config

## HTTP Caching
- `Cache-Control: private, max-age=0` for authenticated endpoints
- `Cache-Control: public, max-age=3600` for static assets
- ETag support for conditional requests

## Cache Invalidation
- Event-driven invalidation via pub/sub
- Versioned cache keys: `user:{id}:v{version}`

Related: [Authentication System](authentication) uses Redis for refresh tokens.

#caching #redis #performance
""")

kg.add_document("performance-notes", """# Performance Notes

## Database Query Optimization
- Use `EXPLAIN ANALYZE` before deploying new queries
- Connection pooling with PgBouncer (max 50 connections)
- Avoid N+1 queries — use JOINs or DataLoader pattern

## SLO Targets
| Metric | Target | Current |
|--------|--------|---------|
| p99 latency | < 200ms | 180ms |
| Availability | 99.9% | 99.95% |
| Error rate | < 0.1% | 0.05% |

## Load Testing
- k6 scripts in `/tests/load/`
- Baseline: 1000 RPS sustained
- Spike: 5000 RPS for 60 seconds

Related to [Database Design](database-design) indexing and [[caching-strategies]].

#performance #slo #monitoring
""")

print("✅ Section 2 complete — 8 documents loaded into knowledge graph.\n")

print("─" * 72)
print(" 3A · iwe find — Search the Knowledge Graph")
print("─" * 72)

results = kg.find("authentication")
print(f"\n🔍 find('authentication'): {results}")

results = kg.find("performance")
print(f"🔍 find('performance'): {results}")

results = kg.find("cache", roots_only=True)
print(f"🔍 find('cache', roots_only=True): {results}")

print("\n" + "─" * 72)
print(" 3B · iwe tree — Document Hierarchy")
print("─" * 72)
print()
print(kg.tree("project-index"))

print("\n" + "─" * 72)
print(" 3C · iwe stats — Knowledge Base Statistics")
print("─" * 72)
stats = kg.stats()
for k, v in stats.items():
    print(f" {k:>25s}: {v}")

print("\n" + "─" * 72)
print(" 3D · iwe retrieve — Context-Aware Retrieval")
print("─" * 72)
print("\n📄 Retrieving 'authentication' with depth=1, context=1:\n")
retrieved = kg.retrieve("authentication", depth=1, context=1)
print(retrieved[:800] + "\n... (truncated)")

print("\n" + "─" * 72)
print(" 3E · iwe squash — Combine Documents")
print("─" * 72)
squashed = kg.squash("project-index")
print(f"\n📋 Squashed 'project-index': {len(squashed)} characters, "
      f"{len(squashed.split())} words")

print("\n" + "─" * 72)
print(" 3F · iwe export dot — Graph Visualization")
print("─" * 72)
dot_output = kg.export_dot(highlight_key="project-index")
print(f"\n🎨 DOT output ({len(dot_output)} chars):\n")
print(dot_output[:500] + "\n...")

try:
    import graphviz
    src = graphviz.Source(dot_output)
    src.render("knowledge_graph", format="png", cleanup=True)
    print("\n✅ Graph rendered to 'knowledge_graph.png'")
    try:
        from IPython.display import Image, display
        display(Image("knowledge_graph.png"))
    except ImportError:
        print(" (Run in Colab/Jupyter to see the image inline)")
except Exception as e:
    print(f" ⚠ Graphviz rendering skipped: {e}")

print("\n✅ Section 3 complete — all graph operations demonstrated.\n")

We instantiate the KnowledgeGraph and populate it with eight interconnected markdown documents that form a realistic developer knowledge base, spanning authentication, database design, API design, frontend, deployment, caching, and performance, all organized under a Map of Content entry point, exactly as we would structure notes in IWE. We then exercise every graph operation against this knowledge base: we search with find, display the full document hierarchy with tree, pull statistics with stats, perform context-aware retrieval that follows links with retrieve, consolidate the entire graph into a single document with squash, and export the structure as a DOT graph. We render the graph visually using Graphviz and display it inline, giving us a clear picture of how all our notes connect to each other.
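The cycle-safe traversal behind tree is easier to see on a bare adjacency map. The sketch below is a simplified illustration, not the tutorial's method itself: `render_tree` and the three-node `links` dictionary are hypothetical names chosen for this example.

```python
from typing import Optional

def render_tree(links: dict, key: str, depth: int = 0,
                visited: Optional[set] = None) -> list:
    """Depth-first rendering that stops when it reaches an already-seen node."""
    visited = visited if visited is not None else set()
    prefix = "  " * depth + ("└─ " if depth else "")
    if key in visited:
        # Already rendered elsewhere: mark it and do not descend again.
        return [f"{prefix}{key} (circular ref)"]
    visited.add(key)
    lines = [f"{prefix}{key}"]
    for child in links.get(key, []):
        lines.extend(render_tree(links, child, depth + 1, visited))
    return lines

# Hypothetical graph in which auth and cache link to each other.
links = {"index": ["auth", "cache"], "auth": ["cache"], "cache": ["auth"]}
tree_lines = render_tree(links, "index")
print("\n".join(tree_lines))
```

Because a single visited set is shared across the whole traversal, a node reached a second time through a different path is also reported as a circular reference, even when no true cycle is involved. That keeps the output finite and free of duplicates at the cost of slightly overstating cycles.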

print("─" * 72)

print(" 4 · AI-Powered Document Transforms")

print("─" * 72)

def ai_transform(text: str, action: str, context: str = "",
                 model: str = "gpt-4o-mini") -> str:
    prompts = {
        "rewrite": (
            "Rewrite the following text to improve clarity and readability. "
            "Keep the markdown formatting. Return ONLY the rewritten text."
        ),
        "summarize": (
            "Summarize the following text in 2-3 concise bullet points. "
            "Focus on the key decisions and technical choices."
        ),
        "expand": (
            "Expand the following text with more technical detail and examples. "
            "Keep the same structure and add depth."
        ),
        "extract_todos": (
            "Extract all actionable items from this text and format them as "
            "a markdown todo list. If there are no actionable items, suggest "
            "relevant next steps based on the content."
        ),
        "generate_links": (
            "Analyze the following note and suggest related topics that should "
            "be linked. Format as a markdown list of wiki-links: [[topic-name]]. "
            "Only suggest topics that are genuinely related."
        ),
    }
    # Unknown actions fall back to the "rewrite" prompt.
    system_msg = prompts.get(action, prompts["rewrite"])
    if context:
        system_msg += f"\n\nDocument context:\n{context[:500]}"
    messages = [
        {"role": "system", "content": system_msg},
        {"role": "user", "content": text},
    ]
    response = client.chat.completions.create(
        model=model, messages=messages, temperature=0.3, max_tokens=1000,
    )
    return response.choices[0].message.content.strip()

auth_doc = kg.get("authentication")
print("\n🔄 Transform: SUMMARIZE — Authentication System\n")
summary = ai_transform(auth_doc.raw_content, "summarize")
print(summary)

print("\n\n🔗 Transform: GENERATE_LINKS — Authentication System\n")
links = ai_transform(auth_doc.raw_content, "generate_links")
print(links)

print("\n\n✅ Transform: EXTRACT_TODOS — Performance Notes\n")
perf_doc = kg.get("performance-notes")
todos = ai_transform(perf_doc.raw_content, "extract_todos")
print(todos)

print("\n✅ Section 4 complete — AI transforms demonstrated.\n")

We define the ai_transform function that mirrors IWE's config.toml action system, supporting five transform types: rewrite, summarize, expand, extract_todos, and generate_links, each backed by a tailored system prompt sent to OpenAI. We run three live demonstrations against our knowledge base: we summarize the Authentication System document into concise bullet points, analyze it for suggested wiki-links to related topics, and extract actionable to-do items from the Performance Notes document. We see how IWE's AI action pattern (select a document, choose a transform, apply it in place) translates directly into a reusable Python function that works with any note in our graph.
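The prompt-selection step of such a transform can be checked offline, without an API key. The following is a minimal sketch under stated assumptions: `PROMPTS` and `build_messages` are hypothetical names, the prompt texts are shortened, and no request is sent to OpenAI.

```python
# Shortened, illustrative prompt table (not the full set used in the tutorial).
PROMPTS = {
    "rewrite": "Rewrite the following text to improve clarity and readability.",
    "summarize": "Summarize the following text in 2-3 concise bullet points.",
}

def build_messages(text: str, action: str, context: str = "") -> list:
    """Assemble chat messages for a transform; unknown actions fall back to rewrite."""
    system_msg = PROMPTS.get(action, PROMPTS["rewrite"])
    if context:
        # Cap the context so a large parent document cannot dominate the prompt.
        system_msg += f"\n\nDocument context:\n{context[:500]}"
    return [
        {"role": "system", "content": system_msg},
        {"role": "user", "content": text},
    ]

messages = build_messages("# Auth\nJWT with refresh tokens.", "summarize",
                          context="Web App Project")
fallback = build_messages("Some note", "translate")  # "translate" is not defined
print(messages[0]["content"])
print(fallback[0]["content"])
```

Separating message construction from the network call makes the silent fallback visible: an unrecognized action such as "translate" quietly becomes a rewrite, which may or may not be the behavior we want in a real workflow.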

print("─" * 72)

print(" 5 · Agentic RAG — AI Navigates Your Knowledge Graph")

print("─" * 72)

AGENT_TOOLS = [
    {
        "type": "function",
        "function": {
            "name": "iwe_find",
            "description": "Search the knowledge graph for documents matching a query. Returns a list of document keys.",
            "parameters": {
                "type": "object",
                "properties": {
                    "query": {"type": "string", "description": "Search query"},
                    "roots_only": {"type": "boolean", "description": "Only return root/MOC documents", "default": False},
                },
                "required": ["query"],
            },
        },
    },
    {
        "type": "function",
        "function": {
            "name": "iwe_retrieve",
            "description": "Retrieve a document's content with linked context. Use depth>0 to follow outgoing links, context>0 to include parent documents.",
            "parameters": {
                "type": "object",
                "properties": {
                    "key": {"type": "string", "description": "Document key to retrieve"},
                    "depth": {"type": "integer", "description": "How many levels of child links to follow (0-2)", "default": 1},
                    "context": {"type": "integer", "description": "How many levels of parent context (0-1)", "default": 0},
                },
                "required": ["key"],
            },
        },
    },
    {
        "type": "function",
        "function": {
            "name": "iwe_tree",
            "description": "Show the document hierarchy starting from a given key.",
            "parameters": {
                "type": "object",
                "properties": {
                    "key": {"type": "string", "description": "Root document key"},
                },
                "required": ["key"],
            },
        },
    },
    {
        "type": "function",
        "function": {
            "name": "iwe_stats",
            "description": "Get statistics about the entire knowledge base.",
            "parameters": {"type": "object", "properties": {}},
        },
    },
]

def execute_tool(name: str, args: dict) -> str:
    # Route a tool call from the model to the matching KnowledgeGraph method.
    if name == "iwe_find":
        results = kg.find(args["query"], roots_only=args.get("roots_only", False))
        return json.dumps({"results": results})
    elif name == "iwe_retrieve":
        content = kg.retrieve(
            args["key"],
            depth=args.get("depth", 1),
            context=args.get("context", 0),
        )
        return content[:3000]
    elif name == "iwe_tree":
        return kg.tree(args["key"])
    elif name == "iwe_stats":
        return json.dumps(kg.stats(), indent=2)
    return "Unknown tool"

def run_agent(question: str, max_turns: int = 6, model: str = "gpt-4o-mini") -> str:
    system_prompt = textwrap.dedent("""
        You are an AI assistant with access to a personal knowledge graph (IWE).
        Use the provided tools to navigate the graph and answer questions.

        Workflow:
        1. Use iwe_find to discover relevant documents
        2. Use iwe_retrieve to read content (set depth=1 to follow links)
        3. Follow relationships to build comprehensive understanding
        4. Synthesize information from multiple documents

        Be specific and cite which documents you found information in.
        If you cannot find enough information, say so clearly.
    """)
    messages = [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": question},
    ]
    for turn in range(max_turns):
        response = client.chat.completions.create(
            model=model, messages=messages, tools=AGENT_TOOLS,
            tool_choice="auto",
        )
        msg = response.choices[0].message
        if msg.tool_calls:
            # Record the assistant turn, then answer every tool call it made.
            messages.append(msg)
            for tc in msg.tool_calls:
                fn_name = tc.function.name
                fn_args = json.loads(tc.function.arguments)
                print(f" 🔧 Agent calls: {fn_name}({fn_args})")
                result = execute_tool(fn_name, fn_args)
                messages.append({
                    "role": "tool",
                    "tool_call_id": tc.id,
                    "content": result,
                })
        else:
            # No tool calls means the model has produced its final answer.
            return msg.content
    return "Agent reached maximum turns without completing."

questions = [
    "How does our authentication system work, and what database tables does it depend on?",
    "What is our deployment pipeline, and what are the performance SLO targets?",
    "Give me a high-level overview of the entire project architecture.",
]

for i, q in enumerate(questions, 1):
    print(f"\n{'═' * 72}")
    print(f" Question {i}: {q}")
    print(f"{'═' * 72}\n")
    answer = run_agent(q)
    print(f"\n💡 Agent Answer:\n{answer}\n")

print("\n✅ Section 5 complete — Agentic RAG demonstrated.\n")

We build the full agentic retrieval pipeline that embodies IWE's "Context Bridge" concept: an AI agent that navigates our knowledge graph using OpenAI function calling with four tools: iwe_find for discovery, iwe_retrieve for context-aware content fetching, iwe_tree for hierarchy exploration, and iwe_stats for knowledge base analytics. We wire up the tool executor that dispatches each function call to our KnowledgeGraph instance, and we implement the agent loop, which iterates through search-retrieve-synthesize cycles until the model returns a final answer or the turn limit is reached. We then run three progressively complex demo questions, asking about authentication dependencies, deployment and SLO targets, and a full project architecture overview, and watch the agent autonomously call tools, follow links between documents, and produce comprehensive answers grounded in our notes.
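The control flow of the agent loop can be exercised without a model by replaying scripted turns. This sketch is purely illustrative: `dispatch`, `run_scripted_agent`, the toy `iwe_find`, and the dictionary-shaped turns are assumptions made for this example and do not reproduce the OpenAI response objects.

```python
import json

def iwe_find(query: str) -> str:
    """Toy search over two hard-coded notes (a stand-in for the real graph)."""
    docs = {"authentication": "JWT auth", "deployment": "Kubernetes on GKE"}
    hits = [k for k, v in docs.items() if query.lower() in (k + v).lower()]
    return json.dumps({"results": hits})

TOOLS = {"iwe_find": iwe_find}

def dispatch(name: str, raw_args: str) -> str:
    """Decode JSON arguments and route to the named tool; report failures as text."""
    handler = TOOLS.get(name)
    if handler is None:
        return "Unknown tool"
    try:
        return handler(**json.loads(raw_args))
    except (json.JSONDecodeError, TypeError) as exc:
        return f"Tool error: {exc}"

# Scripted stand-in for the model: one tool call, then a final answer.
scripted_turns = [
    {"tool_calls": [{"id": "call_1", "name": "iwe_find",
                     "arguments": '{"query": "jwt"}'}]},
    {"content": "Authentication uses JWT (source: authentication)."},
]

def run_scripted_agent(turns: list, max_turns: int = 6):
    """Replay turns: execute tool calls until a turn carries plain content."""
    messages = []
    for turn in turns[:max_turns]:
        if turn.get("tool_calls"):
            for tc in turn["tool_calls"]:
                result = dispatch(tc["name"], tc["arguments"])
                messages.append({"role": "tool", "tool_call_id": tc["id"],
                                 "content": result})
        else:
            return turn["content"], messages
    return "Agent reached maximum turns without completing.", messages

answer, transcript = run_scripted_agent(scripted_turns)
print(transcript)
print(answer)
```

Returning tool failures as text rather than raising is a deliberate design choice in this sketch: malformed arguments from a model then become something it can read and correct on the next turn instead of ending the run.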

print("─" * 72)

print(" 6 · AI-Powered Knowledge Graph Maintenance")

print("─" * 72)

def analyze_knowledge_gaps(model: str = "gpt-4o-mini") -> str:
    stats_info = json.dumps(kg.stats(), indent=2)
    titles = [f"- {d.title} ({k}): links to {d.outgoing_links}"
              for k, d in kg.documents.items()]
    graph_overview = "\n".join(titles)
    response = client.chat.completions.create(
        model=model,
        messages=[
            {"role": "system", "content": (
                "You are a knowledge management consultant. Analyze this "
                "knowledge graph and identify: (1) missing topics that should "
                "exist, (2) documents that should be linked but aren't, "
                "(3) areas that need more detail. Be specific and actionable."
            )},
            {"role": "user", "content": (
                f"Knowledge base stats:\n{stats_info}\n\n"
                f"Document structure:\n{graph_overview}"
            )},
        ],
        temperature=0.4, max_tokens=1000,
    )
    return response.choices[0].message.content.strip()

def generate_new_note(topic: str, related_keys: list[str],
                      model: str = "gpt-4o-mini") -> str:
    # Give the model short excerpts from up to three related notes.
    context_parts = []
    for key in related_keys[:3]:
        doc = kg.get(key)
        if doc:
            context_parts.append(f"## {doc.title}\n{doc.raw_content[:400]}")
    context = "\n\n".join(context_parts)
    response = client.chat.completions.create(
        model=model,
        messages=[
            {"role": "system", "content": (
                "You are a technical writer. Generate a new markdown note "
                "about the given topic. Use wiki-links [[like-this]] to "
                "reference related existing documents. Include relevant "
                "headers, code examples where appropriate, and hashtag tags."
            )},
            {"role": "user", "content": (
                f"Topic: {topic}\n\n"
                f"Related existing notes for context:\n{context}\n\n"
                f"Available documents to link to: {list(kg.documents.keys())}"
            )},
        ],
        temperature=0.5, max_tokens=1200,
    )
    return response.choices[0].message.content.strip()

print("\n🔍 Analyzing knowledge gaps...\n")
gaps = analyze_knowledge_gaps()
print(gaps)

print("\n\n📝 Generating a new note: 'Error Handling Strategy'...\n")
new_note = generate_new_note(
    "Error Handling Strategy",
    related_keys=["api-design", "authentication", "frontend-stack"],
)
print(new_note[:1000] + "\n... (truncated)")

kg.add_document("error-handling", new_note)
print(f"\n✅ Added 'error-handling' to knowledge graph. "
      f"Total documents: {len(kg.documents)}")

dot_output = kg.export_dot(highlight_key="error-handling")
try:
    import graphviz
    src = graphviz.Source(dot_output)
    src.render("knowledge_graph_v2", format="png", cleanup=True)
    print("✅ Updated graph rendered to 'knowledge_graph_v2.png'")
    try:
        from IPython.display import Image, display
        display(Image("knowledge_graph_v2.png"))
    except ImportError:
        pass
except Exception as e:
    print(f" ⚠ Graphviz rendering skipped: {e}")

print("\n✅ Section 6 complete — AI-powered maintenance demonstrated.\n")

print("─" * 72)

print(" 7 · Multi-Hop Reasoning Across the Knowledge Graph")

print("─" * 72)

complex_question = (
    "If we increase our traffic from 1000 RPS to 5000 RPS sustained, "
    "what changes would be needed across the entire stack — from database "
    "connection pooling, to caching, to authentication token handling, "
    "to deployment infrastructure?"
)
print(f"\n🧠 Complex multi-hop question:\n {complex_question}\n")
answer = run_agent(complex_question, max_turns=8)
print(f"\n💡 Agent Answer:\n{answer}")

print("\n\n" + "=" * 72)
print(" ✅ TUTORIAL COMPLETE")
print("=" * 72)
print("""
You have explored all of the core concepts of IWE:

 1. Knowledge Graph — Documents as nodes, links as edges
 2. Markdown Parsing — Wiki-links, headers, tags
 3. Maps of Content — Hierarchical organisation (MOC)
 4. Graph Operations — find, retrieve, tree, squash, stats, export
 5. AI Transforms — Rewrite, summarize, expand, extract todos
 6. Agentic Retrieval — AI agent navigating your knowledge graph
 7. Graph Maintenance — AI-powered gap analysis and note generation
 8. Multi-Hop Reasoning — Cross-document synthesis

To use IWE for real (with your editor):
 →
 →
 IWE supports VS Code, Neovim, Zed, and Helix via LSP.
""")

We use AI to analyze our knowledge graph for structural gaps, identifying missing topics, unlinked documents, and areas that need more depth. We then automatically generate a new "Error Handling Strategy" note that references existing documents via wiki-links and add it to the live graph. We re-render the updated Graphviz visualization, highlighting the new node to show how the knowledge base grows organically as AI and human contributions layer on top of each other. We close with a complex multi-hop reasoning challenge, asking what changes are needed across the entire stack if we scale from 1000 to 5000 RPS sustained. To answer it, the agent must traverse the database, caching, authentication, and deployment documents and synthesize a cross-cutting answer that no single note could provide alone.

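Because the generated note is free-form model output, its wiki-links may point at keys that do not exist in the graph, and the export and retrieval code above skips such targets without warning. A small pre-insertion check can surface them. The sketch below is an assumption-laden illustration: `check_links`, the sample `generated` text, and the `retry-policy` key are hypothetical.

```python
import re

# Matches [[target]] and [[target|alias]], capturing only the target.
WIKI_LINK = re.compile(r"\[\[([^\]|]+)(?:\|[^\]]+)?\]\]")

def check_links(note: str, existing_keys: set) -> tuple:
    """Split a note's wiki-link targets into resolved and dangling, in order."""
    resolved, dangling = [], []
    for m in WIKI_LINK.finditer(note):
        target = m.group(1).strip()
        bucket = resolved if target in existing_keys else dangling
        if target not in bucket:  # report each target once
            bucket.append(target)
    return resolved, dangling

existing = {"api-design", "authentication", "frontend-stack"}
# Hypothetical model output, for illustration only.
generated = (
    "# Error Handling Strategy\n"
    "Errors follow the format in [[api-design]]. Auth failures: [[authentication]].\n"
    "Retries are covered in [[retry-policy]] and again in [[api-design]].\n"
)
resolved, dangling = check_links(generated, existing)
print("resolved:", resolved)
print("dangling:", dangling)
```

Dangling targets are not necessarily errors. In a workflow like this one they could double as a to-do list of notes worth writing next, which fits the gap-analysis step described above.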
In conclusion, we now have a complete, working implementation of IWE's core ideas running in a Colab environment. We have seen how structuring notes as a graph, rather than treating them as flat files, unlocks powerful capabilities: relationships become navigable paths, context flows naturally from parent to child documents, and AI agents can discover, traverse, and synthesize knowledge exactly as we organize it. We have built the full pipeline, from markdown parsing and backlink indexing to graph traversal operations, AI-powered document transforms, agentic retrieval with tool calling, knowledge gap analysis, and multi-hop reasoning spanning the entire knowledge base. Everything we build here maps directly to IWE's real features: the find, retrieve, tree, squash, and export commands, the config.toml AI actions, and the Context Bridge philosophy, which positions your personal knowledge graph as shared memory between you and your AI agents.


Michal Sutter is a data science professional with a Master of Science in Data Science from the University of Padova. With a solid foundation in statistical analysis, machine learning, and data engineering, Michal excels at transforming complex datasets into actionable insights.